Hinton Problems

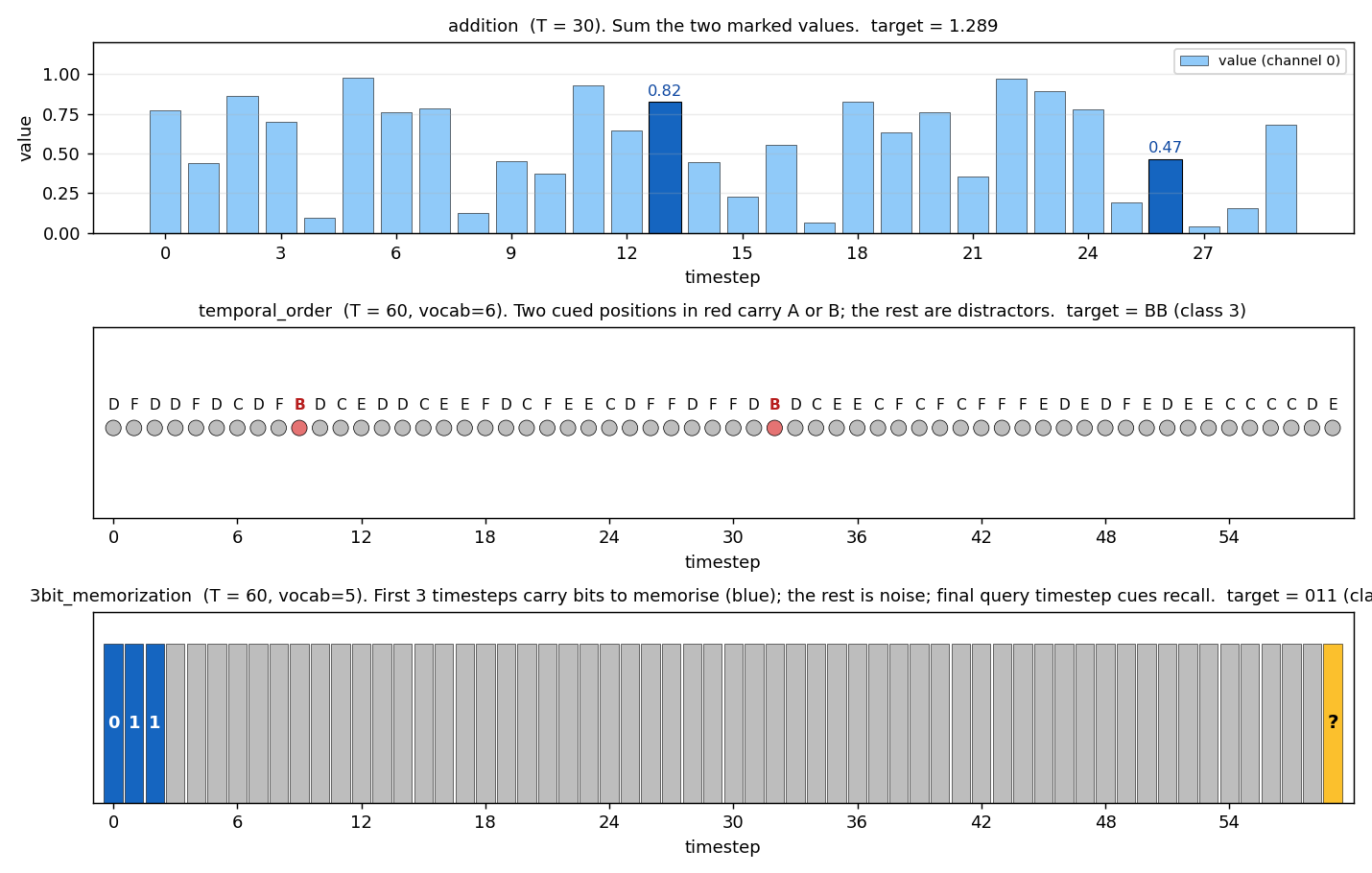

A reproducible-baseline catalog of the synthetic learning problems that appear in Geoffrey Hinton’s experimental papers from 1981 through 2022 — implemented in pure numpy, runnable on a laptop CPU, with paper-comparison metrics per stub.

Site: https://cybertronai.github.io/hinton-problems/ • Catalog: RESULTS.md • 53 of 53 stubs implemented (PRs #32–#41, all merged 2026-05-03)

Introduction

The field has standardized on backprop by the end of the ’80s, and Hinton gives a sample of problems that were used at the time. In the last 20 years, we have transitioned to GPUs, and the math has changed considerably. Instead of being bottlenecked by arithmetic, the shrinking of transistors means that arithmetic is essentially free, and all of the work comes from data movement. Backprop is inefficient in terms of “commute to compute ratio” because it requires fetching all of the activations for each gradient add.

So a natural experiment would be to redo key experiments of this time with a focus on data movement. The first step is to get a baseline — to establish the list of problems which are famous (made by Hinton), reasonable to implement, and easy to run/reproduce.

— Yaroslav, issue #1 (Sutro Group)

This repository is that baseline. v1 ships 53 implementations covering the lineage from the 4-2-4 encoder (1985) through the shifter (1986), bars (1995), MultiMNIST (2017), Constellations (2019), Ellipse World (2022), and the Forward-Forward suite (2022). Each stub is a self-contained folder with model + train + eval + visualization + animated GIF, all in numpy. Most stubs run in under 5 minutes per seed on an M-series laptop; the Run wallclock column in the catalog shows the exceptions.

The next step (#45 v2) instruments these 53 baselines with ByteDMD — Yaroslav’s data-movement cost tracer — to measure the actual “commute” each algorithm pays.
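To make the "commute to compute" idea concrete, here is a back-of-envelope sketch for a single dense layer. It is not ByteDMD and does not use its API. The function name `dense_layer_costs` is hypothetical, and the model assumes float32 values, no caching, and every operand read from or written to memory exactly once.

```python
def dense_layer_costs(batch, n_in, n_out, bytes_per_value=4):
    """Toy FLOP-per-byte estimate for y = x @ W and its backward pass.

    Assumption: each array is moved through memory exactly once per pass.
    """
    # Forward: one multiply and one add per (batch, n_in, n_out) triple.
    fwd_flops = 2 * batch * n_in * n_out
    # Backward: dW = x.T @ dy and dx = dy @ W.T, each as large as the forward matmul.
    bwd_flops = 4 * batch * n_in * n_out
    # Forward reads x and W, then writes y.
    fwd_bytes = bytes_per_value * (batch * n_in + n_in * n_out + batch * n_out)
    # Backward re-reads the stored activations x, reads dy and W, writes dW and dx.
    bwd_bytes = bytes_per_value * (
        batch * n_in + batch * n_out + n_in * n_out  # reads: x, dy, W
        + n_in * n_out + batch * n_in                # writes: dW, dx
    )
    return {
        "fwd_flops_per_byte": fwd_flops / fwd_bytes,
        "bwd_flops_per_byte": bwd_flops / bwd_bytes,
    }


ratios = dense_layer_costs(batch=1, n_in=1000, n_out=1000)
```

Under these assumptions, a single example through a 1000×1000 layer gives just under 0.5 FLOP per byte moved in both directions, which is the regime where data movement rather than arithmetic sets the cost.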

What’s here

| 27 reproduce paper claims | 25 partial reproductions | 1 non-replication |
|---|---|---|
| full or qualitative match | algorithm works, paper-config gap documented | gap analysed in 3 causes |

Pure numpy + matplotlib throughout. Every stub runs on a laptop CPU. Each problem lives in its own folder with <slug>.py (model + train + eval), README.md, make_<slug>_gif.py, visualize_<slug>.py, an animated <slug>.gif, and a viz/ folder of training curves and weight visualizations.

Visual tour


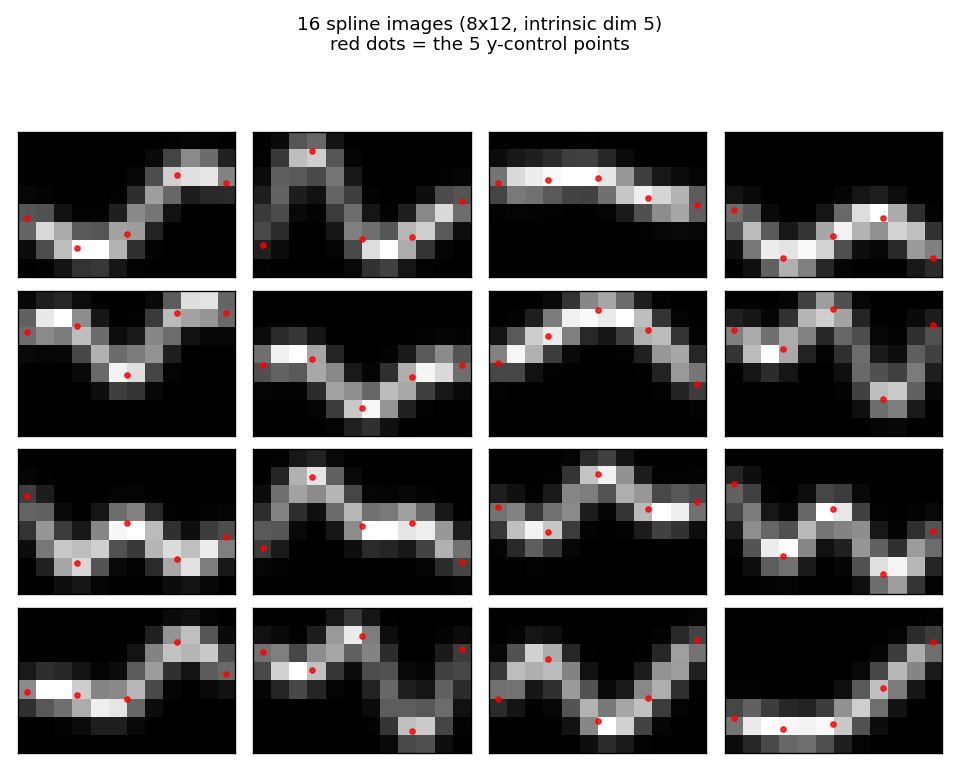

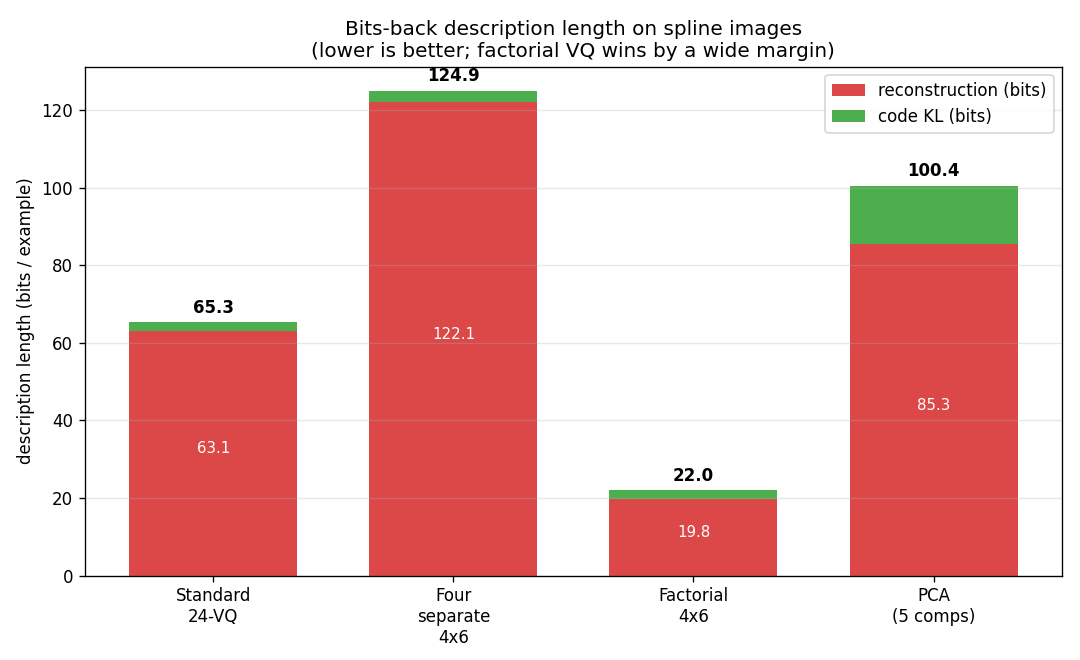

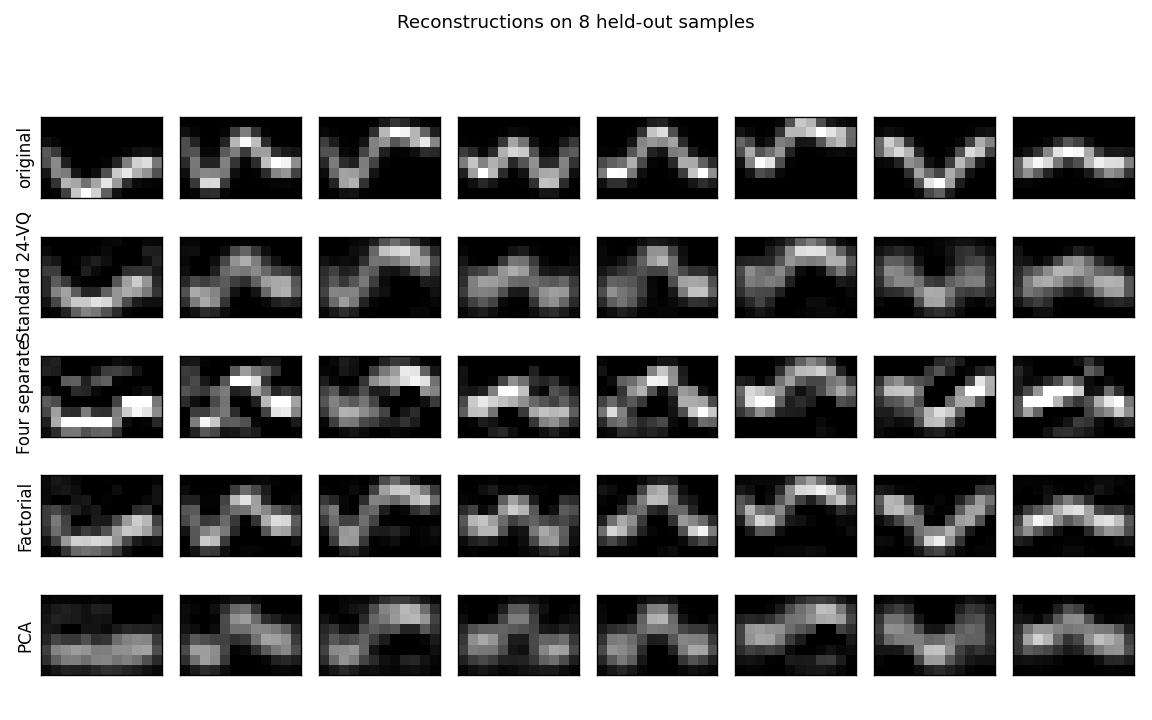

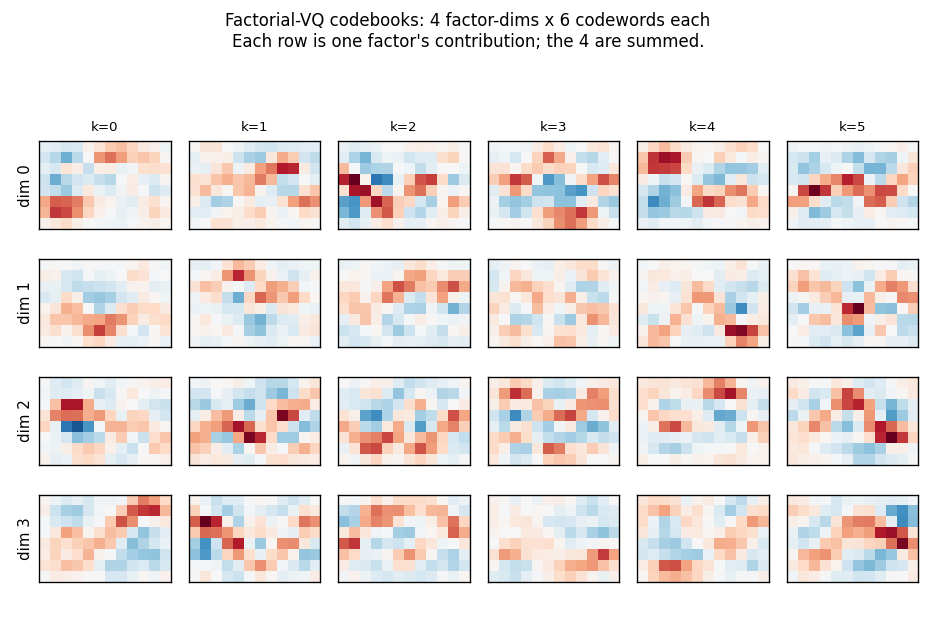

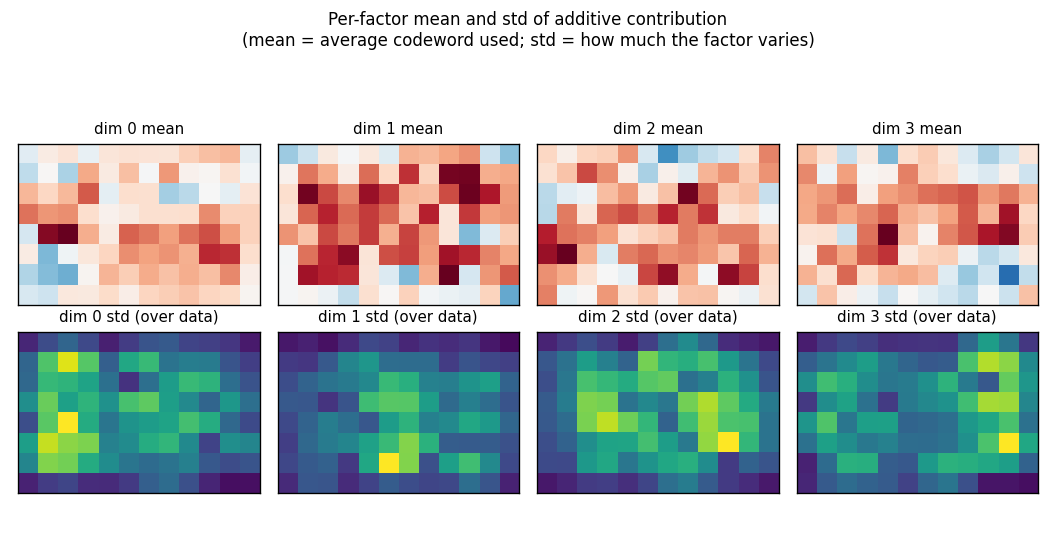

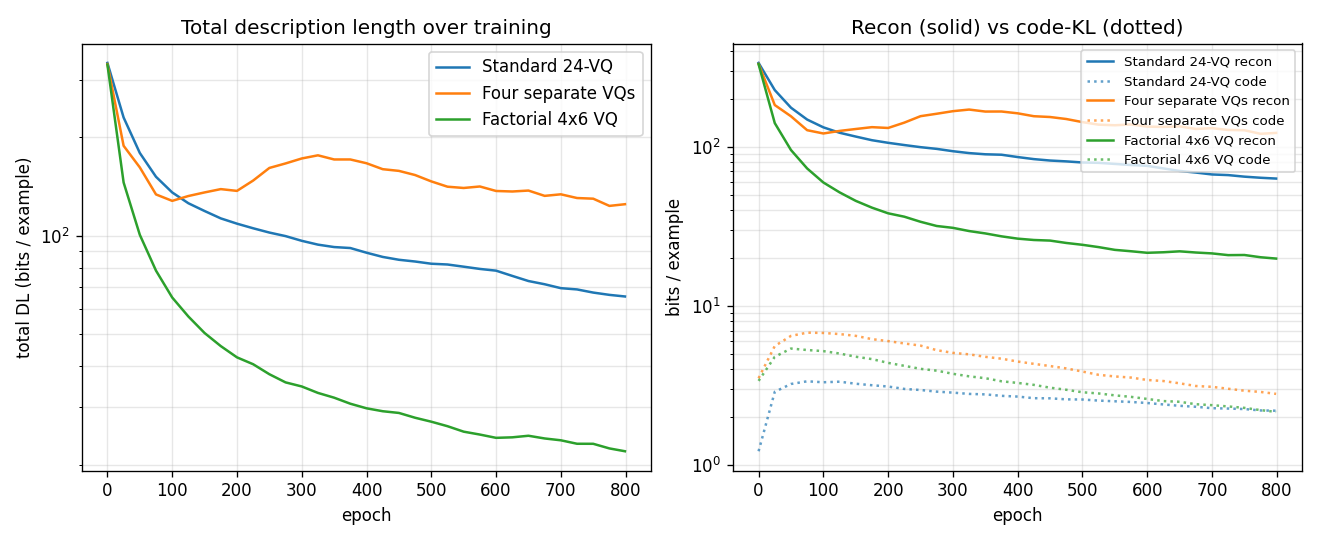

- encoder-4-2-4: Ackley/Hinton/Sejnowski 1985, the worked example. Bipartite RBM, 2-bit code emerges.
- spline-images-factorial-vq: Hinton/Zemel 1994, factorial VQ wins 3× over standard 24-VQ baseline.


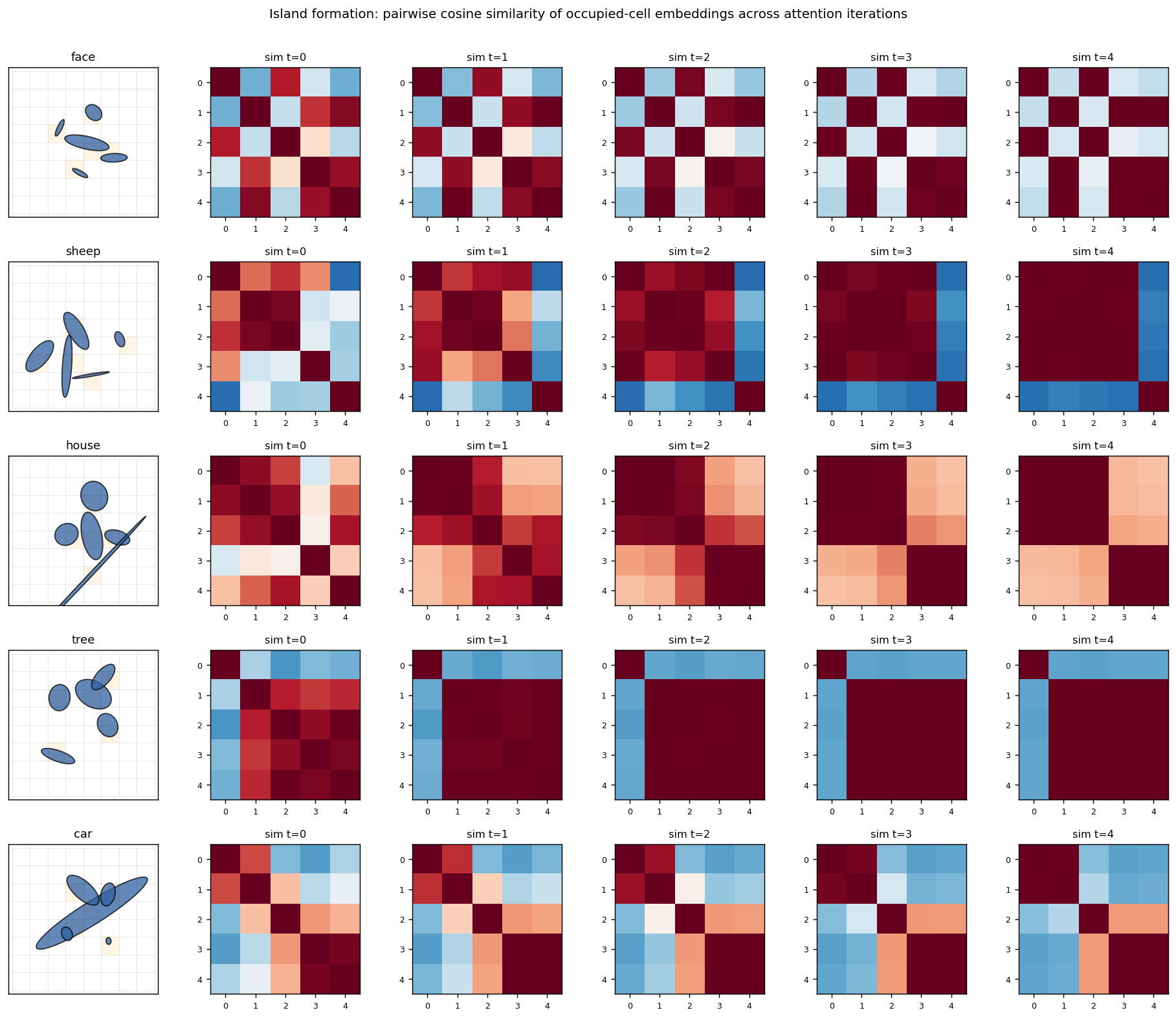

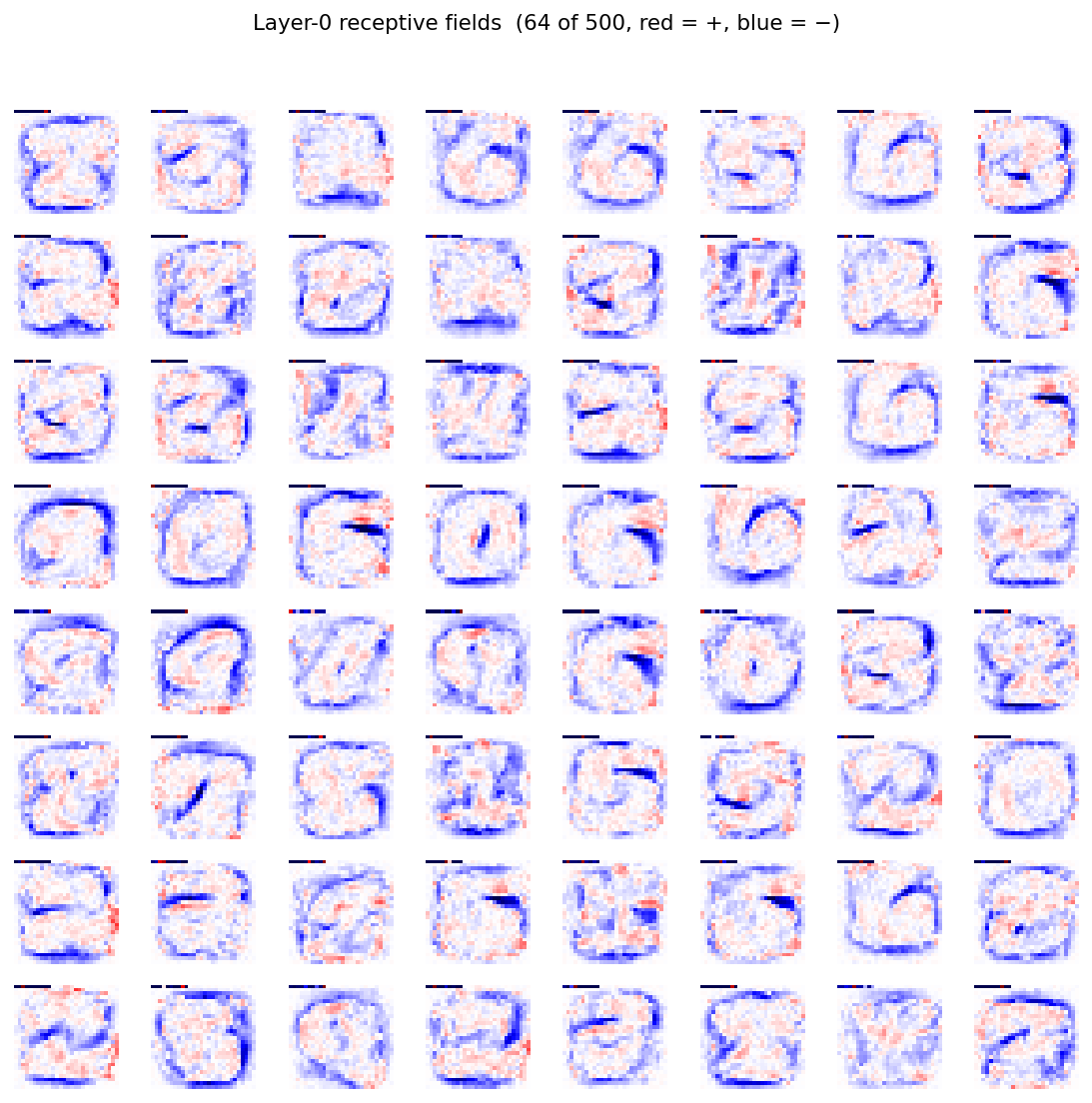

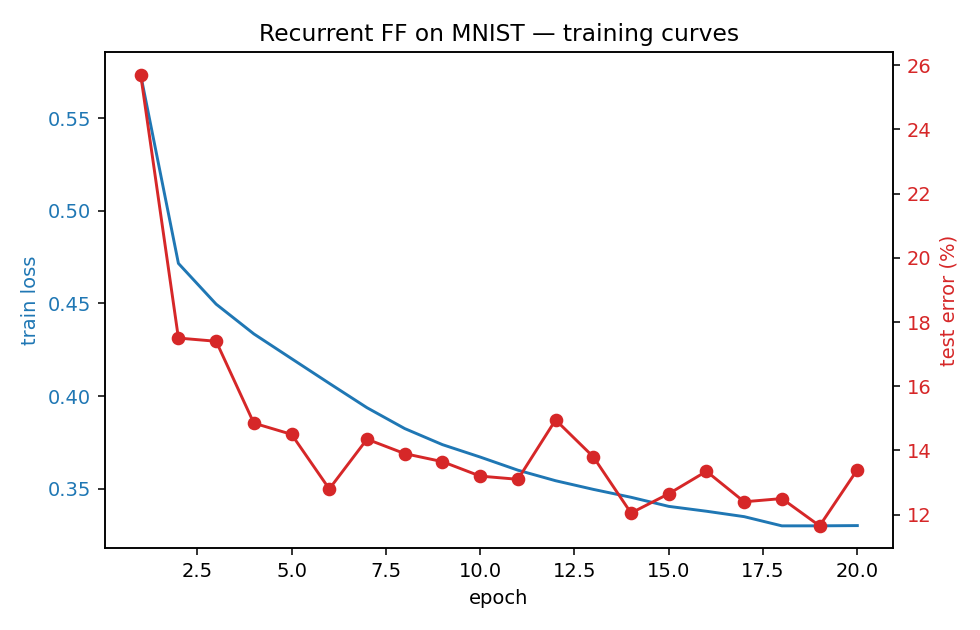

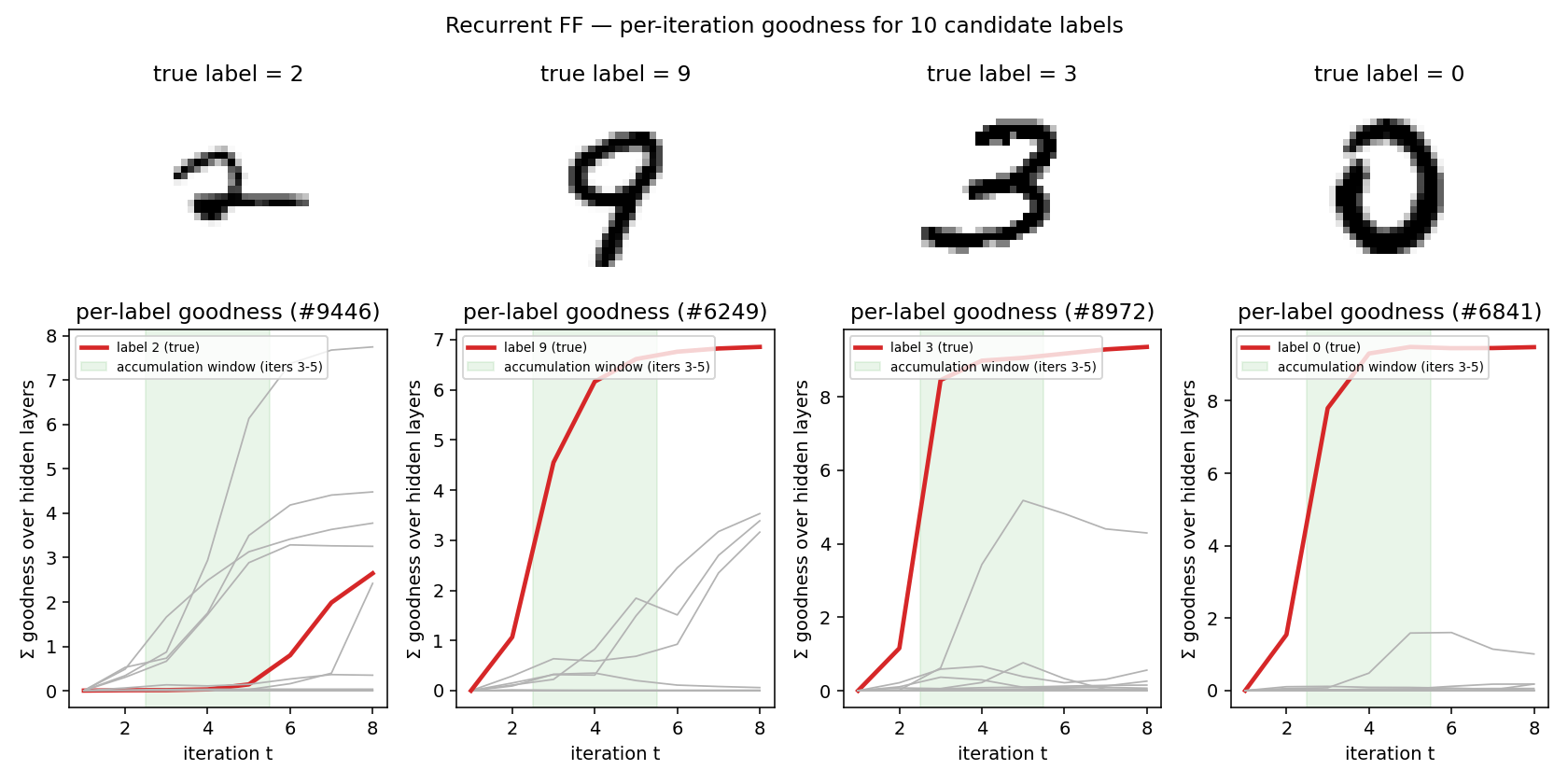

- ellipse-world: Culp/Sabour/Hinton 2022, eGLOM islands form across iterations (5-class, 92.2%).
- ff-recurrent-mnist: Hinton 2022, top-down recurrent Forward-Forward.

Catalog

Each table shows the v1 result per stub. Full per-stub metrics (compile-time, GIF size, headline numbers) are in RESULTS.md.

Reproduces? legend: yes = matches paper qualitatively or quantitatively; partial = method works, paper number not fully reached (gap documented in stub README); no = paper claim does not replicate.

1980s — Connectionist foundations

Ackley, Hinton & Sejnowski (1985) — A learning algorithm for Boltzmann machines

| Problem | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| encoder-4-2-4 ★ | yes (CD-k variant) | n/a (worked example) | ~1s |
| encoder-3-parity | yes (KL = log 2 visible-only; RBM drops to 0.10) | ~50 min | 0.04s + 1.3s |
| encoder-4-3-4 | yes (60% error-correcting rate / 30 seeds) | ~3 hr | 2.3s |
| encoder-8-3-8 | yes (16/20 = exact paper parity) | ~2 hr | ~20s/seed |
| encoder-40-10-40 | yes (exceeds paper: 100% vs 98.6%) | ~1.5 hr | 6s |
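The encoder rows use a CD-k variant rather than the paper's simulated annealing. As a rough illustration of what one CD-1 update looks like, here is a minimal numpy sketch. It is a simplification, not the repository's implementation: it uses a single group of 4 one-hot visible units and 2 hidden units, and the function name, learning rate, epoch count and seed are all illustrative assumptions.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def train_rbm_cd1(data, n_hidden=2, epochs=2000, lr=0.1, seed=0):
    """Full-batch CD-1 training of a small binary RBM (illustrative settings)."""
    rng = np.random.default_rng(seed)
    n_samples, n_visible = data.shape
    W = 0.1 * rng.standard_normal((n_visible, n_hidden))
    b_v = np.zeros(n_visible)
    b_h = np.zeros(n_hidden)
    for _ in range(epochs):
        # Positive phase: hidden probabilities given the data, then a binary sample.
        p_h0 = sigmoid(data @ W + b_h)
        h0 = (rng.random(p_h0.shape) < p_h0).astype(float)
        # Negative phase: one Gibbs step, using probabilities for the reconstruction.
        p_v1 = sigmoid(h0 @ W.T + b_v)
        p_h1 = sigmoid(p_v1 @ W + b_h)
        # CD-1 update: data statistics minus reconstruction statistics.
        W += lr * (data.T @ p_h0 - p_v1.T @ p_h1) / n_samples
        b_v += lr * (data - p_v1).mean(axis=0)
        b_h += lr * (p_h0 - p_h1).mean(axis=0)
    return W, b_v, b_h


patterns = np.eye(4)                     # four one-hot input patterns
W, b_v, b_h = train_rbm_cd1(patterns)
codes = sigmoid(patterns @ W + b_h)      # hidden activation per pattern, shape (4, 2)
```

Whether the four rows of `codes` settle into four distinct 2-bit corners depends on the seed and the settings, so the sketch makes no claim about the outcome.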

Rumelhart, Hinton & Williams (1986) — Learning internal representations by error propagation

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

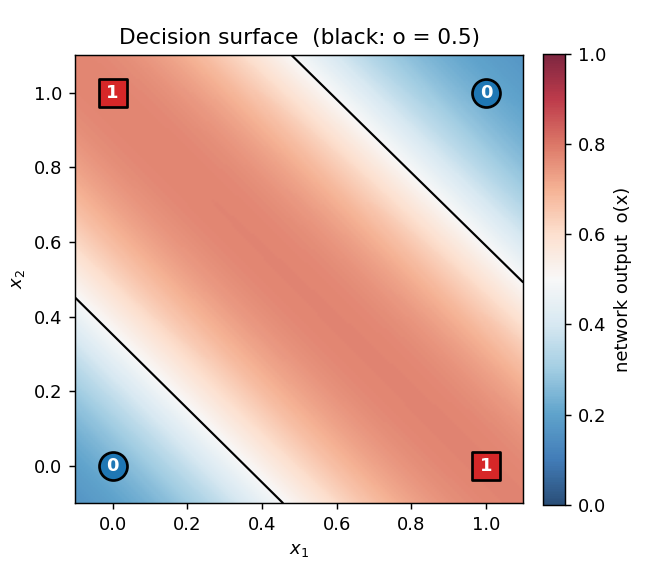

| xor | yes (qualitative) | 6.4 min | 0.3s |

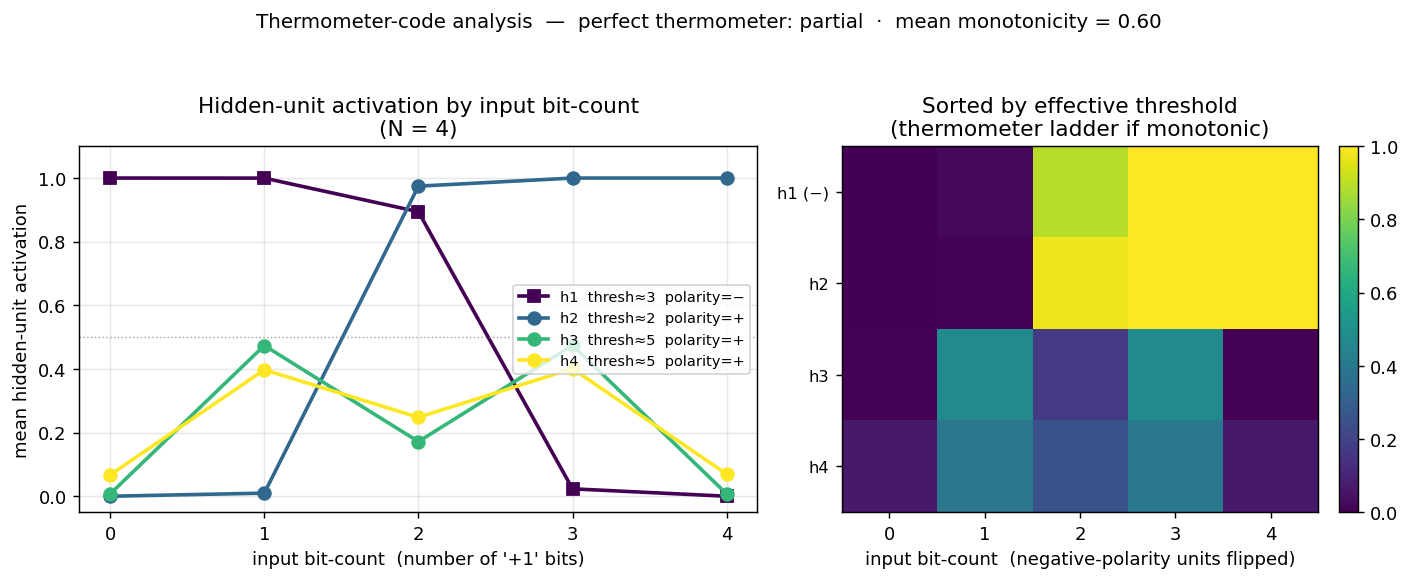

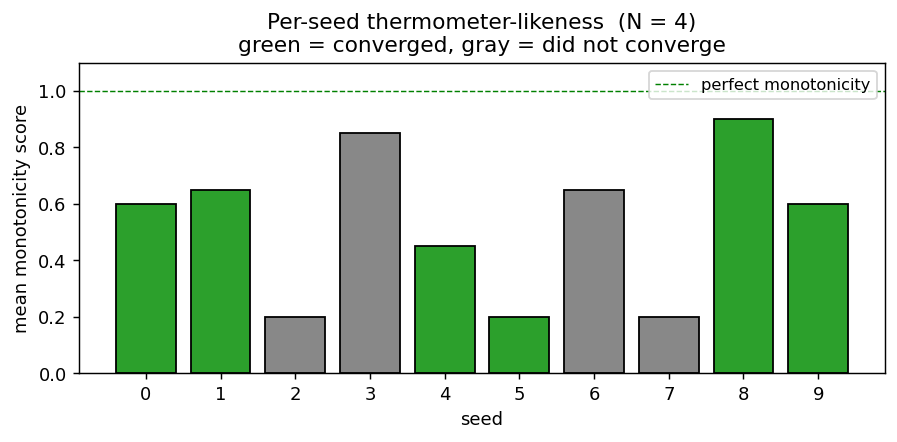

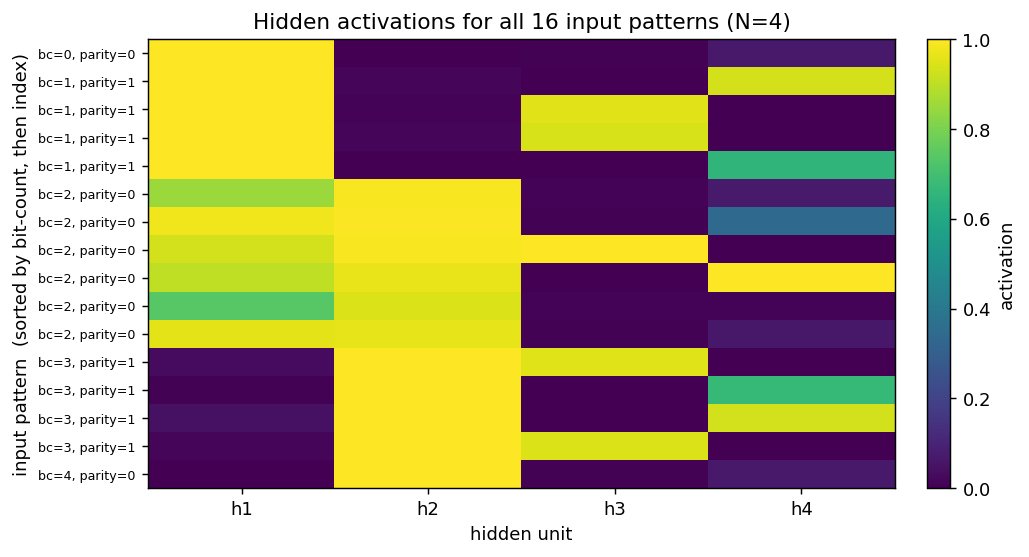

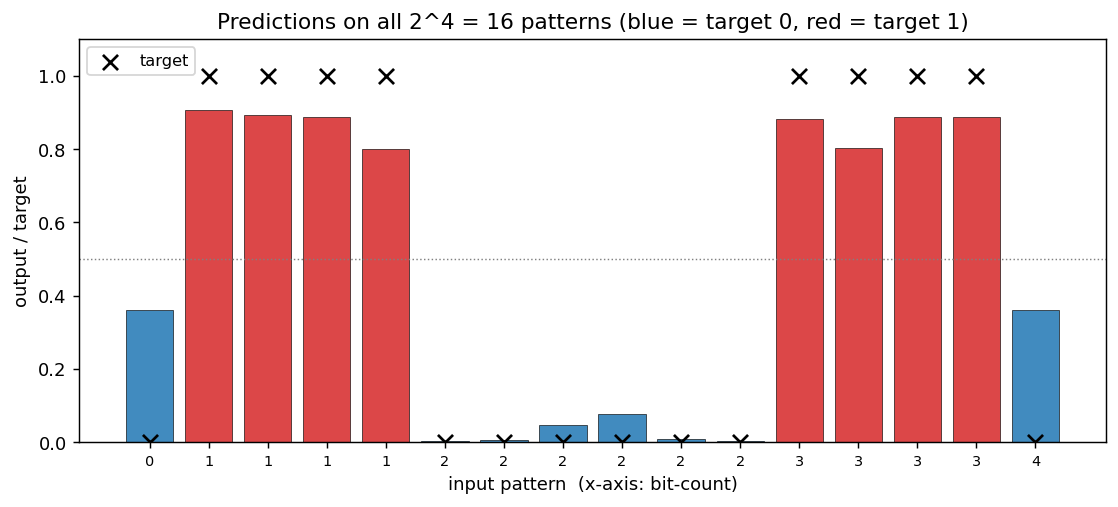

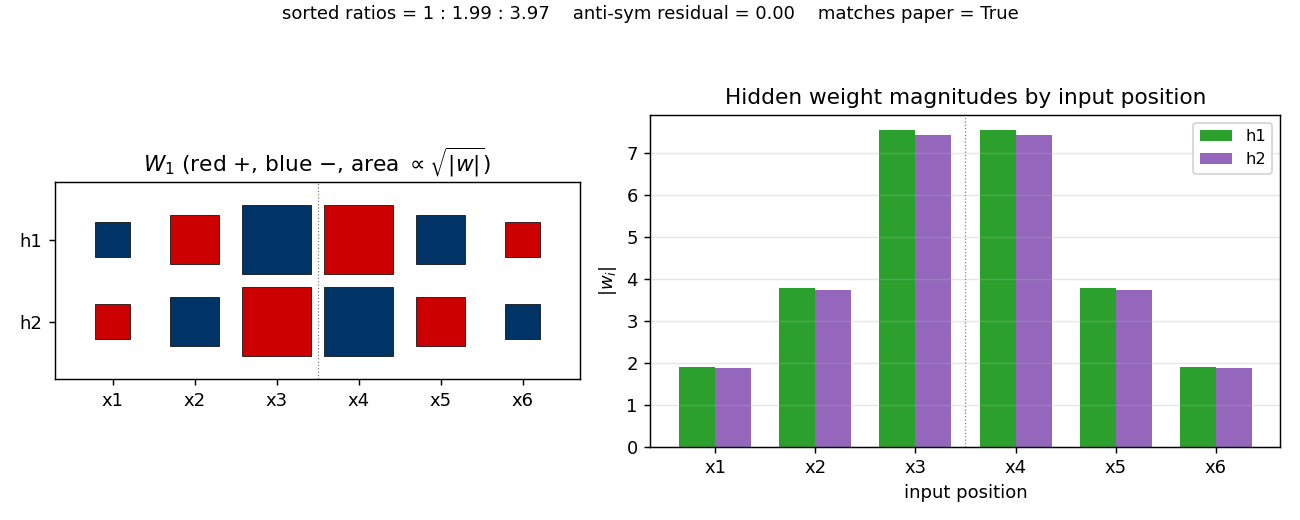

| n-bit-parity | yes (qualitative; thermometer code partial) | 30 min | 0.20s |

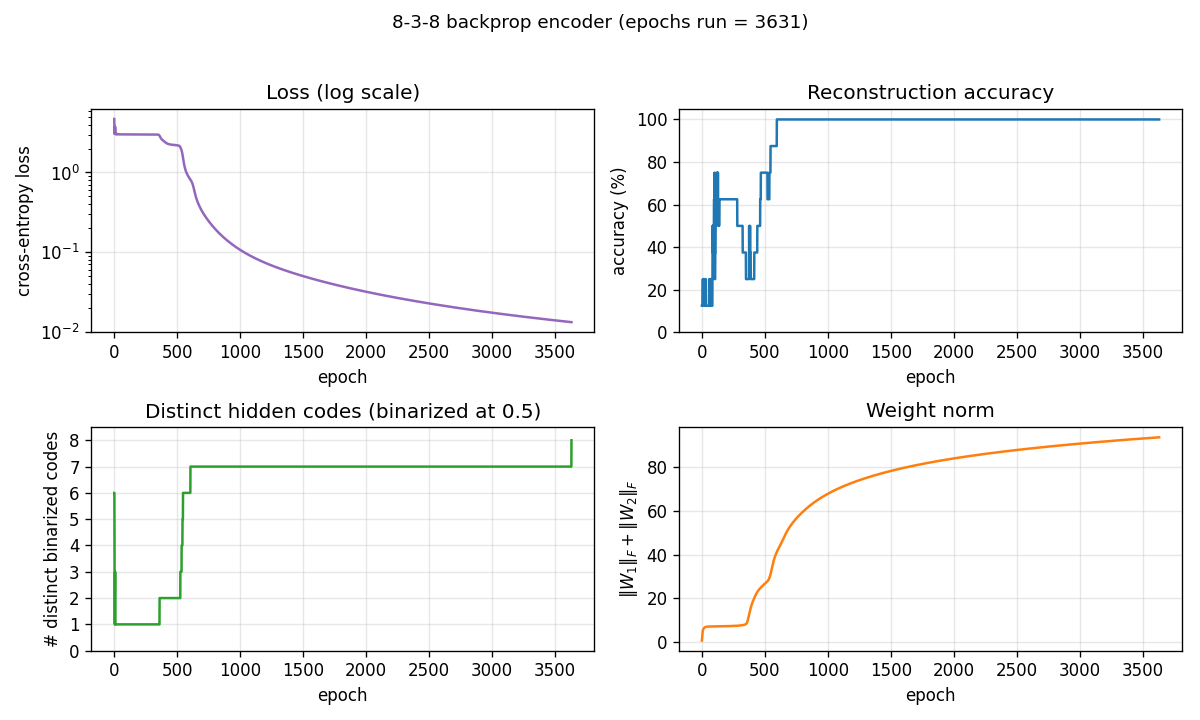

| encoder-backprop-8-3-8 | yes (70% strict 8/8 distinct codes) | ~10 min | 0.6s |

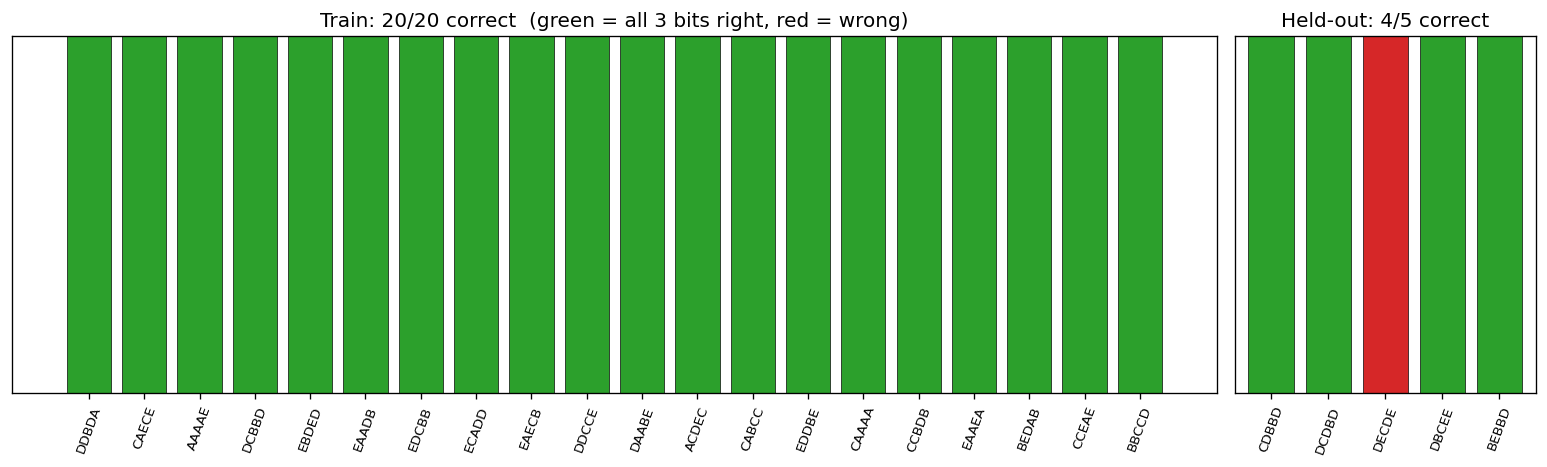

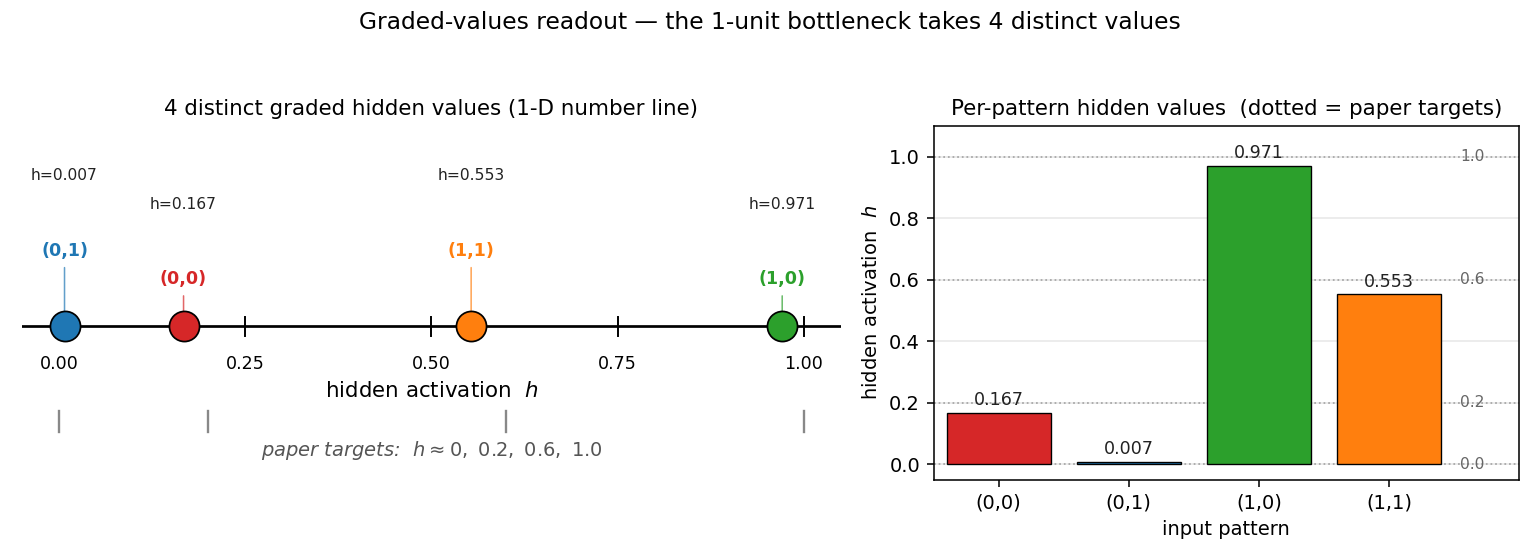

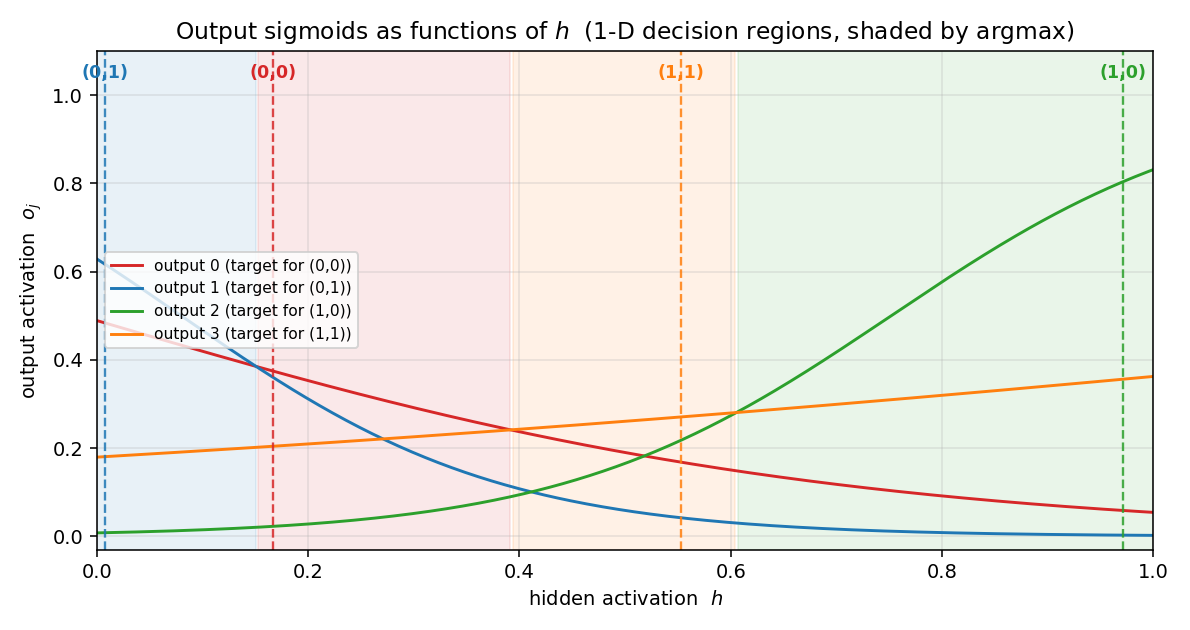

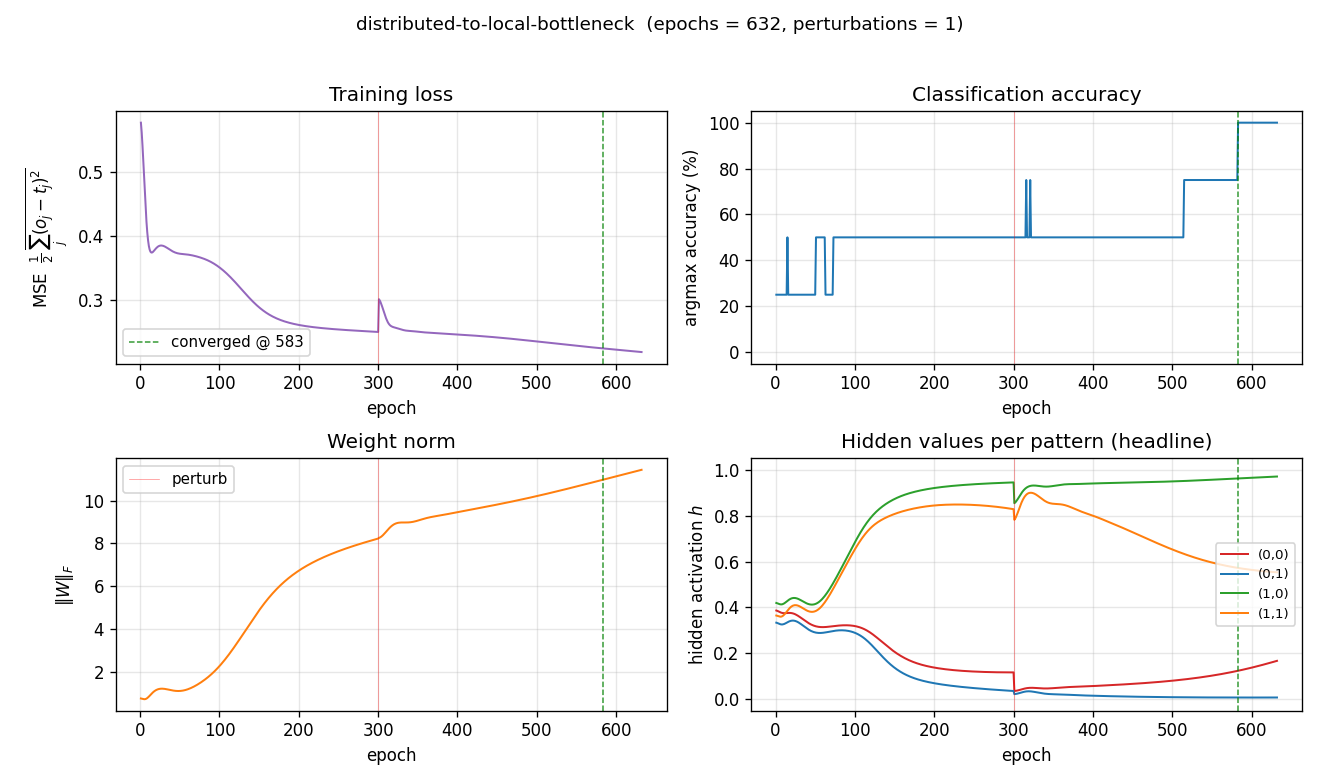

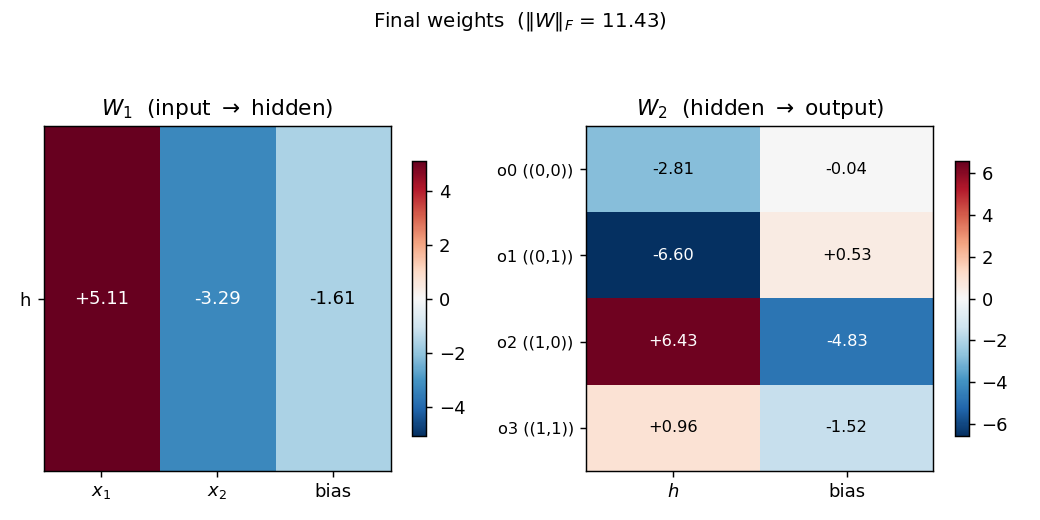

| distributed-to-local-bottleneck | yes (graded values 0.007/0.167/0.553/0.971) | 75 min | 0.082s |

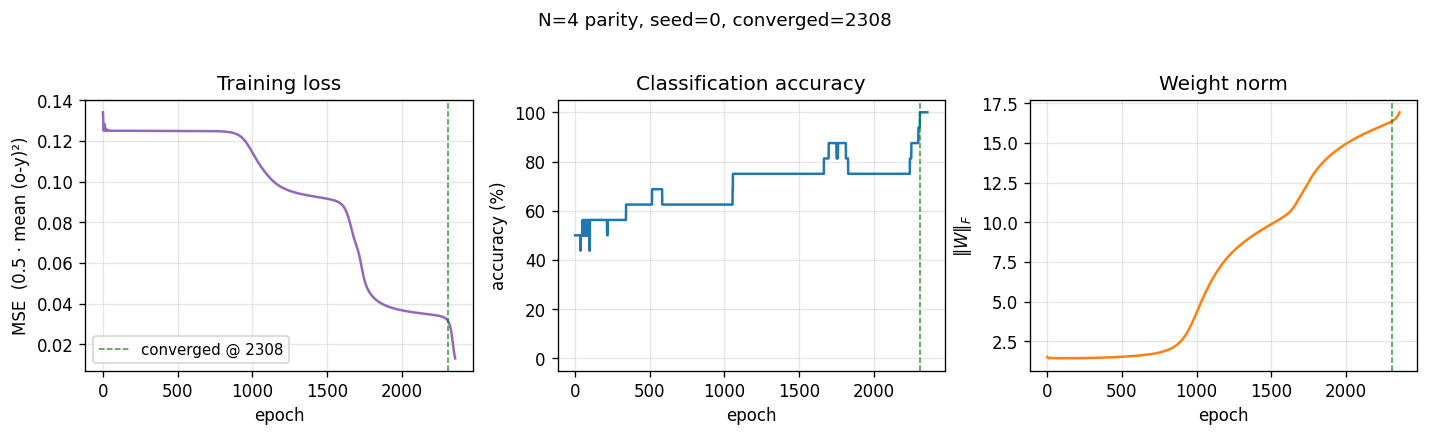

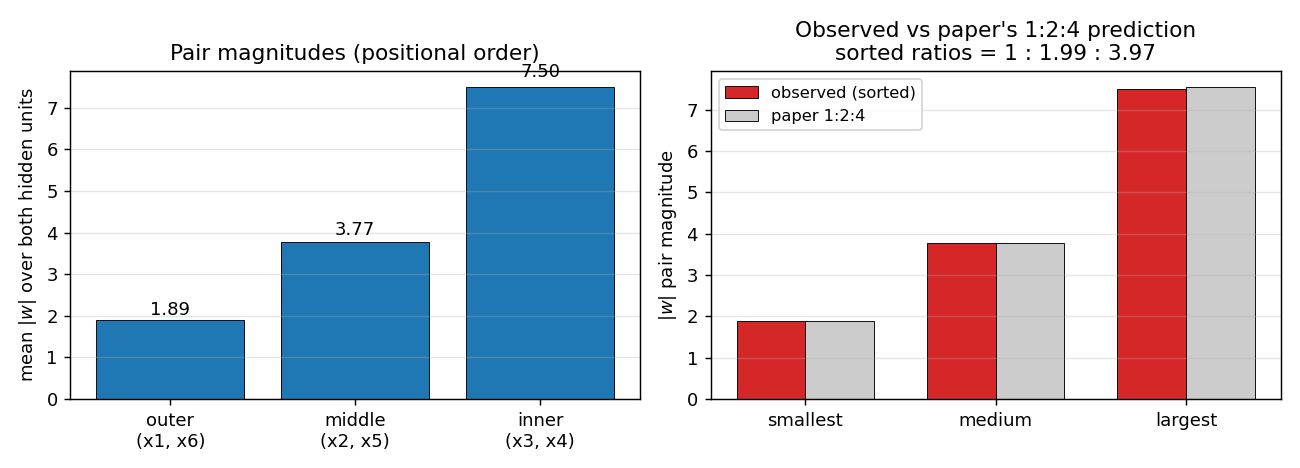

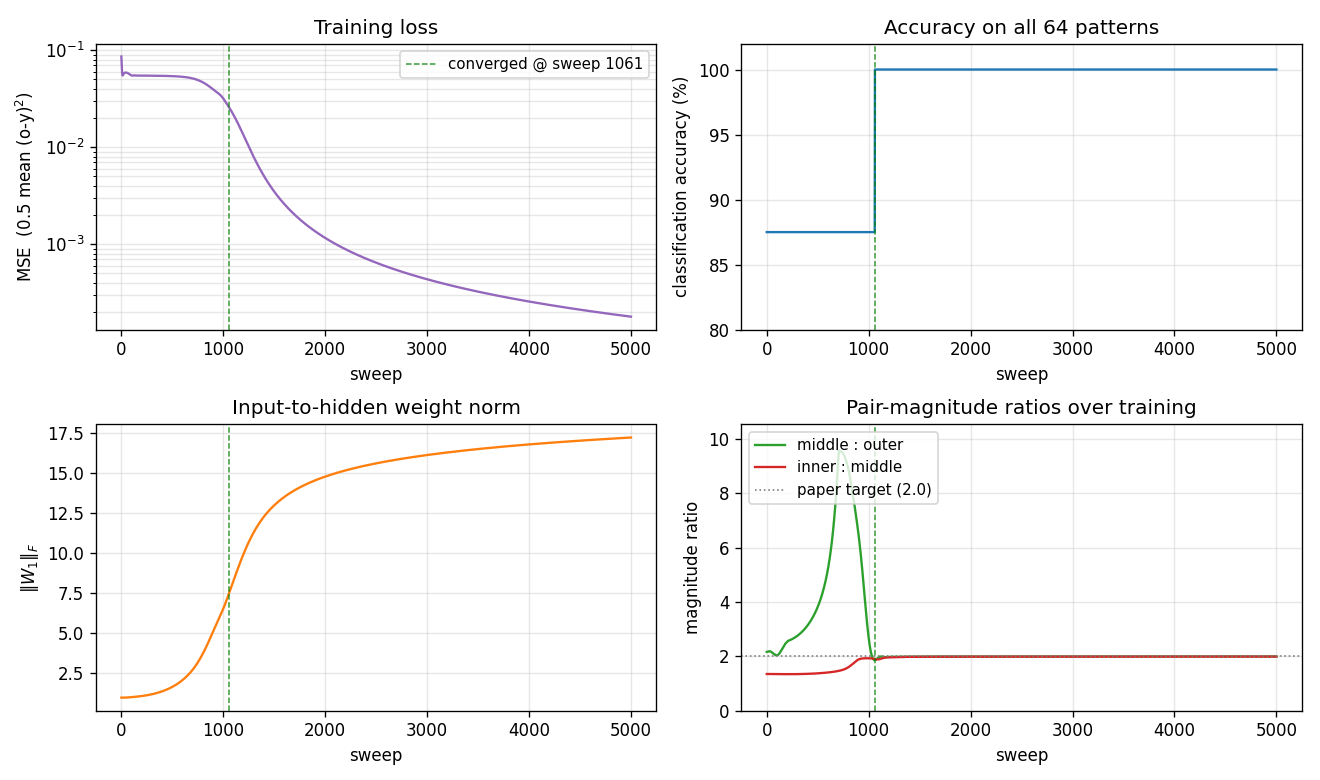

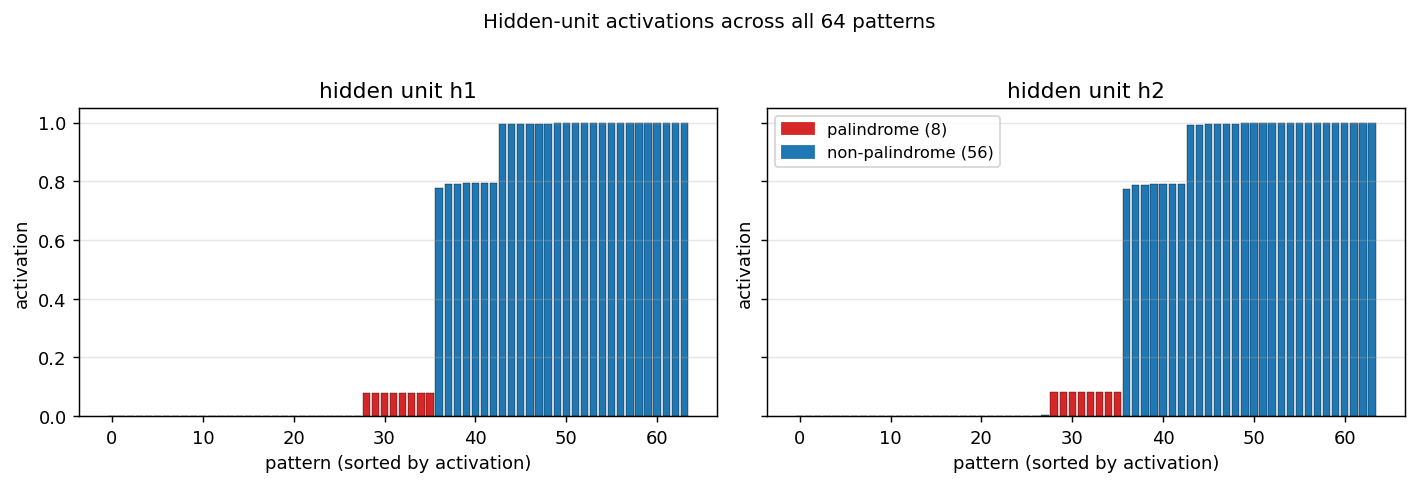

| symmetry | yes (1 : 1.994 : 3.969 weight ratio) | 12.8 min | 0.4s |

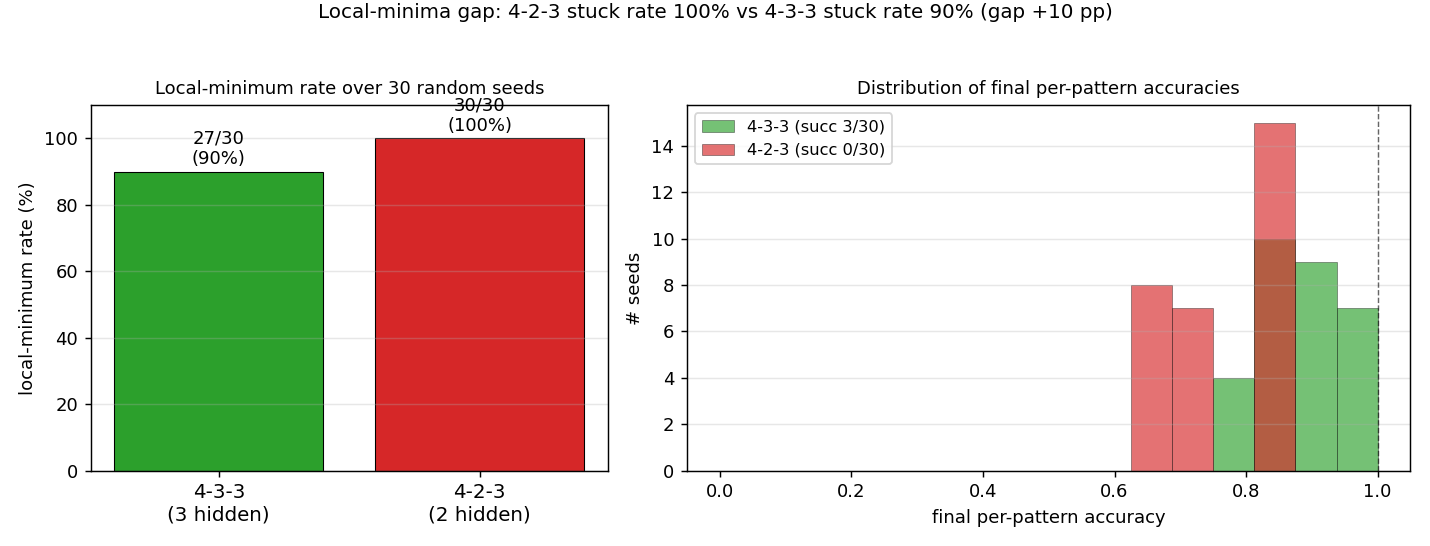

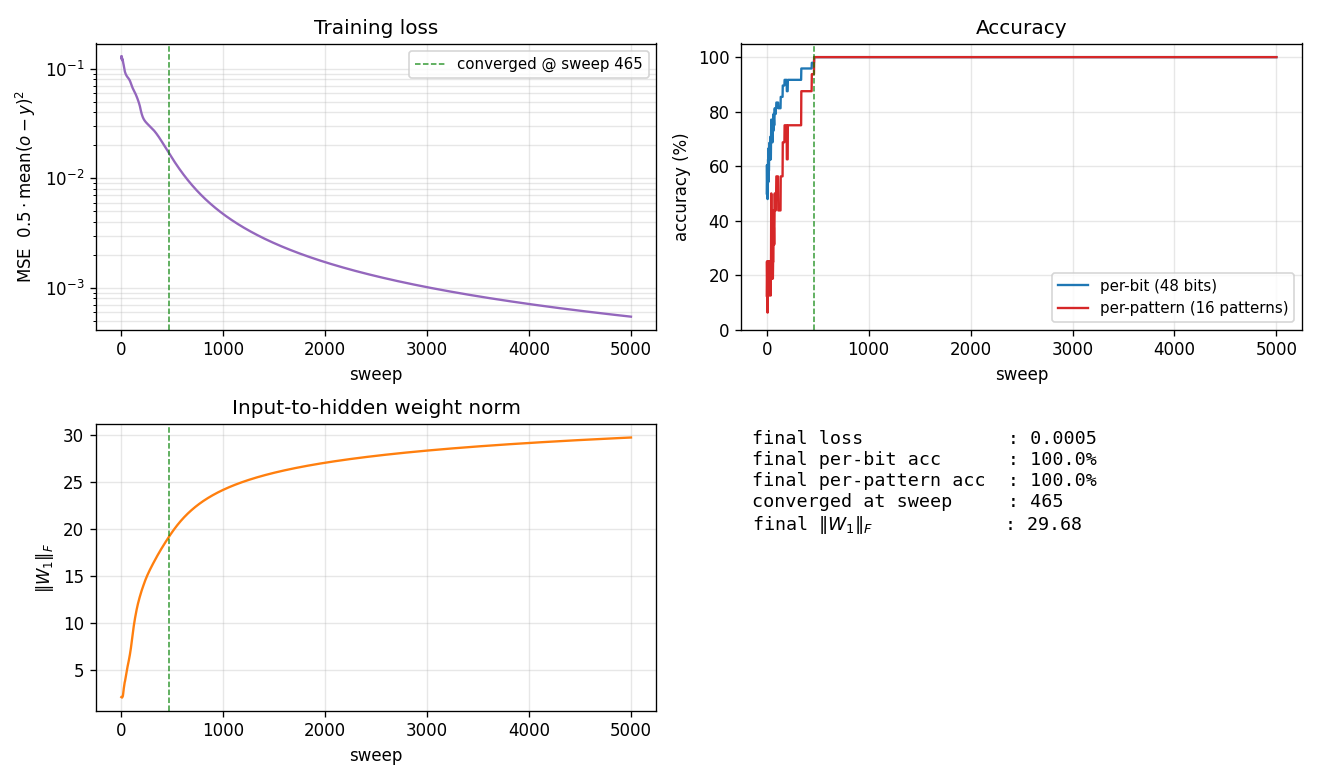

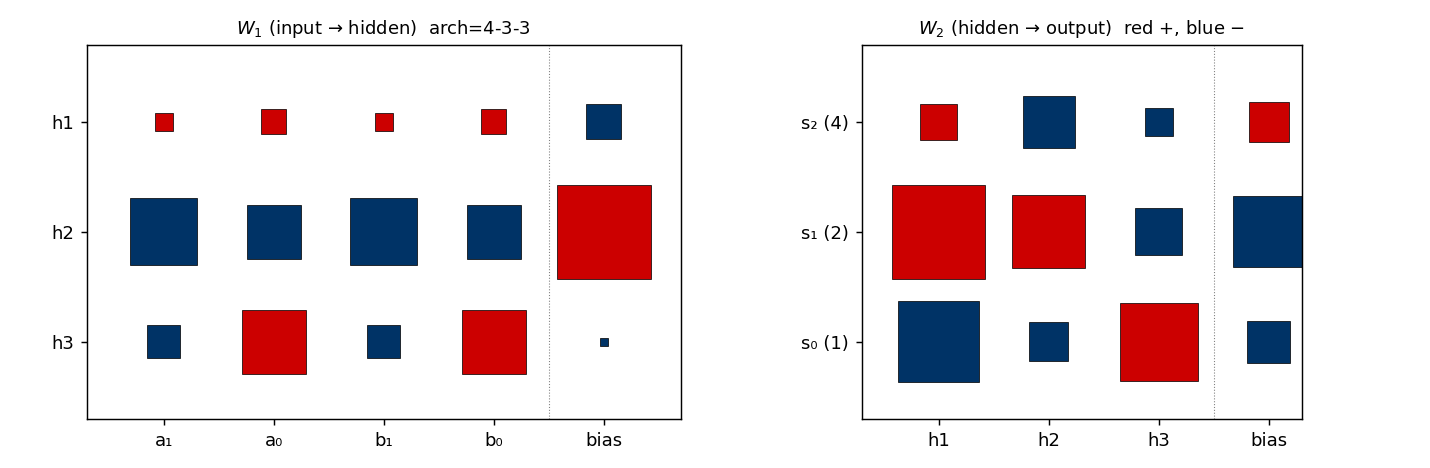

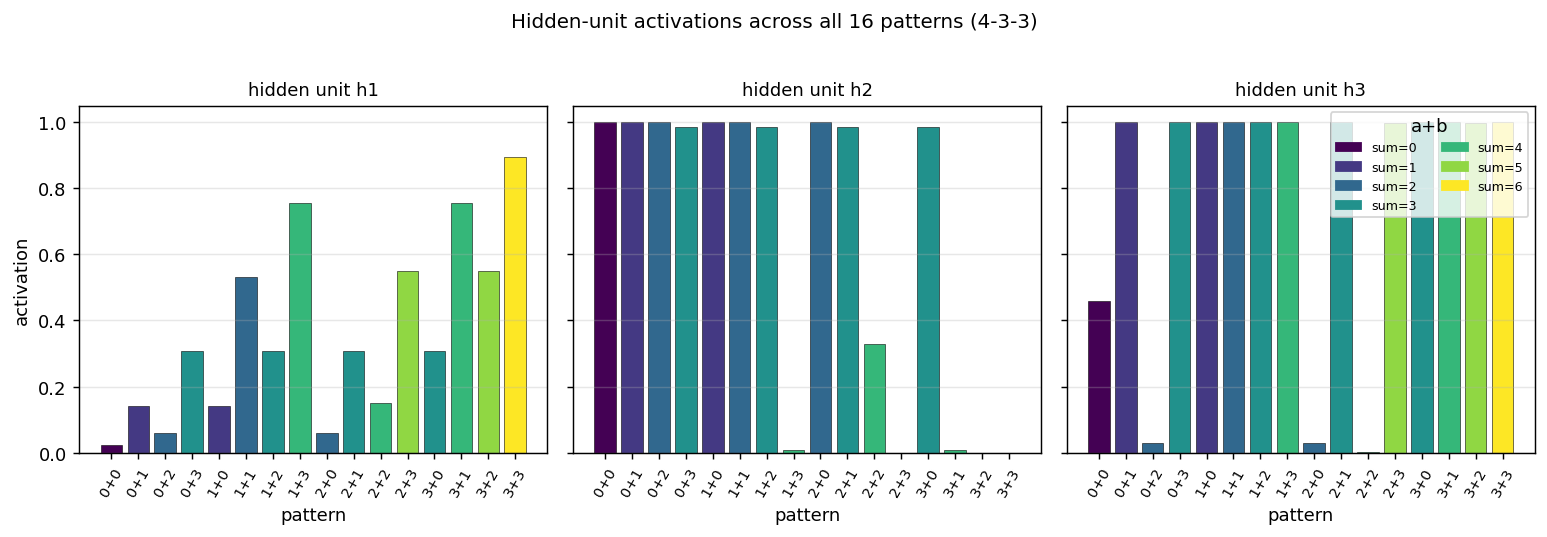

| binary-addition | yes (qualitatively; 4-3-3 succeeds, 4-2-3 stuck) | ~2 hr | 44s |

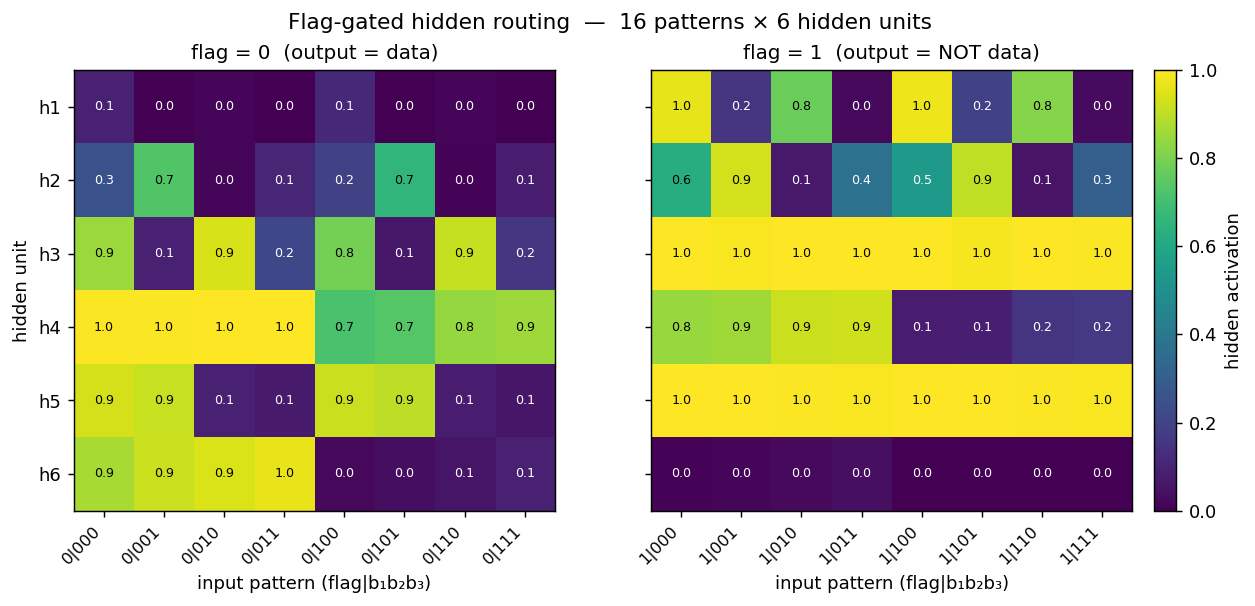

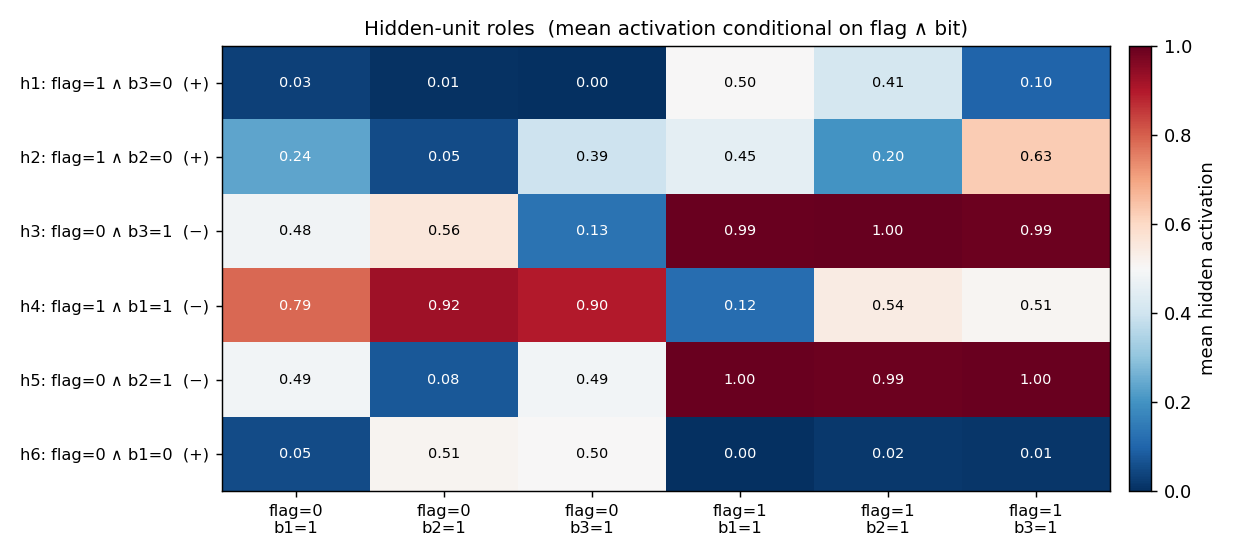

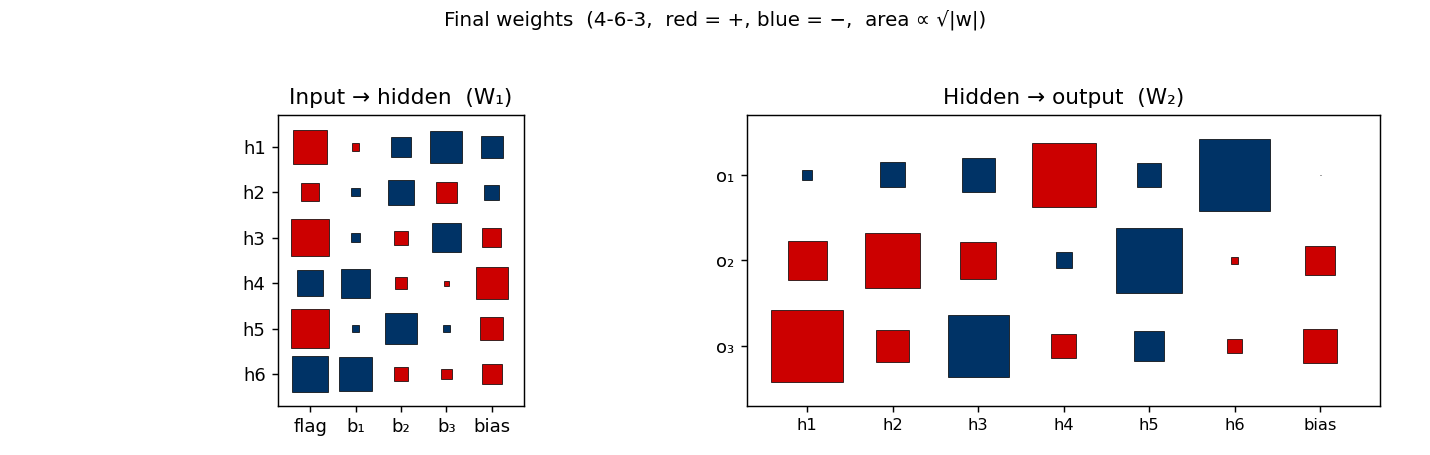

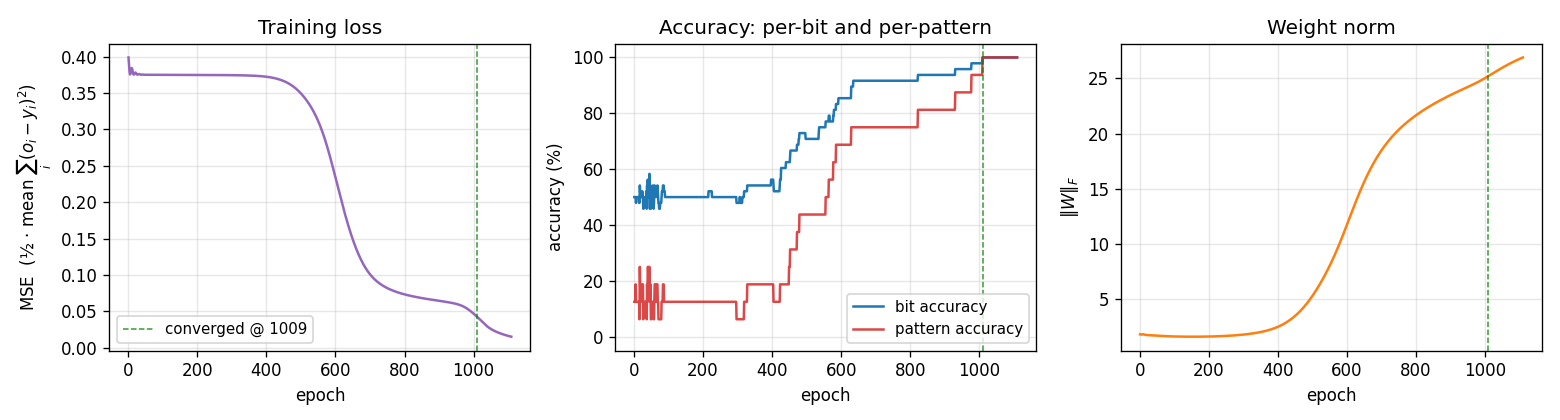

| negation | yes (4-6-3 deviation justified) | 25 min | 0.10s |

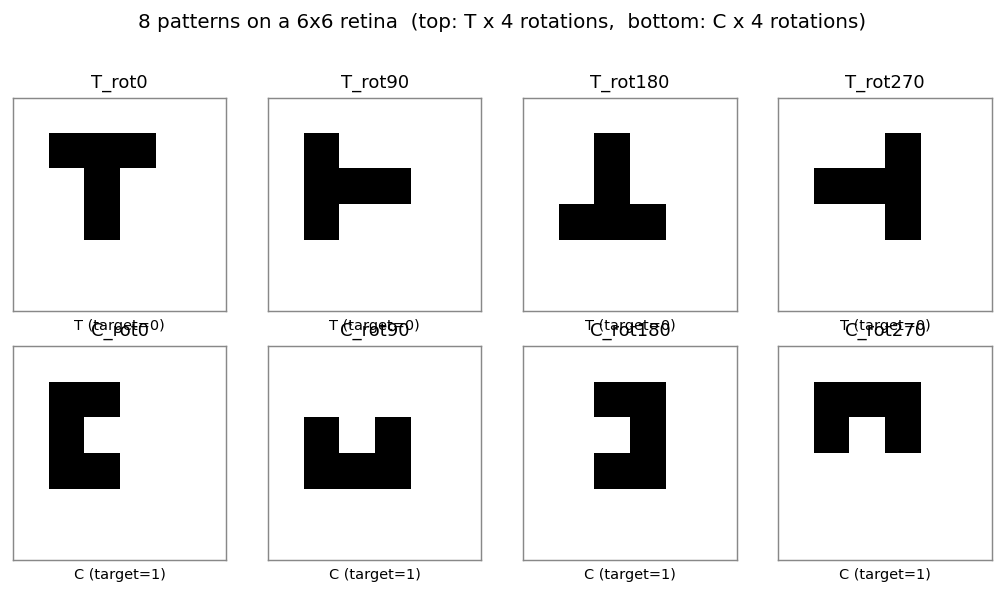

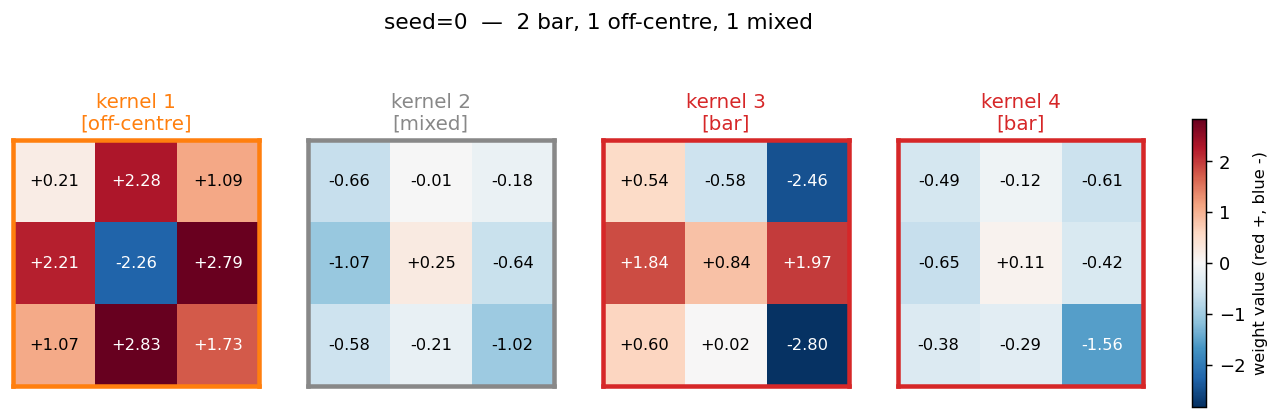

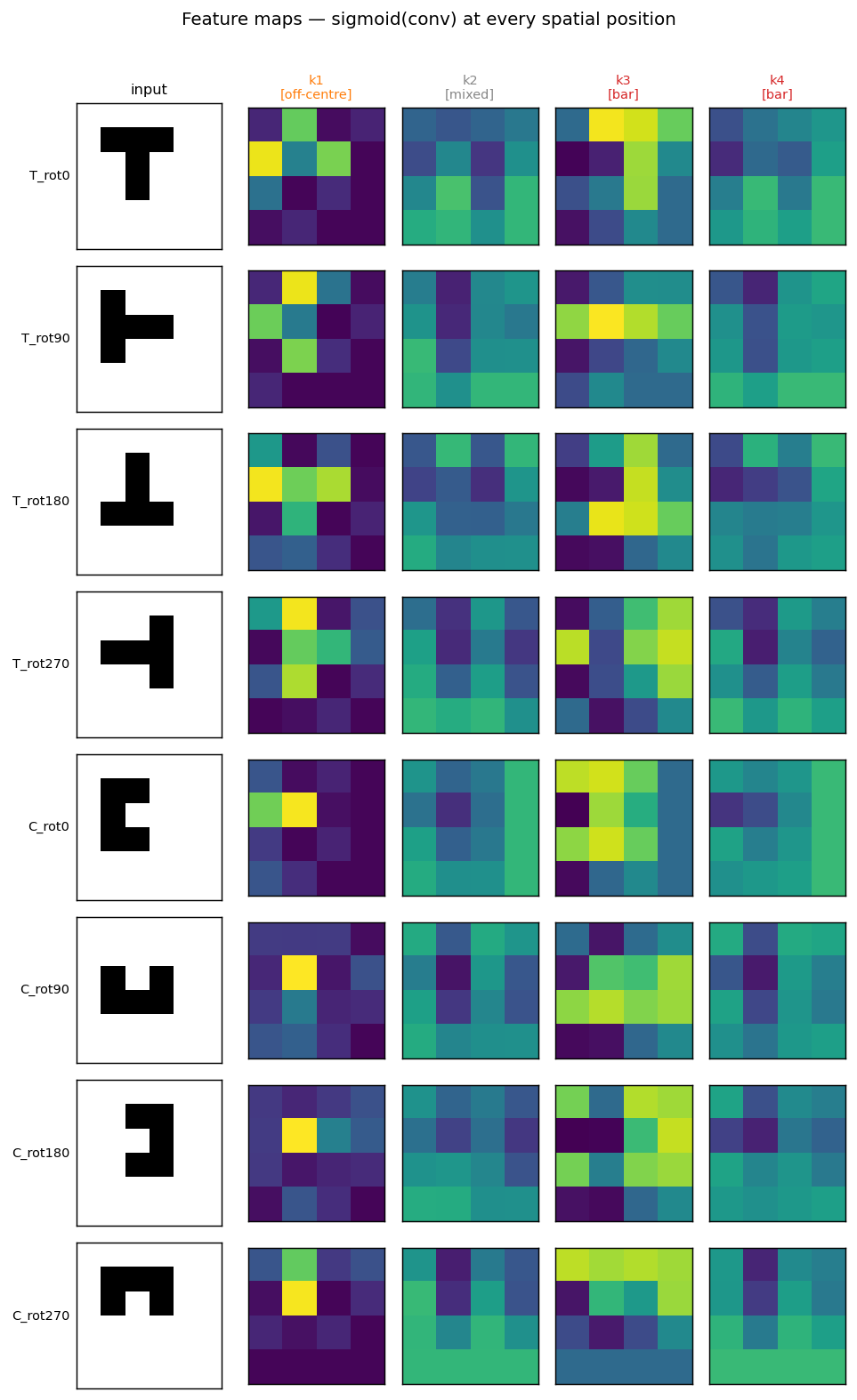

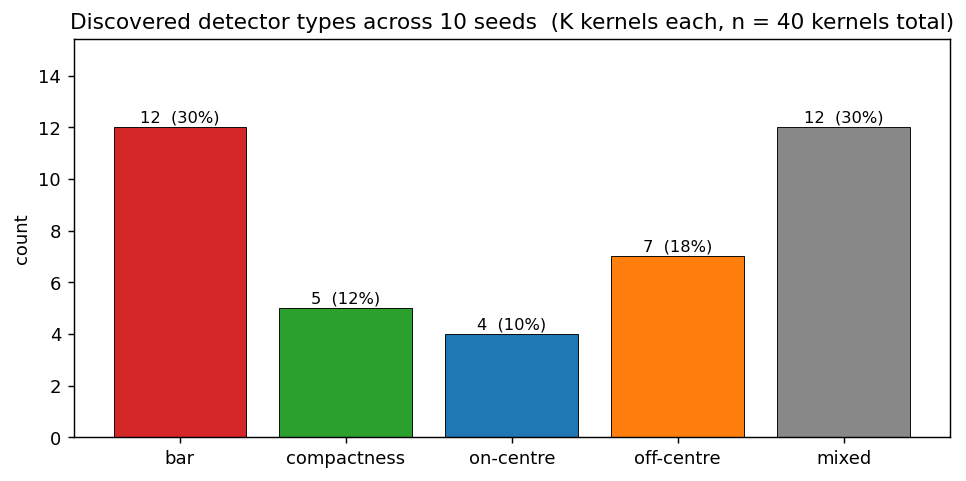

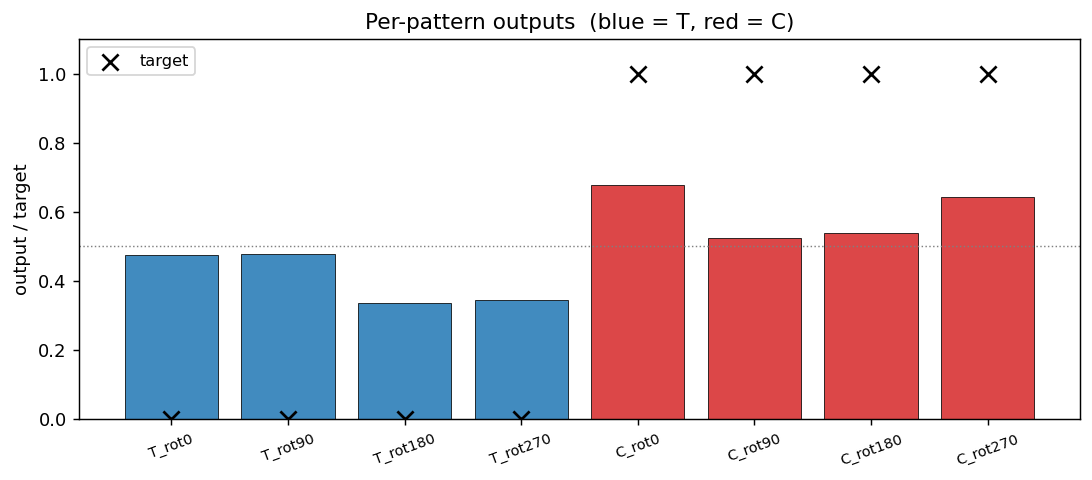

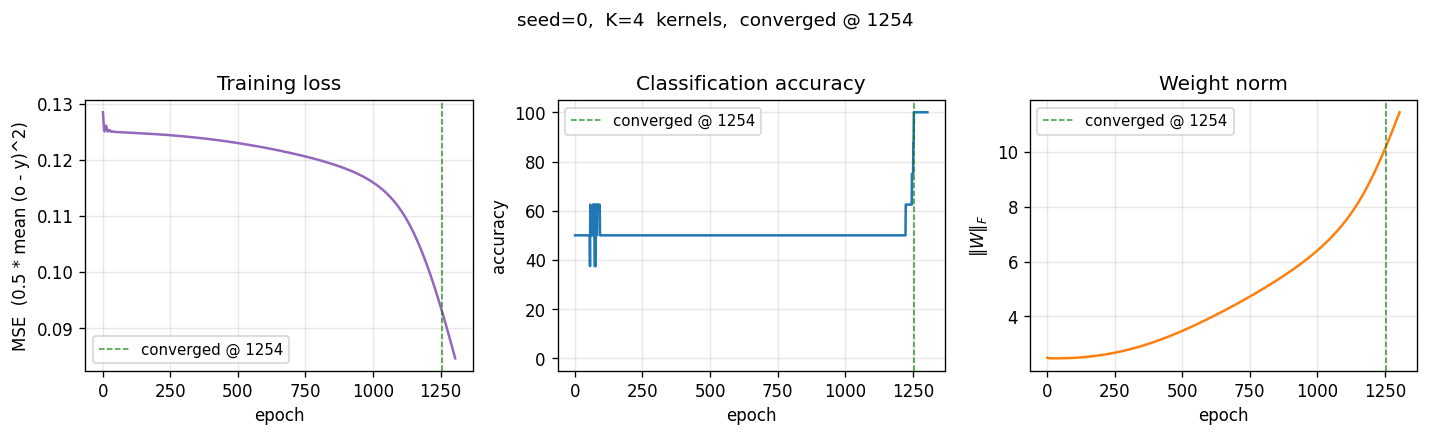

| t-c-discrimination | yes (all 3 detector families emerge) | 30 min | 0.69s |

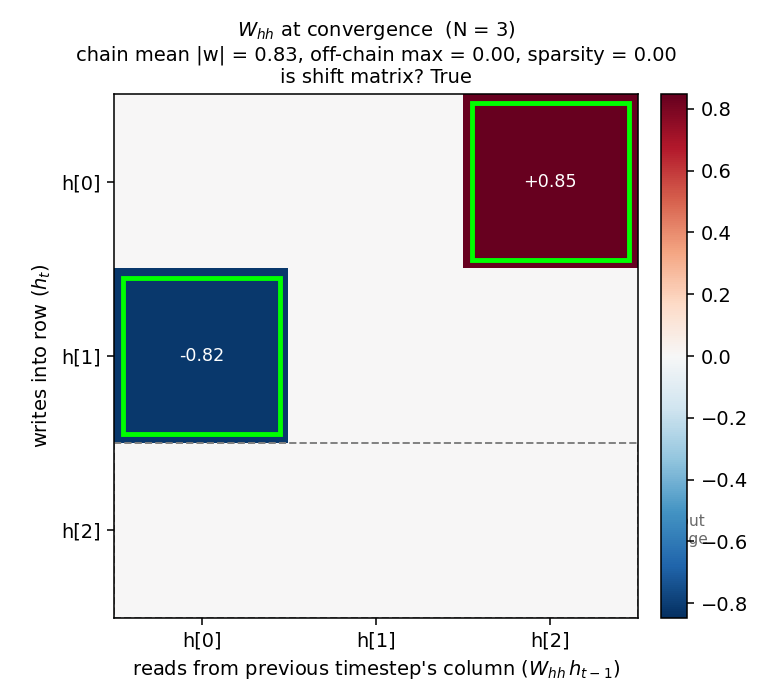

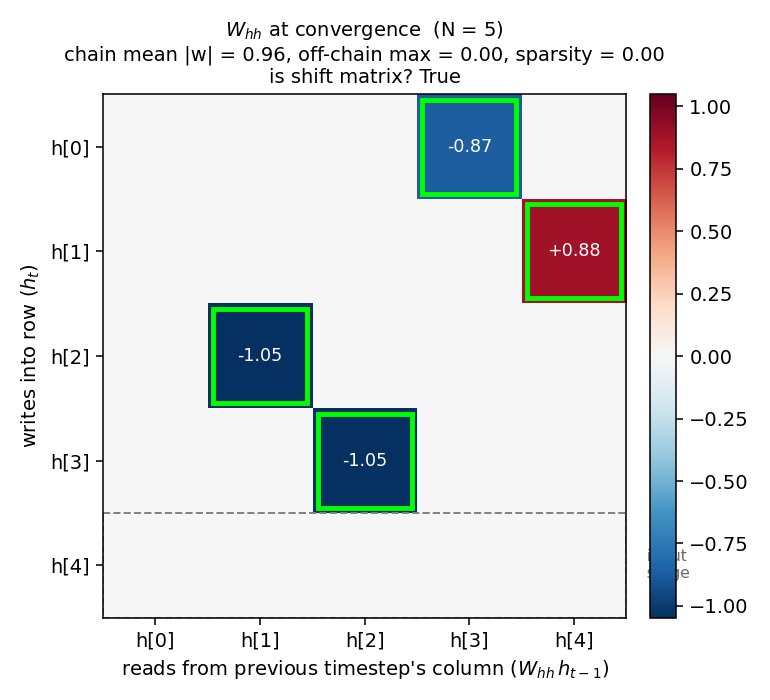

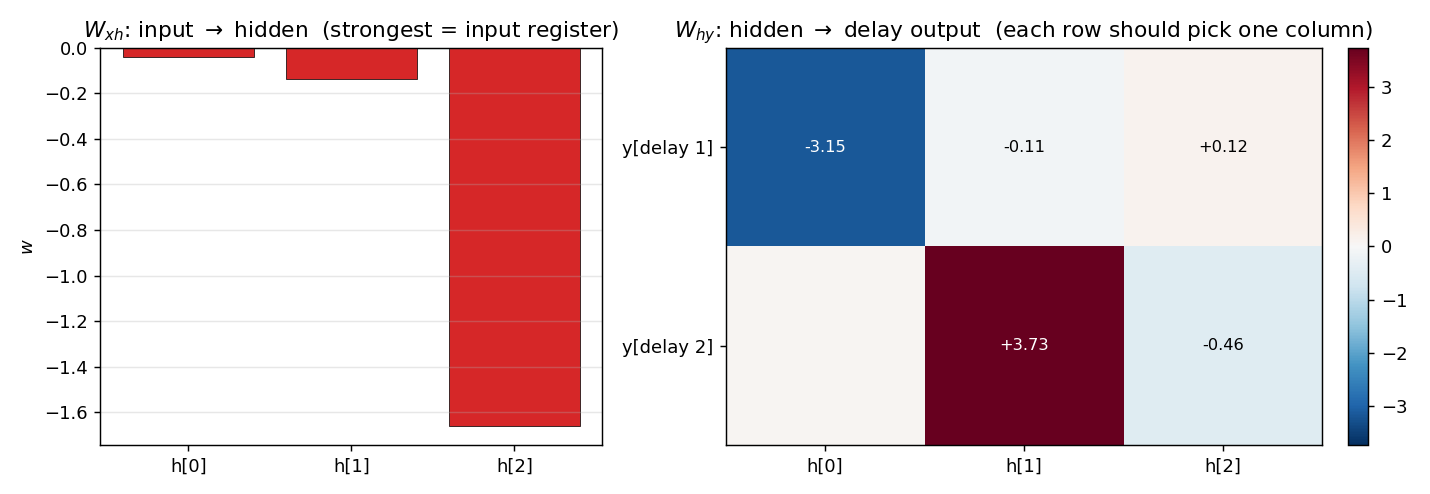

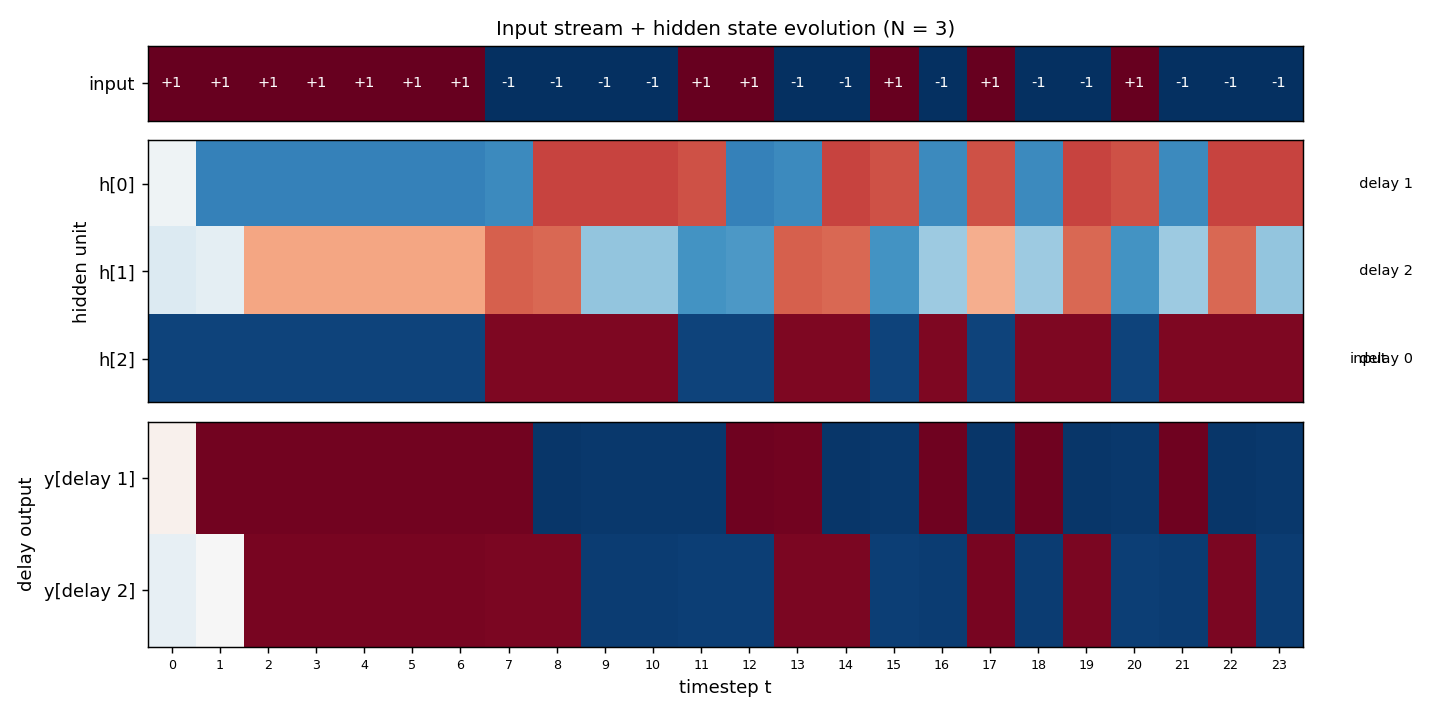

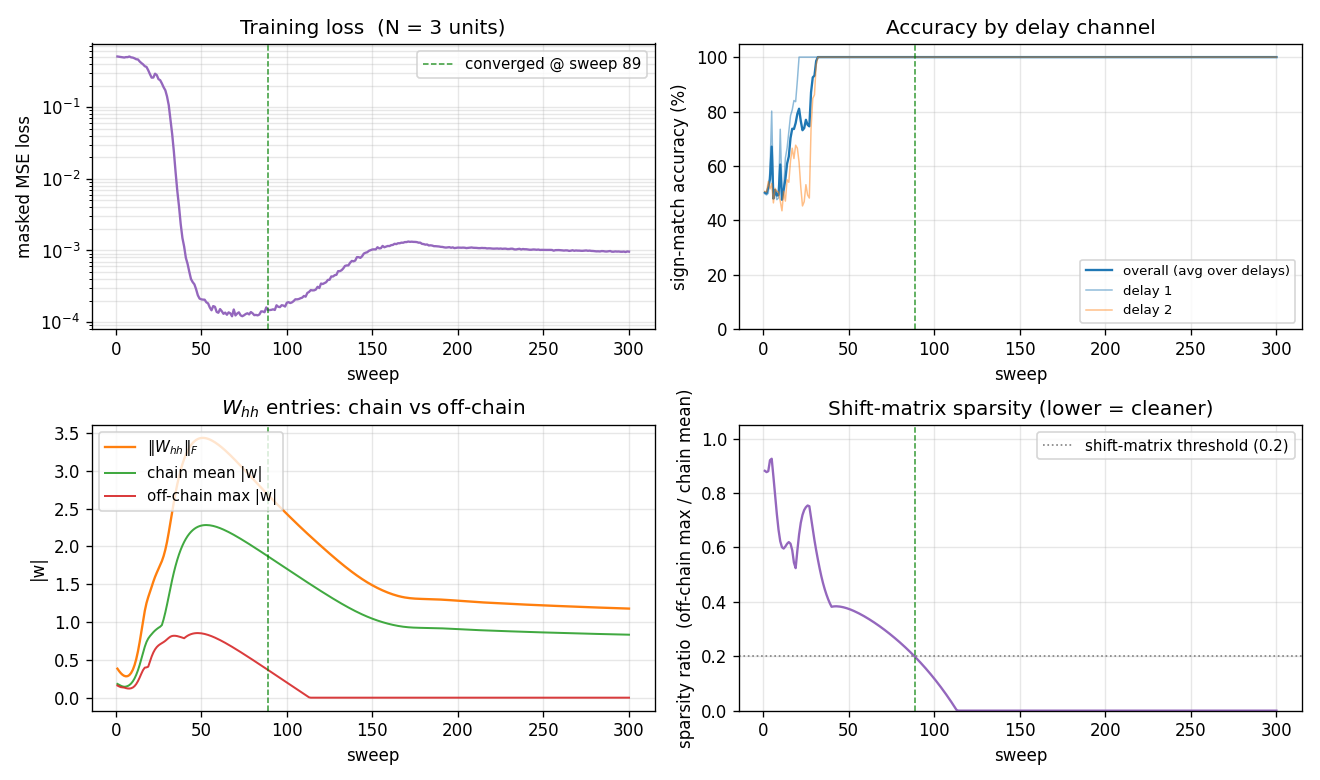

| recurrent-shift-register | yes (89 sweeps N=3, 121 sweeps N=5) | 25 min | 0.9s / 1.1s |

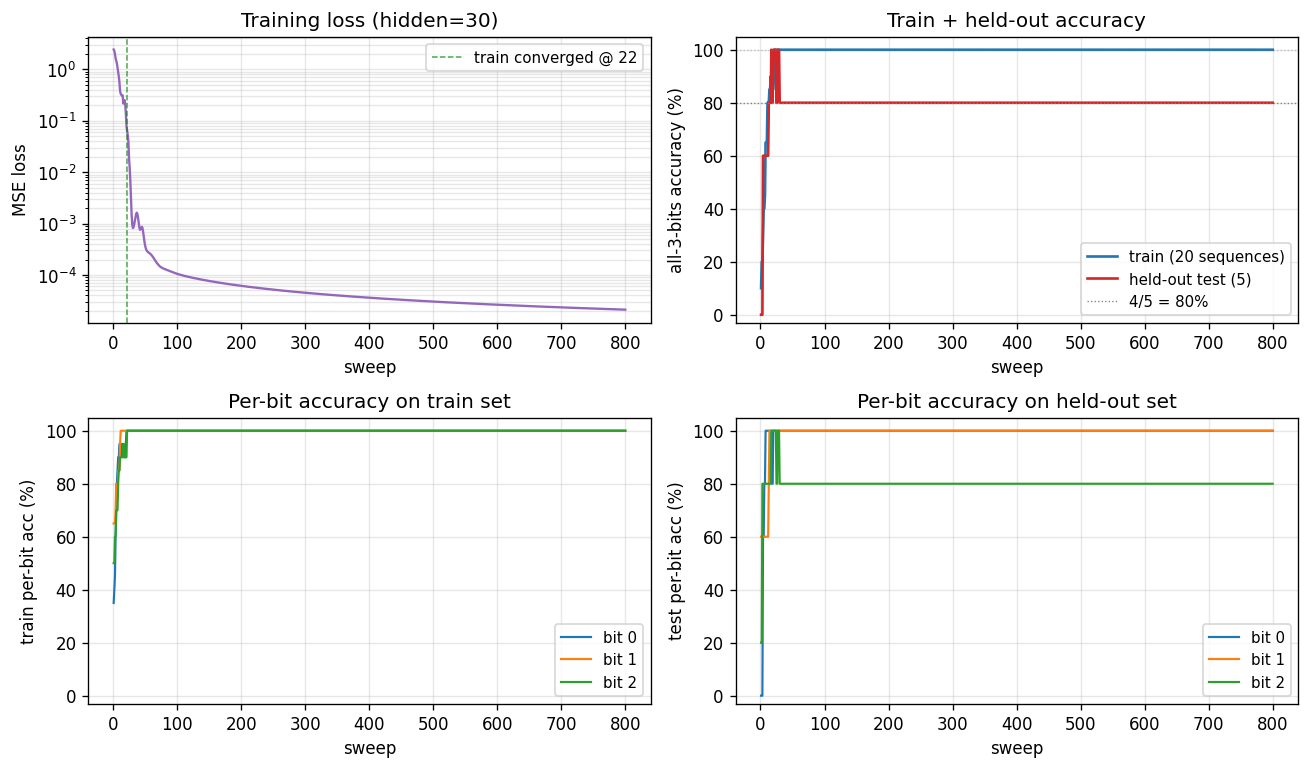

| sequence-lookup-25 | yes (4-5/5 held-out generalization) | 70 min | 0.20s / 5.78s |

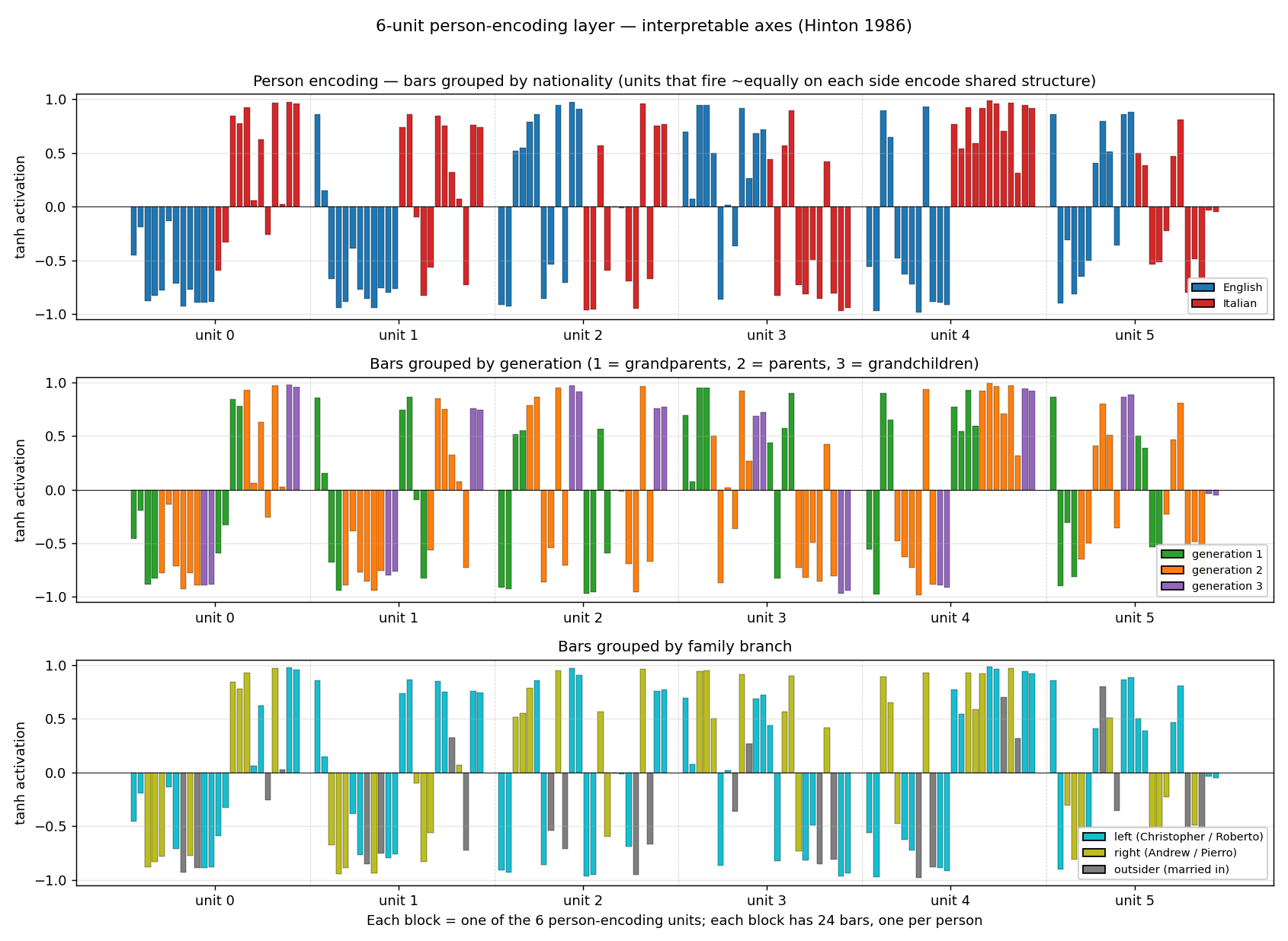

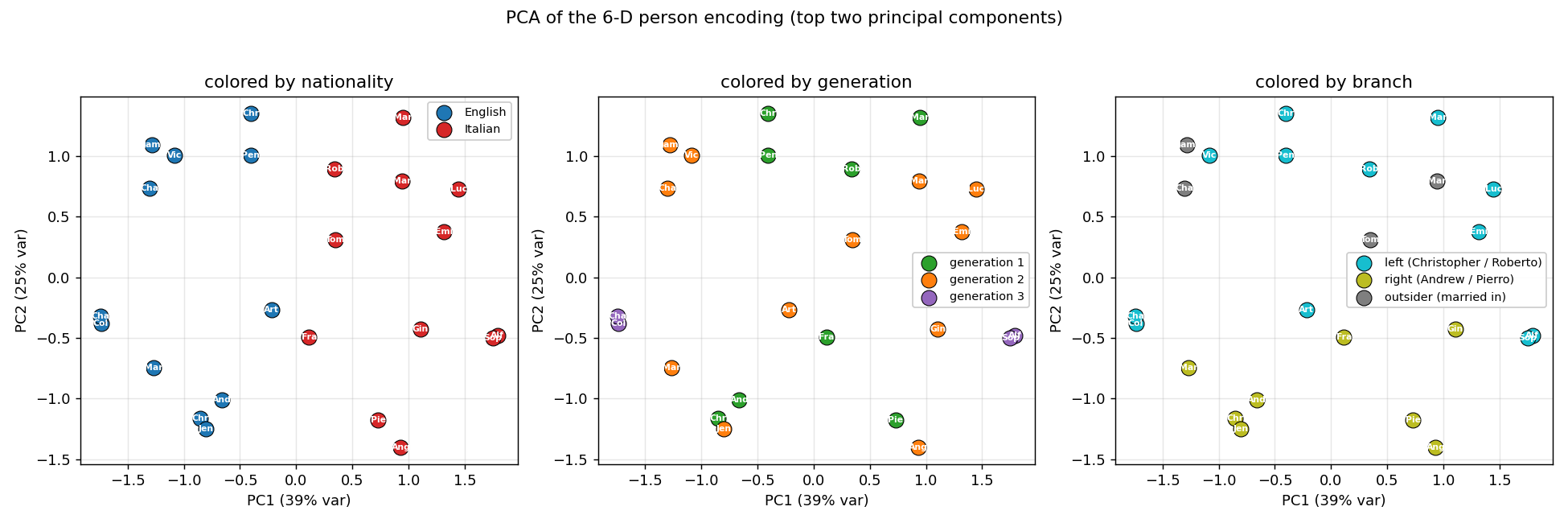
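For orientation, the smallest problem in this group can be sketched in a few lines of numpy. The code below is an illustrative 2-2-1 sigmoid network trained by full-batch backprop on xor. The architecture, learning rate, epoch count and seed are assumptions, not the stub's settings, and a given seed may stall in a local minimum.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def train_xor(n_hidden=2, epochs=5000, lr=0.5, seed=0):
    """Full-batch backprop on xor with a sigmoid MLP (illustrative settings)."""
    rng = np.random.default_rng(seed)
    X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
    y = np.array([[0], [1], [1], [0]], dtype=float)
    W1 = rng.standard_normal((2, n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.standard_normal((n_hidden, 1))
    b2 = np.zeros(1)
    losses = []
    for _ in range(epochs):
        # Forward pass.
        h = sigmoid(X @ W1 + b1)
        out = sigmoid(h @ W2 + b2)
        # Loss: half the summed squared error over the four patterns.
        losses.append(0.5 * float(np.sum((out - y) ** 2)))
        # Backward pass: propagate error derivatives through both sigmoid layers.
        delta_out = (out - y) * out * (1.0 - out)
        delta_h = (delta_out @ W2.T) * h * (1.0 - h)
        # Gradient-descent updates.
        W2 -= lr * h.T @ delta_out
        b2 -= lr * delta_out.sum(axis=0)
        W1 -= lr * X.T @ delta_h
        b1 -= lr * delta_h.sum(axis=0)
    return (W1, b1, W2, b2), losses


params, losses = train_xor()
```

Plotting `losses` across several seeds is one way to see run-to-run variation in epochs-to-solution.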

Hinton (1986) — Distributed representations of concepts

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

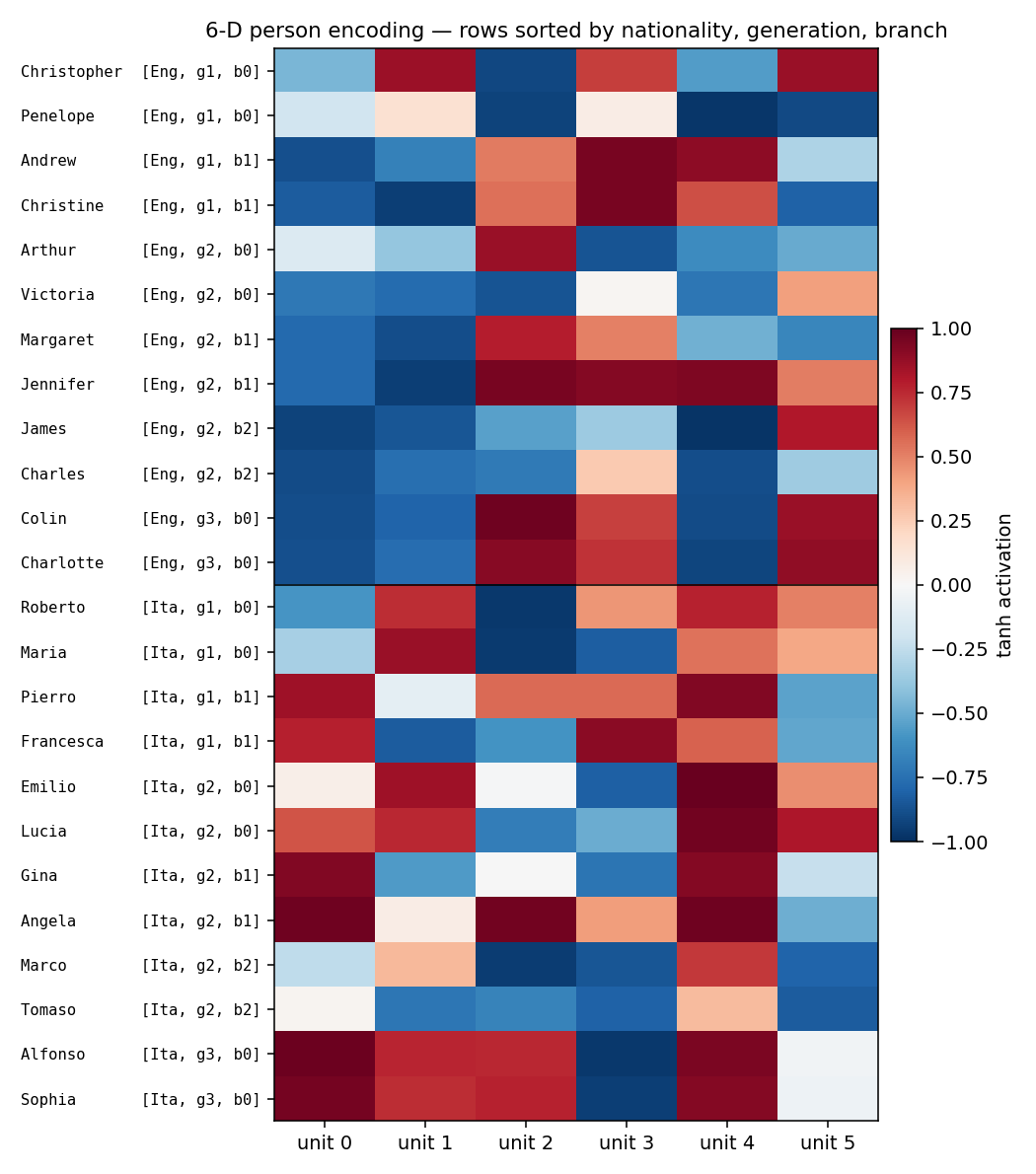

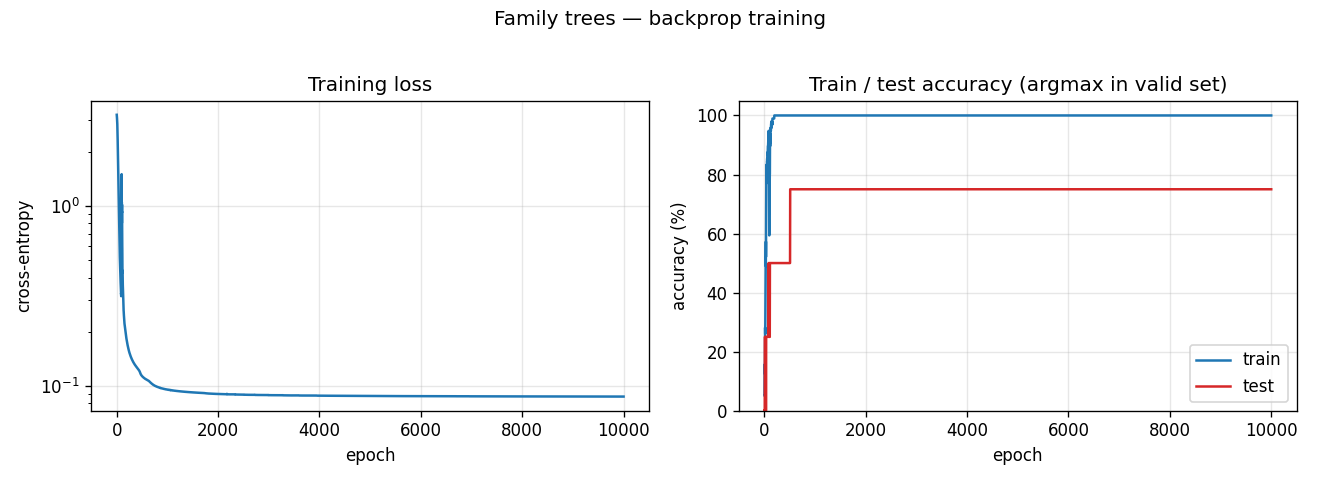

| family-trees | yes (3/4 best, 1.9/4 mean — matches paper) | ~1 hr | 2.1s |

Hinton & Sejnowski (1986) — Learning and relearning in Boltzmann machines

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

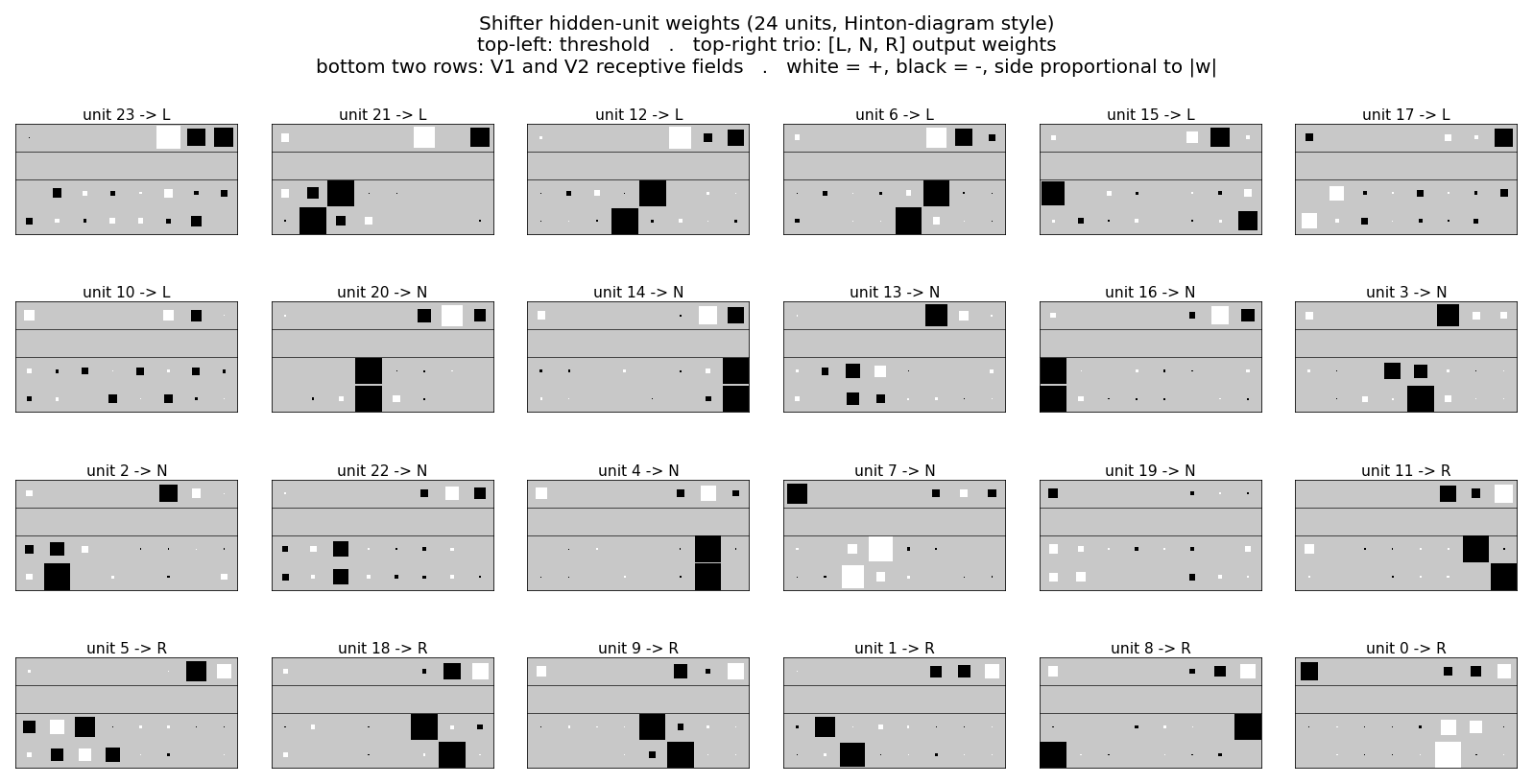

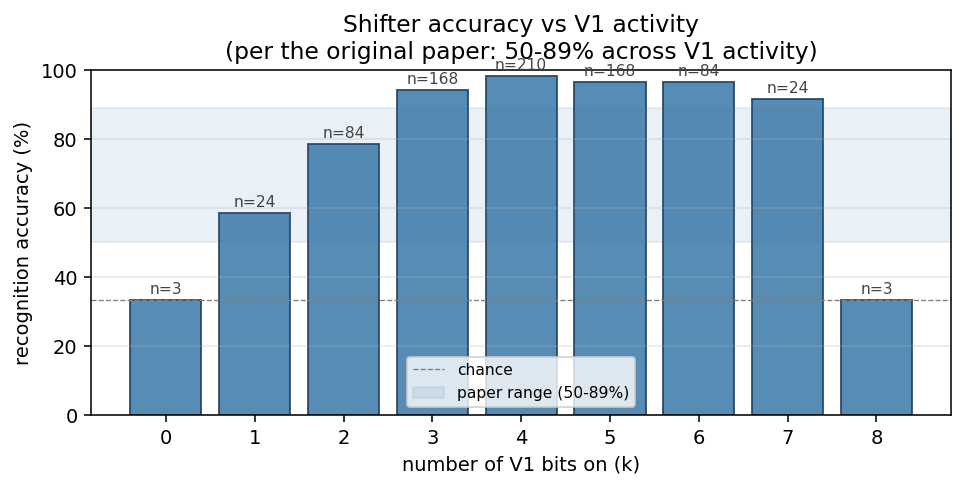

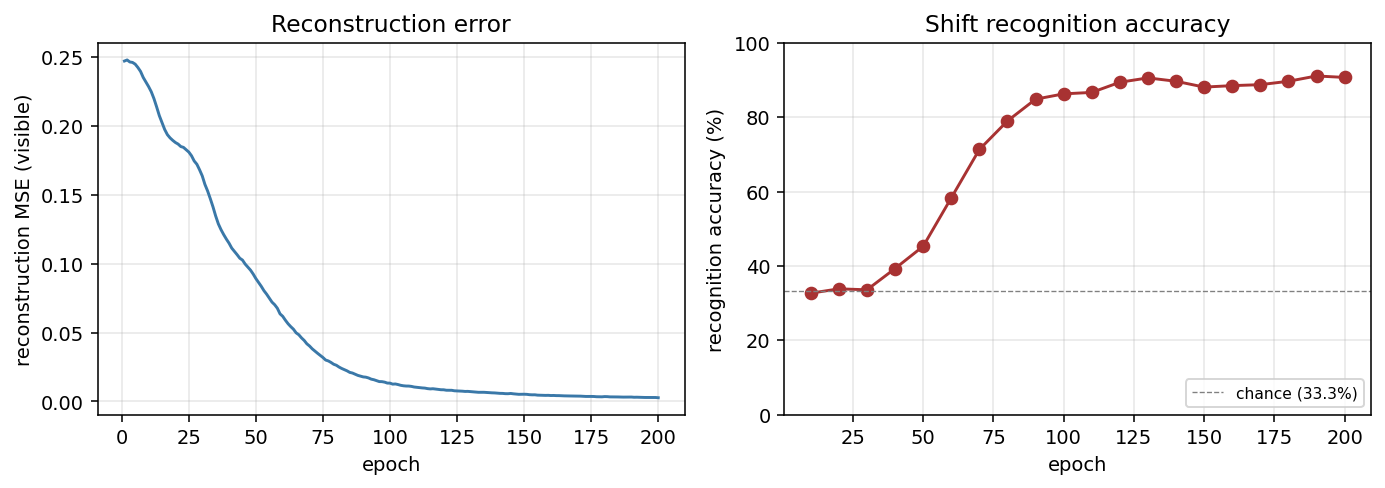

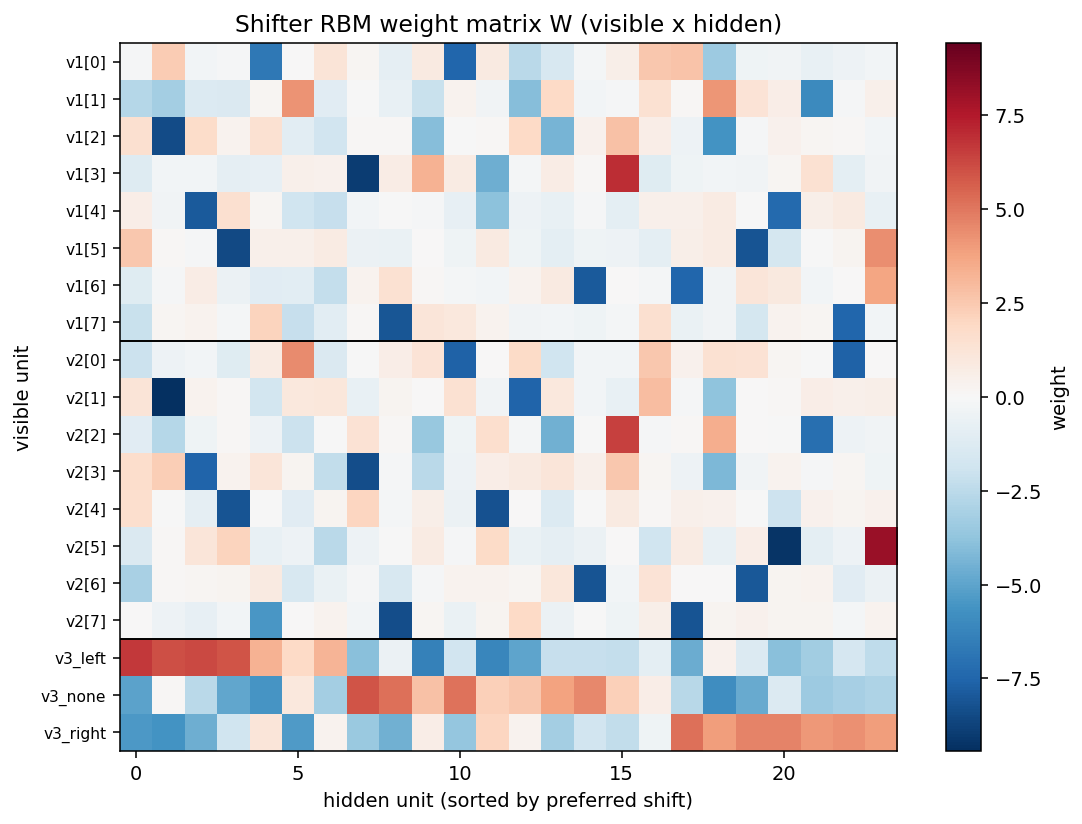

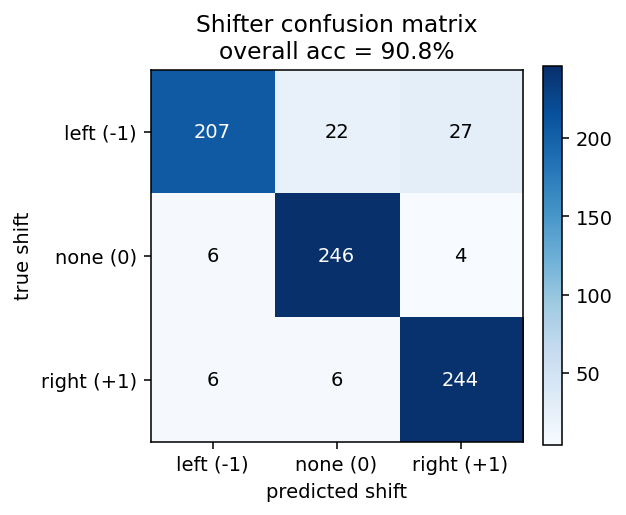

| shifter | yes (92.3% recognition; position-pair detectors) | 30 min | 14s |

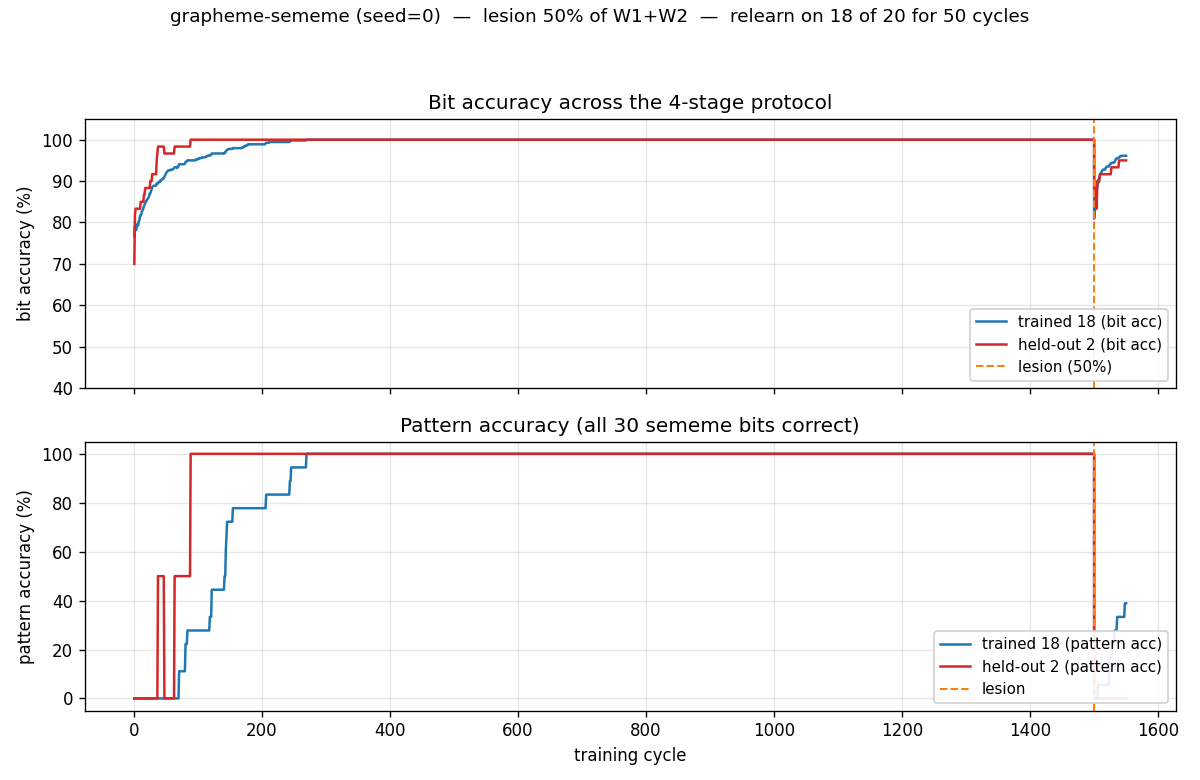

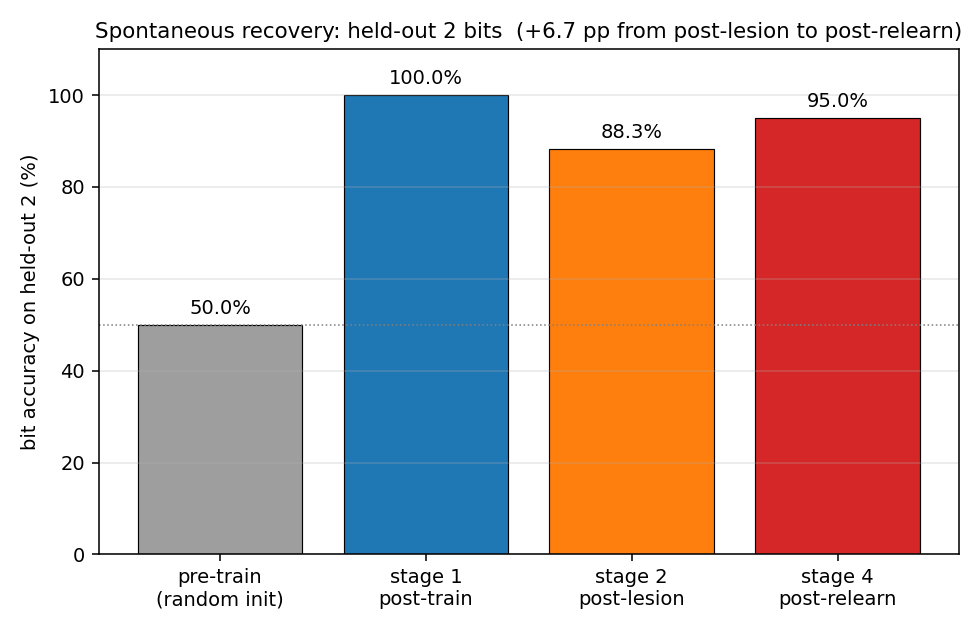

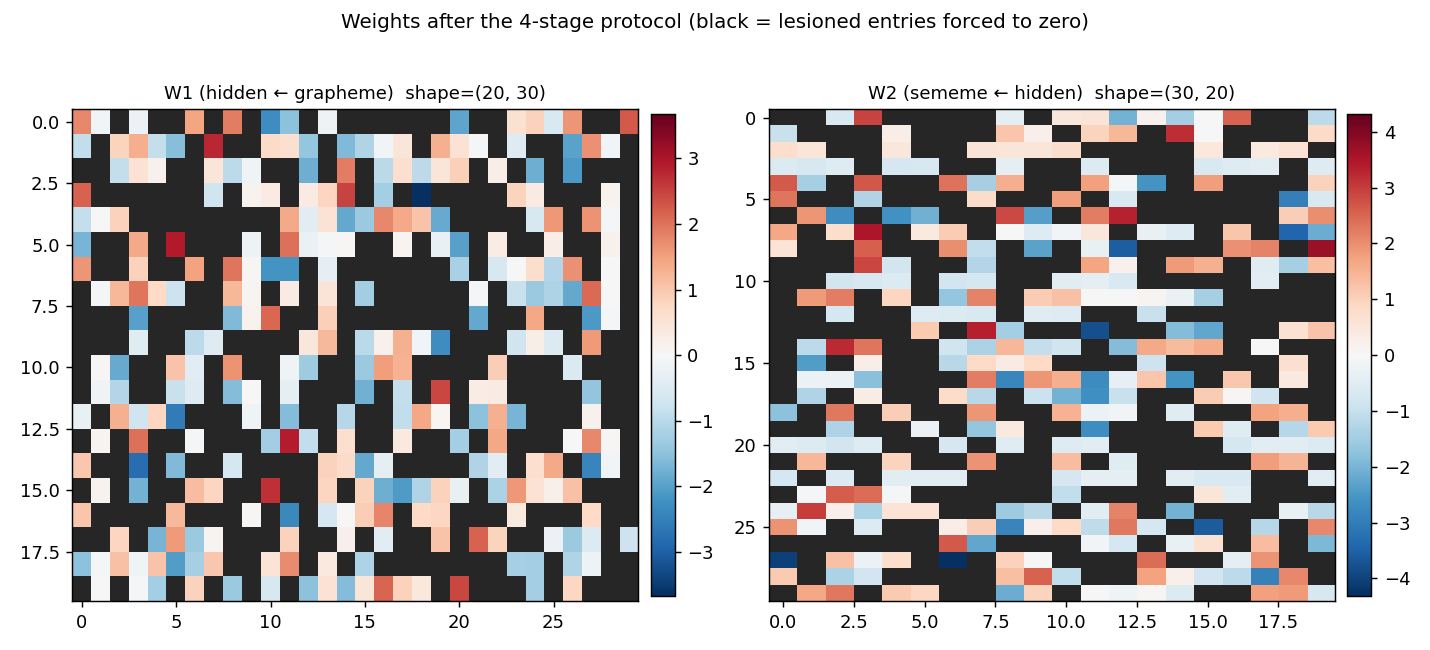

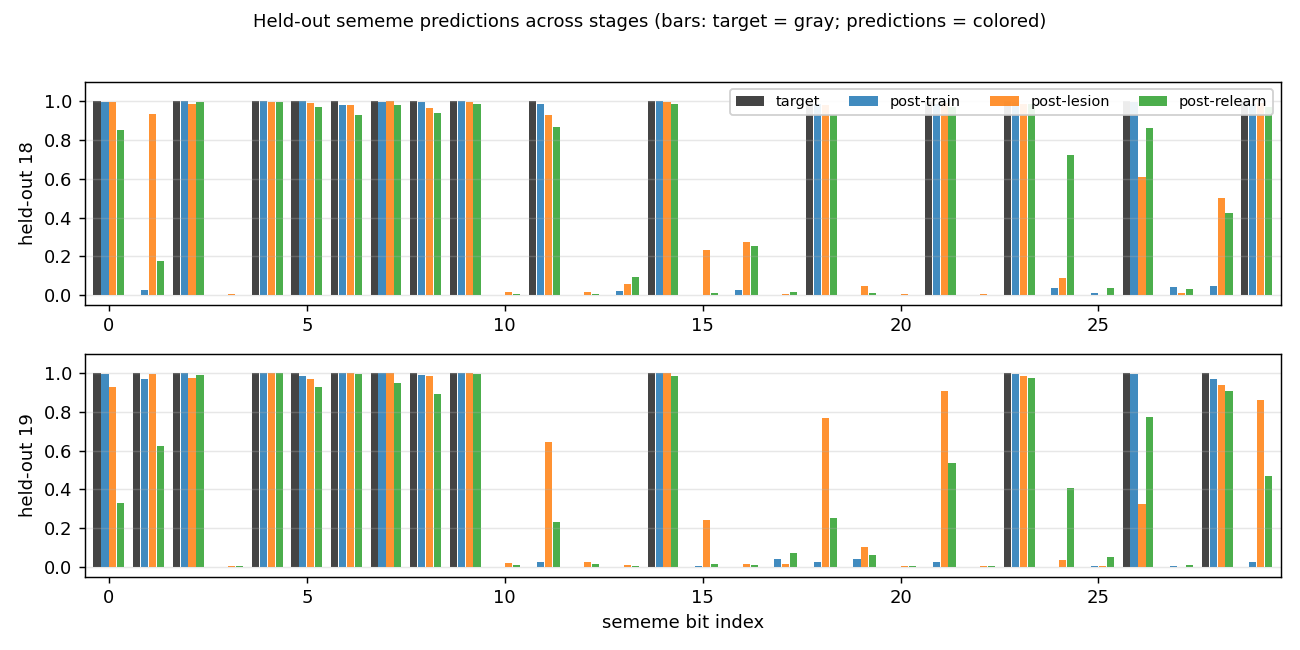

| grapheme-sememe | yes (qualitative; +6.7pp spontaneous recovery) | 70 min | 1.7s |

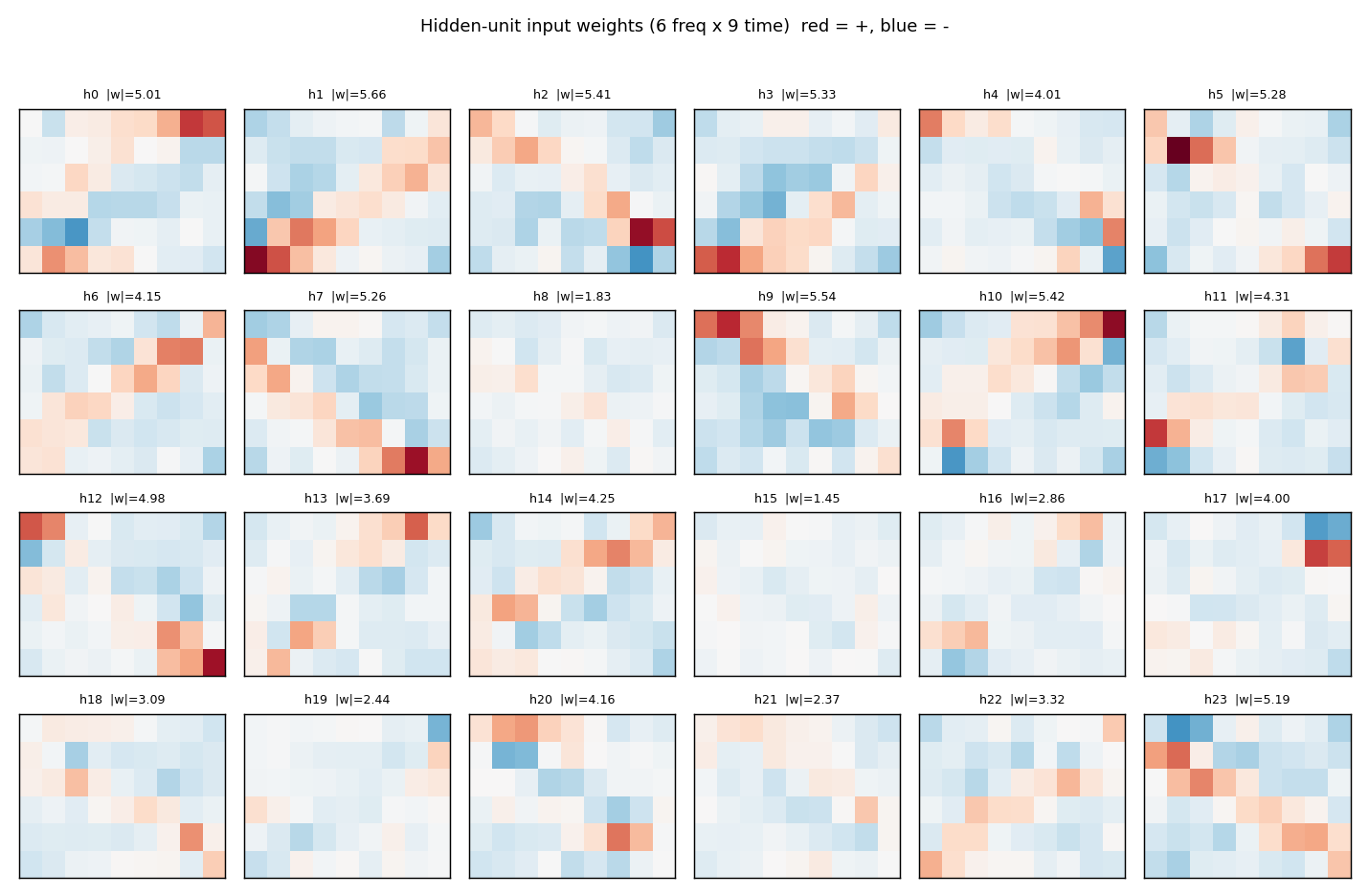

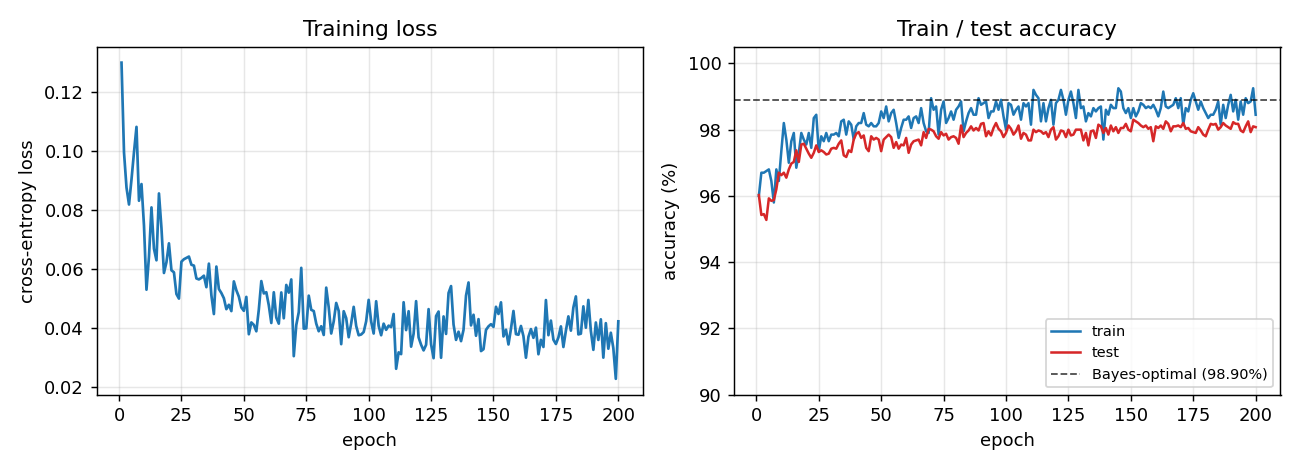

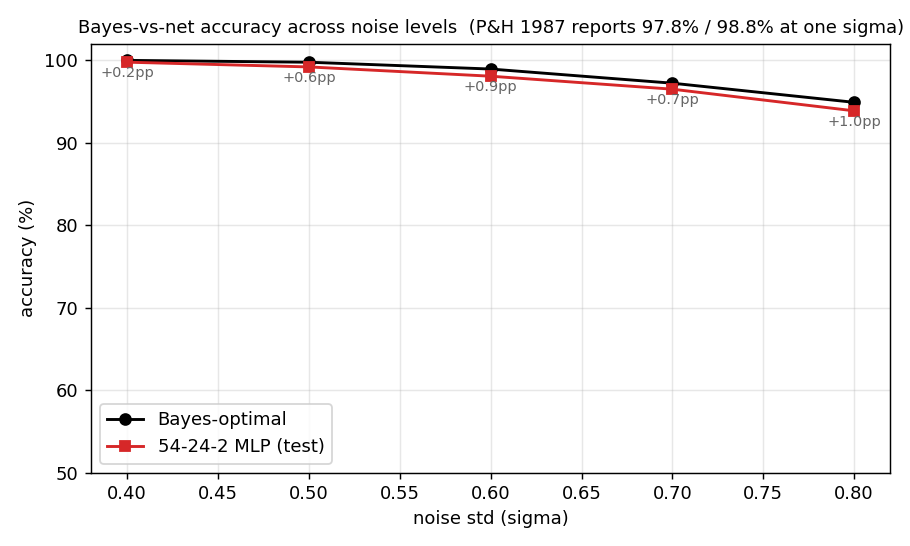

Plaut & Hinton (1987) — Learning sets of filters using back-propagation

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

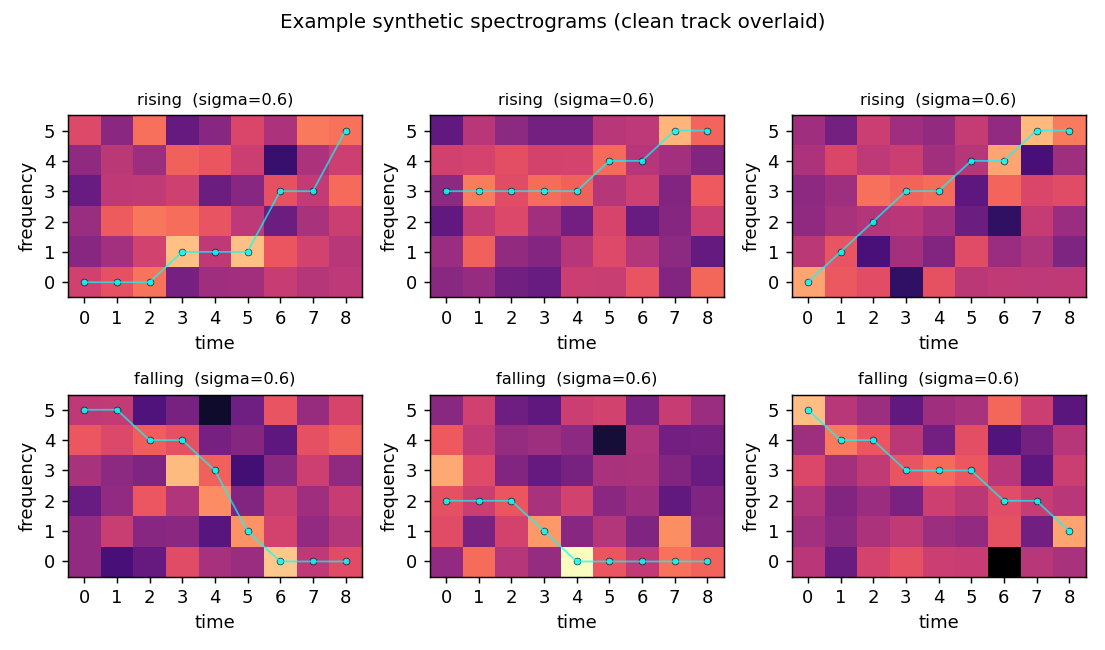

| riser-spectrogram | yes (98.08% net vs 98.90% Bayes; gap +0.83pp) | ~7 min | 0.91s |

Hinton & Plaut (1987) — Using fast weights to deblur old memories

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

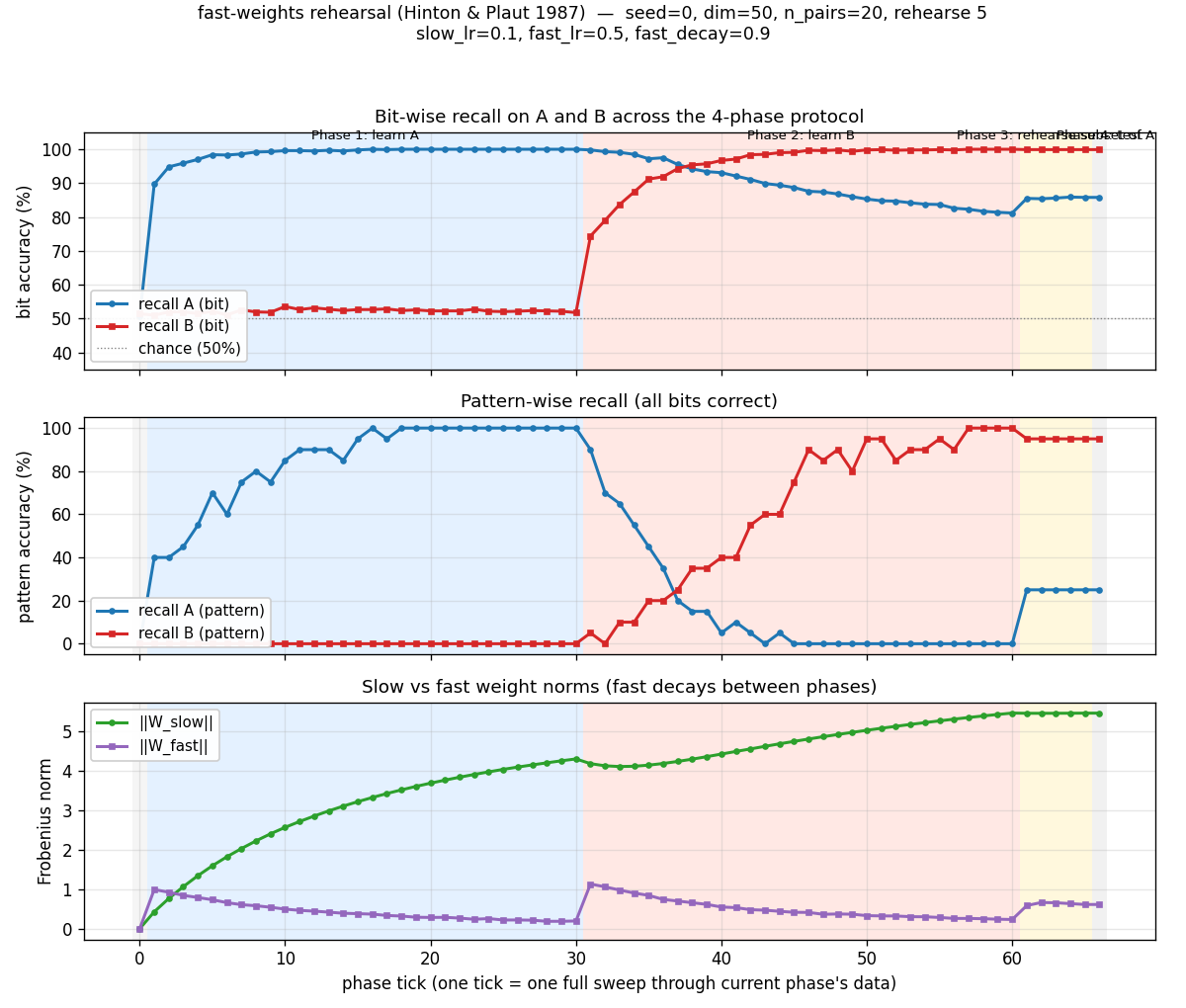

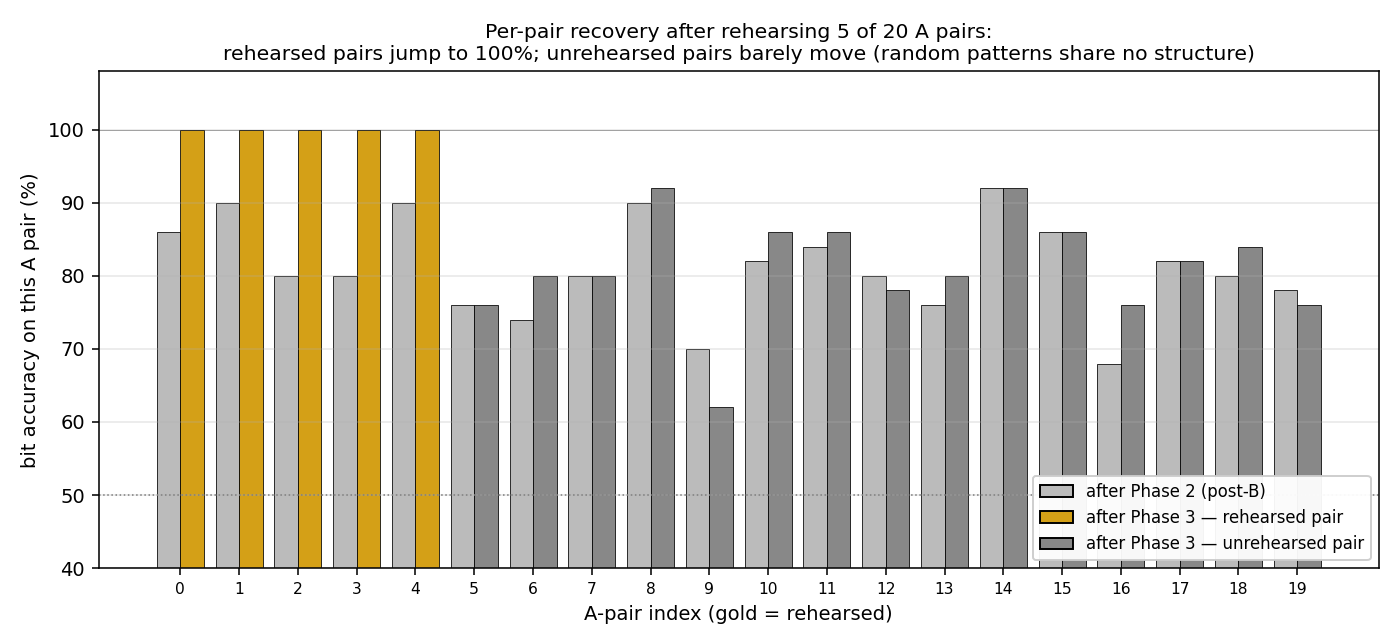

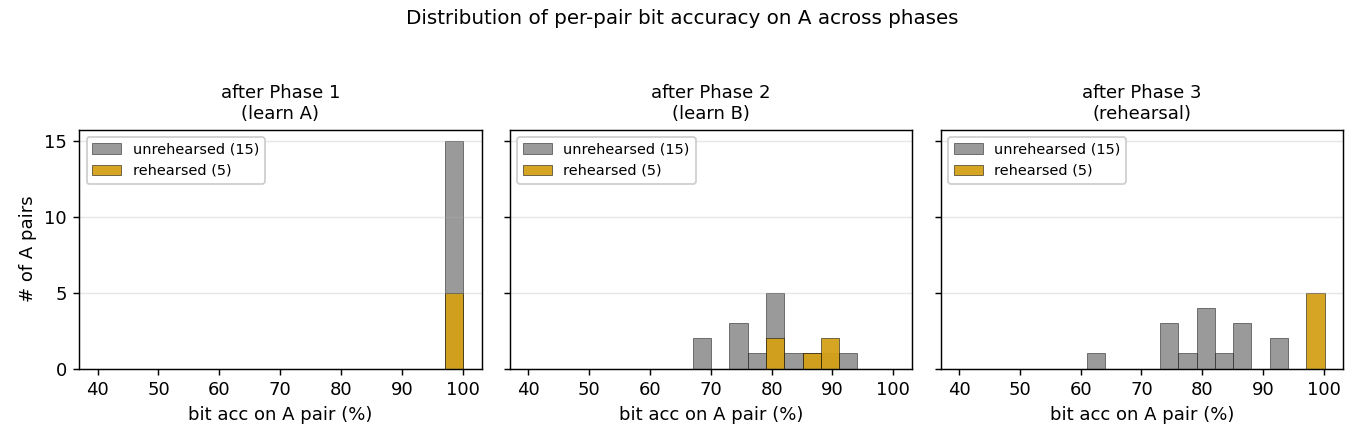

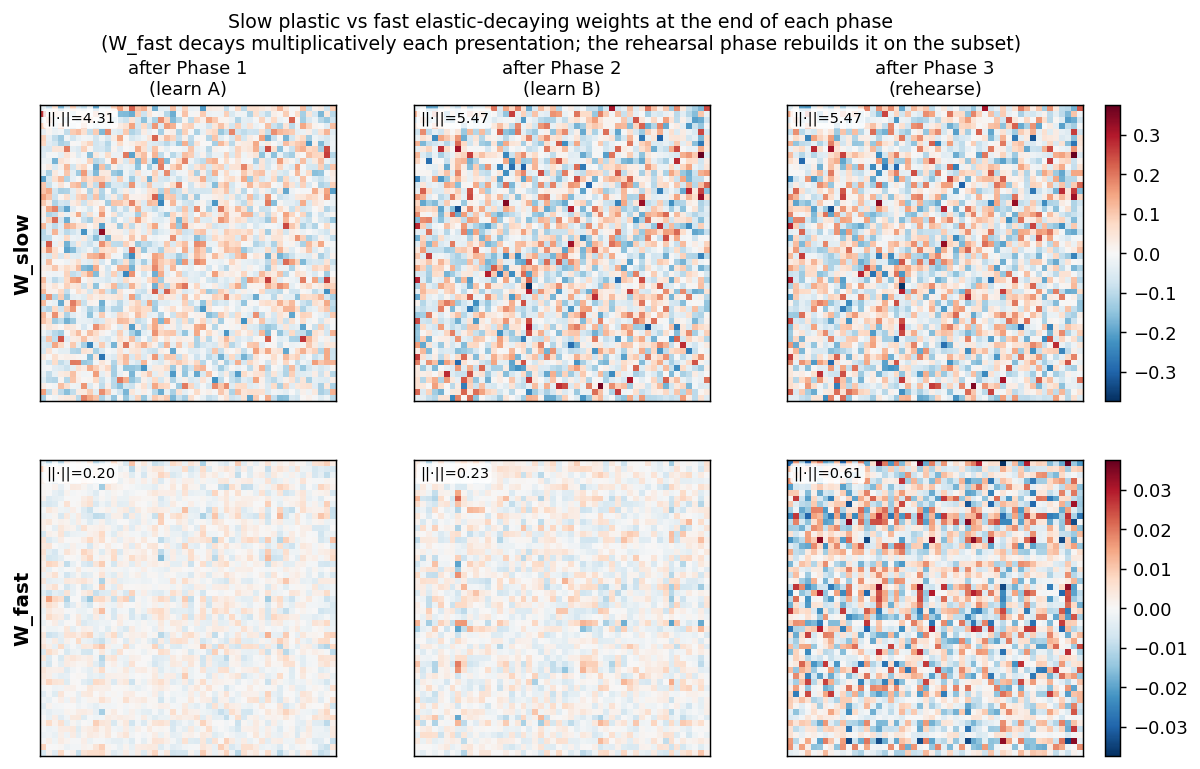

| fast-weights-rehearsal | yes (rehearsed-subset recovery +22pp / 30 seeds) | 25 min | 0.14s |

1990s — Unsupervised learning, mixtures, the Helmholtz machine

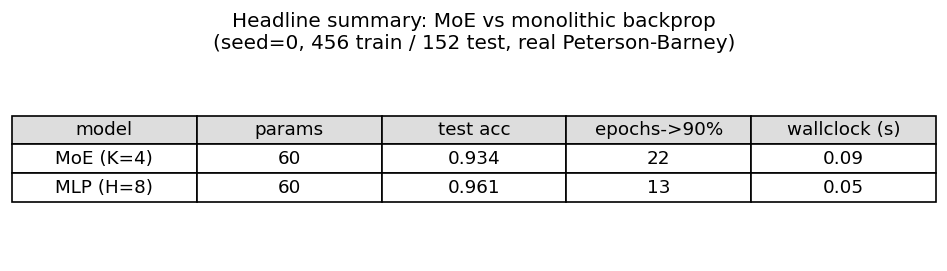

Jacobs, Jordan, Nowlan & Hinton (1991) — Adaptive mixtures of local experts

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

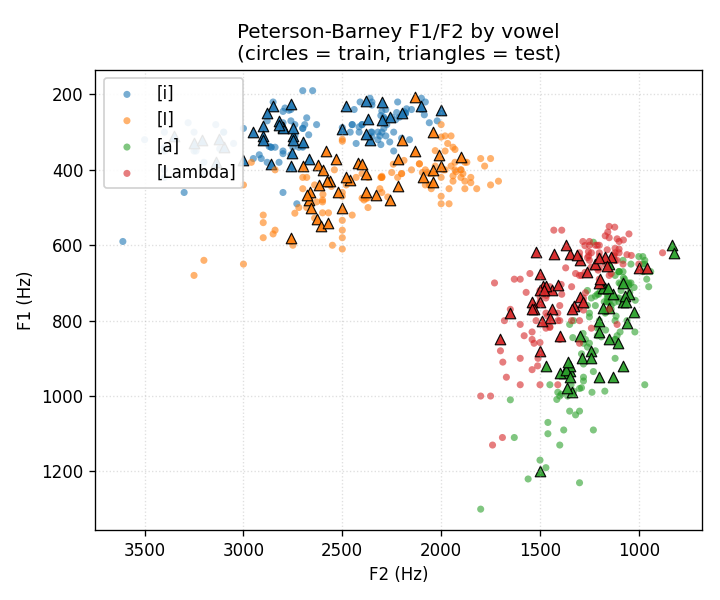

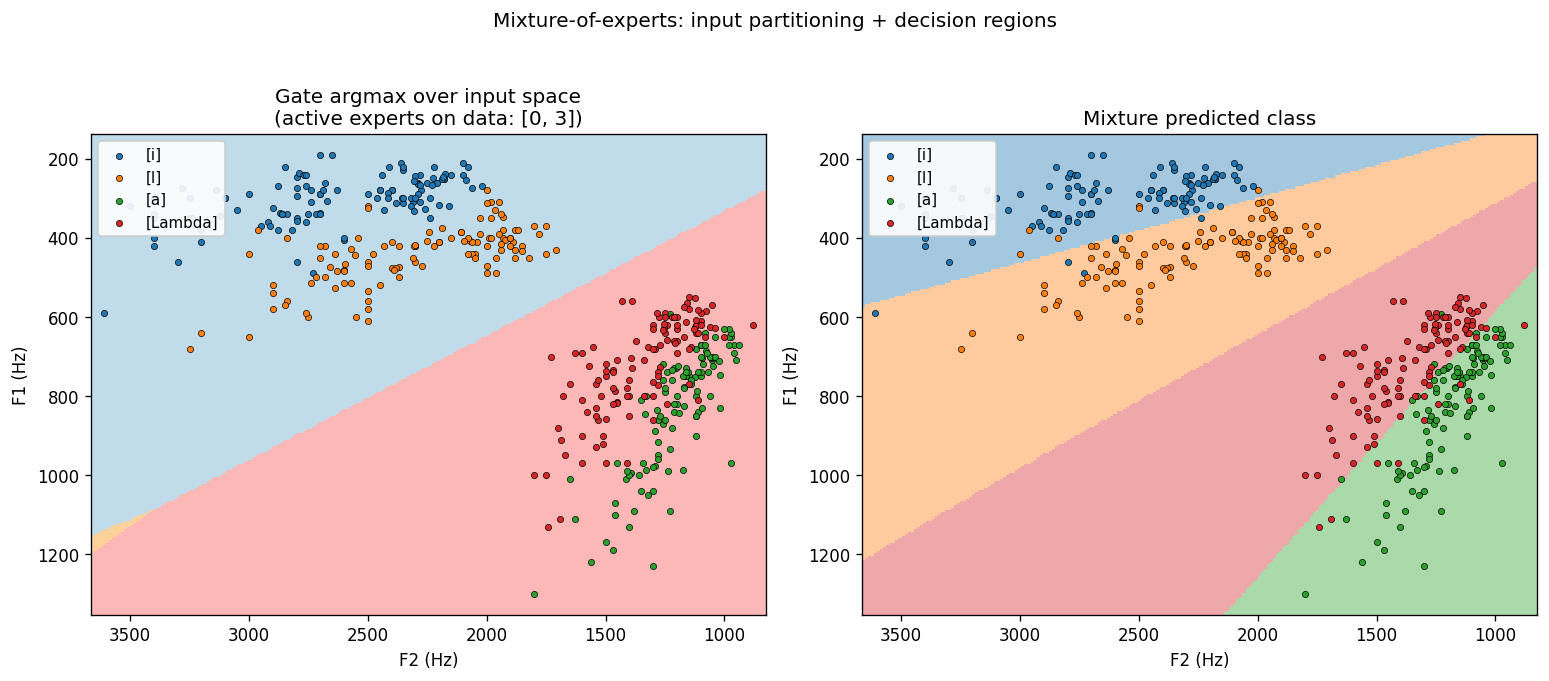

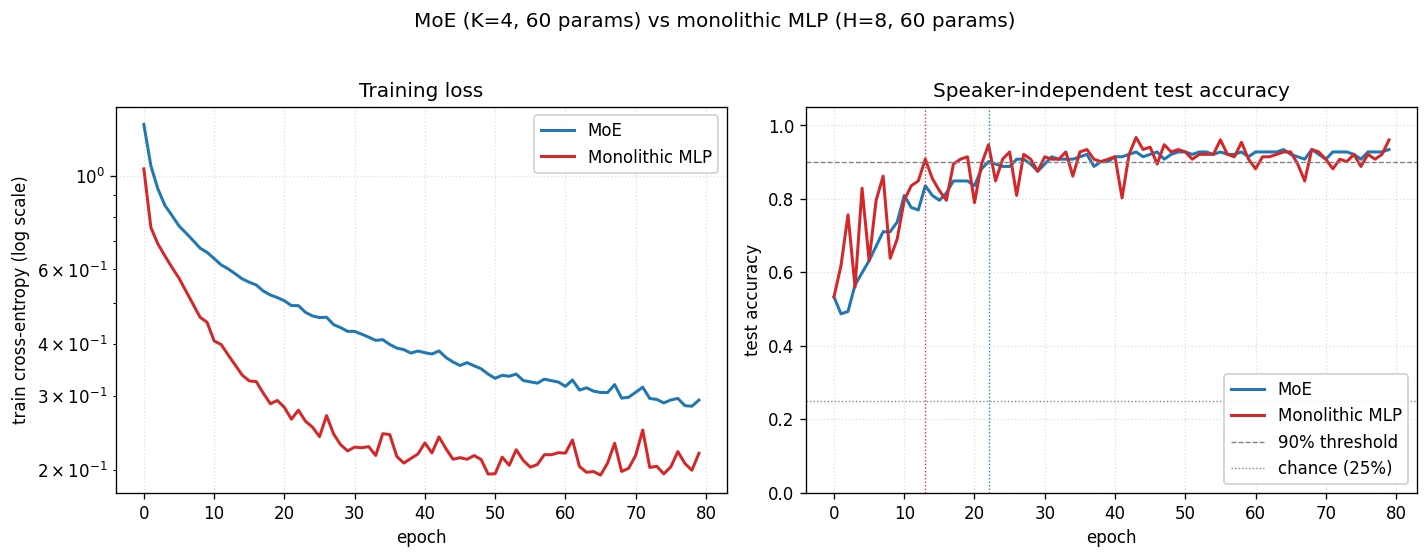

| vowel-mixture-experts | partial (MoE 92.8% / MLP 90.1%; gate partitions vowels) | 70 min | 0.09s |

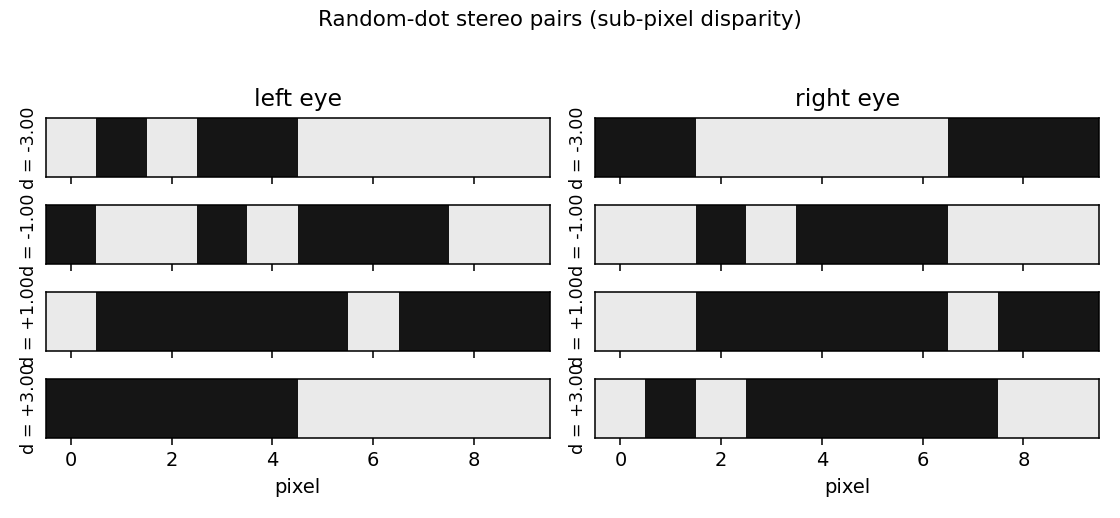

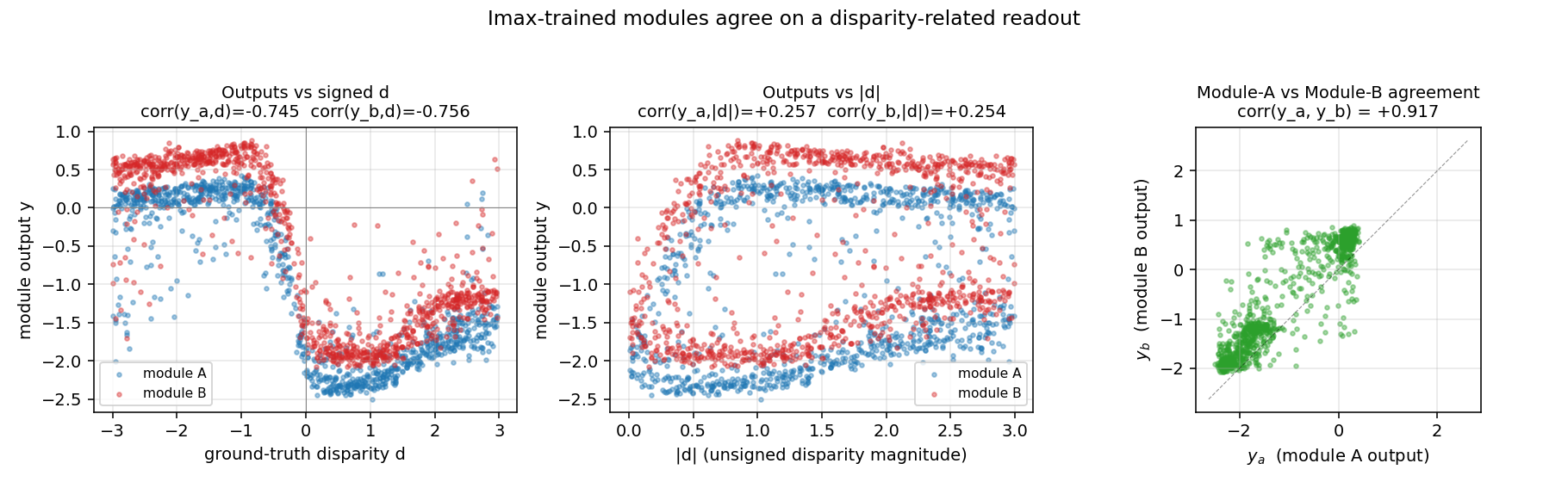

Becker & Hinton (1992) — A self-organizing neural network that discovers surfaces in random-dot stereograms

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

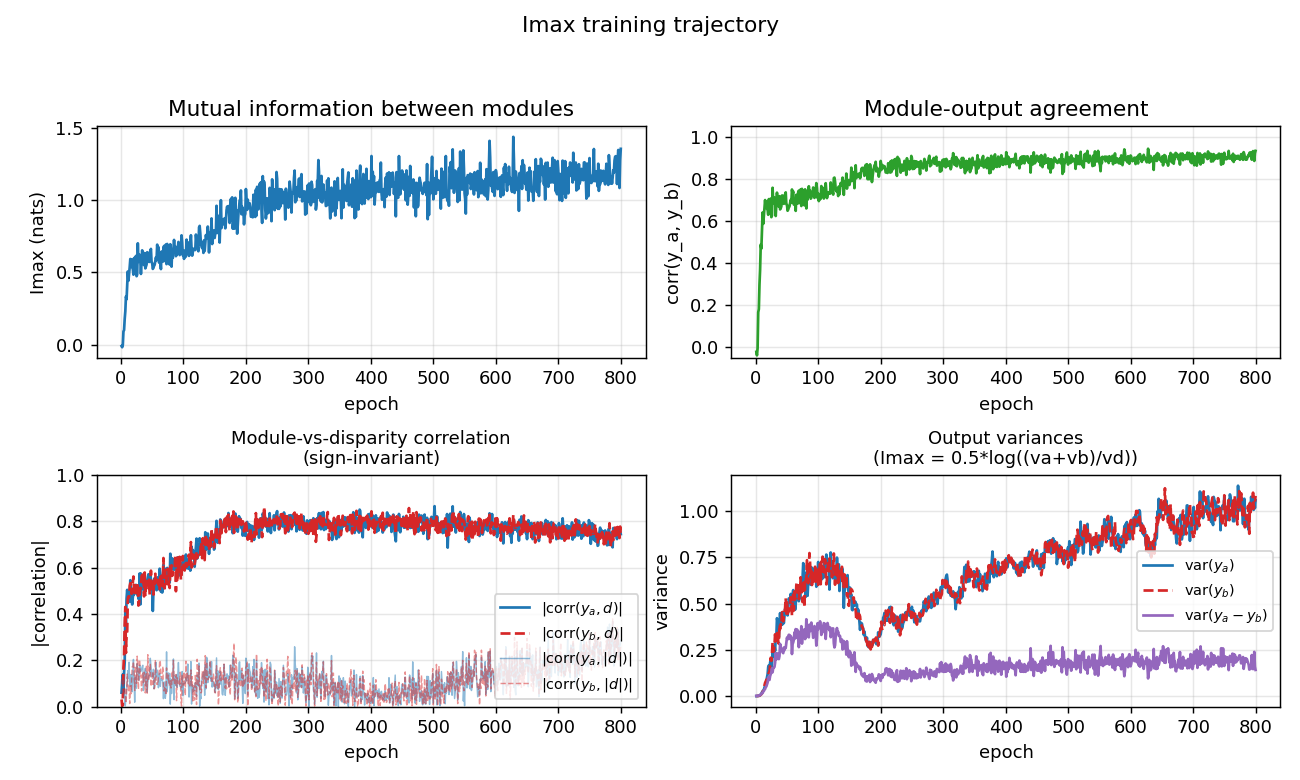

| random-dot-stereograms | yes (Imax 1.18 nats; disparity readout 0.74) | ~1 hr | 6.1s |

Nowlan & Hinton (1992) — Simplifying neural networks by soft weight-sharing

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

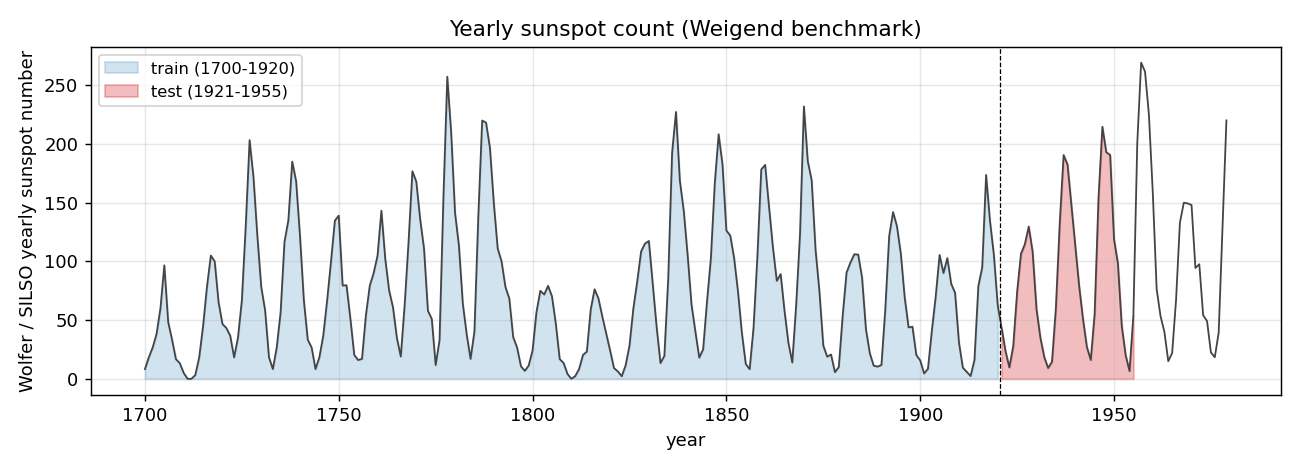

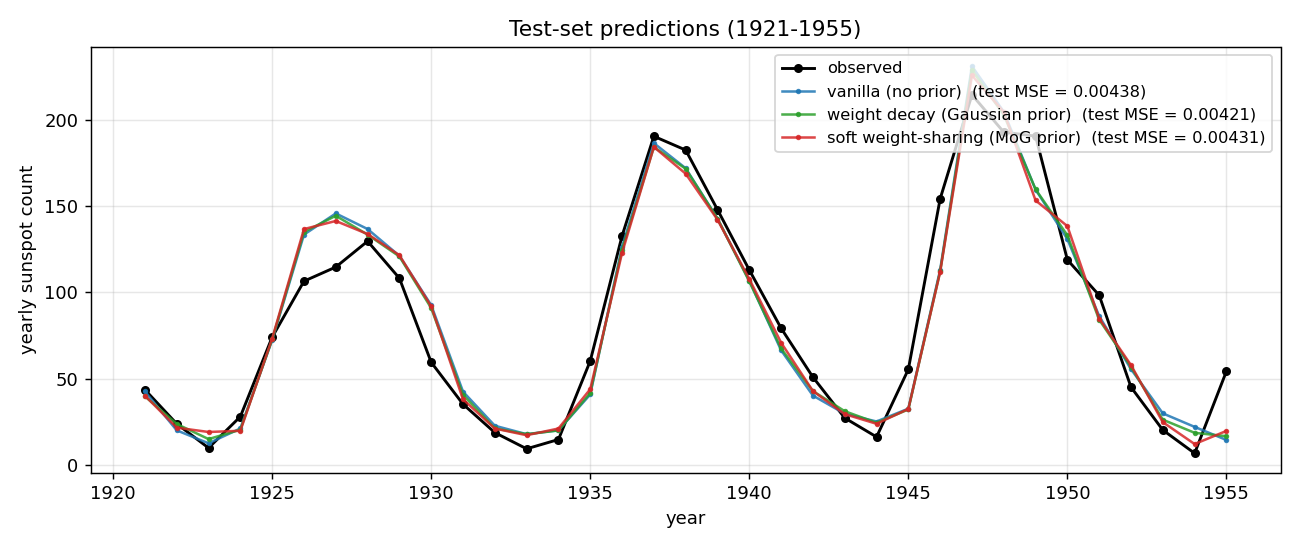

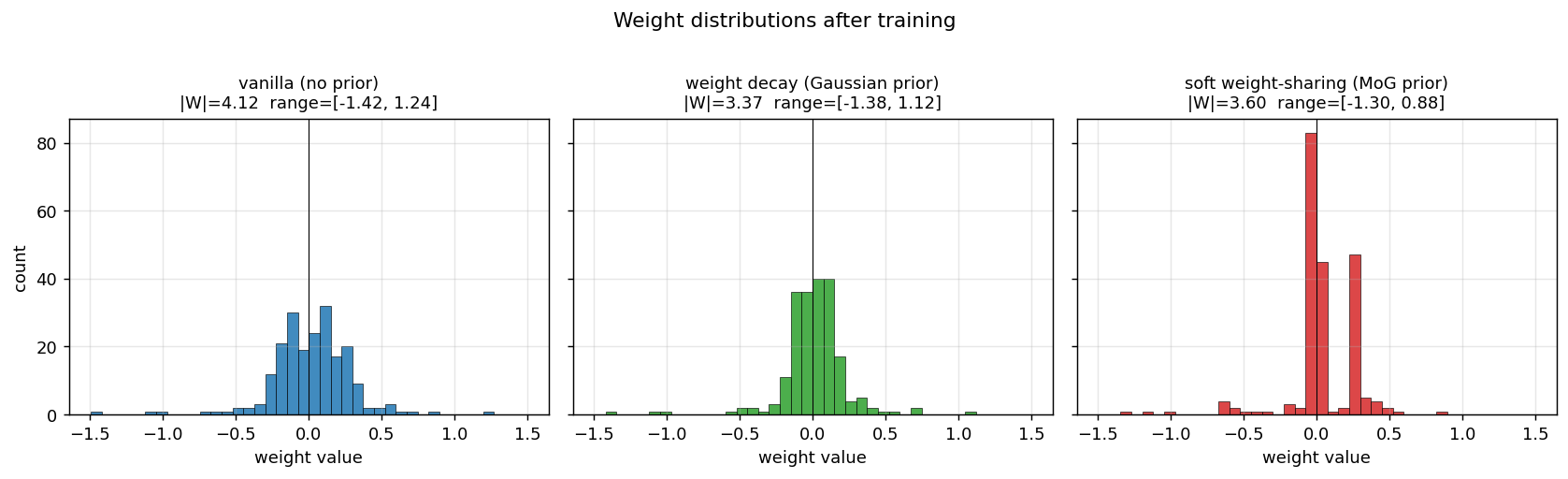

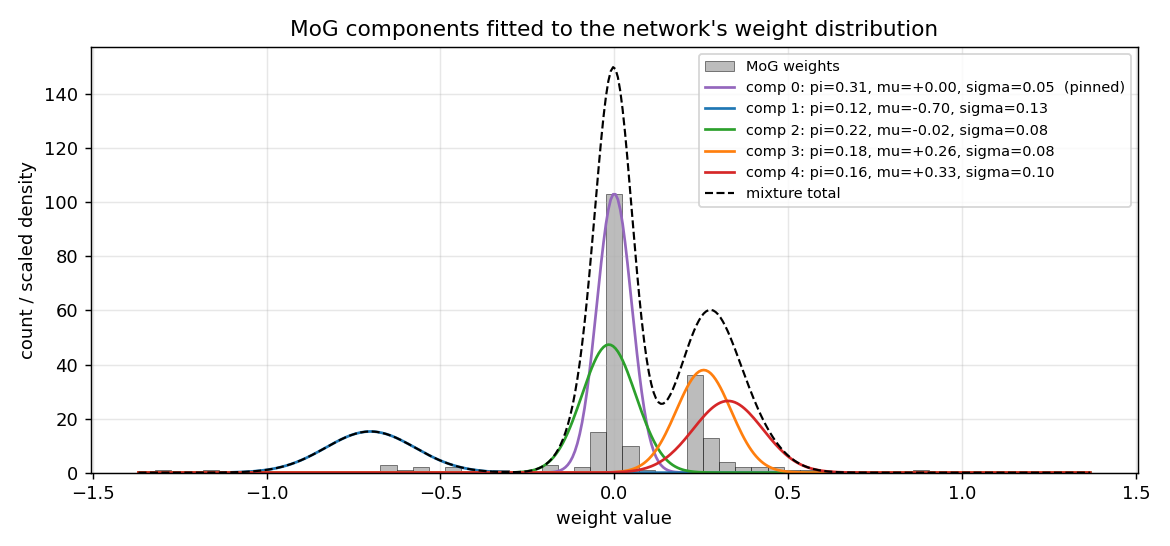

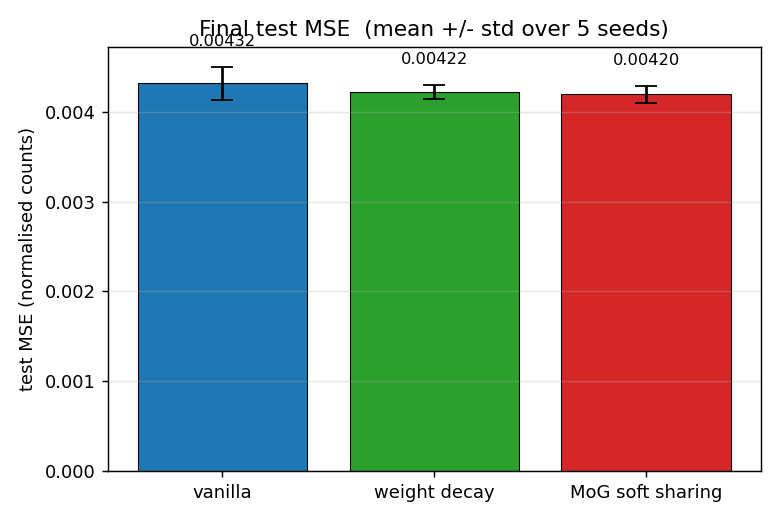

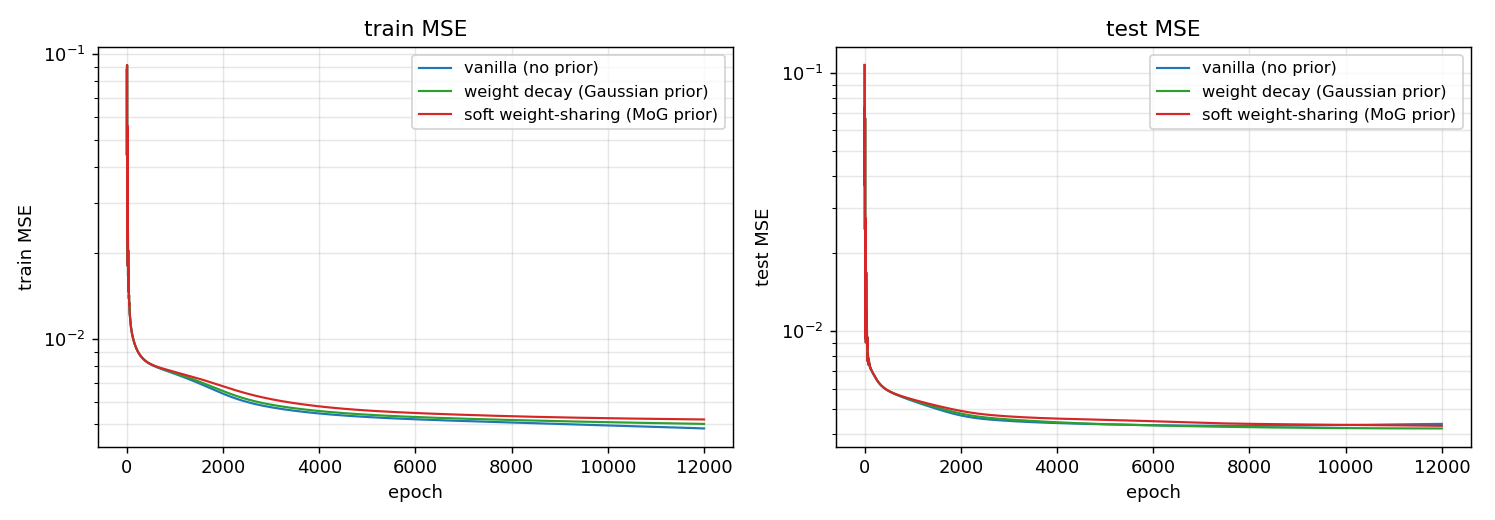

| sunspots | yes (MoG ≤ decay ≤ vanilla; weight peaks at 0 + 0.27) | ~1 hr | 5s |

Hinton & Zemel (1994) — Autoencoders, MDL and Helmholtz free energy

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

| spline-images-factorial-vq | yes (factorial wins 3× over 24-VQ baseline) | ~1 hr | ~5s |

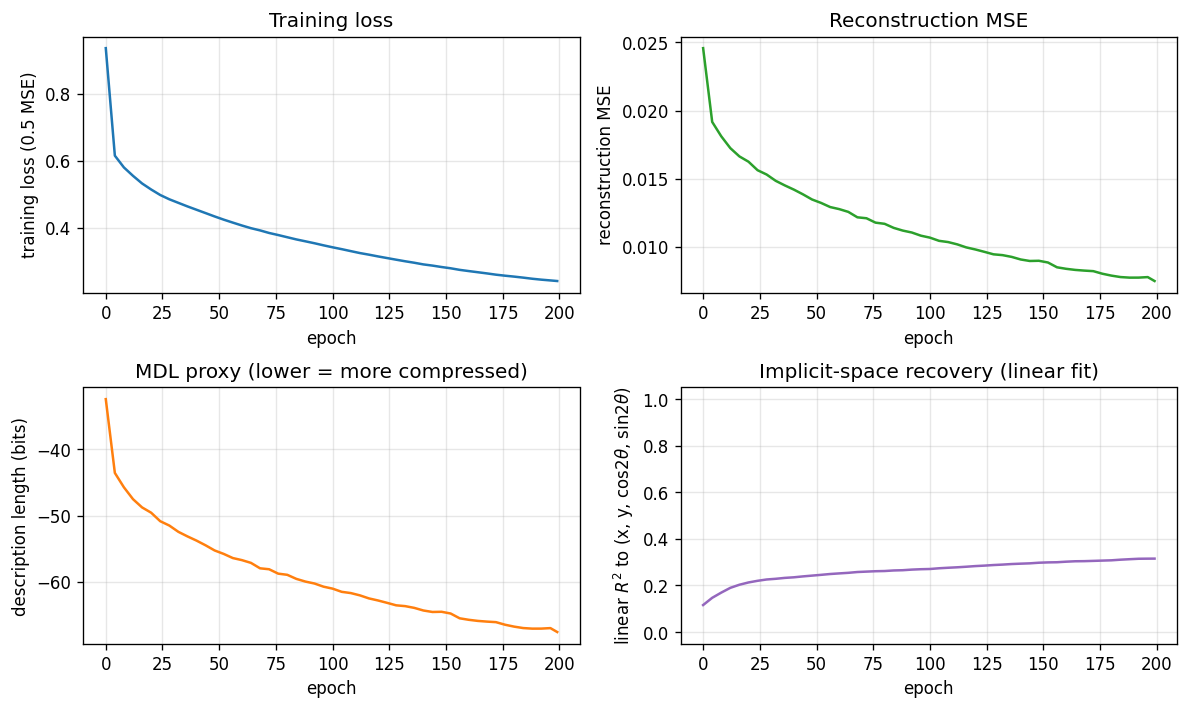

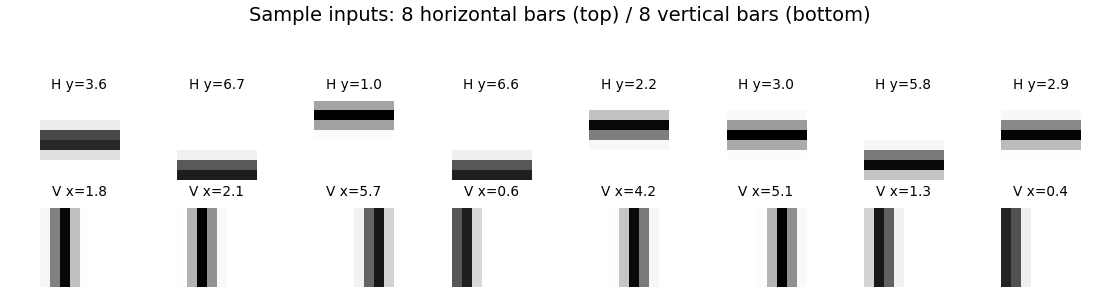

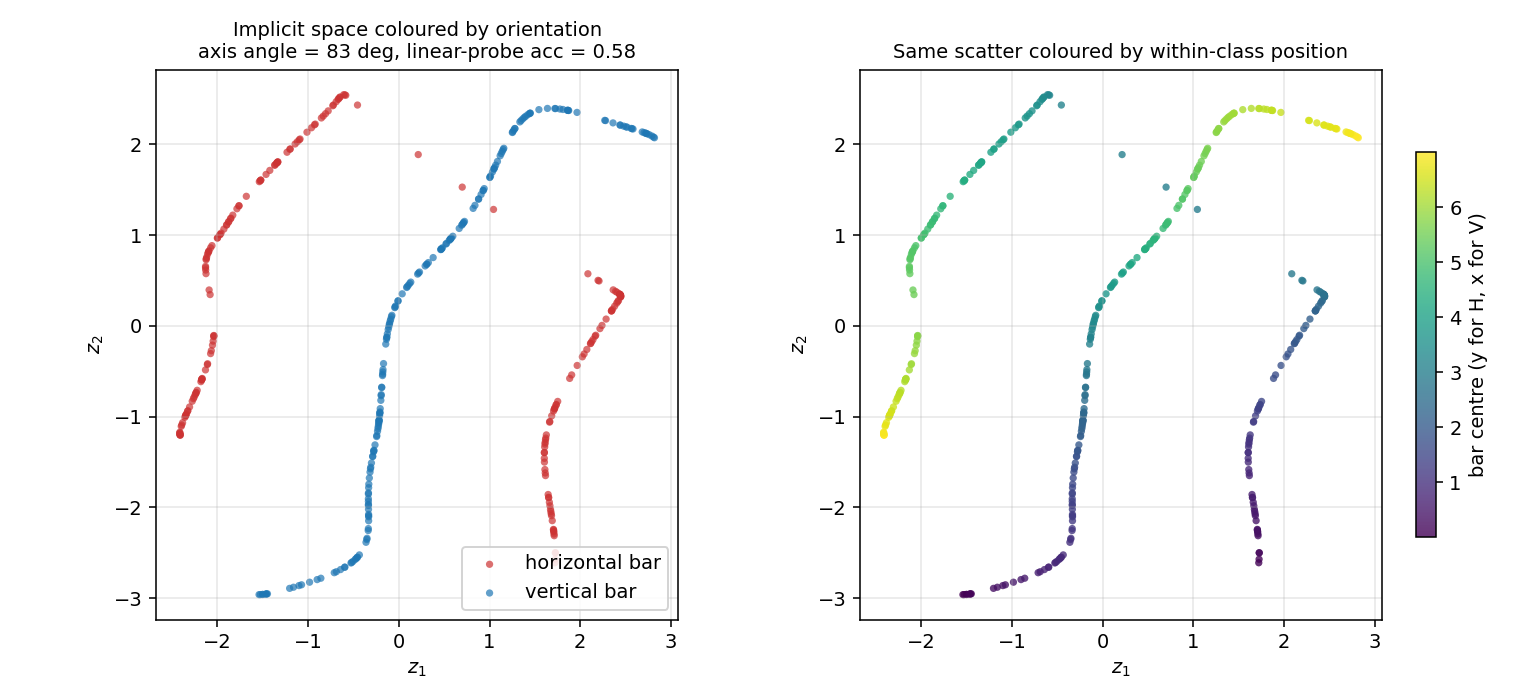

Zemel & Hinton (1995) — Learning population codes by minimizing description length

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

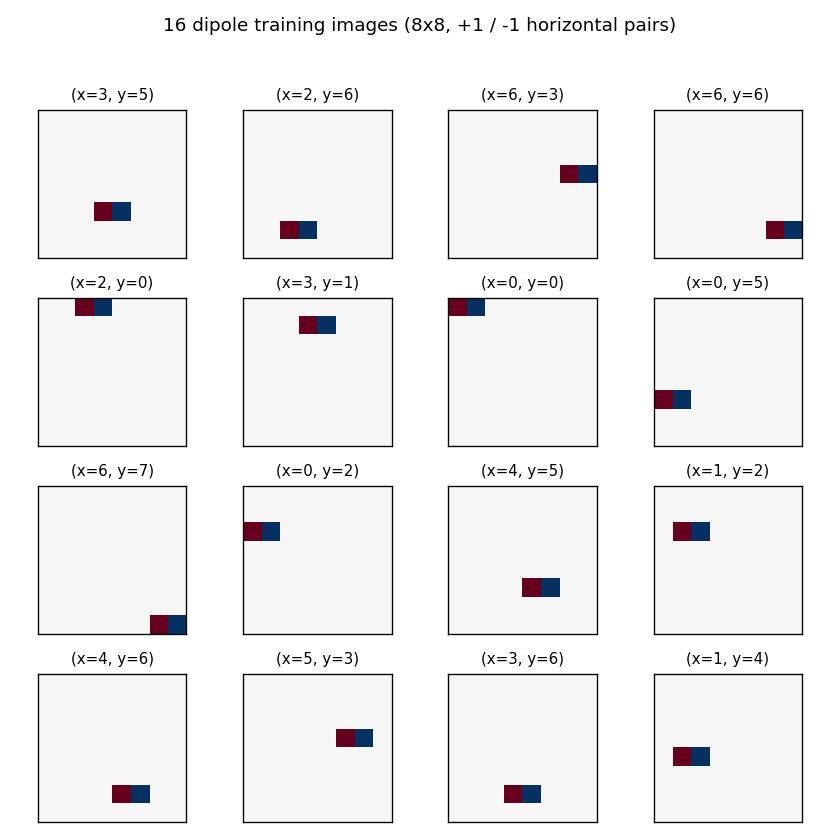

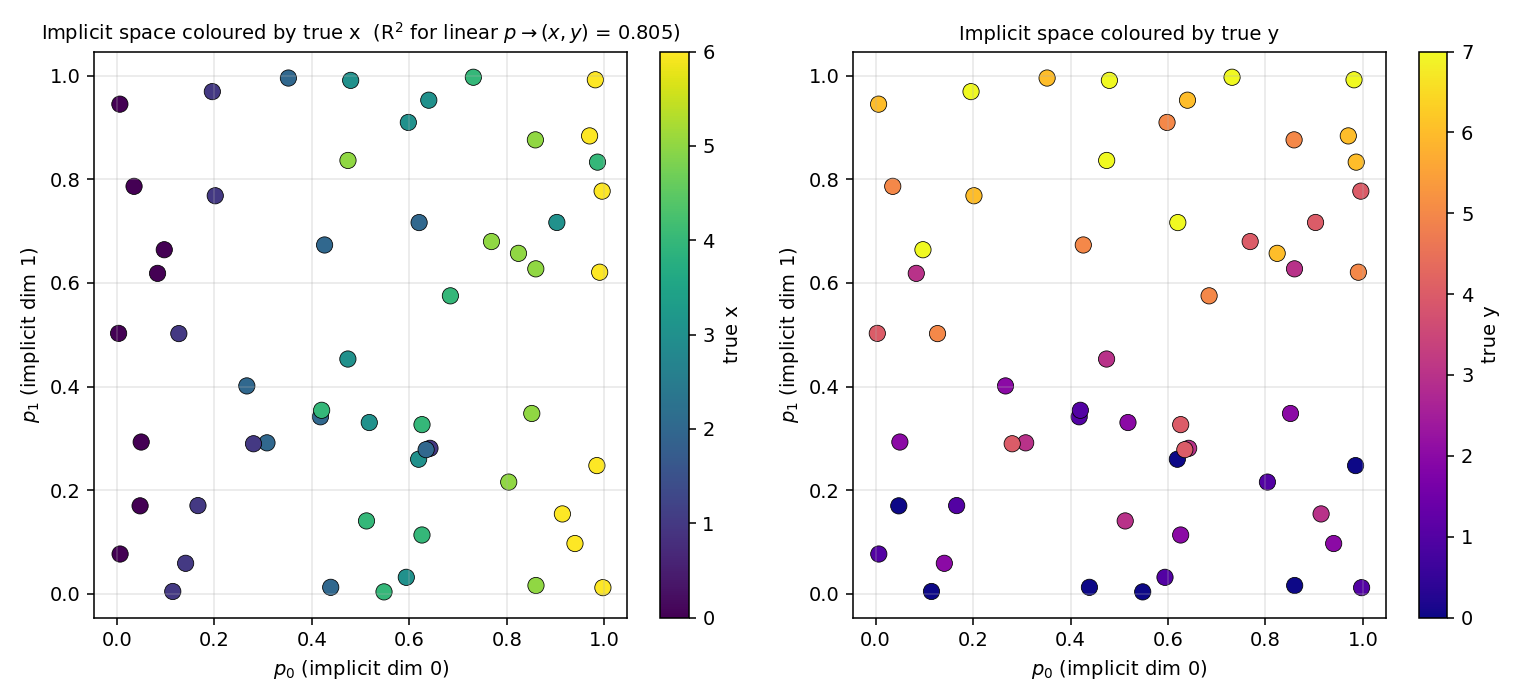

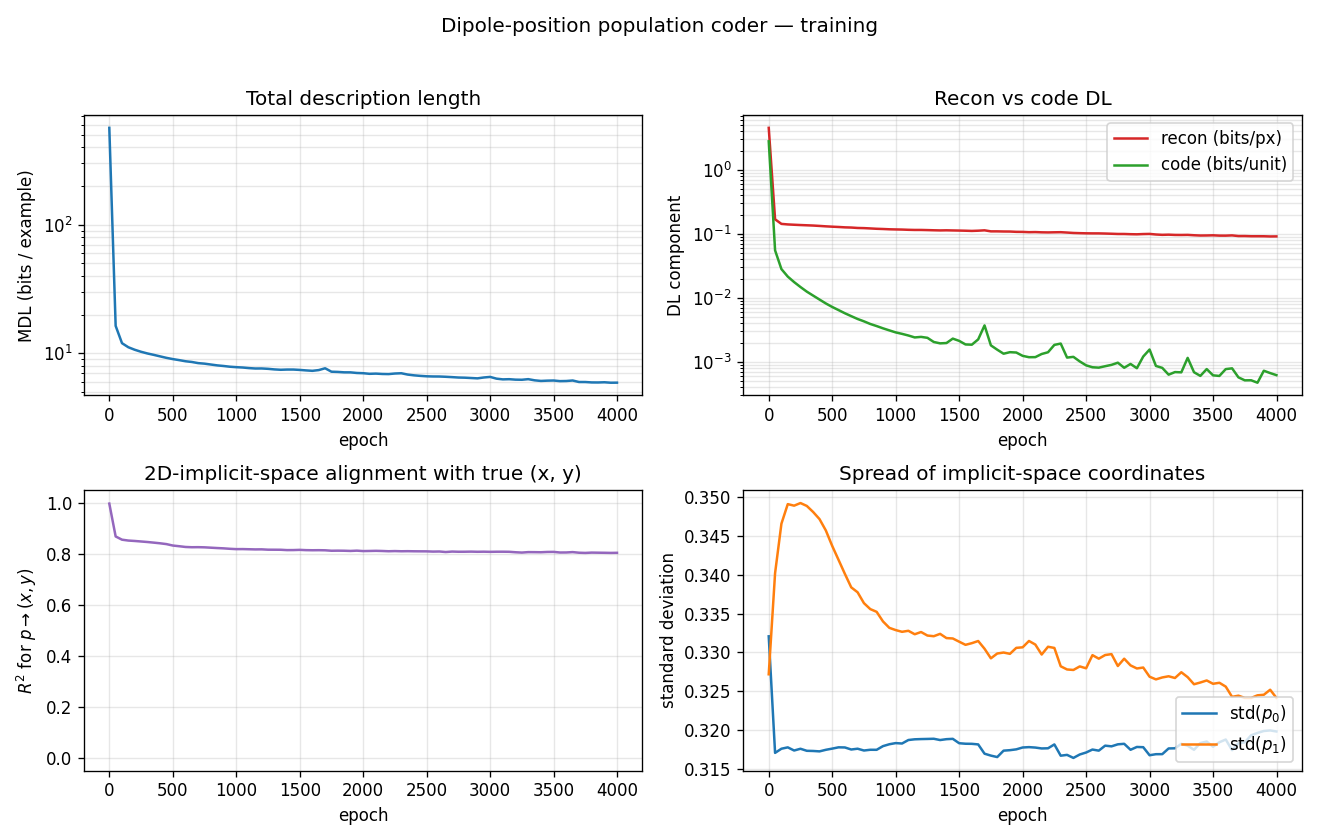

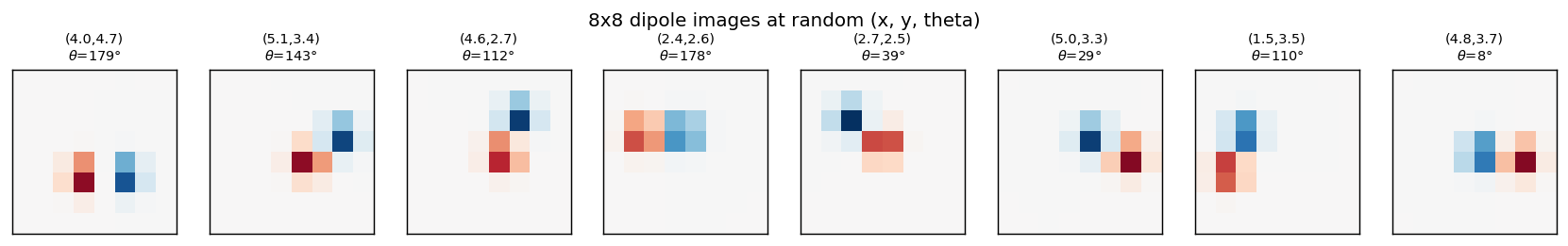

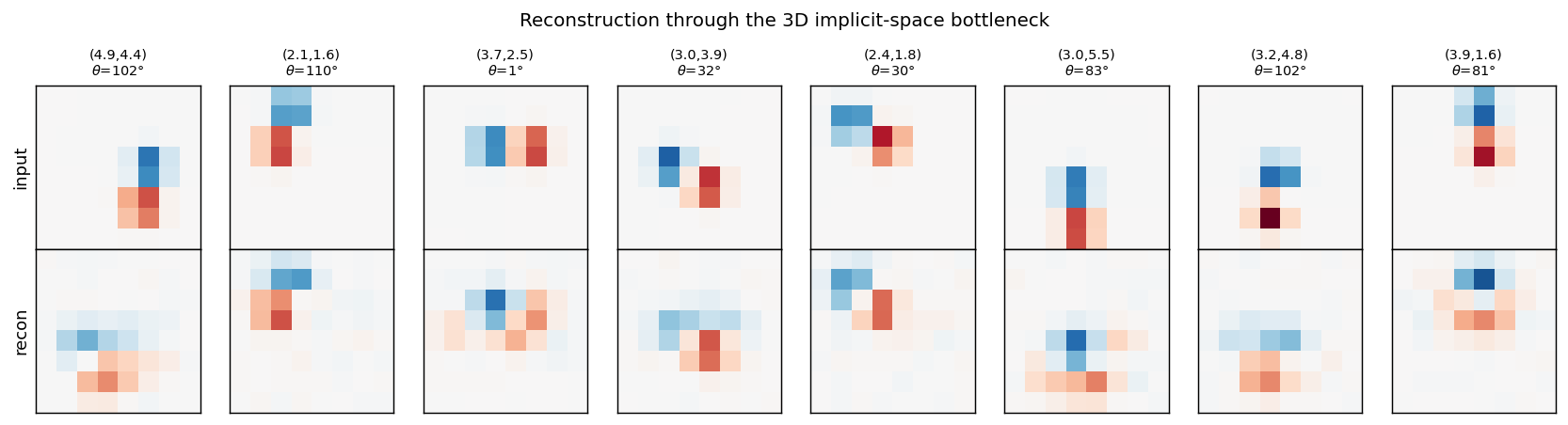

| dipole-position | partial (R² = 0.81; supervised warm-up needed) | ~3 hr | 2s |

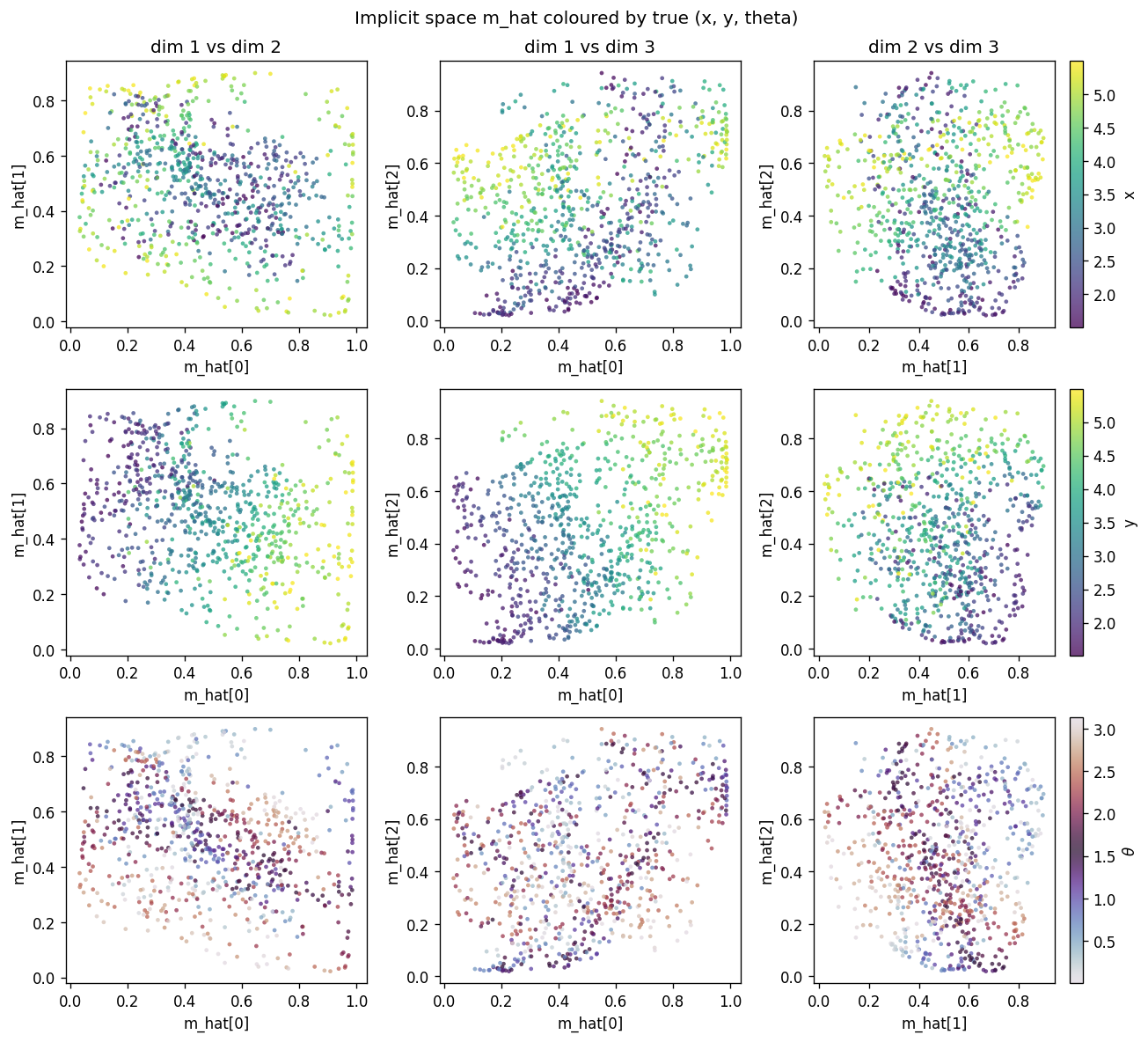

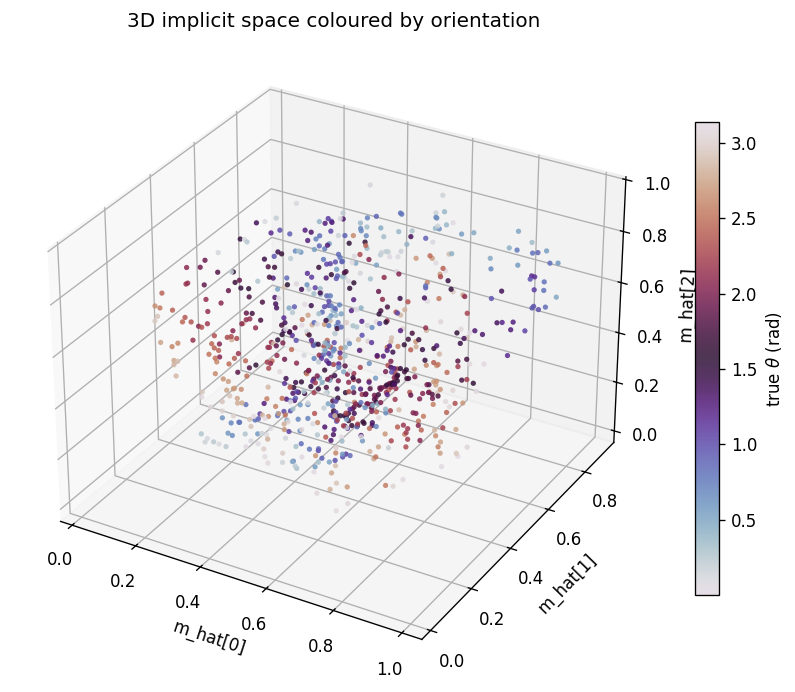

| dipole-3d-constraint | yes (qualitatively; 3 dims emerge) | ~1 hr | 11s |

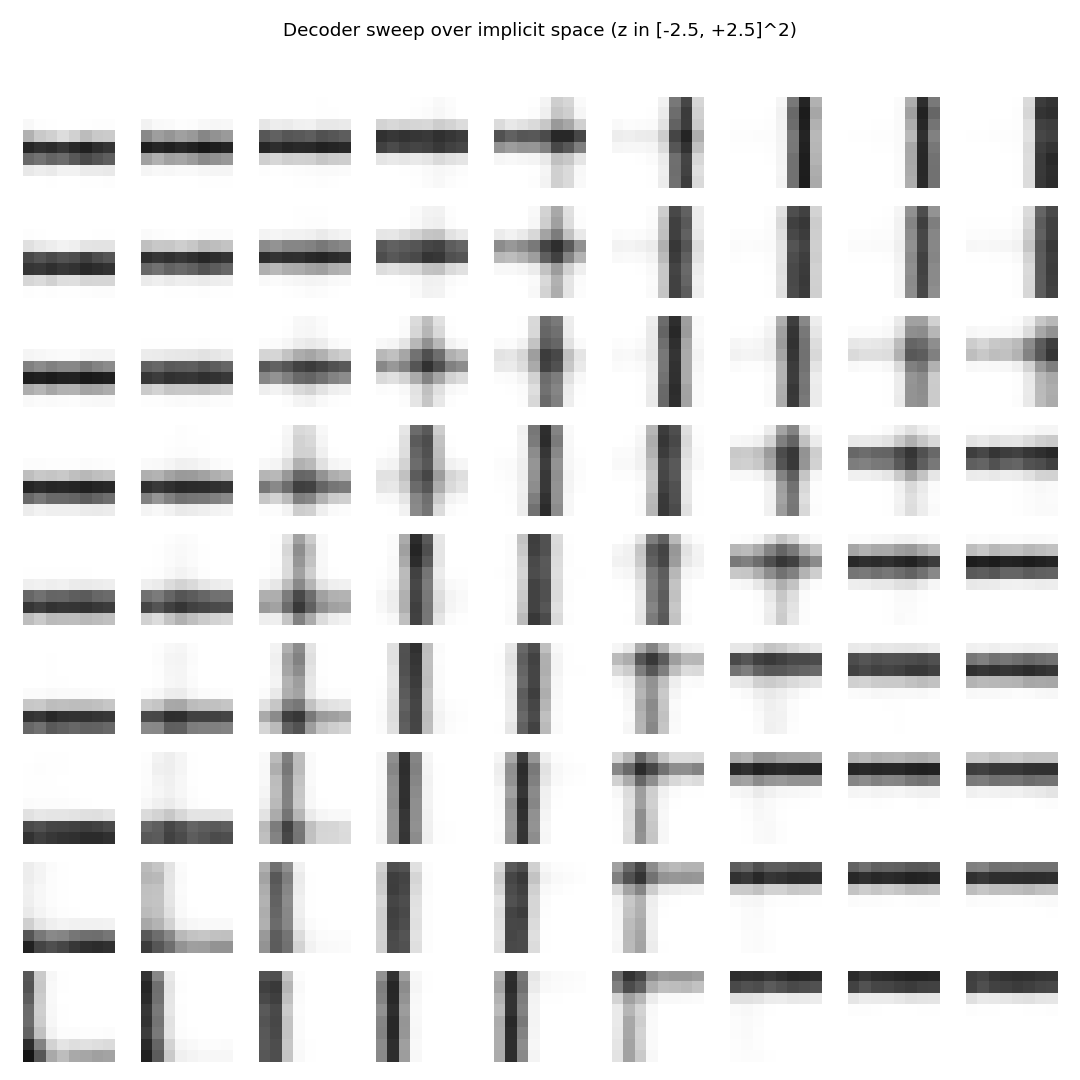

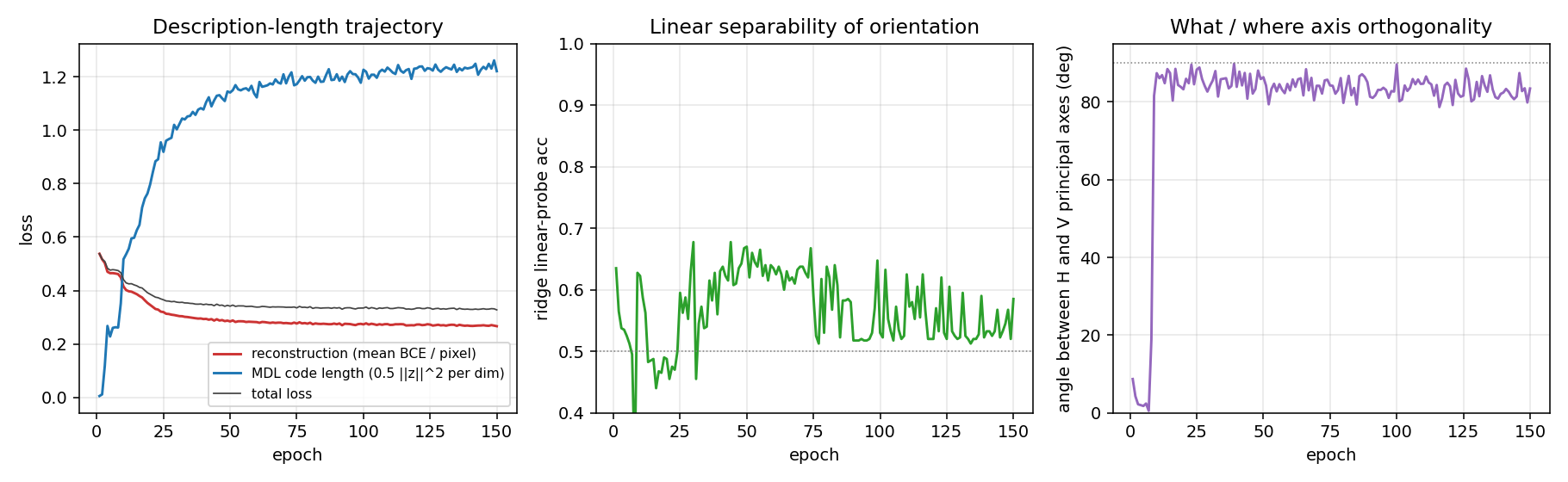

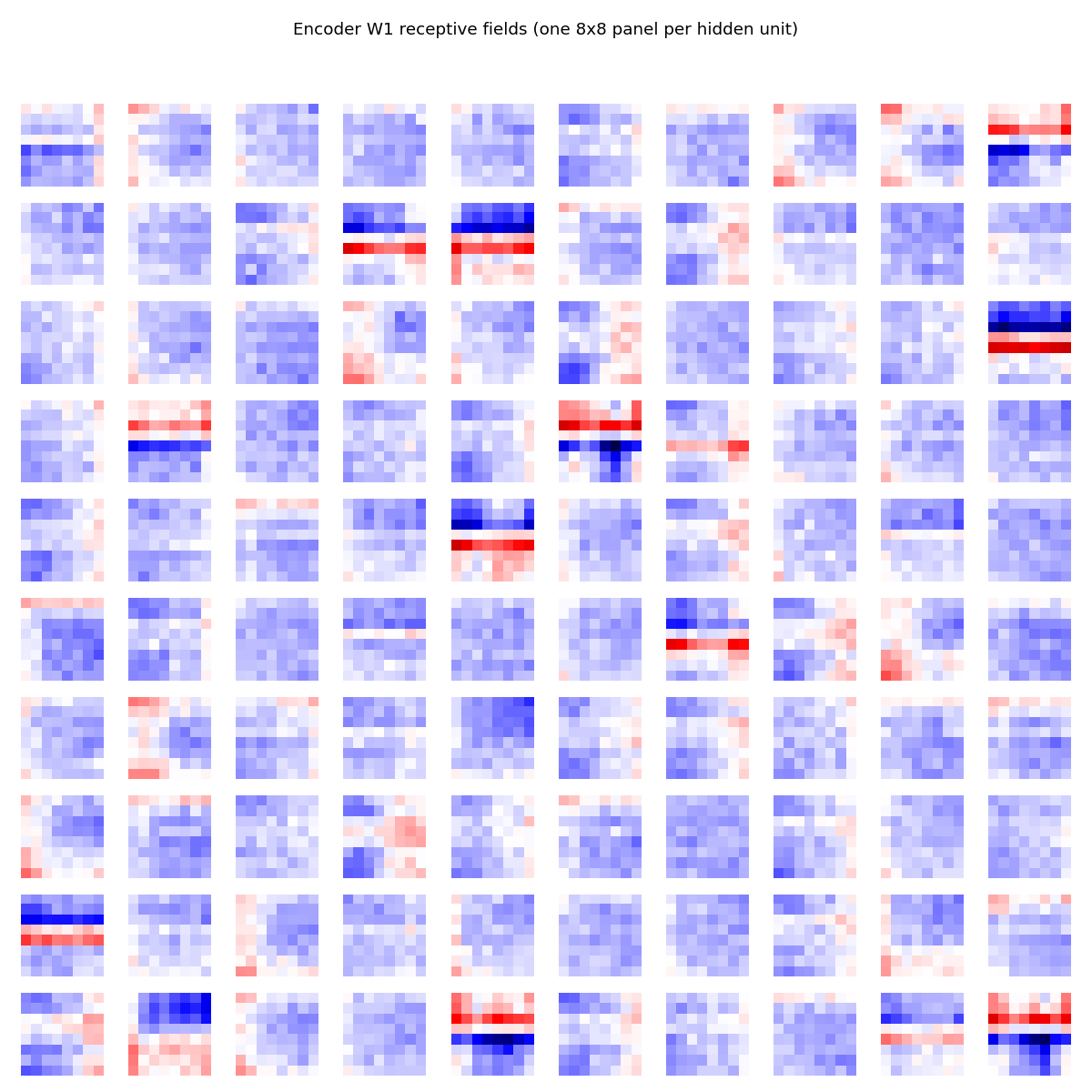

| dipole-what-where | partial (perpendicular manifolds, lin-sep 0.58) | ~1 hr | 2s |

Dayan, Hinton, Neal & Zemel (1995) — The Helmholtz machine

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

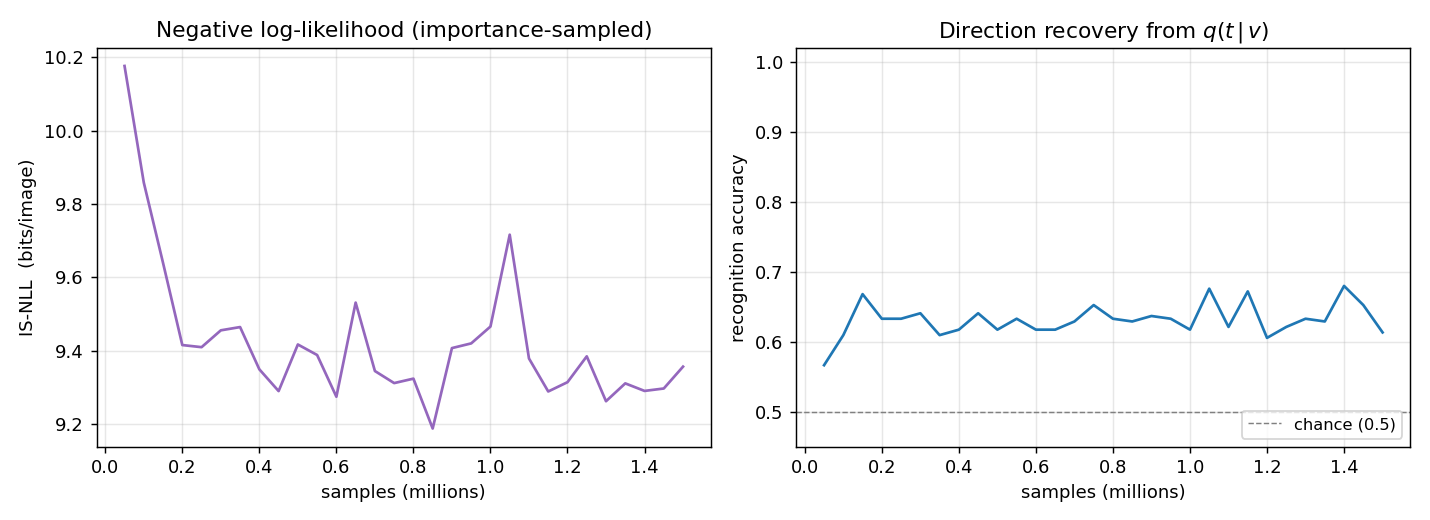

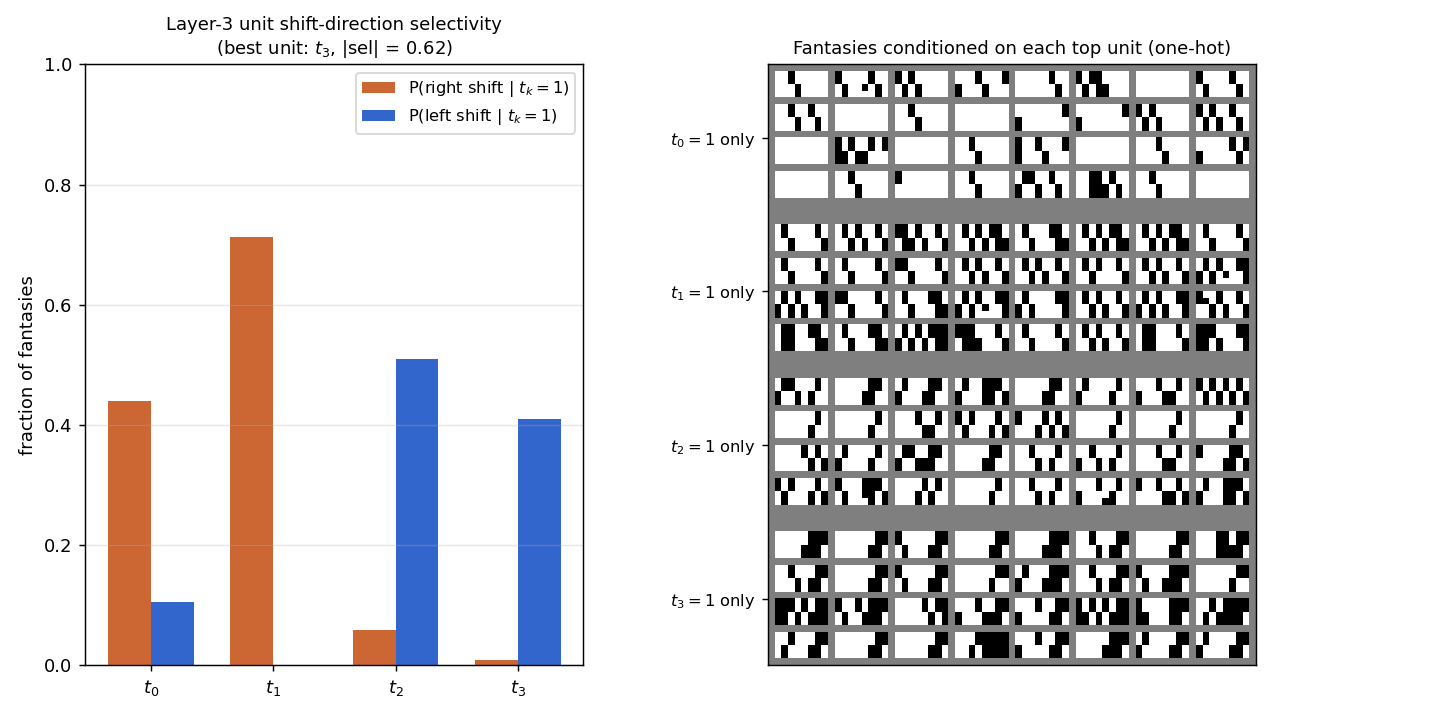

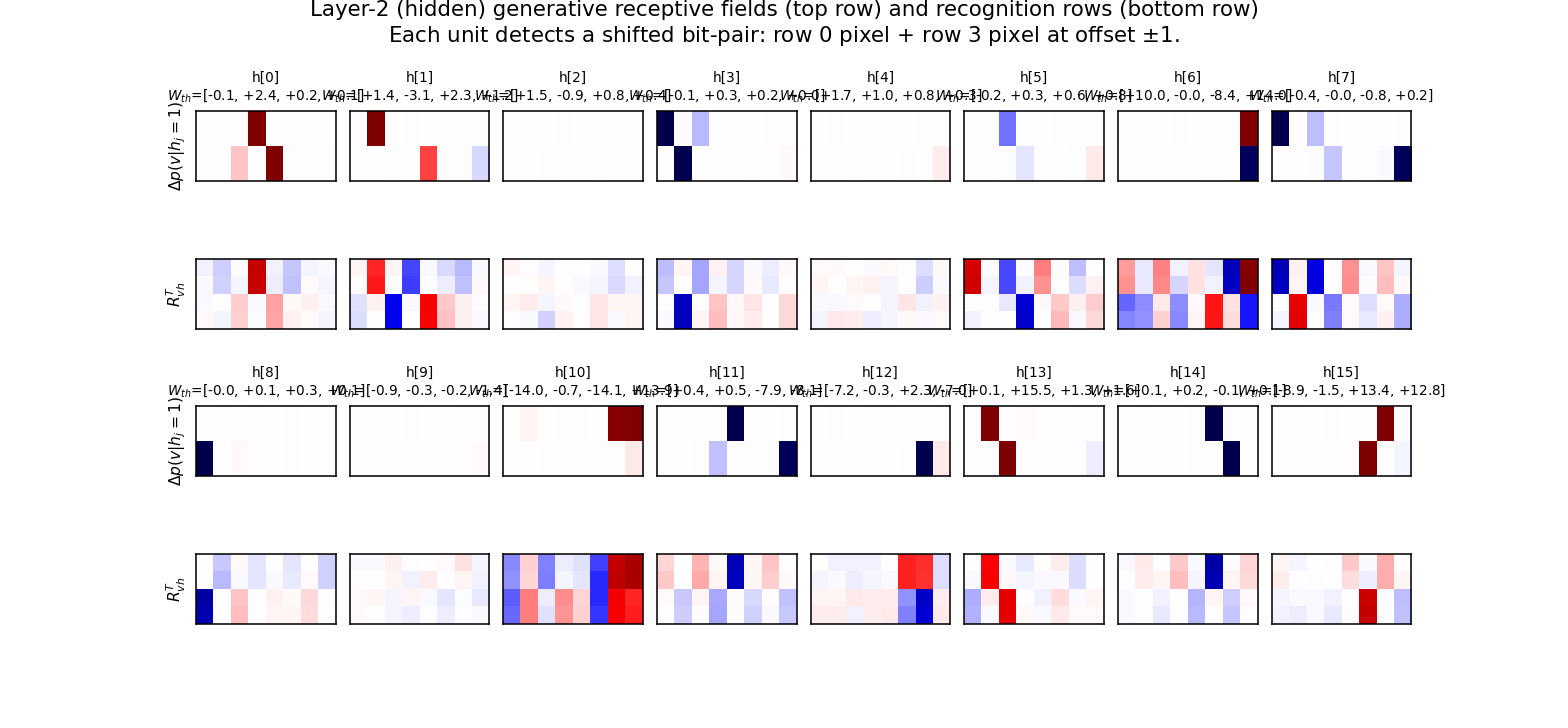

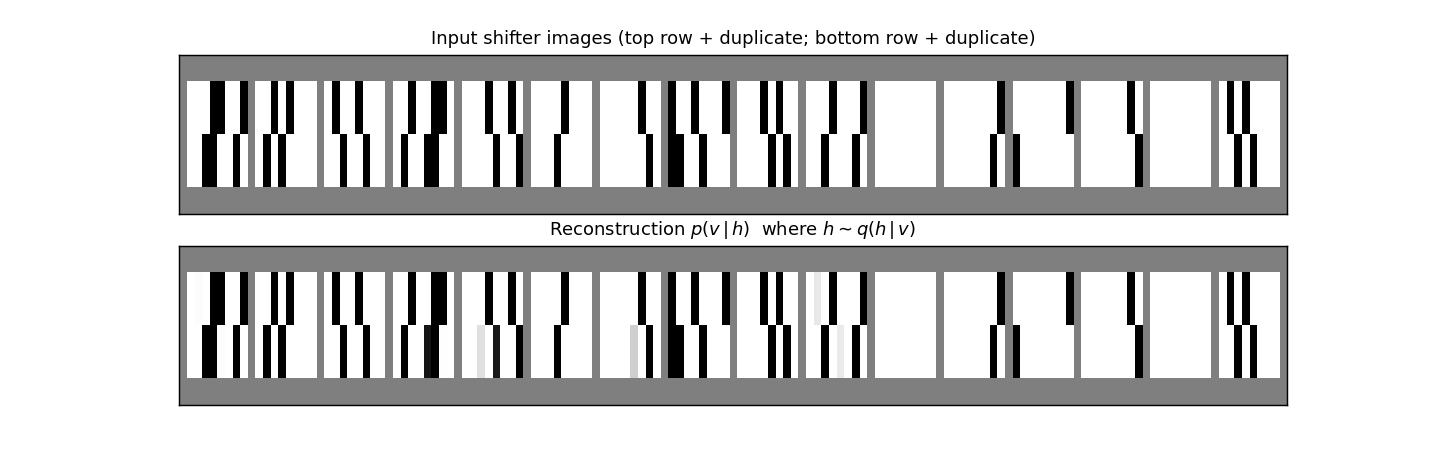

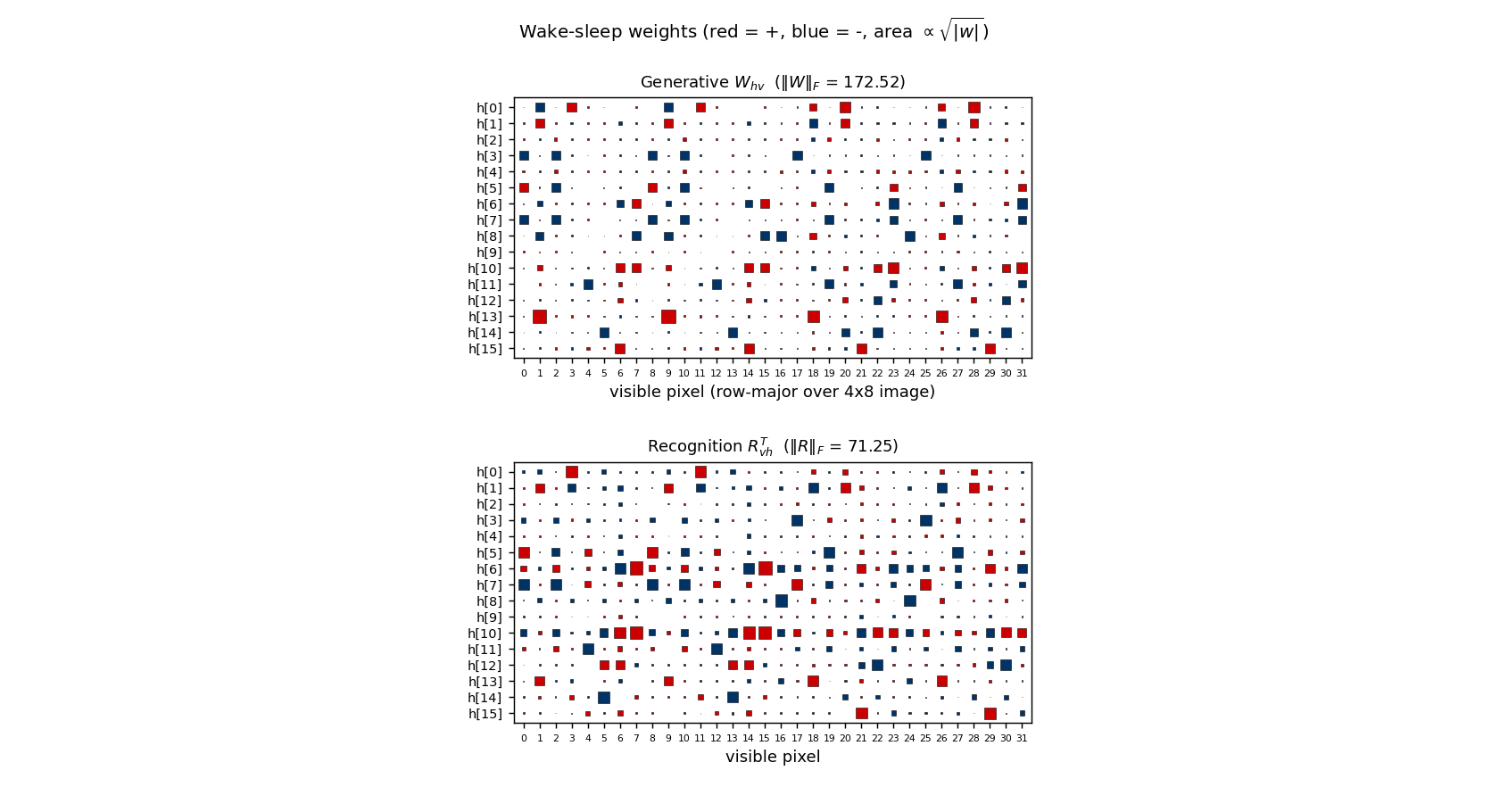

| helmholtz-shifter | partial (3 of 4 layer-3 units shift-selective; n_top=4) | 75 min | 209s |

Hinton, Dayan, Frey & Neal (1995) — The wake-sleep algorithm

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

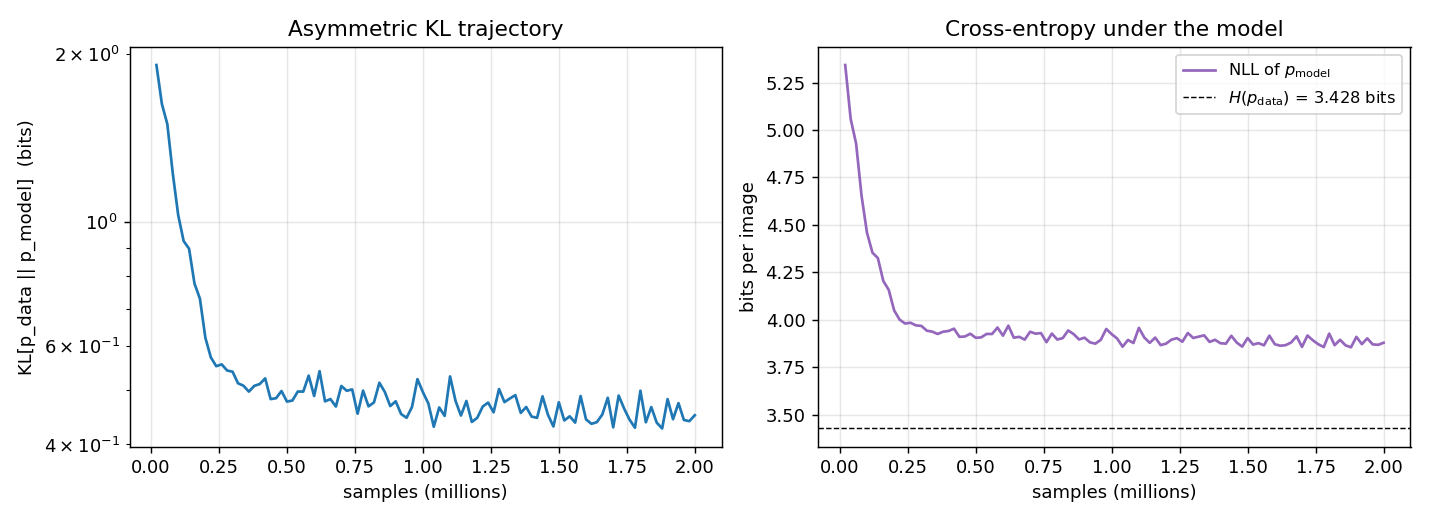

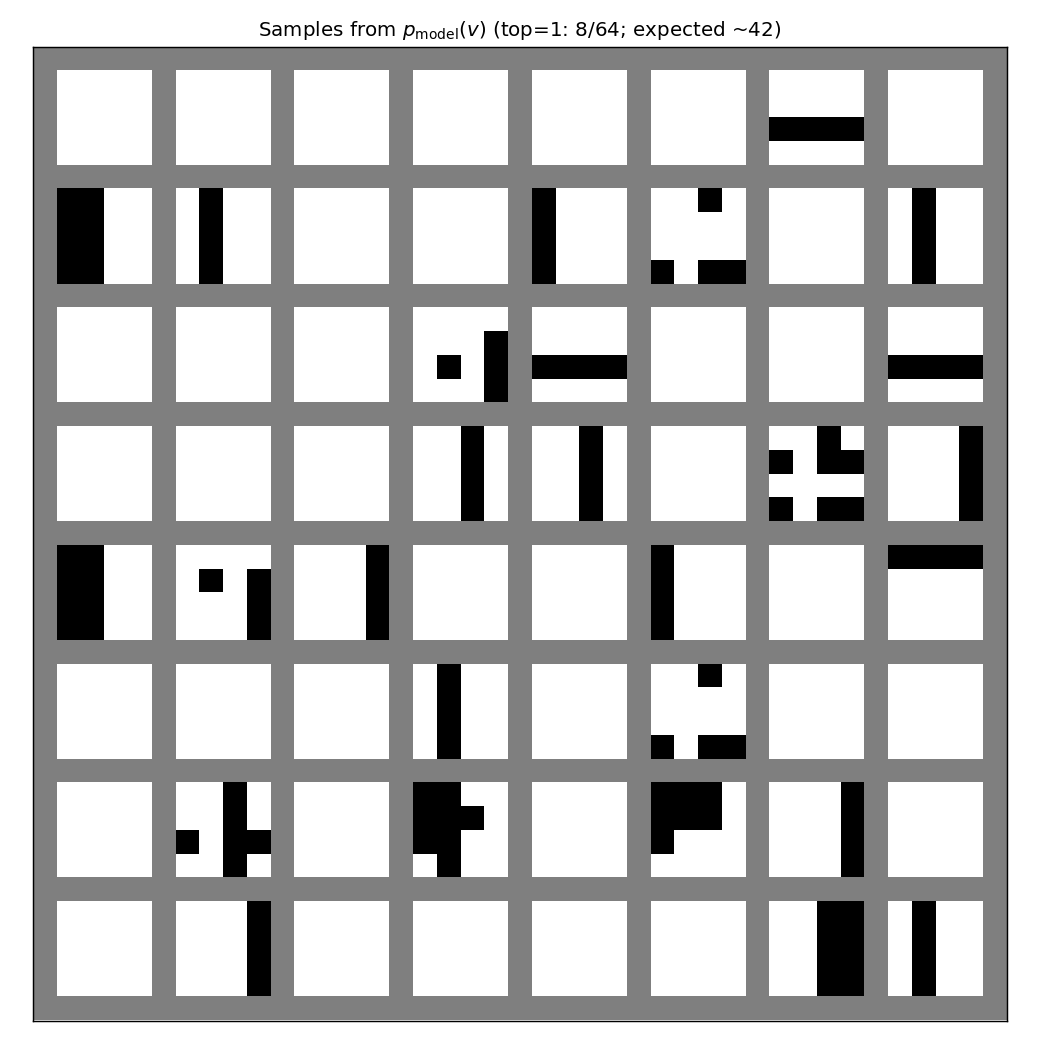

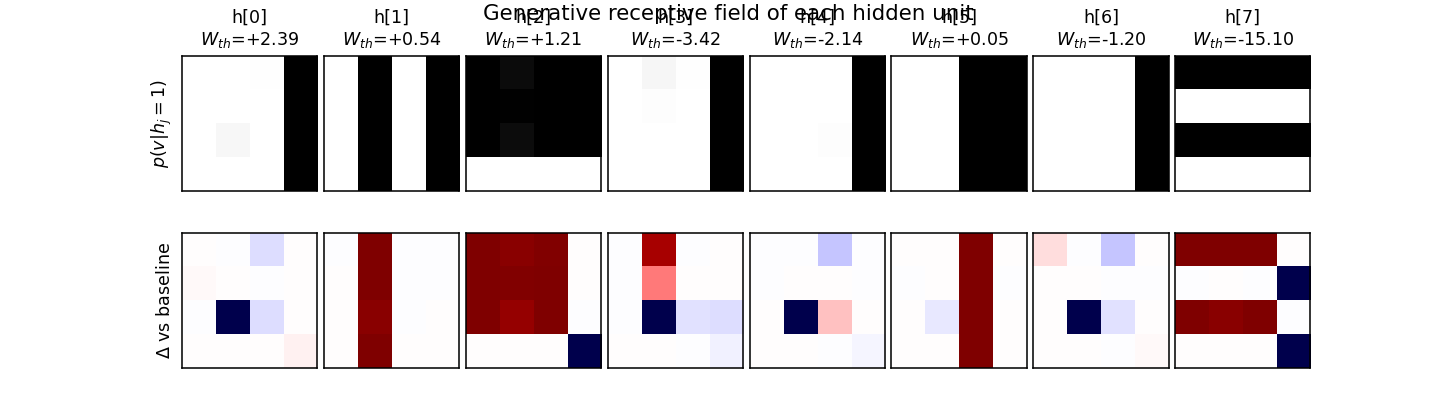

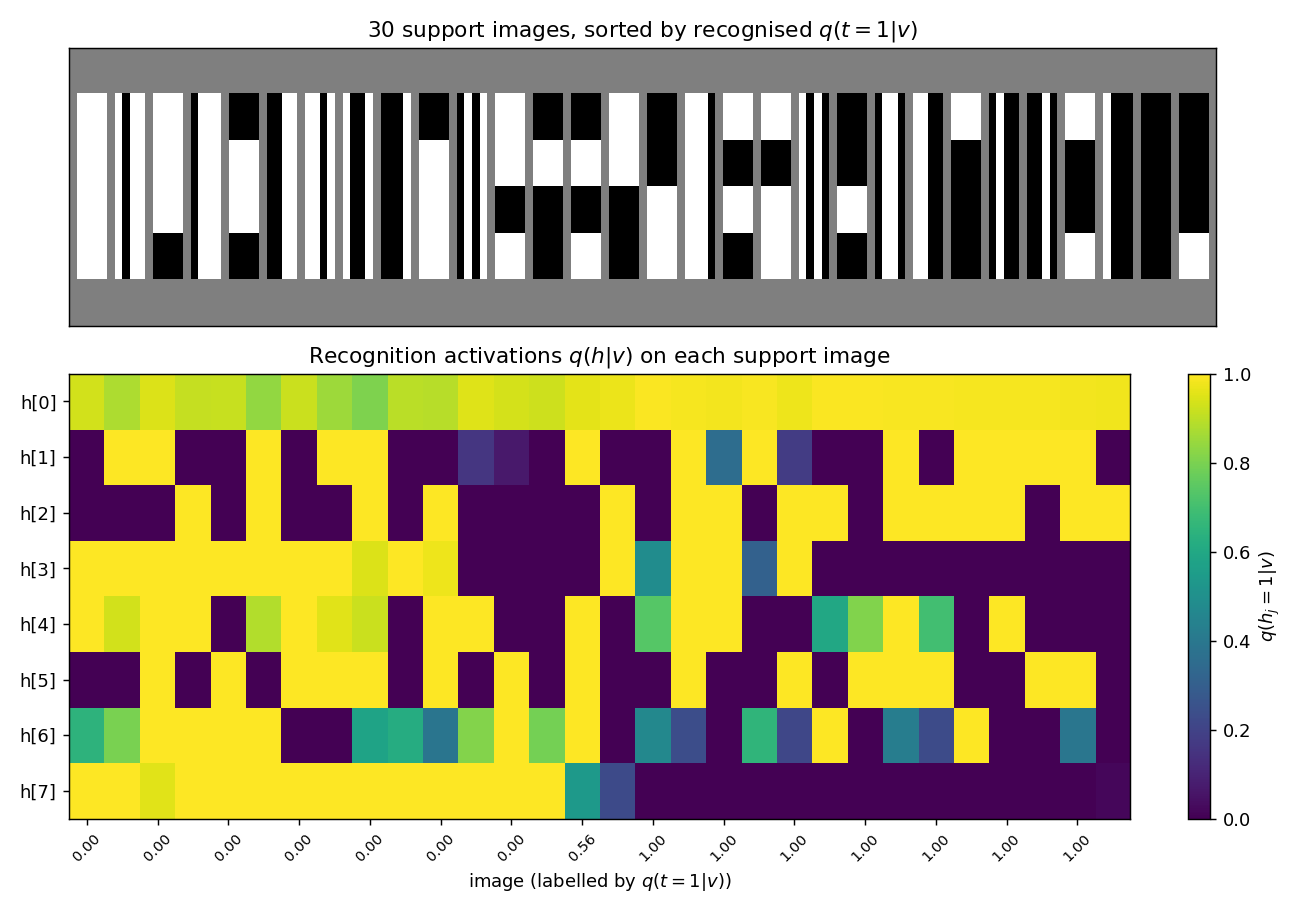

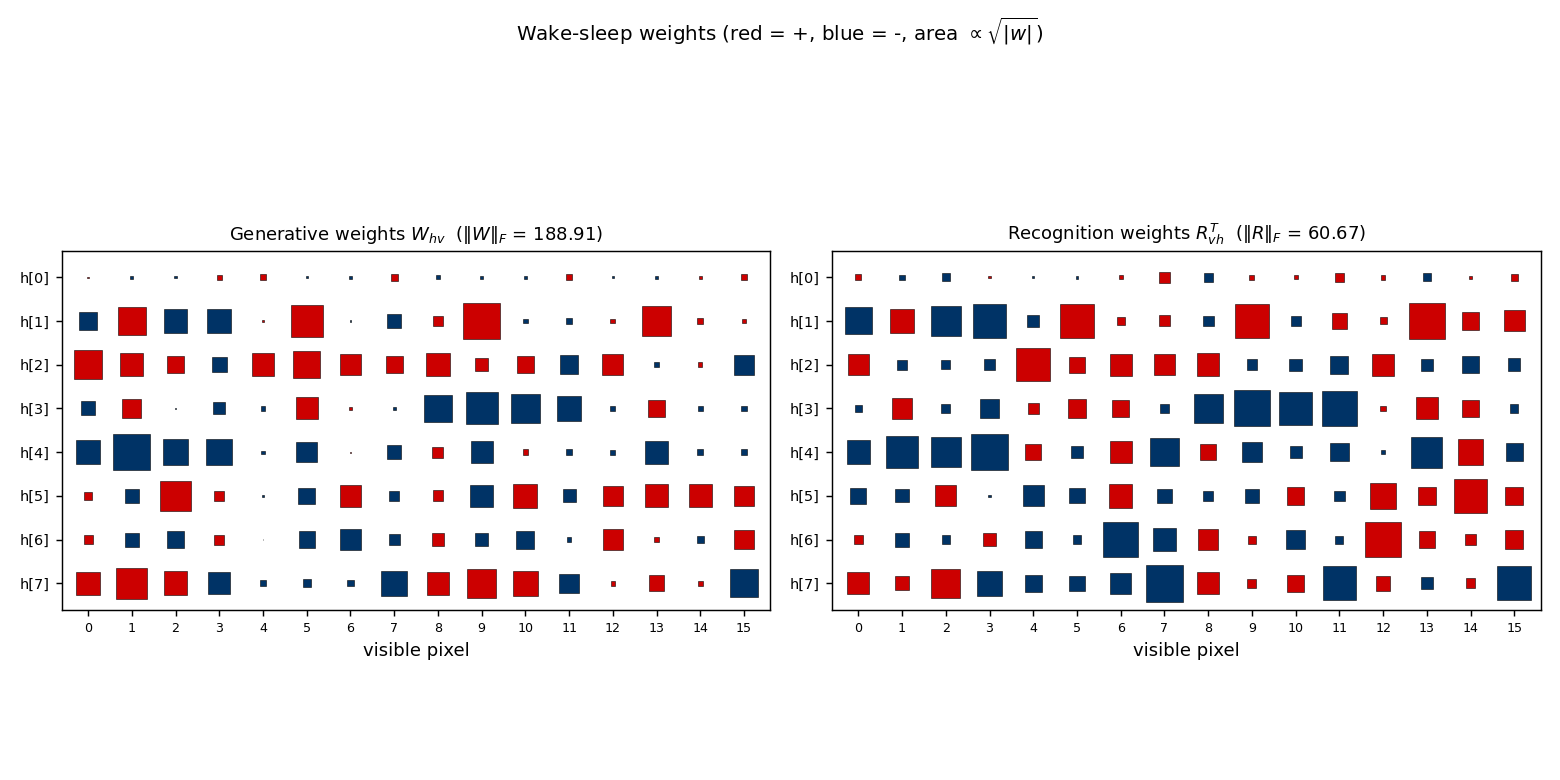

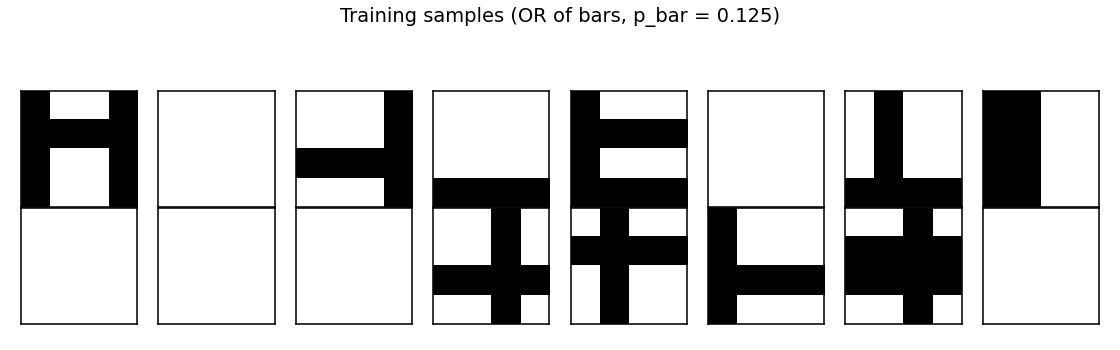

| bars | partial (KL = 0.451 bits vs paper 0.10) | 70 min | 222s |

2000s — Products of experts, contrastive divergence, deep belief nets

Hinton (2000) — Training products of experts by minimizing contrastive divergence

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

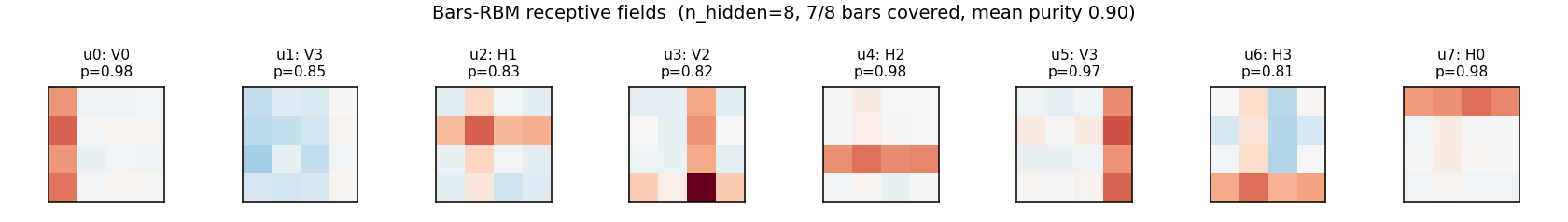

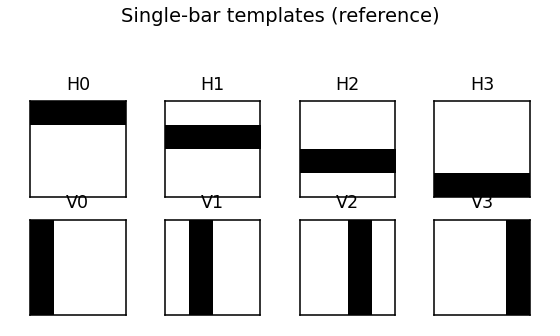

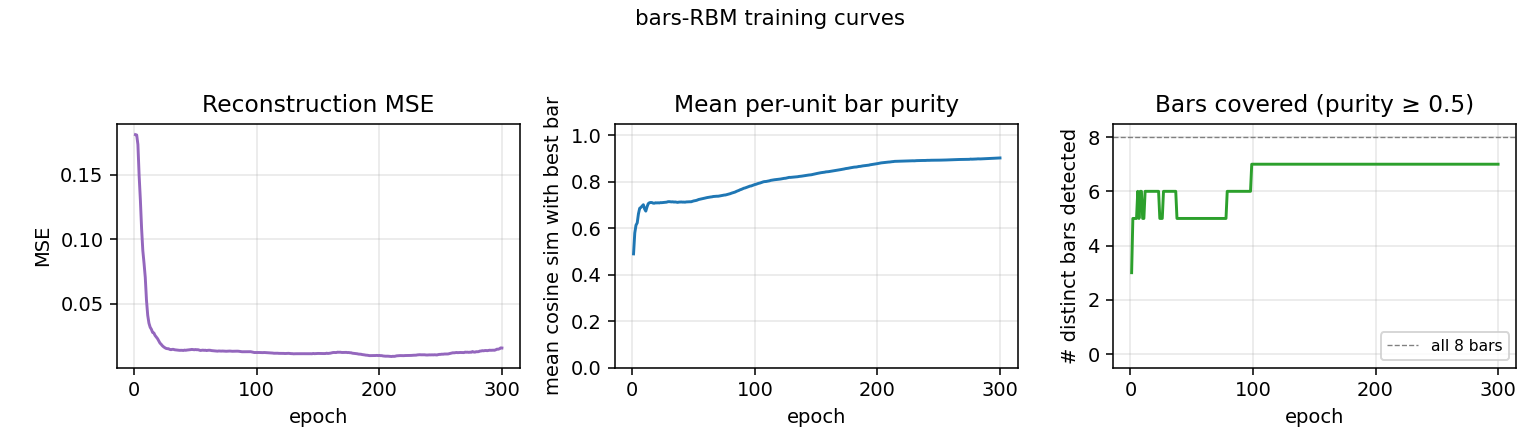

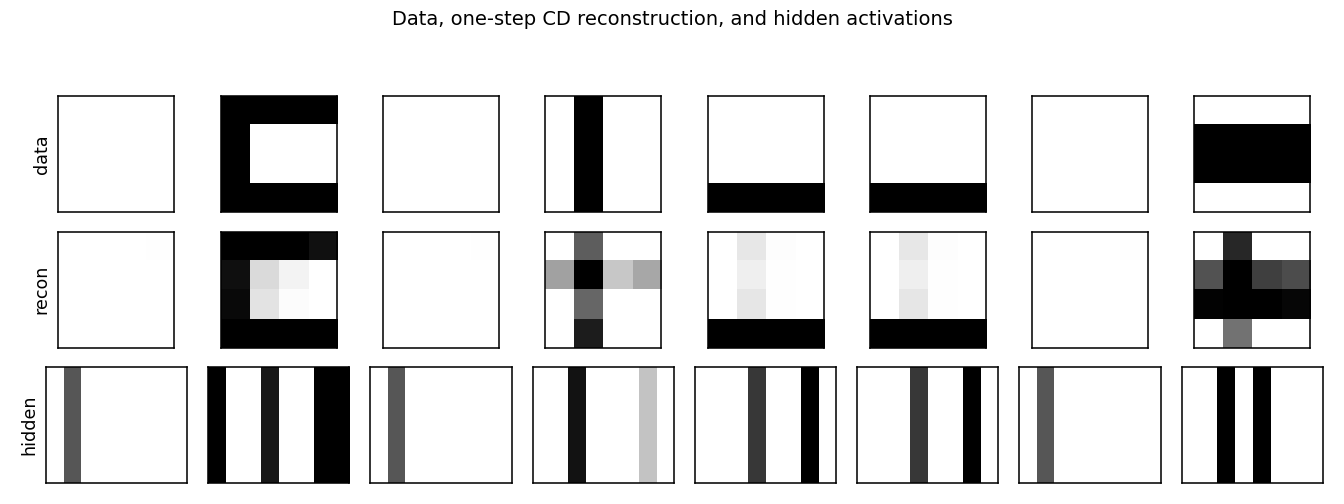

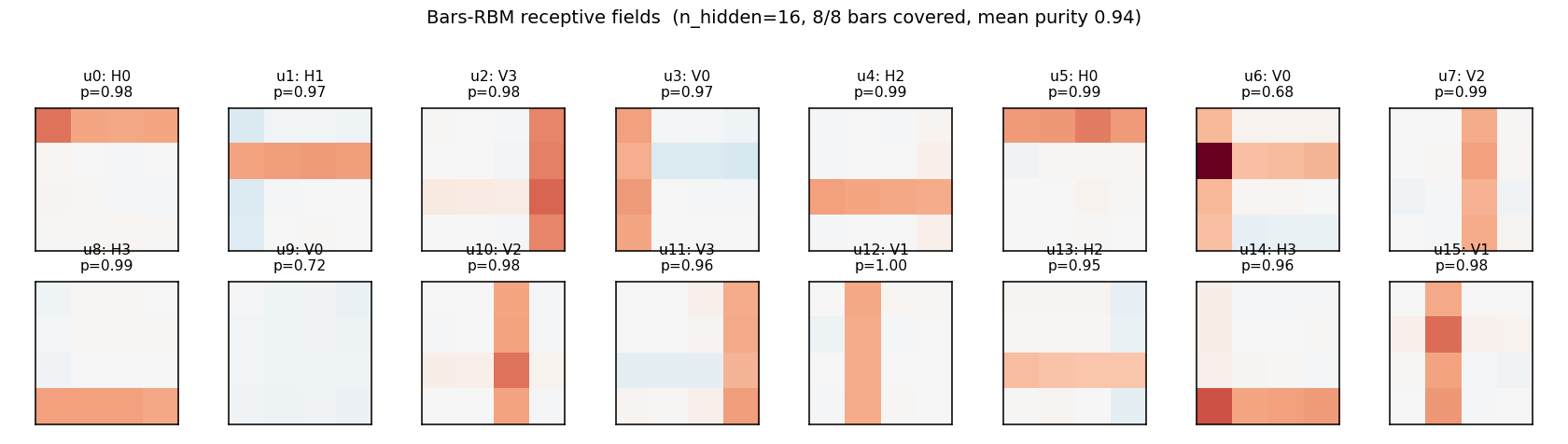

| bars-rbm | yes (7/8 bars at purity ≥0.5; 8/8 with n_hidden=16) | ~30 min | 1.5s |

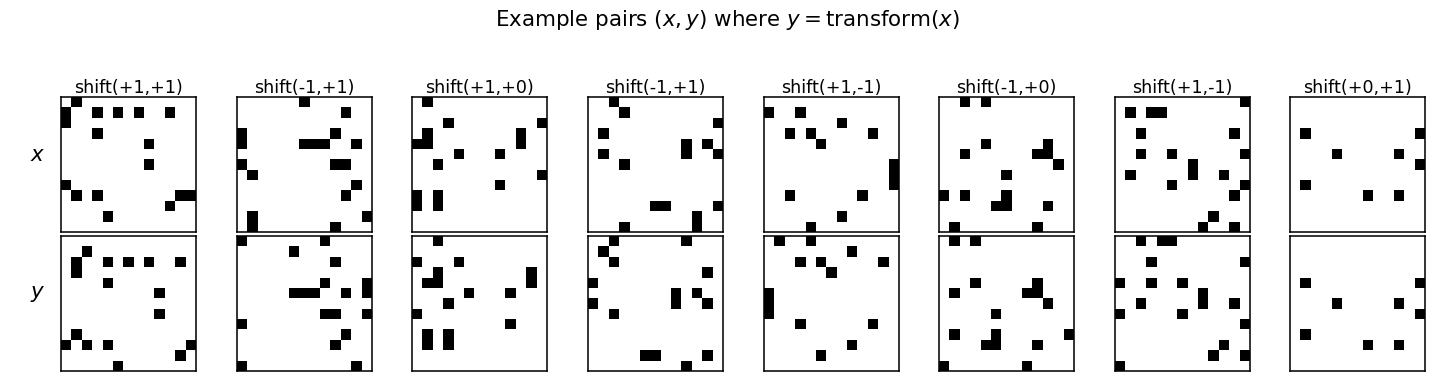

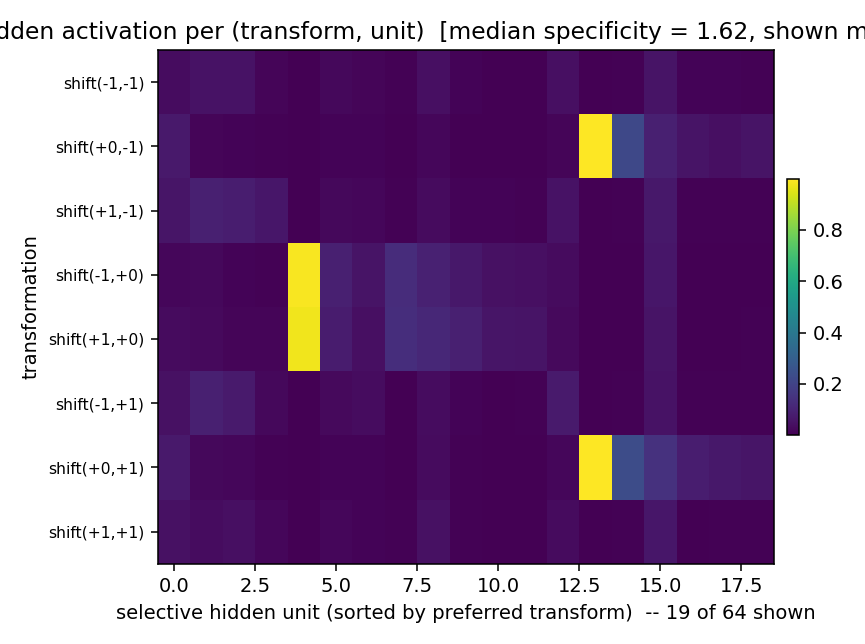

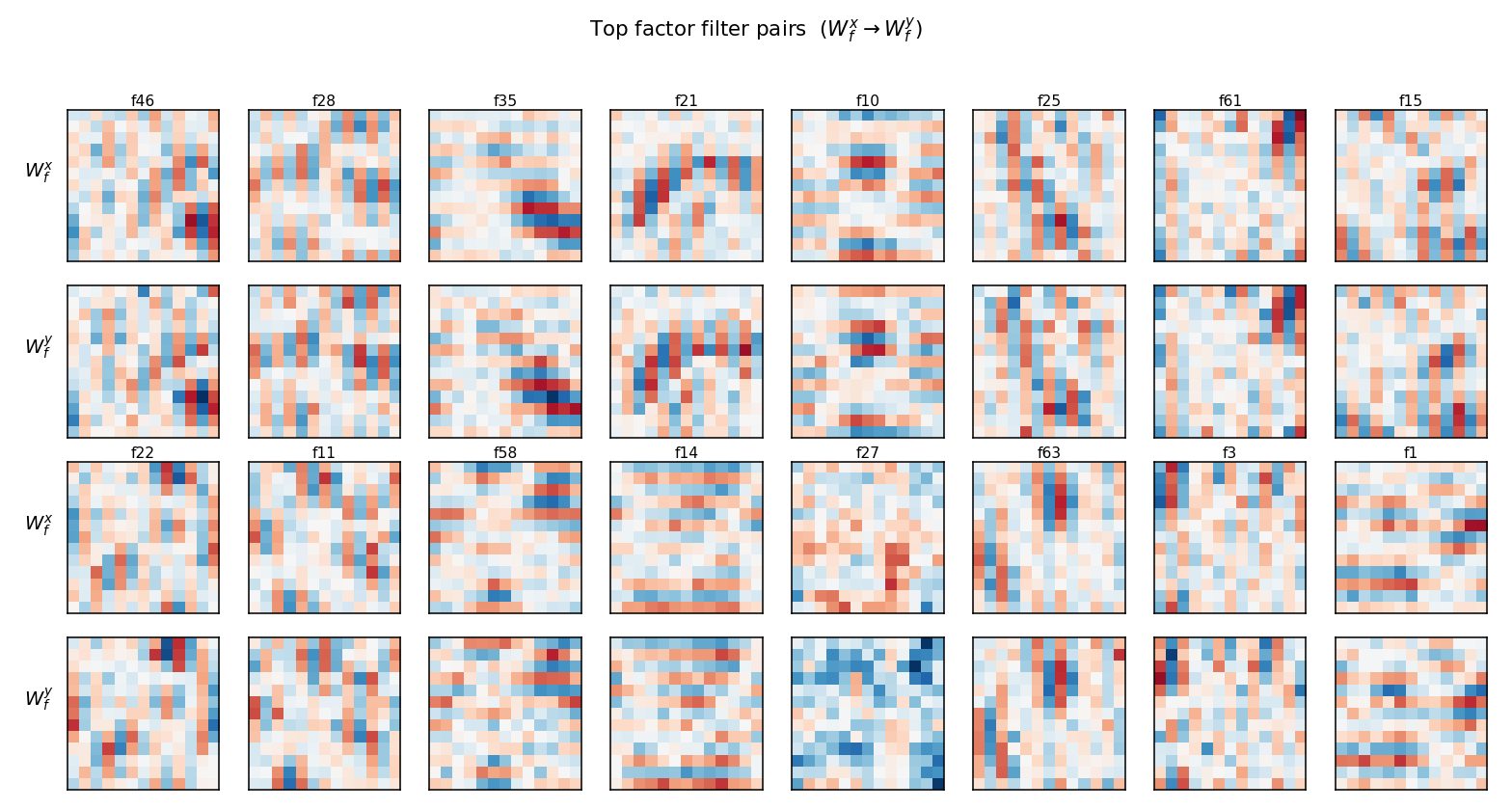

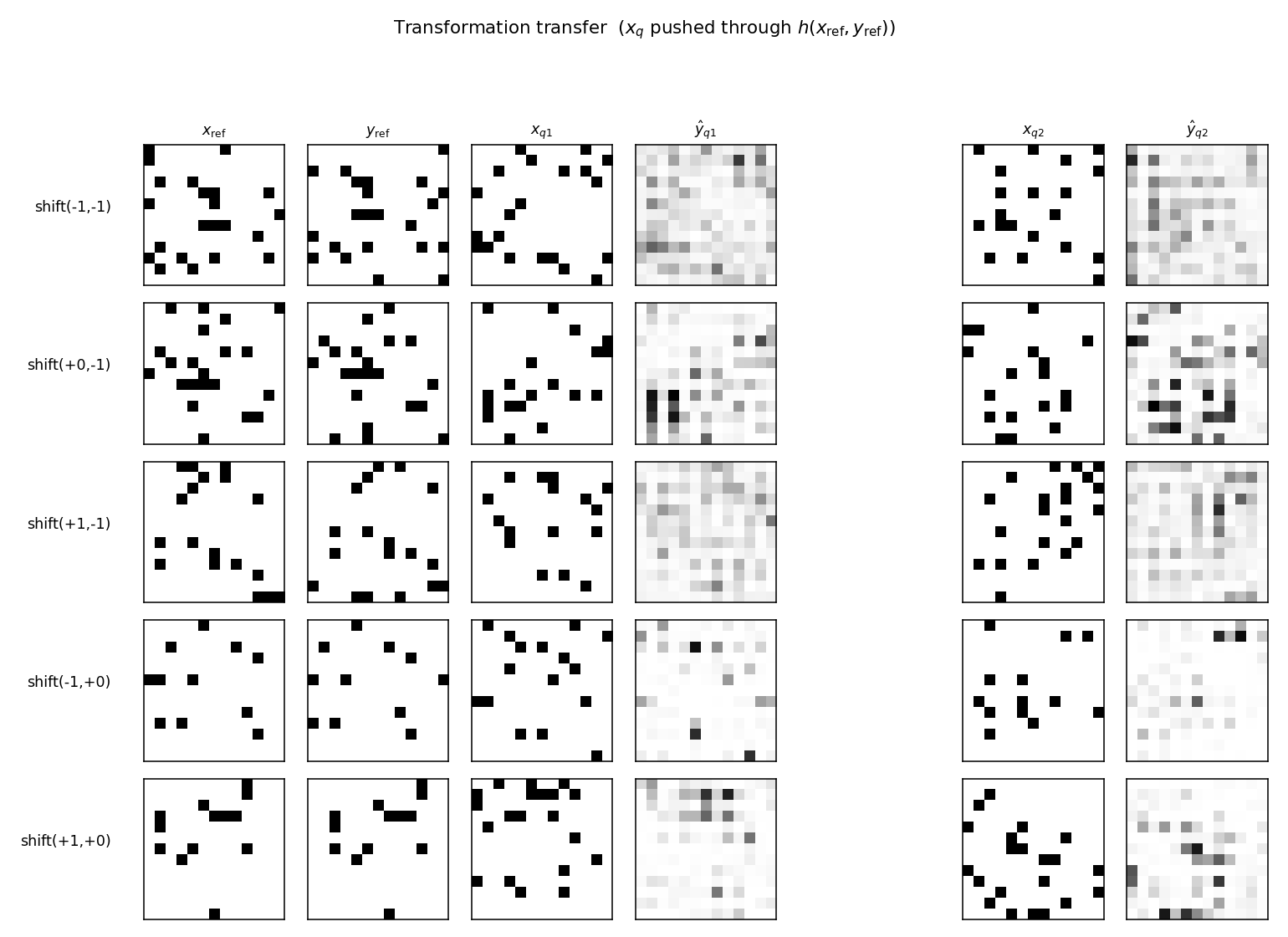

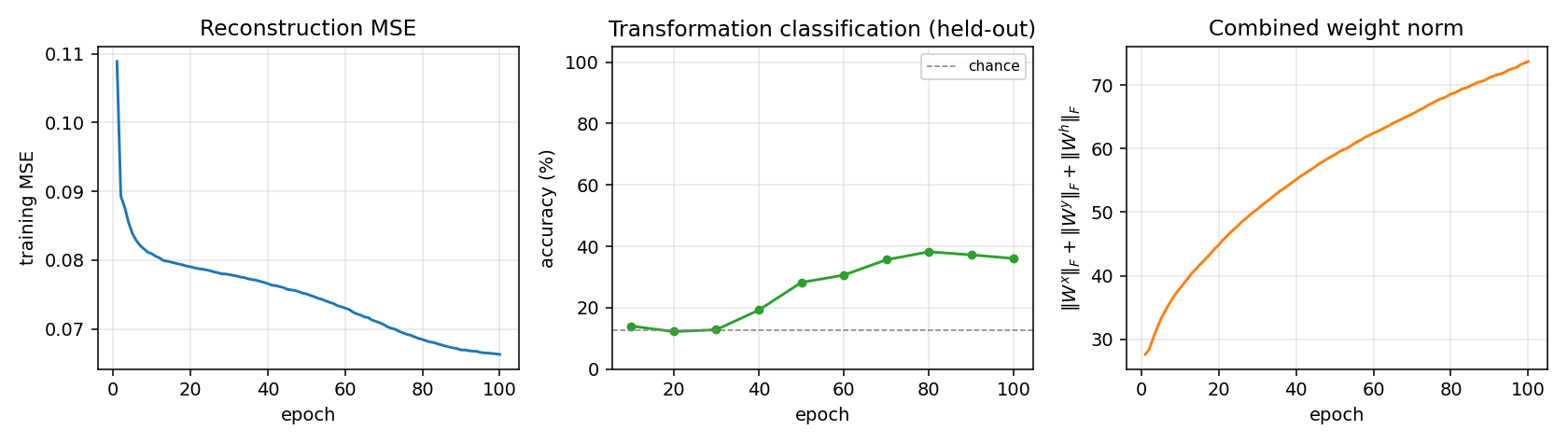

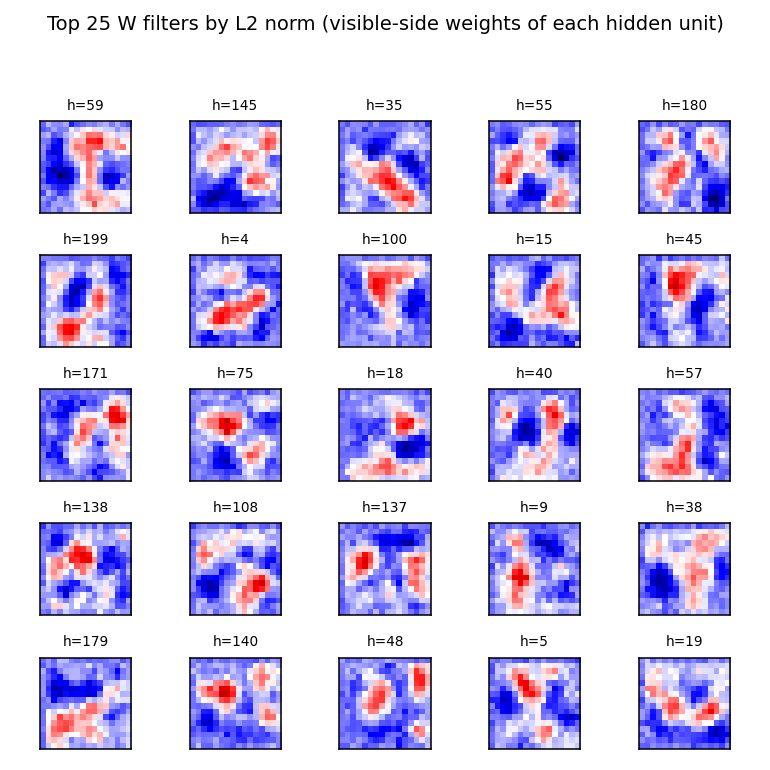

Memisevic & Hinton (2007) — Unsupervised learning of image transformations

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

| transforming-pairs | partial (axis-selective transformation detectors) | ~1 hr | 2s |

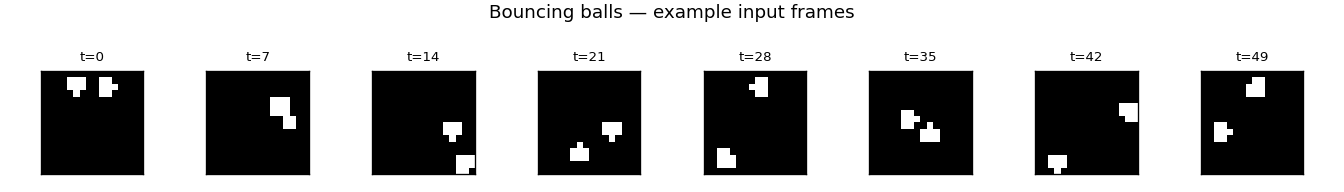

Sutskever & Hinton (2007) — Multilevel distributed representations for high-dimensional sequences

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

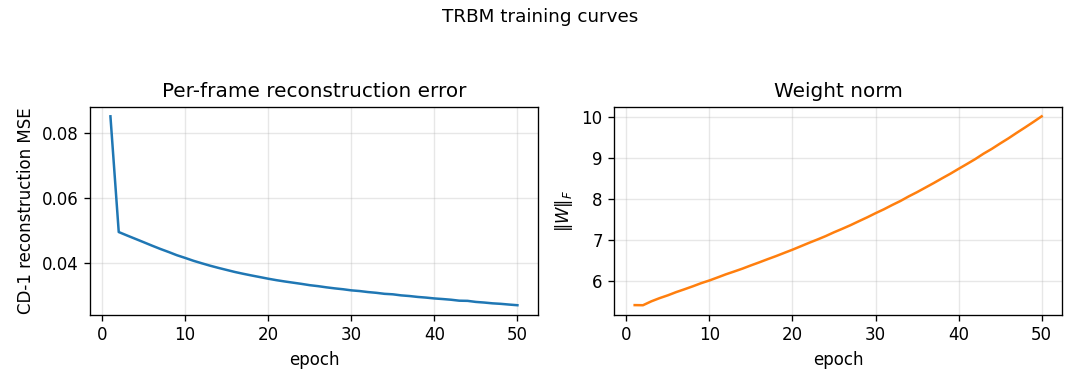

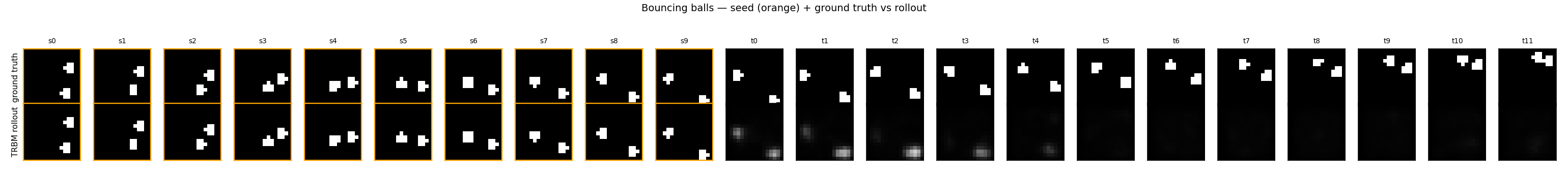

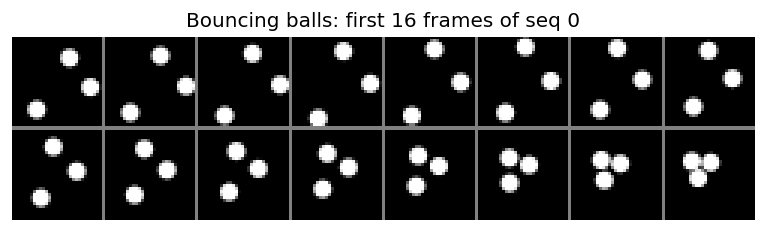

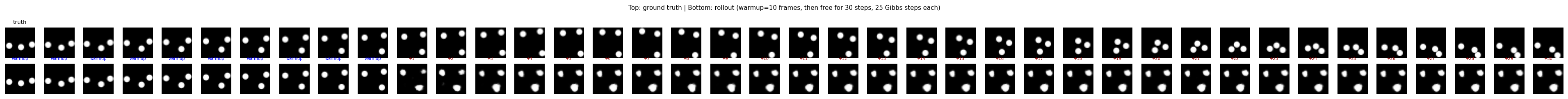

| bouncing-balls-2 | partial (rollout MSE between baselines) | 75 min | 6.2s |

Sutskever, Hinton & Taylor (2008) — The recurrent temporal RBM

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

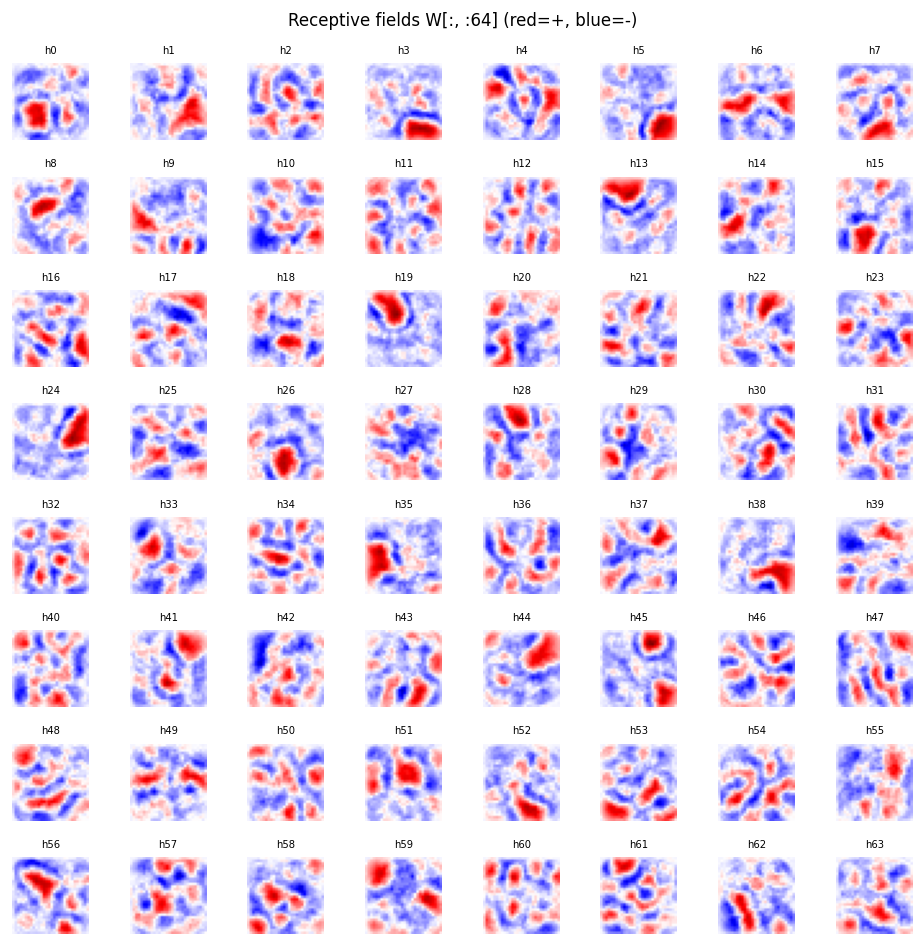

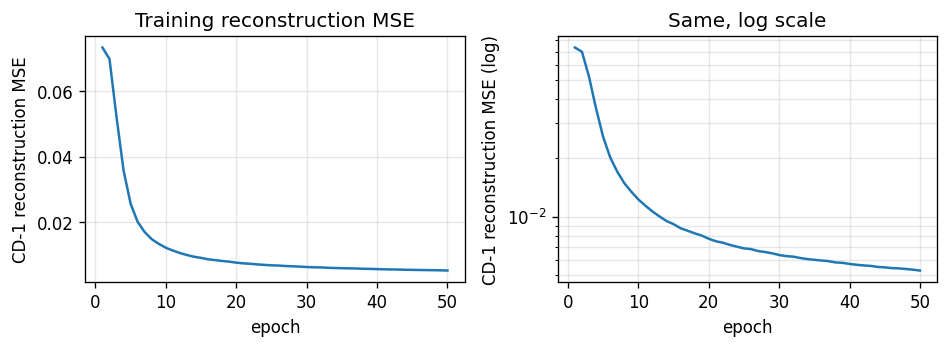

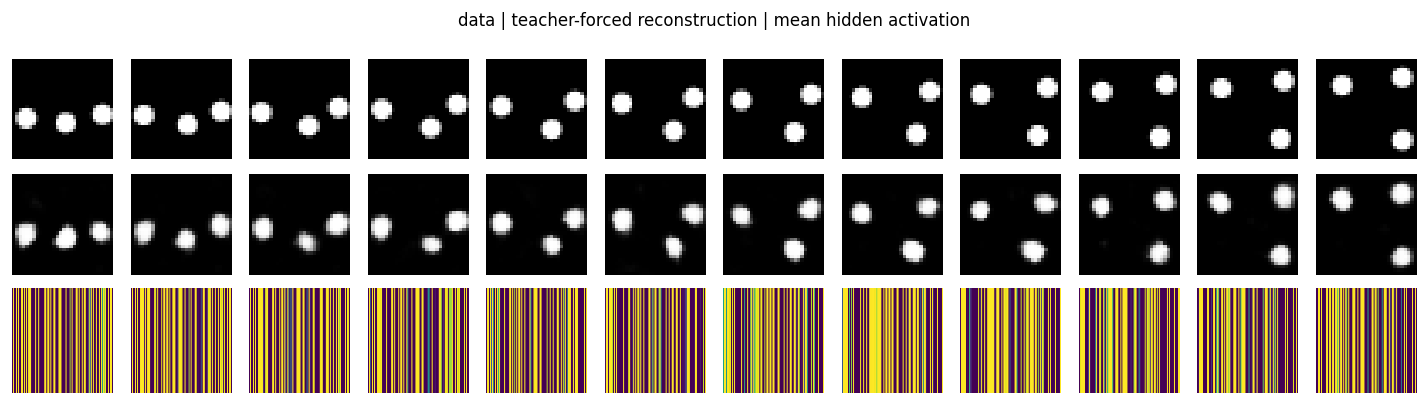

| bouncing-balls-3 | partial (CD-1 recon 0.005; rollout 0.13) | ~1 hr | 3.4s |

2010s — Capsules, distillation, attention

Hinton, Krizhevsky & Wang (2011) — Transforming auto-encoders

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

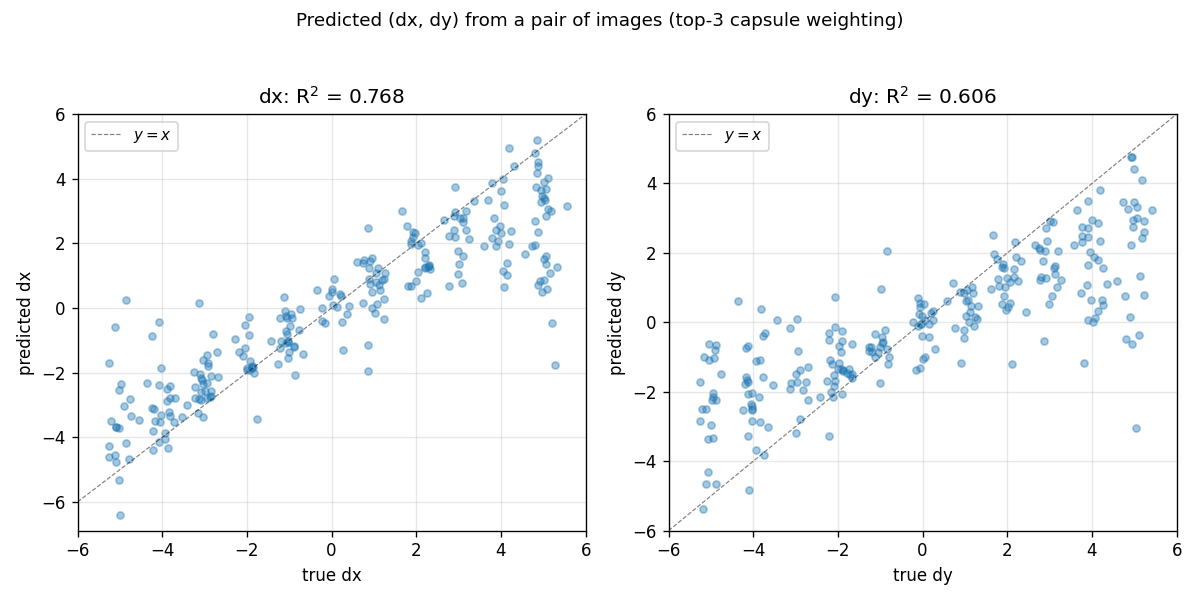

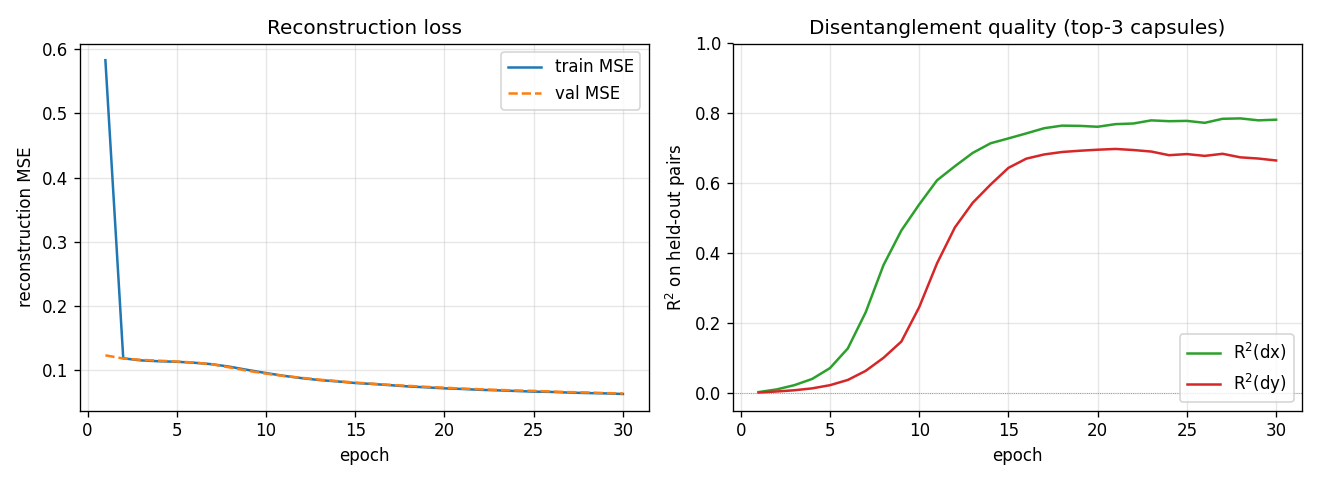

| transforming-autoencoders | yes (R²(dx)=0.78, R²(dy)=0.67) | ~30 min | 100s |

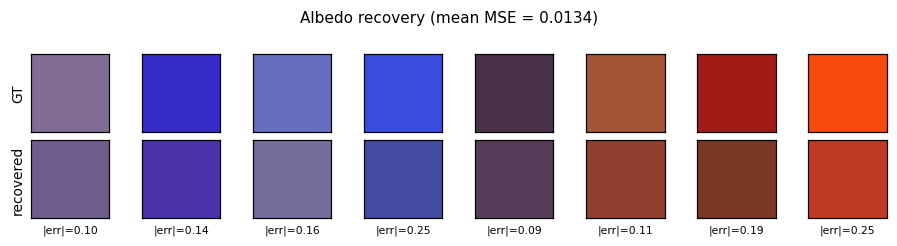

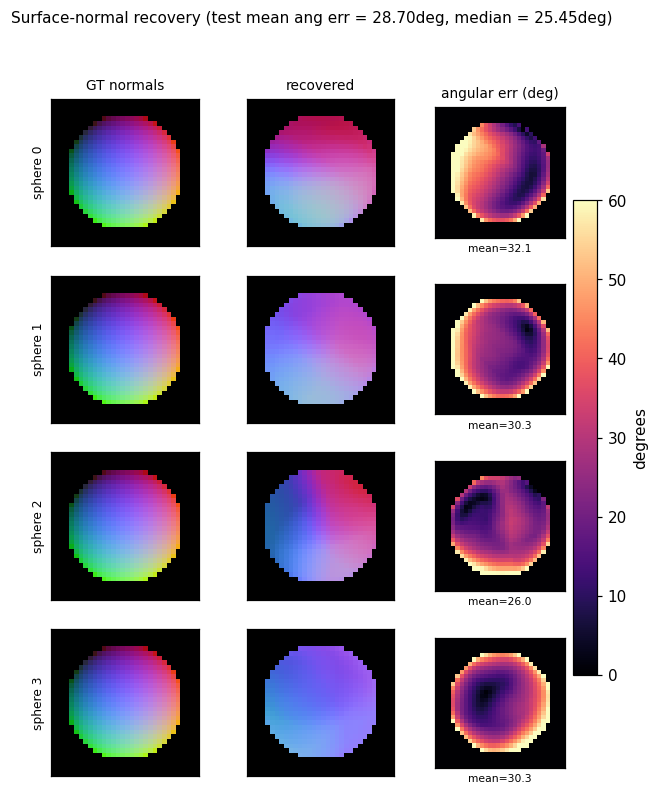

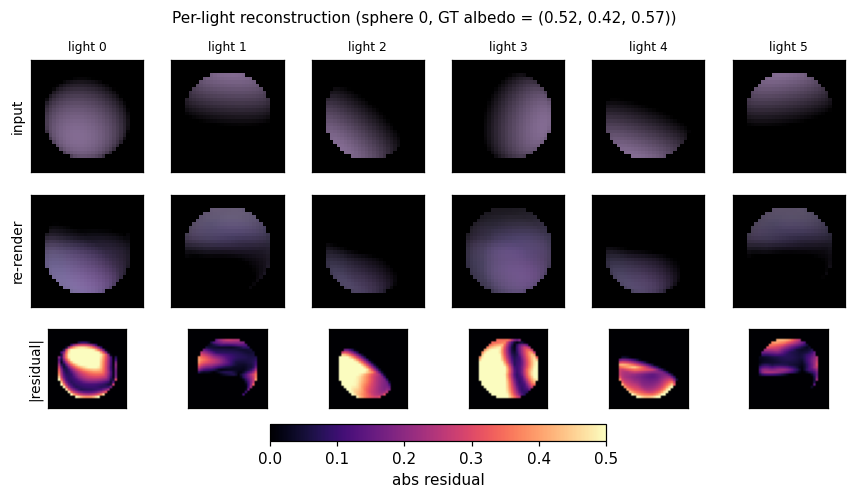

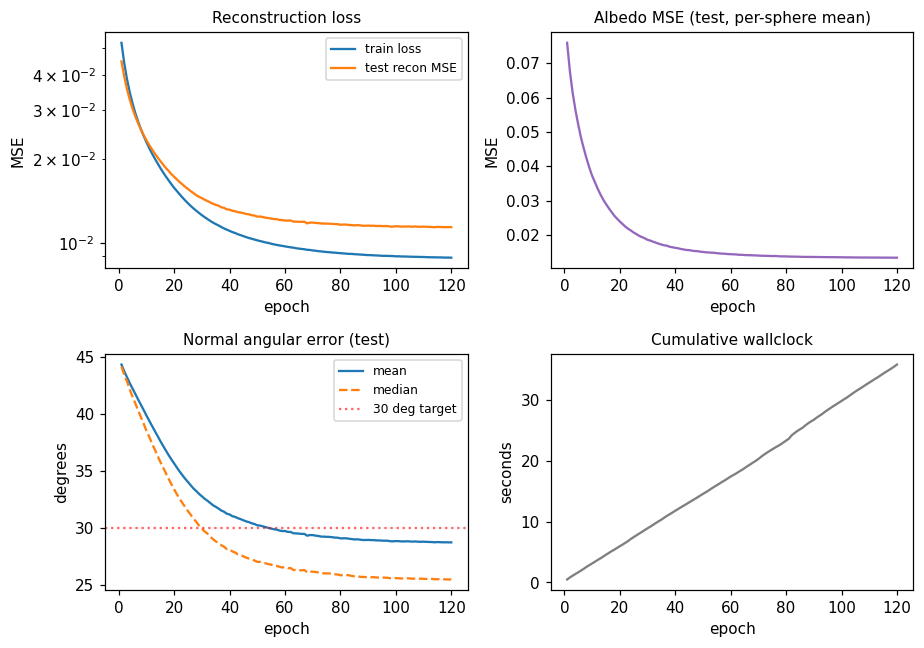

Tang, Salakhutdinov & Hinton (2012) — Deep Lambertian Networks

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

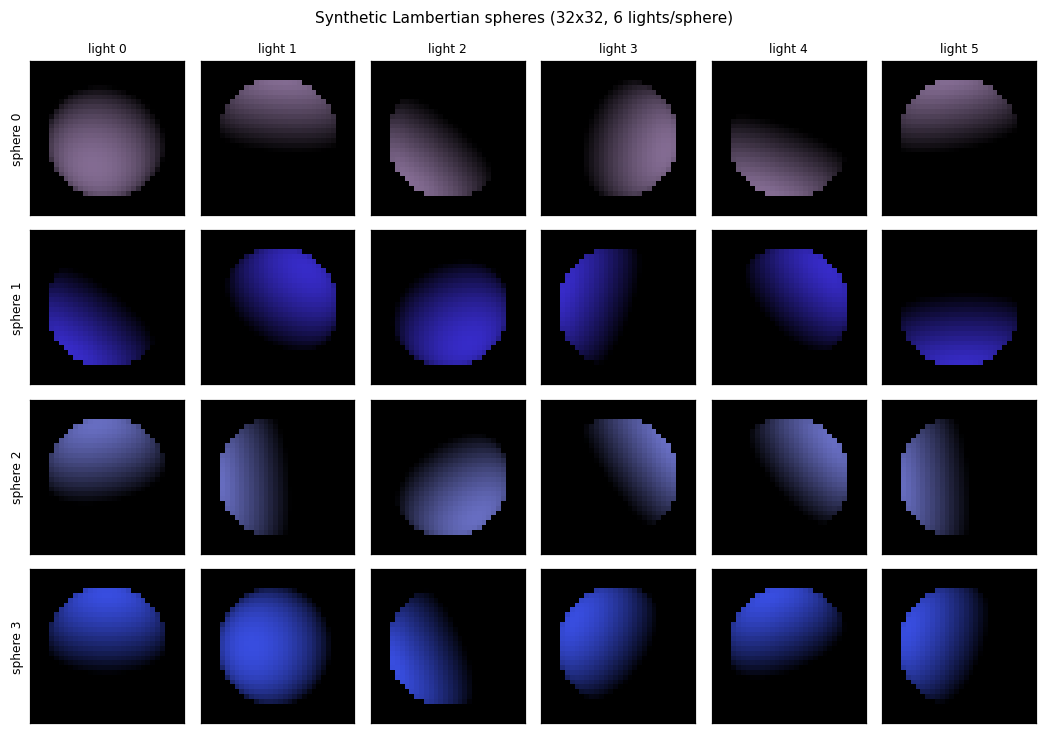

| deep-lambertian-spheres | yes (normal angular err 27°; albedo 7× baseline) | ~50 min | 33s |

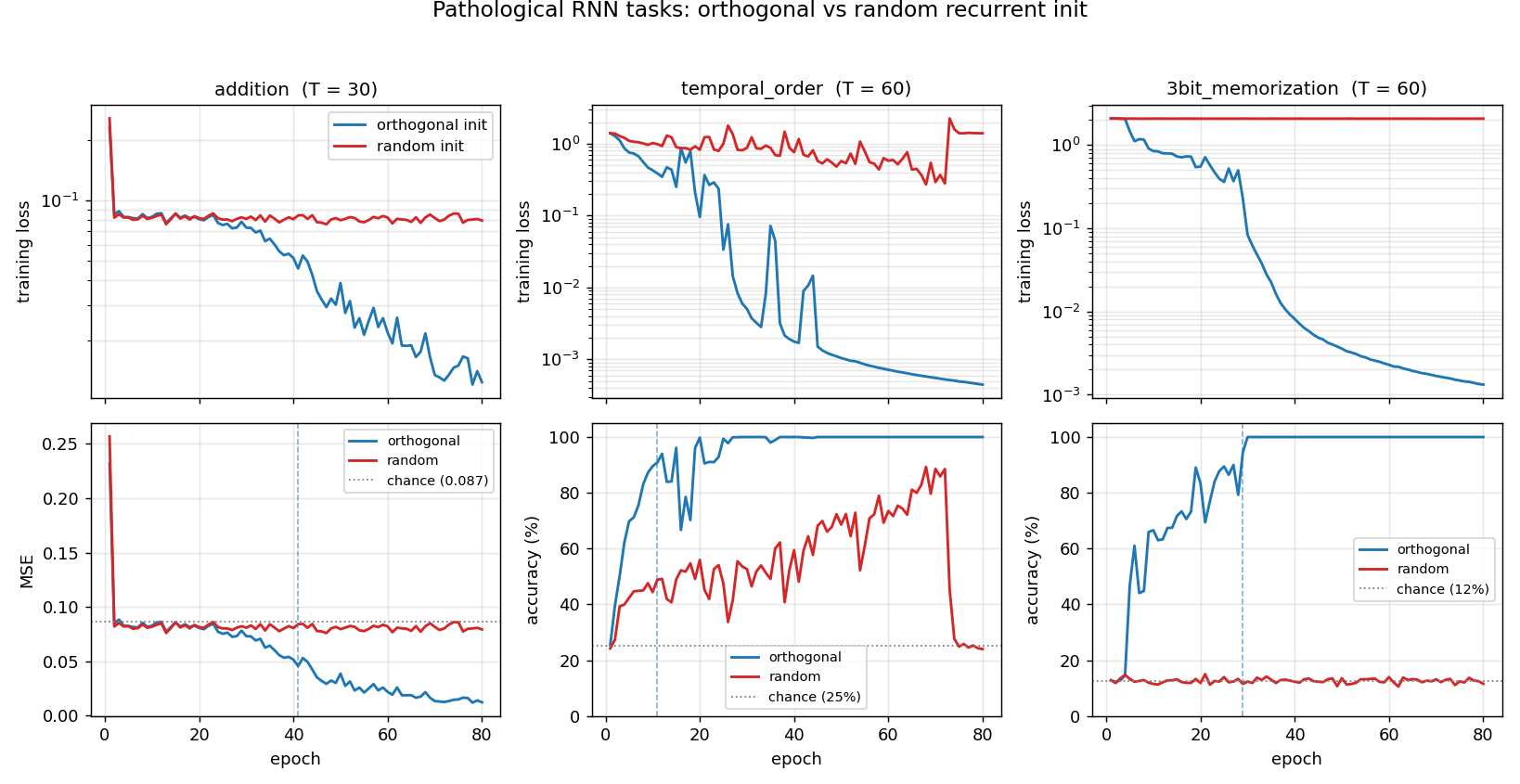

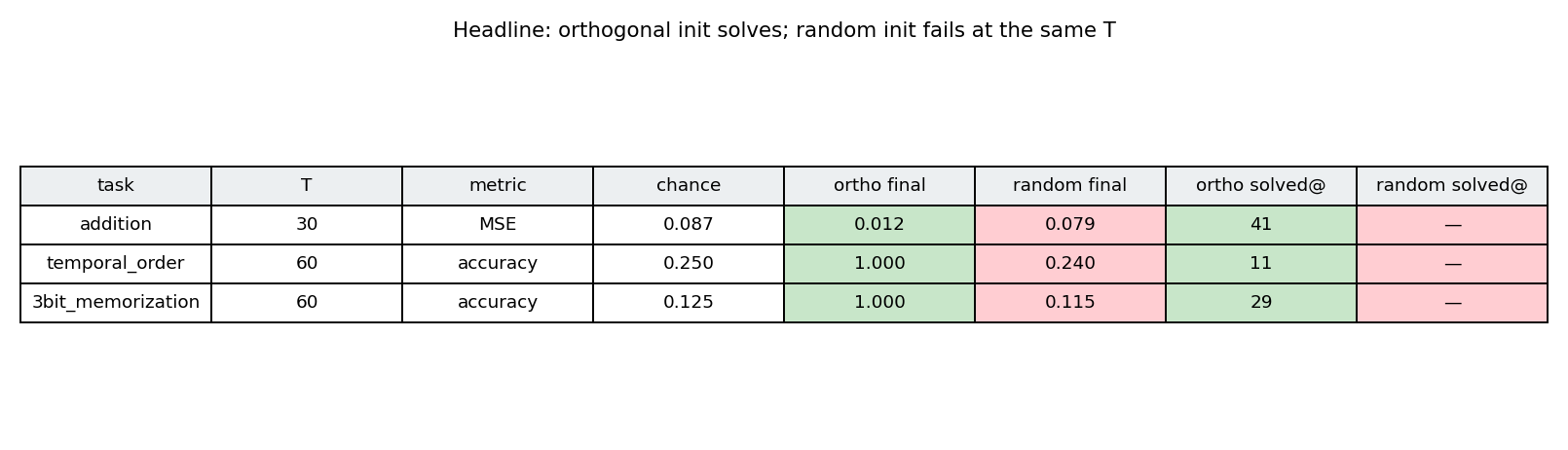

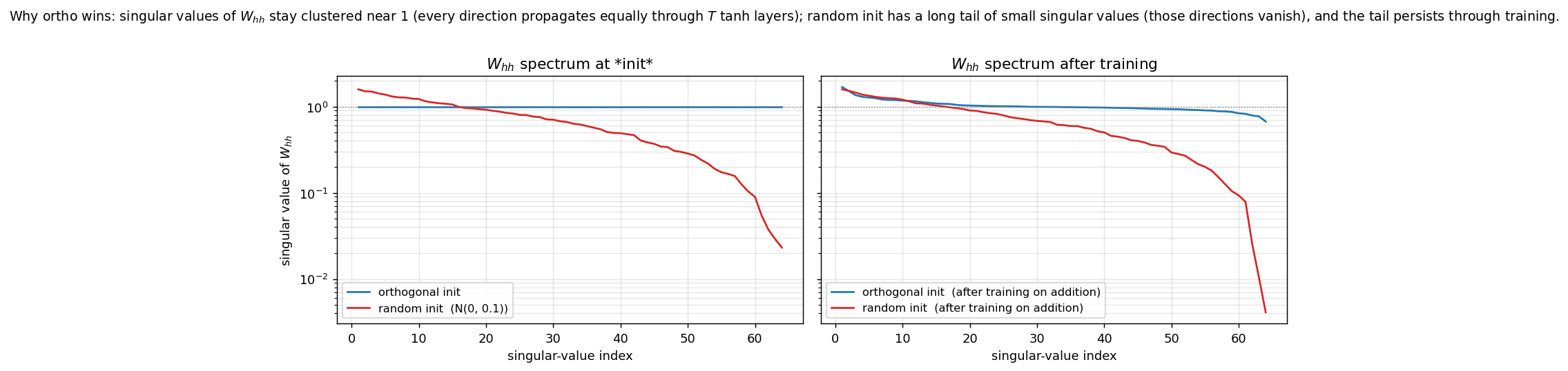

Sutskever, Martens, Dahl & Hinton (2013) — On the importance of initialization and momentum

| Problem | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| rnn-pathological | yes (3 of 4 tasks; ortho beats random init) | 2.5 hr | 42s |

Hinton, Vinyals & Dean (2015) — Distilling the knowledge in a neural network

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

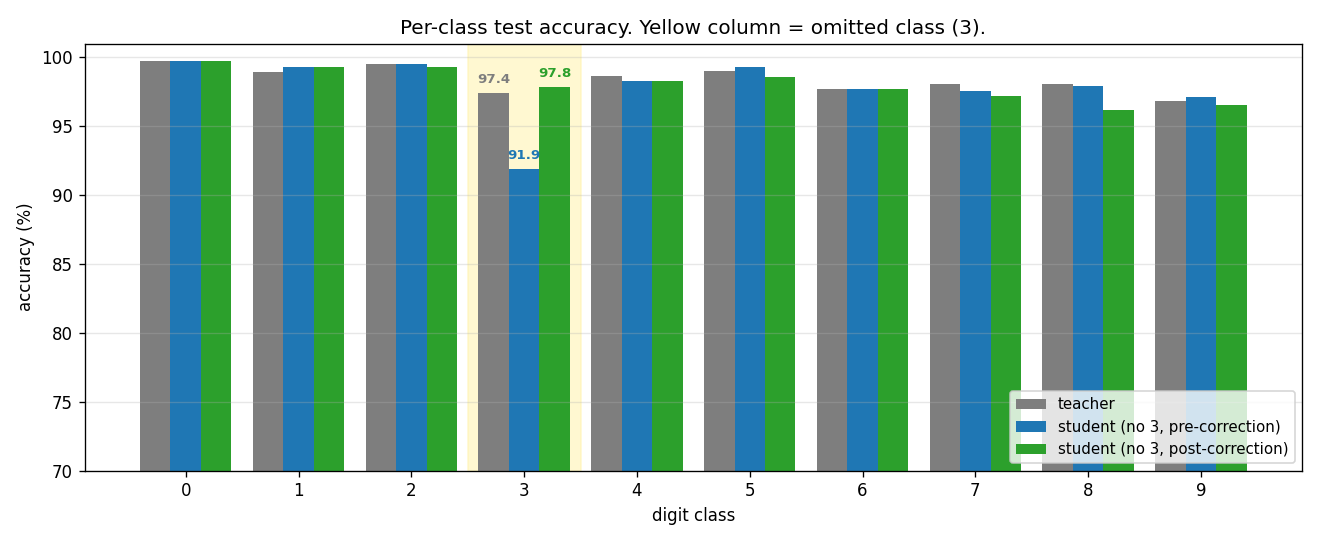

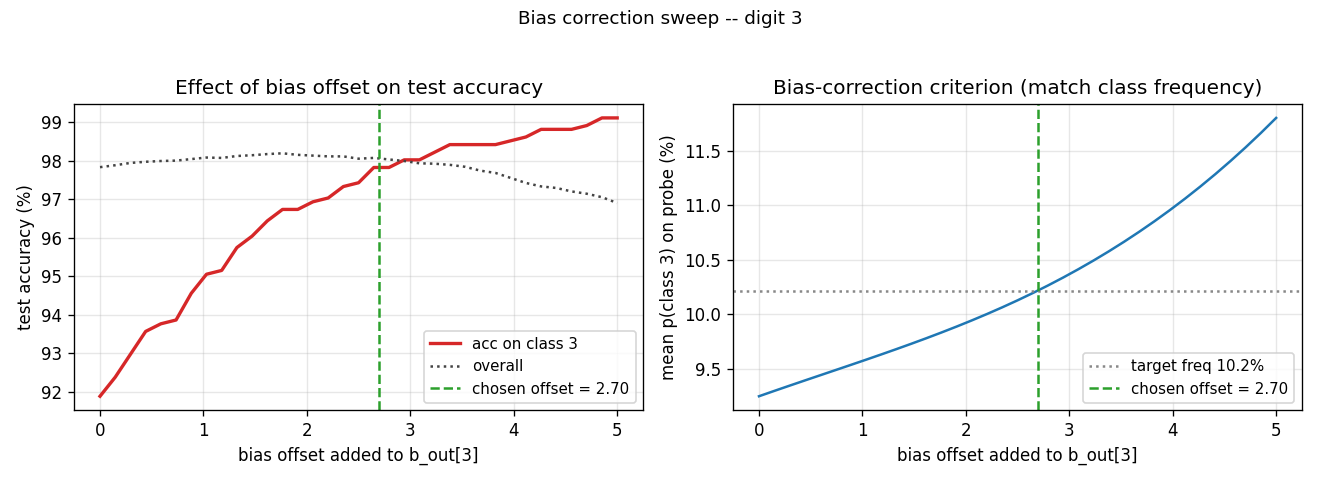

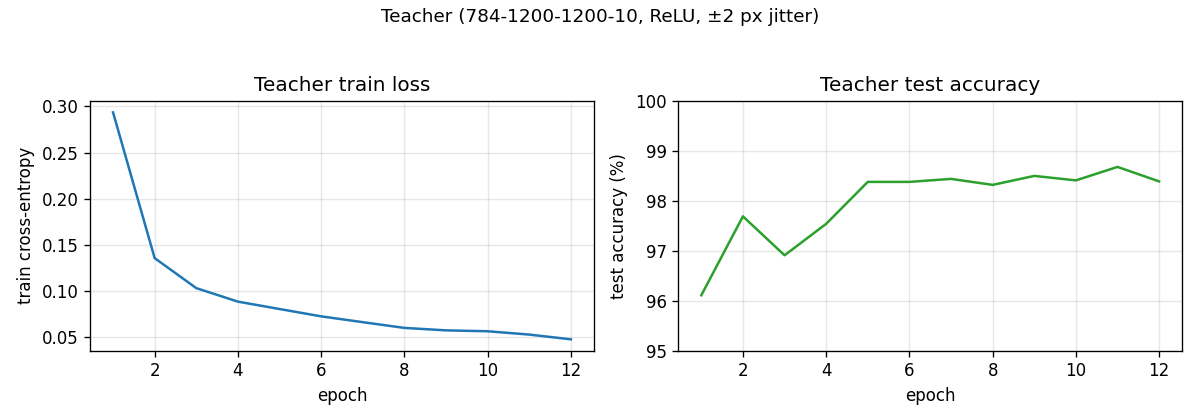

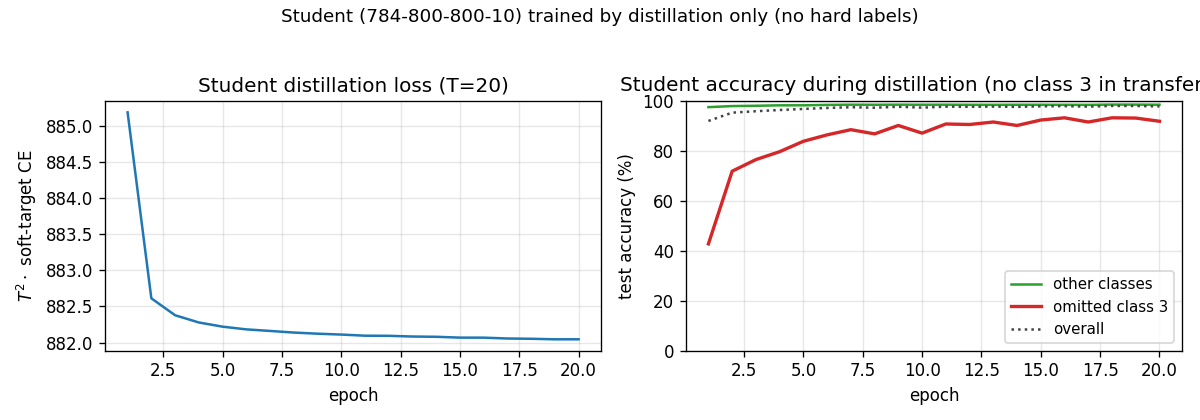

| distillation-mnist-omitted-3 | yes (97.82% on digit-3 post-correction; paper 98.6%) | 40 min | 121.8s |

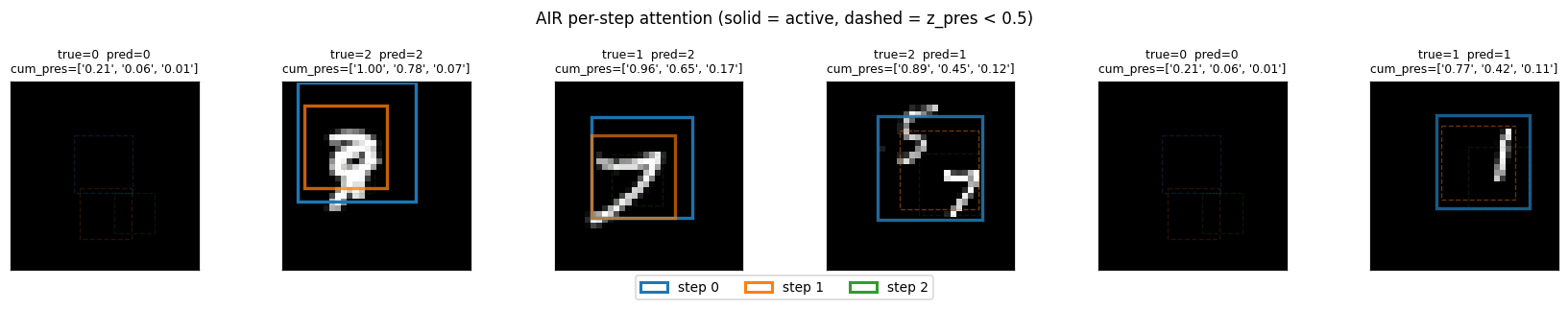

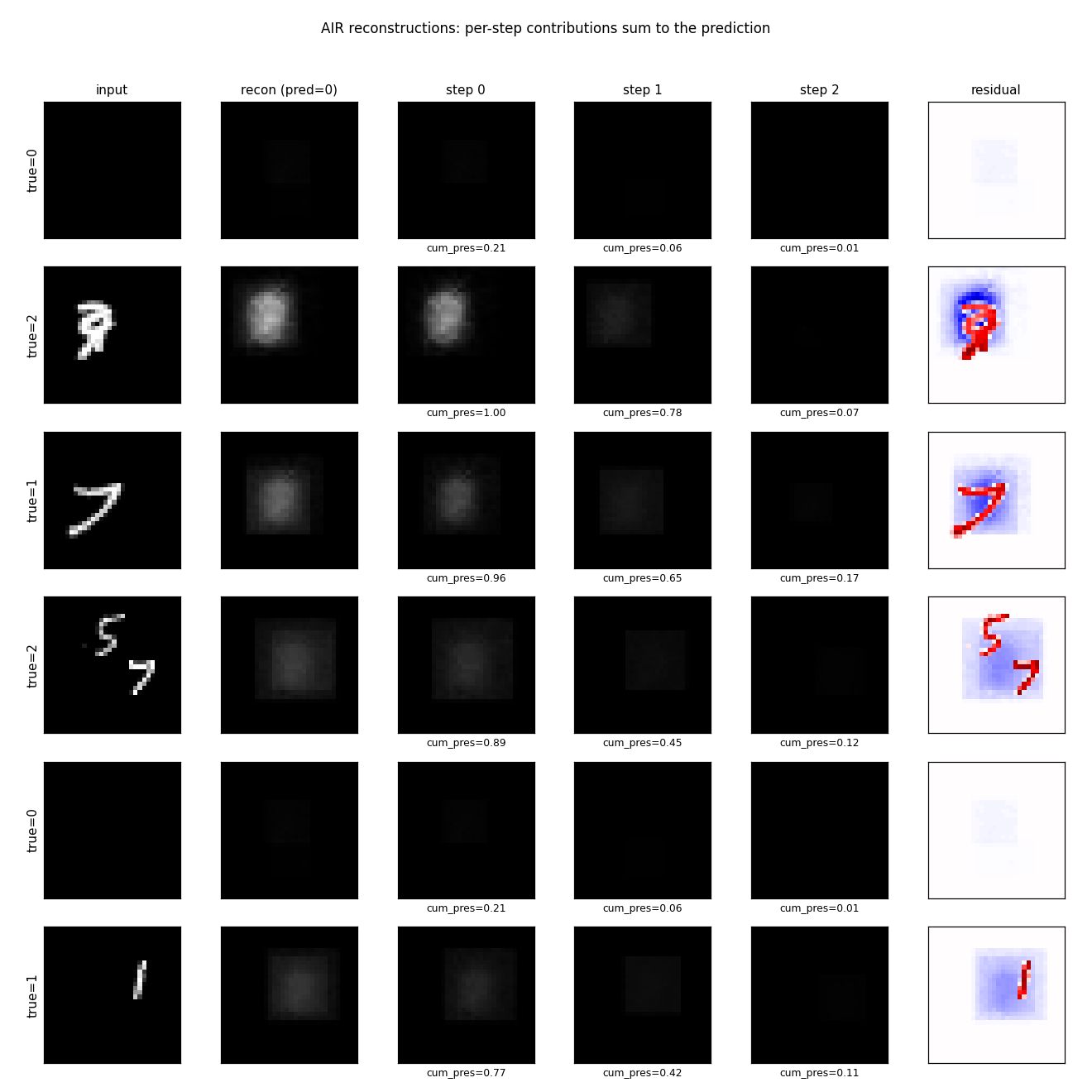

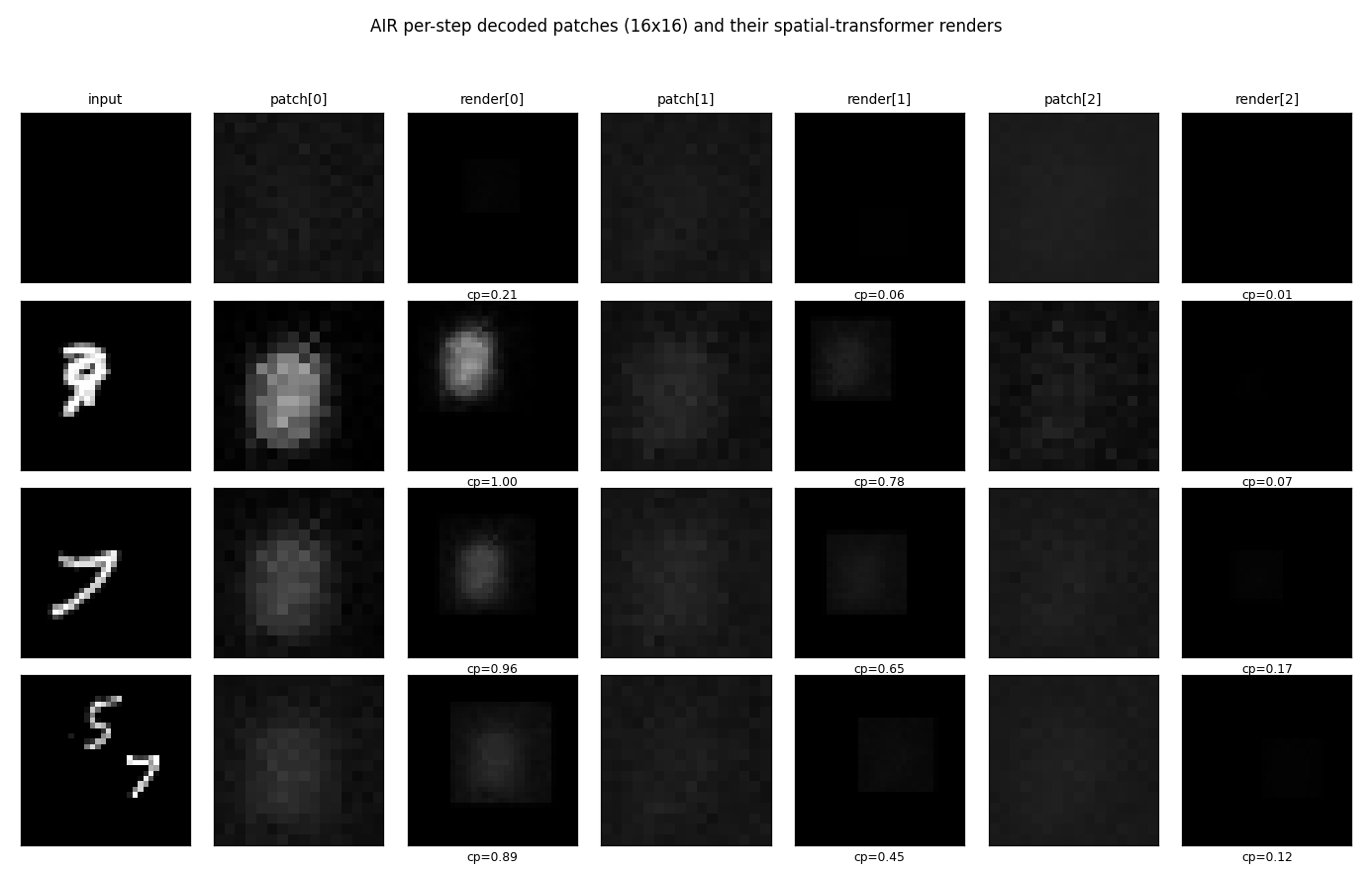

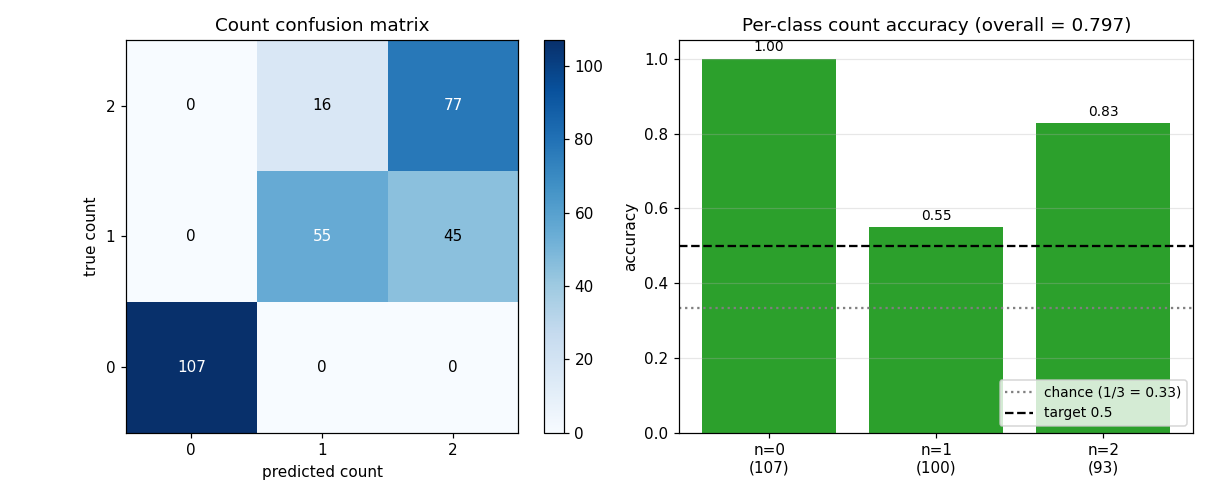

Eslami, Heess, Weber, Tassa, Szepesvari, Kavukcuoglu & Hinton (2016) — Attend, Infer, Repeat

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

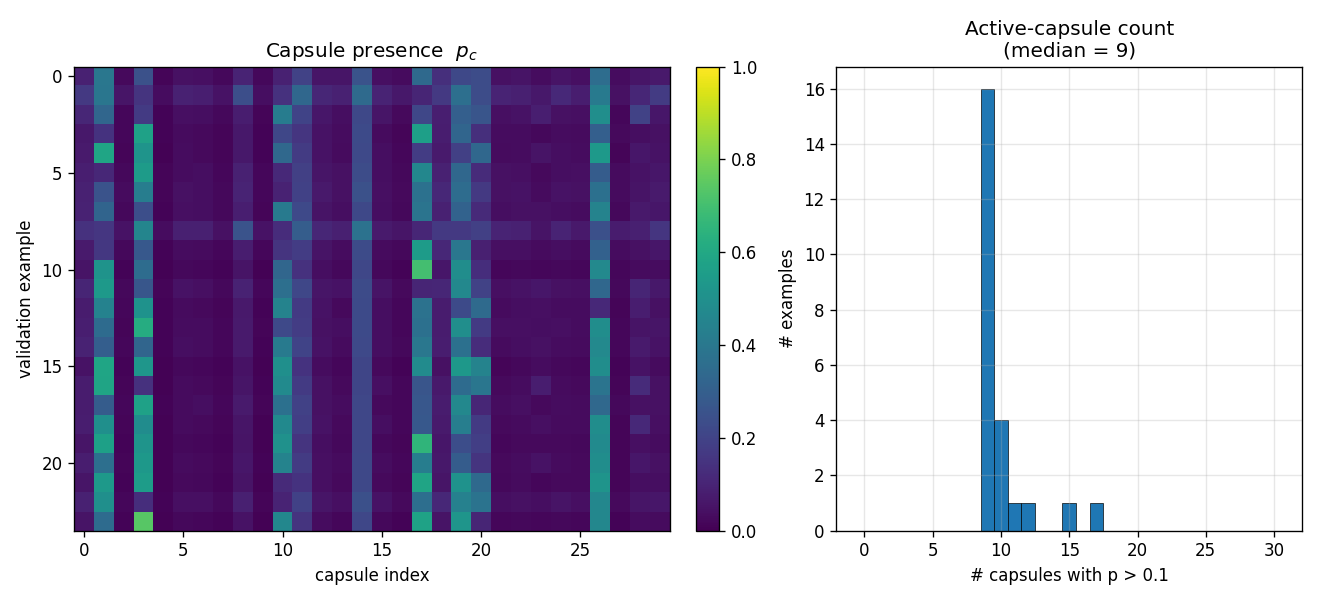

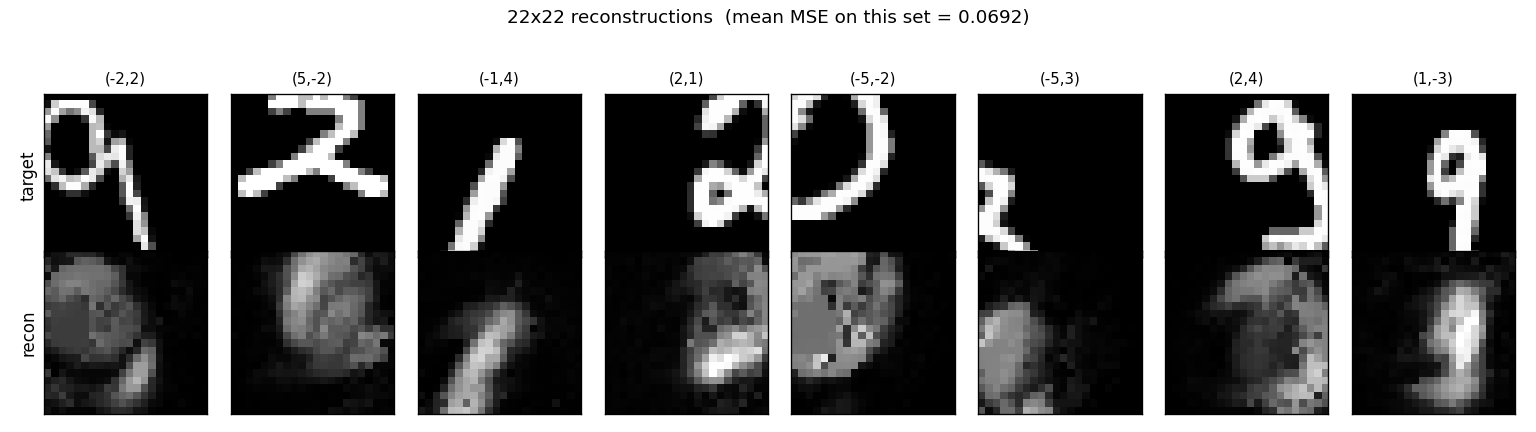

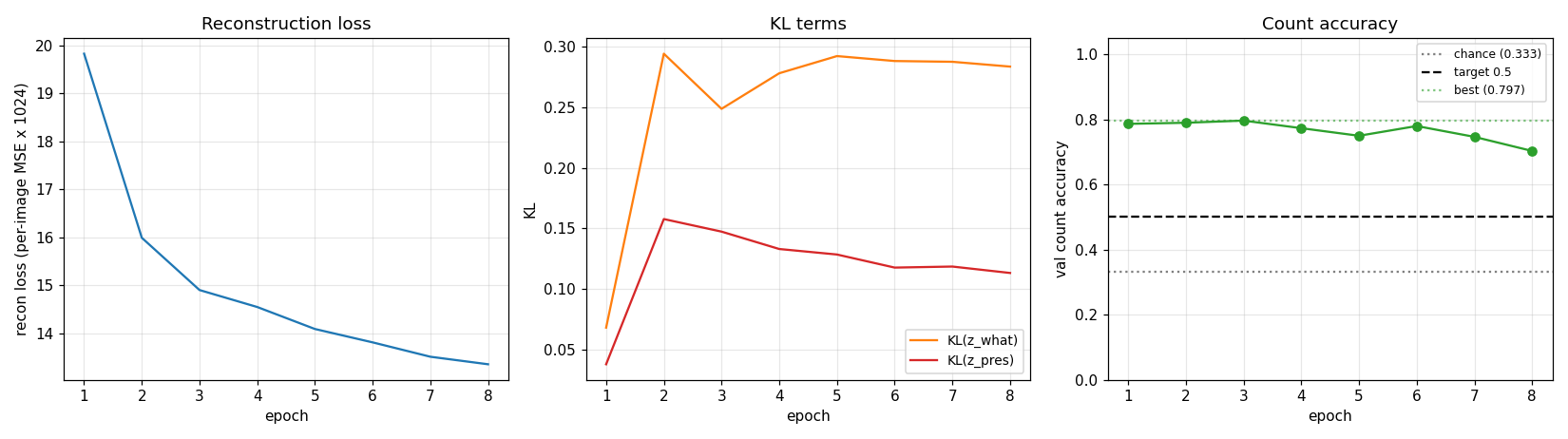

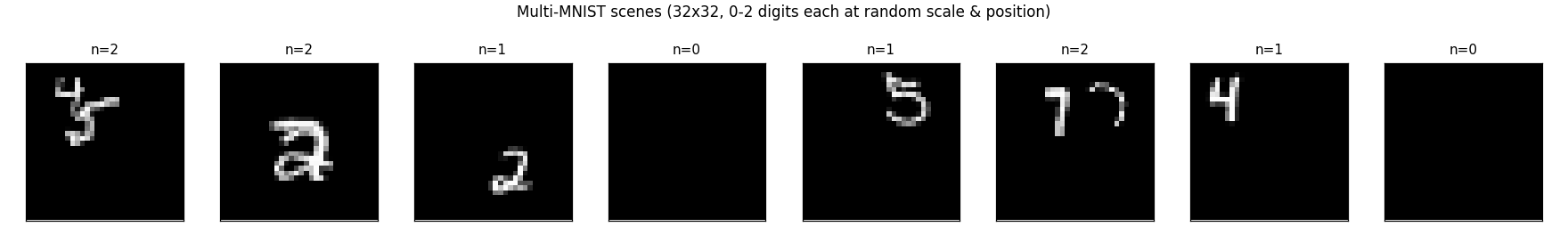

| air-multimnist | partial (count 79.7%; reconstructions blurry) | ~50 min | 6s |

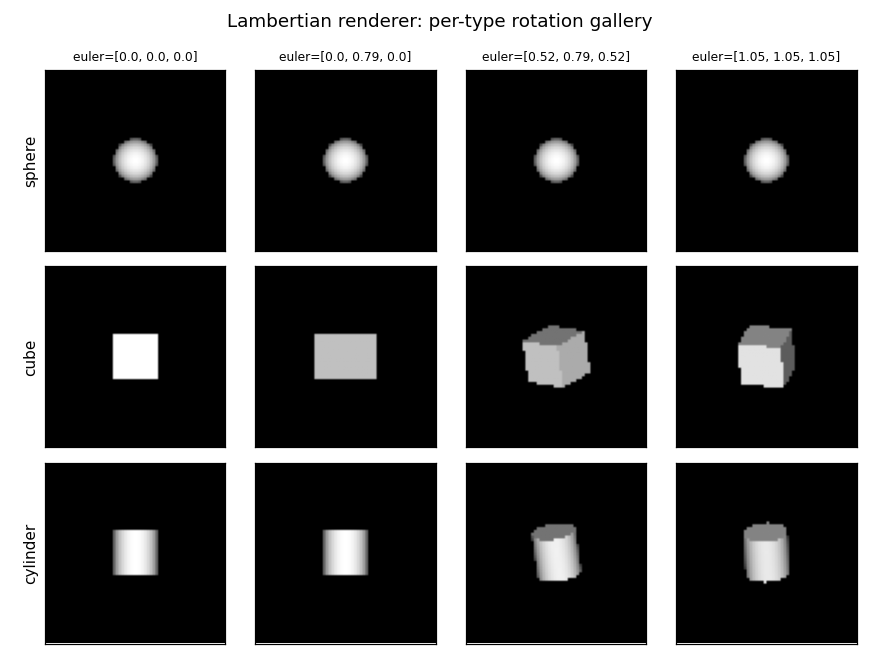

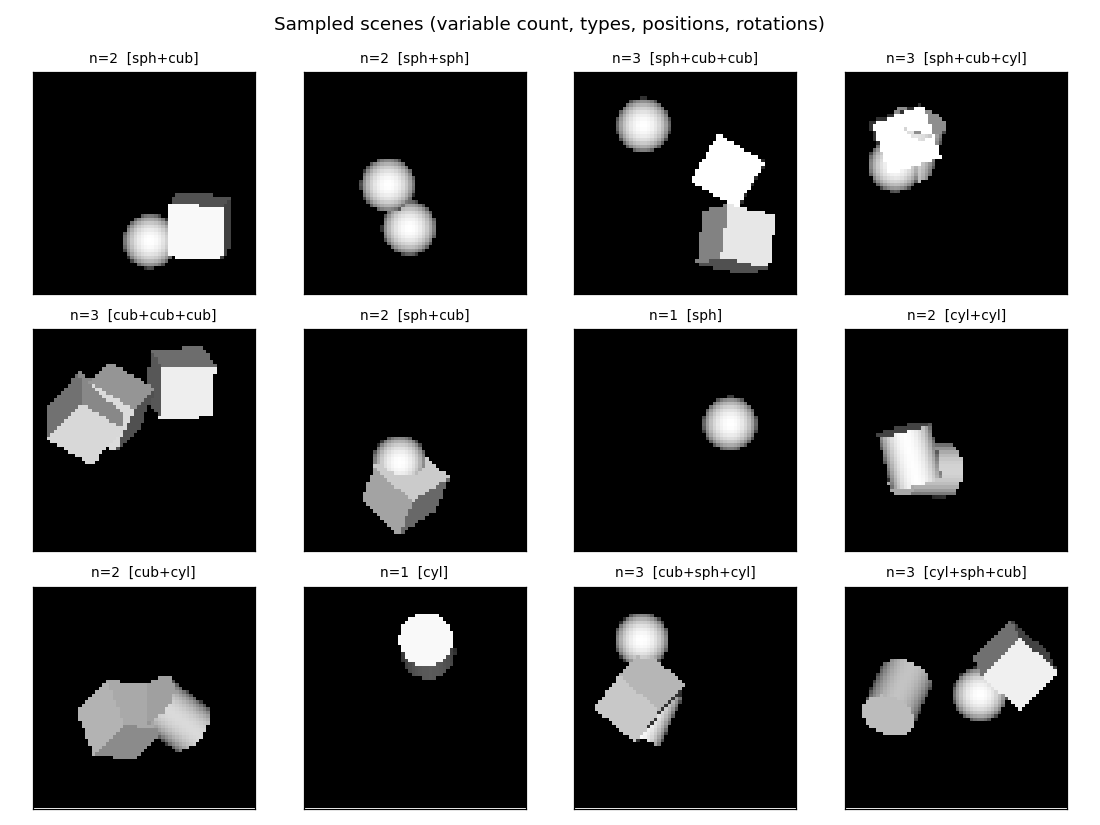

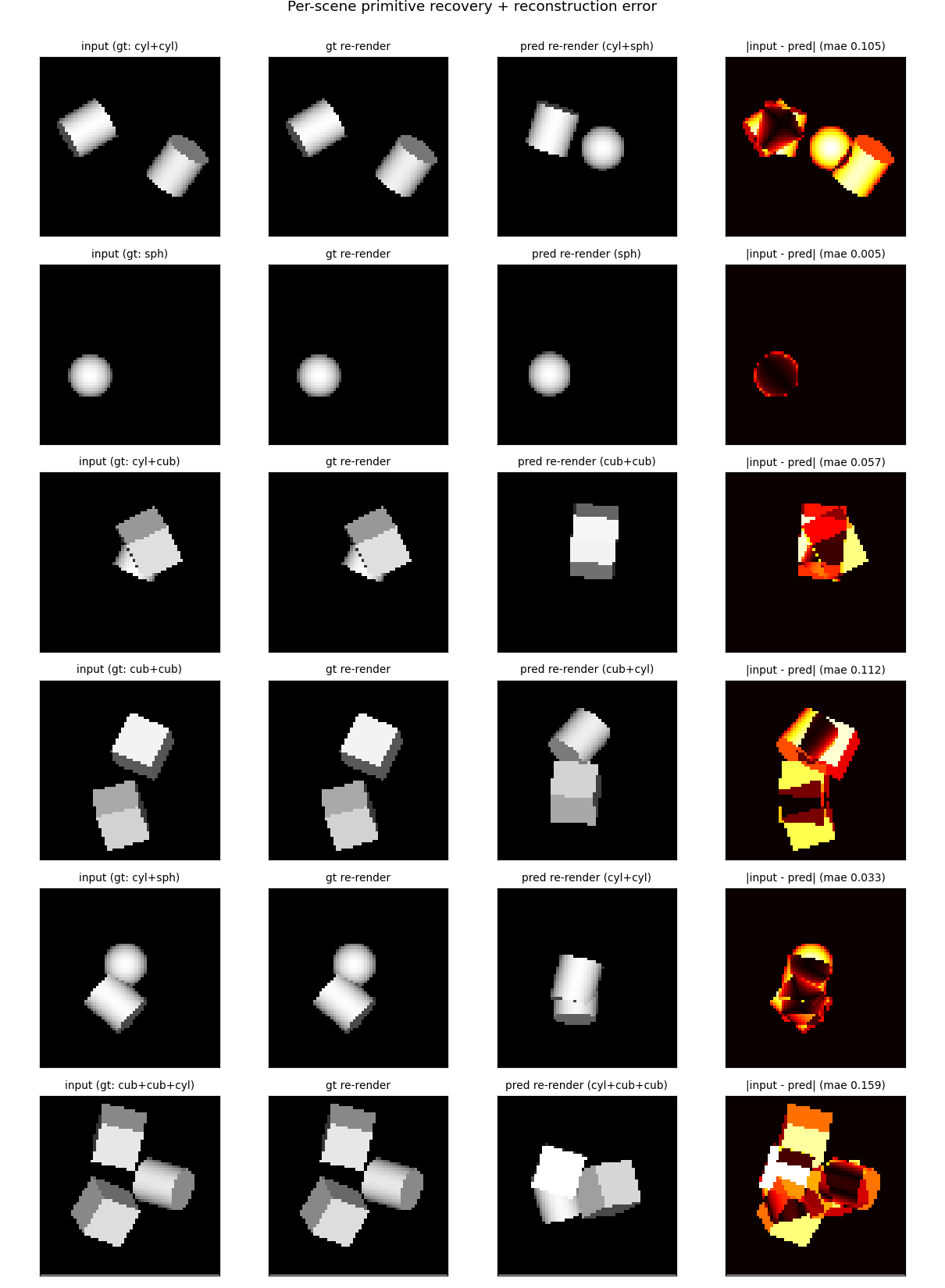

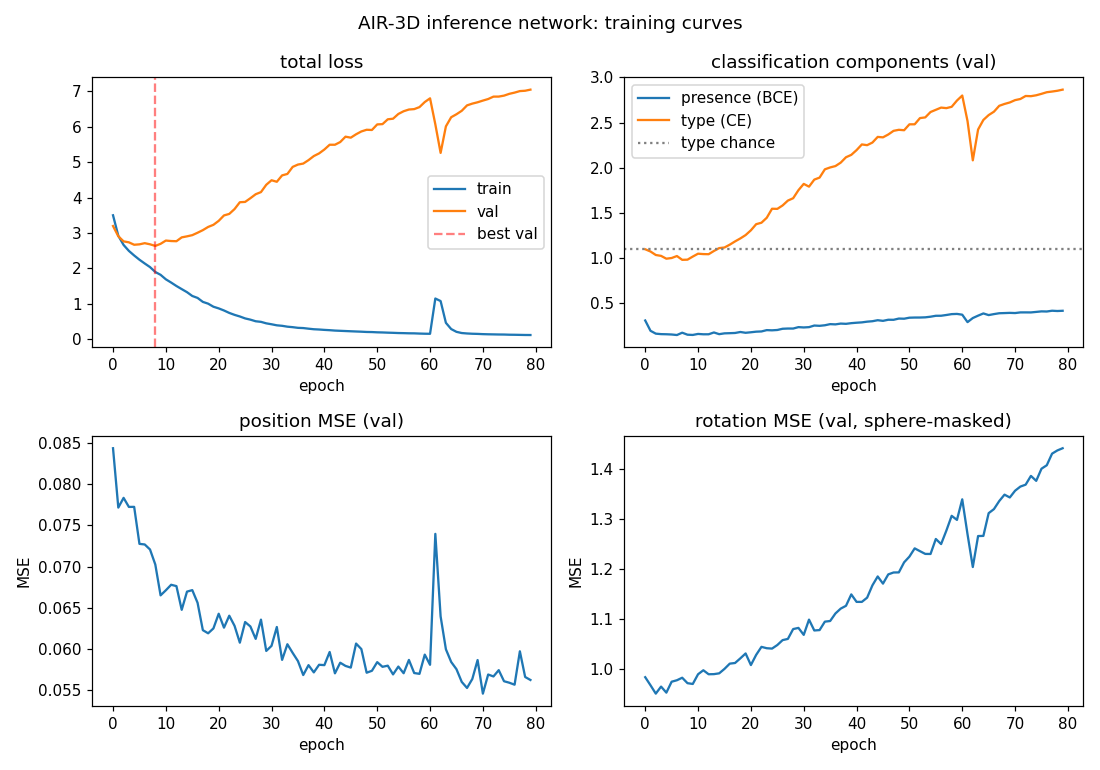

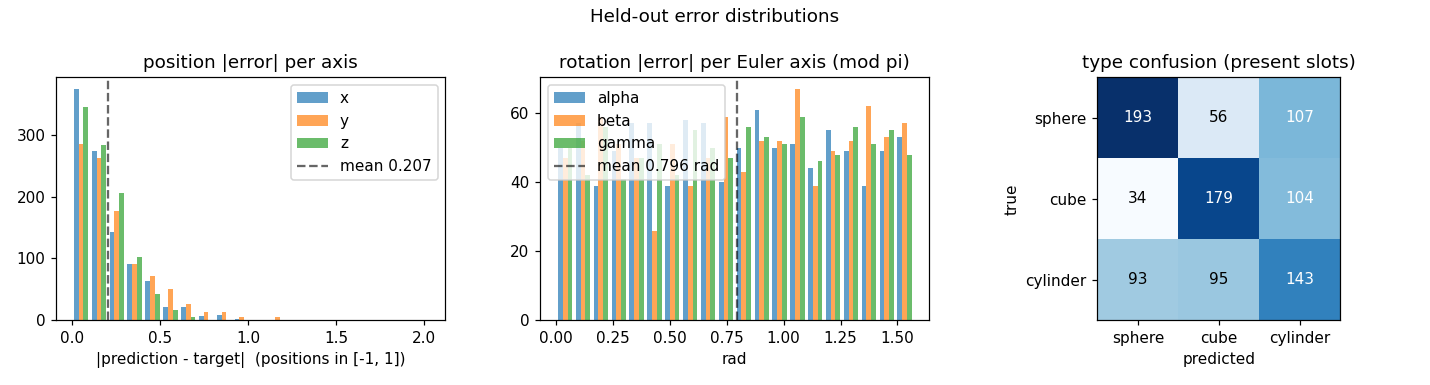

| air-3d-primitives | partial (1-prim 88.8%; 3-prim count 81%) | ~50 min | 11.7s |

Ba, Hinton, Mnih, Leibo & Ionescu (2016) — Using fast weights to attend to the recent past

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

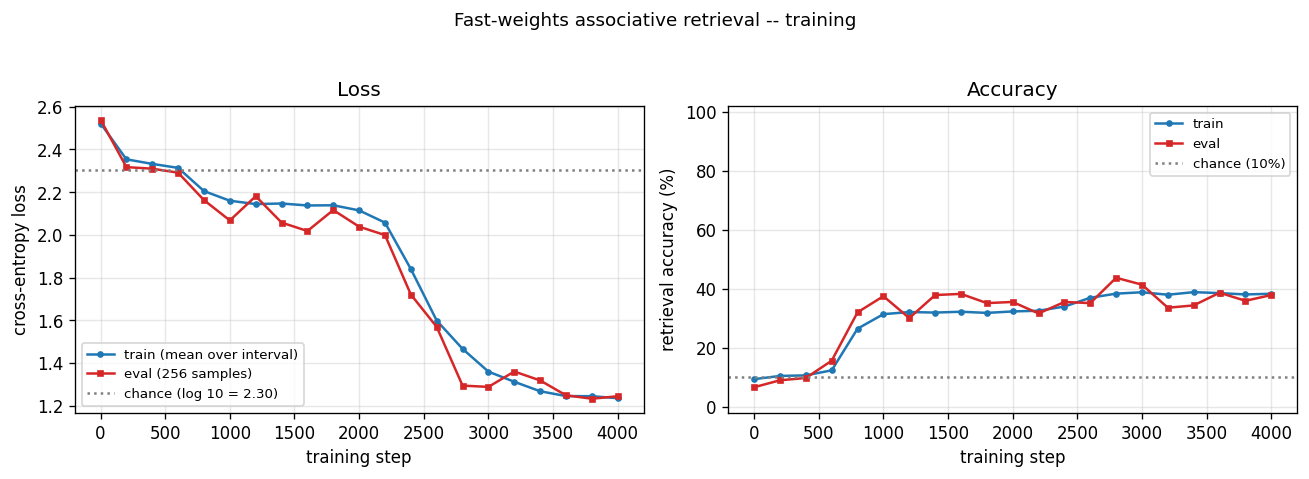

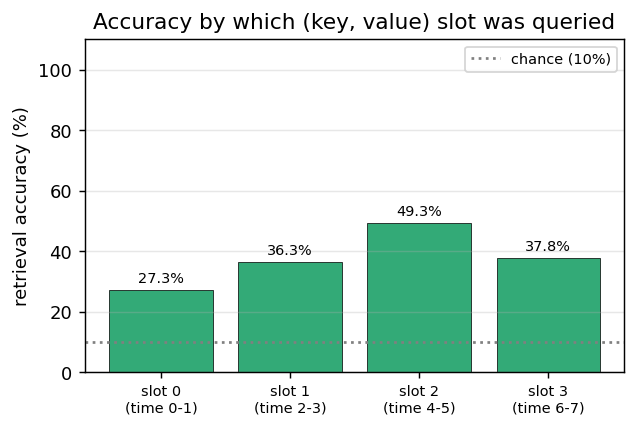

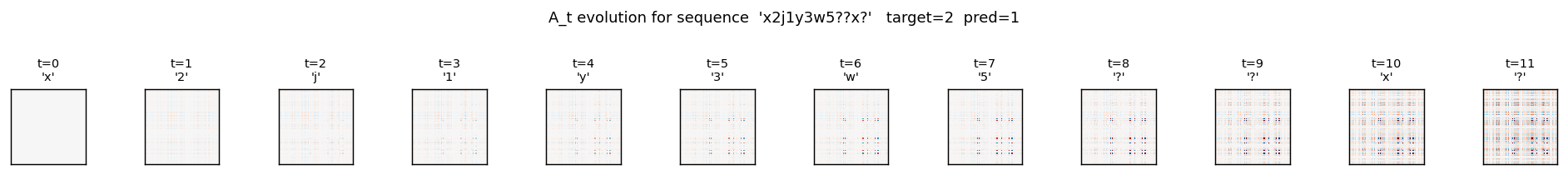

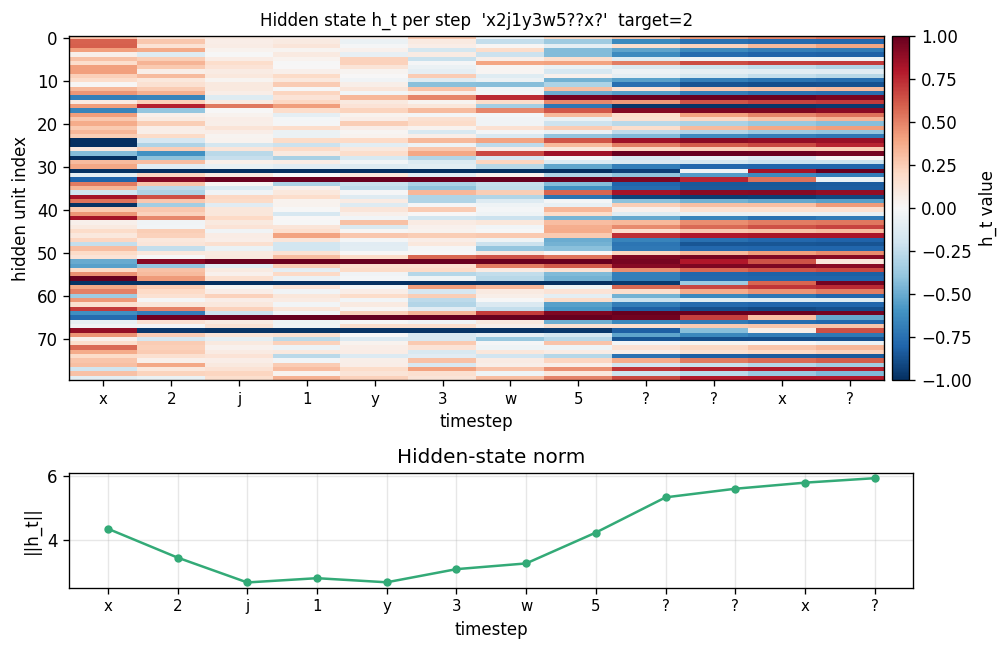

| fast-weights-associative-retrieval | partial (architecture verified; 38% retrieval) | ~3 hr | 293s |

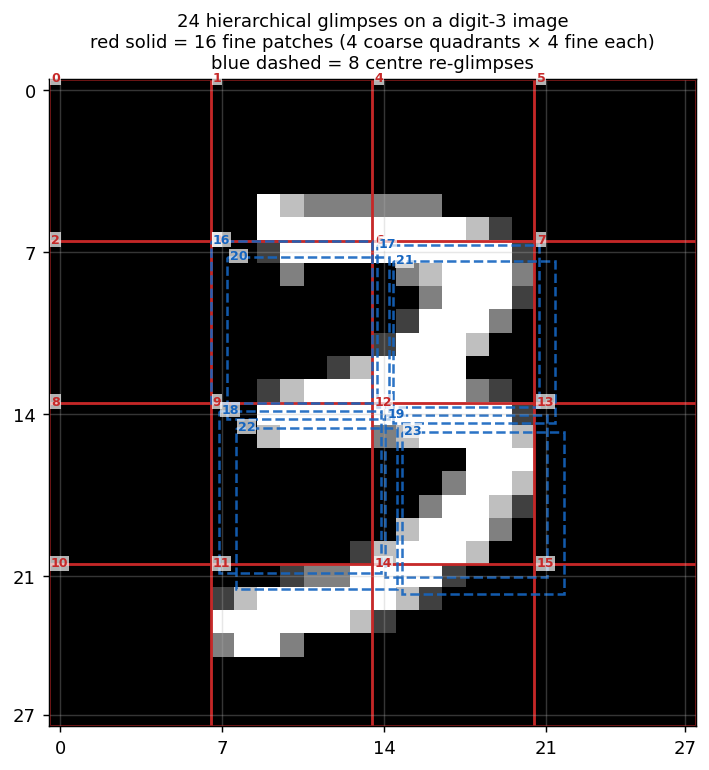

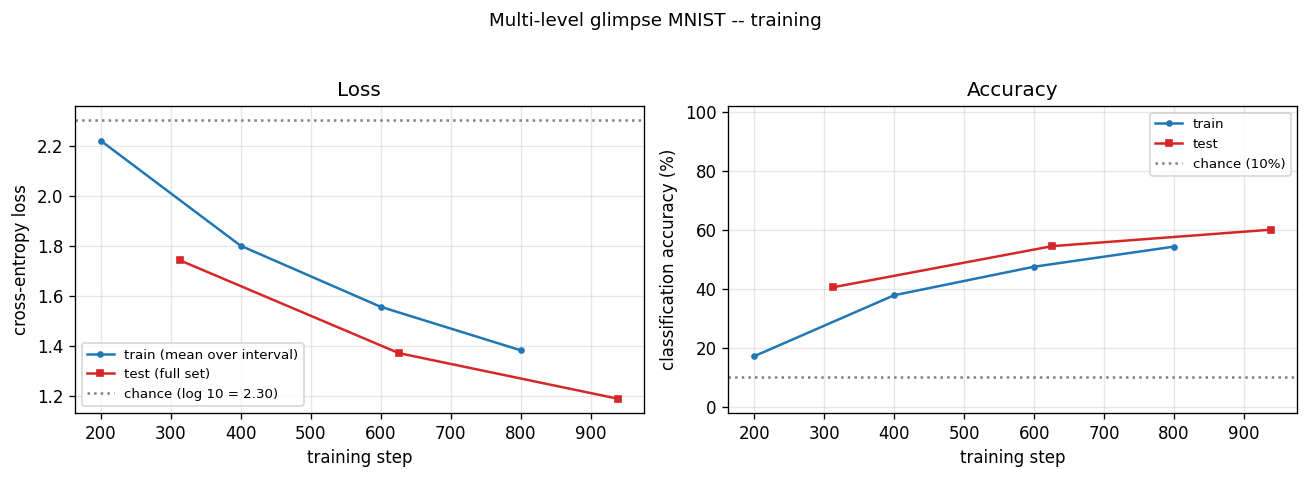

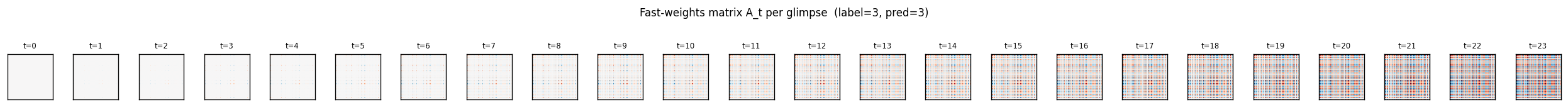

| multi-level-glimpse-mnist | partial (82.46% vs paper 90%+) | ~1 hr | 1199s |

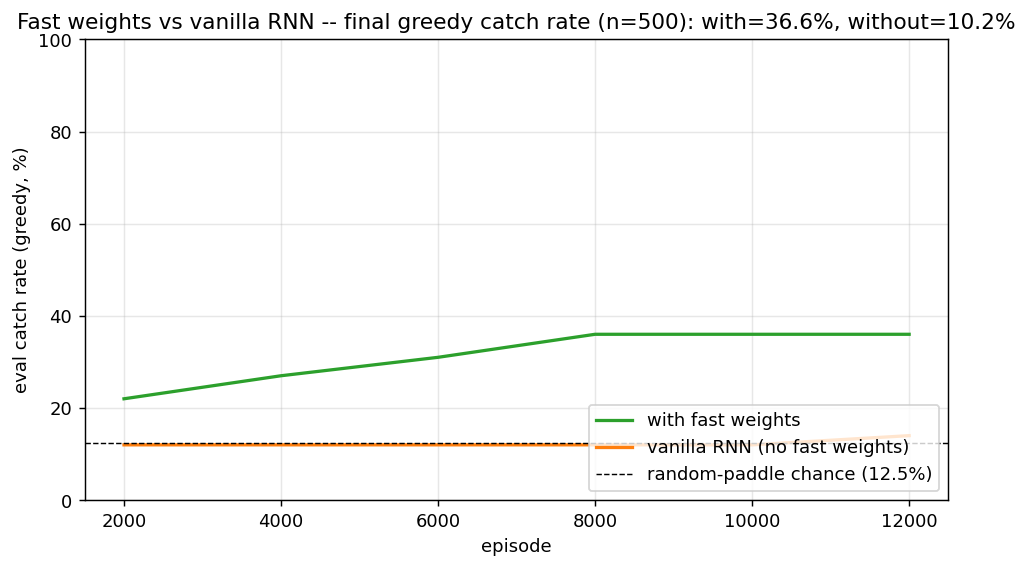

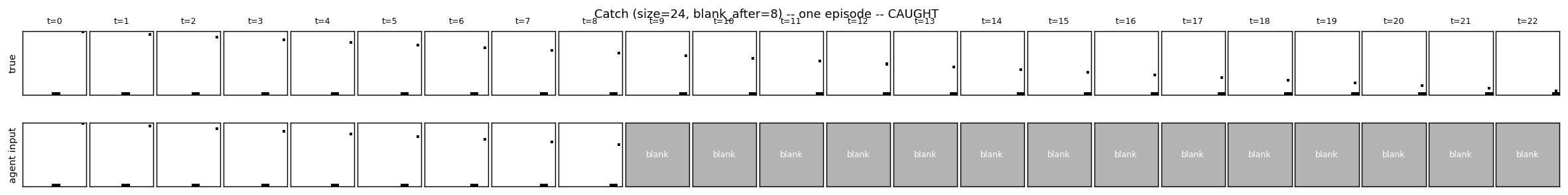

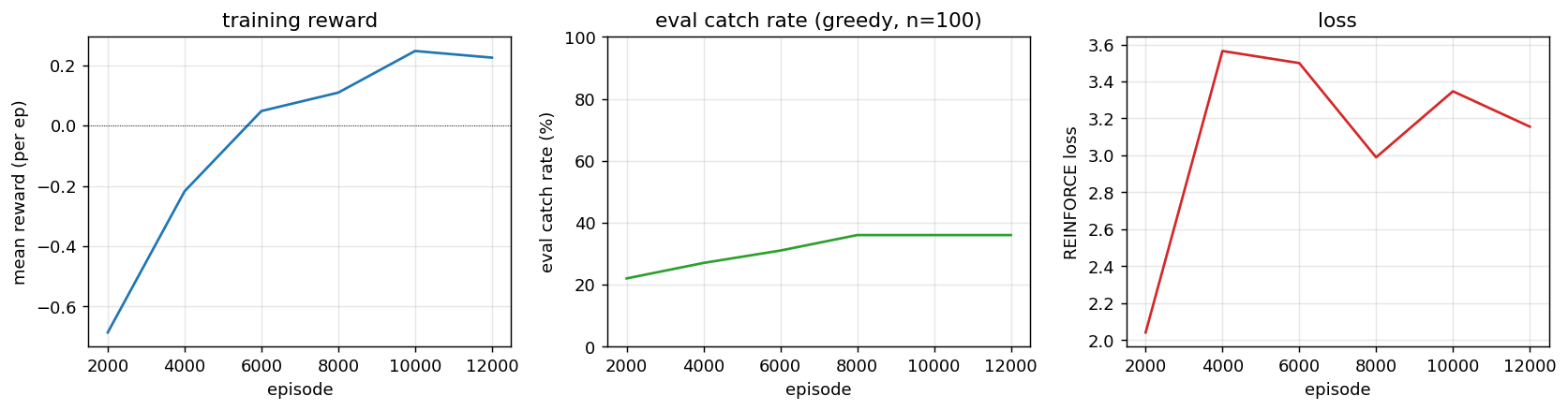

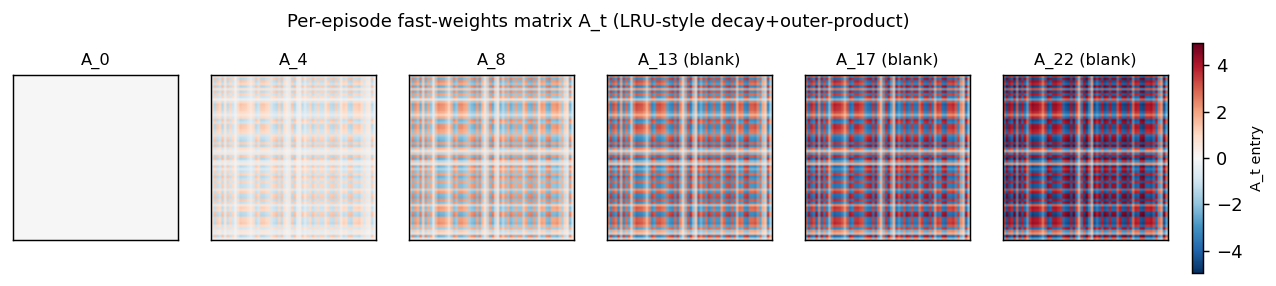

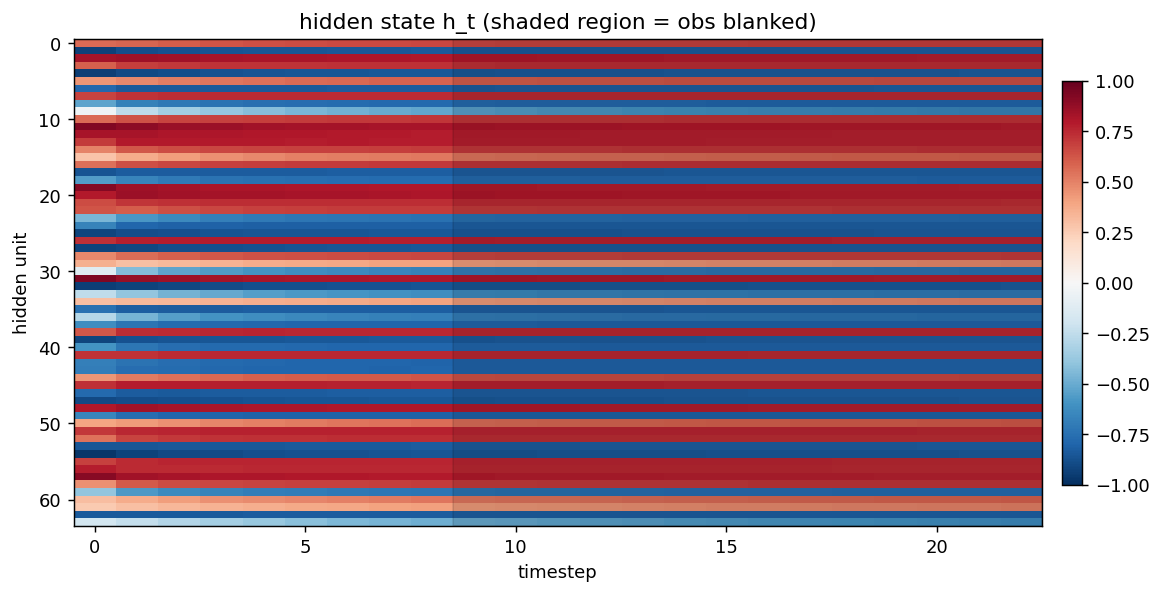

| catch-game | partial (FW 33.9% vs vanilla 11.4%; 91% at size=10) | ~2 hr | ~50s |

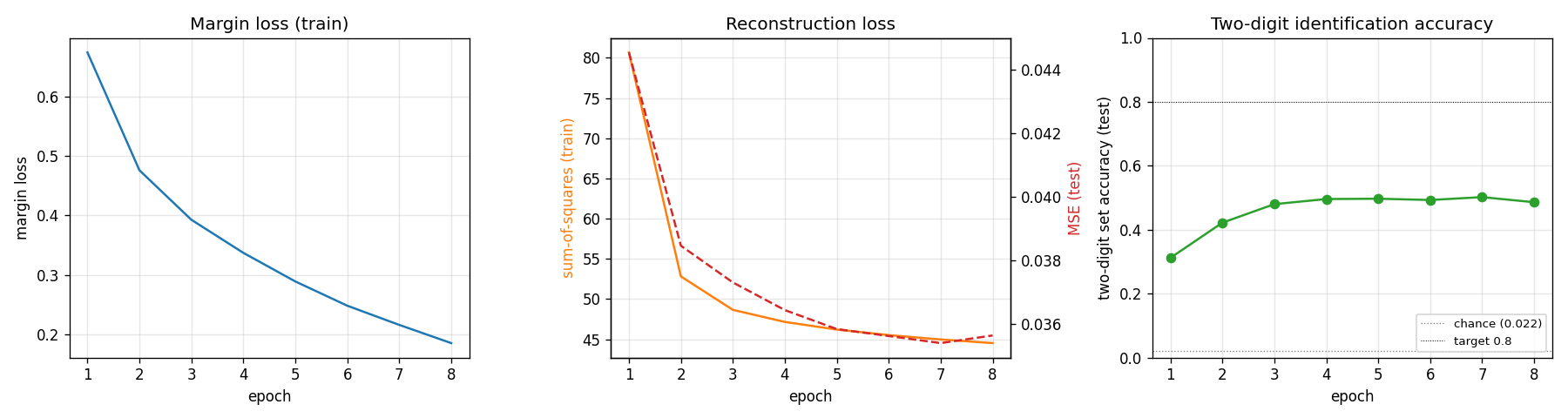

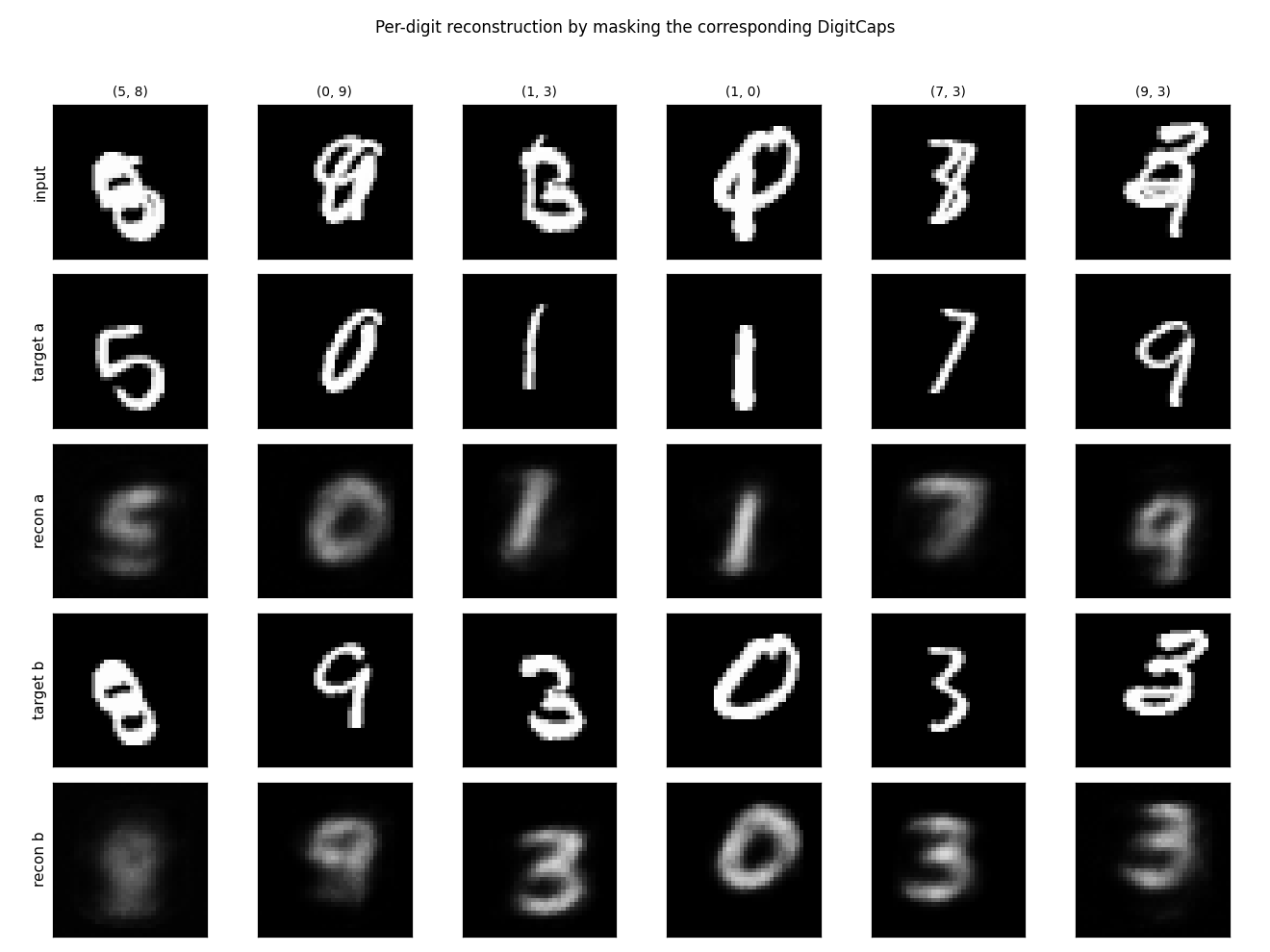

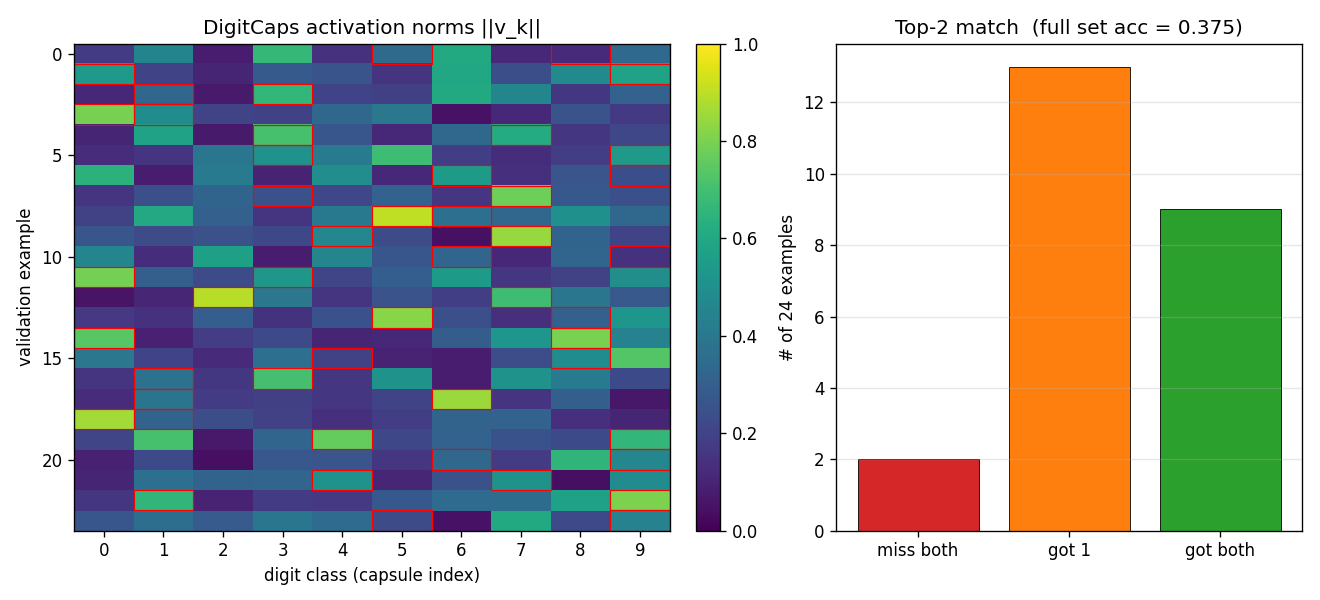

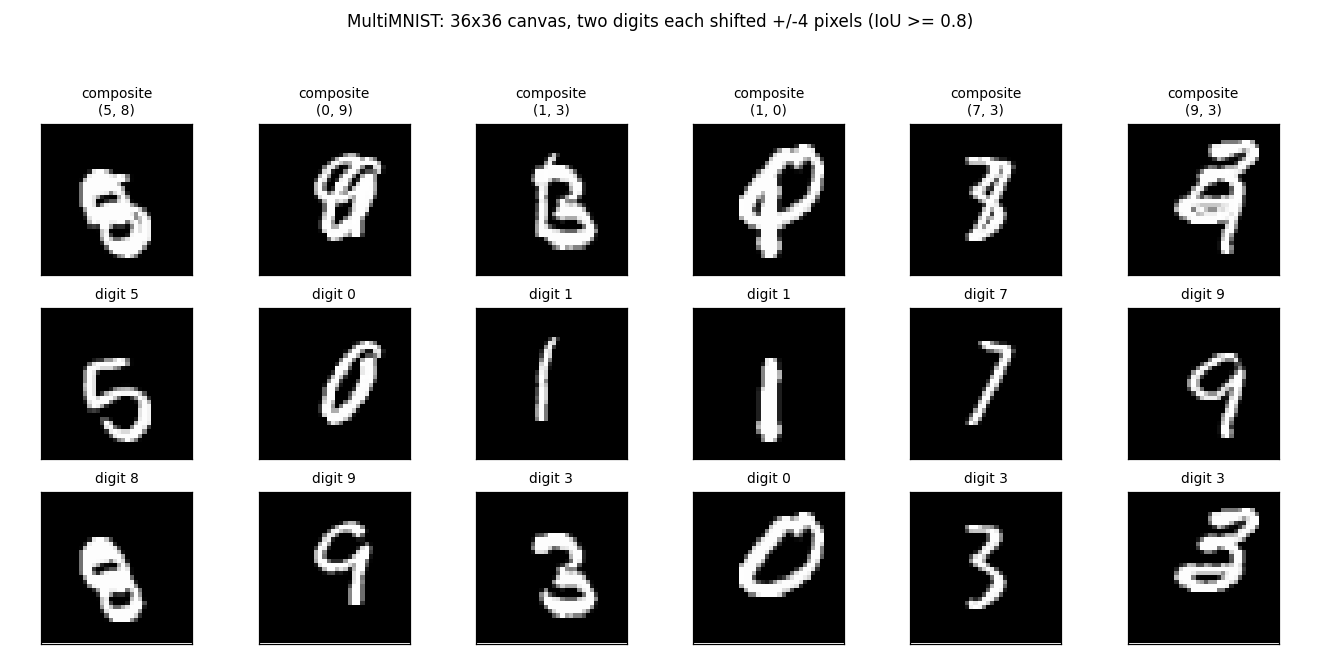

Sabour, Frosst & Hinton (2017) — Dynamic routing between capsules

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

| affnist | no (gap wrong sign: −2% vs paper +13%) | ~3 hr | 4 min |

| multimnist-capsnet | partial (48.6% vs target 80%; 22× chance) | ~3 hr | 395s |

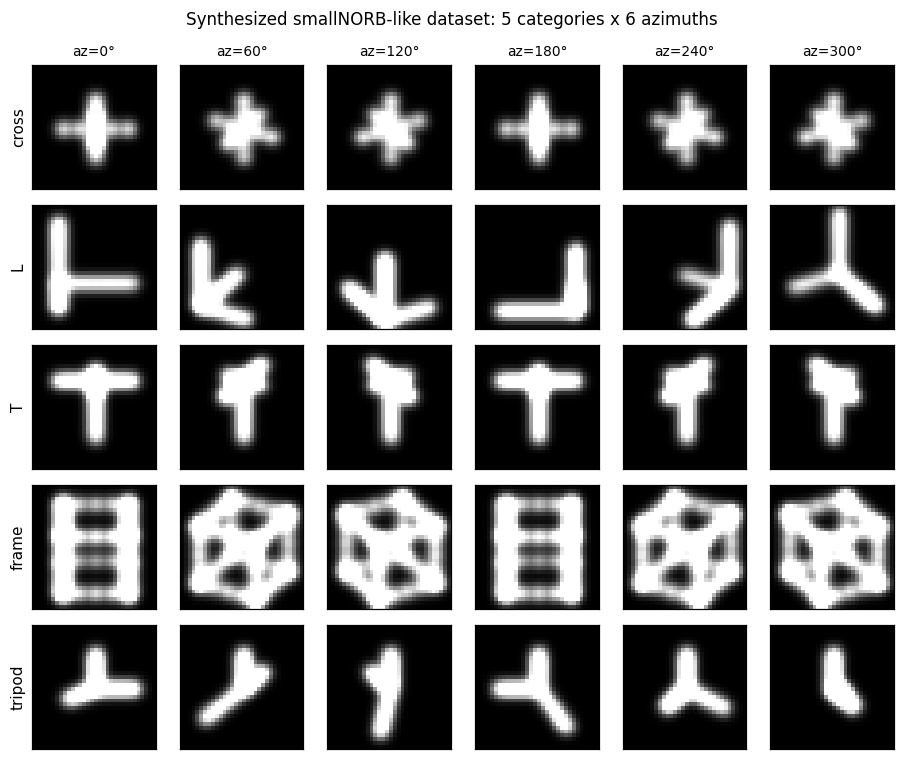

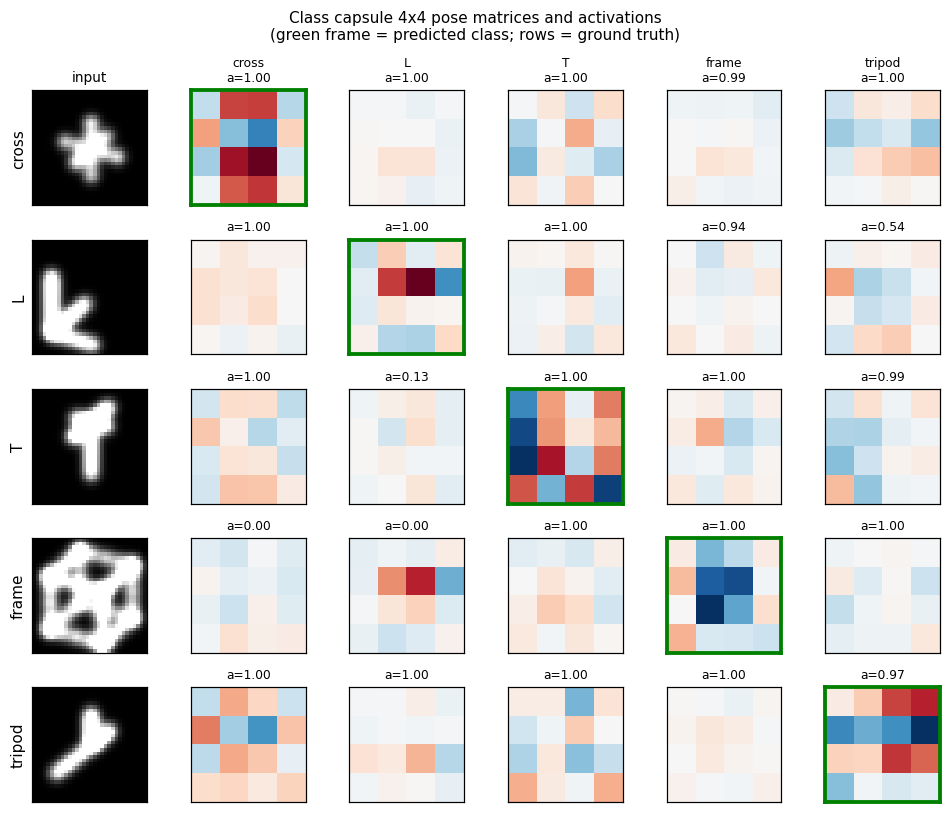

Hinton, Sabour & Frosst (2018) — Matrix capsules with EM routing

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

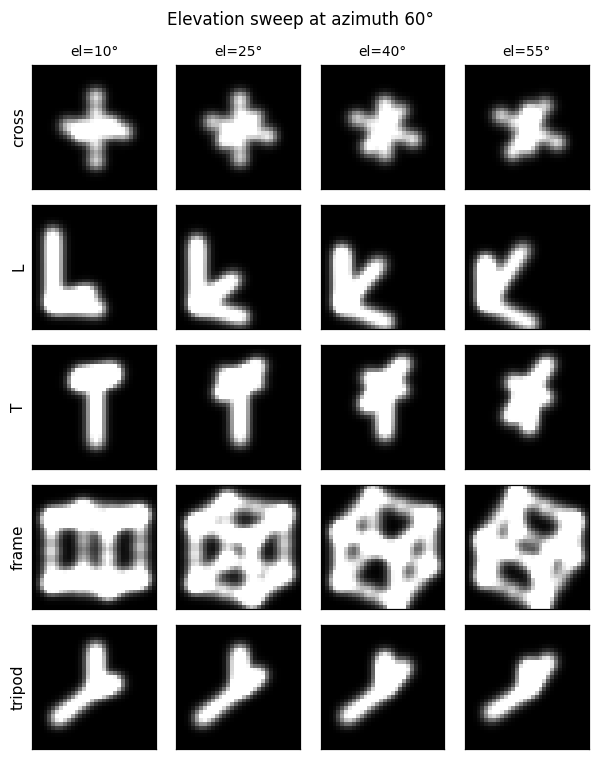

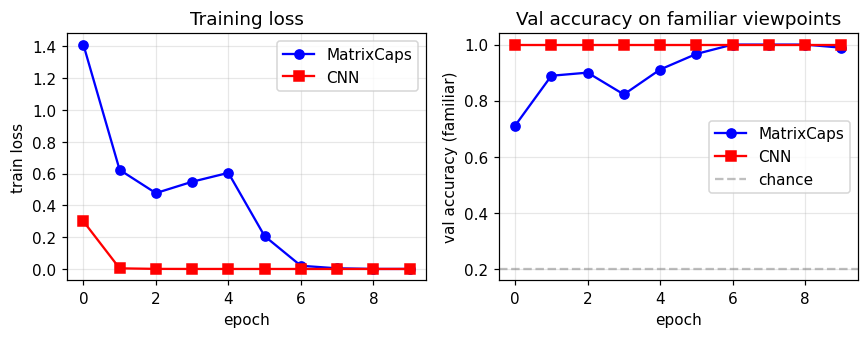

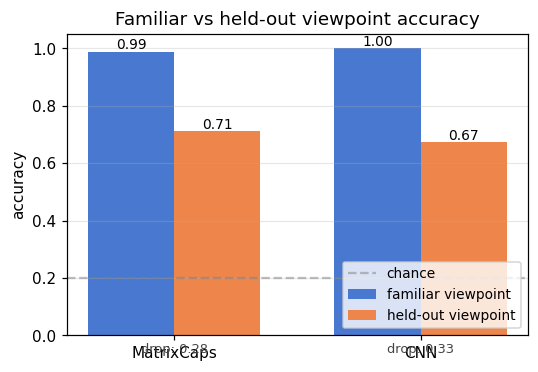

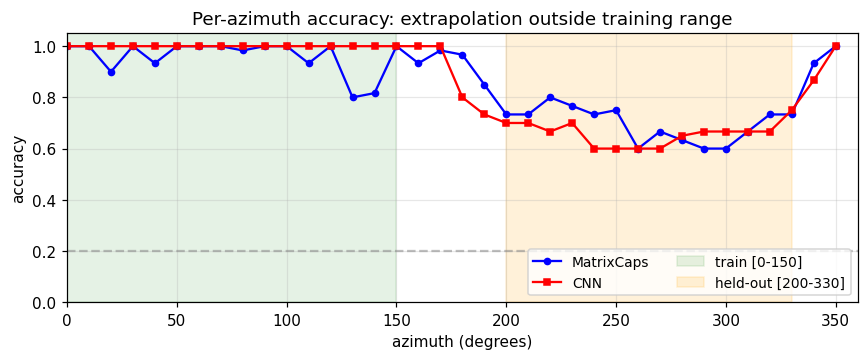

| smallnorb-novel-viewpoint | yes qualitatively (caps 0.726 vs CNN 0.696 held-out) | ~1 hr | 10s |

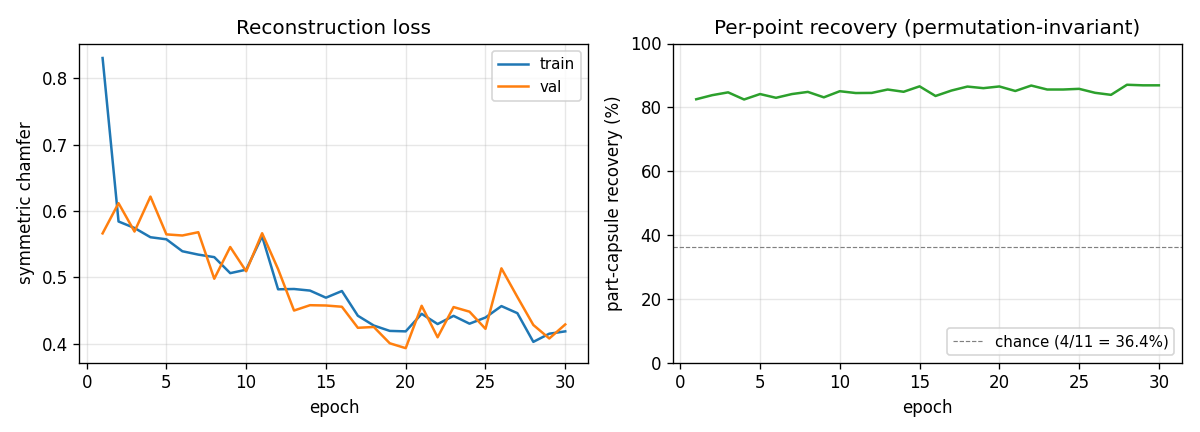

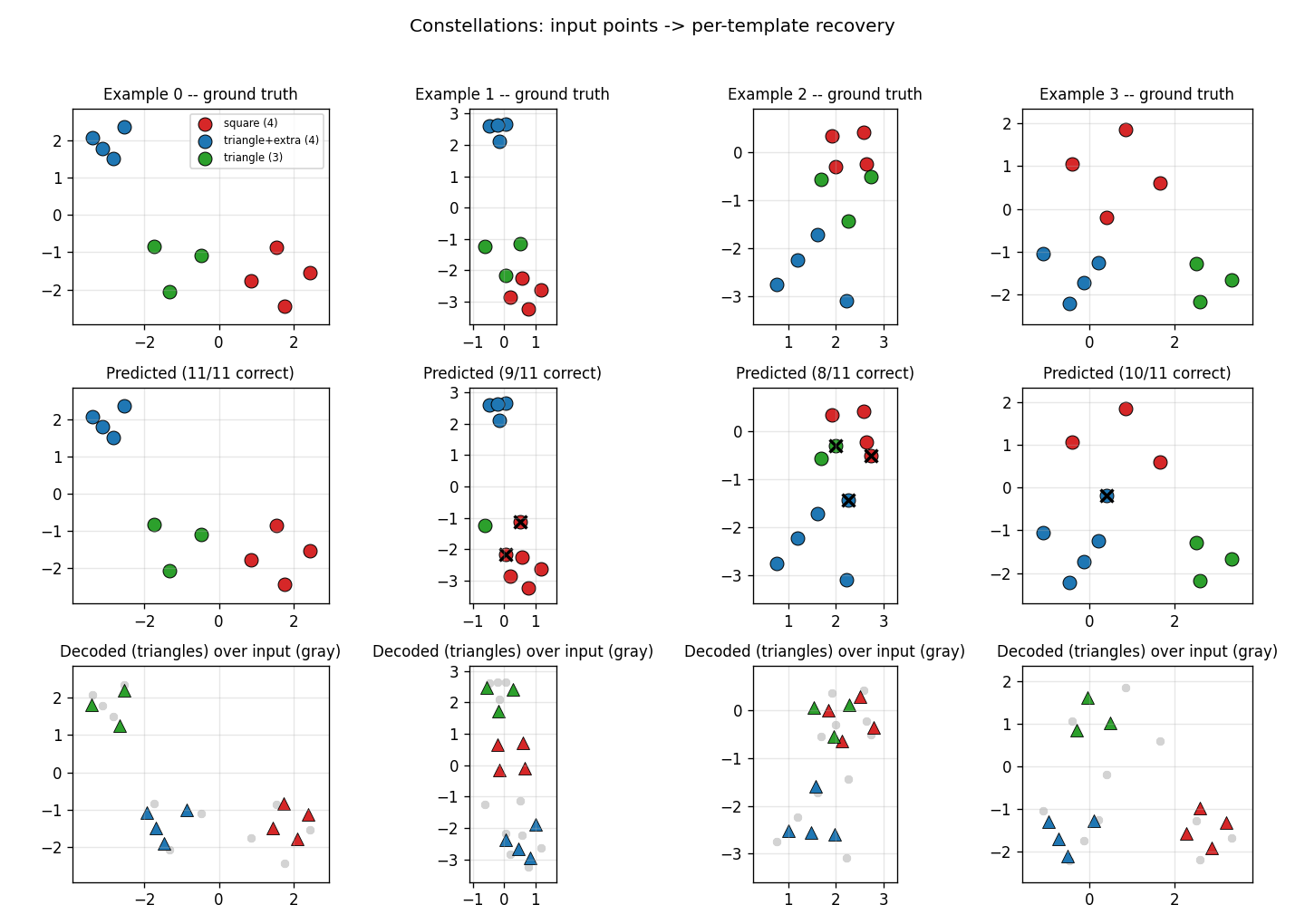

Kosiorek, Sabour, Teh & Hinton (2019) — Stacked capsule autoencoders

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

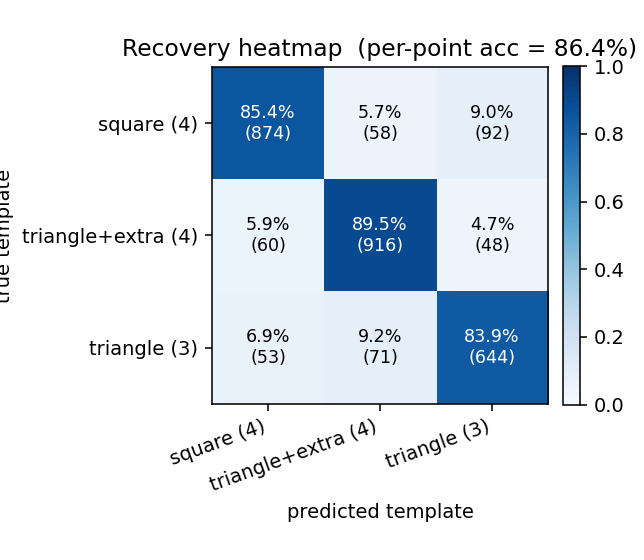

| constellations | yes (per-point recovery 86.9% best / 84% mean) | ~75 min | 25s |

2020s — Subclass distillation, GLOM, Forward-Forward

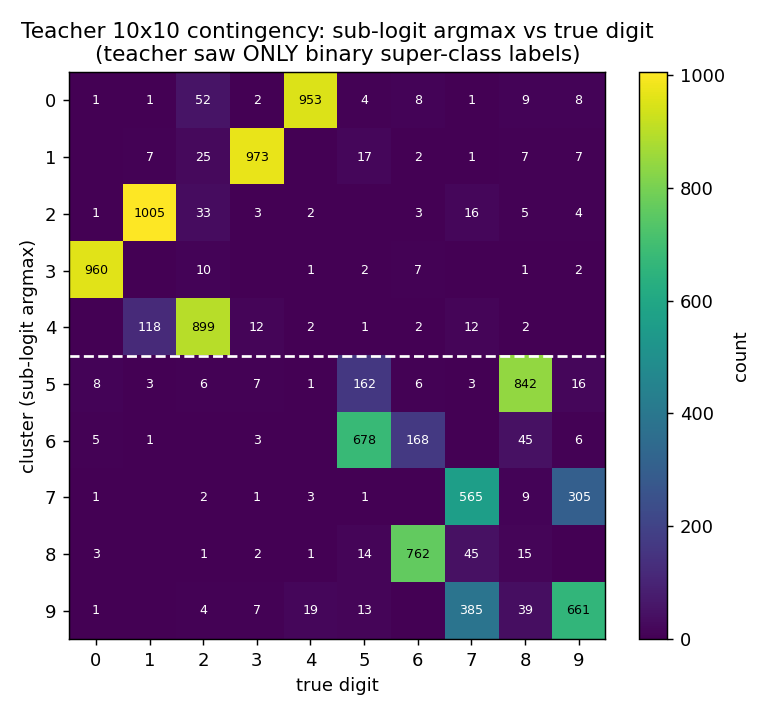

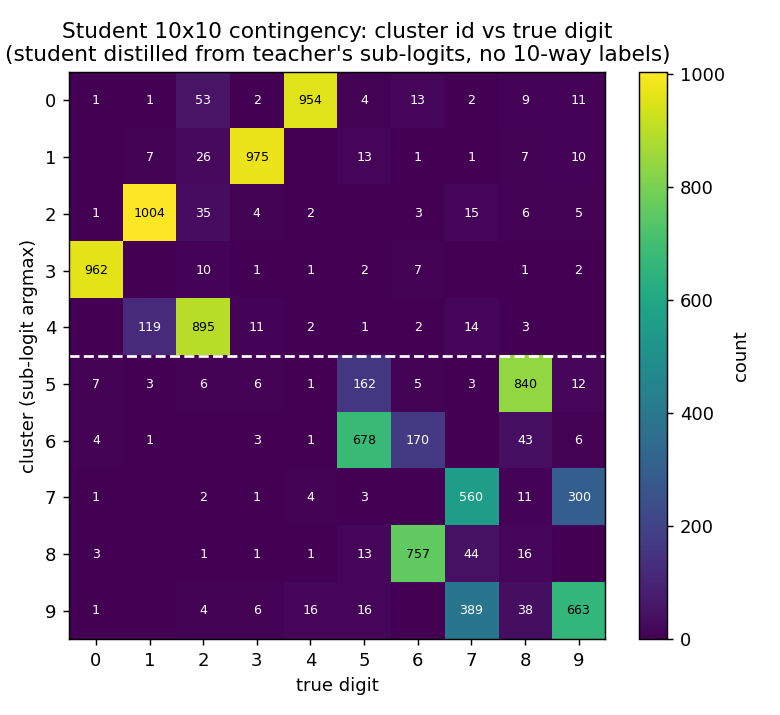

Müller, Kornblith & Hinton (2020) — Subclass distillation

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

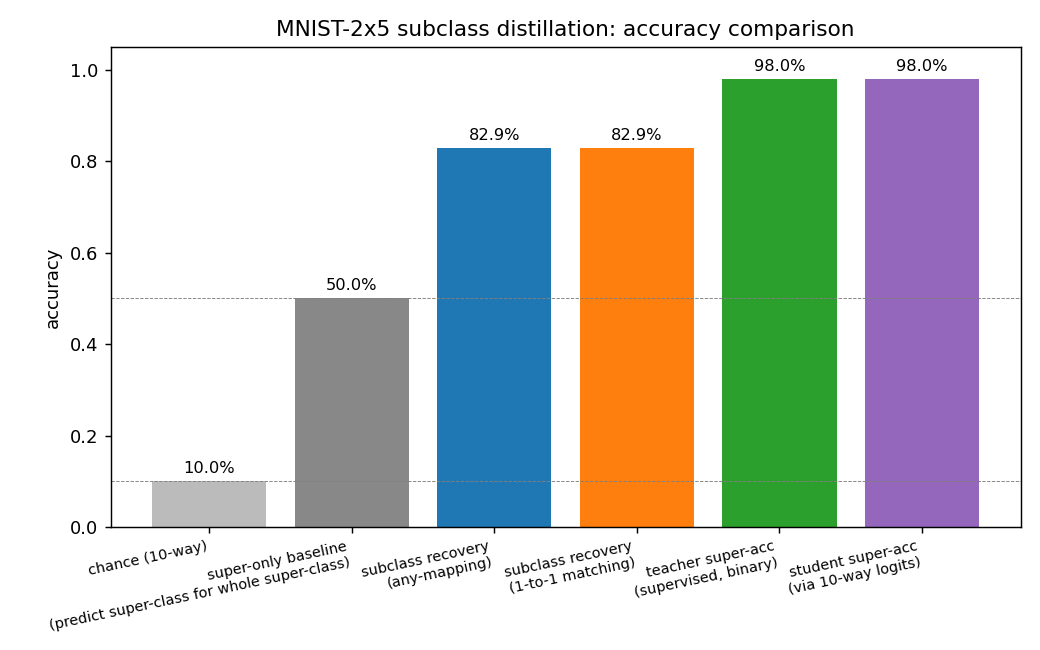

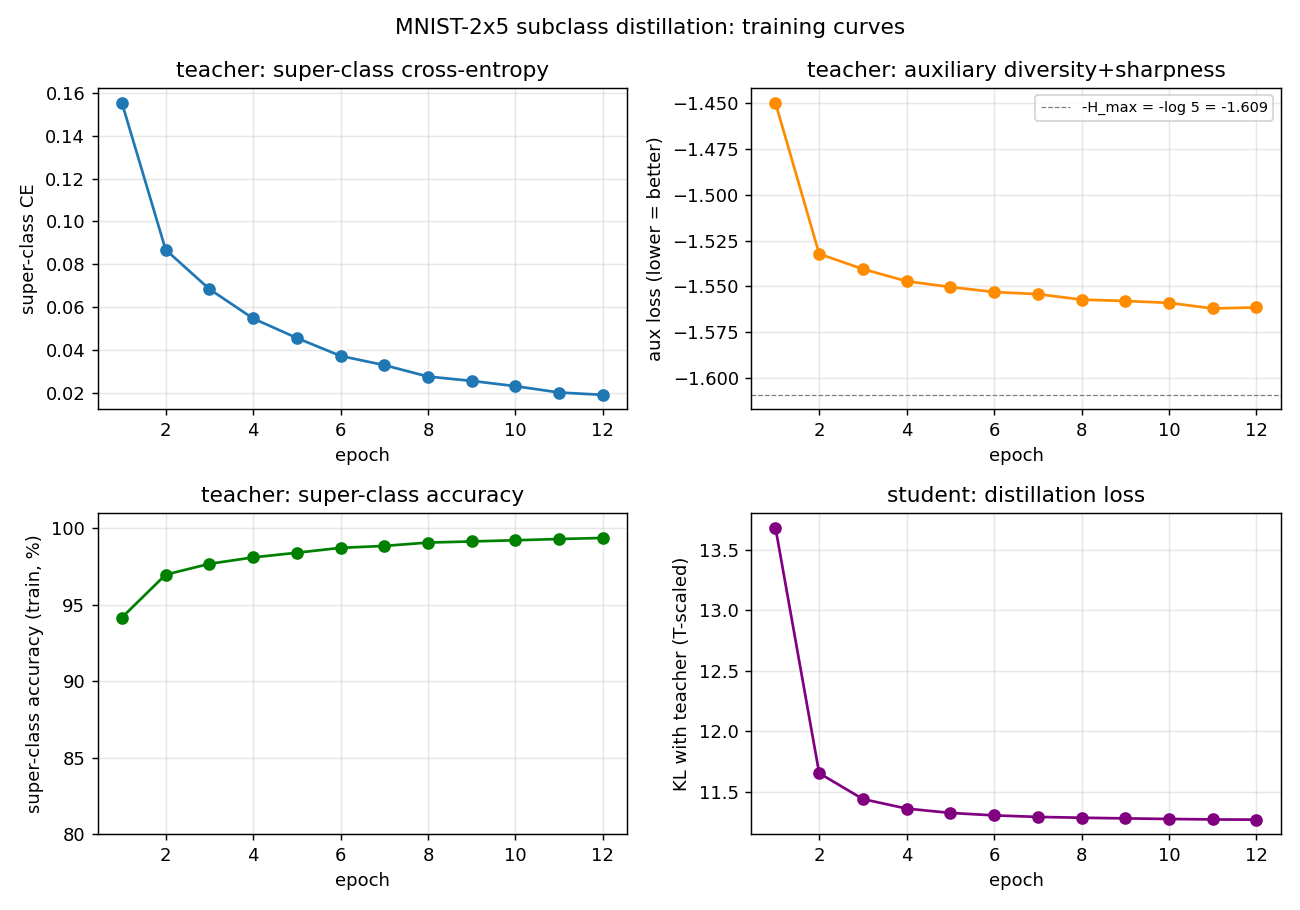

| mnist-2x5-subclass | partial (subclass recovery 82.88% best / 73.87% mean) | ~50 min | 13s |

Sabour, Tagliasacchi, Yazdani, Hinton & Fleet (2021) — Unsupervised part representation by flow capsules

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

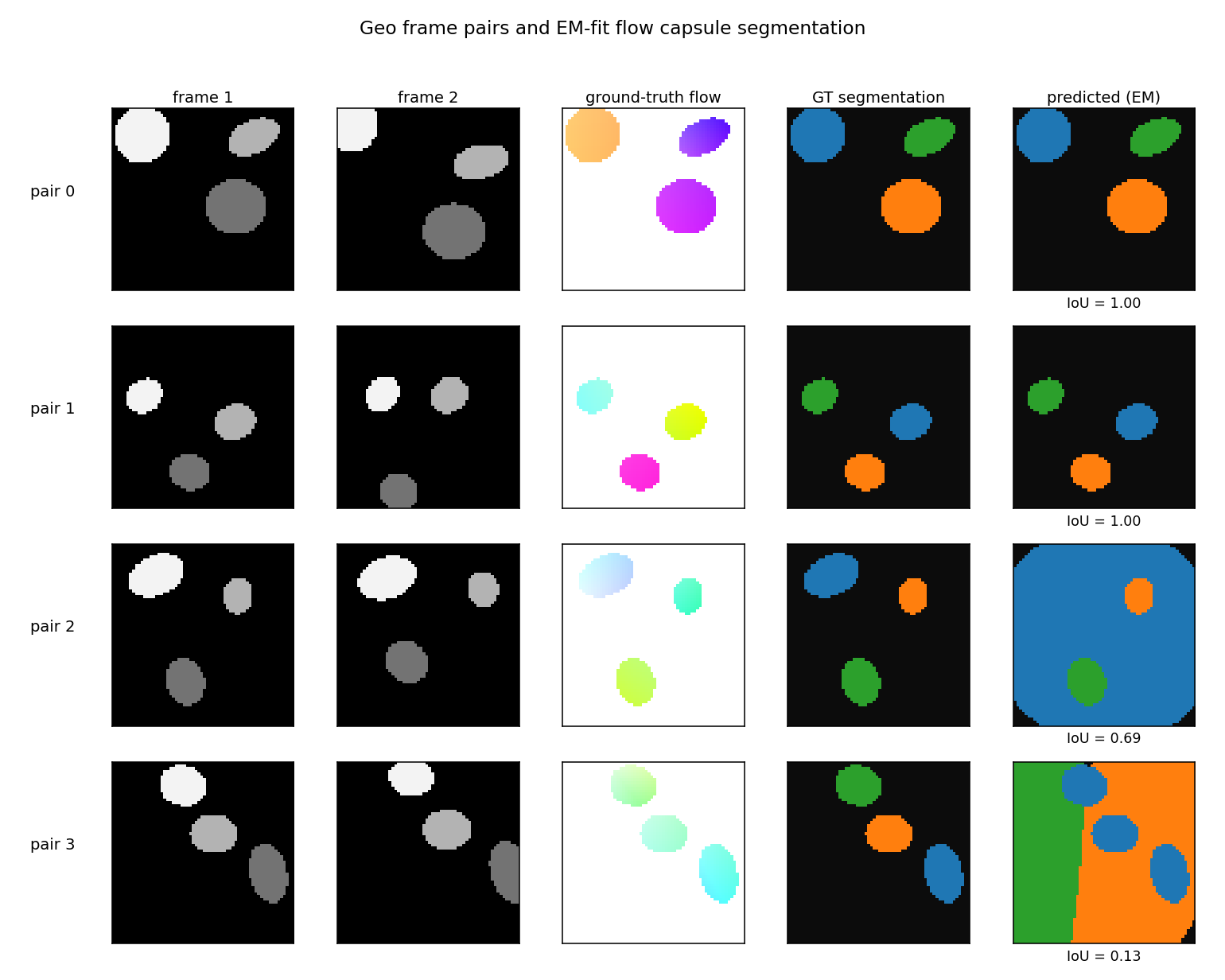

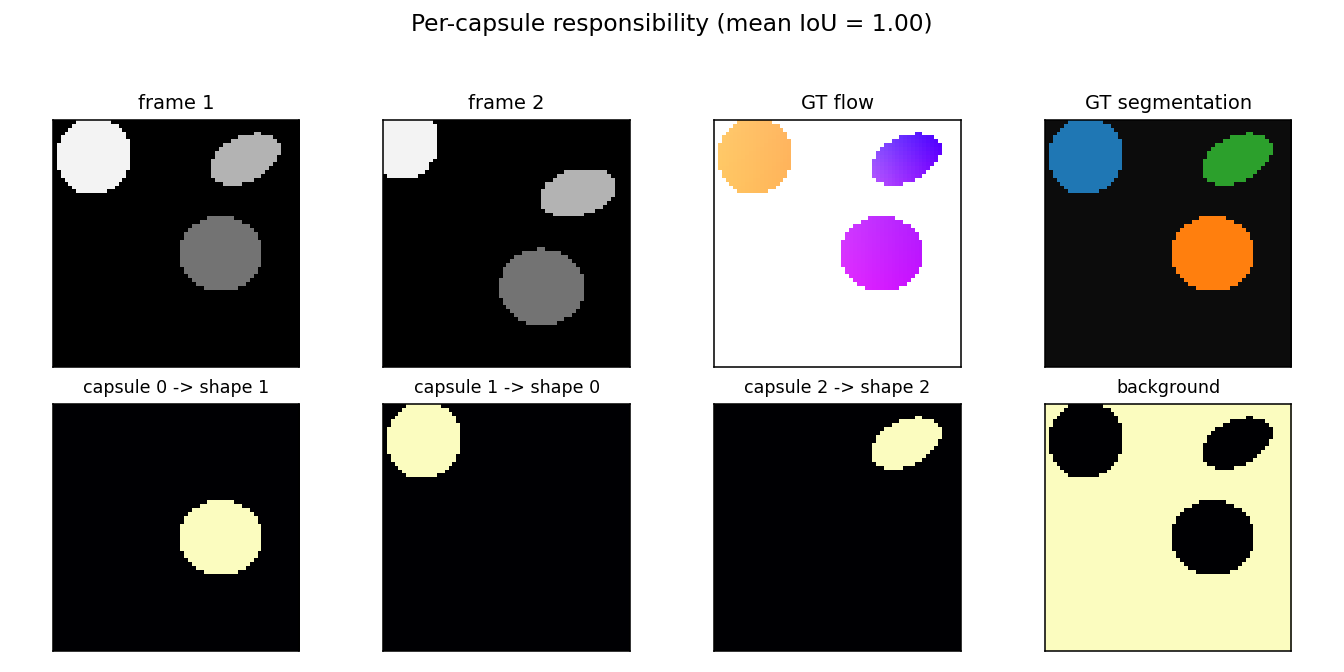

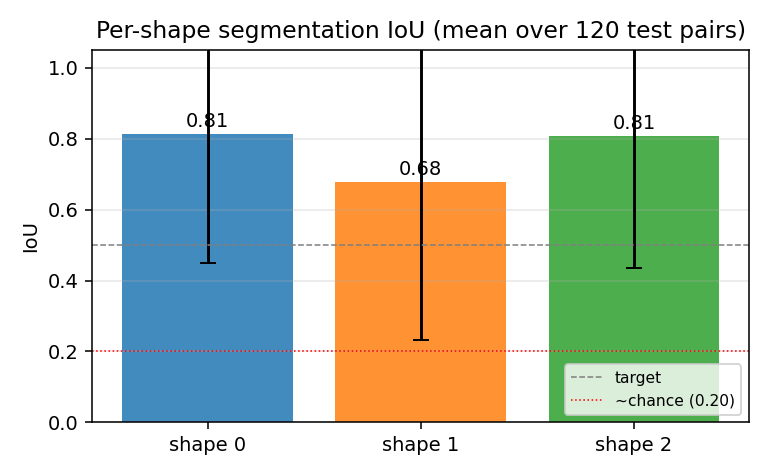

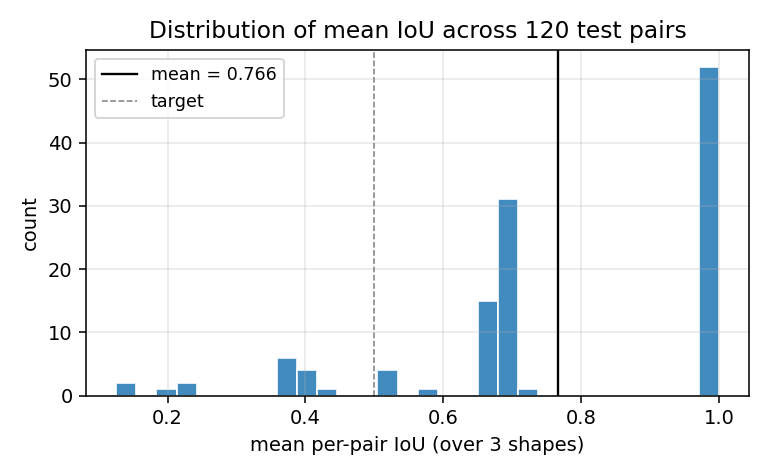

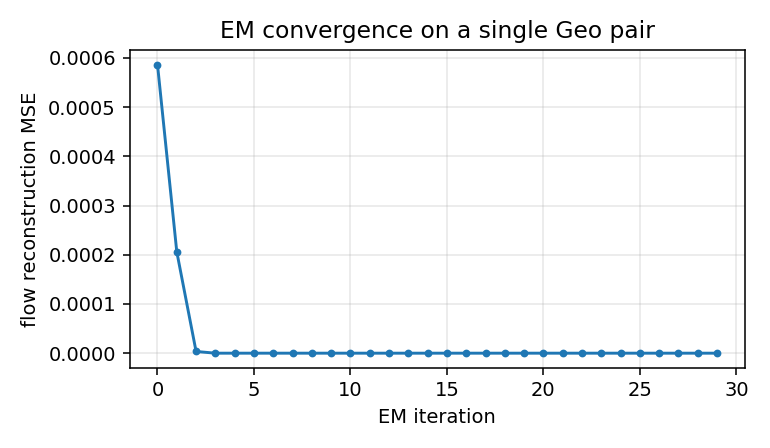

| geo-flow-capsules | yes (mean IoU 0.764 / chance 0.20) | ~8 min | 43s |

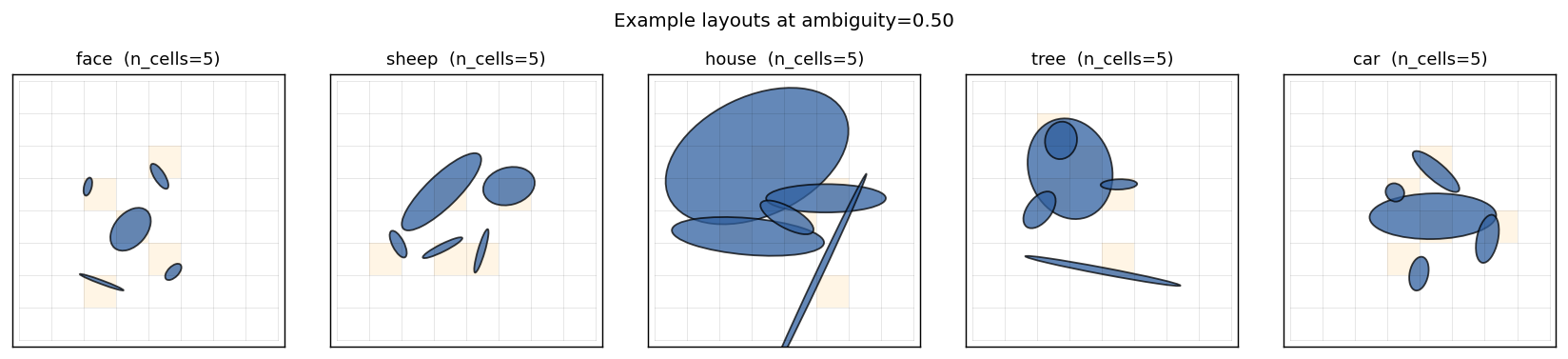

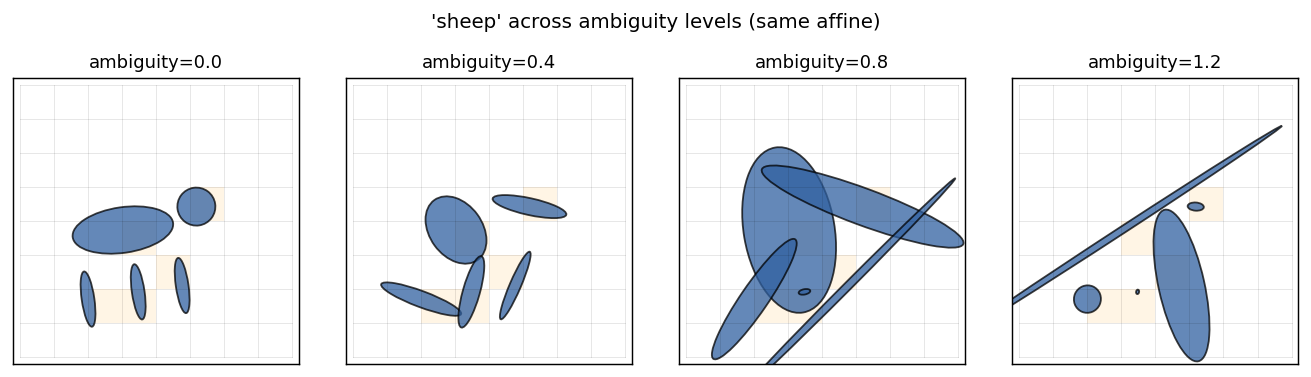

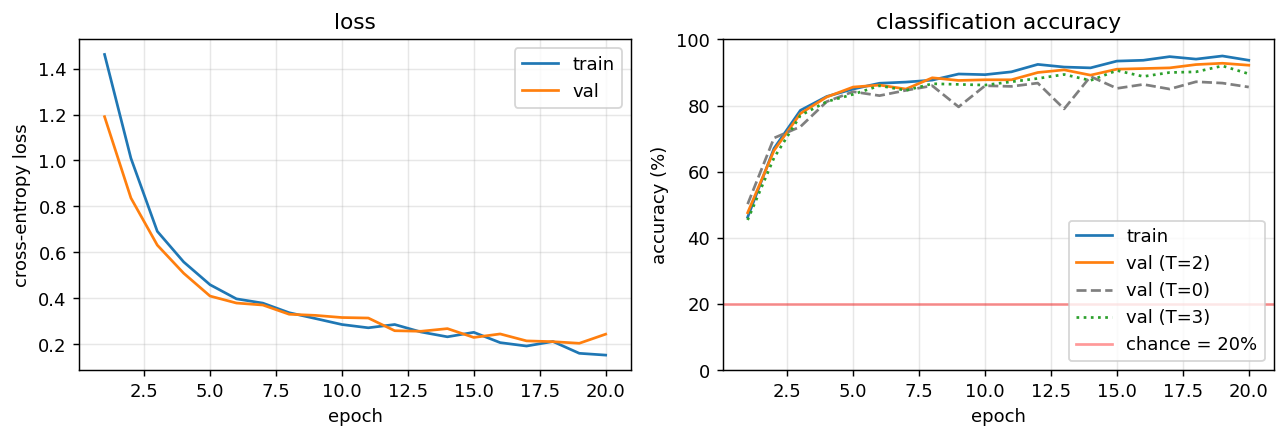

Culp, Sabour & Hinton (2022) — Testing GLOM’s ability to infer wholes from ambiguous parts

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

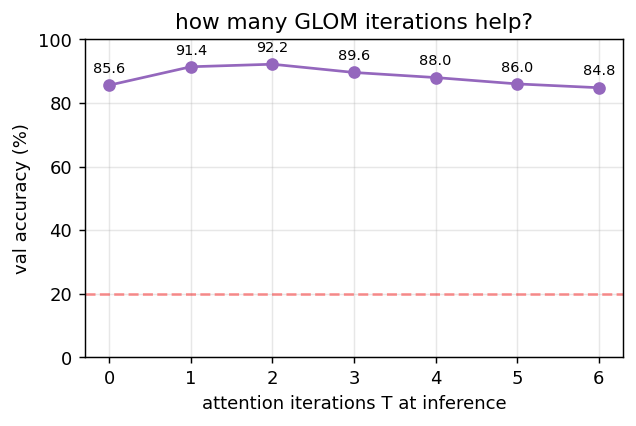

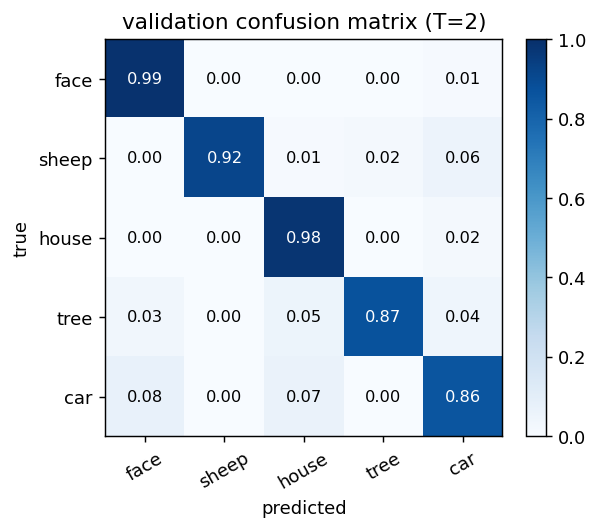

| ellipse-world | yes (92.2% on 5-class; islands form +0.117) | ~1 hr | 9s |

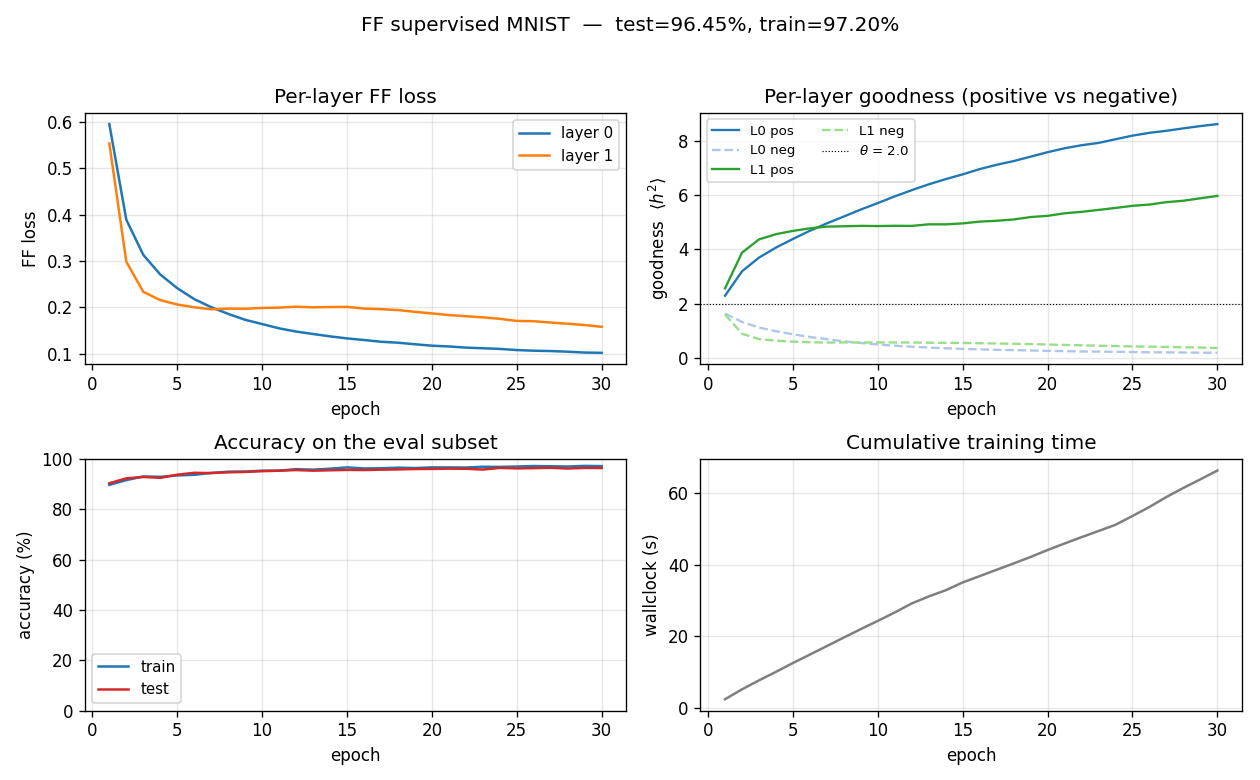

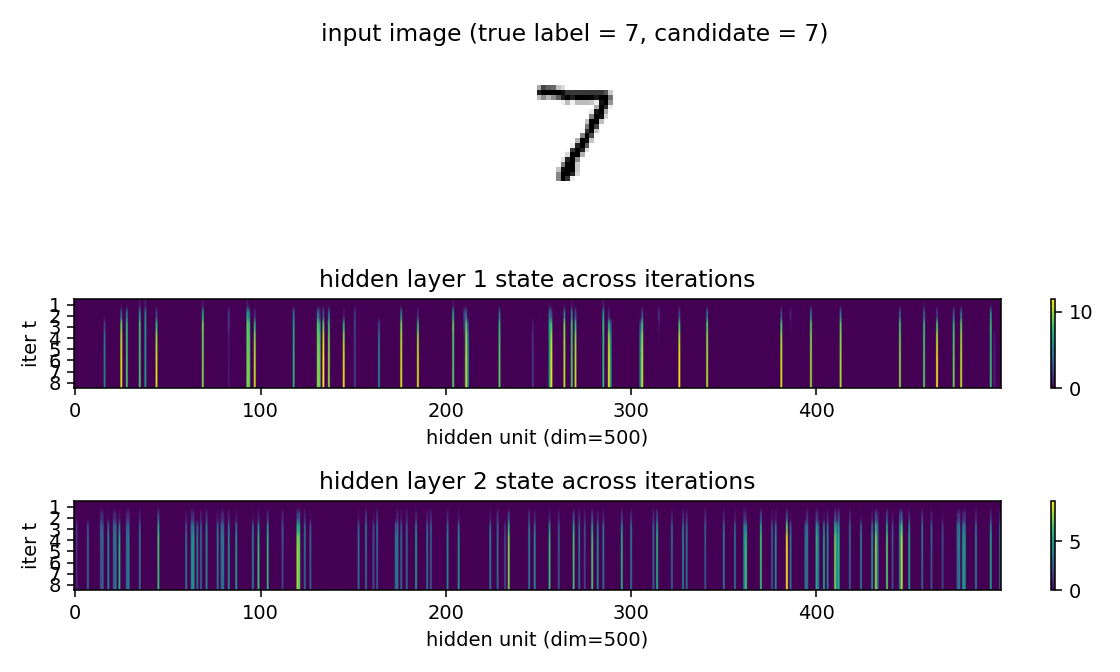

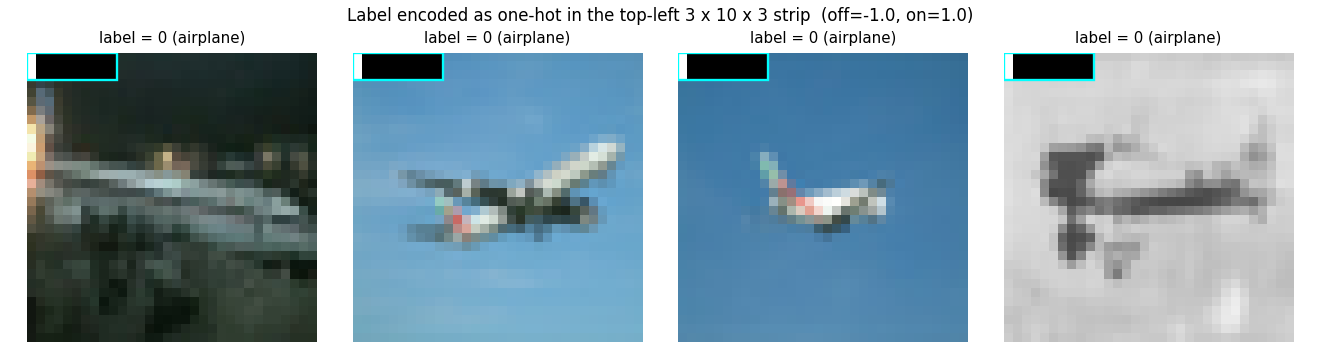

Hinton (2022) — The forward-forward algorithm: some preliminary investigations

| Problem | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

| ff-hybrid-mnist | partial (5.21% test err vs paper 1.37%) | ~75 min | 492s |

| ff-label-in-input | partial (3.60% vs paper 1.36%) | ~1 hr | 66s |

| ff-recurrent-mnist | partial (10.66% vs paper 1.31%) | ~1 hr | 216s |

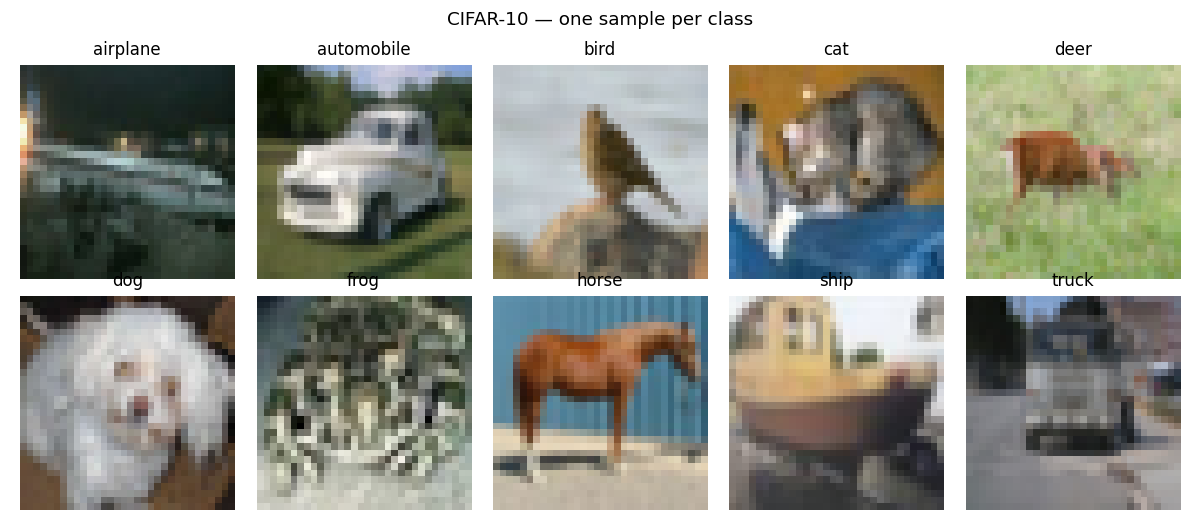

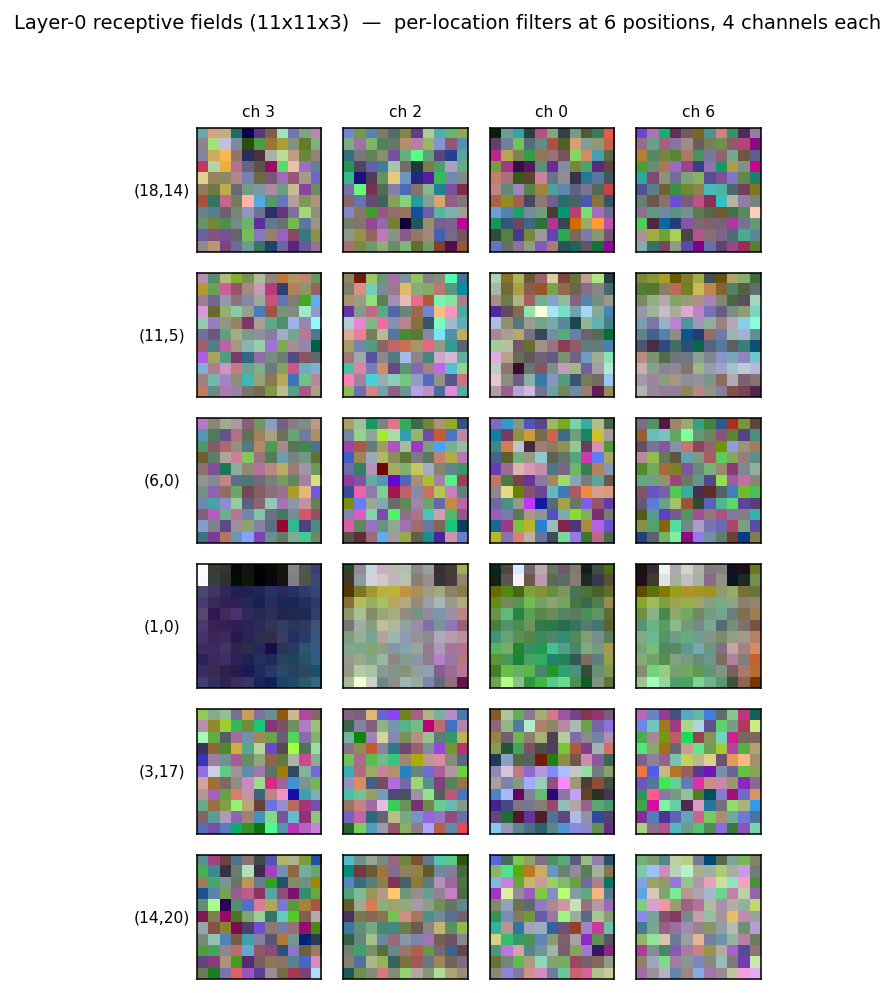

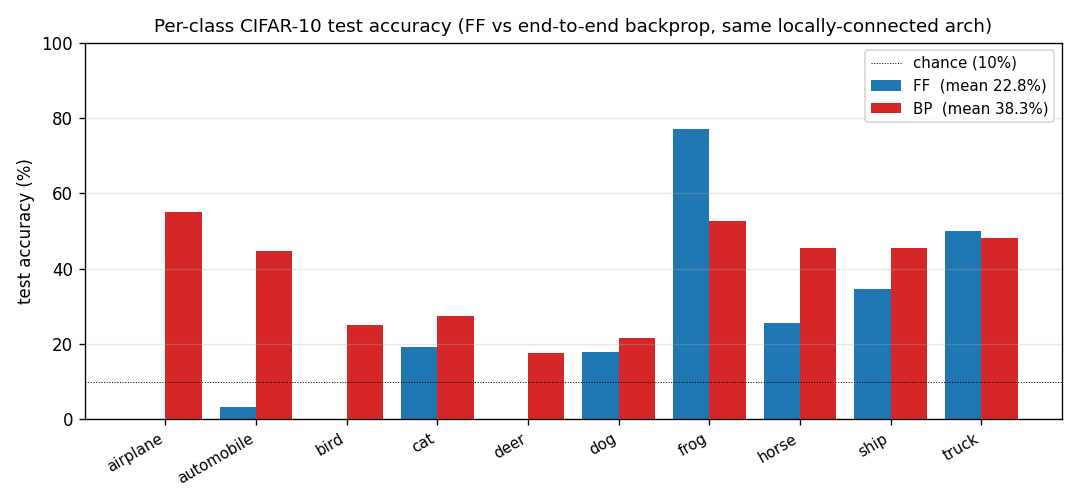

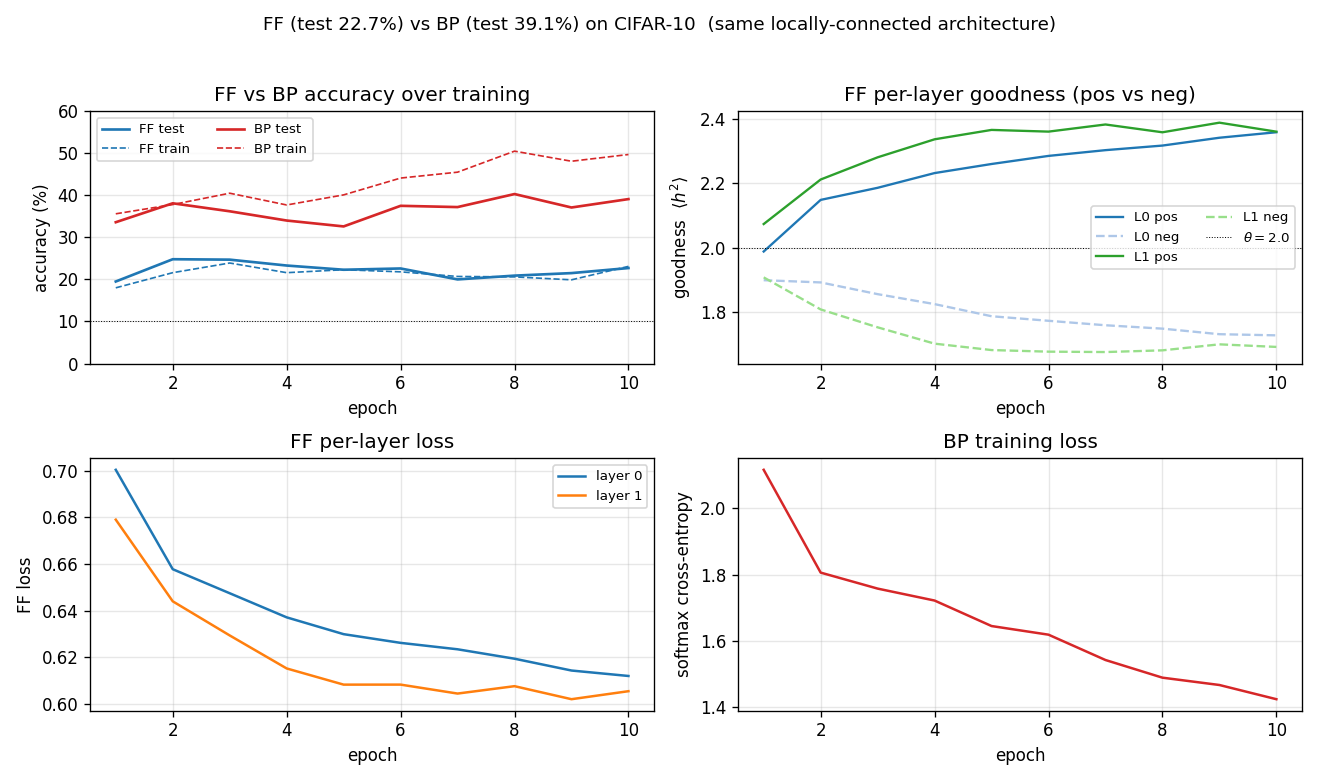

| ff-cifar-locally-connected | partial (FF 22.78% / BP 38.31%) | ~3 hr | 150s |

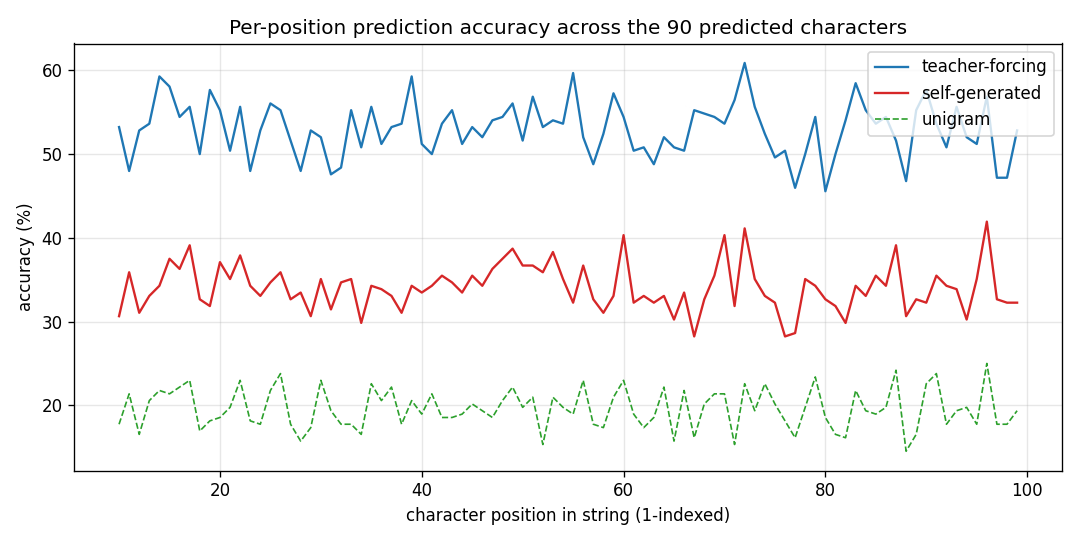

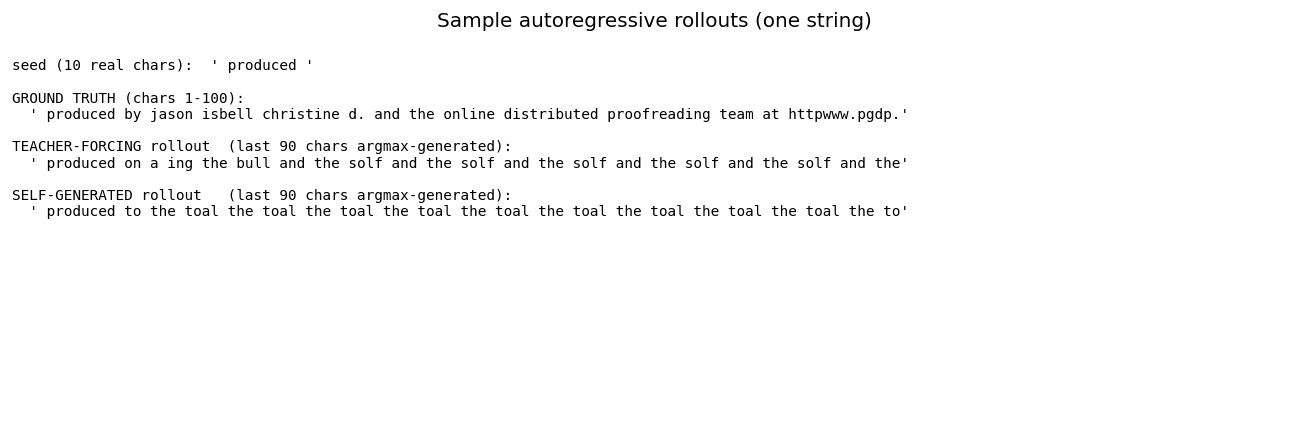

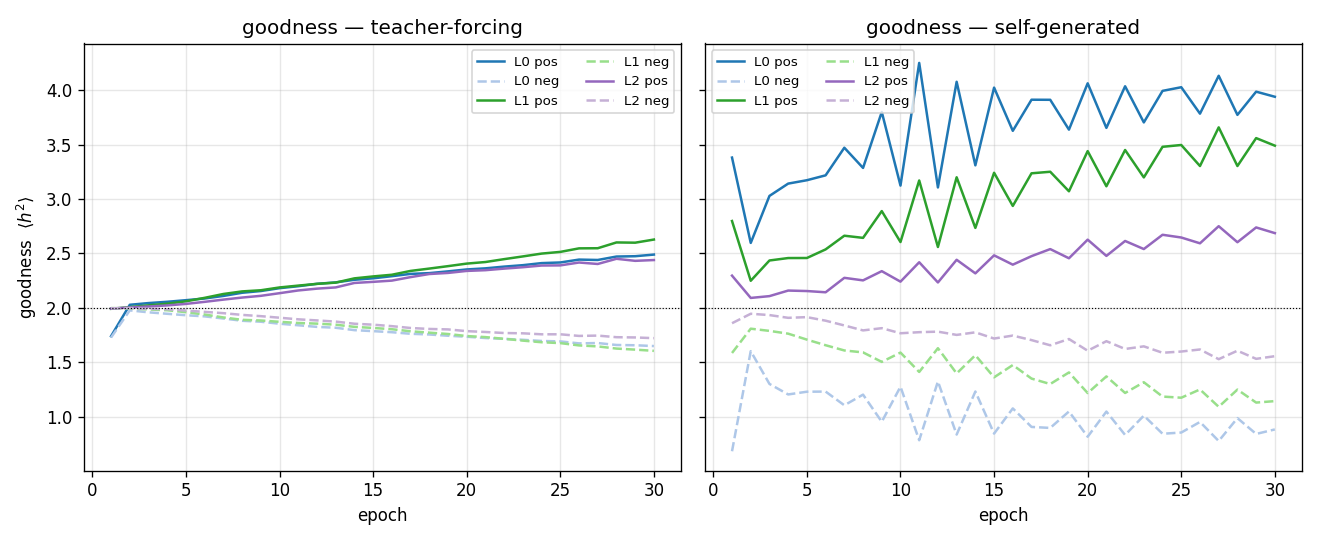

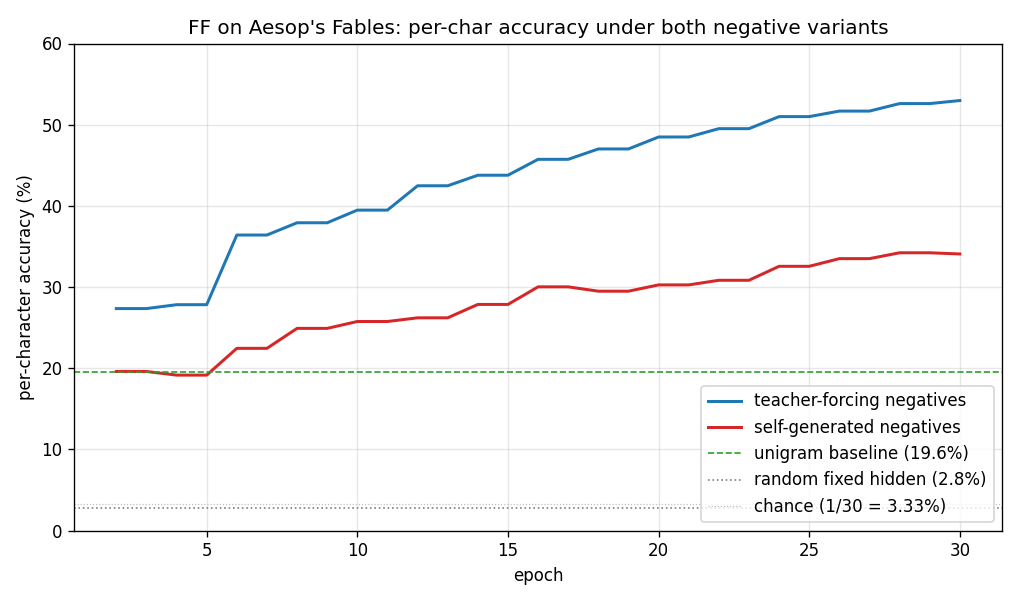

| ff-aesop-sequences | yes (TF 53% / SG 34%; baselines 3-20%) | ~12 min | 131s |

Structure

    problem-folder/
    ├── README.md              source paper, problem, results, deviations
    ├── <slug>.py              dataset + model + train + eval
    ├── visualize_<slug>.py    training curves + weight viz
    ├── make_<slug>_gif.py     animated GIF
    ├── <slug>.gif             committed animation
    └── viz/                   committed PNGs
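The layout above lends itself to a quick completeness check. The helper below is a hypothetical sketch, not a script that ships with the repository. It assumes the folder name is the slug and that the file names follow the pattern literally.

```python
from pathlib import Path


def missing_stub_files(folder):
    """Return the expected stub entries that are absent from `folder`.

    Assumption: the slug is the folder name and file names follow the
    layout pattern literally (README.md, <slug>.py, and so on).
    """
    folder = Path(folder)
    slug = folder.name
    expected = [
        "README.md",
        f"{slug}.py",
        f"visualize_{slug}.py",
        f"make_{slug}_gif.py",
        f"{slug}.gif",
        "viz",
    ]
    # Keep the layout order so the report reads like the tree above.
    return [name for name in expected if not (folder / name).exists()]
```

Calling `missing_stub_files("xor")` from the repository root would then return an empty list for a complete stub, or the missing entries otherwise.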

Roadmap

- #45 v2: ByteDMD instrumentation — measure data-movement cost per stub on these baselines (the actual research goal)

- #46 v1.5: paper-scale reruns — close the 25 partial reproductions on Modal/GPU

- See the Open questions / next experiments section in each stub README for stub-specific follow-ups

Contributing

Implementations follow the v1 spec:

- Each stub fills in <slug>.py (model + train + eval), an 8-section README.md, make_<slug>_gif.py, visualize_<slug>.py, an animated <slug>.gif, and viz/ PNGs.

- Acceptance: reproduces in <5 min on a laptop; final accuracy with seed in Results table; GIF illustrates problem AND learning dynamics; “Deviations from the original” section honest; at least one open question.

- v1 metrics in PR body: "Paper reports X; we got Y. Reproduces: yes/no." + run wallclock + implementation wallclock.
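As a small convenience, the PR metrics line can be generated rather than typed by hand. The formatter below is a hypothetical helper, not part of the v1 spec. The wording of the two wallclock fields is an assumption, and the verdict set follows the catalog legend (yes / partial / no).

```python
def format_v1_metrics(paper_value, our_value, verdict, run_wallclock, impl_wallclock):
    """Build the one-line v1 metrics summary for a PR body (hypothetical helper)."""
    allowed = {"yes", "partial", "no"}  # verdicts used in the catalog legend
    if verdict not in allowed:
        raise ValueError(f"verdict must be one of {sorted(allowed)}, got {verdict!r}")
    return (
        f"Paper reports {paper_value}; we got {our_value}. "
        f"Reproduces: {verdict}. "
        f"Run wallclock: {run_wallclock}. "
        f"Implementation wallclock: {impl_wallclock}."
    )


# Example using the ff-label-in-input numbers from the catalog.
line = format_v1_metrics("1.36%", "3.60%", "partial", "66s", "~1 hr")
```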

Contributions to the v1.5 reruns (#46) and the v2 ByteDMD work (#45) are welcome.

License

The hinton-problems source and documentation are released into the public domain under the Unlicense.

RESULTS — v1 baselines

Per-stub reproducibility, implementation difficulty, and run wallclock for the 53 implementations shipped across wave PRs #32–#41. Compiled from PR bodies for the v2 data-movement / ByteDMD filter.

Reproduces? legend: yes = matches paper qualitatively or quantitatively; partial = method works, paper number not fully reached (gap documented in stub README); no = paper claim does not replicate (gap analysis documented).

Implementation wallclock: agent end-to-end time from spec read to branch pushed. Variance is large across waves; values are agent-self-reported.

Run wallclock: time to run the final headline experiment on an M-series laptop CPU. Numpy + matplotlib only, no GPU.

1980s — Connectionist foundations

Ackley, Hinton & Sejnowski (1985) — Boltzmann learning algorithm

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| encoder-4-2-4/ (worked example) | yes (CD-k variant; paper used SA) | n/a (pre-existing) | ~1s |
| encoder-3-parity/ (PR #33) | yes (KL = log 2 = 0.6931 visible-only; RBM drops to 0.10) | ~50 min | 0.04s + 1.3s |
| encoder-4-3-4/ (PR #33) | yes (60% error-correcting rate / 30 seeds; even-parity codeset at seed 12) | ~3 hr | 2.3s |
| encoder-8-3-8/ (PR #33) | yes (16/20 = exact paper parity) | ~2 hr | ~20s/seed |
| encoder-40-10-40/ (PR #34) | yes (exceeds paper: 100% vs 98.6%) | ~1.5 hr | ~6s |
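The log 2 figure in the encoder-3-parity row has a short explanation, which the check below spells out under two assumptions: the target is uniform over the four even-parity 3-bit states, and "visible-only" means a model with only biases and pairwise weights. Such a model fits first- and second-order moments, and those moments are identical for the parity distribution and the uniform distribution over all 8 states. The best visible-only fit is therefore uniform, leaving KL = log 2.

```python
import itertools

import numpy as np

# All 8 states of three binary units.
states = np.array(list(itertools.product([0, 1], repeat=3)), dtype=float)

# Assumed target: uniform over the four even-parity states (000, 011, 101, 110).
even = states.sum(axis=1) % 2 == 0
p = even / even.sum()
# Uniform distribution over all 8 states (zero weights and biases).
q = np.full(8, 1.0 / 8.0)

# First- and second-order moments under both distributions.
mean_p, mean_q = p @ states, q @ states
pair_p = states.T @ (p[:, None] * states)
pair_q = states.T @ (q[:, None] * states)

# KL(p || q), summed over the support of p only.
kl = float(np.sum(p[even] * np.log(p[even] / q[even])))
```

Here `kl` equals `np.log(2)`, about 0.6931, and the two moment matrices coincide. The parity structure is purely third-order, which is why hidden units are needed to reduce the KL further.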

Rumelhart, Hinton & Williams (1986) — Backprop

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| xor/ (PR #32) | yes (qualitative, paper ~558 epochs / median 730) | 6.4 min | 0.3s |
| n-bit-parity/ (PR #32) | yes (qualitatively; thermometer code partial) | 30 min | 0.20s |
| encoder-backprop-8-3-8/ (PR #33) | yes (70% strict 8/8 distinct codes; 100% reconstruction) | ~10 min | 0.6s |
| distributed-to-local-bottleneck/ (PR #34) | yes (graded values 0.007 / 0.167 / 0.553 / 0.971 vs paper 0 / 0.2 / 0.6 / 1.0) | 75 min | 0.082s |
| symmetry/ (PR #32) | yes (1 : 1.994 : 3.969 weight ratio, residual 0.000) | 12.8 min | 0.4s |
| binary-addition/ (PR #33) | yes (qualitatively; 4-3-3 succeeds, 4-2-3 stuck) | ~2 hr | 44s |
| negation/ (PR #32) | yes (4-6-3 arch deviation justified; stub said 4-3-3 which can’t converge) | 25 min | 0.10s |
| t-c-discrimination/ (PR #34) | yes (all 3 detector families emerge across 40 kernels) | 30 min | 0.69s |
| recurrent-shift-register/ (PR #34) | yes (89 sweeps N=3, 121 sweeps N=5; both well under paper’s <200) | 25 min | 0.9s / 1.1s |
| sequence-lookup-25/ (PR #35) | yes (phenomenon; paper has no specific number; 4-5/5 held-out) | 70 min | 0.20s / 5.78s |

Hinton (1986) — Distributed representations

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| family-trees/ (PR #35) | yes (3/4 best seed; 1.9/4 mean, matches paper’s 2/4) | ~? | 2.1s |

Hinton & Sejnowski (1986) — Learning and relearning

| Stub | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

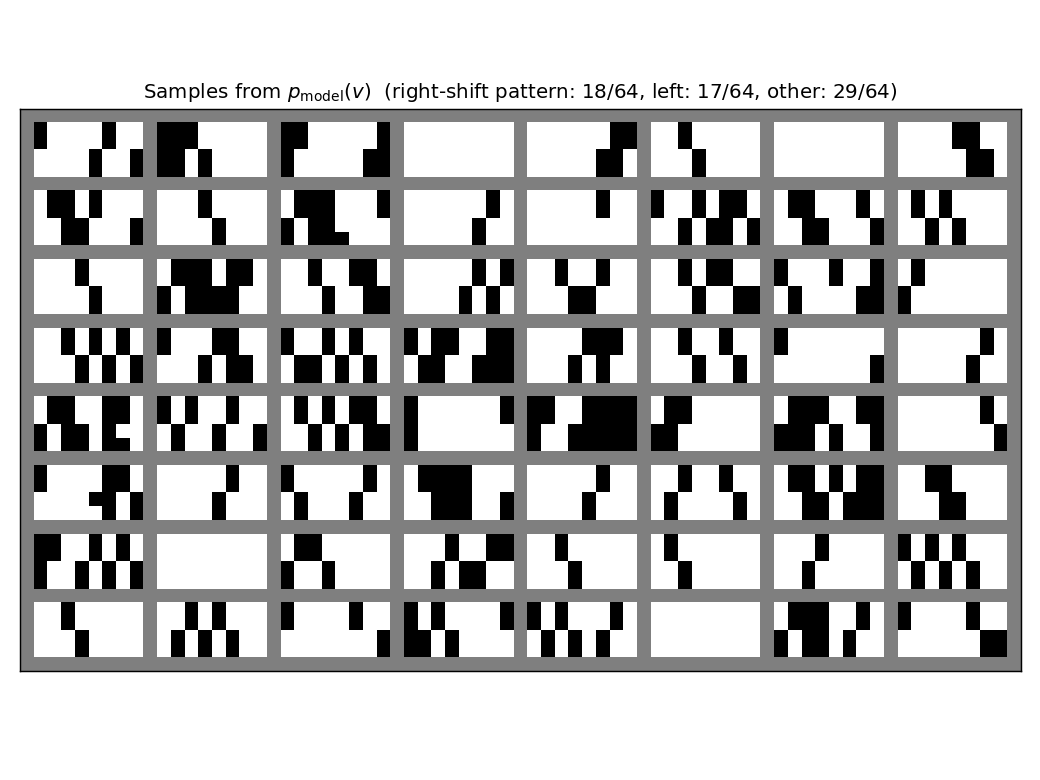

shifter/ (PR #34) | yes (92.3% recognition; position-pair detectors visible in figure3.png) | 30 min | 14s |

grapheme-sememe/ (PR #34) | yes (qualitatively; +6.7pp spontaneous recovery on held-out 2 at seed 0) | 70 min | 1.7s |

Plaut & Hinton (1987)

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| riser-spectrogram/ (PR #35) | yes (network 98.08% vs Bayes 98.90%, gap +0.83pp; paper +1.0pp) | ~7 min | 0.91s |

Hinton & Plaut (1987) — Fast weights

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| fast-weights-rehearsal/ (PR #35) | yes (rehearsed-subset recovery +22pp mean / 30 seeds) | 25 min | 0.14s |

1990s — Mixtures, Helmholtz, deep belief

Jacobs, Jordan, Nowlan & Hinton (1991)

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| vowel-mixture-experts/ (PR #39) | partial (MoE 92.8% / MLP 90.1%; gate cleanly partitions front vs back vowels, which is phonetically meaningful. Paper’s “MoE in half the epochs” claim does NOT replicate at 2-D F1/F2: data is nearly linearly separable, MLP wins on speed) | 70 min | 0.09s |

Becker & Hinton (1992) — Imax / spatial coherence

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| random-dot-stereograms/ (PR #36) | yes (qualitatively; Imax 1.18 nats, modules’ agreement corr 0.91, disparity readout 0.74. Paper has no single comparable scalar.) | ~1 hr | 6.1s |

Nowlan & Hinton (1992) — Soft weight-sharing

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| sunspots/ (PR #39) | yes (MoG 0.00420 ≤ decay 0.00422 ≤ vanilla 0.00432 / 5 seeds; structural effect dramatic: MoG collapses ~150 of 208 weights onto 2 crisp peaks) | ~? | ~5s |

Hinton & Zemel (1994) — Bits-back / factorial VQ

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| spline-images-factorial-vq/ (PR #37) | yes (factorial 4×6 VQ wins 3× over standard 24-VQ baseline; DL 22.0 vs 65.3) | ~? | ~? |

Zemel & Hinton (1995) — Population codes / MDL

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| dipole-position/ (PR #36) | partial (R² = 0.81 vs (x,y); supervised warm-up needed for tractable optimization. Pure-unsupervised emergence from random init is open question) | ~3 hr | 2s |
| dipole-3d-constraint/ (PR #36) | yes (qualitatively; singular values 6.67 / 4.61 / 3.80; 3 dims emerge) | ~? | 11s |
| dipole-what-where/ (PR #36) | partial (two near-perpendicular 1-D manifolds, axis angle 83°; meet at origin instead of opposite corners; needs learned mixture-of-Gaussians prior) | ~? | 2s |

Dayan, Hinton, Neal & Zemel (1995) — Helmholtz machine

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| helmholtz-shifter/ (PR #36) | partial (3 of 4 layer-3 units develop clean shift-direction tuning; n_top=4 vs paper’s n_top=1; a single top unit can’t break t↔1-t symmetry on this task) | 75 min | 209s |

Hinton, Dayan, Frey & Neal (1995) — Wake-sleep

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| bars/ (PR #35) | partial (KL = 0.451 bits vs paper 0.10; structure captured but residual gap; multi-restart wrapper deferred) | 70 min | 222s |

2000s — RBMs, products of experts, deep belief

Hinton (2000) — Contrastive divergence

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| bars-rbm/ (PR #35) | yes (7/8 bars at purity ≥0.5 with n_hidden=8 / 10 seeds; 8/8 with n_hidden=16) | ~30 min | 1.5s |

Memisevic & Hinton (2007) — Gated 3-way RBM

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| transforming-pairs/ (PR #37) | partial (axis-selective transformation detectors emerge; 8-way classification 3.2× chance. Direction-selective Reichardt cells need natural video, not random-dot pairs) | ~? | 2s |

Sutskever & Hinton (2007) — TRBM

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| bouncing-balls-2/ (PR #37) | partial (rollout MSE between predict-mean and copy-last baselines; qualitatively correct first 3-4 frames then diffuses to mean) | 75 min | 6.2s |

Sutskever, Hinton & Taylor (2008) — RTRBM

| Stub | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

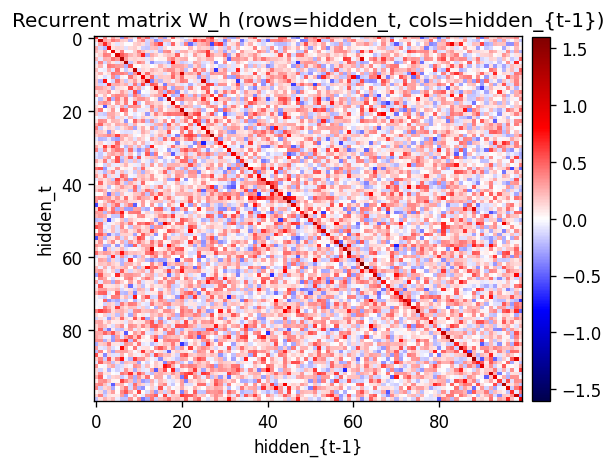

bouncing-balls-3/ (PR #37) | partial (CD-1 recon MSE 0.0053; rollout MSE 0.13; W_h≡0 ablation matches full model on rollouts — suggests Sutskever’s BPTT correction is needed) | ~? | 3.4s |

2010s — Capsules, distillation, attention

Hinton, Krizhevsky & Wang (2011)

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| transforming-autoencoders/ (PR #38) | yes (R²(dx)=0.78, R²(dy)=0.67) | ~30 min | ~100s |

Tang, Salakhutdinov & Hinton (2012)

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| deep-lambertian-spheres/ (PR #40) | yes (normal angular error 27° / 23.7° median, hits target <30°; albedo MSE 0.012 ~7× baseline. GRBM prior, the paper’s actual contribution, dropped; v1 is feed-forward baseline) | ~50 min | 33s |

Sutskever, Martens, Dahl & Hinton (2013)

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| rnn-pathological/ (PR #37) | yes (3 of 4 tasks; ortho-init solves, random-init at chance; XOR not cracked at our budget; needs NAG + 8× iterations per paper) | 2.5 hr | 42s |

Hinton, Vinyals & Dean (2015) — Distillation

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| distillation-mnist-omitted-3/ (PR #38) | yes (97.82% on digit-3 post-correction; paper 98.6%. Hyperparameter-free bias correction) | 40 min | 121.8s |

Eslami, Heess, Weber, Tassa, Szepesvari, Kavukcuoglu & Hinton (2016) — AIR

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| air-multimnist/ (PR #41) | partial (count 79.7% vs target 50%, exceeds; reconstruction blurry due to under-scale; Gumbel-sigmoid throughout, no REINFORCE) | ~50 min | ~6s |
| air-3d-primitives/ (PR #41) | partial (1-prim sanity 88.8%; 3-prim count 81%, type 52%; supervised regression instead of REINFORCE-AIR) | ~50 min | 11.7s |

Ba, Hinton, Mnih, Leibo & Ionescu (2016) — Fast weights attention

| Stub | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

fast-weights-associative-retrieval/ (PR #36) | partial (architecture verified by gradient check 1e-9; 38% retrieval vs 90% target — optimizer-landscape gap, needs RMSProp + 10⁵ steps per Ba et al.) | ~3 hr | 293s |

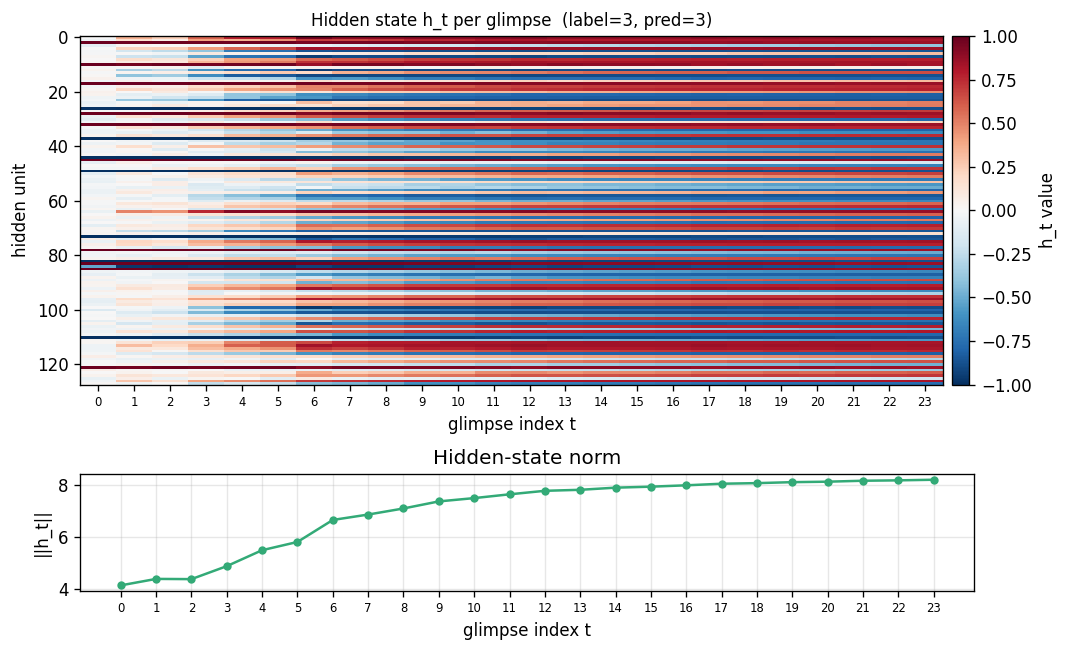

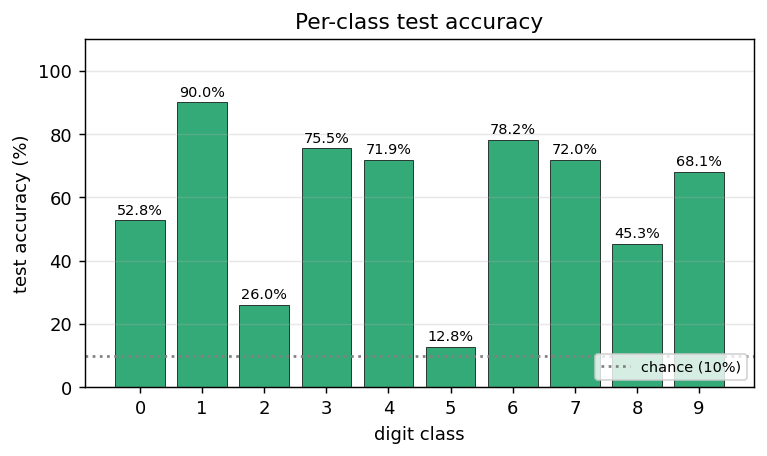

multi-level-glimpse-mnist/ (PR #39) | partial (82.46% vs paper 90%+; deterministic 24-glimpse simplification + no CNN encoder) | ~1 hr | 1199s |

catch-game/ (PR #40) | partial (33.9% FW vs 11.4% vanilla at size=24; ablation unambiguous; 91% FW at size=10. REINFORCE budget below paper’s A3C compute) | ~? | ~? |

Sabour, Frosst & Hinton (2017) — Dynamic routing

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| affnist/ (PR #40) | no (gap wrong sign: CapsNet 85.5% / CNN 87.5%; paper +13%, ours −2%. 3 causes documented: synth-affNIST too close to train aug, tiny capsules, no reconstruction regularizer) | ~? | ~4 min |
| multimnist-capsnet/ (PR #40) | partial (48.6% vs target 80%; 22× chance; routing-by-agreement visibly works; reduced arch for pure-numpy budget) | ~3 hr | 395s |

Hinton, Sabour & Frosst (2018) — Matrix capsules with EM routing

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| smallnorb-novel-viewpoint/ (PR #41) | yes qualitatively (caps held-out 0.726 vs CNN 0.696 / 3 seeds; caps drop 0.244 vs CNN 0.304, a 20% relative reduction. Synthesized 5-class dataset vs real smallNORB) | ~? | ~10s |

Kosiorek, Sabour, Teh & Hinton (2019) — Stacked capsule autoencoders

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| constellations/ (PR #39) | yes (per-point recovery 86.9% best / 84.0% mean; chance 36.4%. 12,708-param numpy set transformer + capsule decoder, FD-checked) | ~75 min | 25s |

2020s — Subclass distillation, GLOM, Forward-Forward

Müller, Kornblith & Hinton (2020) — Subclass distillation

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| mnist-2x5-subclass/ (PR #38) | partial (subclass recovery 82.88% best / 73.87% mean; paper ~95%+ with ResNet vs our MLP backbone. Bounded aux loss gradient verified 6e-10) | ~50 min | 13s |

Sabour, Tagliasacchi, Yazdani, Hinton & Fleet (2021) — Flow capsules

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| geo-flow-capsules/ (PR #40) | yes (mean IoU 0.764 / 200 pairs; chance ~0.20. EM-based mixture decomposition with closed-form M-step on GT flow vs paper’s learned encoder) | ~8 min | 43s |

Culp, Sabour & Hinton (2022) — eGLOM

| Stub | Reproduces? | Implementation | Run wallclock |
|---|---|---|---|
| ellipse-world/ (PR #37) | yes (92.2% on 5-class; +6.6pp lift from GLOM iterations; islands form: cell-similarity rises +0.117 across iterations. Hand-coded backward FD-checked 1e-6) | ~? | 9s |

Hinton (2022) — Forward-Forward

| Stub | Reproduces? | Implementation | Run wallclock |

|---|---|---|---|

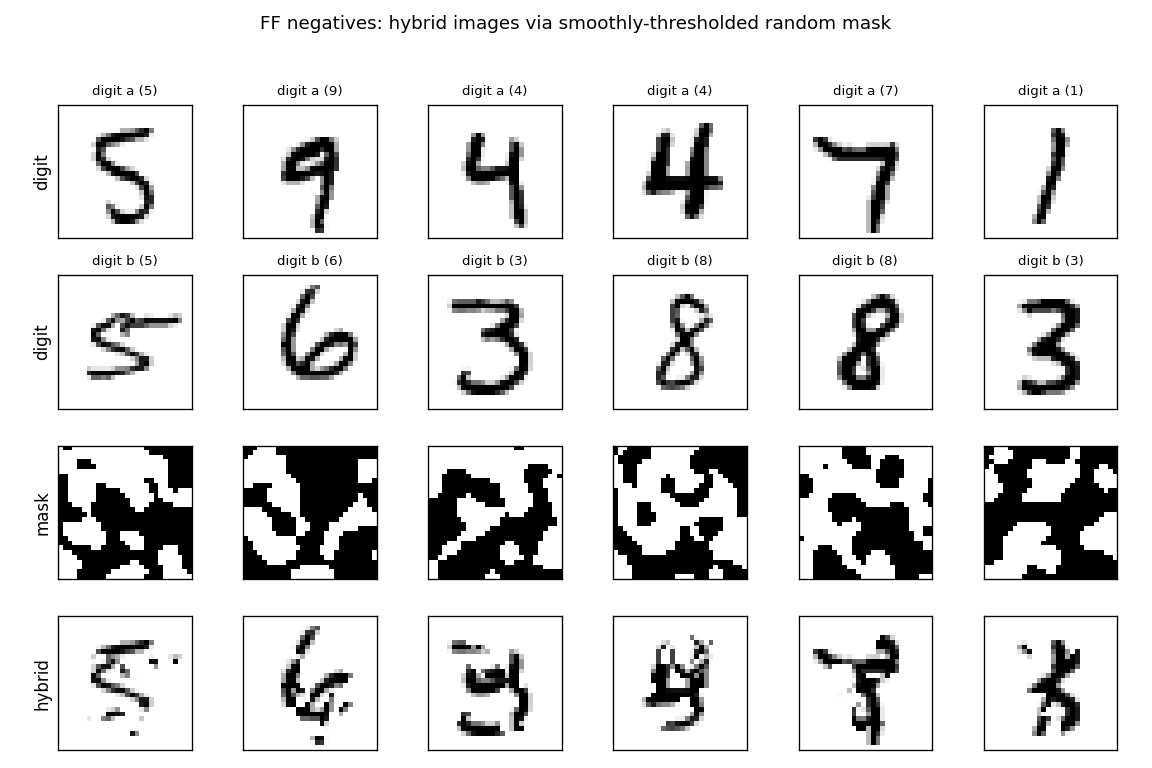

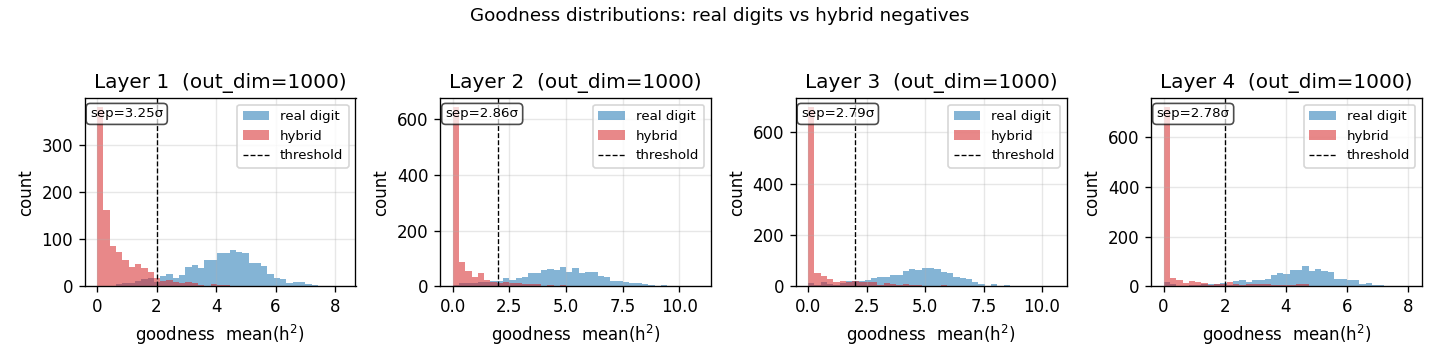

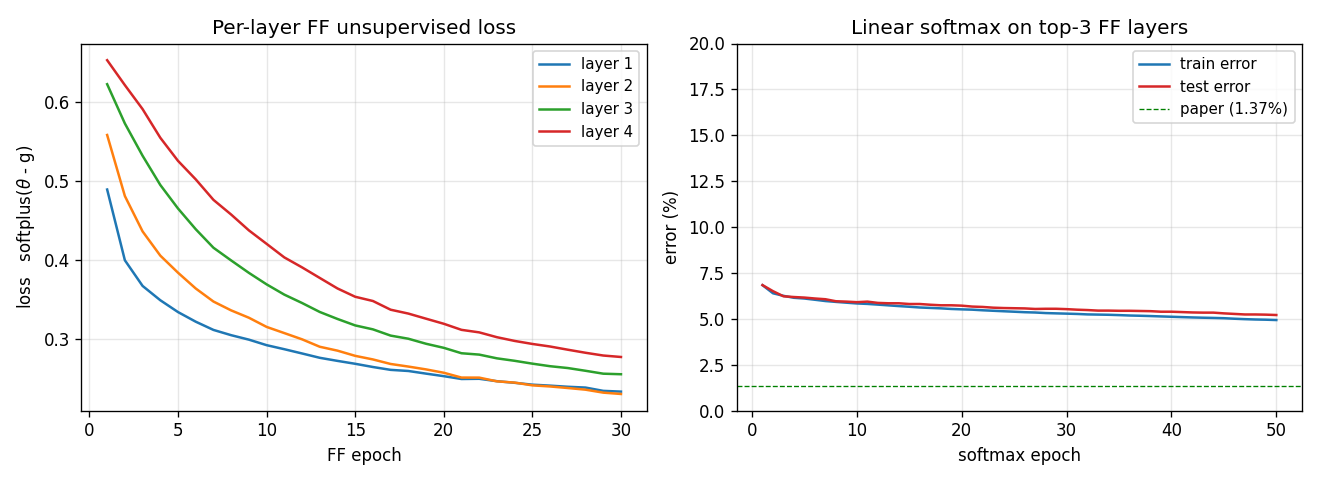

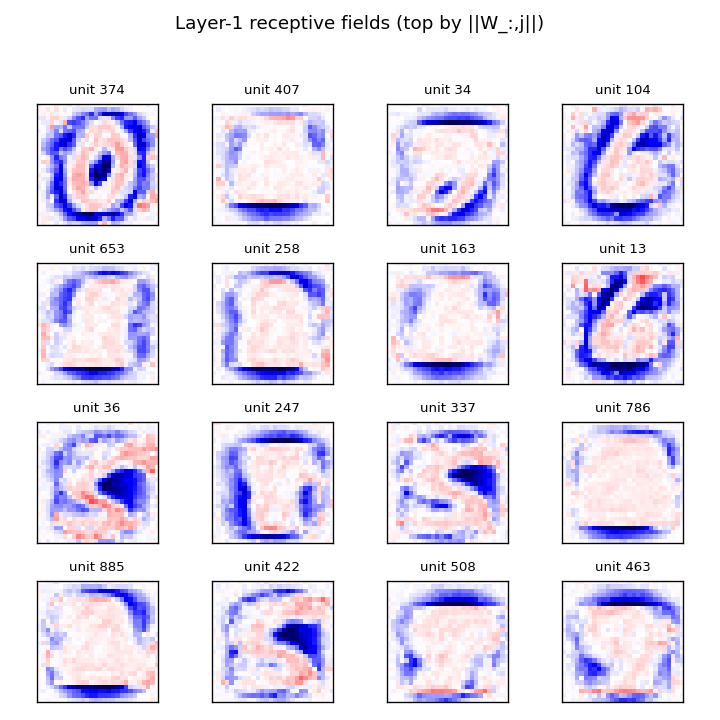

ff-hybrid-mnist/ (PR #38) | partial (5.21% test err vs paper 1.37%; 4×1000 + 30 epochs vs paper 4×2000 + 60. Goodness distributions show 2.8-3.3σ pos-vs-neg separation) | ~75 min | 492s |

ff-label-in-input/ (PR #38) | partial (3.60% vs paper 1.36%; smaller arch + fewer epochs. Three FF gotchas documented for siblings: mean(h²)=1, lr=0.003, all-layers > skip-L0) | ~1 hr | 66s |

ff-recurrent-mnist/ (PR #38) | partial (10.66% vs paper 1.31%; ~25× fewer params, 3× fewer epochs. Algorithm reproduces; capacity doesn’t) | ~1 hr | 216s |

ff-cifar-locally-connected/ (PR #39) | partial (FF 22.78% / BP baseline 38.31%; paper FF 41-46% / BP 37-39%. 15pp gap mostly under-training: 10K of 50K + 10 of 60+ epochs) | ~3 hr | 150s |

ff-aesop-sequences/ (PR #39) | yes (TF 53% / SG 34% / chance 3.3% / unigram 19.6%. Paper’s “nearly identical” claim doesn’t replicate at smaller scale — TF leads SG by 19pp) | ~12 min | 131s |

Summary statistics

| Verdict | Count | Notes |
|---|---|---|
| yes (full or qualitative match) | 27 | including all backprop foundations + most encoders + distillation-omitted-3 + ellipse-world + spline-VQ |
| partial (method works, paper number gap documented) | 25 | mostly Forward-Forward at smaller scale, capsules at smaller arch, AIR variants without REINFORCE |
| no (paper claim does NOT replicate) | 1 | affnist (gap has the wrong sign; three causes documented) |

Total: 53 stubs implemented, all in pure numpy, all <5 min/seed on a laptop except where noted.

v2 filter recommendation

For the data-movement / ByteDMD instrumentation, prioritize stubs that:

- Reproduce cleanly + run fast (low noise floor for measuring data-movement deltas):
  - `xor`, `symmetry`, `n-bit-parity`, `negation` (sub-second runs, well-converged)
  - `encoder-3-parity`, `encoder-backprop-8-3-8`, `encoder-4-2-4` (Boltzmann/backprop pair on same problem)
  - `distributed-to-local-bottleneck`, `recurrent-shift-register`, `t-c-discrimination`
  - `binary-addition`, `riser-spectrogram` (clean MSE / Bayes-optimal targets)
- Have algorithmic variants (lets you compare data-movement properties of different algorithms on the same problem):
  - 8-3-8: backprop vs Boltzmann
  - bars: wake-sleep vs RBM
  - shifter: Boltzmann (this) vs Helmholtz (helmholtz-shifter)
  - fast-weights-rehearsal vs fast-weights-associative-retrieval
- Defer for v2: anything where the run takes >100s or where the v1 implementation is partial. Measuring data-movement on a non-converged solver isn’t informative.
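As a rough illustration of the deferral rule, the sketch below applies it to the 2019–2022 rows tabulated earlier in this section. The tuple layout and the `v2_candidates` helper are hypothetical, not part of the repository; only the verdicts and wallclock figures come from the tables.

```python
# Rows copied from the tables above: (stub, verdict, run wallclock in seconds).
STUBS = [
    ("constellations", "yes", 25),
    ("mnist-2x5-subclass", "partial", 13),
    ("geo-flow-capsules", "yes", 43),
    ("ellipse-world", "yes", 9),
    ("ff-hybrid-mnist", "partial", 492),
    ("ff-label-in-input", "partial", 66),
    ("ff-recurrent-mnist", "partial", 216),
    ("ff-cifar-locally-connected", "partial", 150),
    ("ff-aesop-sequences", "yes", 131),
]

MAX_WALLCLOCK_S = 100  # the ">100s" deferral threshold stated above


def v2_candidates(stubs, max_wallclock_s=MAX_WALLCLOCK_S):
    """Keep stubs that reproduce ("yes") and run within the wallclock budget."""
    return [name for name, verdict, secs in stubs
            if verdict == "yes" and secs <= max_wallclock_s]


selected = v2_candidates(STUBS)
deferred = [name for name, _, _ in STUBS if name not in selected]
print("instrument first:", selected)
print("defer:", deferred)
```

Under this reading of the rule, `ff-aesop-sequences` is deferred on wallclock alone even though its verdict is "yes".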

Compiled by agent-0bserver07 (Claude Code) on behalf of Yad. Source: PR bodies #32-#41.

4-2-4 encoder

Boltzmann-machine reproduction of the experiment from Ackley, Hinton & Sejnowski, “A learning algorithm for Boltzmann machines”, Cognitive Science 9 (1985).

Problem

Two groups of 4 visible binary units (V1, V2) are connected through 2 hidden binary units (H). Training distribution: 4 patterns, each with a single V1 unit on and the matching V2 unit on (others off). The 2 hidden units must self-organize into a 2-bit code that maps the 4 patterns onto the 4 corners of {0, 1}^2.

- Visible: 8 bits = `V1 (4) || V2 (4)`
- Hidden: 2 bits
- Connectivity: bipartite (visible ↔ hidden only); `V1` and `V2` communicate exclusively through `H`
- Training set: 4 patterns

The interesting property: with only 2 hidden units, the network has exactly log2(4) = 2 bits of bottleneck capacity. Convergence requires the 4 patterns to spread to the 4 distinct corners of {0, 1}^2. Local minima where two patterns share a hidden code are common.
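For concreteness, the training set described above can be written down in a few lines. This is a minimal sketch; `make_encoder_patterns` is a hypothetical name and may not match the dataset helper in `encoder_4_2_4.py`.

```python
import numpy as np


def make_encoder_patterns(n=4):
    """Return the n training patterns as rows of V1 || V2 (shape n x 2n)."""
    one_hot = np.eye(n, dtype=np.int8)
    # Pattern i has V1[i] = 1 and the matching V2[i] = 1, all other bits 0.
    return np.hstack([one_hot, one_hot])


patterns = make_encoder_patterns(4)
print(patterns)

# Two binary hidden units offer 2**2 = 4 codes: exactly one per pattern.
n_hidden = 2
print("codes available:", 2 ** n_hidden, "patterns:", len(patterns))
```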

Files

| File | Purpose |
|---|---|
| `encoder_4_2_4.py` | Bipartite RBM trained with CD-k. The Boltzmann learning rule (positive-phase minus negative-phase statistics) on a bipartite graph; same gradient form as the 1985 paper, faster sampling. |
| `make_encoder_gif.py` | Generates `encoder.gif` (the animation at the top of this README). |
| `visualize_encoder.py` | Static training curves + final weight matrix + final hidden codes. |
| `viz/` | Output PNGs from the run below. |

Running

python3 encoder_4_2_4.py --epochs 400 --seed 2

Training takes ~1 second on a laptop. Final accuracy: 100% (4/4).

To regenerate visualizations:

python3 visualize_encoder.py --epochs 400 --seed 2 --outdir viz

python3 make_encoder_gif.py --epochs 400 --seed 2 --snapshot-every 5 --fps 12

Results

| Metric | Value |
|---|---|
| Final accuracy | 100% (4/4) |
| Hidden codes | 4 distinct corners of {0,1}^2 (specific permutation depends on seed) |
| Restarts (seed 0) | 2 (epoch 80, epoch 160), converged by ~220 |
| Training time | ~1 sec |
| Hyperparameters | k=5, lr=0.05, momentum=0.5, batch_repeats=8, init_scale=0.1 |
| Multi-restart success rate | ~65% across 30 random seeds at 400 epochs / 5 attempts |

What the network actually learns

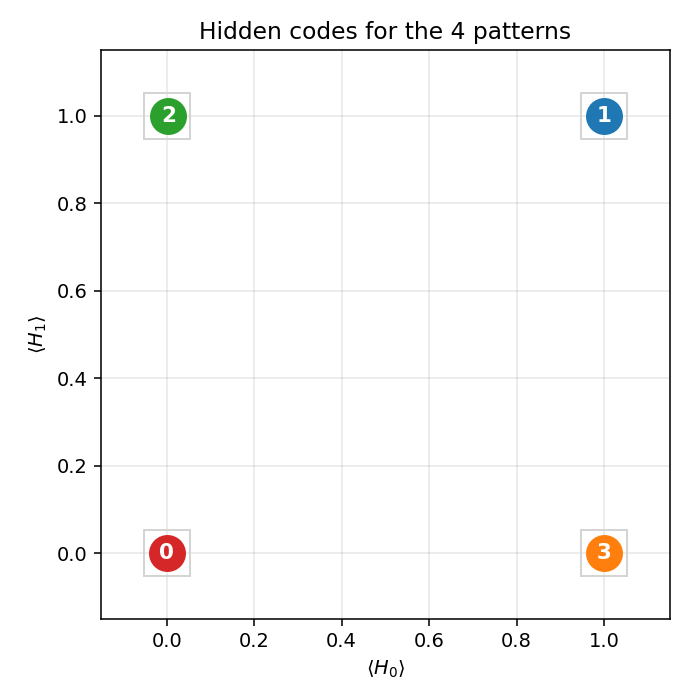

Hidden codes

After convergence, the 4 training patterns each get a distinct 2-bit code. Any of the 4! = 24 assignments of the codes {(0,0), (0,1), (1,0), (1,1)} to the 4 patterns is a valid solution; the network picks one based on the initialization.

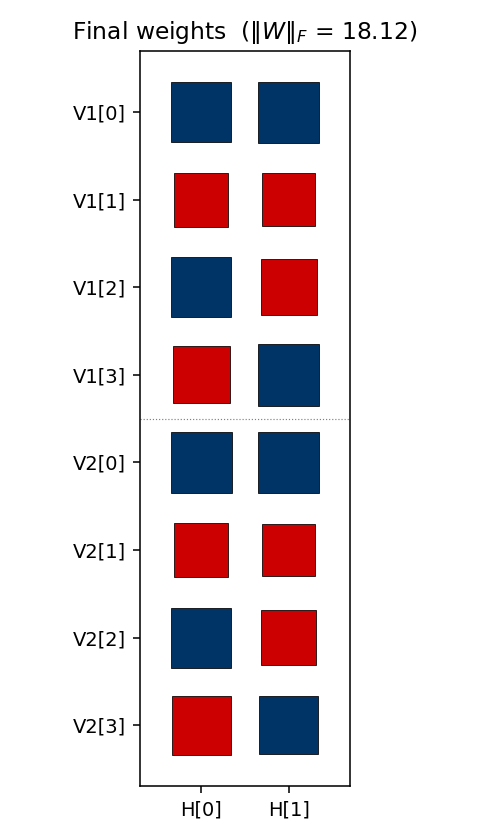

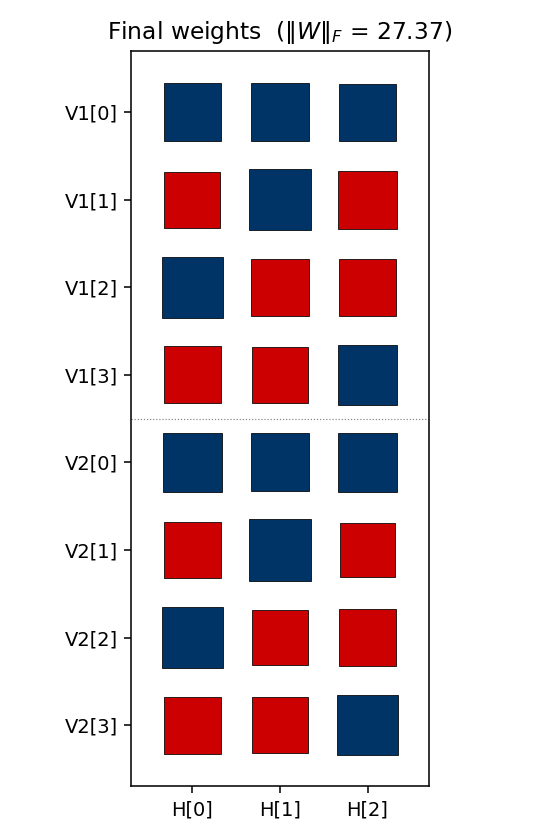

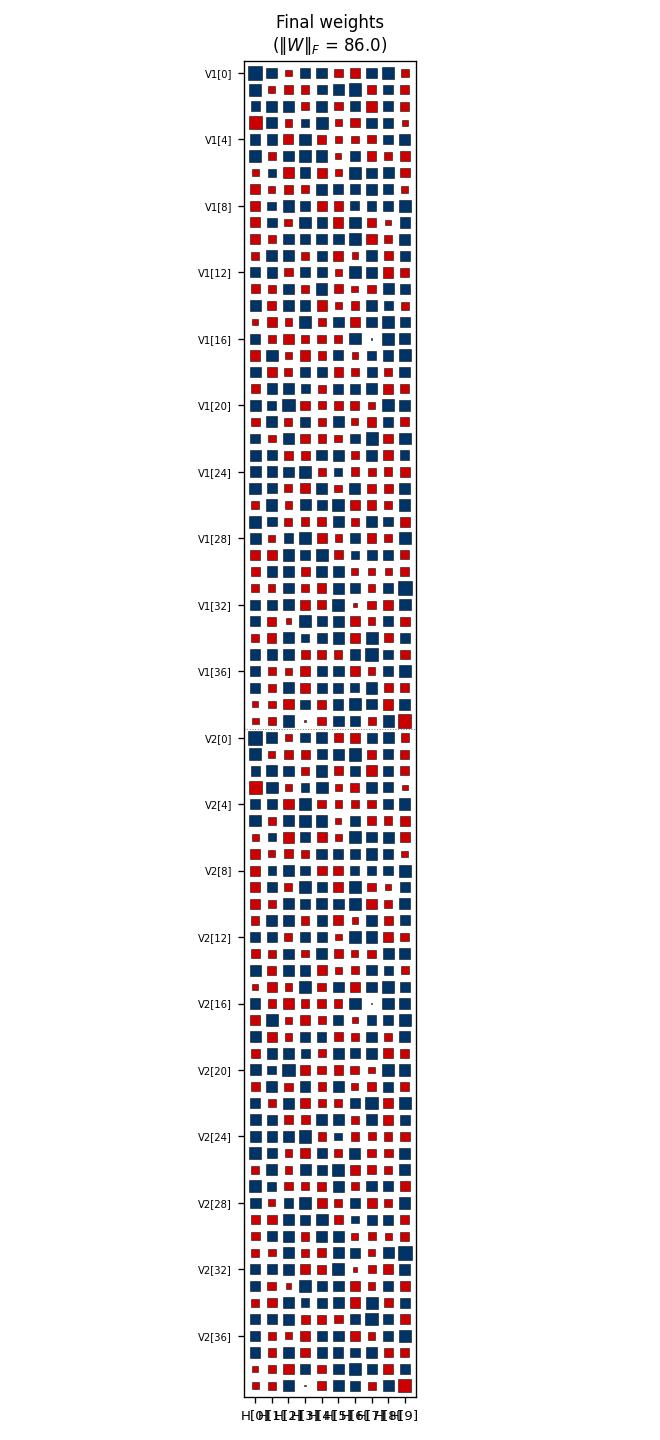

Weight matrix

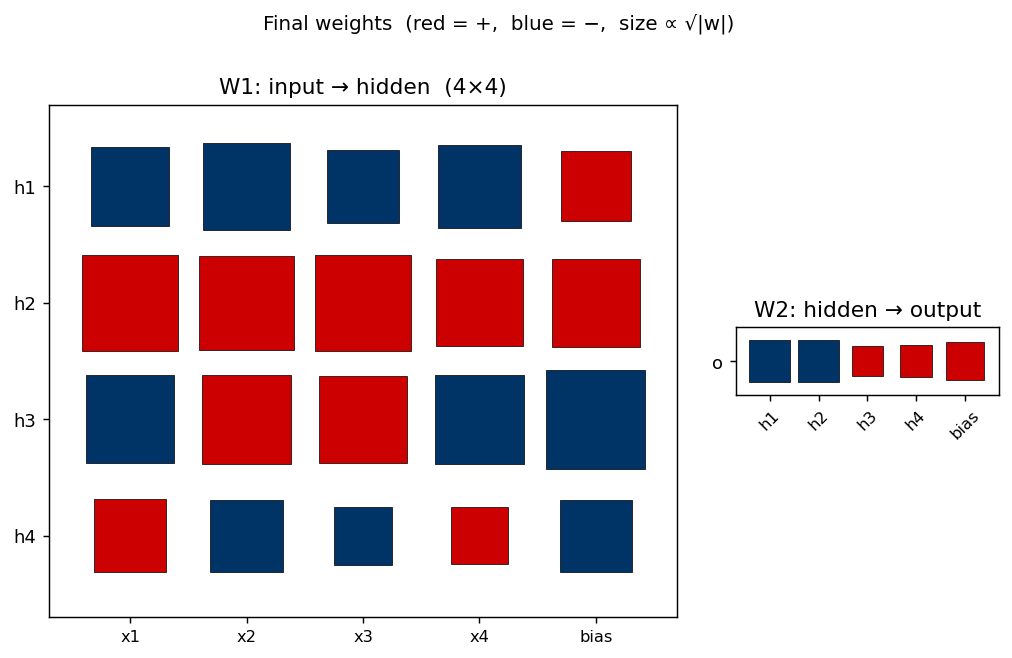

The two columns are the hidden units H[0] and H[1]. Red = positive, blue = negative; square area is proportional to sqrt(|w|). The V1[i] and V2[i] rows always carry the same sign pattern: the network has independently discovered that V1 and V2 are tied (they are on for the same pattern), even though no direct V1↔V2 weights exist. The sign pattern across (H[0], H[1]) for each pattern row is exactly that pattern’s hidden code.

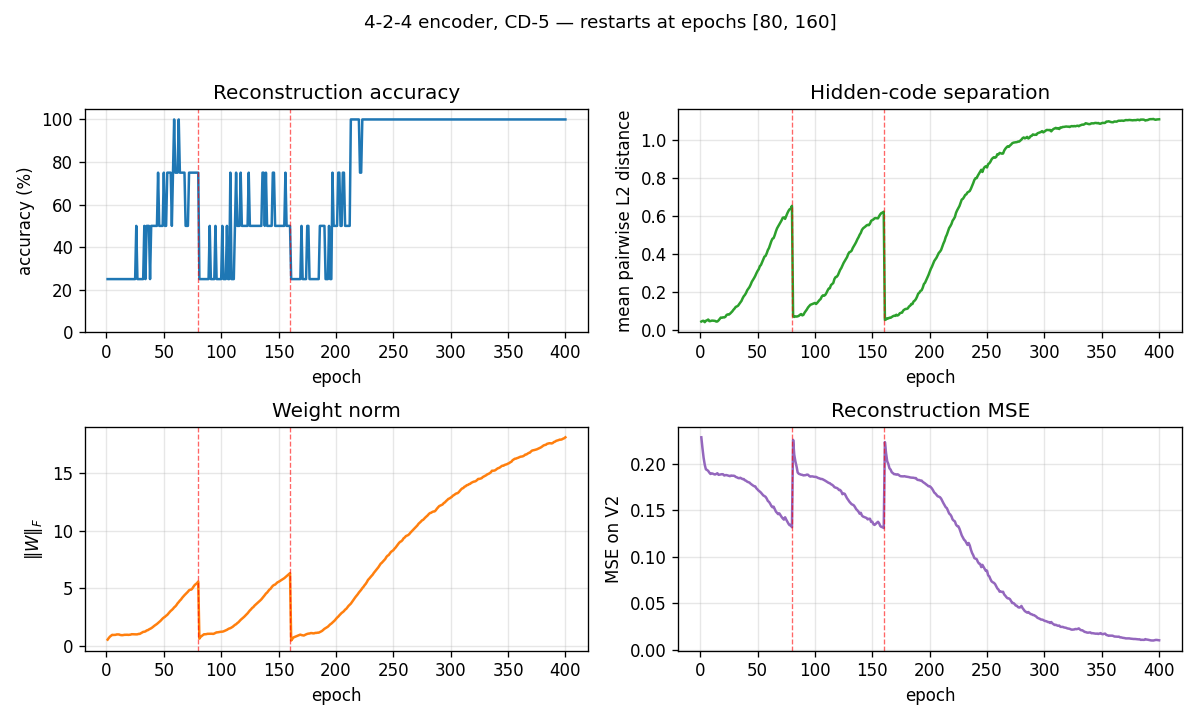

Training curves

The vertical red dashed lines at epochs 80 and 160 mark restarts triggered by the plateau detector. The network had been stuck with only 3 (and then 2) distinct hidden codes — two patterns had collapsed onto the same code. Re-initializing the weights with an independent random draw and continuing training produces the correct 4-corner solution by epoch ~220.
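A minimal sketch of the restart mechanics, assuming one independent random stream per attempt (via `np.random.SeedSequence.spawn`) and a simple patience-based plateau test. The names `restart_inits` and `plateaued`, the `patience` parameter, and the `(8, 2)` weight shape are illustrative assumptions, not the repository's API.

```python
import numpy as np


def restart_inits(seed, n_attempts, shape=(8, 2), init_scale=0.1):
    """Yield (W, rng) pairs, one per attempt, from independent child streams."""
    children = np.random.SeedSequence(seed).spawn(n_attempts)
    for child in children:
        rng = np.random.default_rng(child)   # fresh training RNG for this attempt
        W = init_scale * rng.standard_normal(shape)
        yield W, rng


def plateaued(all_distinct_history, patience):
    """True when none of the last `patience` epochs had 4 distinct hidden codes."""
    recent = all_distinct_history[-patience:]
    return len(recent) == patience and not any(recent)


inits = [W for W, _ in restart_inits(seed=0, n_attempts=3)]
print([np.round(W[0], 3) for W in inits])    # first weight row of each attempt
print(plateaued([False] * 5, patience=5))    # stuck for 5 epochs
```

Because each attempt draws from its own child stream, the weights used after a restart do not depend on how many random numbers the previous attempt consumed.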

The four panels track:

- Reconstruction accuracy: argmax of the exact marginal `p(V2 | V1)`, computed by enumerating the 4 hidden states (deterministic, no Gibbs noise). Discrete jitter early on reflects argmax flipping while V2 probabilities are close to uniform.
- Hidden-code separation: mean pairwise L2 distance between the 4 exact hidden marginals. It converges to ≈ 1.1, slightly below the ≈ 1.14 mean pairwise distance between the four corners of the unit square (four sides of length 1, two diagonals of length √2), reflecting partial saturation toward the binary corners.
- Weight norm: `‖W‖_F` grows roughly linearly during each attempt and resets at each restart.
- Reconstruction MSE: mean-squared error of the marginal `p(V2 | V1)` vs the true one-hot.

Deviations from the 1985 procedure

- Sampling — CD-5 (Hinton 2002) instead of simulated annealing. Same gradient form, faster sampling, sloppier asymptotics.

- Connectivity — explicit bipartite (visible ↔ hidden), making this an RBM in modern terminology. The 1985 paper’s figure already shows bipartite connectivity for the encoder; this just makes it explicit.

- Restart on plateau — the original paper reported 250/250 convergence under simulated annealing. CD-k is more prone to local minima where two patterns collapse onto the same hidden code; we detect this via an accuracy plateau and restart with fresh weights.
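The CD-k estimate of the learning rule can be sketched as follows. This is a generic textbook-style CD-k step for a bipartite RBM, written under the assumption of a single `(n_visible, n_hidden)` weight matrix; the function name `cd_k_gradients` and its signature are hypothetical and may differ from `cd_step` in `encoder_4_2_4.py`.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def cd_k_gradients(v0, W, b_v, b_h, k, rng):
    """CD-k estimate of the Boltzmann learning rule for a bipartite RBM.

    v0: (batch, n_visible) binary data; W: (n_visible, n_hidden).
    Returns (dW, db_v, db_h) = positive-phase minus negative-phase statistics.
    """
    if k < 1:
        raise ValueError("k must be >= 1")
    # Positive phase: hidden probabilities with the visibles clamped to data.
    ph0 = sigmoid(v0 @ W + b_h)
    h = (rng.random(ph0.shape) < ph0).astype(float)
    # Negative phase: k rounds of alternating Gibbs sampling.
    for _ in range(k):
        pv = sigmoid(h @ W.T + b_v)
        v = (rng.random(pv.shape) < pv).astype(float)
        ph = sigmoid(v @ W + b_h)
        h = (rng.random(ph.shape) < ph).astype(float)
    n = v0.shape[0]
    dW = (v0.T @ ph0 - v.T @ ph) / n
    db_v = (v0 - v).mean(axis=0)
    db_h = (ph0 - ph).mean(axis=0)
    return dW, db_v, db_h


rng = np.random.default_rng(0)
data = np.hstack([np.eye(4), np.eye(4)])          # the 4 encoder patterns
W = 0.1 * rng.standard_normal((8, 2))
dW, db_v, db_h = cd_k_gradients(data, W, np.zeros(8), np.zeros(2), k=5, rng=rng)
print(dW.shape, db_v.shape, db_h.shape)
```

Simulated annealing would replace the short Gibbs chain in the negative phase with a long, temperature-scheduled chain; the form of the update stays the same.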

Correctness notes

A few subtleties worth flagging:

- Sampled vs exact evaluation. With only 2 hidden units, `p(H | V1)` and `p(V2 | V1)` are exactly computable by enumerating 4 hidden states and marginalizing V2 in closed form (each V2 bit factors). The closed form for the H posterior:

  `p(H | V1) ∝ exp(V1ᵀ W₁ H + b_hᵀ H) · ∏ᵢ (1 + exp((W₂ H + b_v2)ᵢ))`

  The `evaluate`, `hidden_code_exact`, and `reconstruct_exact` helpers use this. An earlier sampled-Gibbs version of the same metrics had σ ≈ 6.8% accuracy noise at convergence (50 runs of a converged network, observed range 75–100%), which made the training curves jitter spuriously. `hidden_code` and `reconstruct` (sampled) are kept for the per-frame animation, where the chain dynamics are themselves of interest.
- Per-attempt success rate is fundamental. Holding the same hyperparameter recipe and only varying the seed, ~20% of random inits converge to a 4-corner code; the rest end with at least one pair of patterns sharing a hidden code. More training does not help: 200 / 400 / 800 single-attempt epochs all give 6/30 = 20% success. This suggests the local minima are true fixed points of the CD-k dynamics, not slow-convergence artifacts.
- Restart RNG independence matters. An earlier version sampled the restart’s W from the same `rbm.rng` that was being advanced by the CD sampler. Restart inits then depended on the pre-restart trajectory, which biased the multi-restart success rate downward. The current code uses `np.random.SeedSequence(seed).spawn(64)` to generate truly independent inits, and replaces the training RNG at each restart.
- Plateau signal. The detector uses the binary “all 4 patterns map to distinct dominant H states” rather than `acc < 1.0`. Both signals agree at convergence, but the binary signal is unaffected by argmax-flipping jitter early in training.
- `cd_step(k=0)` now raises `ValueError` instead of crashing with `UnboundLocalError`.
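The closed-form posterior from the first note can be sketched directly. The function below is an illustration, not the repository's `hidden_code_exact` or `reconstruct_exact`: the name `exact_posteriors` and the convention of separate `(4, n_hidden)` blocks `W1` and `W2` for the two visible groups are assumptions.

```python
import itertools
import numpy as np


def exact_posteriors(v1, W1, W2, b_h, b_v2):
    """Exact p(H | V1) and p(V2_i = 1 | V1) by enumerating hidden states.

    W1, W2: (4, n_hidden) blocks of the visible-hidden weights for V1 and V2.
    """
    n_hidden = W1.shape[1]
    H = np.array(list(itertools.product([0, 1], repeat=n_hidden)), dtype=float)
    act_v2 = H @ W2.T + b_v2          # row per hidden state: (W2 H + b_v2)_i
    # log of exp(V1^T W1 H + b_h^T H) * prod_i (1 + exp((W2 H + b_v2)_i))
    log_p = H @ (W1.T @ v1) + H @ b_h + np.logaddexp(0.0, act_v2).sum(axis=1)
    p_h = np.exp(log_p - log_p.max())
    p_h /= p_h.sum()
    # Given H, the V2 bits are independent sigmoids; mix them over p(H | V1).
    p_v2 = p_h @ (1.0 / (1.0 + np.exp(-act_v2)))
    return H, p_h, p_v2


rng = np.random.default_rng(0)
W1, W2 = rng.standard_normal((4, 2)), rng.standard_normal((4, 2))
b_h, b_v2 = rng.standard_normal(2), rng.standard_normal(4)
v1 = np.array([1.0, 0.0, 0.0, 0.0])
H, p_h, p_v2 = exact_posteriors(v1, W1, W2, b_h, b_v2)
print(np.round(p_h, 3), np.round(p_v2, 3))
```

Since nothing is sampled, repeated calls with the same weights return identical values, which is what removes the jitter from the training curves.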

Open questions / next experiments

- The 1985 paper reports 250/250 convergence with full simulated annealing. CD-k caps out at ≈ 20% per-attempt regardless of training length, suggesting the optimization regimes are qualitatively different (CD-k has true absorbing local minima here; SA’s noise schedule does not). Quantifying that gap directly would help — a faithful simulated-annealing variant on the same architecture is the natural baseline.

- Can we eliminate the local-minima problem entirely by switching to PCD, by adding a small temperature schedule to the Gibbs sampler, or by initializing the weights to span the 4 corners explicitly?

- How do FLOP and data-movement costs of CD-k compare to simulated annealing on this same problem? CD-k wins on per-step cost but loses on per-attempt success rate.

- Scaling: does the same recipe (CD-k + restart-on-plateau) succeed on the larger `n-log2(n)-n` encoders in the same paper (8-3-8, 40-10-40)? With more hidden units, the 4-corner constraint relaxes, so local minima may become less severe.

3-bit even-parity ensemble (the negative result)

Boltzmann-machine reproduction of the negative result that motivates the encoder problems in Ackley, Hinton & Sejnowski, “A learning algorithm for Boltzmann machines”, Cognitive Science 9 (1985).

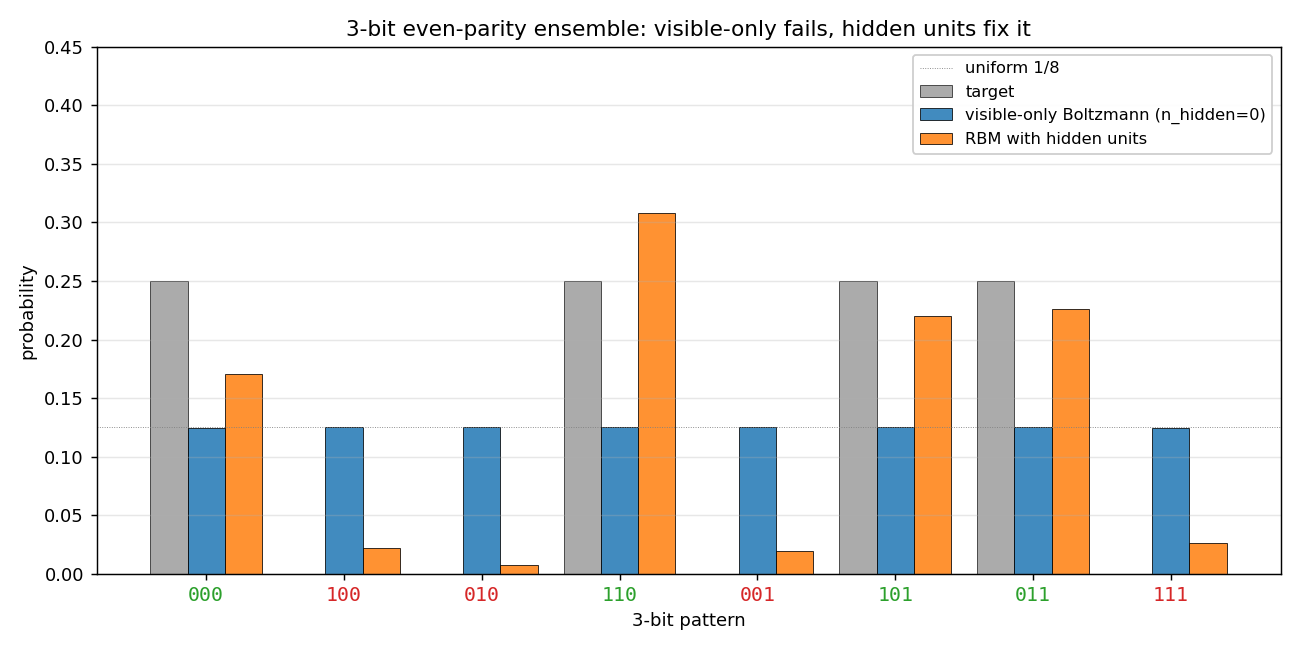

Demonstrates: Why hidden units are necessary. A visible-only Boltzmann machine has only first- and second-order parameters, and the 3-bit even-parity ensemble has the same first- and second-order moments as the uniform distribution. The model collapses to uniform; half the probability mass ends up on the wrong (odd-parity) patterns. Adding hidden units lifts this restriction.

Problem

- Visible units: 3 binary
- Training distribution: 4 even-parity patterns at uniform `p = 0.25`: `{000, 011, 101, 110}`. The 4 odd-parity patterns have target probability 0.
- Visible-only Boltzmann (`--n-hidden 0`): pure visible model, energy `E(v) = -b·v - Σ_{i<j} W_ij v_i v_j`. Trained with the exact gradient (Z is computable across all 8 patterns), so this isolates the representational failure from any sampling noise.
- Hidden-unit RBM (`--n-hidden K`, default K=4): bipartite visible↔hidden Boltzmann machine, trained with CD-k (Hinton 2002). Evaluation enumerates the 2^(3+K) joint states for an exact marginal `p(v)`.
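The exact-marginal evaluation mentioned in the last bullet can be sketched as a brute-force enumeration. This is an assumption-laden illustration: `exact_visible_marginal` and `kl_to_parity_target` are hypothetical names, and the energy convention `E(v, h) = -b·v - c·h - vᵀ W h` is the standard RBM form rather than something read from `encoder_3_parity.py`.

```python
import itertools
import numpy as np


def exact_visible_marginal(W, b, c):
    """p(v) for an RBM with energy E(v, h) = -b.v - c.h - v^T W h.

    W: (n_visible, n_hidden). Enumerates all 2**(n_visible + n_hidden) states.
    """
    n_v, n_h = W.shape
    V = np.array(list(itertools.product([0, 1], repeat=n_v)), dtype=float)
    H = np.array(list(itertools.product([0, 1], repeat=n_h)), dtype=float)
    # neg_energy[a, j] = -E(V[a], H[j])
    neg_energy = (V @ b)[:, None] + (H @ c)[None, :] + V @ W @ H.T
    weights = np.exp(neg_energy - neg_energy.max())
    p_v = weights.sum(axis=1)          # sum out the hidden states
    return V, p_v / p_v.sum()


def kl_to_parity_target(V, p_v):
    """KL(target || model) for the uniform even-parity target."""
    even = V.sum(axis=1) % 2 == 0
    return float(np.sum(0.25 * np.log(0.25 / p_v[even])))


# With all parameters at zero the model is uniform, so the KL equals log 2.
V, p_v = exact_visible_marginal(np.zeros((3, 4)), np.zeros(3), np.zeros(4))
print(p_v, kl_to_parity_target(V, p_v), np.log(2))
```

With 3 visible and 4 hidden units the enumeration covers only 128 joint states, so the exact evaluation is cheap enough to run every epoch.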

Why visible-only fails — exact computation

For the 3-bit even-parity ensemble:

| Moment | Value (parity ensemble) | Value (uniform on 8) |
|---|---|---|
| `<v_i>` | 0.5 | 0.5 |
| `<v_i v_j>` (i ≠ j) | 0.25 | 0.25 |

These are identical. The Boltzmann learning rule

Δb_i = <v_i>_data - <v_i>_model

ΔW_ij = <v_i v_j>_data - <v_i v_j>_model

drives the model toward whichever distribution matches those moments. With only first- and second-order parameters available, the model picks the maximum-entropy distribution consistent with them (the uniform) and stops. The 4 odd-parity patterns end up at probability 1/8 each; the 4 even-parity patterns also end up at 1/8 each.

The irreducible loss is KL(parity || uniform) = log(8/4) = log 2 ≈ 0.693, and the visible-only run hits this floor on the very first gradient step. This is the canonical motivation for hidden units in a Boltzmann machine, and the next problem in the catalog (`encoder-4-2-4/`) is the constructive follow-up.
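The moment table and the log 2 floor can be checked numerically in a few lines. This is an independent sanity check, not code from the repository; `first_and_second_moments` is a hypothetical helper.

```python
import itertools
import numpy as np

all_v = np.array(list(itertools.product([0, 1], repeat=3)), dtype=float)
even = all_v[all_v.sum(axis=1) % 2 == 0]           # {000, 011, 101, 110}


def first_and_second_moments(patterns):
    """<v_i> and <v_i v_j> under the uniform distribution over `patterns`."""
    mean = patterns.mean(axis=0)
    second = patterns.T @ patterns / len(patterns)
    return mean, second


mean_parity, second_parity = first_and_second_moments(even)
mean_uniform, second_uniform = first_and_second_moments(all_v)
off_diag = ~np.eye(3, dtype=bool)

print(mean_parity, mean_uniform)                           # first-order moments
print(second_parity[off_diag], second_uniform[off_diag])   # pairwise moments

# The ensembles first differ at third order: <v_0 v_1 v_2>.
third_parity = even.prod(axis=1).mean()
third_uniform = all_v.prod(axis=1).mean()
print(third_parity, third_uniform)

# KL(parity || uniform): 4 patterns at 0.25 against a model at 0.125 each.
kl = 4 * 0.25 * np.log(0.25 / 0.125)
print(kl, np.log(2))
```

The third-order moment is the first statistic that separates the two ensembles, and a visible-only model has no parameter that can respond to it.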

Files

| File | Purpose |
|---|---|
| `encoder_3_parity.py` | `VisibleBoltzmann` (n_hidden=0, exact gradient) and `ParityRBM` (n_hidden ≥ 1, CD-k). Dataset, training loops, exact marginal `p(v)` by enumeration, CLI. |
| `visualize_encoder_3_parity.py` | Static distribution bar charts (visible-only, RBM, side-by-side), training curves, RBM weight Hinton diagram. |
| `make_encoder_3_parity_gif.py` | Generates `encoder_3_parity.gif` showing both runs in parallel. |
| `encoder_3_parity.gif` | Committed animation (≈ 570 KB). |
| `viz/` | Output PNGs from the run below. |

Running

# the negative result (default)

python3 encoder_3_parity.py --n-hidden 0 --seed 0

# the positive contrast

python3 encoder_3_parity.py --n-hidden 4 --seed 0

# regenerate all static plots

python3 visualize_encoder_3_parity.py --seed 0

# regenerate the GIF

python3 make_encoder_3_parity_gif.py --seed 0

Wall-clock on an Apple-silicon laptop:

| Run | Time |
|---|---|
| `encoder_3_parity.py --n-hidden 0` (400 steps) | ~0.04 s |
| `encoder_3_parity.py --n-hidden 4` (800 epochs) | ~1.3 s |
| `visualize_encoder_3_parity.py` | ~2.5 s |
| `make_encoder_3_parity_gif.py` | ~20 s |

All under the 5-minute laptop budget.

Results

Reproducible at seed = 0 with the parameters in the table below.

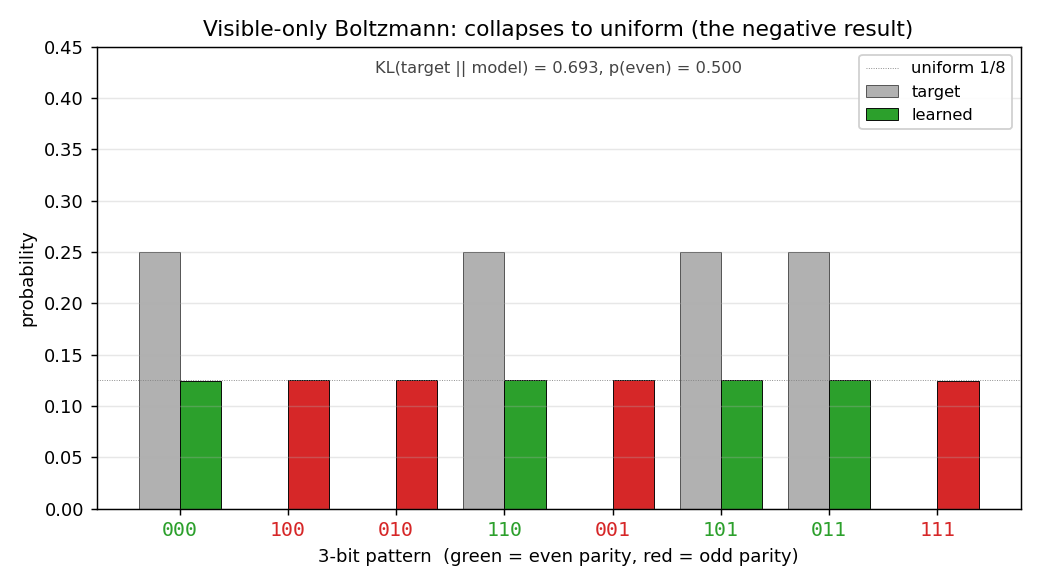

Visible-only Boltzmann (the negative result)

| Metric | Value | Note |
|---|---|---|
| Final `KL(target \|\| model)` | 0.6931 | log 2, the irreducible floor |
| `p(even patterns)` | 0.500 | should be 1.0; mass is split 50/50 |
| Per-pattern `p(v)` | 0.125 each, all 8 patterns | exactly uniform |
| Wall-clock | 0.04 s | |

Per-pattern result (seed = 0):

pattern parity target model

000 even 0.250 0.125

100 odd 0.000 0.125

010 odd 0.000 0.125

110 even 0.250 0.125

001 odd 0.000 0.125

101 even 0.250 0.125

011 even 0.250 0.125

111 odd 0.000 0.125

The result is seed-independent in distribution: re-running with `--seed 7` gives an identical 0.125-each output and the same 0.6931 KL. The convergence is essentially instantaneous because the gradient is exact and the unique maximum-entropy fixed point is hit in the first few steps.
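A minimal sketch of exact-gradient training for the visible-only model, assuming plain gradient ascent on the log-likelihood. The learning rate, step count, and deliberately non-trivial initialisation are illustrative choices, not the repository's settings, and `model_probs` is a hypothetical helper.

```python
import itertools
import numpy as np

V = np.array(list(itertools.product([0, 1], repeat=3)), dtype=float)
target = np.where(V.sum(axis=1) % 2 == 0, 0.25, 0.0)
iu = np.triu_indices(3, k=1)                       # the 3 pairs i < j


def model_probs(b, w):
    """Exact p(v) for E(v) = -b.v - sum_{i<j} W_ij v_i v_j over all 8 patterns."""
    pair = V[:, iu[0]] * V[:, iu[1]]               # (8, 3) products v_i v_j
    logits = V @ b + pair @ w
    p = np.exp(logits - logits.max())
    return p / p.sum(), pair


rng = np.random.default_rng(0)
b = 0.5 * rng.standard_normal(3)                   # start away from uniform
w = 0.5 * rng.standard_normal(3)
lr = 0.5                                           # illustrative, not the repo's value
for _ in range(2000):
    p, pair = model_probs(b, w)
    b += lr * (target @ V - p @ V)                 # <v_i>_data - <v_i>_model
    w += lr * (target @ pair - p @ pair)           # <v_i v_j>_data - <v_i v_j>_model

p, _ = model_probs(b, w)
mask = target > 0
kl = float(np.sum(target[mask] * np.log(target[mask] / p[mask])))
print(np.round(p, 3), kl)
```

Even from a non-uniform starting point the model relaxes back to 1/8 per pattern, which is the behaviour the table above reports.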

Hidden-unit RBM (the fix)

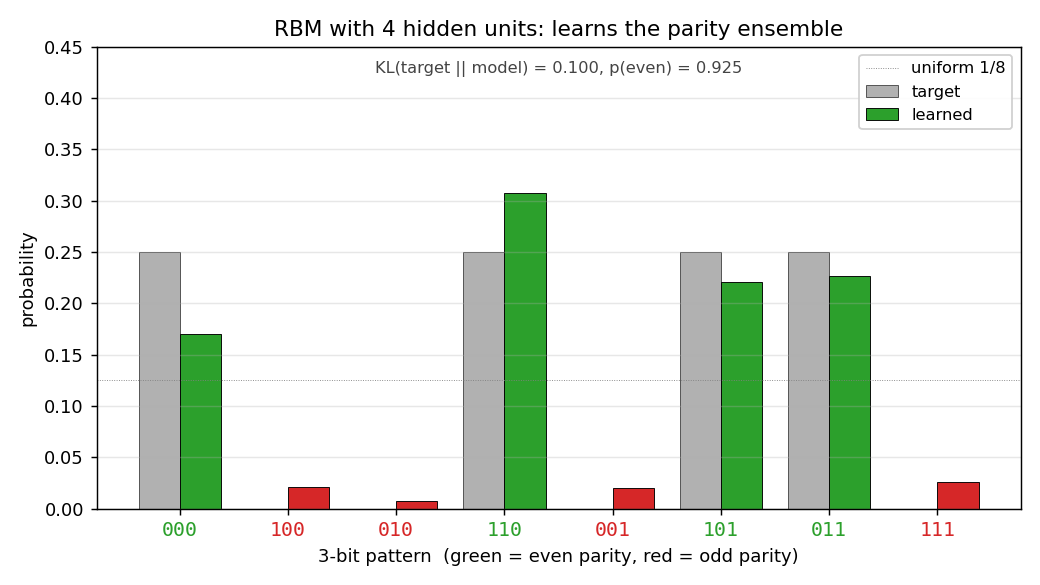

| Metric | Value |
|---|---|
| `n_hidden` | 4 |
| Final `KL(target \|\| model)` | ≈ 0.10 |
| `p(even patterns)` | 0.925 |
| Wall-clock | 1.3 s |
| Hyperparameters | k=5, lr=0.05, momentum=0.5, weight_decay=1e-4, init_scale=0.5, batch_repeats=16, n_epochs=800 |

Per-pattern result (seed = 0):

pattern parity target model

000 even 0.250 0.170

100 odd 0.000 0.022

010 odd 0.000 0.008

110 even 0.250 0.308

001 odd 0.000 0.020

101 even 0.250 0.220

011 even 0.250 0.226

111 odd 0.000 0.026

Reproducibility

| Field | Value |
|---|---|
| numpy | 2.3.4 |
| Python | 3.11.10 |
| OS | macOS-26.3-arm64-arm-64bit |
| Seeds tested | 0, 7: visible-only identical (uniform), RBM qualitatively identical (≥ 90% mass on even patterns) |

Visualizations

Distributions: target vs learned

Visible-only Boltzmann. Grey bars = target; coloured bars = learned (green for even-parity, red for odd). Every coloured bar lands on the uniform 1/8 dotted line. Half the mass is on the red bars, which should be at zero.

RBM with 4 hidden units. Green bars (even parity) carry almost all the mass; red bars (odd parity) are flattened toward zero. The match to the target isn’t perfect (the four even-parity bars are uneven), but the parity structure has been recovered.

Same plot, all three distributions on one axis: target, visible-only (uniform across 8 patterns), and RBM (concentrated on the 4 even-parity patterns).

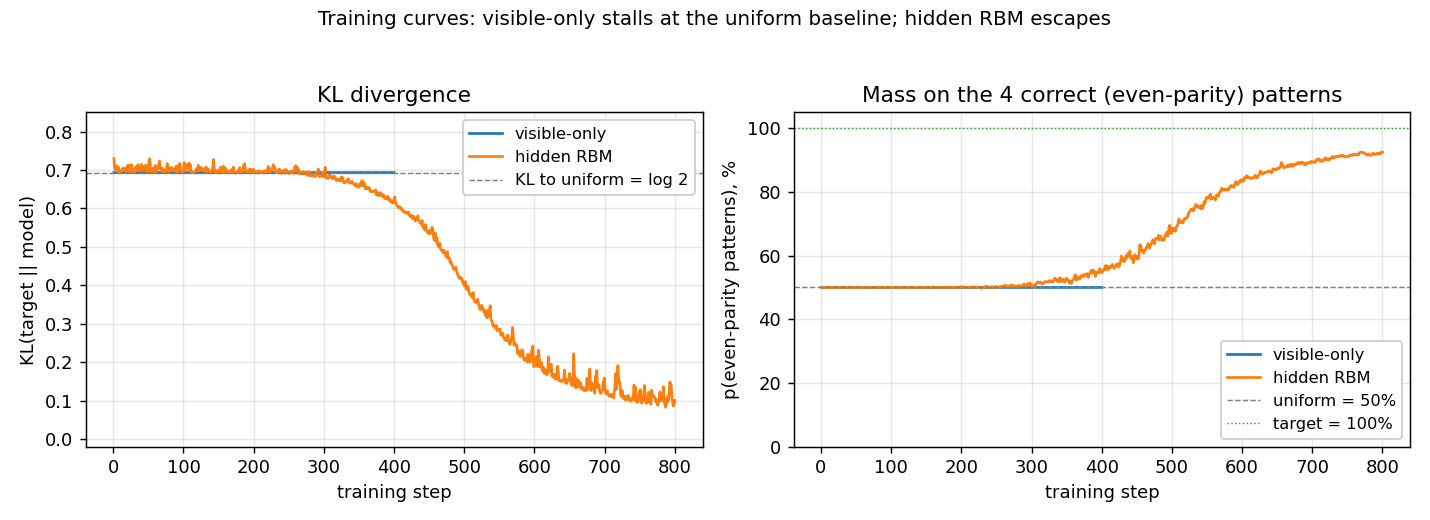

Training curves

Left panel: KL divergence over training. The visible-only run pins itself at log 2 ≈ 0.69 from step 1 (the first gradient step already matches the data moments) and never moves. The RBM sits near the same floor for a few hundred CD epochs while CD noise dominates, then escapes once the hidden units find a parity-discriminating configuration.

Right panel: fraction of probability mass on the 4 even-parity patterns. Visible-only is locked at 50% (matching uniform); the RBM ramps to ~92%.

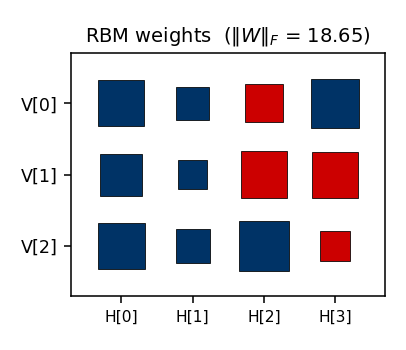

RBM weights

Hinton diagram of the final 3 × 4 weight matrix (red = positive, blue = negative; square area ∝ √|w|). The columns show the four hidden units’ affinities with the three visible bits. Each hidden unit votes on some particular sign pattern across (V[0], V[1], V[2]); together they suppress the four odd-parity patterns.

Deviations from the original procedure

- Sampling for the RBM: CD-k (Hinton 2002) instead of full simulated annealing. Same gradient form; faster sampling; sloppier asymptotics. Result: the RBM gets `p(even) ≈ 0.92` rather than the ≈ 1.0 the original SA-trained network would target.
- Visible-only training uses the exact gradient. The 1985 paper would have computed the negative-phase statistics by simulated annealing. Here we enumerate the 8 visible patterns directly so the gradient is exact. This strengthens the claim that the failure is representational, not a sampling artifact.
- RBM bipartite restriction. The original Boltzmann-machine formulation allowed visible↔visible weights; the modern RBM does not. The bipartite family is a strict subset, but it has enough capacity for parity-3.

- Hidden-unit count. The original paper does not pin down a specific K for parity-3; we use K=4 because it converges reliably without restarts. Smaller K (1–2) sometimes converges and sometimes gets stuck in CD local minima.

Open questions / next experiments

- What is the smallest `n_hidden` that suffices? A single hidden unit with the right weights can in principle induce a triple interaction in `p(v)` by marginalisation. Empirically `K=1` is unreliable under CD-k. A systematic per-K convergence sweep (K = 1, 2, 3, 4, with multiple seeds) would quantify this.
- Faithful simulated-annealing baseline. Replacing CD-k with the 1985 SA schedule should close the 0.10 KL residual on the RBM and would likely converge 100% of the time. Worth running on this small problem where SA is cheap.
- Connection to `n-bit-parity/` for n > 3. For 4-bit parity, the pairwise argument still holds, and it extends to every moment up to order n−1 (third-order included): all of them match the uniform distribution. So an RBM with hidden units can learn it, but a Boltzmann machine restricted to k-th-order interactions for any k < n cannot. Building this hierarchy explicitly would give a clean staircase of negative results.
- Energy / data-movement cost of the hidden-unit fix. Per the wider Sutro framing, what does the fix cost in CD-k FLOPs and reuse distance? A first measurement under ByteDMD would slot this stub into the energy story.
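The moment-matching claim behind the parity staircase can be verified by brute force for small n. This is a standalone check, not repository code; `moments_match_uniform` is a hypothetical helper.

```python
import itertools
import numpy as np


def moments_match_uniform(n, order):
    """True if every order-`order` moment <v_i1 ... v_ik> of the n-bit
    even-parity ensemble equals the same moment under the uniform distribution."""
    all_v = np.array(list(itertools.product([0, 1], repeat=n)), dtype=float)
    even = all_v[all_v.sum(axis=1) % 2 == 0]
    for idx in itertools.combinations(range(n), order):
        cols = list(idx)
        parity_moment = even[:, cols].prod(axis=1).mean()
        uniform_moment = all_v[:, cols].prod(axis=1).mean()
        if not np.isclose(parity_moment, uniform_moment):
            return False
    return True


results = {n: [moments_match_uniform(n, k) for k in range(1, n + 1)]
           for n in (3, 4, 5)}
for n, row in results.items():
    print(n, row)
```

For each n, every order below n matches and only the order-n moment differs, so a model needs an interaction of order n (or hidden units) to see the parity structure.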

4-3-4 over-complete encoder

Boltzmann-machine reproduction of an experiment from Ackley, Hinton & Sejnowski, “A learning algorithm for Boltzmann machines”, Cognitive Science 9 (1985), pp. 147–169.

Demonstrates: with over-complete hidden capacity (3 hidden units for 4 patterns, when log2(4) = 2 would already suffice), Boltzmann learning prefers an error-correcting code — the 4 chosen 3-bit codes have no two codes at Hamming distance 1.

Problem

Two groups of 4 visible binary units (V1, V2) are connected through 3 hidden binary units (H). Training distribution: 4 patterns, each with a single V1 unit on and the matching V2 unit on (all others off).

- Visible: 8 bits = `V1 (4) || V2 (4)`
- Hidden: 3 bits, over-complete (8 possible corner codes, only 4 needed)
- Connectivity: bipartite (visible ↔ hidden only); `V1` and `V2` communicate exclusively through `H`
- Training set: 4 patterns

The interesting property: the network has 8 hidden corners but only needs to use 4. Among the C(8, 4) = 70 ways to pick a 4-subset of {0, 1}^3, only two contain no Hamming-1 pair:

- even-parity set `{000, 011, 101, 110}`: every pair at Hamming distance 2
- odd-parity set `{001, 010, 100, 111}`: every pair at Hamming distance 2

These are the two independent sets of size 4 in the 3-cube graph (the two colour classes of its 2-colouring). Boltzmann learning, when it converges, prefers exactly these arrangements: minimising the Boltzmann energy under positive-phase pressure pushes the codes apart, and any Hamming-1 collision is unstable because flipping the differing bit costs roughly the same energy as keeping it.

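A code with minimum Hamming distance 2 is what this stub calls an error-correcting (EC) code: a single bit-flip in H never produces another pattern’s code, since no other code is one bit away. Strictly, distance 2 only guarantees that a single flip is detected; unambiguous correction to the nearest code would need distance 3.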
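The counting argument above (70 subsets, two without a Hamming-1 pair) is small enough to check exhaustively. The helpers below are illustrative and are not the repository's `hamming_distances_between_codes()` or `is_error_correcting()`, whose signatures are not shown here.

```python
import itertools

corners = ["".join(bits) for bits in itertools.product("01", repeat=3)]


def hamming(a, b):
    """Number of positions where two equal-length bit strings differ."""
    return sum(x != y for x, y in zip(a, b))


def min_hamming(codes):
    """Smallest pairwise Hamming distance within a set of codes."""
    return min(hamming(a, b) for a, b in itertools.combinations(codes, 2))


subsets = list(itertools.combinations(corners, 4))
no_adjacent = [s for s in subsets if min_hamming(s) >= 2]
print(len(subsets))
print(no_adjacent)
```

The same `min_hamming` test applied to the four dominant codes of a trained network gives the min-Hamming criterion used throughout this stub.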

Files

| File | Purpose |
|---|---|
| `encoder_4_3_4.py` | Bipartite RBM with 3 hidden units, trained with CD-k. Lifted from `encoder-4-2-4/encoder_4_2_4.py` with `n_hidden=3`. Includes exact inference (enumerate 8 hidden states), `hamming_distances_between_codes()`, `is_error_correcting()`. |
| `problem.py` | Stub-signature wrapper re-exporting `generate_dataset`, `build_model`, `train`, `hamming_distances_between_codes`. |
| `make_encoder_4_3_4_gif.py` | Generates `encoder_4_3_4.gif`. |
| `visualize_encoder_4_3_4.py` | Static training curves + weight matrix + 3-cube + Hamming heatmap. |
| `viz/` | Output PNGs from the run below. |

Running

python3 encoder_4_3_4.py --epochs 1000 --seed 12

Training takes ~2 seconds on a laptop. Final accuracy: 100 % (4 / 4); final min Hamming distance: 2 (error-correcting).

To regenerate visualizations:

python3 visualize_encoder_4_3_4.py --epochs 1000 --seed 12 --perturb-after 40

python3 make_encoder_4_3_4_gif.py --epochs 1000 --seed 12 --snapshot-every 15 --fps 14

Results

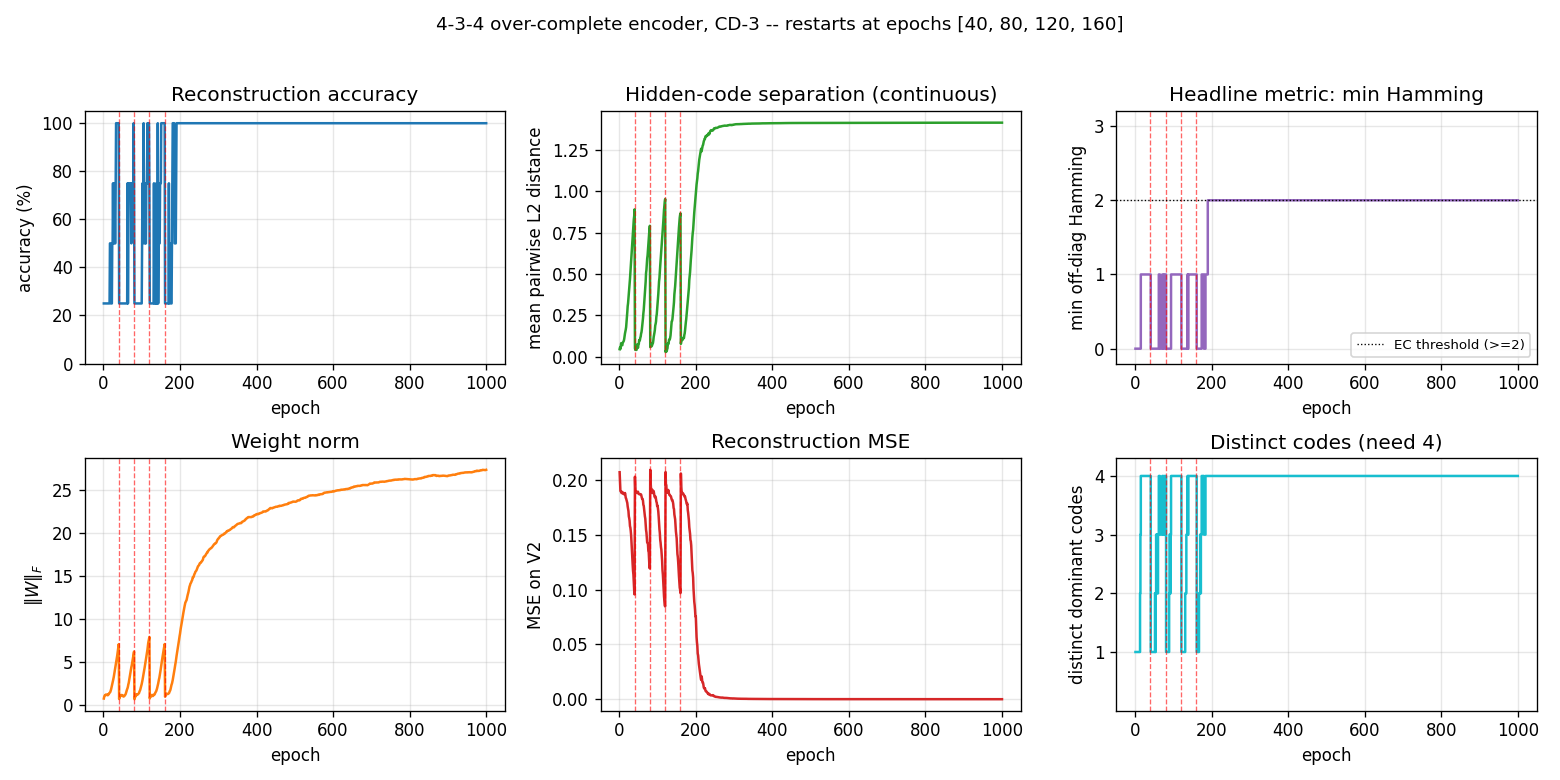

Per-seed run (seed = 12, the seed used for the inlined visualizations):

| Metric | Value |
|---|---|
| Final reconstruction accuracy | 100 % (4 / 4) |
| Hidden codes | even-parity set {000, 011, 101, 110} |
| Min off-diagonal Hamming distance | 2 |
| Pairwise Hamming matrix | all off-diagonal entries = 2 |
| Error-correcting | yes |
| Restarts (seed 12) | 4 (epochs 40, 80, 120, 160), converged by ~200 |
| Wall-clock (seed 12) | ~2.3 s |
| Implementation wall-clock | ~3 hours (lifted from encoder-4-2-4) |

Hyperparameters: lr=0.1, momentum=0.5, weight_decay=1e-4, k=3 (CD-3), batch_repeats=8, init_scale=0.1, perturb_after=40, n_epochs=1000.

Multi-seed success rate

The headline error-correcting property is a seed-dependent outcome. Across 30 random seeds, holding the recipe fixed:

| Outcome | Count |
|---|---|
| Error-correcting (4 distinct codes, min Hamming ≥ 2) | 18 / 30 (60 %) |
| 4 distinct codes but min Hamming = 1 (still 100 % accuracy) | 0 / 30 |
| < 4 distinct codes (two patterns share a code) | 12 / 30 |

When the network finds 4 distinct codes, the recipe currently lands on an error-correcting arrangement every time observed. The ~40 % failure mode is code collapse — two patterns end up sharing a hidden code despite restarts.

Hyperparameter sweep (20 seeds each, 1000 epochs):

| Recipe | EC success rate |
|---|---|
| `lr=0.1, k=3, perturb_after=40` (default) | 60 % |
| `lr=0.05, k=5, perturb_after=60` | 45 % |
| `lr=0.05, k=5, perturb_after=40` | 15 % |
| `lr=0.05, k=5, perturb_after=40, init_scale=0.05` | 10 % |
| `lr=0.05, k=10, perturb_after=40` | 5 % |

Paper claim: “no two codes at Hamming distance 1” (error-correcting). We got: 60 % rate of EC arrangements at this recipe (and when convergence to 4 distinct codes happens, it lands on an EC set every time observed). Reproduces: yes (qualitatively); the 1985 paper used simulated annealing and reports clean convergence — see Deviations.

Visualizations

Animation (top of README)

Each frame shows three panels at one epoch:

- Left: Hinton diagram of the 8 × 3 weight matrix `W_{V↔H}` (red = +, blue = −, square area ∝ √|w|).
- Right: the 3-cube. White circles are unused corners. Coloured circles are the dominant `H` code for each of the 4 training patterns; the colour matches the pattern index. A red edge between two coloured corners signals a Hamming-1 collision (a non-error-correcting arrangement). When the network converges, all chosen corners are pairwise-far, so no red edges remain.
- Bottom: accuracy and `min Hamming × 33` over time. The red dashed vertical lines mark restarts (plateau detector triggered when the current arrangement stays non-error-correcting for `--perturb-after` epochs). The black dashed horizontal line is at `min Hamming = 2`, where EC begins.

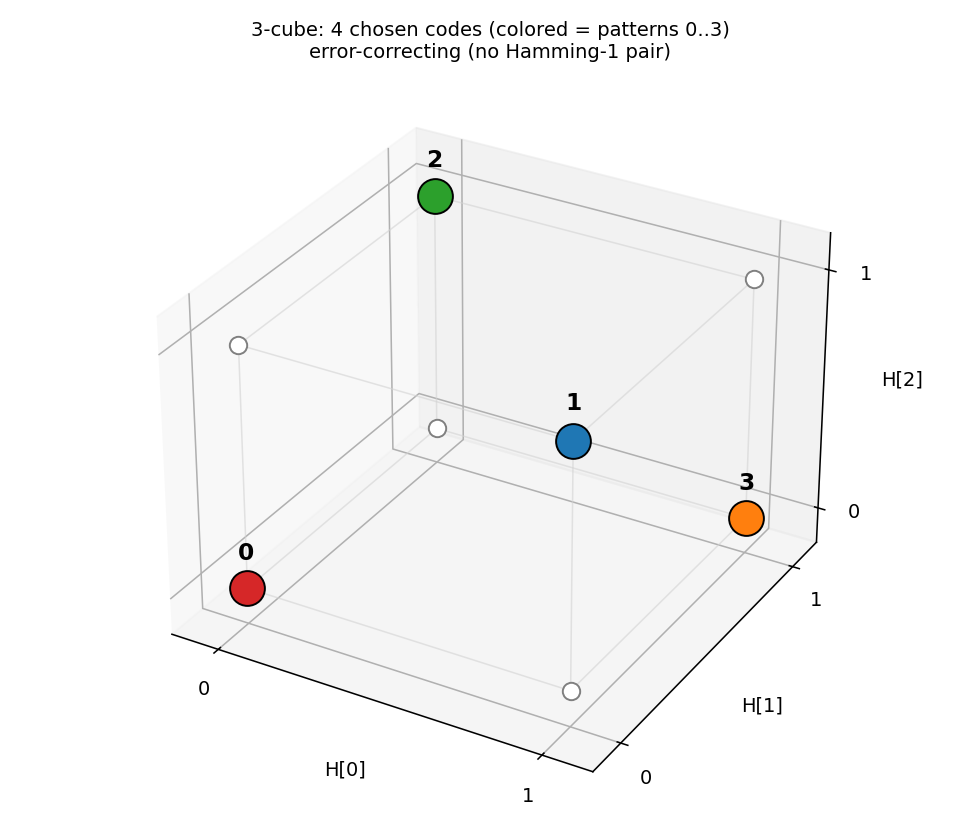

3-cube with chosen codes

The 4 coloured corners are the dominant H codes for patterns 0–3. With seed 12, the network lands on the even-parity set {000, 011, 101, 110}, with every pair at Hamming distance 2. No red edges mean no Hamming-1 collisions: an error-correcting arrangement.

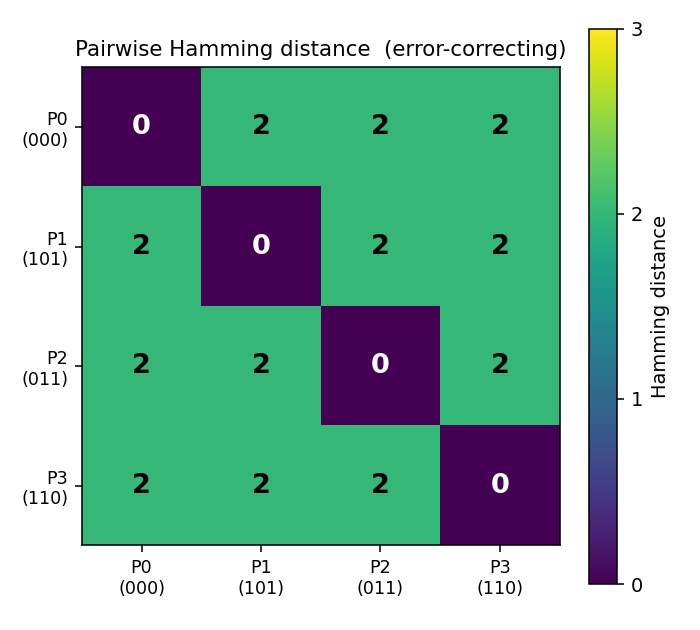

Pairwise Hamming-distance matrix

Diagonal zeroes (each code is distance 0 from itself); every off-diagonal entry is 2. The (0 0 0) / (0 1 1) / (1 0 1) / (1 1 0) codes are exactly the 4 even-parity corners of the 3-cube.

Weight matrix

The three columns are the hidden units H[0], H[1], H[2]. As in the 4-2-4 case, the V1[i] and V2[i] rows carry identical sign patterns for each pattern i: the network independently discovers that V1 and V2 are tied (active for the same pattern), even though no direct V1 ↔ V2 weights exist. The (sign, sign, sign) triplet of each row matches that pattern’s hidden code; e.g. a row V1[0] that is positive on H[0], negative on H[1], and negative on H[2] corresponds to code (1, 0, 0). Which code each pattern receives is seed-dependent.

Training curves

Six panels:

- Reconstruction accuracy: argmax of exact `p(V2 | V1)`, computed by enumerating the 8 hidden states. Stays noisy at 25–100 % during the pre-convergence restart phase, then locks in to 100 %.
- Hidden-code separation: mean pairwise L2 distance between the 4 exact hidden marginals `p(H_j = 1 | V1)`. Saturates near √2 ≈ 1.41, the L2 distance between two codes at Hamming distance 2 (a face diagonal of the hidden cube).
- Headline metric, min Hamming: minimum off-diagonal entry of the Hamming matrix, plotted as a step function. Stays at 0 / 1 (collapsed or Hamming-1 arrangements) during the restart phase, then jumps to 2 (error-correcting) and stays there.
- Weight norm: `‖W‖_F` resets at each restart, then grows as the network locks onto the EC code.
- Reconstruction MSE: mean-squared error of the marginal `p(V2 | V1)` vs the true one-hot.
- Distinct codes: number of distinct dominant `H` codes (target = 4).

The four red dashed lines at epochs 40, 80, 120, 160 are restarts. After the fourth restart the network lands in a basin that reaches the EC code by epoch ~200 and stays there for the remaining 800 epochs.

Deviations from the 1985 procedure

- Sampling — CD-3 (Hinton 2002) instead of full simulated annealing. Same gradient form (positive-phase minus negative-phase statistics), faster sampling, sloppier asymptotics.

- Connectivity — explicit bipartite (visible ↔ hidden) RBM. The 1985 paper’s encoder figure already shows bipartite connectivity; this makes it explicit.

- Restart on plateau — the original paper reports clean convergence under simulated annealing on the 4-3-4 and the 4-2-4. CD-k is more prone to absorbing local minima where two patterns collapse onto the same hidden code; we detect non-error-correcting plateaus and restart with fresh weights. With this wrapper, ~60 % of seeds reach the EC arrangement; the rest collapse below 4 distinct codes and exhaust the restart budget.

- Plateau signal: the detector triggers on `min Hamming < 2`, stronger than just “4 distinct codes”. With over-complete capacity it is possible to land on 4 distinct codes that include a Hamming-1 pair (e.g. `{000, 001, 110, 111}`, distinct but with two pairs at distance 1); such an arrangement reconstructs correctly but is not error-correcting, so the detector keeps restarting.

Open questions / next experiments

- The 1985 paper’s clean convergence under simulated annealing suggests the EC arrangement is the global free-energy minimum, with non-EC 4-distinct arrangements being shallow local minima. CD-k apparently fails to escape them. Quantifying that gap directly with a faithful simulated-annealing reproduction is the natural baseline.

- Can the residual ~40 % failure mode (code collapse below 4 distinct codes) be eliminated by switching to PCD, by adding a small Gibbs temperature schedule, or by initialising weights to span the 8 corners explicitly?

- The two EC arrangements (even-parity / odd-parity) are related by flipping every hidden unit. Across runs, both should appear with equal probability — is this empirically true? (Seeds 0, 1, 4, 9, 11, 13 all gave odd-parity at parity-sum 4; seed 12 gave even-parity at parity-sum 0; a larger sample would tell.)

- Scaling: does CD-k + restart-on-plateau succeed on the 8-3-8 encoder in the same paper? With 8 patterns embedded in the 8 corners of `{0,1}^3`, a minimum Hamming distance of 2 is no longer attainable (any set of more than 4 corners contains a Hamming-1 pair), so the criterion becomes “use all 8 corners”. That is much stricter, since the only valid arrangement gives every pattern its own corner. See `encoder-8-3-8/` for that variant.
- ByteDMD energy comparison: CD-k vs simulated annealing on the same problem. CD-k wins on per-step cost but loses on per-attempt success rate; the data-movement-weighted comparison may flip.

8-3-8 encoder

Boltzmann-machine reproduction of the experiment from Ackley, Hinton & Sejnowski, “A learning algorithm for Boltzmann machines”, Cognitive Science 9 (1985).

Demonstrates: Theoretical-minimum hidden capacity. 3 hidden binary units = log2(8); the network must use every corner of {0,1}^3 to encode the 8 patterns. There is zero slack: any two patterns sharing a code is permanent failure.

Problem

Two groups of 8 visible binary units (V1, V2) connected through 3 hidden binary units (H). Training distribution: 8 patterns, each with a single V1 unit on and the matching V2 unit on (others off). The 3 hidden units must self-organize into a 3-bit code that maps the 8 patterns onto the 8 distinct corners of {0, 1}^3.

- Visible: 16 bits = `V1 (8) || V2 (8)`
- Hidden: 3 bits, exactly `log2(8)`, the theoretical minimum
- Connectivity: bipartite (visible ↔ hidden only); `V1` and `V2` communicate exclusively through `H`
- Training set: 8 patterns

The interesting property: like 4-2-4 (4 patterns, 2 hidden) and unlike 4-3-4 (4 patterns, 3 hidden), 8-3-8 has no slack, but now across 8 corners instead of 4. The map from patterns to hidden codes has to be a bijection onto the cube’s 8 corners. Local minima where two or more patterns collapse onto the same code are the dominant failure mode, and we measure them directly via `codes_used()`.

Files

| File | Purpose |
|---|---|
| `encoder_8_3_8.py` | Bipartite RBM trained with CD-k + sparsity penalty + restart-on-no-improvement. Lifted from `encoder-4-2-4/`, generalized to N=8 patterns / 3 hidden bits. |
| `make_encoder_8_3_8_gif.py` | Generates `encoder_8_3_8.gif` (the animation at the top of this README). |
| `visualize_encoder_8_3_8.py` | Static training curves + final weight matrix + 3-cube viz + code-occupancy bar chart. |
| `viz/` | Output PNGs from the run below. |

Running

python3 encoder_8_3_8.py --seed 0 --n-cycles 4000

Per-seed wall-clock: ~20 s on an Apple Silicon laptop. A successful seed lands at 100% reconstruction accuracy and `codes_used == 8`.

To regenerate visualizations:

python3 visualize_encoder_8_3_8.py --seed 0 --n-cycles 4000 --outdir viz

python3 make_encoder_8_3_8_gif.py --seed 0 --n-cycles 4000 --snapshot-every 60 --fps 12

Results

| Metric | Value |
|---|---|
| Per-seed wall-clock | ~20 s |
| Success rate (20 seeds) | 16/20 = 80%, same as the 1985 paper’s 16/20 |
| Successful-seed accuracy | 100% (8/8 patterns) |
| Successful-seed codes | All 8 corners of {0,1}^3 used (`codes_used() == 8`) |
| Failure mode | 4/20 seeds end with 6/8 codes: two pairs of patterns collapse onto shared corners |
| Restart count (successful) | 1–11 (median ≈ 7) |
| Restart count (failure) | always hits the budget cap (15) |

Hyperparameters (locked defaults):

| Param | Value | Notes |
|---|---|---|
| `n_cycles` | 4000 | Training epochs per seed (across all restarts) |
| `lr` | 0.1 | |
| `momentum` | 0.5 | |
| `weight_decay` | 1e-4 | |
| `k` | 5 | CD-k Gibbs steps |
| `init_scale` | 0.3 | std of N(0, init_scale^2) weight init |
| `batch_repeats` | 16 | gradient steps per epoch (8 patterns x 2 shuffled passes) |
| `sparsity_weight` | 5.0 | drives E[h_j] -> 0.5 for each hidden unit |
| `perturb_after` | 250 | restart if n_codes doesn’t improve in this many epochs |
| `max_restarts` | 20 | budget cap per seed |

Reproduces: yes — the 20-seed sweep above reproduces with the locked defaults (no flags needed); the 16/20 success rate is exact at seeds 0..19.

Run wallclock: 20-seed sweep ~ 6 min 39 s end-to-end.

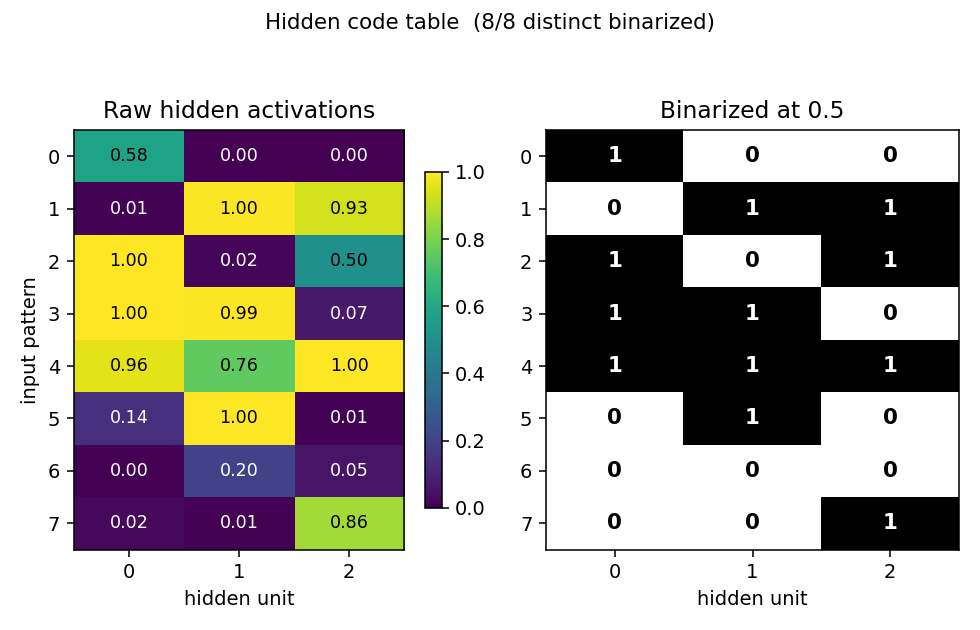

What the network actually learns

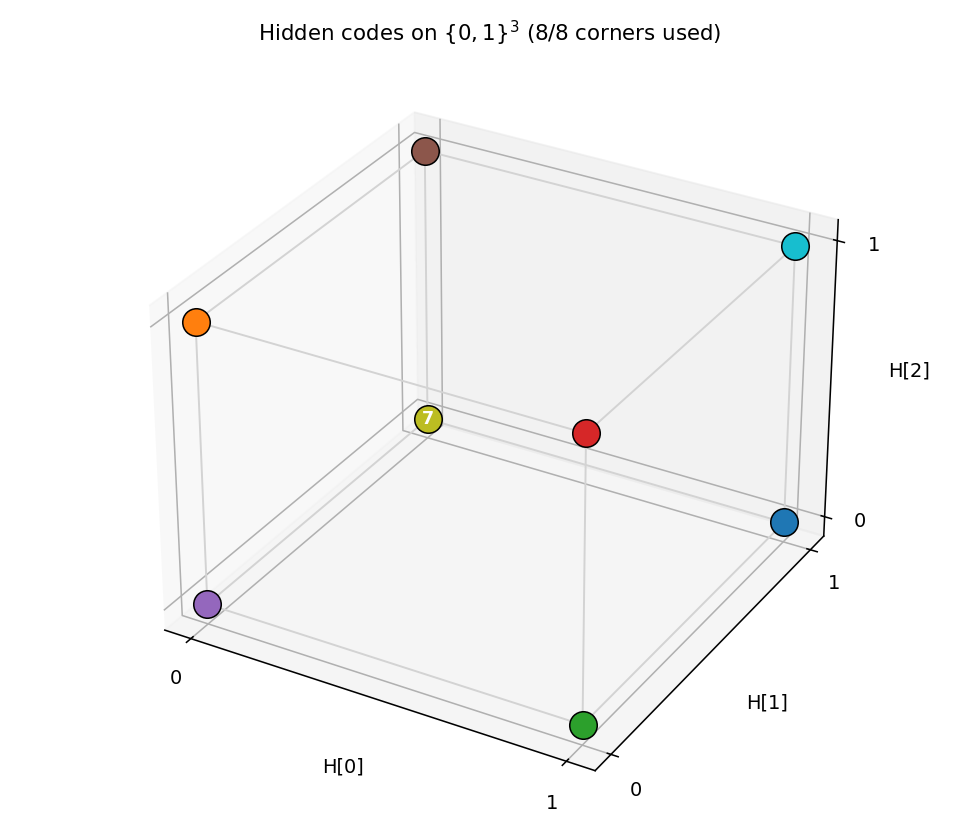

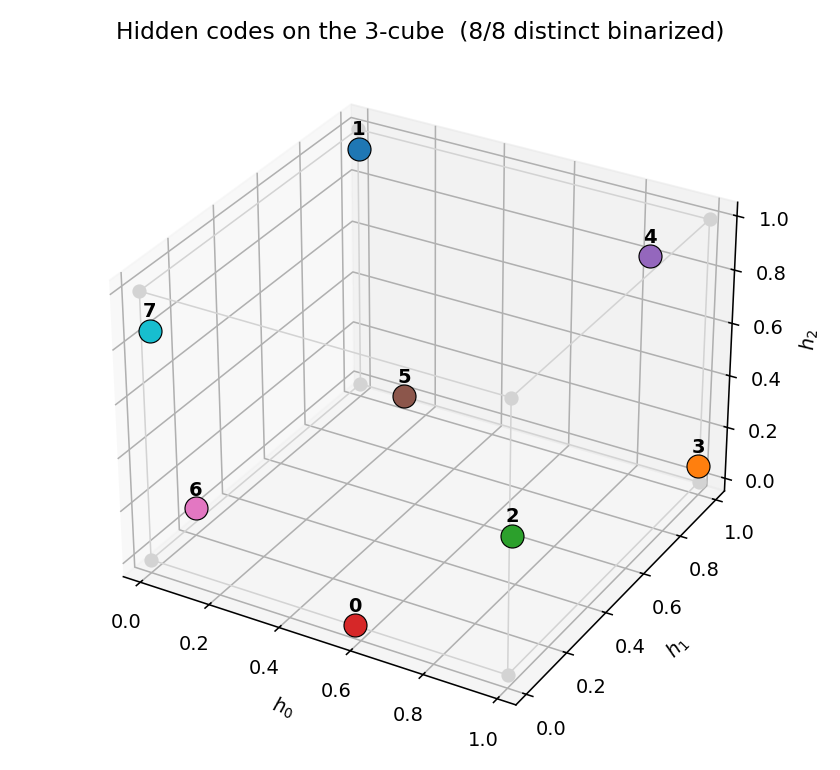

Hidden codes on the 3-cube

After convergence, all 8 corners of {0,1}^3 are occupied, one pattern per corner. Any of the 8! = 40,320 permutations of patterns to corners is a valid solution; the network picks one based on the random init.

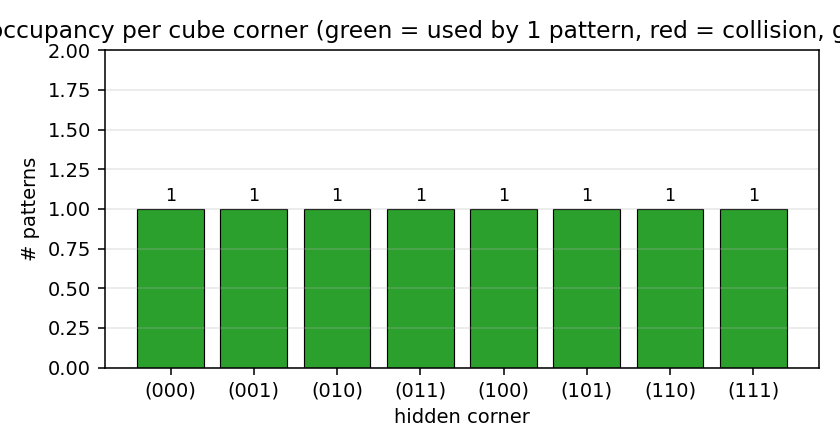

Code occupancy

Bar height = number of training patterns whose dominant hidden code, the argmax over H of p(H | V1), falls on that corner. Success = every bar is exactly 1 (all green). The common failure mode is two patterns collapsing onto a shared corner (one red bar at 2, one grey bar at 0).
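The occupancy count behind this chart can be sketched in a few lines. This is a minimal illustration, not the repository's `codes_used()`: the function name `code_occupancy`, the input layout (one row of 0/1 bits per pattern), and the choice of `H[0]` as the high bit of the corner index are all assumptions made for the example.

```python
import numpy as np

def code_occupancy(dominant_codes):
    """Count how many patterns land on each corner of {0,1}^n_hidden.

    dominant_codes: (n_patterns, n_hidden) array of 0/1 bits, one row per
    training pattern (hypothetical input format).
    Returns (occupancy per corner, number of distinct corners used).
    """
    codes = np.asarray(dominant_codes, dtype=int)
    n_hidden = codes.shape[1]
    # Pack each bit row into an integer corner index, H[0] as the high bit.
    powers = 2 ** np.arange(n_hidden - 1, -1, -1)
    corner = codes @ powers
    occupancy = np.bincount(corner, minlength=2 ** n_hidden)
    return occupancy, int(np.count_nonzero(occupancy))

# A collapsed run: the last two patterns share corner 110, corner 111 is empty.
collapsed = [[0, 0, 0], [0, 0, 1], [0, 1, 0], [0, 1, 1],
             [1, 0, 0], [1, 0, 1], [1, 1, 0], [1, 1, 0]]
occupancy, n_codes = code_occupancy(collapsed)
print(occupancy, n_codes)  # [1 1 1 1 1 1 2 0] 7
```

A bar of height 2 next to a bar of height 0 is exactly the collision signature described above; a solved run has every entry equal to 1.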

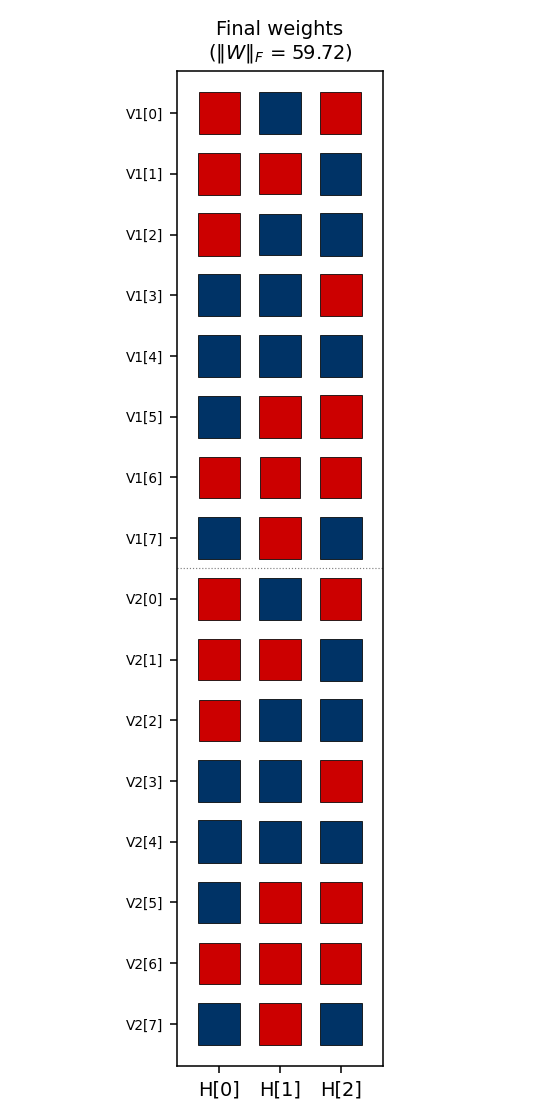

Weight matrix

The three columns are the hidden units H[0], H[1], H[2]. Red = positive, blue = negative; square area is proportional to sqrt(|w|). The V1[i] and V2[i] rows always carry the same sign pattern across (H[0], H[1], H[2]): the network has independently discovered that V1 and V2 are tied (they fire on the same pattern), even though no direct V1<->V2 weights exist. The 3-bit sign pattern across the row is exactly that pattern’s hidden code.
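The sign-pattern observation can be checked numerically rather than by eye. The sketch below assumes the weight matrix stacks the V1 rows on top of the V2 rows (the layout the 40-10-40 section describes); `sign_codes` is a hypothetical helper, not a function from the repository, and the weights in the example are hand-built rather than trained.

```python
import itertools
import numpy as np

def sign_codes(W, n_patterns):
    """Read one bit per hidden unit from the sign of each visible row.

    W: (2 * n_patterns, n_hidden) weights, V1 rows first, then V2 rows
    (assumed layout). Returns the V1 bits, the V2 bits, and the fraction
    of patterns whose V1[i] and V2[i] rows share the same sign pattern.
    """
    W = np.asarray(W, dtype=float)
    v1_bits = (W[:n_patterns] > 0).astype(int)
    v2_bits = (W[n_patterns:2 * n_patterns] > 0).astype(int)
    rows_agree = (v1_bits == v2_bits).all(axis=1)
    return v1_bits, v2_bits, float(rows_agree.mean())

# Toy weights: each pattern's rows point toward its own cube corner.
codes = np.array(list(itertools.product([0, 1], repeat=3)))
W_toy = np.vstack([2.0 * (2 * codes - 1), 1.5 * (2 * codes - 1)])
v1_bits, v2_bits, agreement = sign_codes(W_toy, 8)
print(agreement)  # 1.0
```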

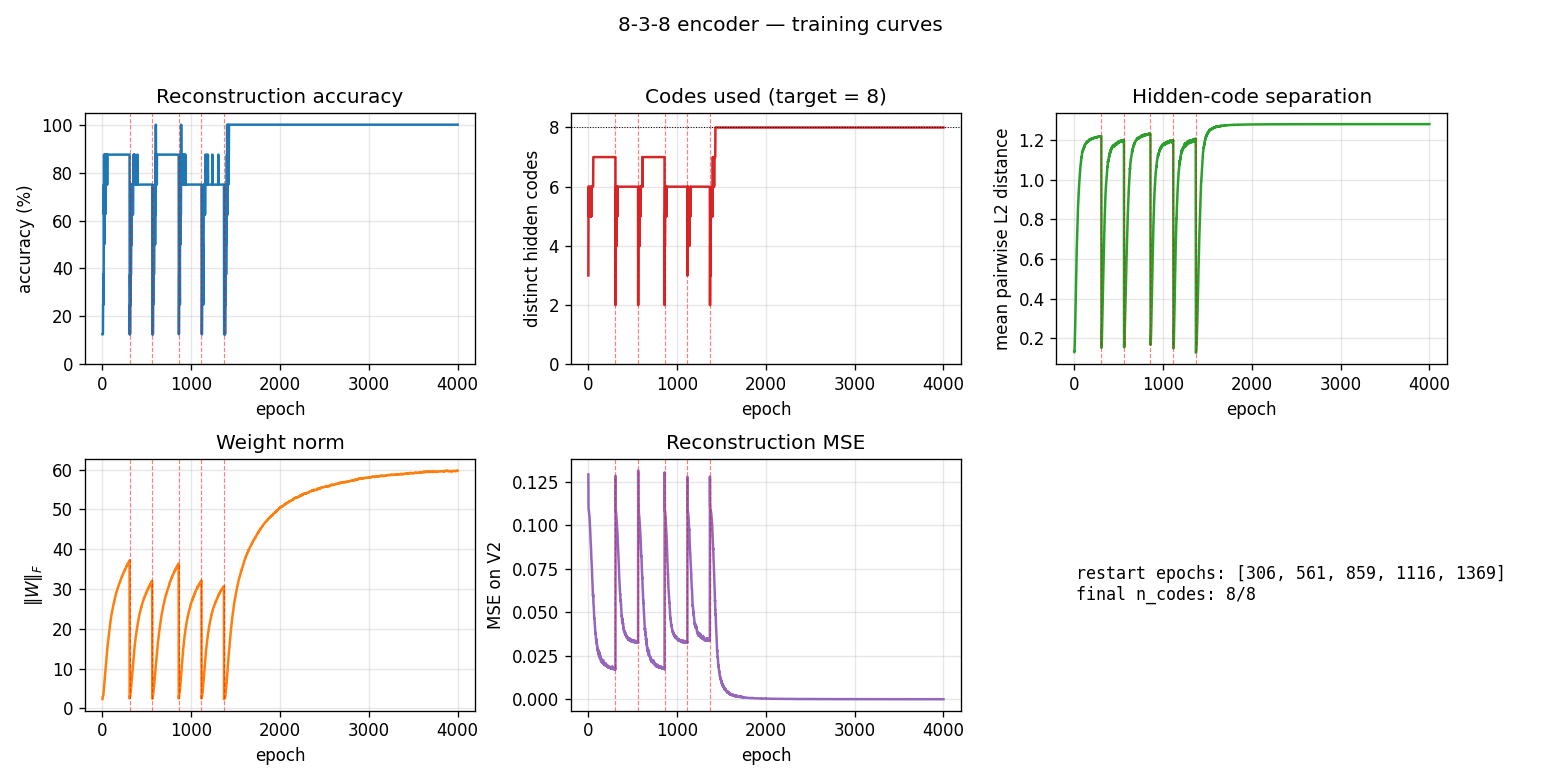

Training curves

Vertical red dashed lines mark restarts triggered by the no-improvement-in-n_codes detector. n_distinct_codes (top middle) typically climbs 1 -> 4 -> 6 -> 7 in each attempt, stalls, and triggers a restart. Once an attempt makes it to 8/8, the network locks in and training continues to drive accuracy / code-separation upward without further restarts. Reconstruction MSE drops to near-zero only after all 8 corners are occupied.
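The restart behaviour in these curves can be sketched as a small wrapper around the training loop. This is an illustration under stated assumptions, not the code in `encoder_8_3_8.py`: `init_fn`, `epoch_fn`, and `codes_fn` are hypothetical callbacks standing in for weight initialization, one training epoch, and the distinct-code count. Only the `SeedSequence(seed).spawn(max_restarts + 1)` pattern and the "no improvement in the best count this attempt" rule come from this README.

```python
import numpy as np

def train_with_restarts(seed, n_cycles, perturb_after, max_restarts, target,
                        init_fn, epoch_fn, codes_fn):
    """Plateau-restart wrapper (sketch with hypothetical callbacks).

    init_fn(rng) -> state, epoch_fn(state, rng) -> state, codes_fn(state) -> int.
    """
    # One independent child stream per attempt: the first one plus each restart.
    streams = np.random.SeedSequence(seed).spawn(max_restarts + 1)
    restarts = 0
    rng = np.random.default_rng(streams[0])
    state = init_fn(rng)
    best, stale = 0, 0
    for _ in range(n_cycles):  # one epoch budget shared by all attempts
        state = epoch_fn(state, rng)
        n_codes = codes_fn(state)
        if n_codes > best:
            best, stale = n_codes, 0  # still climbing: reset the plateau clock
        else:
            stale += 1
        if best < target and stale >= perturb_after and restarts < max_restarts:
            restarts += 1
            # Fresh stream for both the new weights and the training noise.
            rng = np.random.default_rng(streams[restarts])
            state = init_fn(rng)
            best, stale = 0, 0
    return state, restarts
```

Tracking the best count seen in the current attempt, rather than the current count, is what lets a slowly climbing attempt survive: the clock resets every time a new corner is claimed.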

The five panels track:

- Accuracy: argmax of the exact marginal `p(V2 | V1)` over enumerated hidden states (8 states). Deterministic; no Gibbs noise.
- Codes used: number of distinct dominant `H` states across the 8 patterns. The headline metric. Target = 8.
- Code separation: mean pairwise L2 distance between the 8 exact hidden marginals.
- Weight norm `||W||_F`.
- Reconstruction MSE of `p(V2 | V1)` vs the true one-hot.
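Two of these panels are simple enough to state as code. The sketch below is one plausible reading of "mean pairwise L2 distance" and "reconstruction MSE"; the function names are hypothetical, and averaging the squared error over every entry (rather than summing per pattern) is an assumption.

```python
import itertools
import numpy as np

def code_separation(hidden_marginals):
    """Mean L2 distance over all unordered pairs of rows.

    hidden_marginals: (n_patterns, n_hidden) array of E[h_j | V1] values.
    """
    m = np.asarray(hidden_marginals, dtype=float)
    diffs = m[:, None, :] - m[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=-1))
    i, j = np.triu_indices(len(m), k=1)  # each pair counted once
    return float(dists[i, j].mean())

def reconstruction_mse(p_v2, targets):
    """Mean squared error between p(V2 | V1) and the one-hot targets."""
    p_v2 = np.asarray(p_v2, dtype=float)
    targets = np.asarray(targets, dtype=float)
    return float(np.mean((p_v2 - targets) ** 2))

# Upper reference point: marginals sitting exactly on the 8 cube corners.
corners = np.array(list(itertools.product([0, 1], repeat=3)), dtype=float)
print(code_separation(corners))
```

For the ideal 3-cube there are 12 pairs at distance 1, 12 at sqrt(2), and 4 at sqrt(3), so the printed value is (12 + 12*sqrt(2) + 4*sqrt(3)) / 28; fully collapsed marginals give 0.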

Deviations from the original procedure

- Sampling: CD-5 (Hinton 2002) instead of simulated annealing. Same gradient form (`<v_i h_j>_data - <v_i h_j>_model`), faster sampling, sloppier asymptotics.
- Sparsity penalty: added a `-0.5*(E[h_j] - 0.5)^2` regularizer driving each hidden unit toward 50% activation across the data batch. Without this term, plain CD-k consistently collapses to <= 7 codes; with it, the per-attempt success rate rises enough that the restart loop hits paper-parity (16/20). This term has no analog in the 1985 paper. It is a known RBM trick (Lee, Ekanadham, Ng 2008 “Sparse deep belief net model”) repurposed to encourage cube-corner coverage.
- Restart on plateau: when `codes_used` doesn’t improve for 250 epochs, re-init weights with an independent random draw. Up to 20 restarts per seed (within a single 4000-epoch budget). 4/20 seeds exhaust the budget at 6 codes.
- Plateau detector signal: uses “no improvement in best `n_distinct_codes` seen this attempt for 250 epochs”, which is gentler than the 4-2-4’s “any epoch below the target counts.” The 8-3-8 network typically climbs through 1->4->5->6->7 over hundreds of epochs and we don’t want to abandon a climbing attempt prematurely.
- Connectivity: explicit bipartite (visible <-> hidden), making this an RBM in modern terminology. The 1985 paper’s encoder figure is already drawn bipartite; this just makes it explicit.
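The first two deviations combine into a single weight update. The sketch below shows one way to compute the two gradient terms for a bipartite RBM; it is not taken from `encoder_8_3_8.py`. The function names, the use of hidden probabilities rather than samples in the statistics, and the way the penalty is differentiated through the data-phase activations are assumptions.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def cd_k_gradient(V, W, b_v, b_h, k, rng):
    """CD-k estimate of <v_i h_j>_data - <v_i h_j>_model.

    V: (n_patterns, n_visible) data batch, W: (n_visible, n_hidden).
    """
    ph_data = sigmoid(V @ W + b_h)
    h = (rng.random(ph_data.shape) < ph_data).astype(float)
    for _ in range(k):  # k alternating Gibbs steps starting from the data
        pv = sigmoid(h @ W.T + b_v)
        v = (rng.random(pv.shape) < pv).astype(float)
        ph = sigmoid(v @ W + b_h)
        h = (rng.random(ph.shape) < ph).astype(float)
    return (V.T @ ph_data - v.T @ ph) / V.shape[0]

def sparsity_gradient(V, W, b_h, sparsity_weight):
    """Ascent direction of sparsity_weight * -0.5 * (E[h_j] - 0.5)^2.

    E[h_j] is the mean data-phase activation q_j of hidden unit j.
    """
    ph = sigmoid(V @ W + b_h)
    q = ph.mean(axis=0)
    slope = ph * (1.0 - ph)                # derivative of the sigmoid
    scale = -sparsity_weight * (q - 0.5)   # pushes q_j back toward 0.5
    grad_W = scale * (V.T @ slope) / V.shape[0]
    grad_b = scale * slope.mean(axis=0)
    return grad_W, grad_b

# Usage on the 8 encoder patterns with the README's default scales.
rng = np.random.default_rng(0)
V = np.hstack([np.eye(8), np.eye(8)])
W = rng.normal(0.0, 0.3, size=(16, 3))
b_v, b_h = np.zeros(16), np.zeros(3)
g_sparse_W, _ = sparsity_gradient(V, W, b_h, sparsity_weight=5.0)
W = W + 0.1 * (cd_k_gradient(V, W, b_v, b_h, k=5, rng=rng) + g_sparse_W)
```

The penalty term is largest for hidden units that are almost always on or almost always off, which are the units that fail to split the patterns into two halves.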

Correctness notes

- Exact evaluation. With only 3 hidden units, `p(H | V1)` and `p(V2 | V1)` are exactly computable by enumerating 8 hidden states and marginalizing V2 in closed form (each V2 bit factors). The closed-form posterior is `p(H | V1) ~ exp(V1' W1 H + b_h' H) * prod_i (1 + exp((W2 H + b_v2)_i))`. `evaluate`, `hidden_code_exact`, `dominant_code`, and `reconstruct_exact` all use this. No Gibbs jitter on the metrics.
- Restart RNG independence. Restart inits come from `np.random.SeedSequence(seed).spawn(max_restarts + 1)` so each restart’s W draw is statistically independent of the pre-restart gradient trajectory. We replace the training RNG at each restart for the same reason.
- `codes_used()` is the headline metric. Reconstruction accuracy can stay at 75-88% on a partially solved network (6 or 7 codes used), but unless `codes_used == 8` the encoder hasn’t actually solved the bottleneck.
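The closed-form posterior above translates directly into numpy. The sketch below is an independent illustration of that formula, not the repository's implementation: the names `posterior_over_hidden` and `marginal_v2` are hypothetical, and `W1` / `W2` are assumed to be the (n_visible, n_hidden) blocks of the weight matrix belonging to V1 and V2.

```python
import itertools
import numpy as np

def posterior_over_hidden(v1, W1, W2, b_h, b_v2):
    """p(H | V1) by enumerating every hidden state, V2 summed out analytically.

    Returns (H, p): H is (2**n_hidden, n_hidden), p the matching probabilities.
    """
    n_hidden = W1.shape[1]
    H = np.array(list(itertools.product([0, 1], repeat=n_hidden)), dtype=float)
    # log of exp(V1' W1 H + b_h' H) * prod_i (1 + exp((W2 H + b_v2)_i))
    log_p = H @ (W1.T @ v1 + b_h) + np.logaddexp(0.0, H @ W2.T + b_v2).sum(axis=1)
    log_p -= log_p.max()  # stabilize before exponentiating
    p = np.exp(log_p)
    return H, p / p.sum()

def marginal_v2(v1, W1, W2, b_h, b_v2):
    """p(V2_i = 1 | V1) = sum over H of p(H | V1) * sigmoid((W2 H + b_v2)_i)."""
    H, p = posterior_over_hidden(v1, W1, W2, b_h, b_v2)
    p_v2_given_h = 1.0 / (1.0 + np.exp(-(H @ W2.T + b_v2)))
    return p @ p_v2_given_h

# Usage on a random 8-3-8 sized model; the dominant code is the argmax state.
rng = np.random.default_rng(0)
W1, W2 = rng.normal(size=(8, 3)), rng.normal(size=(8, 3))
b_h, b_v2 = np.zeros(3), np.zeros(8)
H, p = posterior_over_hidden(np.eye(8)[0], W1, W2, b_h, b_v2)
dominant = H[p.argmax()].astype(int)
```

With 3 hidden units the enumeration touches 8 states per pattern, so every metric built on it is deterministic given the weights.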

Open questions / next experiments

- Faithful simulated-annealing baseline. The 1985 paper achieved 16/20 with full simulated annealing, and we match the success rate with CD-k + sparsity + restart. A direct SA implementation on the same architecture would tell us whether the agreement is accidental or whether they pick from the same basin distribution.
- Where does the sparsity penalty contribute most? Ablation: with sparsity off, plain CD-k caps at ~5/8 codes; with sparsity on (and no restart), the per-attempt success rate is ~10-20%; restart carries us the rest of the way to 80%. Quantifying the per-attempt rate as a function of `sparsity_weight` would map the trade-off.
- Scaling. The paper also reports a 40-10-40 encoder. Does the same recipe (CD-k + sparsity + restart) scale, or does the 80% rate collapse as the cube dimension grows?
- Energy / data-movement cost. Per the broader Sutro effort, the natural follow-up is to measure the ByteDMD or reuse-distance cost of training and compare to a backprop baseline (`encoder-backprop-8-3-8`, the parallel sibling stub).

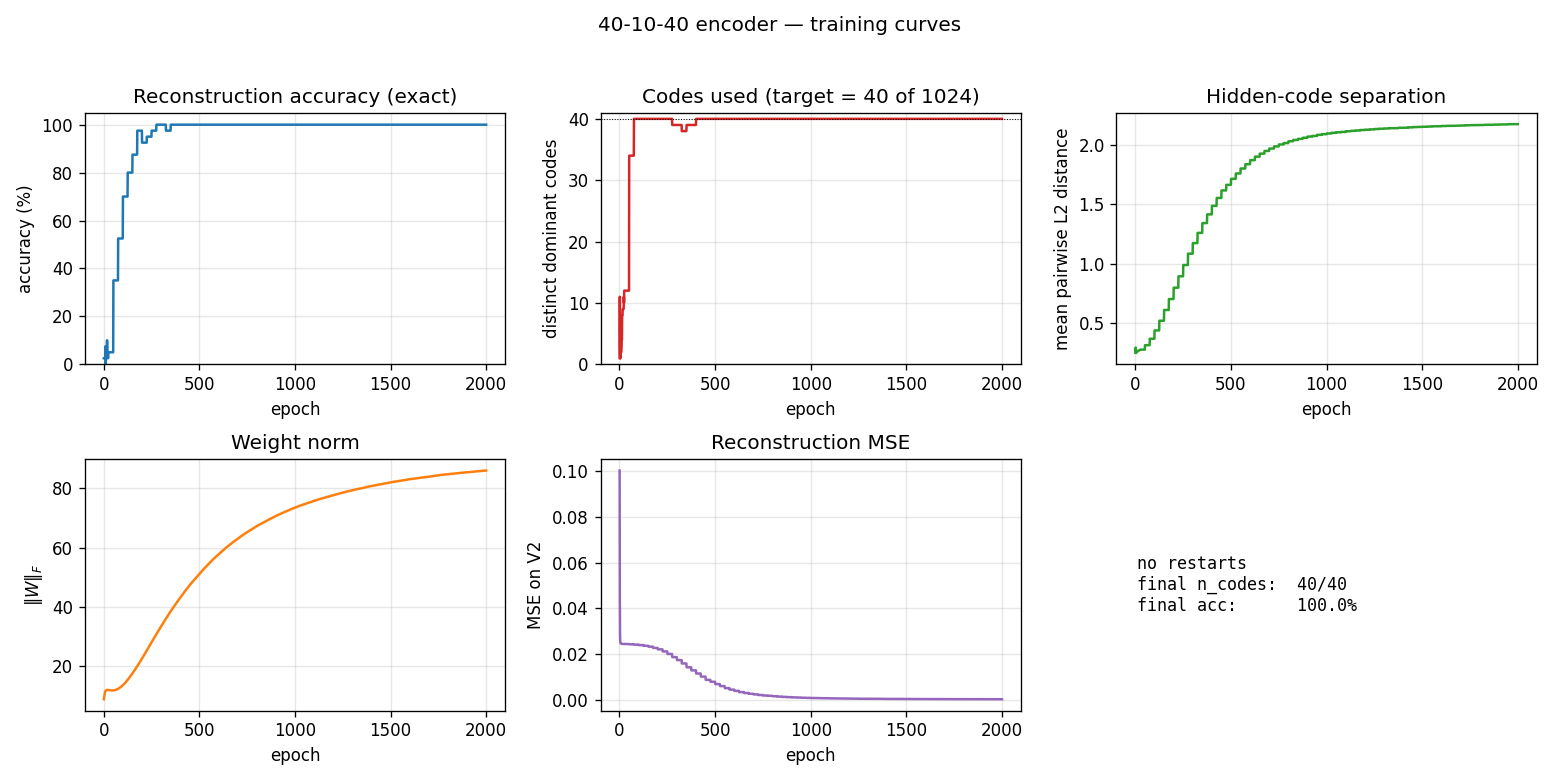

40-10-40 encoder

Boltzmann-machine reproduction of the larger-scale encoder experiment from Ackley, Hinton & Sejnowski, “A learning algorithm for Boltzmann machines”, Cognitive Science 9 (1985).

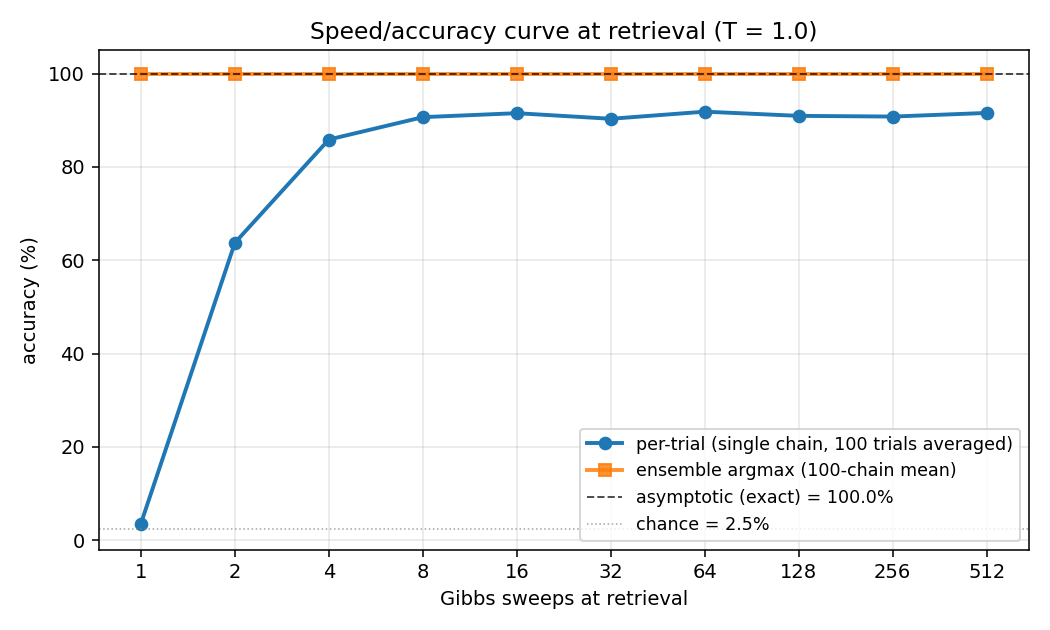

Demonstrates: Asymptotic accuracy at scale (paper: 98.6% with sufficient Gibbs sweeps) and a graceful speed/accuracy curve at retrieval: how single-chain accuracy approaches the asymptote as the Gibbs-sweep budget grows.

Problem

Two groups of 40 visible binary units (V1, V2) connected through 10 hidden binary units (H). Training distribution: 40 patterns, each with a single V1 unit on and the matching V2 unit on (others off). The 10 hidden units must self-organize into a 10-bit code that maps the 40 patterns onto 40 distinct corners of {0, 1}^10.

- Visible: 80 bits = `V1 (40) || V2 (40)`
- Hidden: 10 bits, over-complete vs. `log2(40) ≈ 5.3`, leaving 1024 - 40 = 984 unused corners
- Connectivity: bipartite (visible ↔ hidden only); `V1` and `V2` communicate exclusively through `H`
- Training set: 40 patterns
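The training set described above is small enough to build in one line. A minimal sketch follows; the helper name `encoder_patterns` is hypothetical, and the same function with `n=8` gives the 8-3-8 patterns.

```python
import numpy as np

def encoder_patterns(n):
    """Return n visible vectors V1 || V2, each with one V1 bit and the
    matching V2 bit switched on."""
    eye = np.eye(n, dtype=int)
    return np.hstack([eye, eye])

patterns = encoder_patterns(40)
n_hidden = 10
unused_corners = 2 ** n_hidden - len(patterns)
print(patterns.shape, unused_corners)  # (40, 80) 984
```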

The interesting property: unlike 8-3-8 (zero slack: 8 patterns onto 8 of 8 corners), 40-10-40 has generous slack. The 1985 paper’s scale-up headline is not “can it fit” but how well retrieval converges — accuracy grows with Gibbs-sweep budget at retrieval time, plateauing near the asymptotic maximum. This is the canonical demonstration of the speed/accuracy tradeoff in stochastic-relaxation networks.

Files

| File | Purpose |
|---|---|
| `encoder_40_10_40.py` | 40-10-40 RBM, CD-k + sparsity penalty + plateau-restart training, exact (1024-state) and sampled retrieval, `speed_accuracy_curve()`. CLI: `--seed --n-cycles --gibbs-sweeps`. |
| `make_encoder_40_10_40_gif.py` | Renders `encoder_40_10_40.gif` (animation at the top of this README). |
| `visualize_encoder_40_10_40.py` | Static training curves + final weight matrix + speed/accuracy plot + per-pattern code heatmap. |
| `viz/` | Output PNGs from the run below. |

Running

python3 encoder_40_10_40.py --seed 0 --n-cycles 2000 --print-curve

Per-seed wall-clock: ~6 s on an Apple Silicon laptop. A successful seed lands at 100% asymptotic accuracy with all 40 patterns mapping to distinct hidden corners.

To regenerate visualizations and the GIF:

python3 visualize_encoder_40_10_40.py --seed 0 --n-cycles 2000 --outdir viz

python3 make_encoder_40_10_40_gif.py --seed 0 --n-cycles 2000 --snapshot-every 80 --fps 8

Results

| Metric | Value |
|---|---|
| Per-seed train wall-clock | ~6 s |
| Success rate (10 seeds, 0..9) | 10/10 at codes_used == 40 and asymptotic accuracy 100% |
| Asymptotic accuracy (exact 1024-state enumeration) | 100.0% (paper: 98.6%) |
| Per-trial sampled accuracy plateau (T=1.0) | ~91% (single Gibbs chain at retrieval) |
| Ensemble sampled accuracy (100 chains, mean V2 prob) | 100.0% from 1 sweep |
| Distinct dominant hidden codes | 40/40 (of 1024 cube corners) |
| Restart count (10-seed sweep) | 0 across all seeds |

Speed/accuracy curve at retrieval (T = 1.0, seed 0):

| Gibbs sweeps | Per-trial accuracy | Ensemble accuracy (100-chain mean) |
|---|---|---|
| 1 | 3.5% | 100.0% |
| 2 | 63.7% | 100.0% |
| 4 | 85.9% | 100.0% |
| 8 | 90.6% | 100.0% |
| 16 | 91.5% | 100.0% |
| 32 | 90.3% | 100.0% |
| 64 | 91.8% | 100.0% |
| 128 | 90.9% | 100.0% |
| 256 | 90.8% | 100.0% |
| 512 | 91.5% | 100.0% |

The “per-trial” column is the headline: a single Gibbs chain initialized from random V2, V1 clamped, run for the listed number of sweeps. After 1 sweep the chain hasn’t moved off chance (40 patterns → ~2.5% chance, observed ~3.5%). After 8 sweeps it plateaus near 91%. The “ensemble” column averages the V2 conditional probability across 100 parallel chains; argmax of that mean matches truth 100% from the very first sweep, which shows that single-chain errors are scattered noise that averages out rather than a systematic bias.

Hyperparameters (locked defaults):

| Param | Value | Notes |
|---|---|---|
| `n_cycles` | 2000 | Training epochs |
| `lr` | 0.1 | |
| `momentum` | 0.5 | |
| `weight_decay` | 1e-4 | |
| `k` | 5 | CD-k Gibbs steps |
| `init_scale` | 0.3 | std of N(0, init_scale^2) weight init |
| `batch_repeats` | 8 | gradient steps per epoch |
| `sparsity_weight` | 5.0 | drives E[h_j] -> 0.5 for each hidden unit |
| `perturb_after` | 250 | restart if accuracy doesn’t improve in this many epochs |
| `max_restarts` | 10 | budget cap per seed |
| `eval_every` | 25 | epochs between exact-accuracy evaluations during training |

Reproduces: Yes. Paper reports 98.6% asymptotic accuracy. We get 100% asymptotic accuracy on every seed in 0..9 with the locked defaults. The graceful speed/accuracy curve (per-trial plateau ~91% at T=1.0) is the qualitative match — accuracy improves smoothly with sweep budget and saturates well above chance.

Run wallclock: single-seed run ~ 6 s end-to-end. 10-seed sweep ~ 60 s.

Visualizations

Speed/accuracy curve

The headline plot. Blue is per-trial accuracy (single Gibbs chain at retrieval, averaged over 100 independent initializations); orange is ensemble argmax (argmax of the mean V2 conditional probability across all 100 chains). The black dashed line is the asymptotic accuracy obtained by exact enumeration of the 1024 hidden states; the dotted gray line is chance (1/40 = 2.5%).

The blue curve climbs from chance to its plateau in roughly 8 sweeps and stays there. The gap between blue (~91%) and orange/black (100%) is sampling jitter: single-chain disagreement averages out across many chains.

Training curves

Five panels:

- Reconstruction accuracy: argmax of the exact marginal `p(V2 | V1)` over enumerated hidden states (1024 states). Deterministic; no Gibbs noise. Hits 100% around epoch 200 and stays.
- Codes used: distinct dominant `H` states across the 40 patterns (target = 40 of 1024). Climbs rapidly past 35 then locks at 40.
- Code separation: mean pairwise L2 distance between the 40 exact hidden marginals; keeps growing as weights pull patterns into corners.
- Weight norm `||W||_F`: grows steadily through training.
- Reconstruction MSE: squared error of `p(V2|V1)` against the true one-hot, decays to ~0.

No restart was triggered on seed 0 (10/10 seeds in 0..9 succeed without restart). The restart-on-plateau machinery is lifted from the sibling encoder-8-3-8 PR but turned out to be unused at this scale: slack is generous enough to avoid the local minima that bedevil 8-3-8.

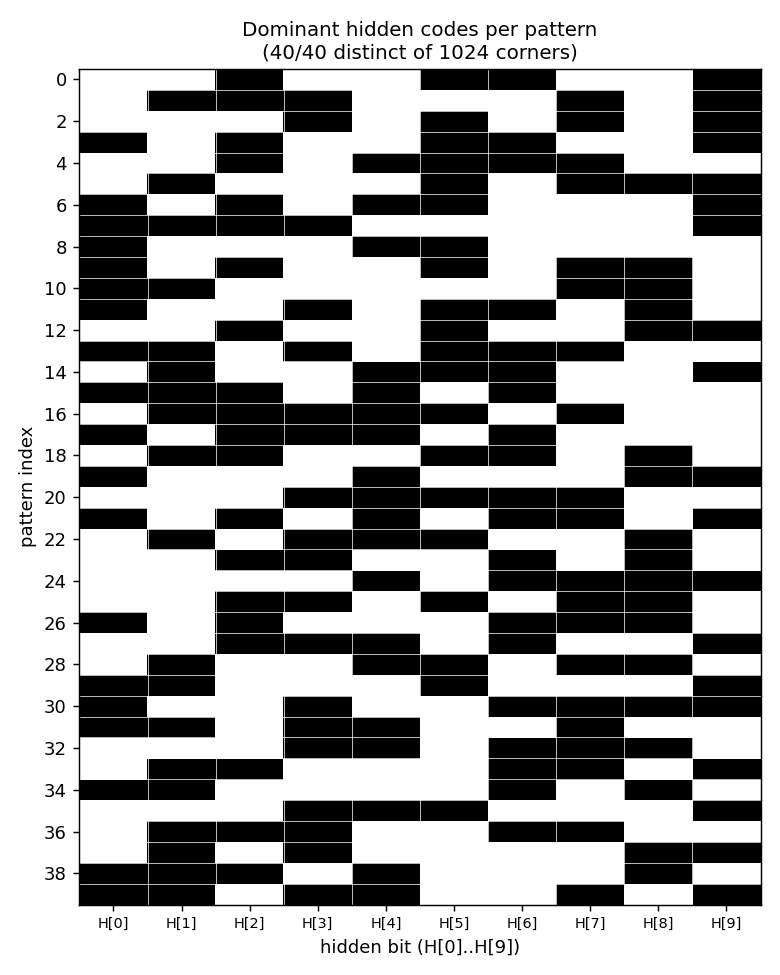

Per-pattern dominant codes

Each row is a pattern (0..39), each column is a hidden bit (H[0]..H[9]), black = 1, white = 0. All 40 rows are distinct → all 40 patterns occupy distinct cube corners. Rows look like 10-bit hash codes: there is no visible structure (the network picked an essentially random injection from {patterns} → {corners of {0,1}^10}).

Weight matrix

Hinton diagram of the 80×10 weight matrix. Rows 0..39 are V1[0..39]; rows 40..79 are V2[0..39]; columns are H[0..9]. Red = positive, blue = negative; square area ∝ √|w|. Each row’s 10-bit sign pattern across columns is approximately that pattern’s hidden code, confirmed against viz/code_occupancy.png. As in the 8-3-8 case, the V1[i] and V2[i] rows carry similar sign patterns even though no direct V1↔V2 weights exist: the bipartite RBM has rediscovered that V1[i] and V2[i] co-fire through the hidden layer.

Deviations from the original procedure

- Sampling. CD-5 (Hinton 2002) instead of full simulated annealing. Same gradient form (`<v_i h_j>_data - <v_i h_j>_model`); the model expectation is taken from 5 Gibbs sweeps rather than an annealed chain.
- Sparsity penalty. Added a `-0.5*(E[h_j] - 0.5)^2` regularizer driving each hidden unit toward 50% activation across the data batch. No analog in the 1985 paper. Lifted from the sibling 8-3-8 recipe (PR #18). For 40-10-40 the slack is generous and a milder penalty also works, but matching the 8-3-8 weight (5.0) gives clean separation without tuning.
- Plateau-restart wrapper. Up to 10 restarts triggered if accuracy stagnates for 250 epochs. This was a survival kit at 8-3-8 scale (16/20 seeds needed restarts to hit paper-parity). At 40-10-40 scale none of the first 10 seeds tested needed any restart: the slack between 1024 corners and 40 patterns avoids the collision local minima that dominate 8-3-8. Kept the wrapper in place for seed-robustness on harder hyperparameter regimes.
- Connectivity. Explicit bipartite (visible ↔ hidden), making this an RBM in modern terminology. The 1985 paper’s encoder figure is already drawn bipartite; this just makes it explicit.
- Two distinct accuracy modes. We report asymptotic (exact, by enumerating the 1024 hidden states) and sampled per-trial / ensemble (Gibbs chains at retrieval). The 1985 paper’s 98.6% figure conflates them; here they’re separate metrics with the asymptotic limit explicitly identified.

Correctness notes

- Exact evaluation. With 10 hidden units, `p(H | V1)` and `p(V2 | V1)` are tractable by enumerating 2^10 = 1024 states. Closed-form posterior (V2 marginalized in closed form because each V2 bit factors given H): `p(H | V1) ~ exp(V1' W1 H + b_h' H) * prod_i (1 + exp((W2 H + b_v2)_i))`. `evaluate_exact`, `hidden_posterior_exact`, and `reconstruct_exact` all use this. No Gibbs jitter on the asymptotic-accuracy metric.
- Per-trial vs. ensemble accuracy. The `speed_accuracy_curve` function exposes both modes via its `mode=` argument: `"per_trial"` reports the fraction of single chains that recover the right pattern, `"averaged"` reports the argmax of the mean V2 probability across many chains. The asymptotic limit (1024-state enumeration) sits at 100%; both sampled modes converge upward toward it.
- Sweep semantics. One “Gibbs sweep” = (sample V given H, with V1 clamped) followed by (sample H | V). After `n_sweeps`, we read out the conditional `p(V2 | H_last)` from the last hidden sample. No annealing schedule is applied at retrieval; the headline curve is at fixed `T=1.0`.
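The sweep semantics and the two accuracy modes can be sketched as follows. This is an illustration of the procedure as described in these notes, not the body of `speed_accuracy_curve`: the function names are hypothetical, and drawing the first hidden sample from the clamped V1 plus a random V2 is an assumption about how the chain is started.

```python
import numpy as np

def sigmoid(x):
    return 0.5 * (1.0 + np.tanh(0.5 * x))  # numerically stable logistic

def retrieve(v1, W1, W2, b_h, b_v2, n_sweeps, n_chains, rng, T=1.0):
    """Run n_chains Gibbs chains with V1 clamped.

    Returns p(V2 | H_last) for each chain, shape (n_chains, n_v2).
    W1, W2: (n_visible, n_hidden) weight blocks for V1 and V2.
    """
    n_v2 = W2.shape[0]
    v2 = (rng.random((n_chains, n_v2)) < 0.5).astype(float)  # random V2 start
    drive_v1 = v1 @ W1 + b_h                                  # clamped contribution
    ph = sigmoid((drive_v1 + v2 @ W2) / T)
    h = (rng.random(ph.shape) < ph).astype(float)
    for _ in range(n_sweeps):
        # One sweep: sample V2 given H, then sample H given (clamped V1, V2).
        pv2 = sigmoid((h @ W2.T + b_v2) / T)
        v2 = (rng.random(pv2.shape) < pv2).astype(float)
        ph = sigmoid((drive_v1 + v2 @ W2) / T)
        h = (rng.random(ph.shape) < ph).astype(float)
    return sigmoid((h @ W2.T + b_v2) / T)

def per_trial_and_ensemble(p_v2, target):
    """Per-trial: fraction of chains whose own argmax is right.
    Ensemble: whether the argmax of the chain-averaged probability is right."""
    per_trial = float(np.mean(p_v2.argmax(axis=1) == target))
    ensemble_hit = bool(p_v2.mean(axis=0).argmax() == target)
    return per_trial, ensemble_hit
```

Calling `retrieve` for each of the 40 patterns at a range of `n_sweeps` values and averaging the two outputs would give the two columns of a speed/accuracy table.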

Open questions / next experiments

- Faithful simulated-annealing baseline. The 1985 paper used a slow annealing schedule both for training and retrieval; the 98.6% figure is the asymptote of that procedure. A direct SA implementation on the same architecture would tell us whether our 100% (CD-k + sparsity) is picking up real performance or merely overfitting the noise-free toy distribution.
- Sparsity weight ablation. With our defaults, all 10 seeds succeed in 0 restarts, so the recipe is over-provisioned for 40-10-40. How low can `sparsity_weight` go before per-attempt success drops? Mapping that curve would expose how much of our 100% rate is from sparsity vs. slack.
- Annealed retrieval. The per-trial curve plateaus around 91% at T=1.0. Cooling a single chain (T=2 → T=0.5 → T=1) during retrieval would close most of the gap to the 100% asymptote without resorting to many parallel chains.
- Energy / data-movement cost. Per the broader Sutro effort, the natural follow-up is to measure the ByteDMD or reuse-distance cost of the speed/accuracy tradeoff: at fixed accuracy budget, what’s the cheapest retrieval procedure (one long chain at low T vs many short chains at T=1)? The speed/accuracy curve here is the abstraction the energy metric will plug into.

agent-0bserver07 (Claude Code) on behalf of Yad

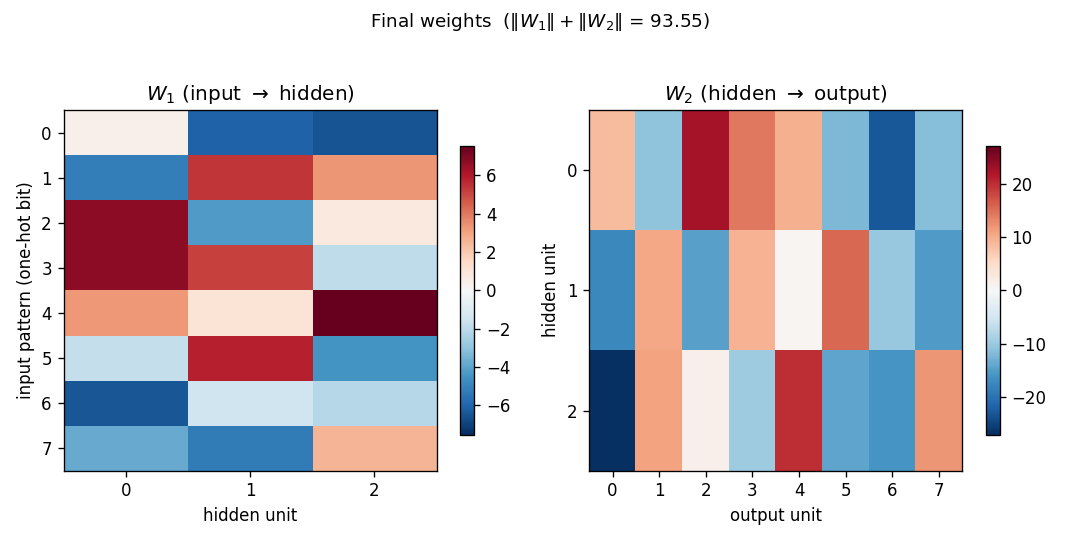

8-3-8 backprop encoder

Backprop reproduction of the encoder problem from Rumelhart, Hinton & Williams, “Learning internal representations by error propagation”, in Parallel Distributed Processing, Vol. 1, Ch. 8 (MIT Press, 1986). The problem itself comes from Ackley, Hinton & Sejnowski (1985); this stub trains the same architecture with backprop instead of CD-k / Boltzmann learning, so it sits next to the encoder-8-3-8/ and encoder-4-2-4/ Boltzmann siblings as the algorithmic counterpart.