Schmidhuber Problems

A reproducible-baseline catalog of the synthetic learning problems that appear in Jürgen Schmidhuber’s experimental papers from 1989 through 2025 — implemented in pure numpy, runnable on a laptop CPU, with paper-comparison metrics per stub.

- GitHub: https://github.com/cybertronai/schmidhuber-problems

- Site: https://cybertronai.github.io/schmidhuber-problems/

- Catalog: RESULTS.md

- Visual tour: VISUAL_TOUR.md

- Build notes: BUILD_NOTES.md

- Status: 58 of 58 stubs implemented (PRs #4–#16, all merged 2026-05-08)

Introduction

The field has standardized on backprop by the end of the ’80s, and Hinton gives a sample of problems that were used at the time. In the last 20 years, we have transitioned to GPUs, and the math has changed considerably. Instead of being bottlenecked by arithmetic, the shrinking of transistors means that arithmetic is essentially free, and all of the work comes from data movement. Backprop is inefficient in terms of “commute to compute ratio” because it requires fetching all of the activations for each gradient add.

So a natural experiment would be to redo key experiments of this time with a focus on data movement. The first step is to get a baseline — to establish the list of problems which are famous, reasonable to implement, and easy to run/reproduce.

— Yaroslav, hinton-problems issue #1 (Sutro Group)

This repository is the algorithmic-lineage companion to hinton-problems.

- Hinton’s catalog emphasizes representational toy tasks: small benchmarks where hidden-unit inspection is the experimental payoff (4-2-4 encoder, family trees, shifter, Forward-Forward MNIST).

- Schmidhuber’s lineage emphasizes algorithmic capability. Four threads run through this catalog:

- Long-time-lag indexing: 1990 flip-flop → 1992 chunker → 1996 adding-problem → 1997 temporal-order

- Key-value binding: 1992 fast-weights → 2021 linear Transformers (the same outer-product math, 29 years apart)

- Kolmogorov-complexity search: 1995 Levin search → 2003 OOPS (program enumeration, no gradients)

- Controller + model + curiosity loops in tiny stochastic environments: 1990 pole-balance → 2018 World Models

v1 + v1.5 ship 58 implementations covering this lineage from the 1989 NBB through the 2022 Neural Data Router. Each stub is a self-contained folder with model + train + eval + visualization + animated GIF, all in numpy, all runnable in <5 min per seed on an M-series laptop.

What’s here

| 32 reproduce paper claims | 25 partial / qualitative reproductions | 1 honest non-replication |

|---|---|---|

| full or qualitative match | algorithm works, paper-config gap documented | gap analysed mathematically |

Pure numpy + matplotlib throughout. Every stub runs on a laptop CPU. Each problem lives in its own folder with <slug>.py (model + train + eval), README.md (8 sections: Header / Problem / Files / Running / Results / Visualizations / Deviations / Open questions), make_<slug>_gif.py, visualize_<slug>.py, an animated <slug>.gif, and a viz/ folder of training curves and weight visualizations.

Per the SPEC’s RL-stub rule, RL/env-heavy stubs (pole-balance-*, pomdp-flag-maze, world-models-*, torcs-vision-evolution, upside-down-rl, double-pole-no-velocity) use numpy mini-environments that capture the algorithmic claim of the original paper, not the original simulator. The substitution is documented in each stub’s §Deviations. Original-simulator reruns are tracked as v2 follow-ups.

Development

This repository includes a minimal Nix development shell with Python and NumPy:

nix develop

python3 nbb-xor/nbb_xor.py --seed 0

Or run one stub directly without Nix (assumes python3 -m pip install numpy matplotlib):

cd flip-flop

python3 flip_flop.py --seed 0

python3 visualize_flip_flop.py

python3 make_flip_flop_gif.py

Visual tour

|  |

|---|---|

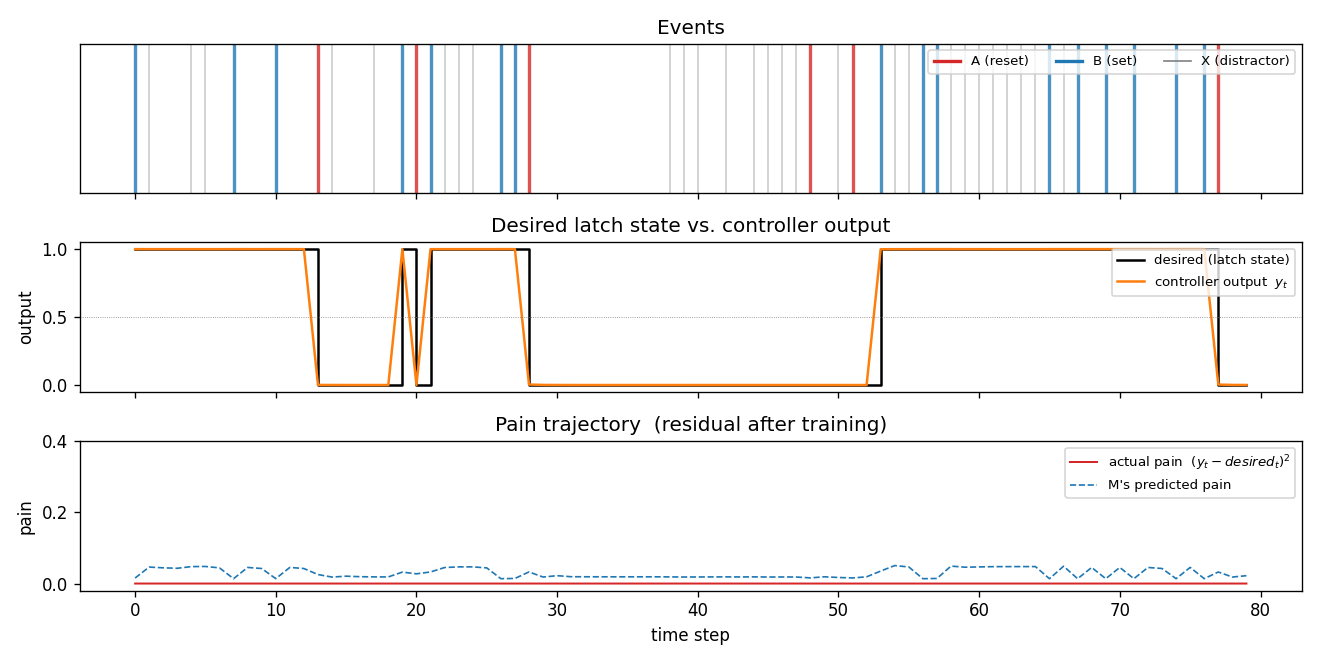

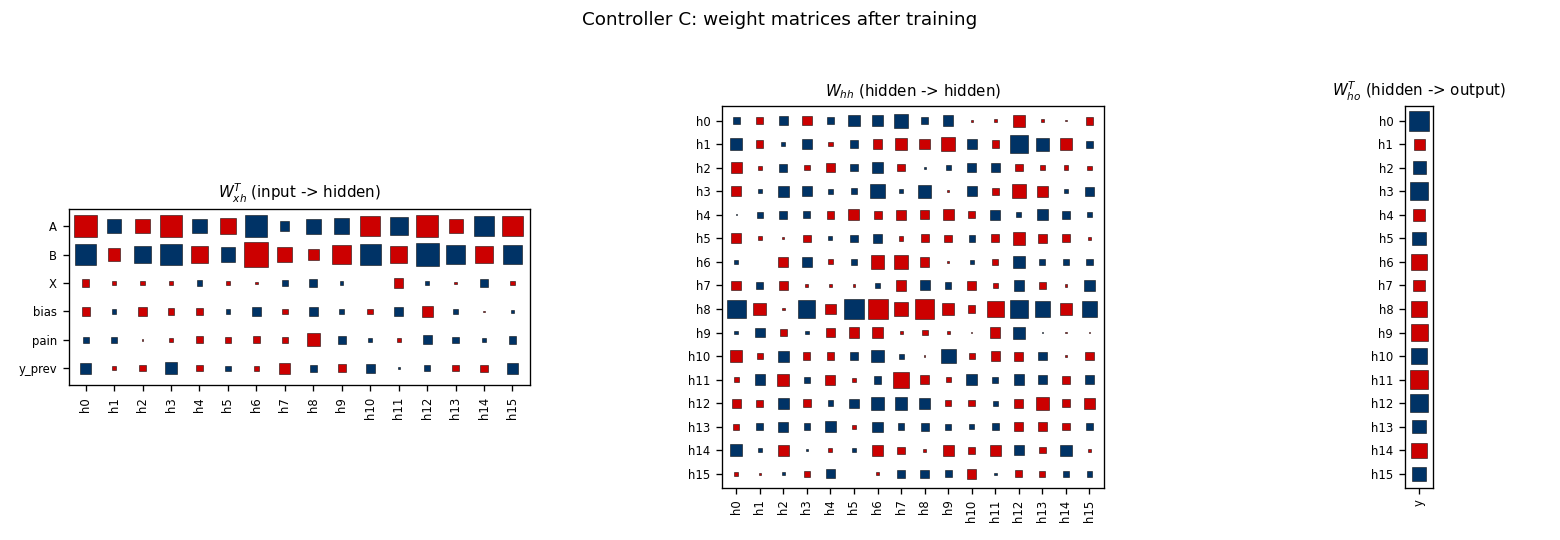

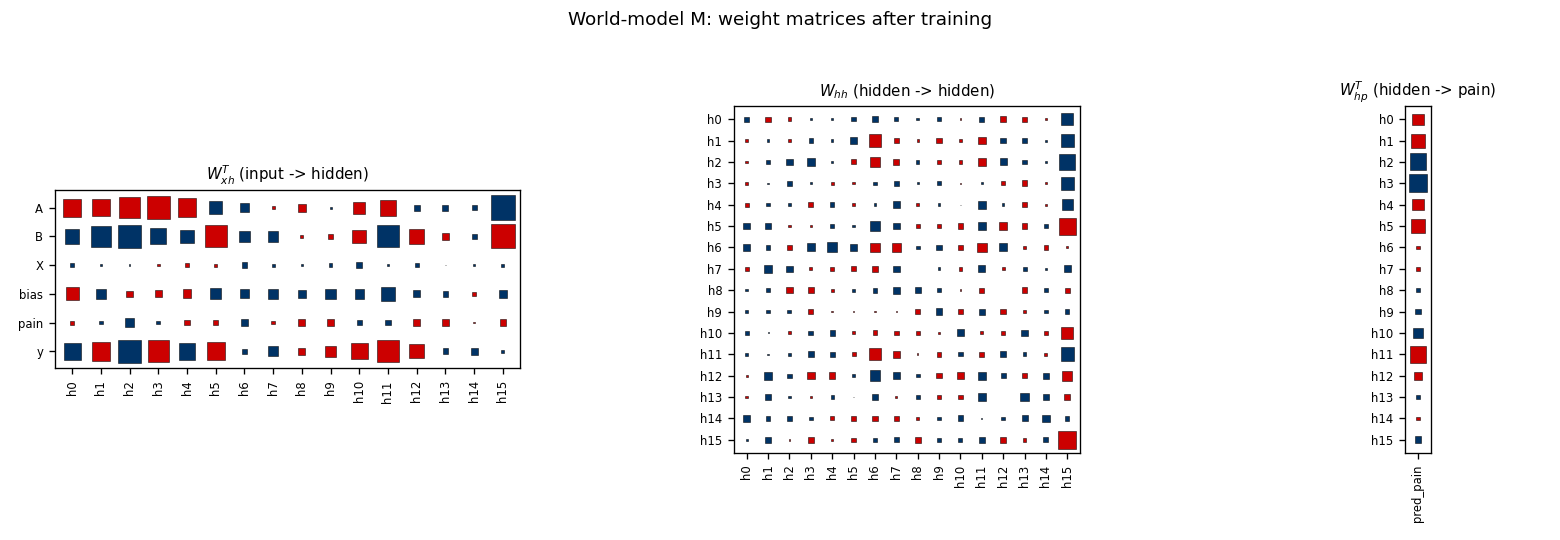

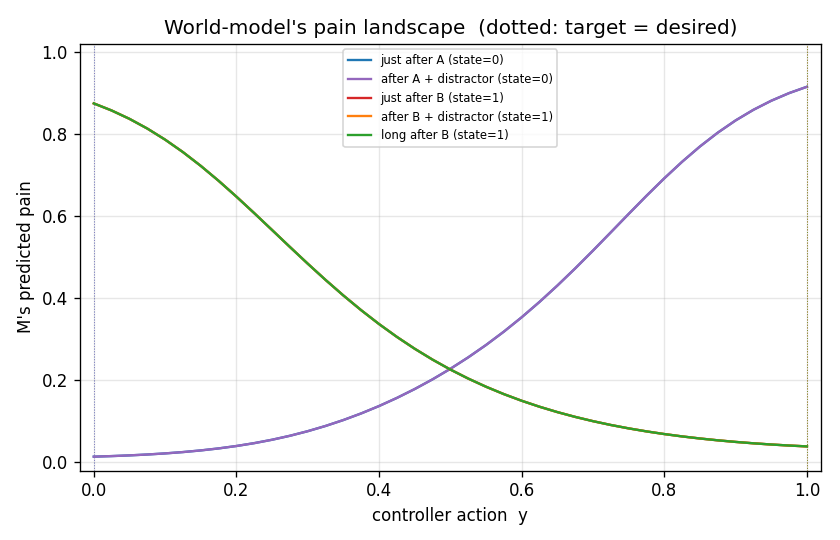

nbb-xor — Schmidhuber 1989 NBB local rule on XOR. The wave-0 sanity validator: WTA + bucket-brigade dissipation, no backprop. | flip-flop — Schmidhuber 1990 controller + differentiable world-model on the canonical LSTM-precursor latch. |

|  |

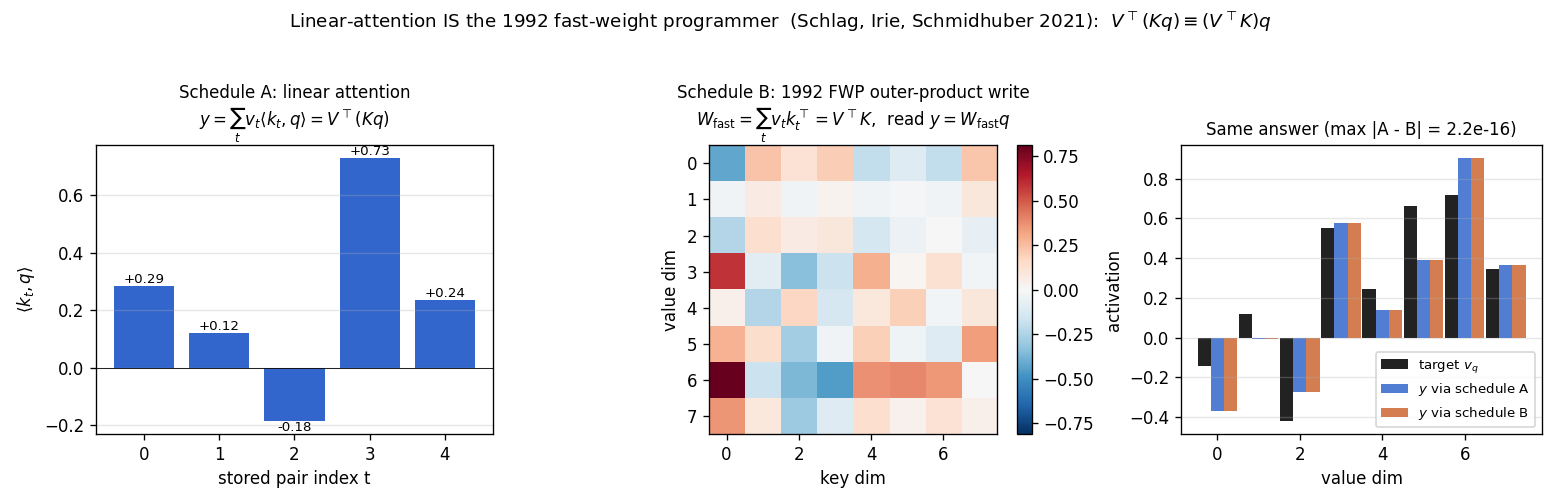

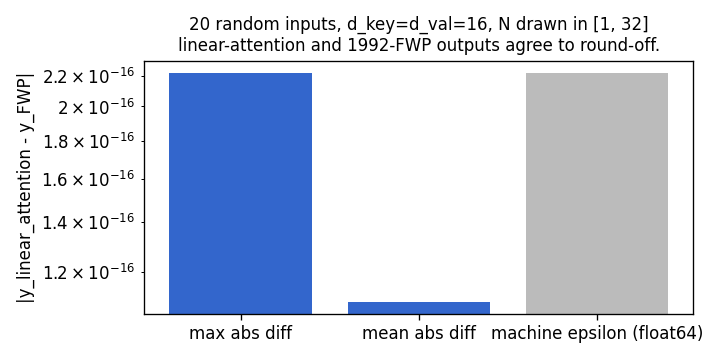

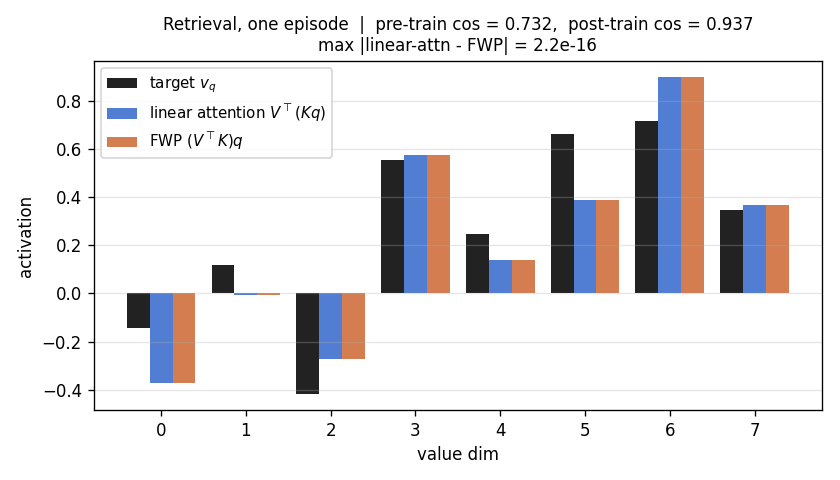

linear-transformers-fwp — Schlag/Irie/Schmidhuber 2021. Linear-attention V^T(Kq) ≡ 1992-FWP (V^T K)q to 2.22e-16 (float64 ulp). | world-models-carracing — Ha & Schmidhuber 2018 V+M+C on a numpy 2D track. Returns +103.8 mean across 5 seeds (random +4.84). |

For the long-form picture-first walk through all 58 stubs — every GIF, organized by era, with notes on what each visualization is meant to show — see VISUAL_TOUR.md.

Catalog

Each table shows the v1 result per stub. Full per-stub metrics (run wallclock, headline numbers, implementation budget) are in RESULTS.md.

Reproduces? legend: yes = matches paper qualitatively or quantitatively; partial / qualitative = method works, paper-config gap documented in stub README; no = paper claim does not replicate (gap analysis documented).

1980s — Local rules and the Neural Bucket Brigade

Schmidhuber (1989) — A local learning algorithm for dynamic feedforward and recurrent networks (FKI-124-90 / Connection Science)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

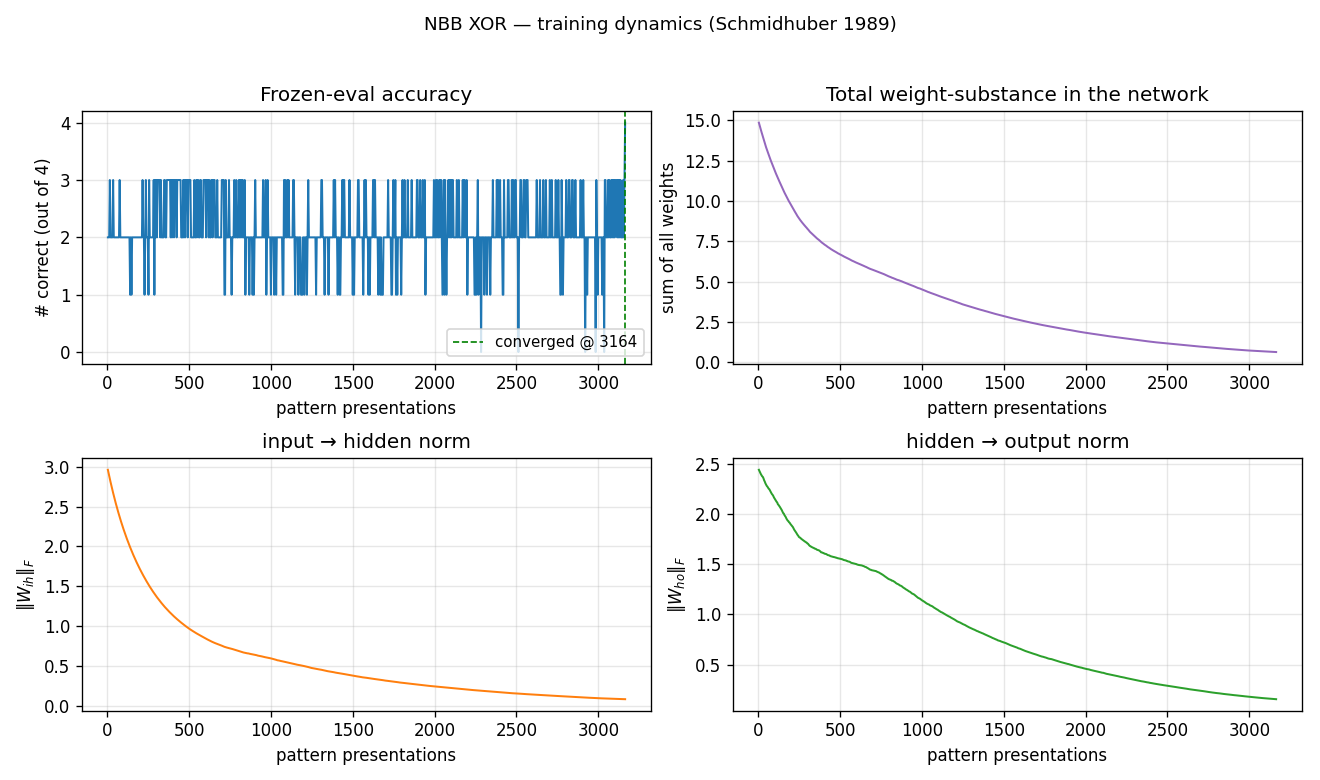

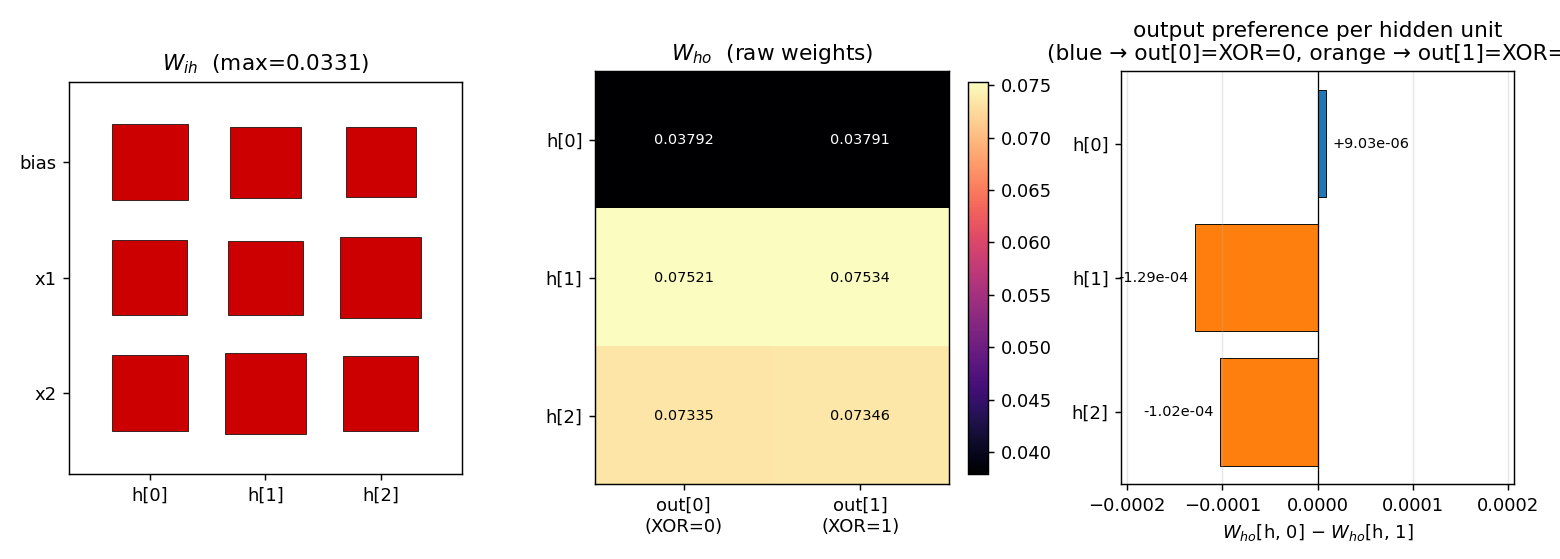

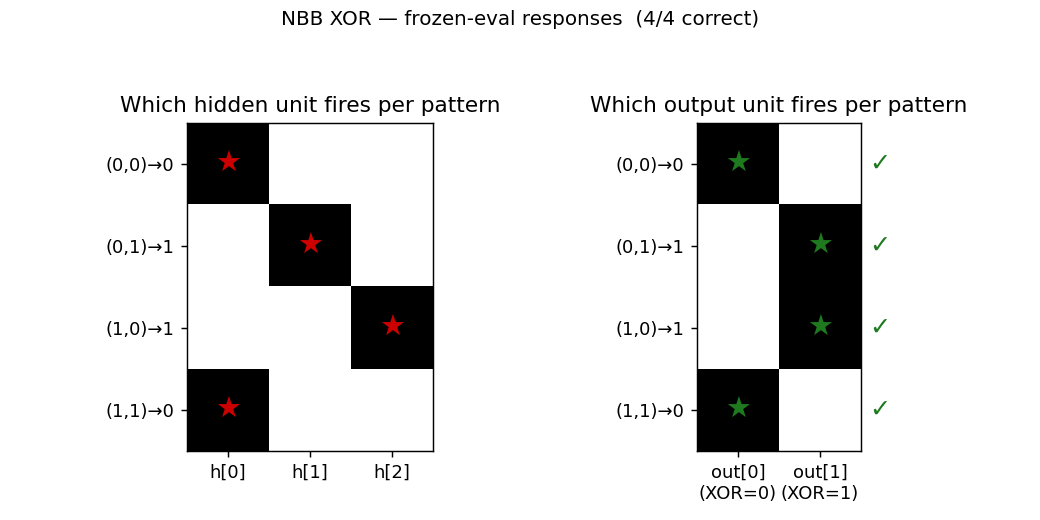

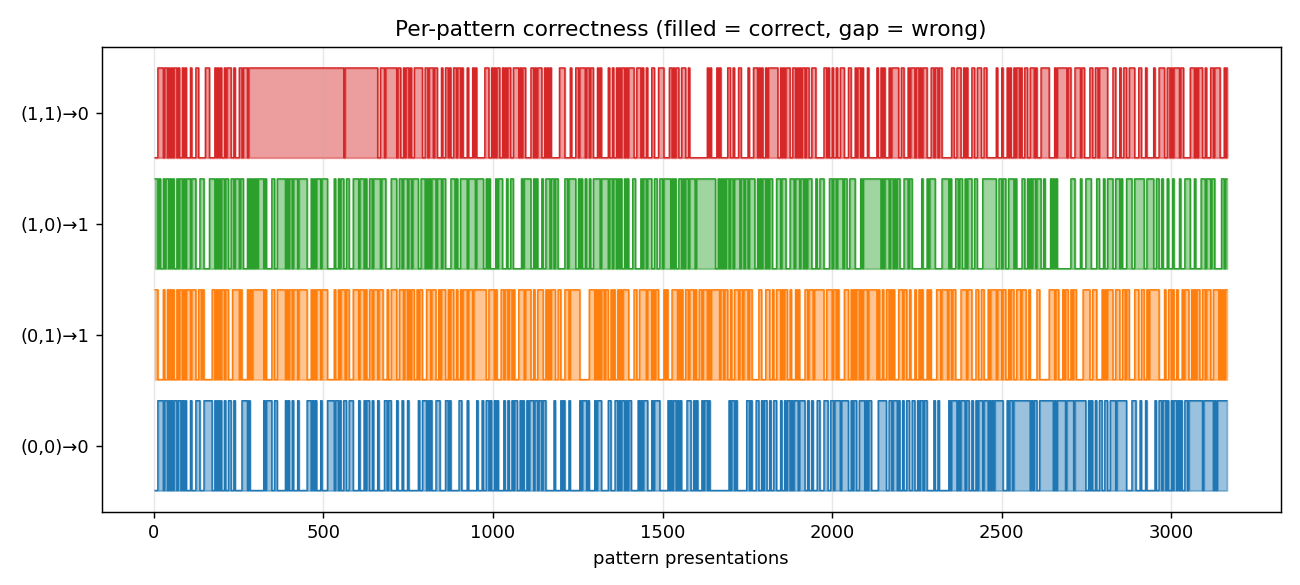

| nbb-xor | qualitative (mean 3012 presentations vs paper 619; 19/20 seeds) | 0.85s |

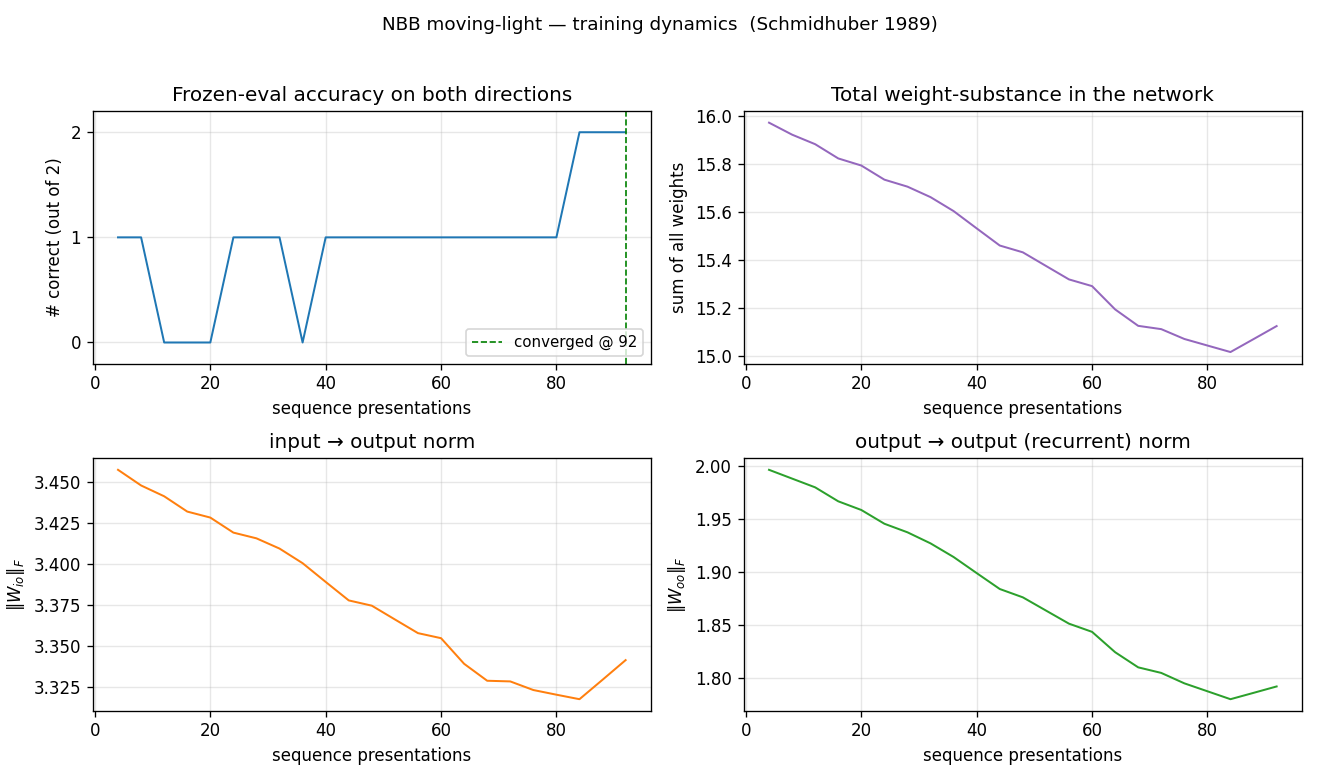

| nbb-moving-light | yes (mean 223 — exact match; 9/30 vs paper 9/10) | 0.03s |

1990 — Controller + world-model + flip-flop

Schmidhuber (1990) — Making the world differentiable (FKI-126-90 / IJCNN-90)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

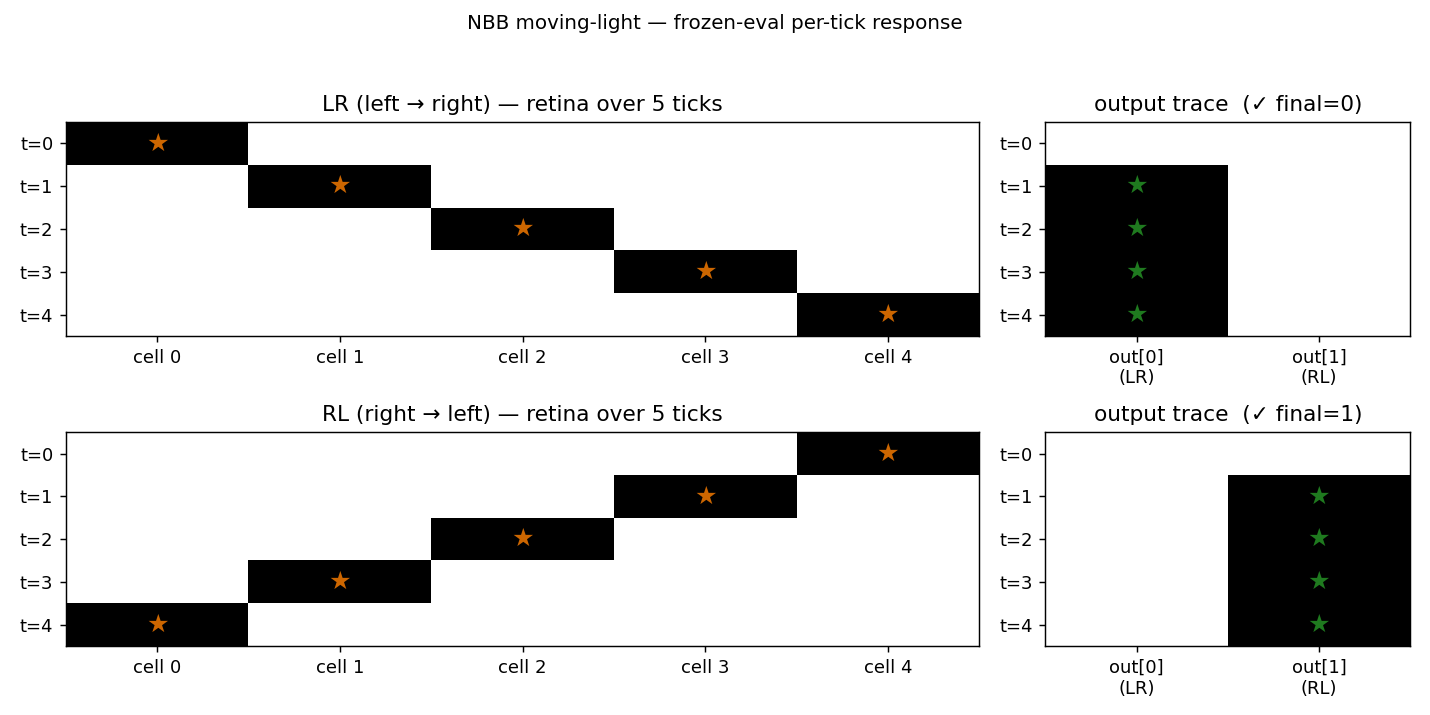

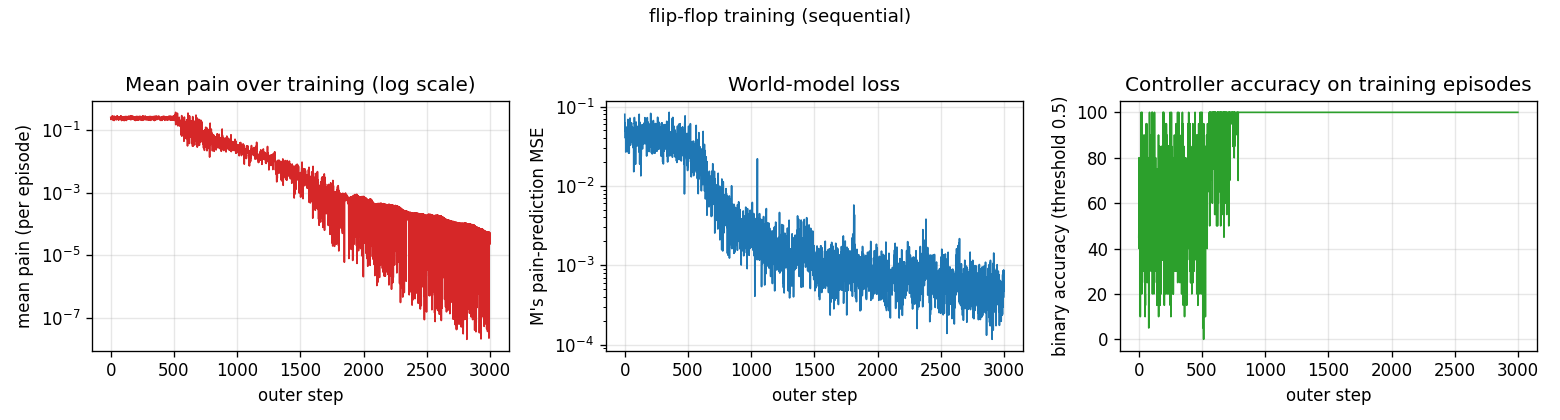

| flip-flop | yes (10/10 sequential vs paper 6/10; 30/30 parallel vs 20/30) | 3-5s |

| pole-balance-non-markov | yes (seed 0: 30/30 episodes balance 1000 steps) | 9.5s |

Schmidhuber (1990) — Recurrent networks adjusted by adaptive critics (NIPS-3)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

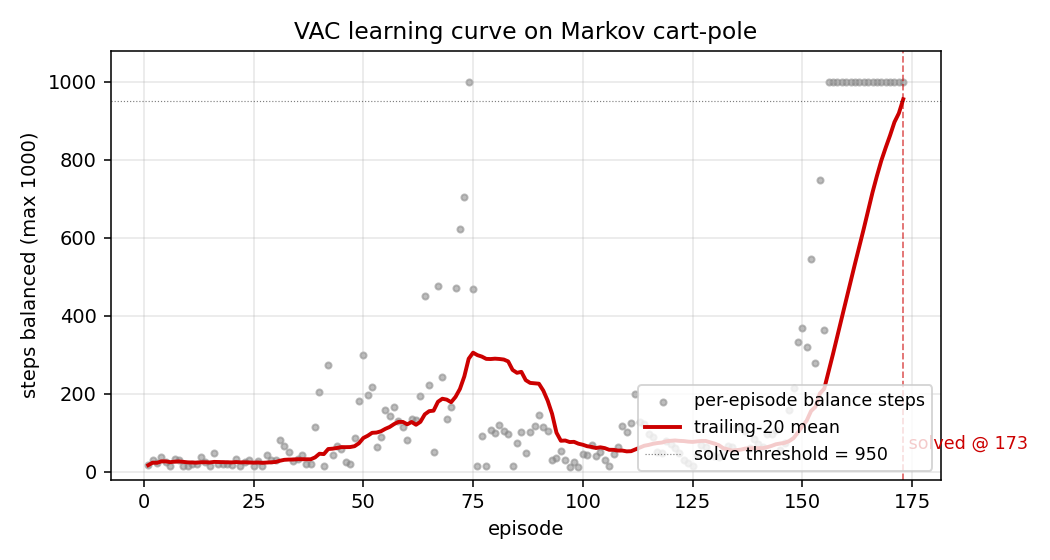

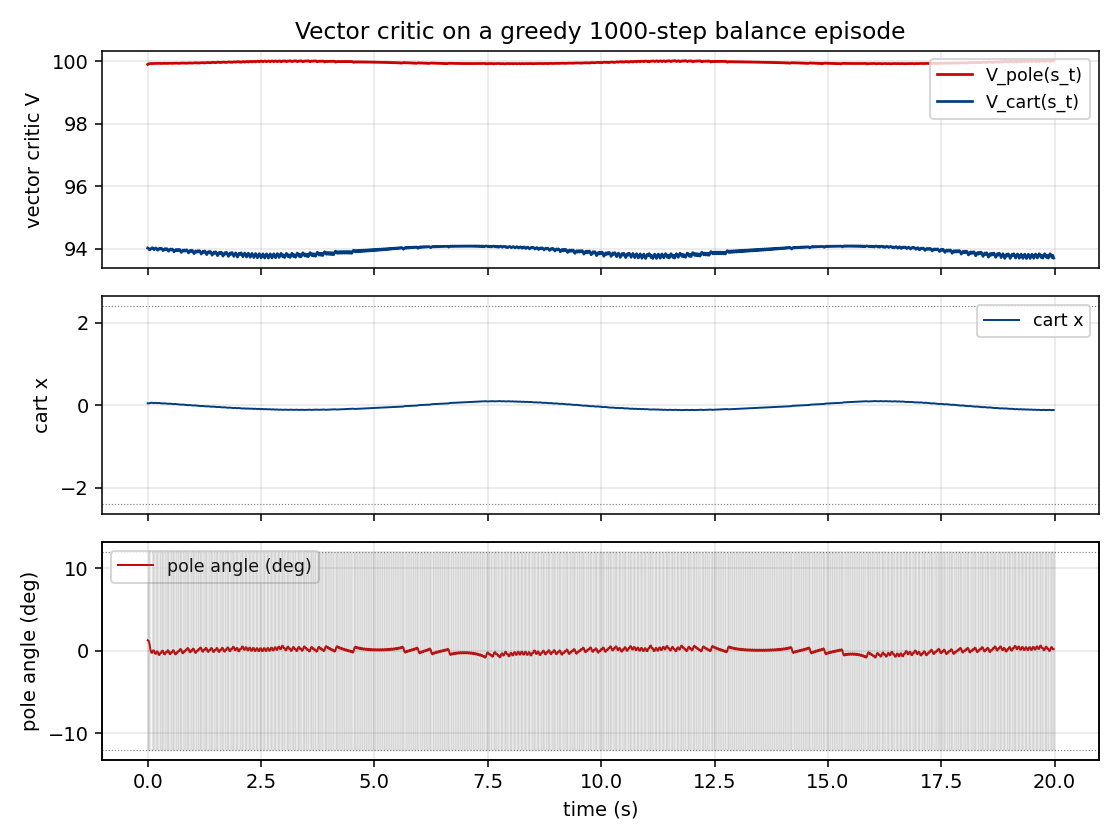

| pole-balance-markov-vac | yes (173 episodes / 1.21s training; 9/10 multi-seed) | 1.21s |

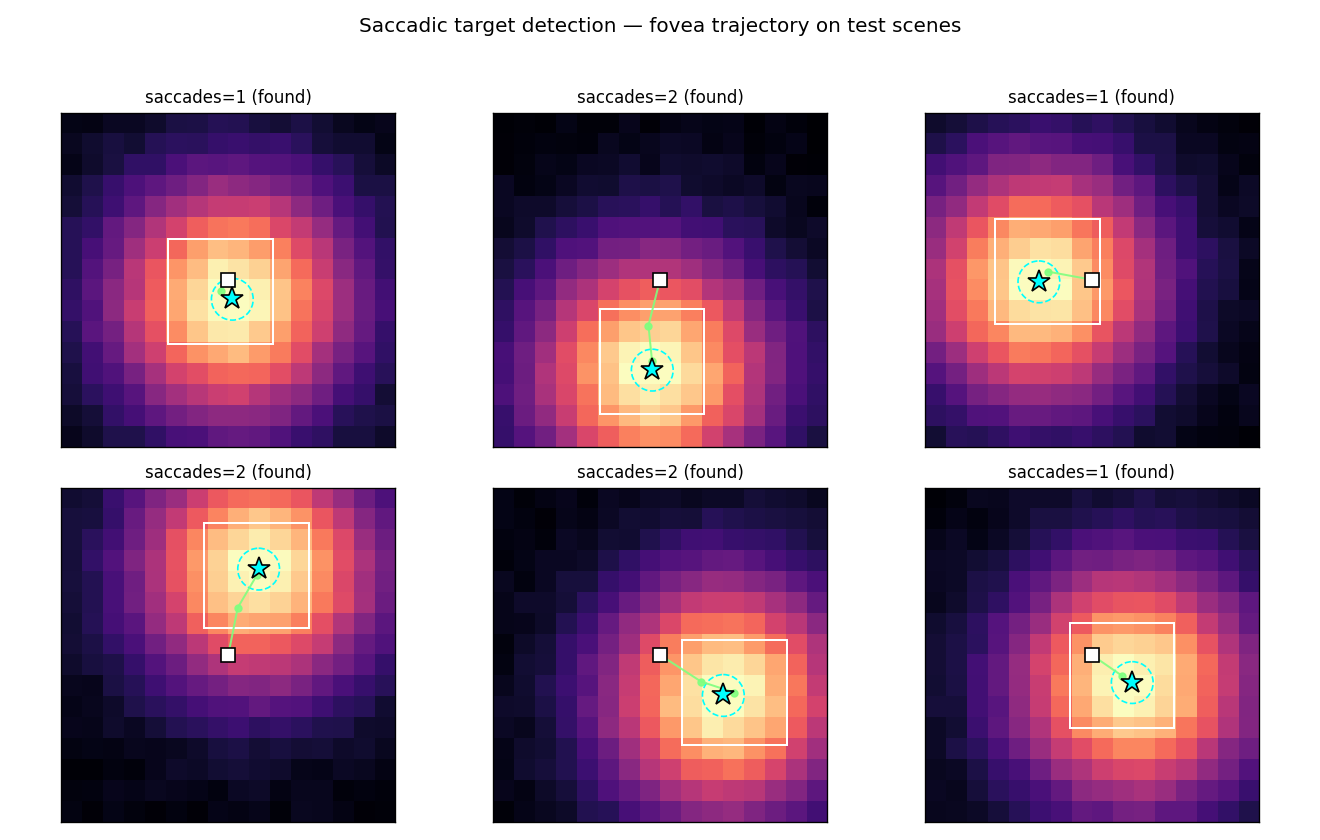

Schmidhuber & Huber (1990) — Learning to generate focus trajectories (FKI-128-90)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

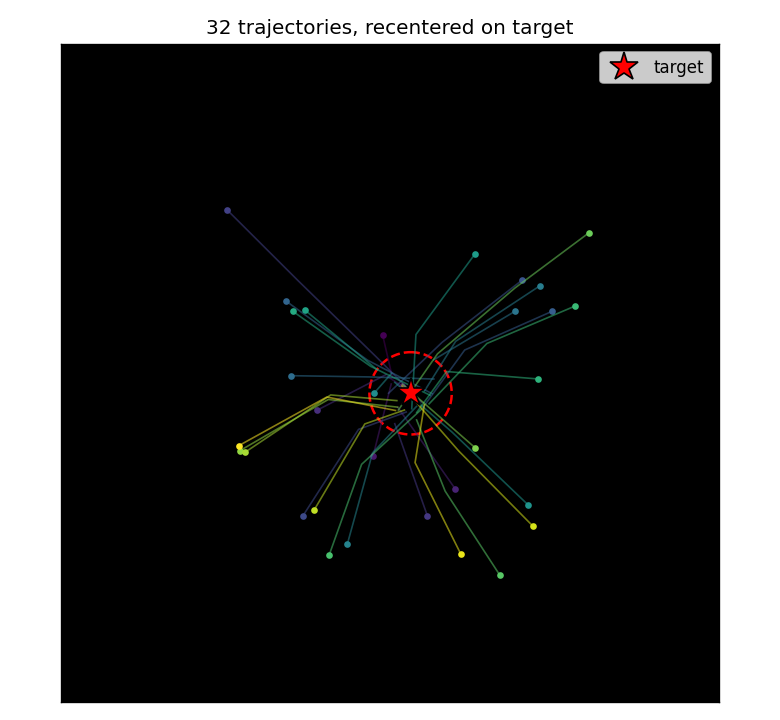

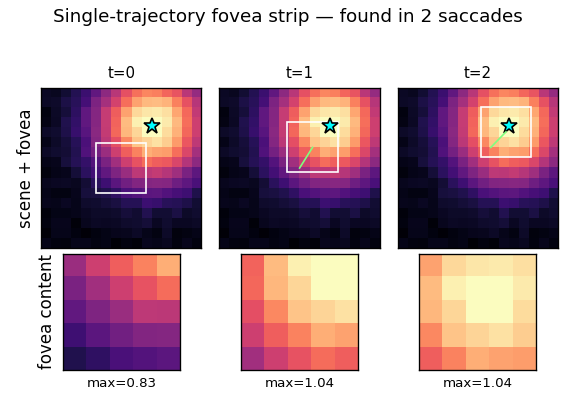

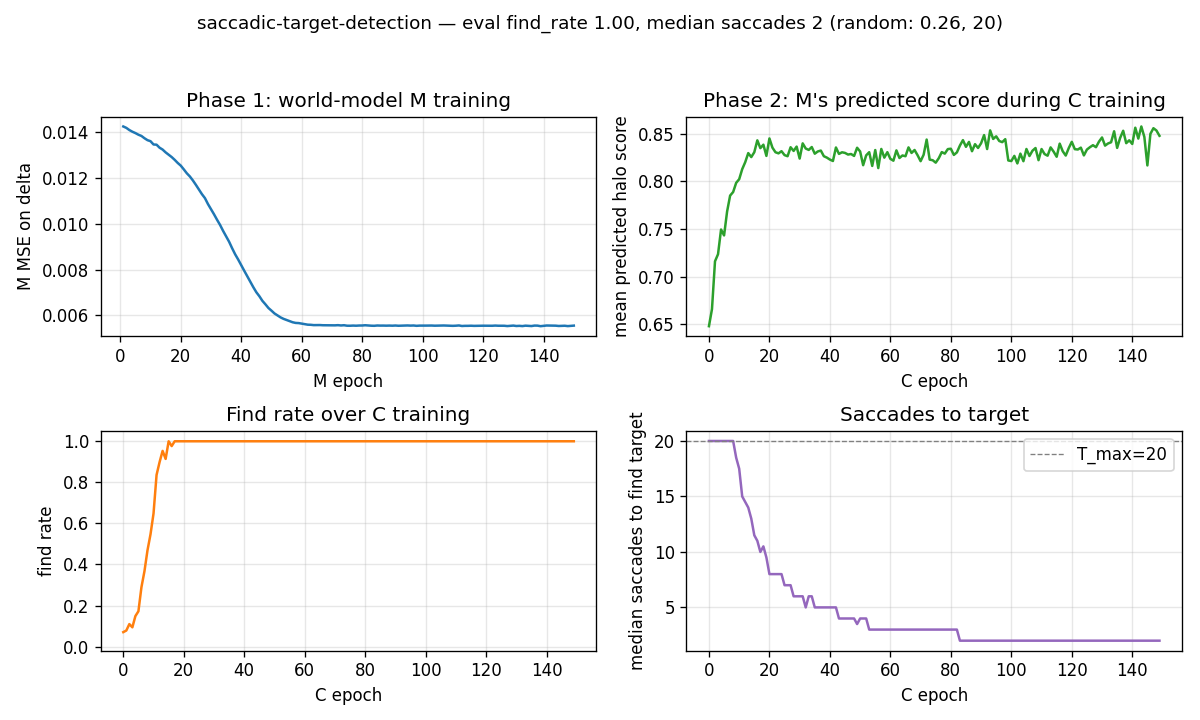

| saccadic-target-detection | yes (100% find rate, mean 1.69 saccades vs random 25.5%) | 5.4s |

1991 — Curiosity, subgoals, the chunker

Schmidhuber (1991) — Adaptive confidence and adaptive curiosity (FKI-149-91)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

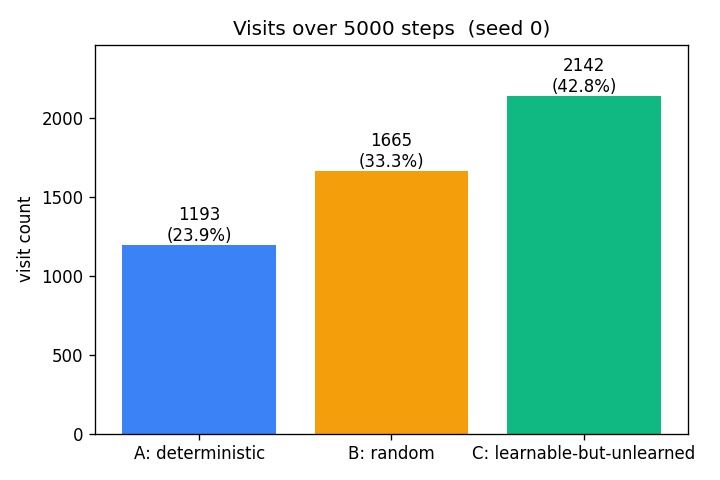

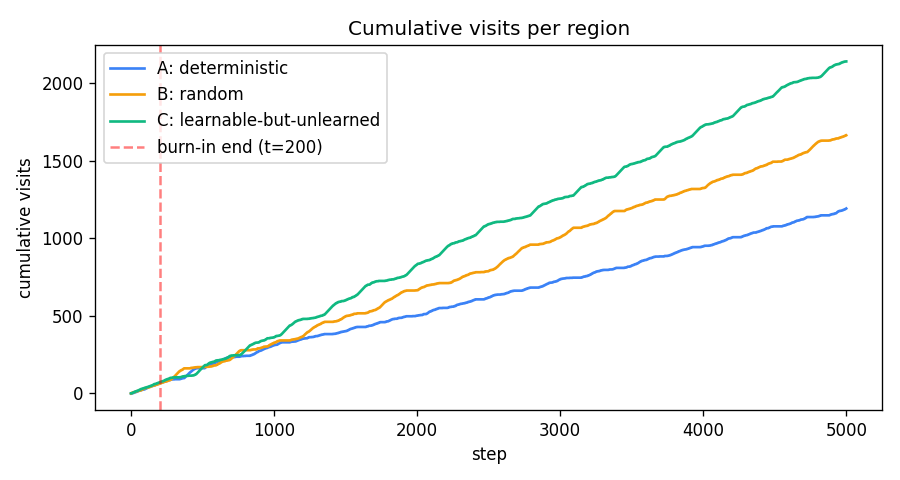

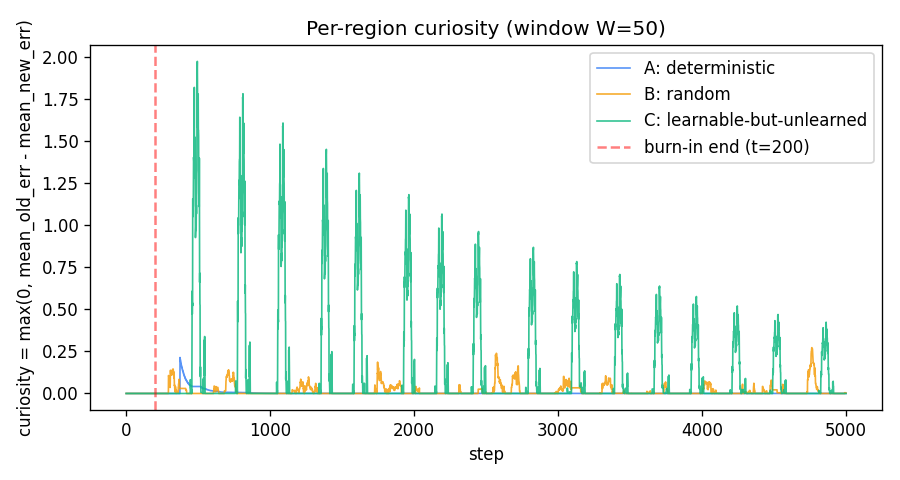

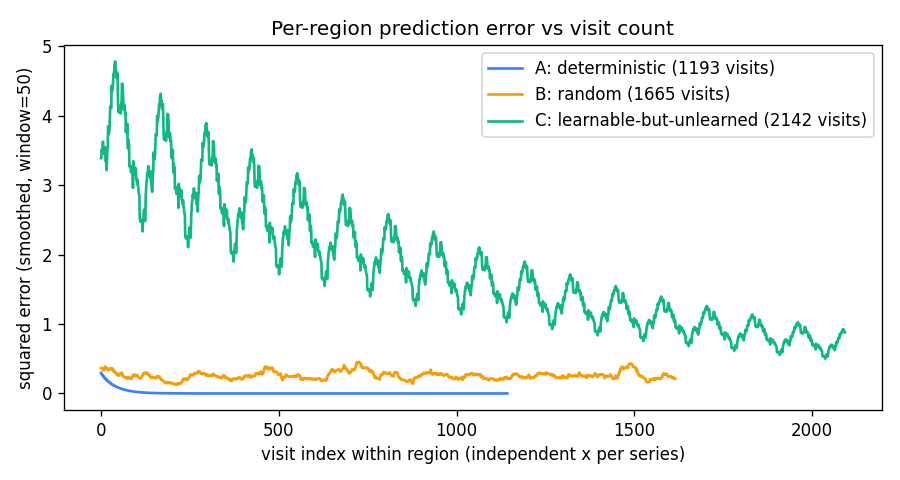

| curiosity-three-regions | yes (visit ordering C > B > A holds 100% across 10 seeds) | 0.5s |

Schmidhuber (1991) — Learning to generate sub-goals for action sequences (ICANN-91)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

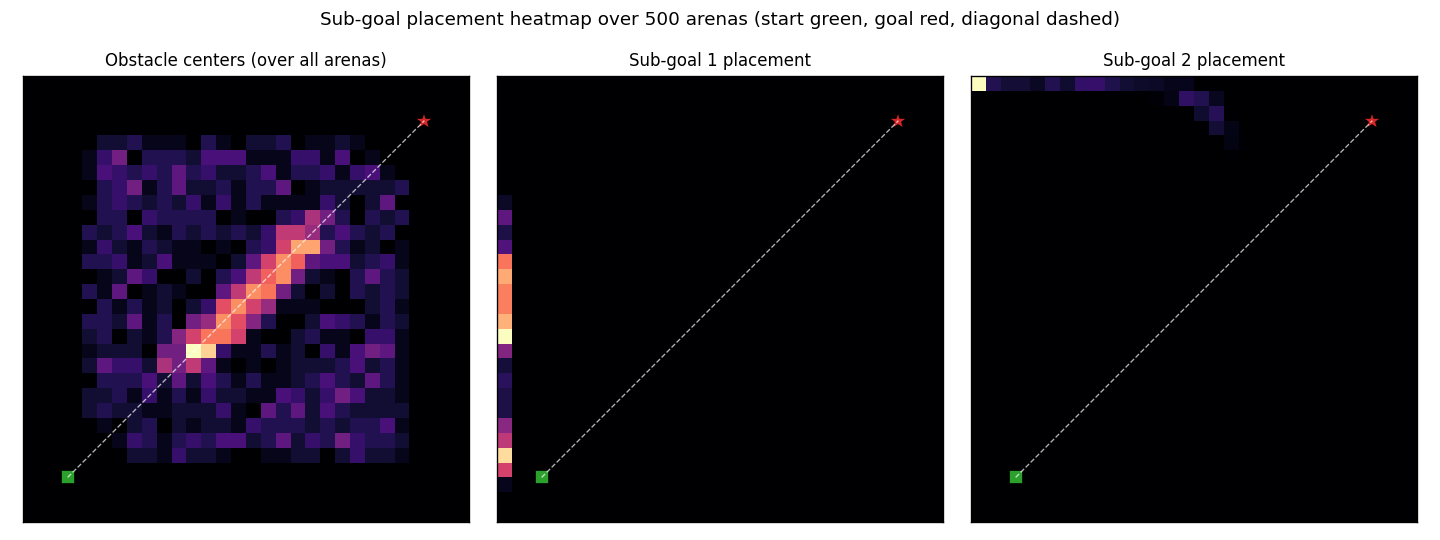

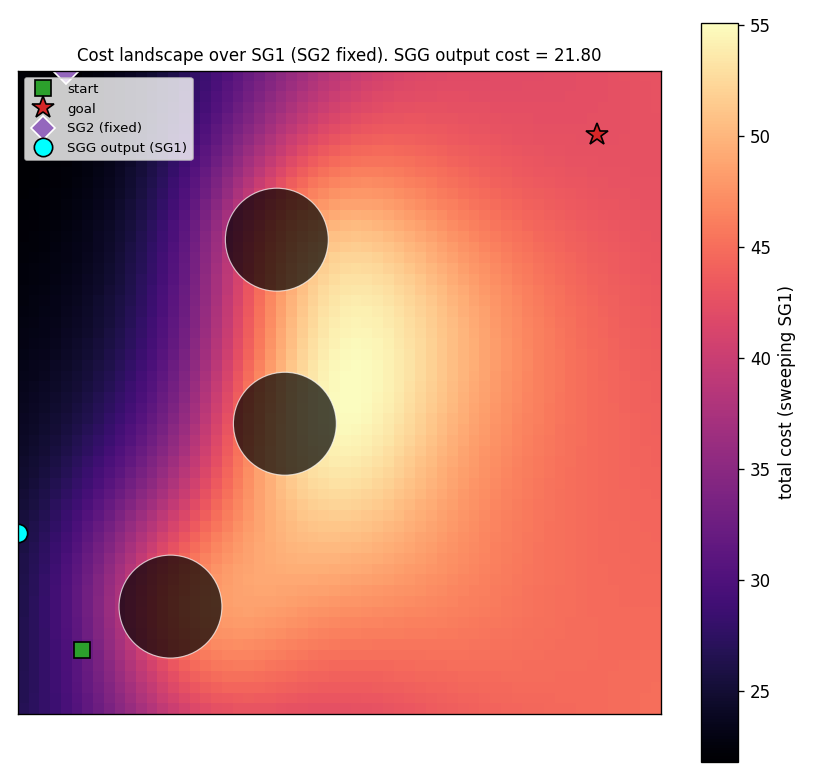

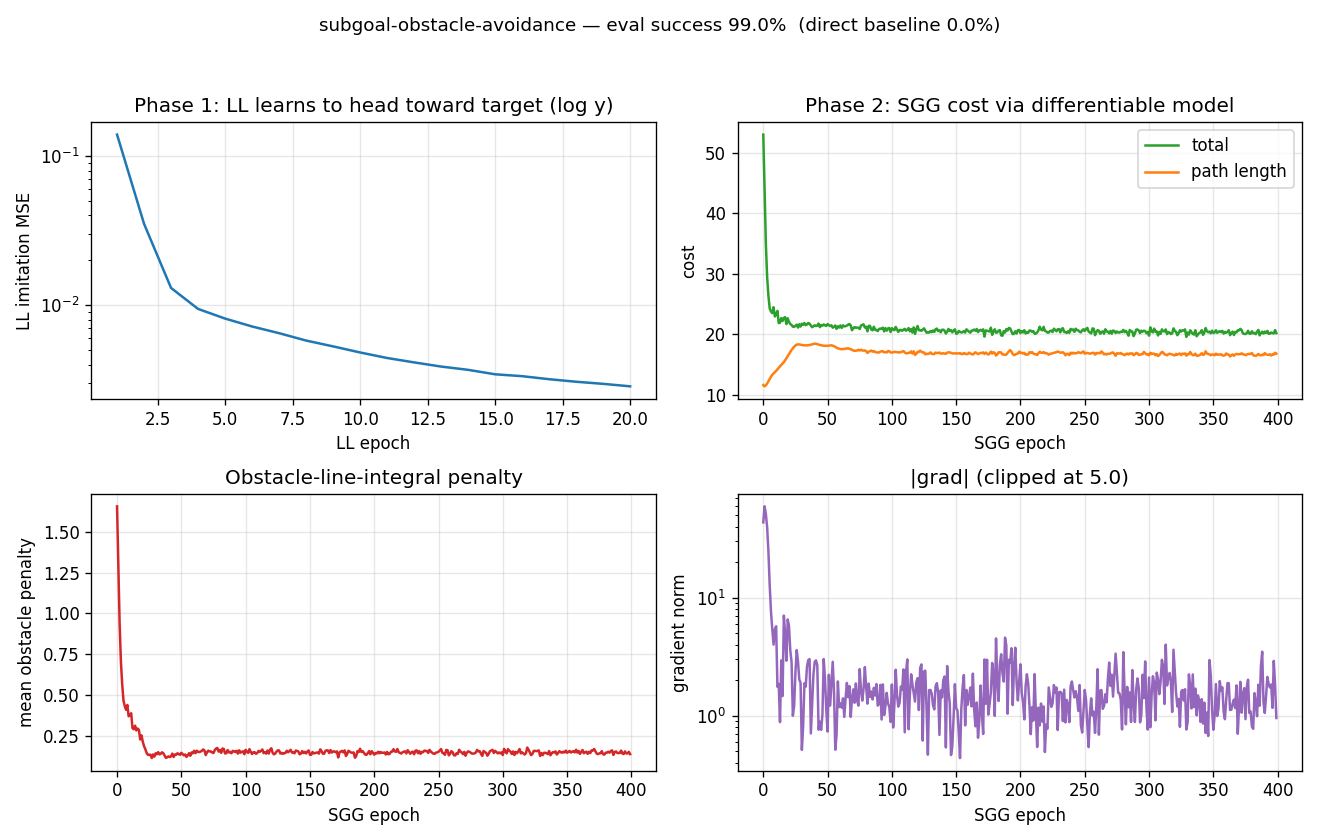

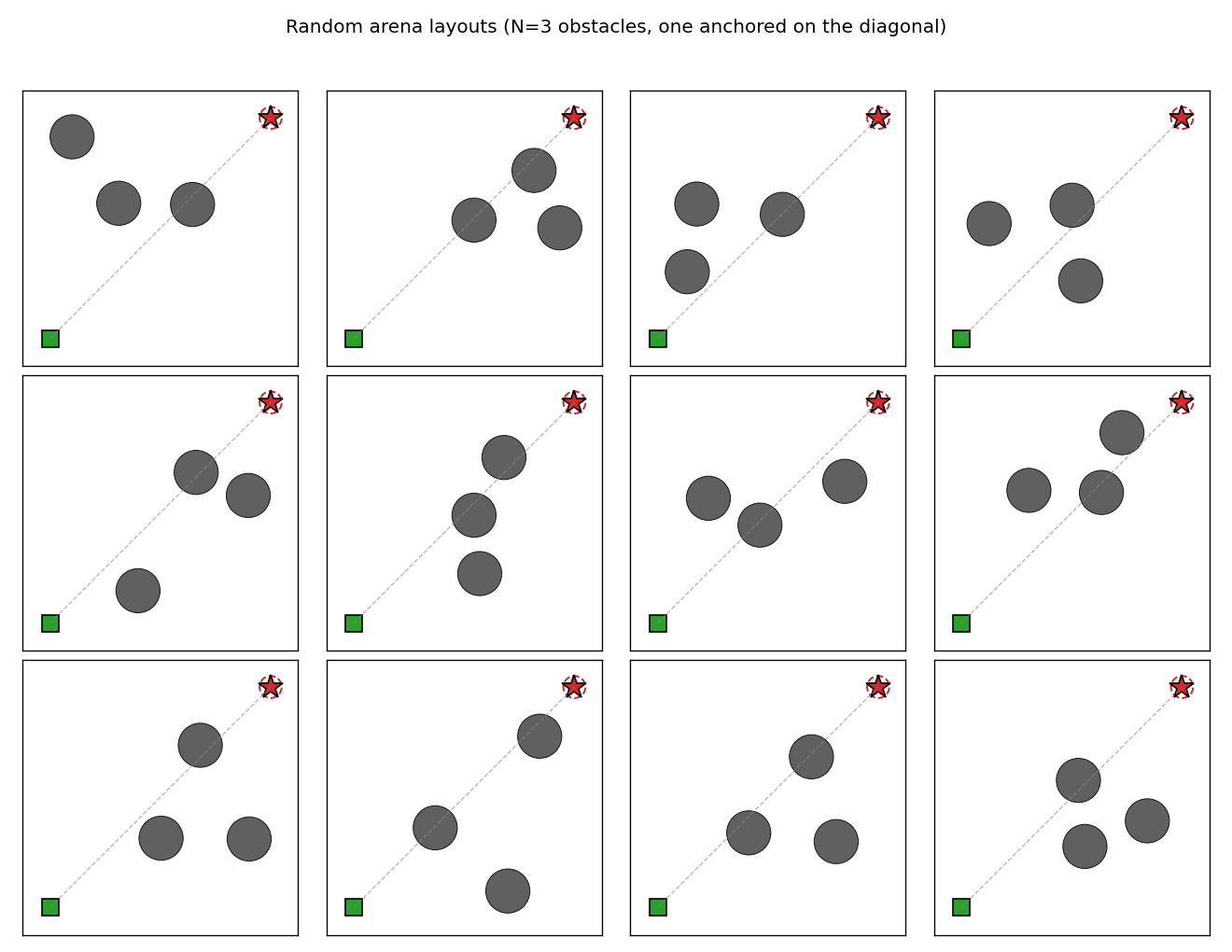

| subgoal-obstacle-avoidance | yes (99% success vs 0% no-sub-goal baseline; 10-seed mean 98.5%) | 6.4s |

Schmidhuber (1991) — Reinforcement learning in Markovian and non-Markovian environments (NIPS-3)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| pomdp-flag-maze | partial (6/10 seeds 100% solve, 4/10 stuck at 50%) | 22-32s |

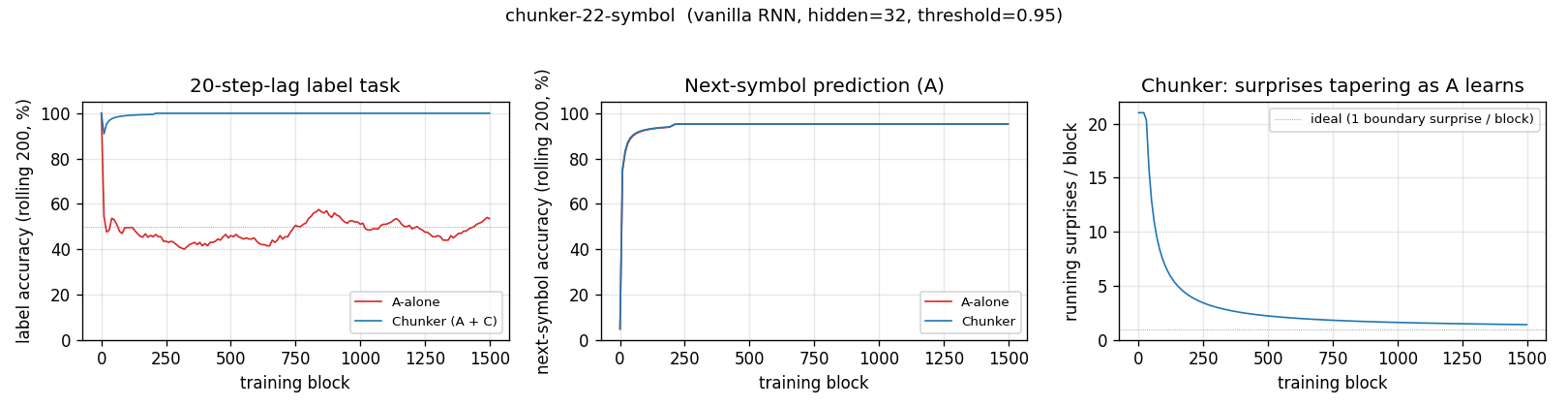

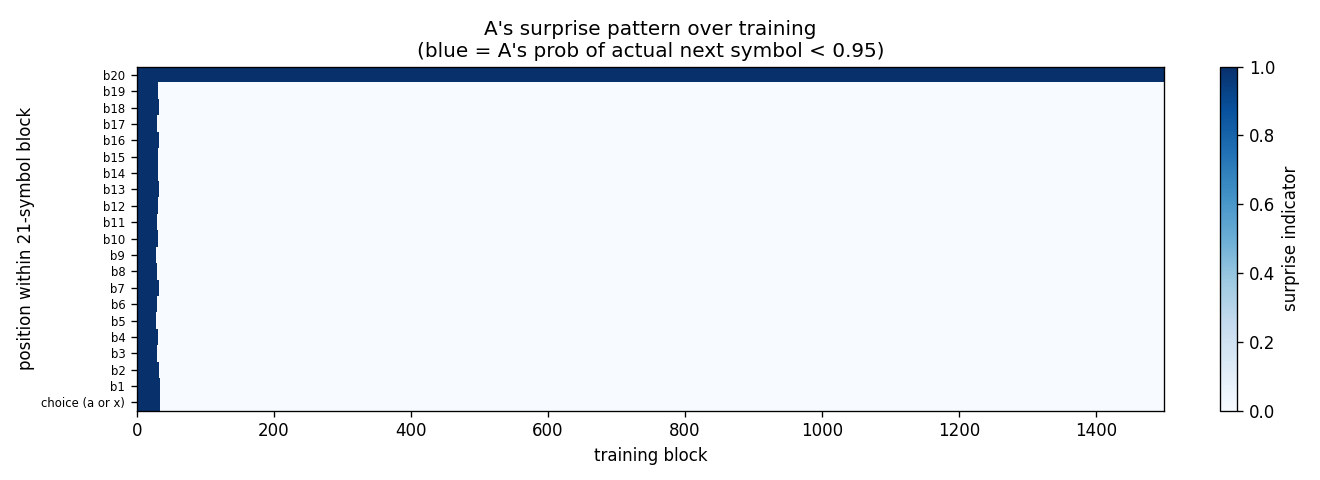

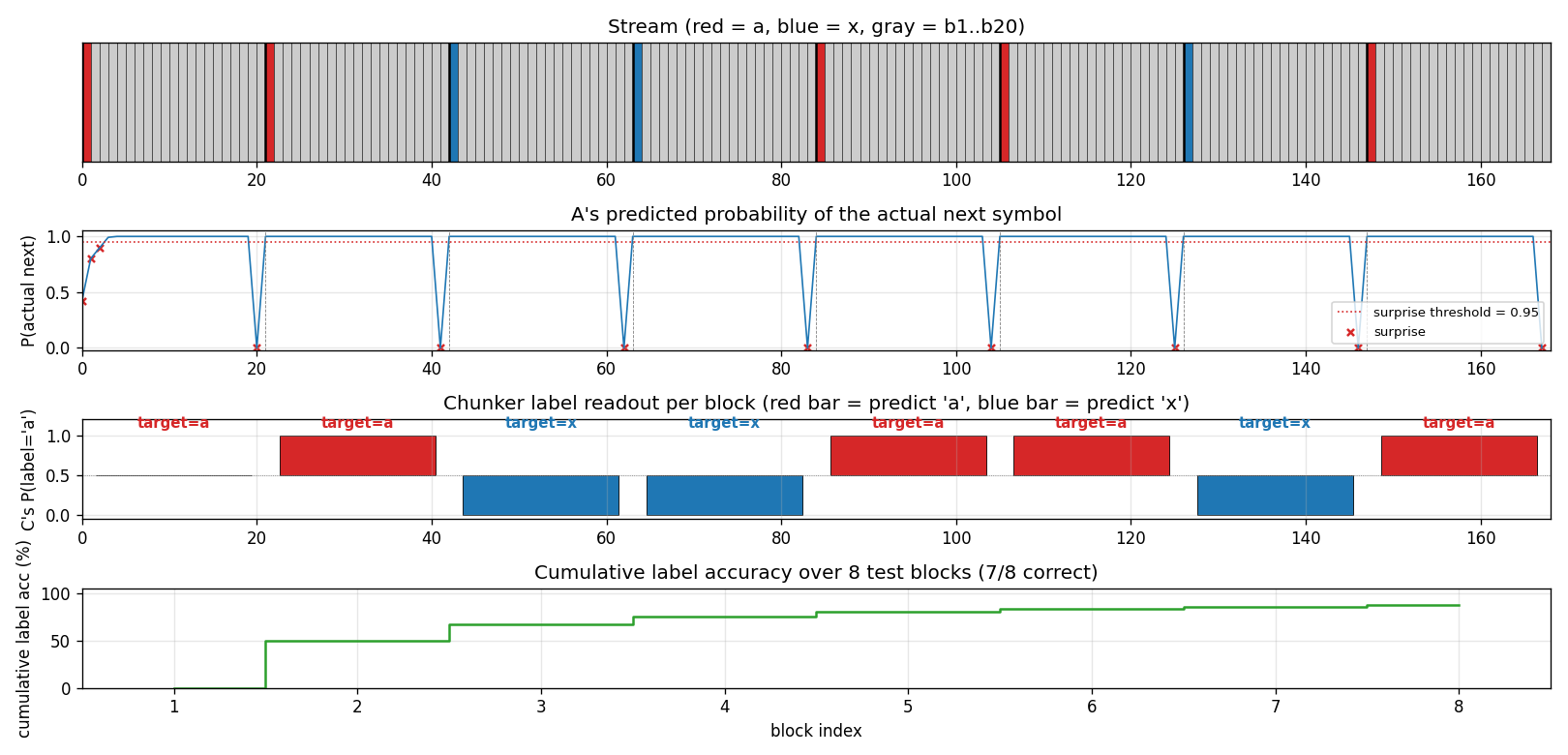

Schmidhuber (1991/1992) — Neural sequence chunkers / Learning complex extended sequences using the principle of history compression

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| chunker-22-symbol | yes (99.5% label acc 10/10 seeds; A-alone baseline at chance) | 1.86s |

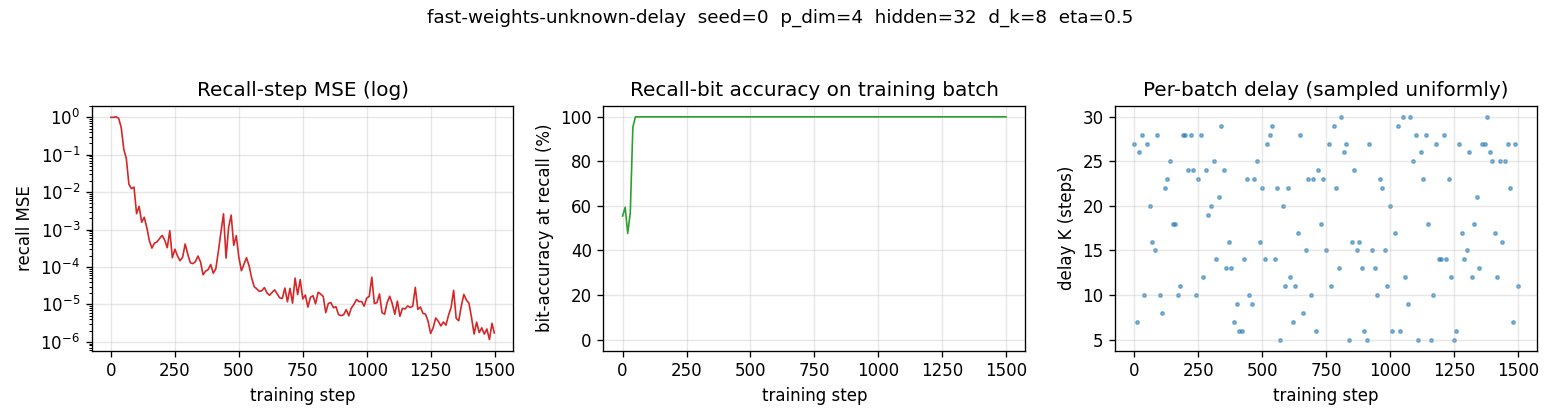

1992 — Neural Computation triple

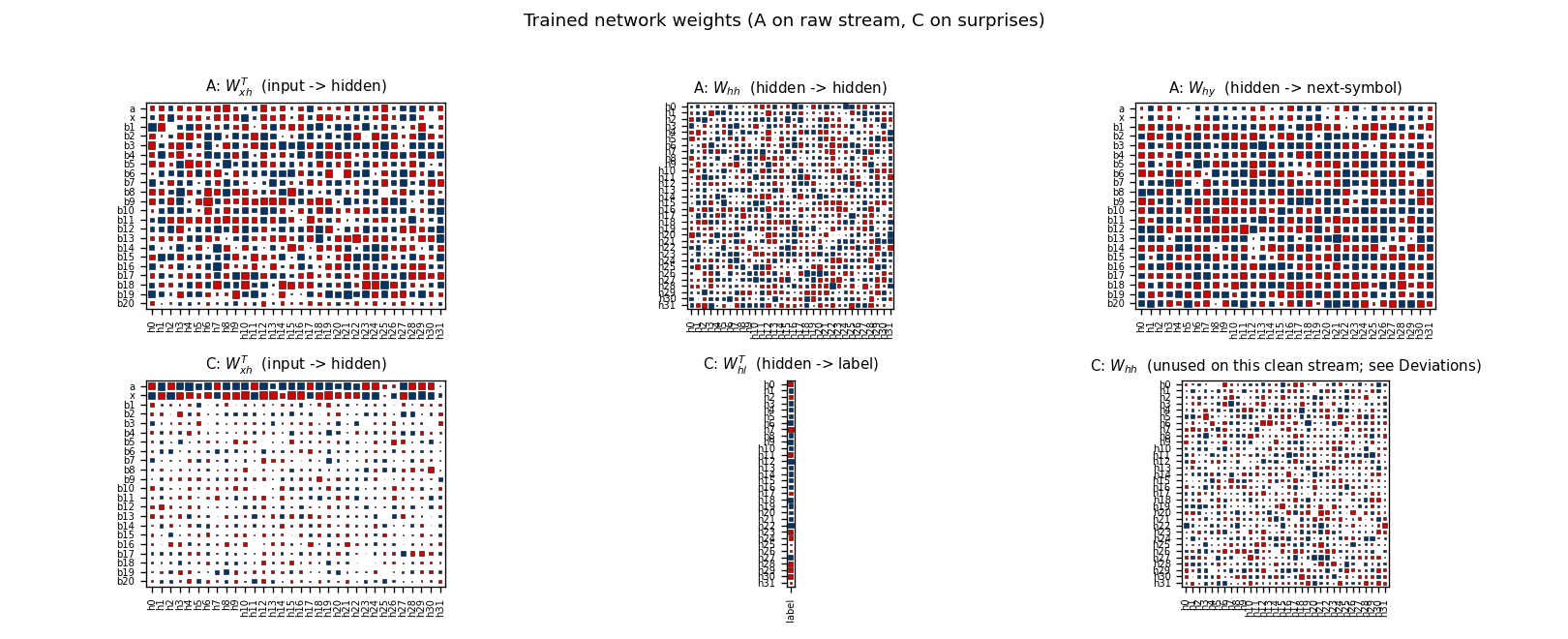

Schmidhuber (1992) — Learning to control fast-weight memories (NC 4(1))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

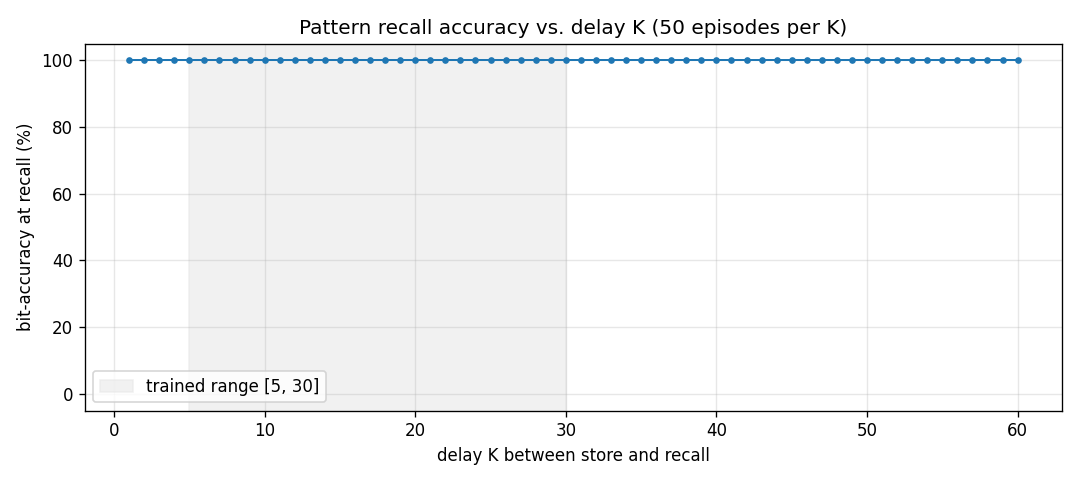

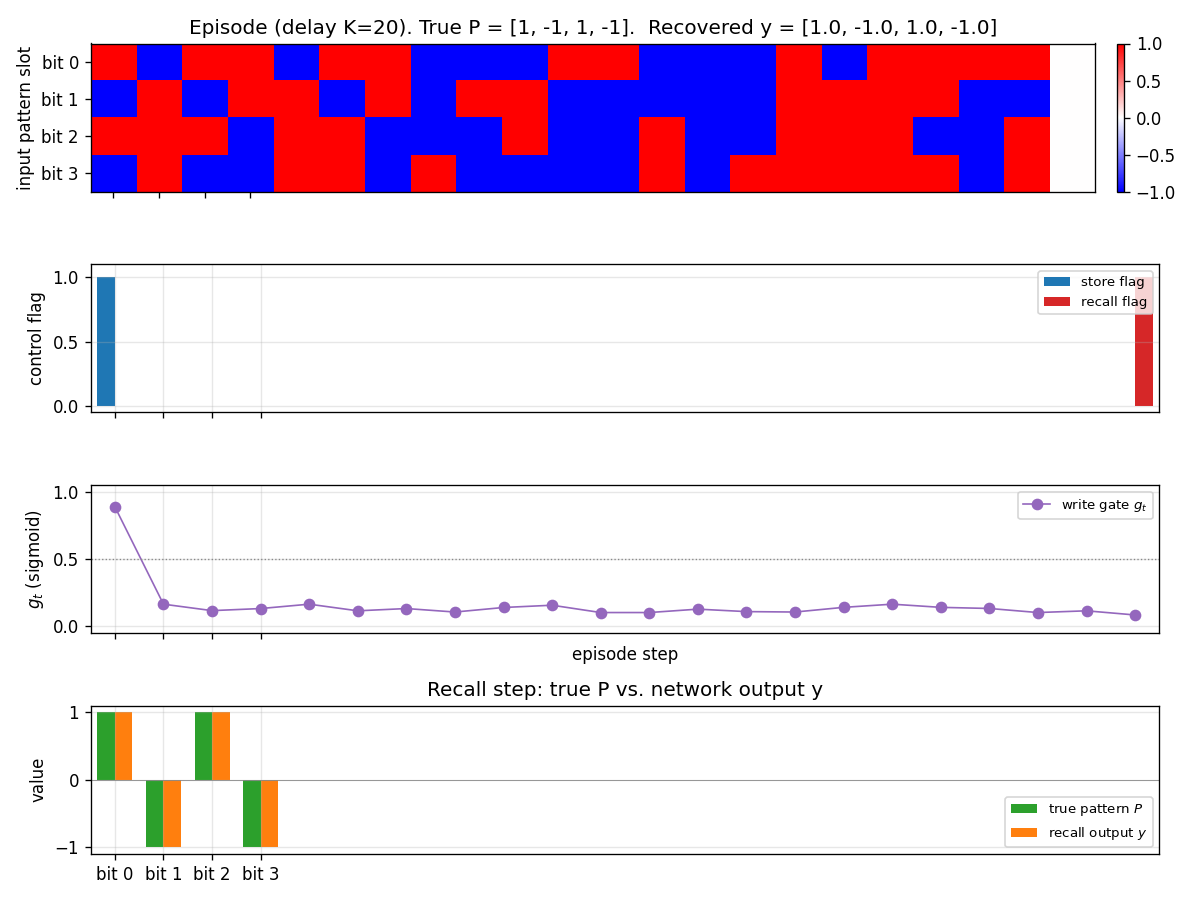

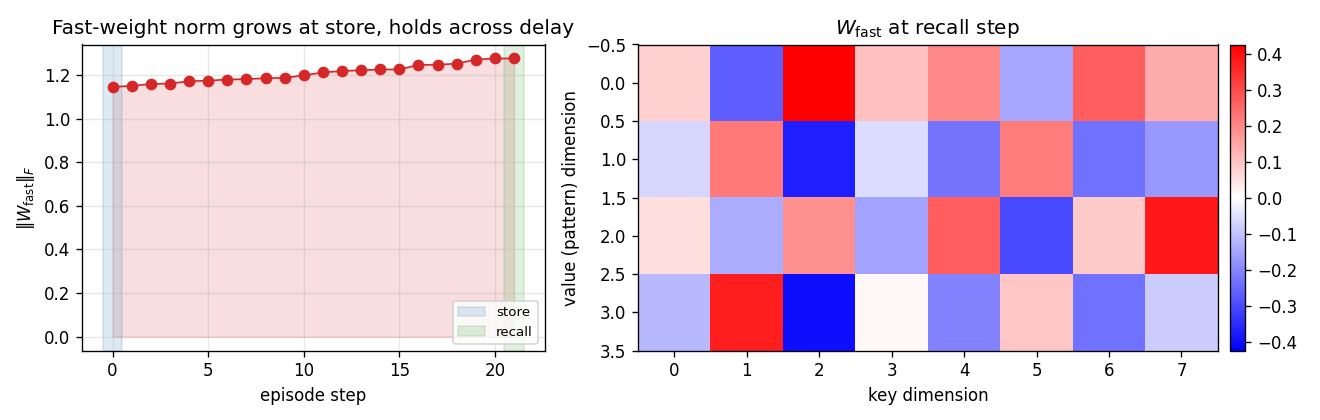

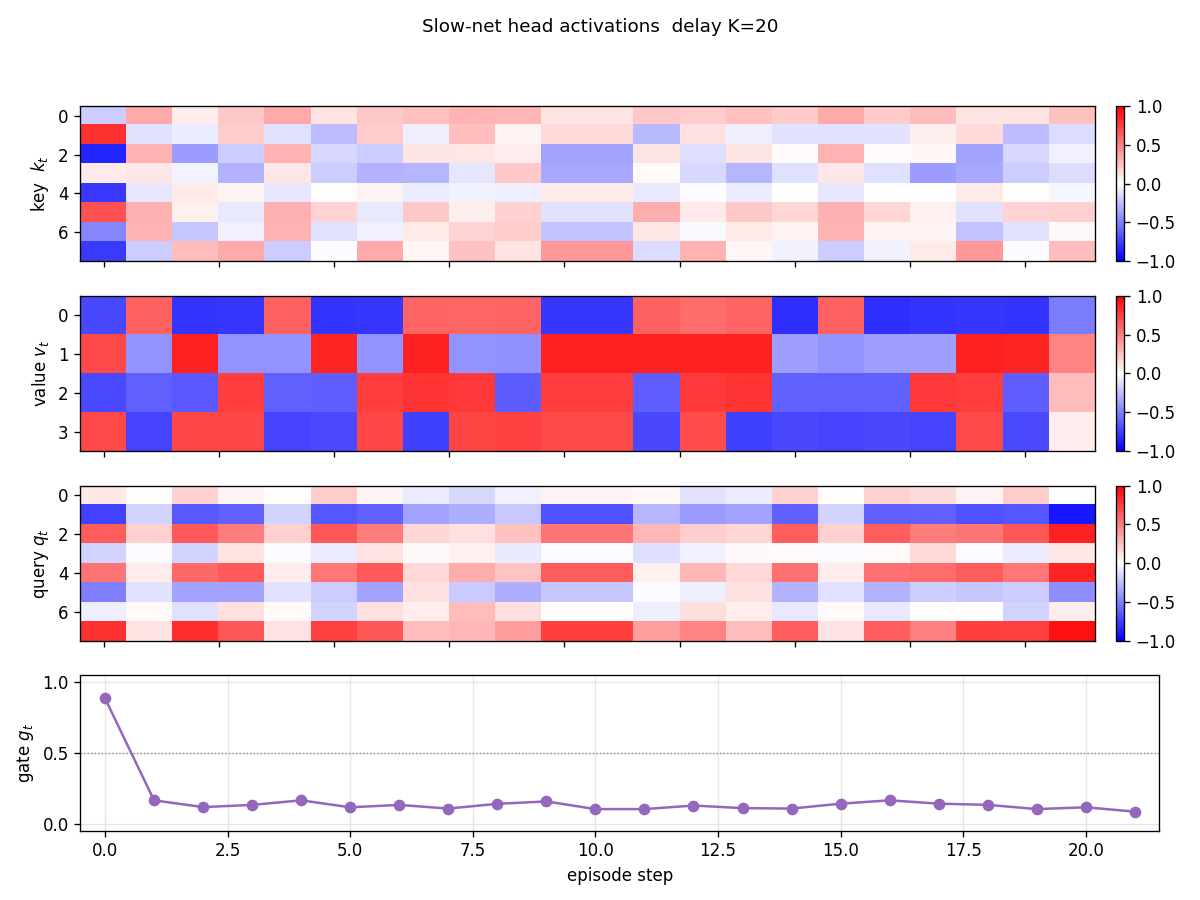

| fast-weights-unknown-delay | yes (100% bit-acc K=5-30 trained / K=1-60 extrapolation; 10/10 seeds) | 3s |

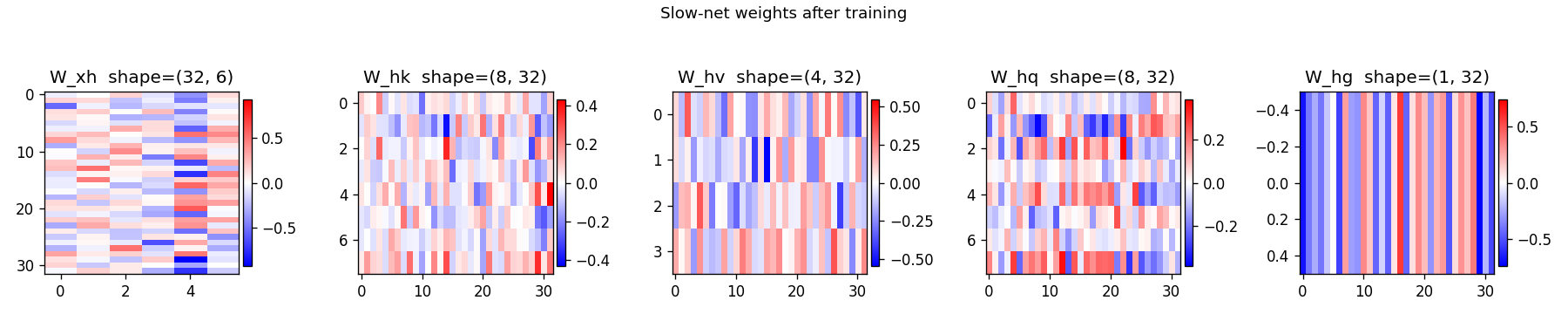

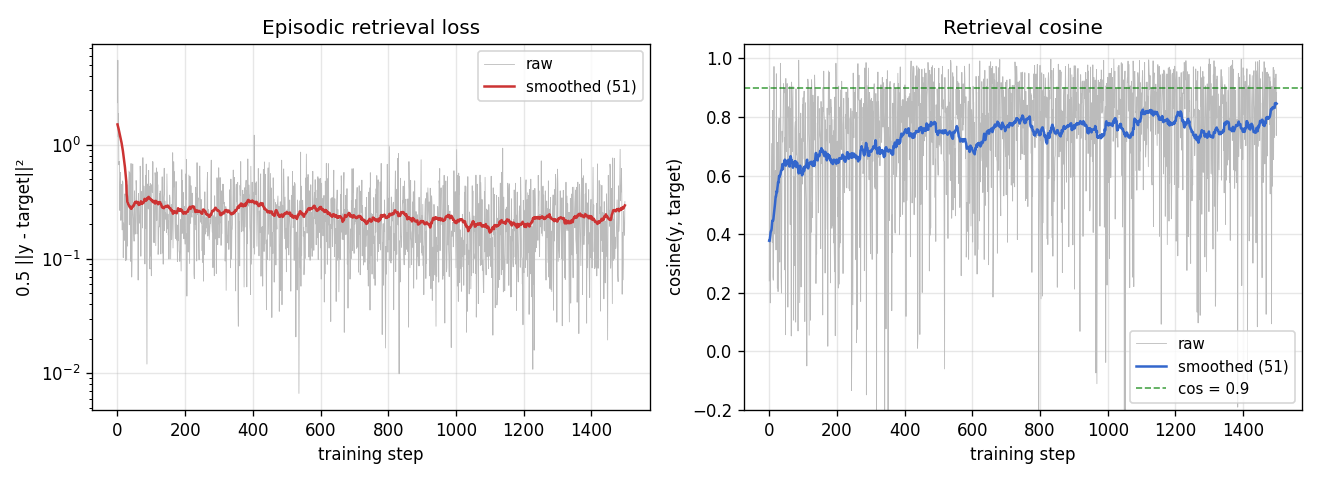

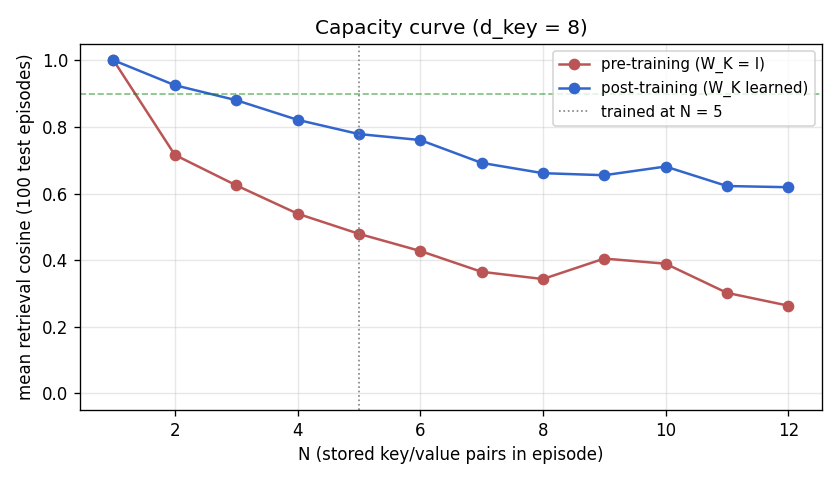

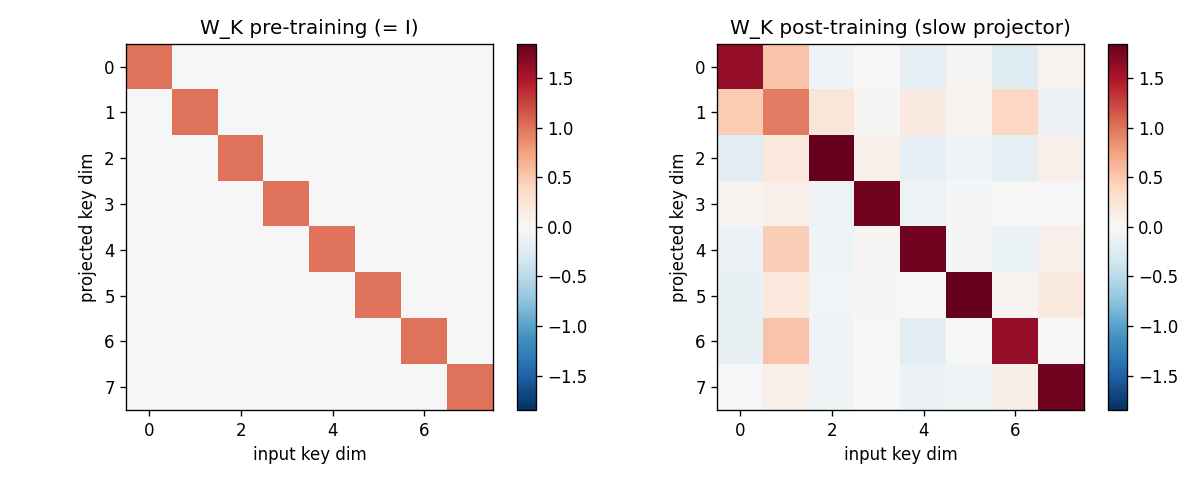

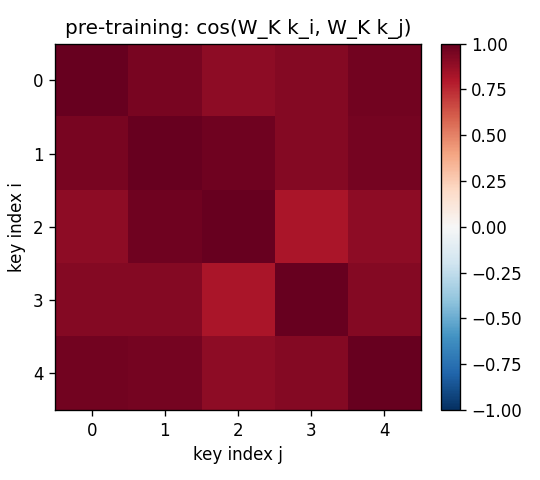

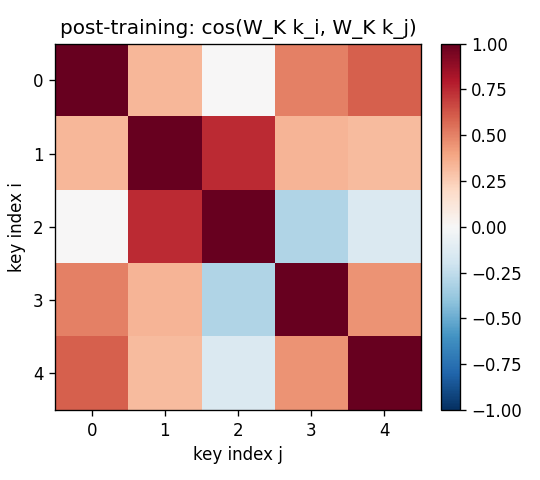

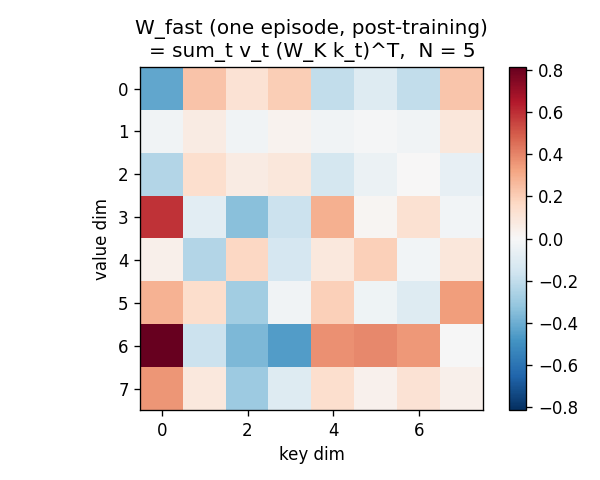

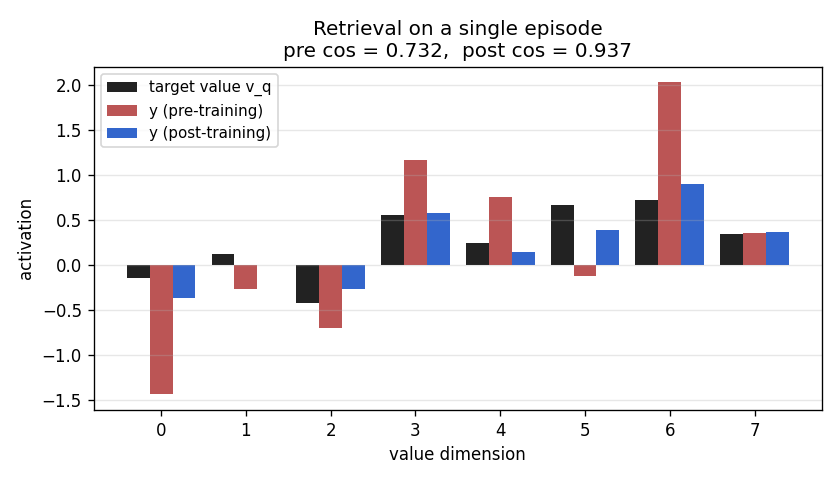

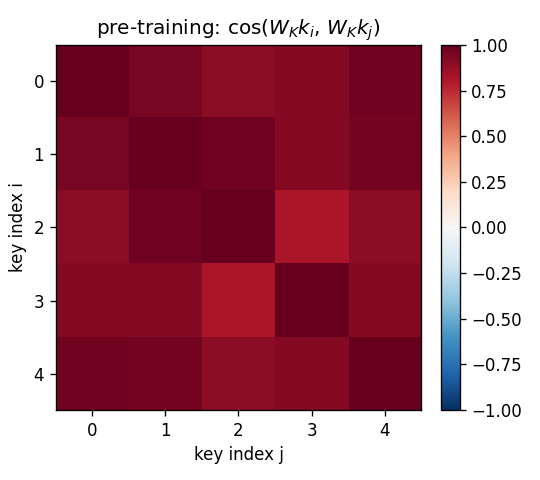

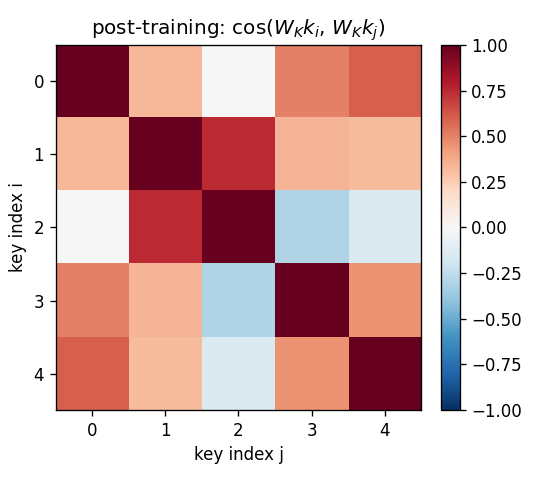

| fast-weights-key-value | yes (cos 0.428 → 0.754, 1.76× lift; numerical grad-check <1e-9) | 0.07s |

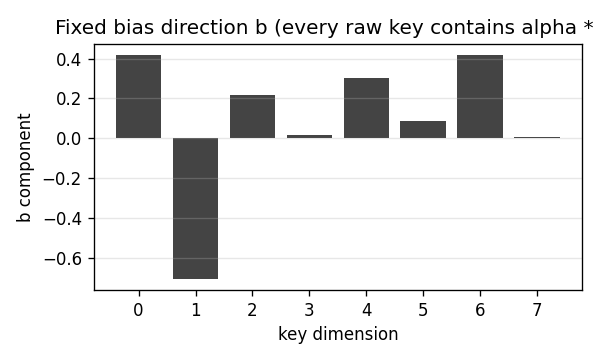

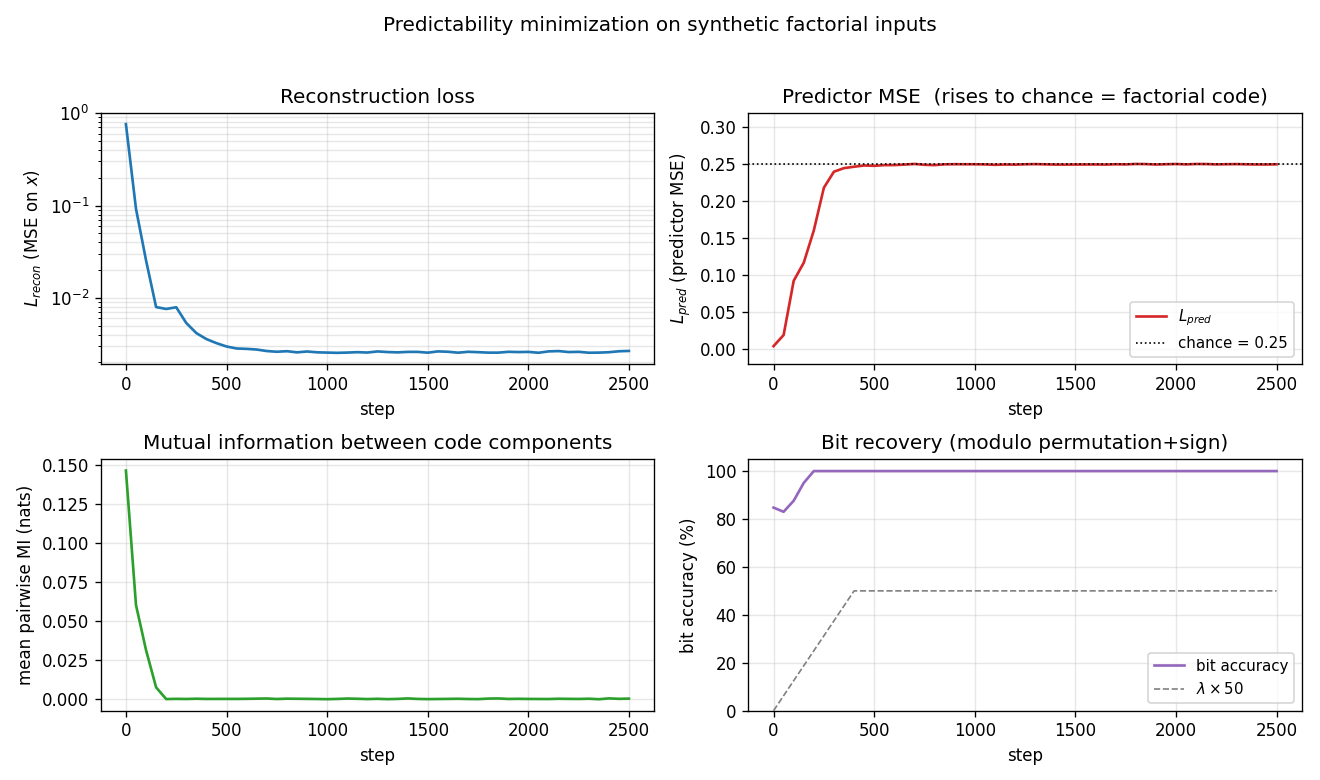

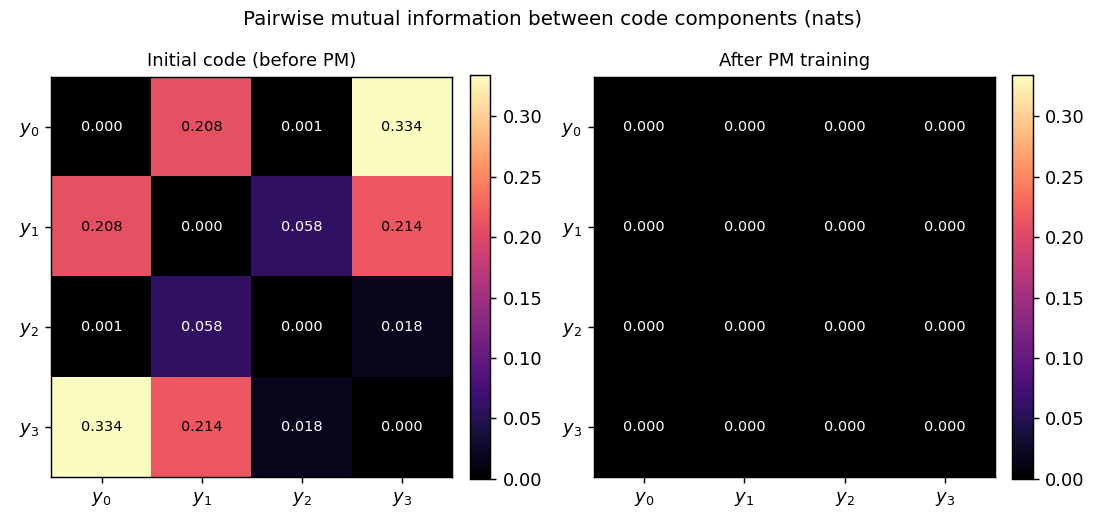

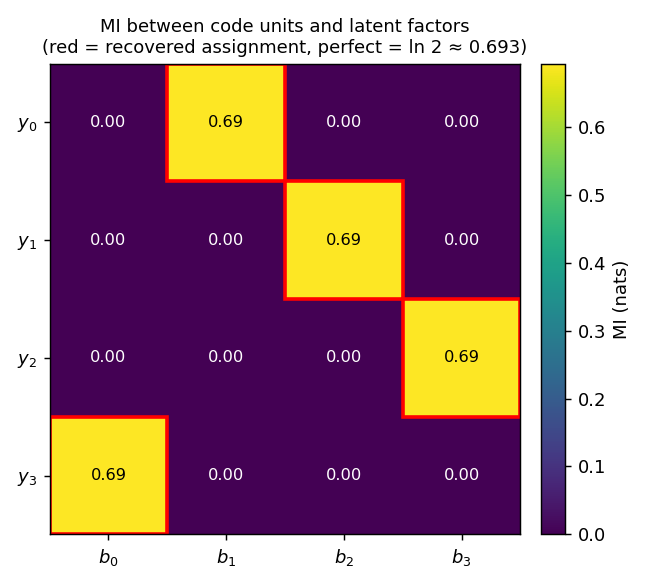

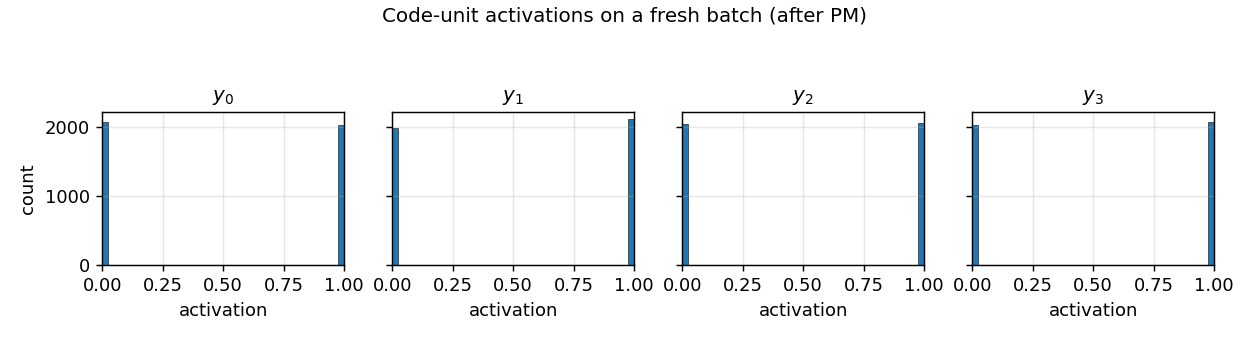

Schmidhuber (1992) — Learning factorial codes by predictability minimization (NC 4(6))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| predictability-min-binary-factors | yes (L_pred = 0.2500 chance; pairwise MI 9.6e-5 nats; 8/8 seeds 100%) | 2.8s |

1993 — Predictable classifications, self-reference, very deep chunking

Schmidhuber & Prelinger (1993) — Discovering predictable classifications (NC 5(4))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| predictable-stereo | yes (depth recovery 1.000 seed 0; 8/8 seeds 0.997 mean) | 0.08s |

Schmidhuber (1993) — A self-referential weight matrix (ICANN-93)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| self-referential-weight-matrix | partial (99.6% on 4-way boolean meta-learning; 8/8 seeds > 0.95) | 4.5s |

Schmidhuber (1993) — Habilitationsschrift, Netzwerkarchitekturen, Zielfunktionen und Kettenregel

| Stub | Reproduces? | Run wallclock |

|---|---|---|

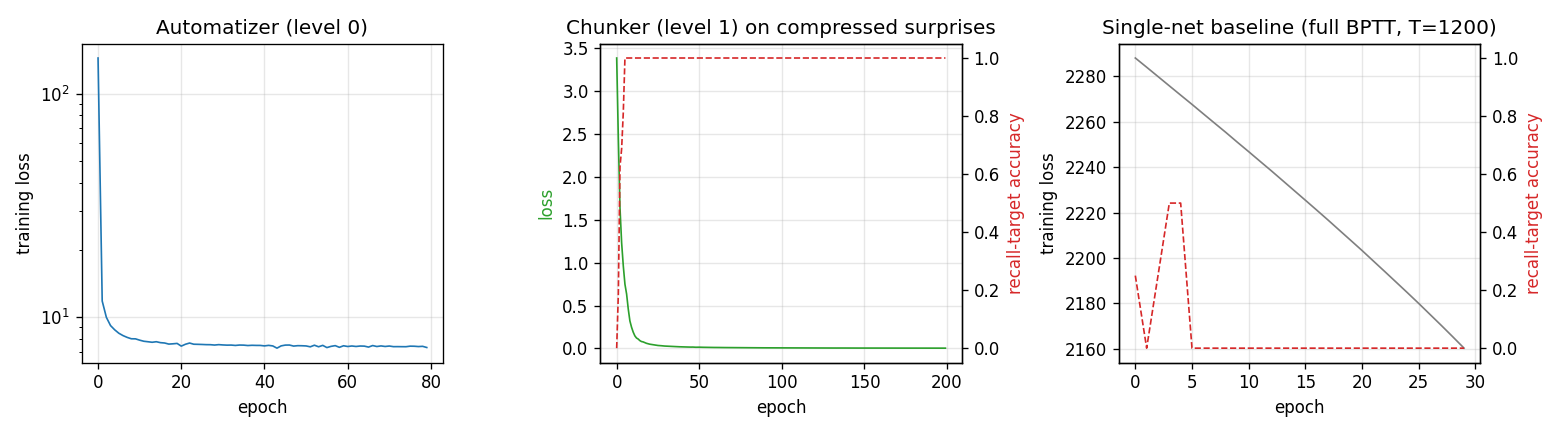

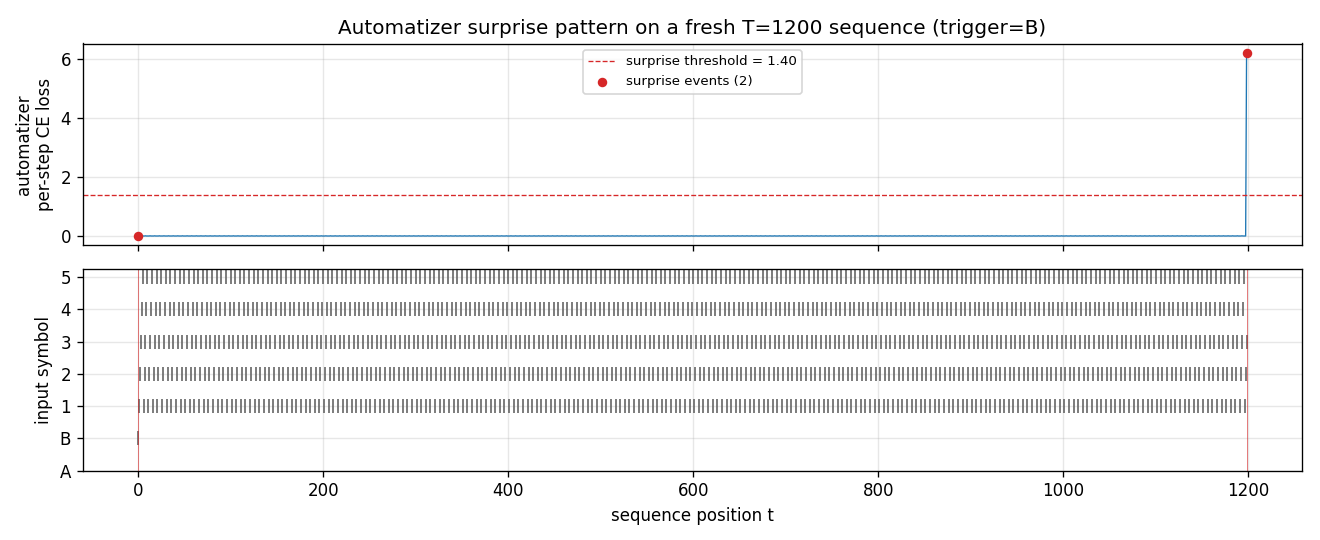

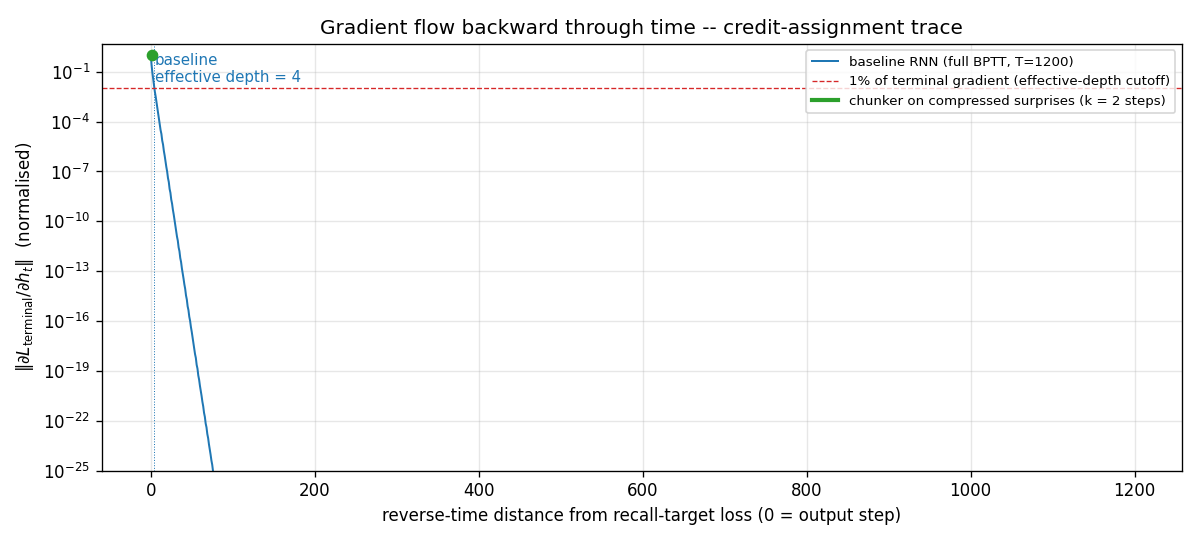

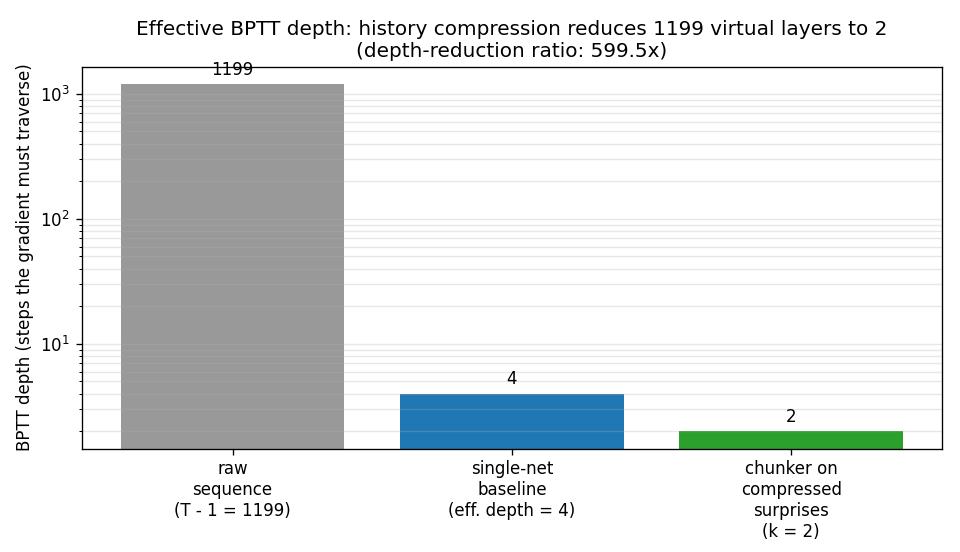

| chunker-very-deep-1200 | yes (599.5× depth-reduction at T=1200; chunker 100% vs single-net 0%) | 29.8s |

1995–1997 — Levin search and the LSTM benchmark suite

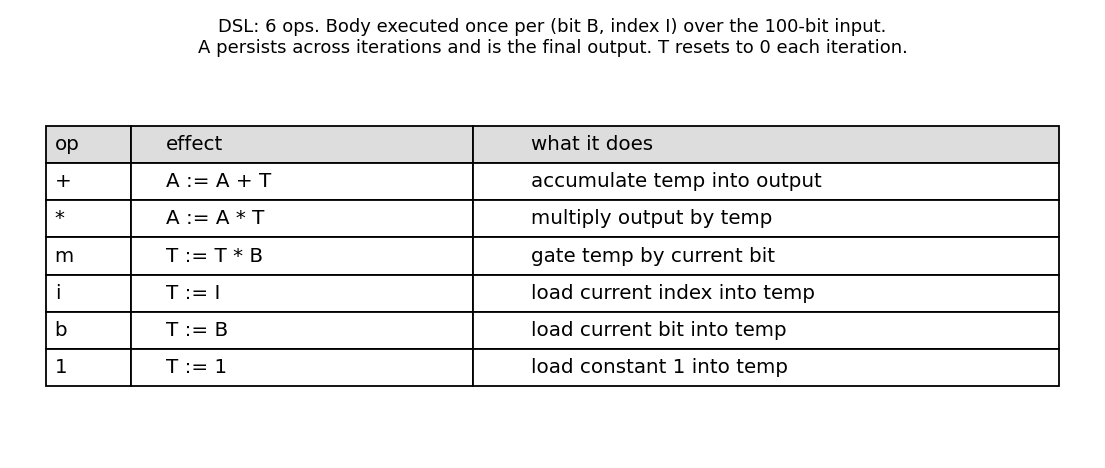

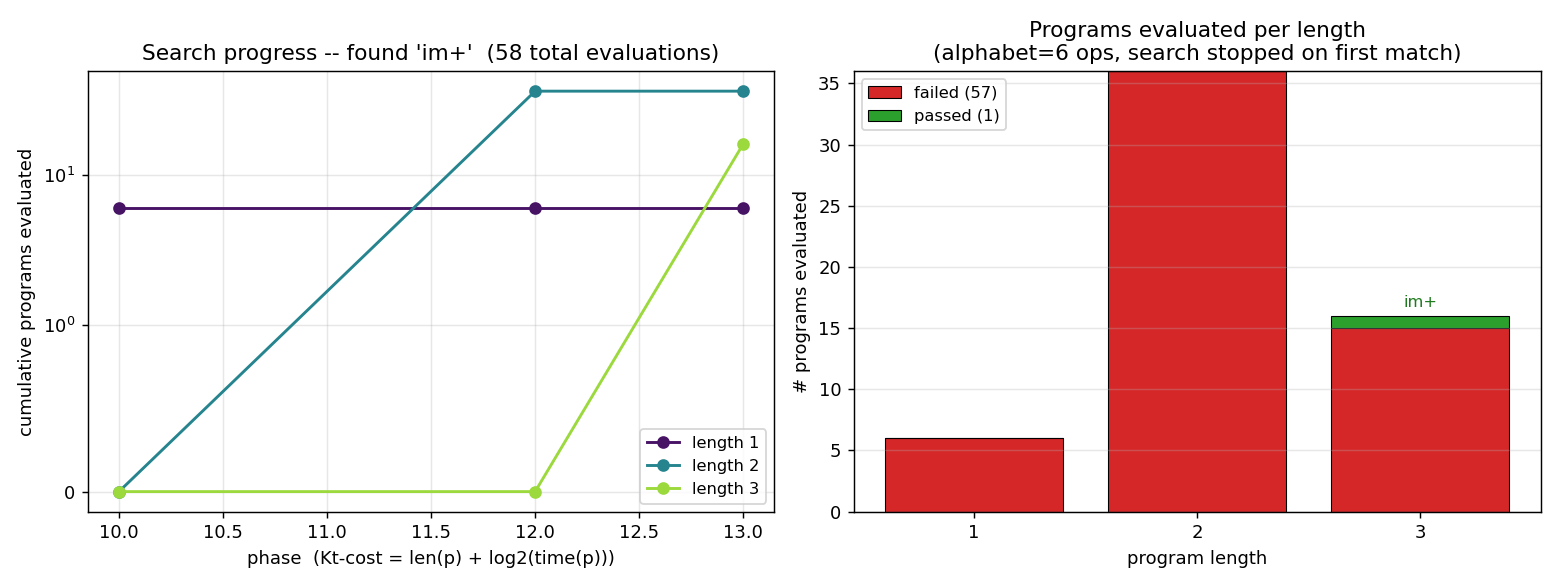

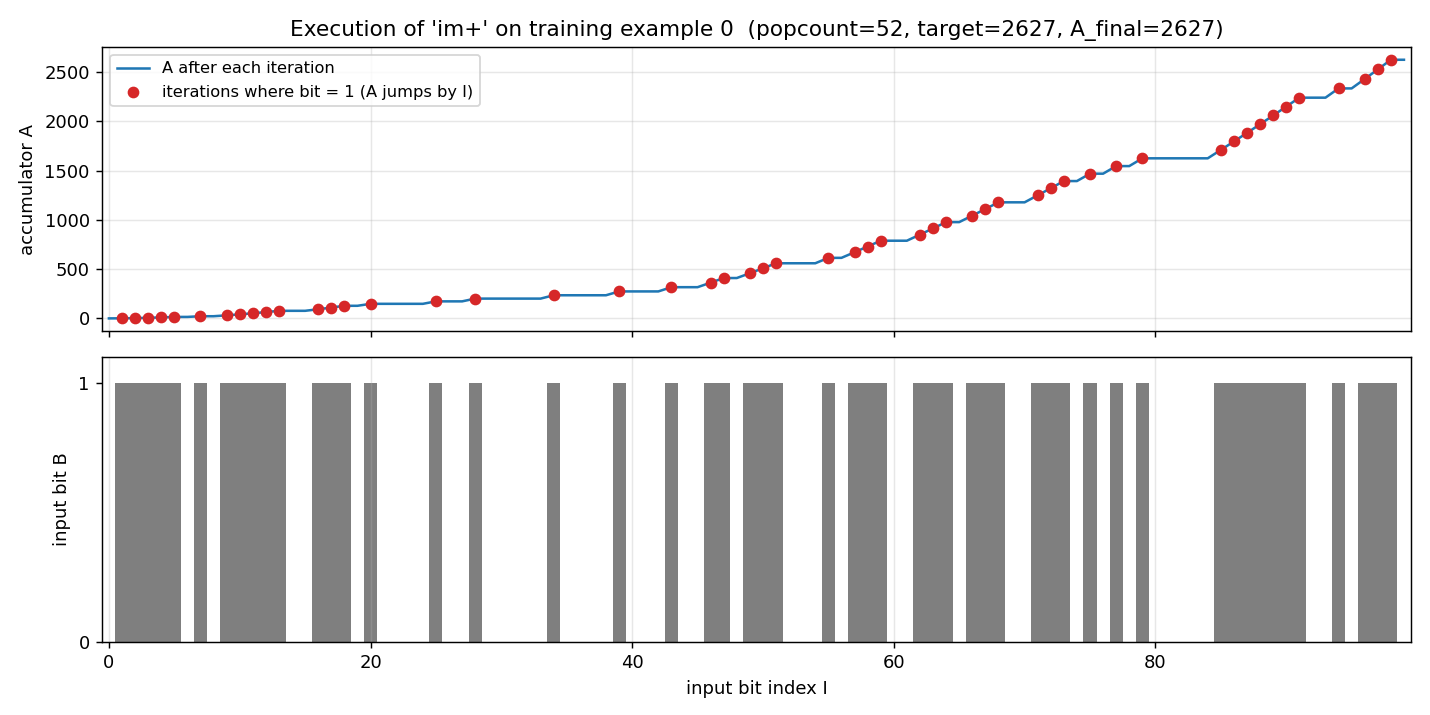

Schmidhuber (1995/1997) — Discovering solutions with low Kolmogorov complexity (ICML / NN 10)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

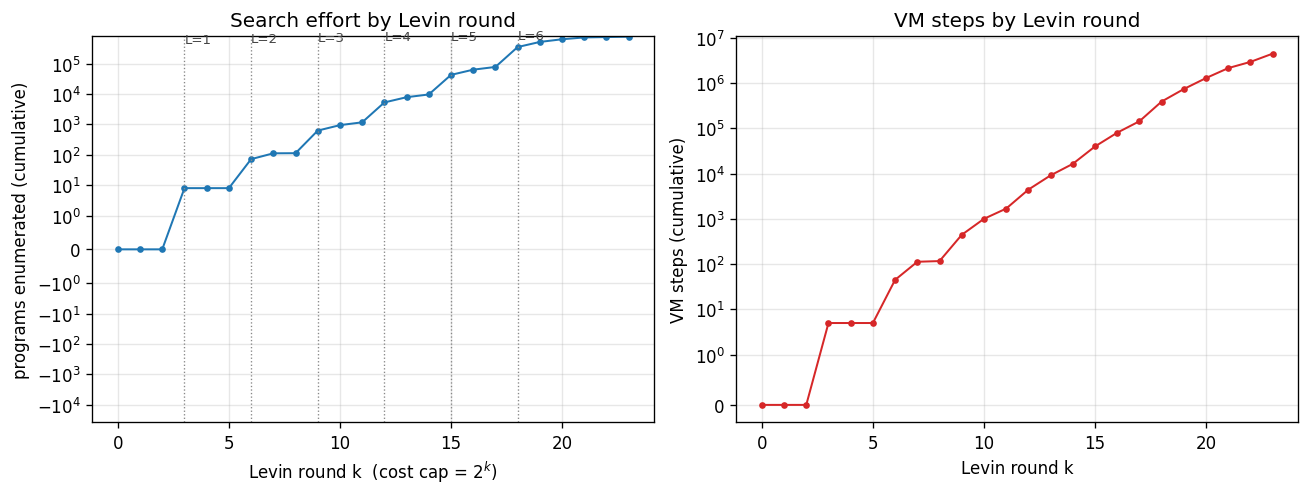

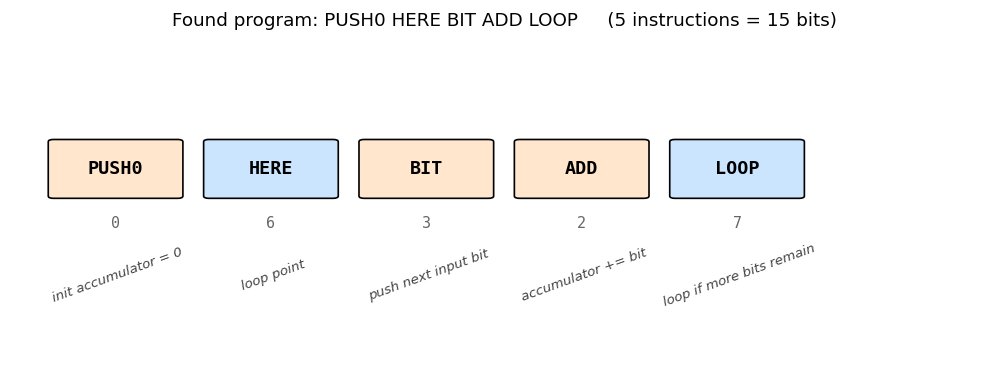

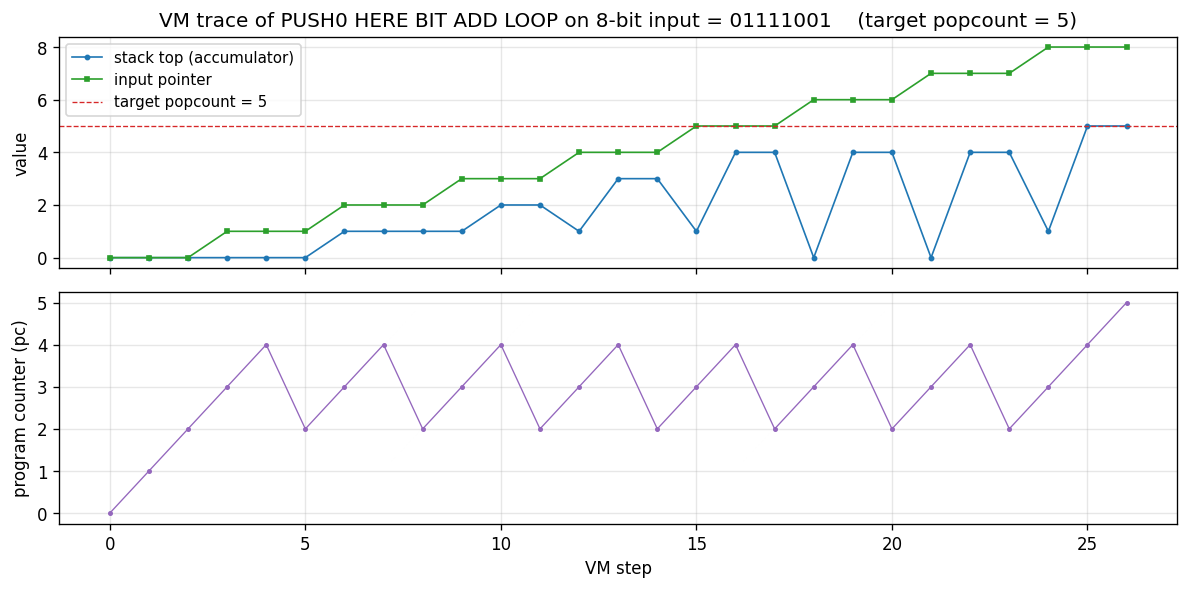

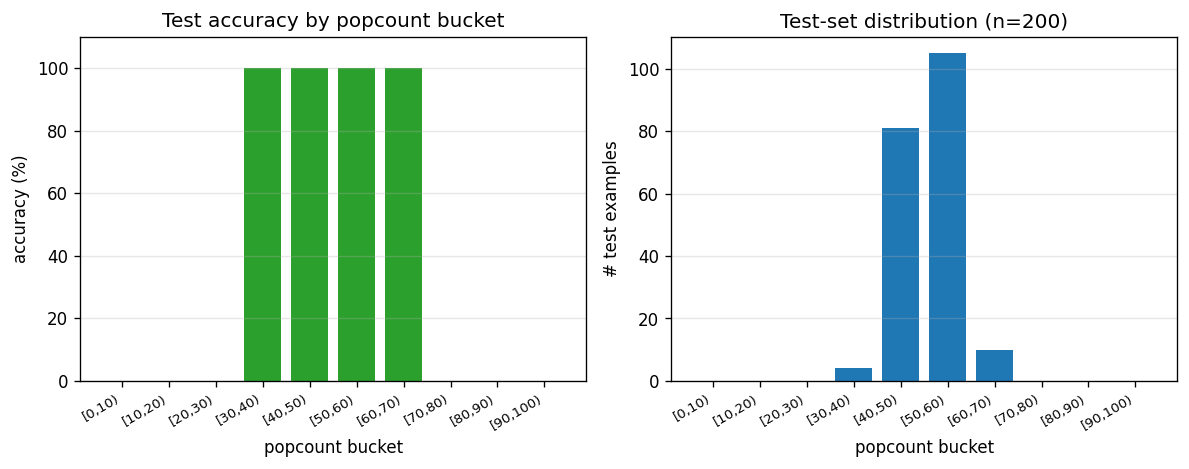

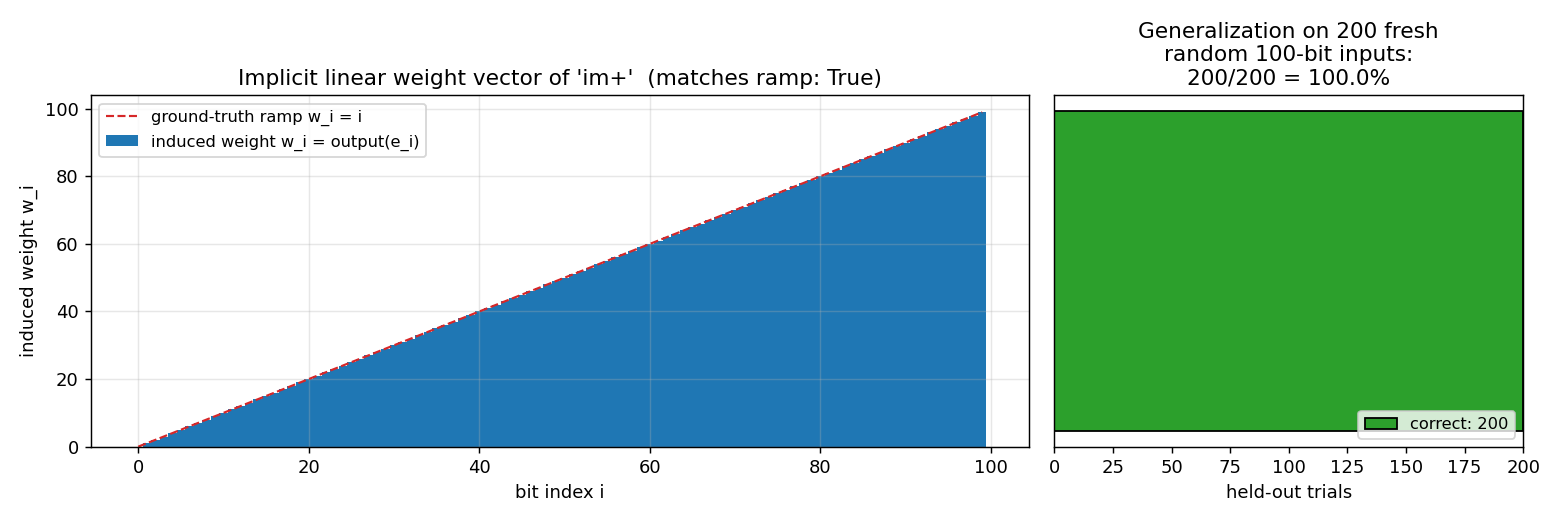

| levin-count-inputs | yes (5-instr popcount, 770k programs, 200/200 generalize) | 1.0s |

| levin-add-positions | yes (3-instr im+, 58 evals, 200/200 generalize) | 0.34s |

Hochreiter & Schmidhuber (1996) — LSTM can solve hard long time lag problems (NIPS 9)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

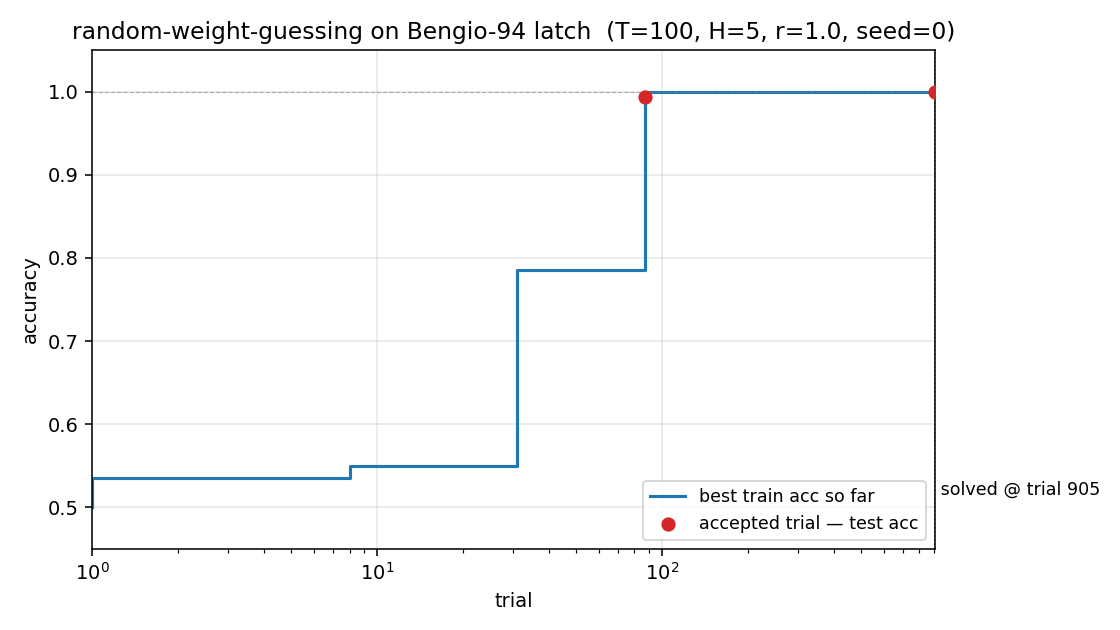

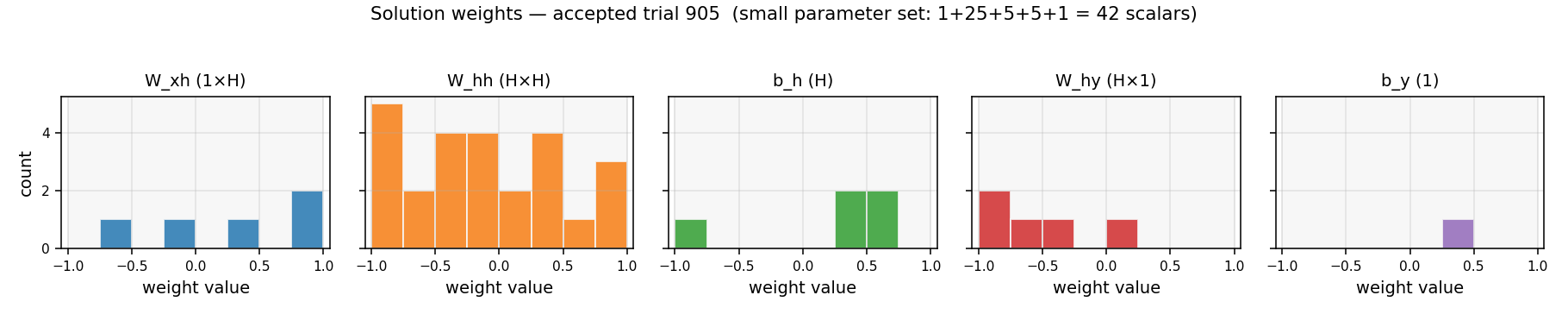

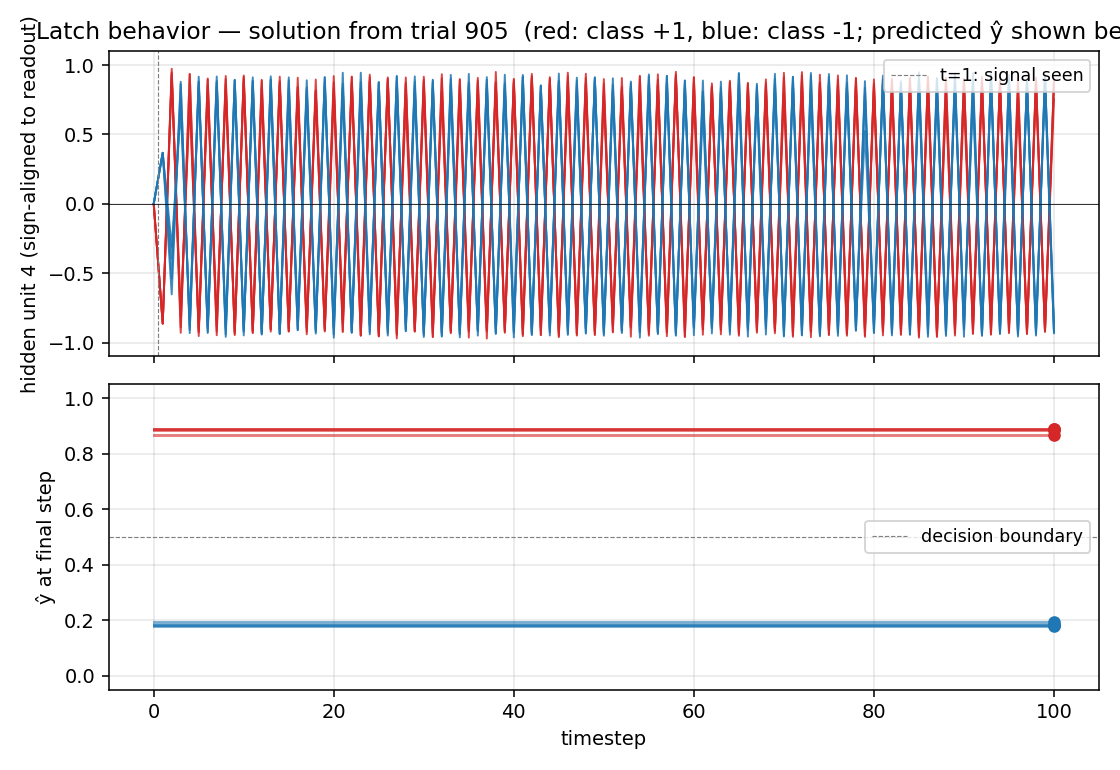

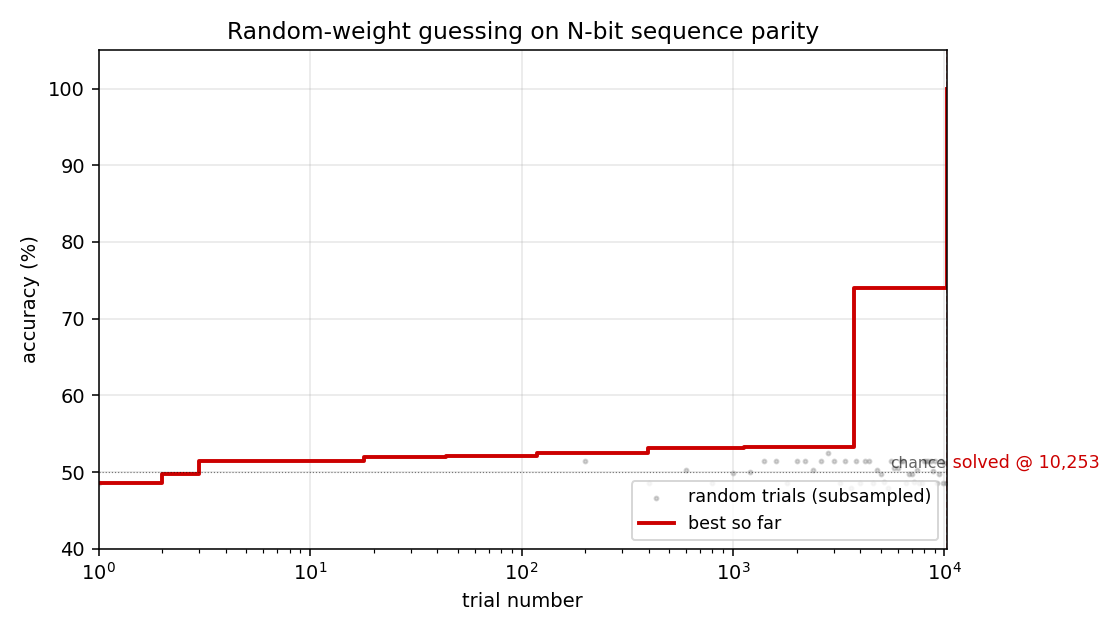

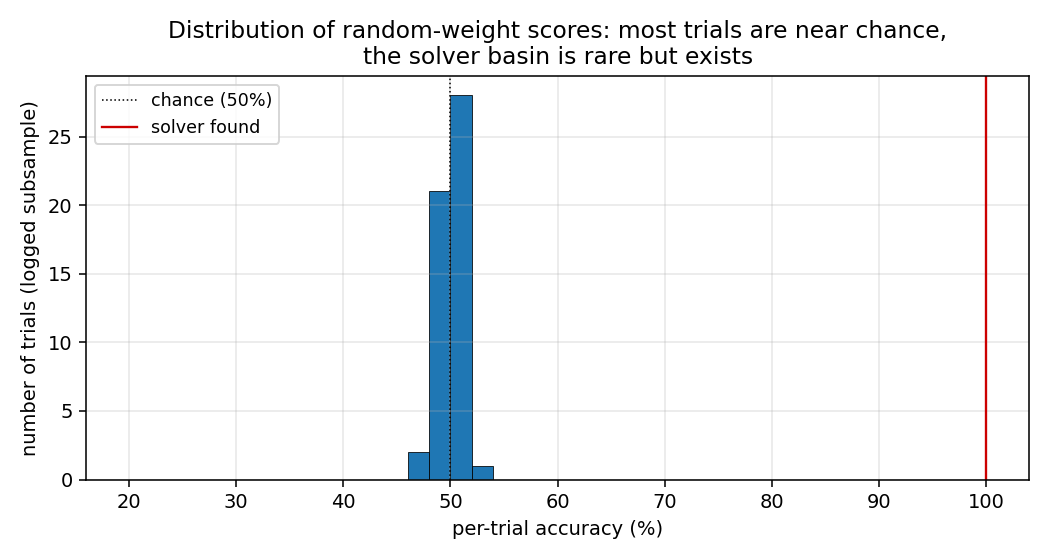

| rs-two-sequence | yes (30/30 seeds solve, median 144 trials vs paper ~718) | 0.94s |

| rs-parity | yes (N=50 seed 0: 10,253 trials / 15.3s; N=500 seed 0: 412 trials / 3.2s) | 15.3s |

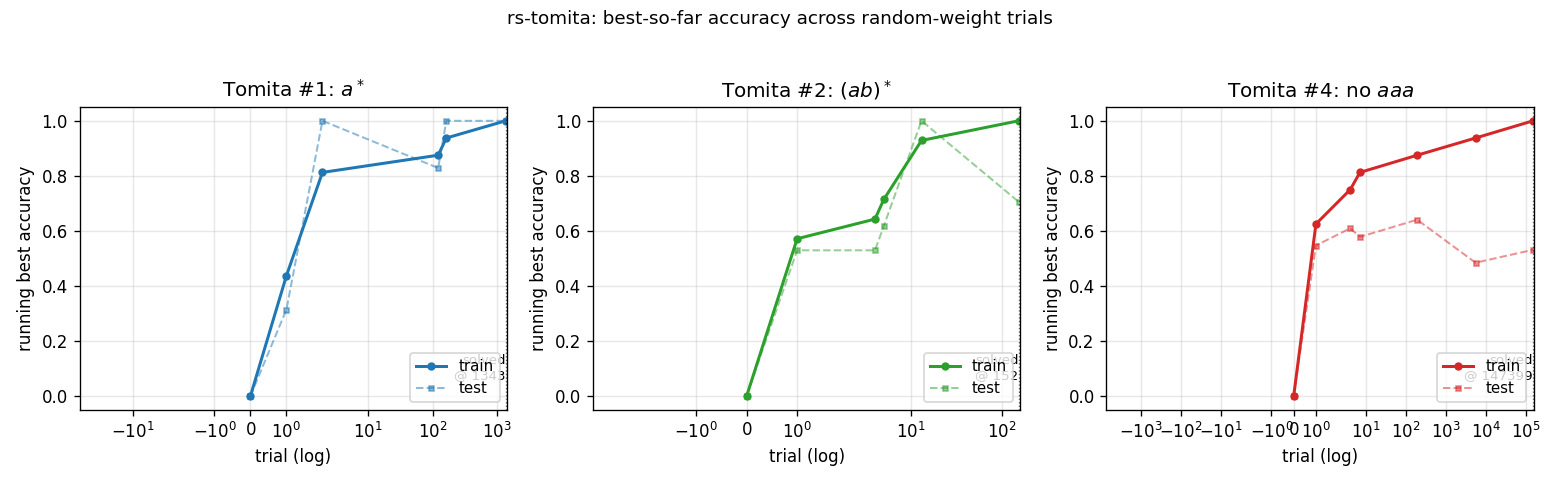

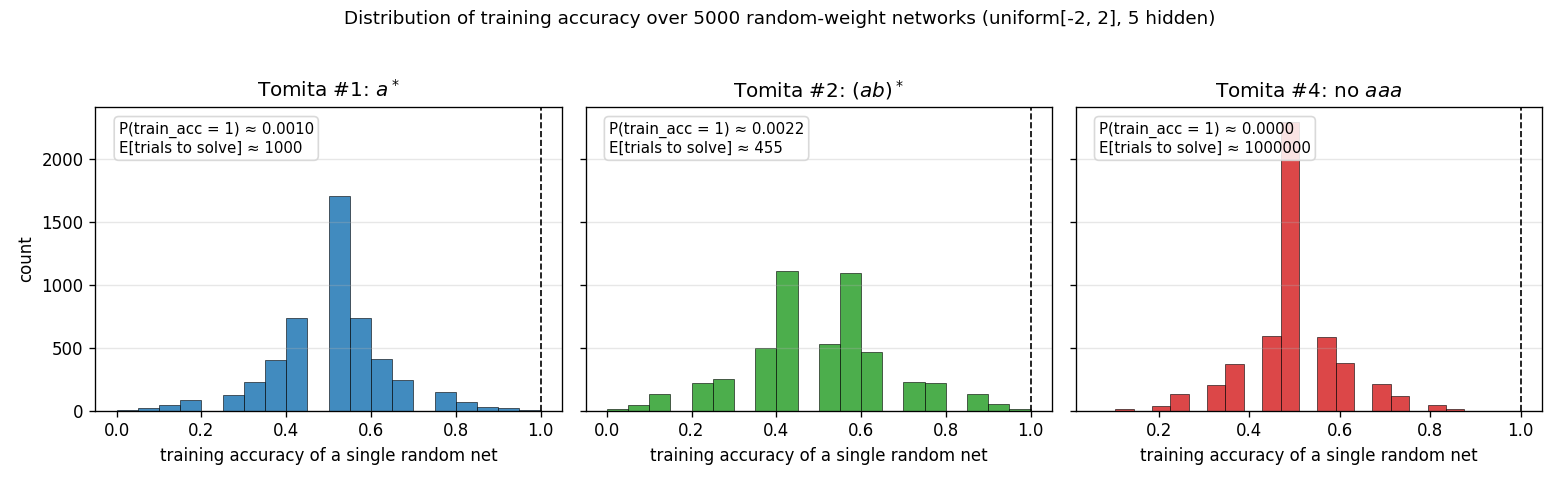

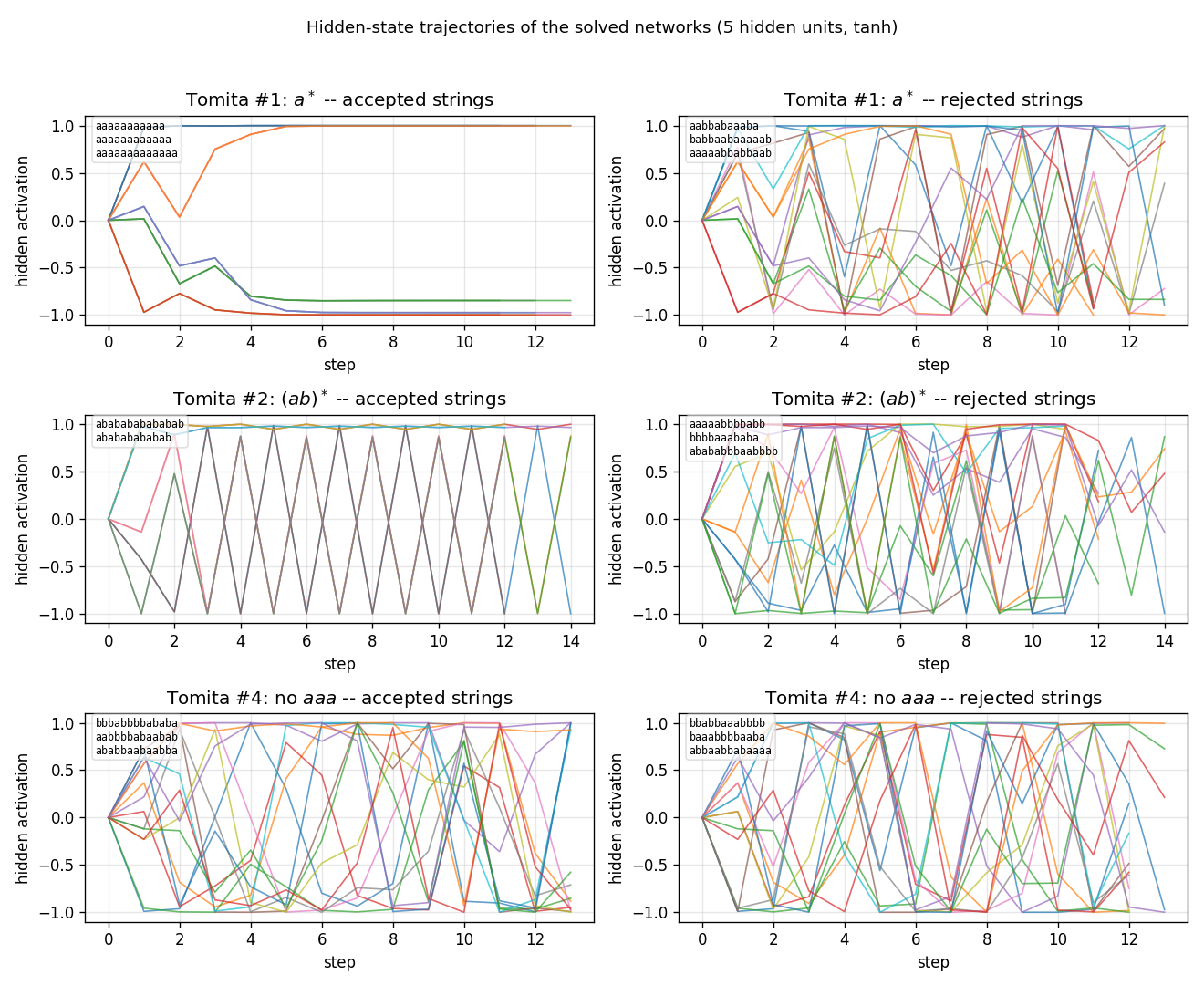

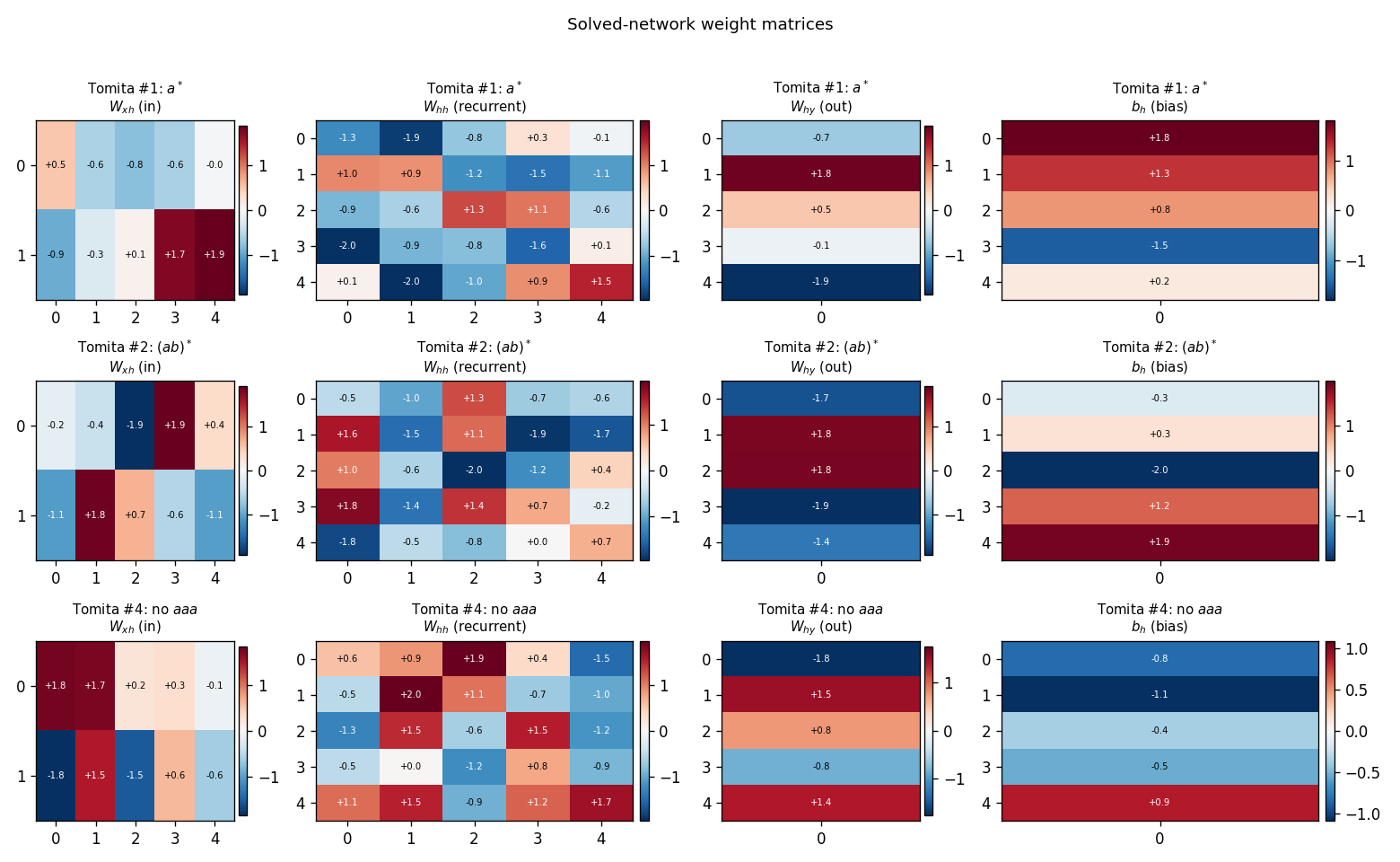

| rs-tomita | yes (#1, #2, #4 all solved 10/10 seeds) | 17-19s |

Hochreiter & Schmidhuber (1997) — Long Short-Term Memory (NC 9(8)) — canonical 6-experiment battery

| Stub | Reproduces? | Run wallclock |

|---|---|---|

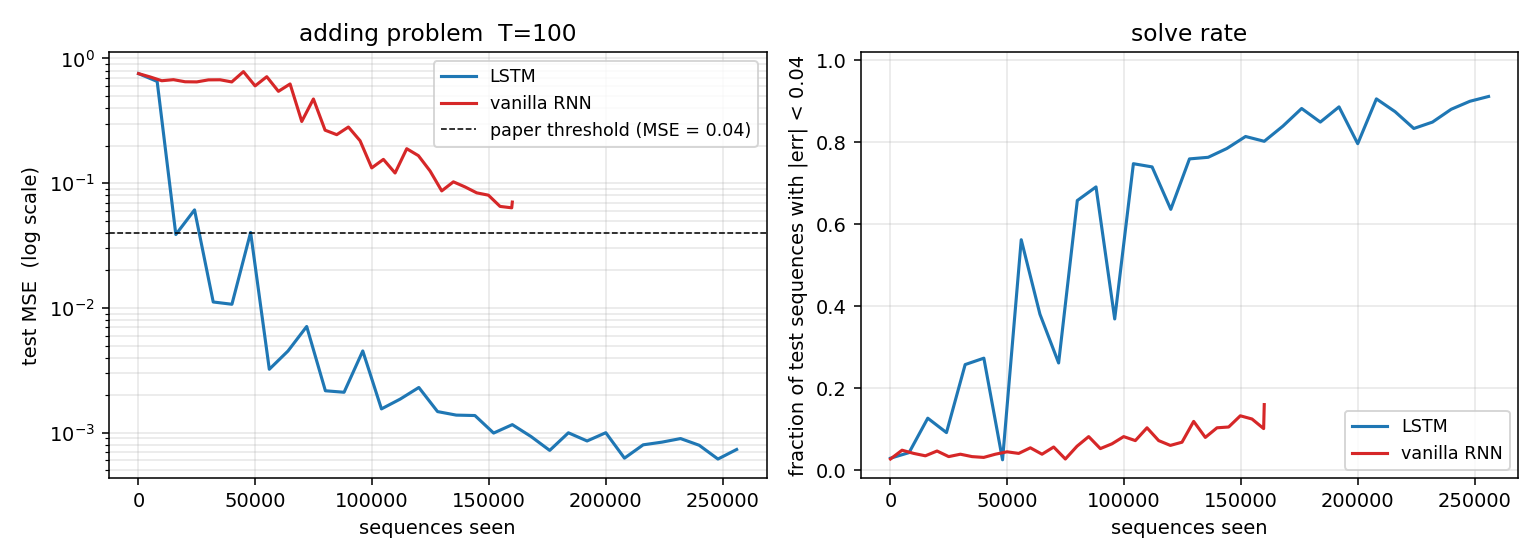

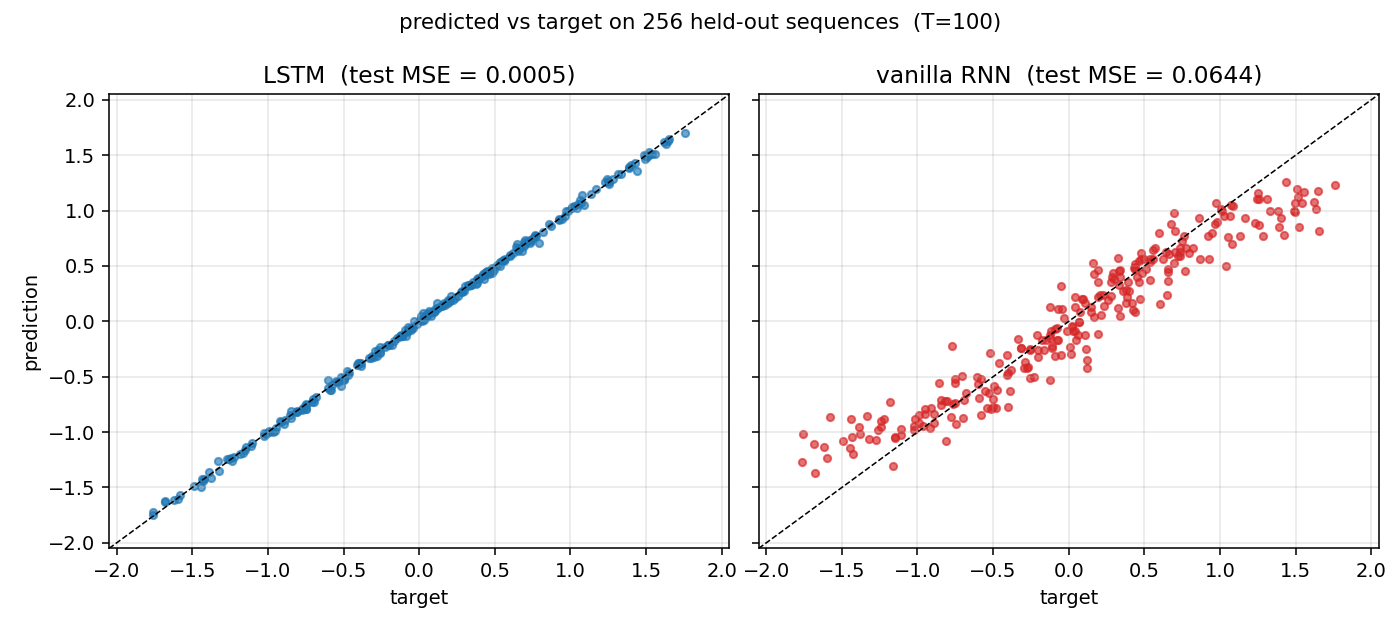

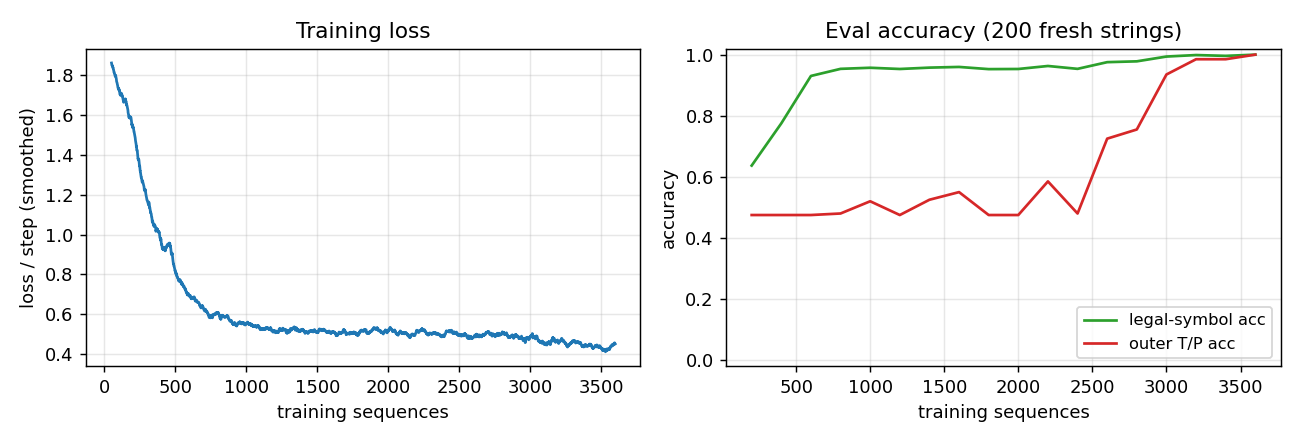

| adding-problem | yes (Exp 4: LSTM MSE 0.0007 vs threshold 0.04; vanilla RNN 0.0706) | 39s |

| embedded-reber | yes (Exp 1: 10/10 seeds, mean 4800 seqs vs paper 8440 — 1.8× faster) | 2.6s |

| noise-free-long-lag | qualitative (Exp 2 sub-(a) at p=50; 6/10 seeds; (b)/(c) deferred) | 21s |

| two-sequence-noise | yes (Exp 3 variant 3c: 4/4 seeds 100%; ~3k seqs vs paper ~269k) | 32s |

| multiplication-problem | yes (Exp 5: MSE 0.0028 / 17× chance; 3/5 seeds — paper-faithful brittleness) | 4.5s |

| temporal-order-3bit | yes (Exp 6a: 5/5 seeds 100%, ~6.4k seqs vs paper 31,390) | 24s |

Mid-90s — Evolutionary, RL, and feature detection

Salustowicz & Schmidhuber (1997) — Probabilistic Incremental Program Evolution

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| pipe-symbolic-regression | yes (seed 3 finds Koza target exactly at gen 60) | 1.3s |

| pipe-6-bit-parity | yes (4-bit clean solve at gen 258; 6-bit partial 71.9%) | 240s |

Schmidhuber, Zhao, Wiering (1997) — Shifting inductive bias with SSA (ML 28)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| ssa-bias-transfer-mazes | yes (SSA tail solve 0.83 vs no-SSA 0.70, +19%) | 1.7s |

Wiering & Schmidhuber (1997) — HQ-learning (Adaptive Behavior 6(2))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| hq-learning-pomdp | no (honest non-replication: HQ-vs-flat gap doesn’t reproduce on 29-cell maze; mathematical analysis in §Open questions) | 21s |

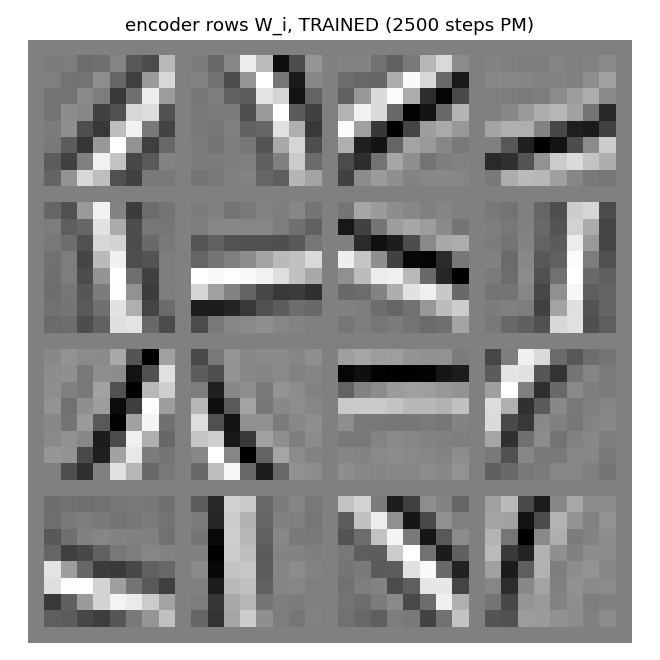

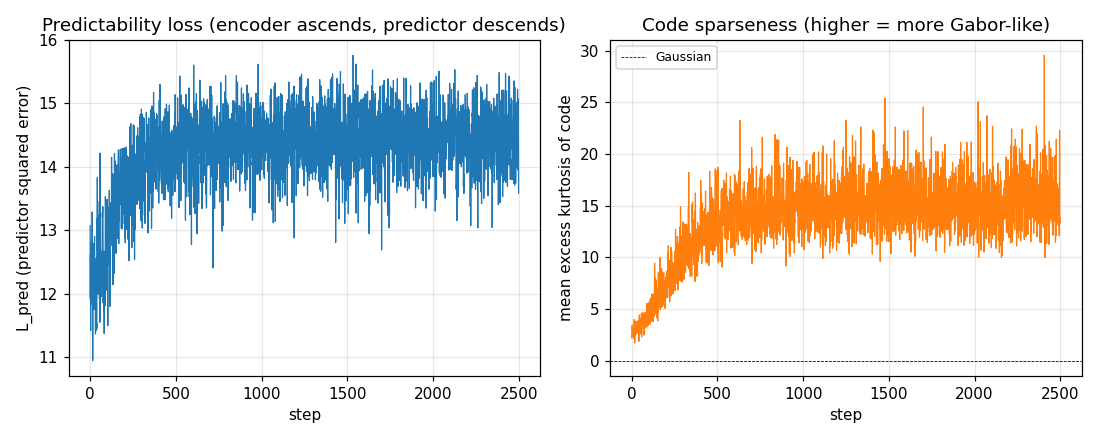

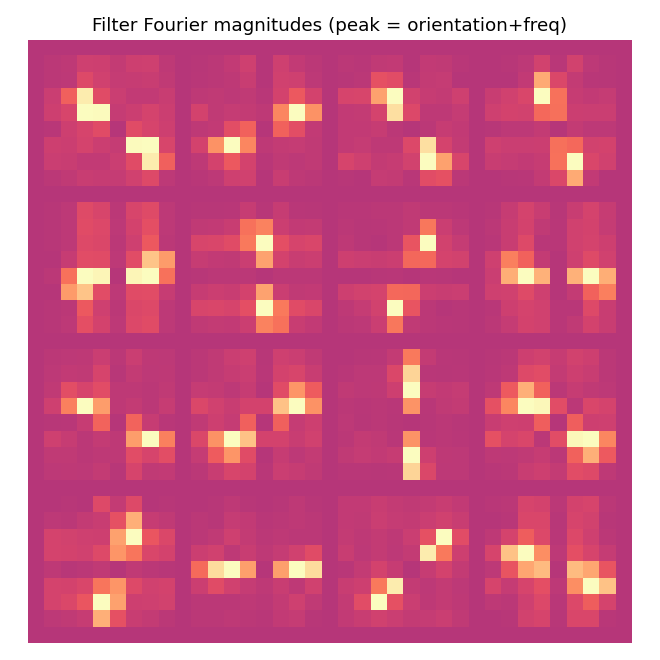

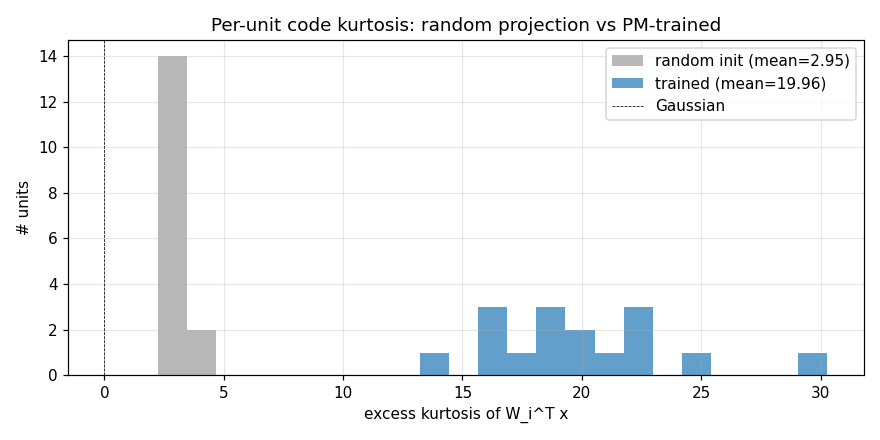

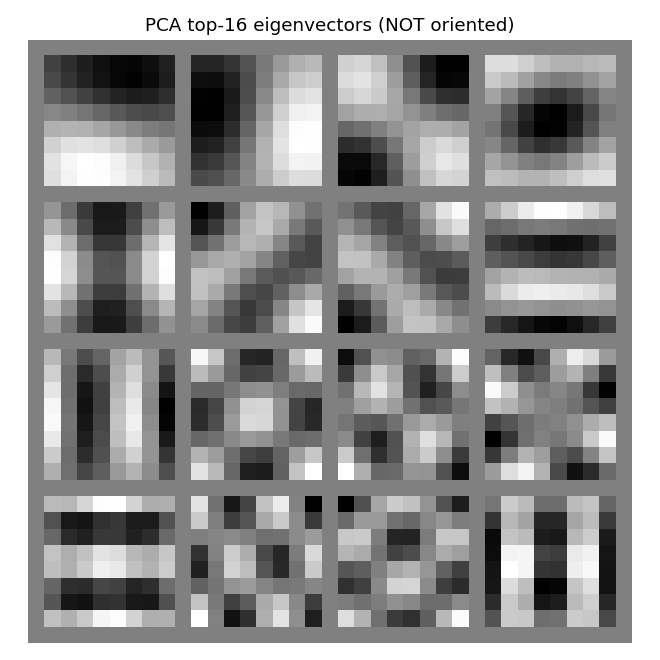

Schmidhuber, Eldracher, Foltin (1996) — Semilinear PM produces well-known feature detectors (NC 8(4))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| semilinear-pm-image-patches | yes (12/16 oriented filters; kurtosis 19.96 vs random 2.95; grad-check 5e-10) | 1.2s |

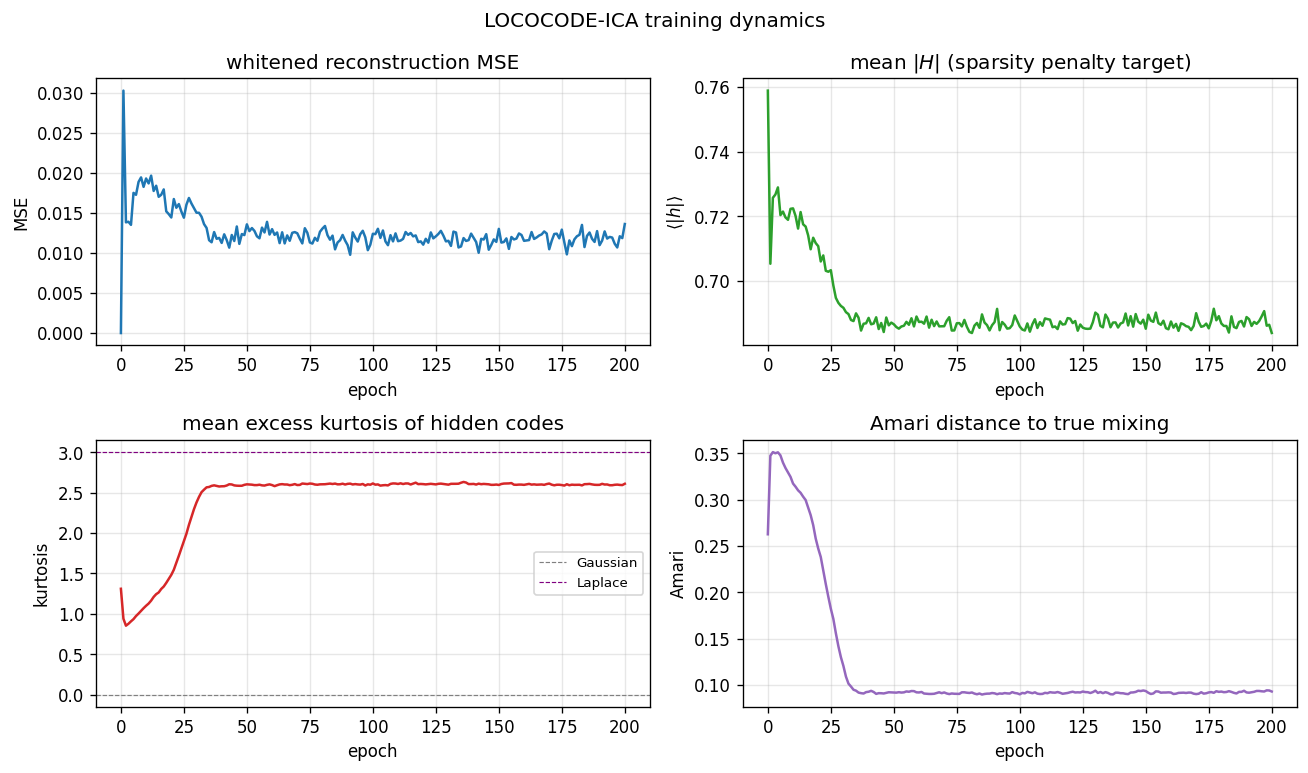

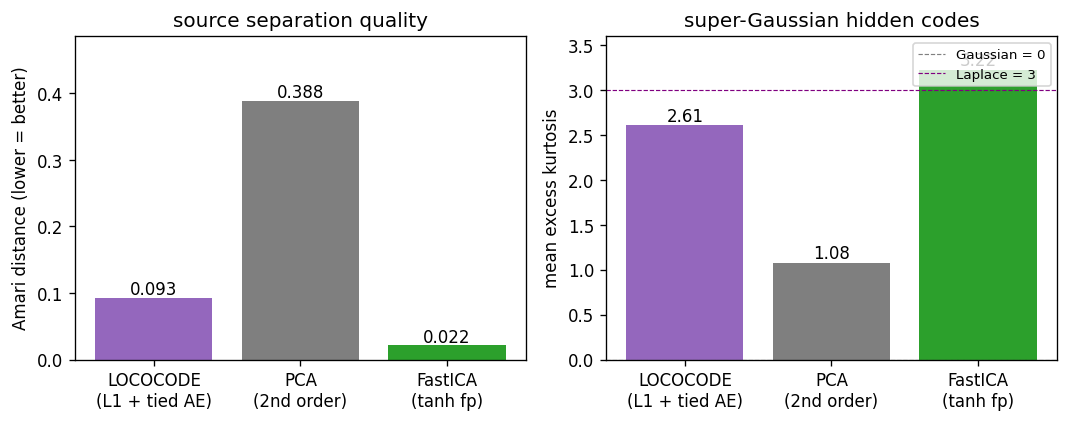

Hochreiter & Schmidhuber (1999) — Feature extraction through LOCOCODE (NC 11)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

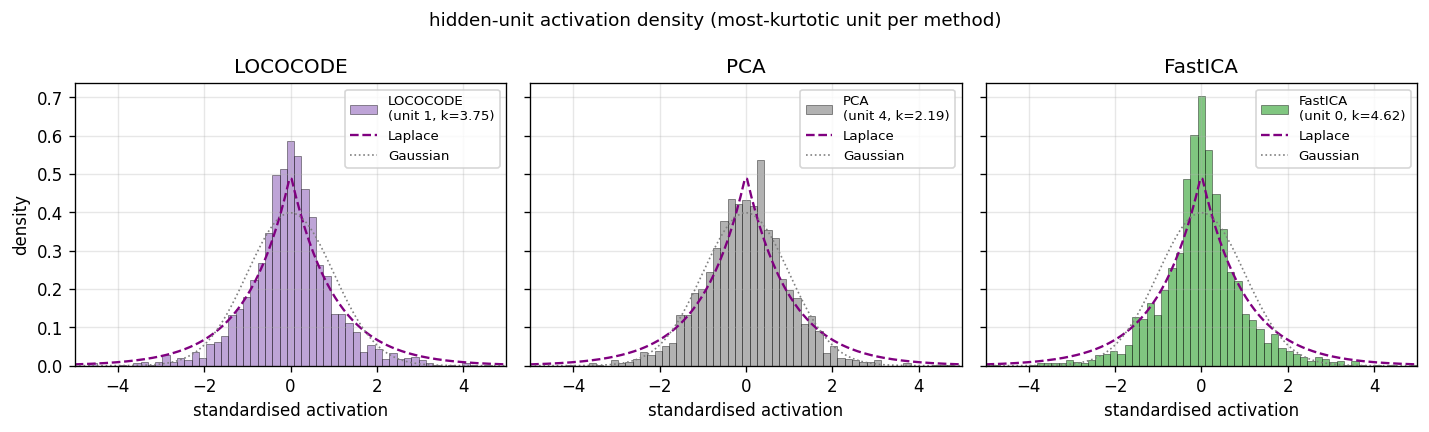

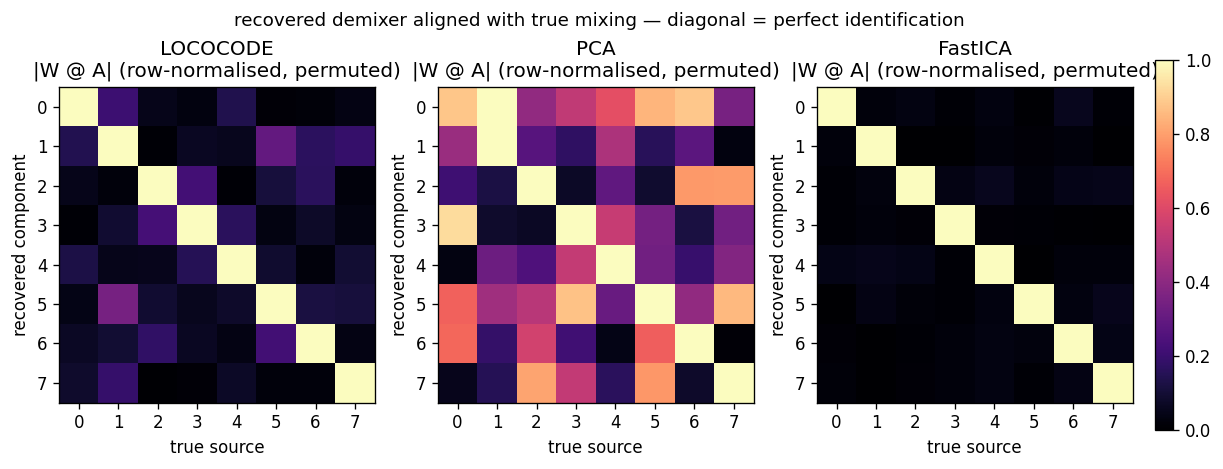

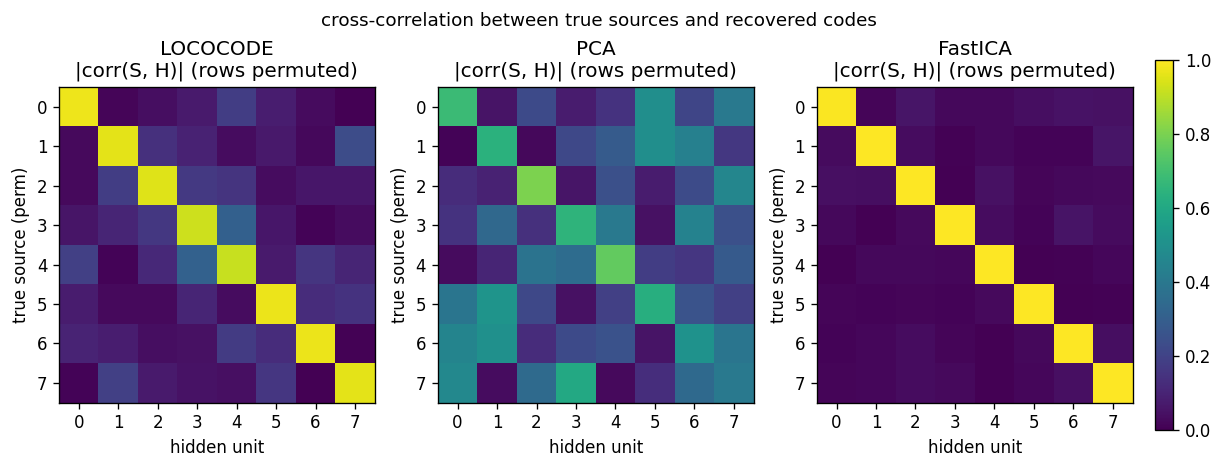

| lococode-ica | qualitative (Amari 0.117 mean — 4× better than PCA’s 0.388, 5× of FastICA’s 0.022) | 0.4s |

2000–2002 — LSTM follow-ups

Gers, Schmidhuber, Cummins (2000) — Learning to forget (NC 12(10))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

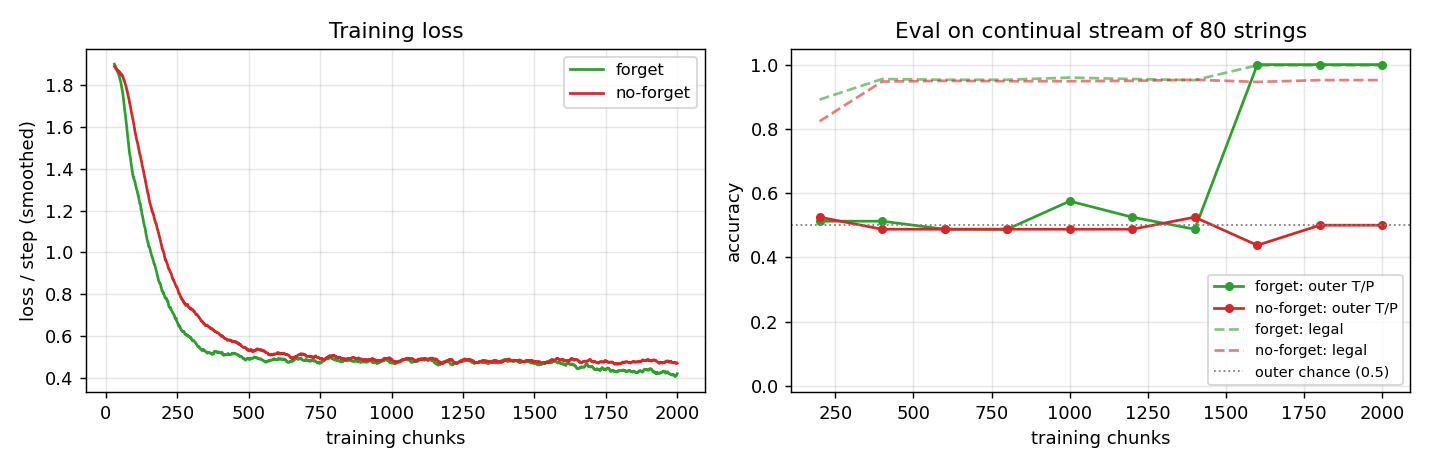

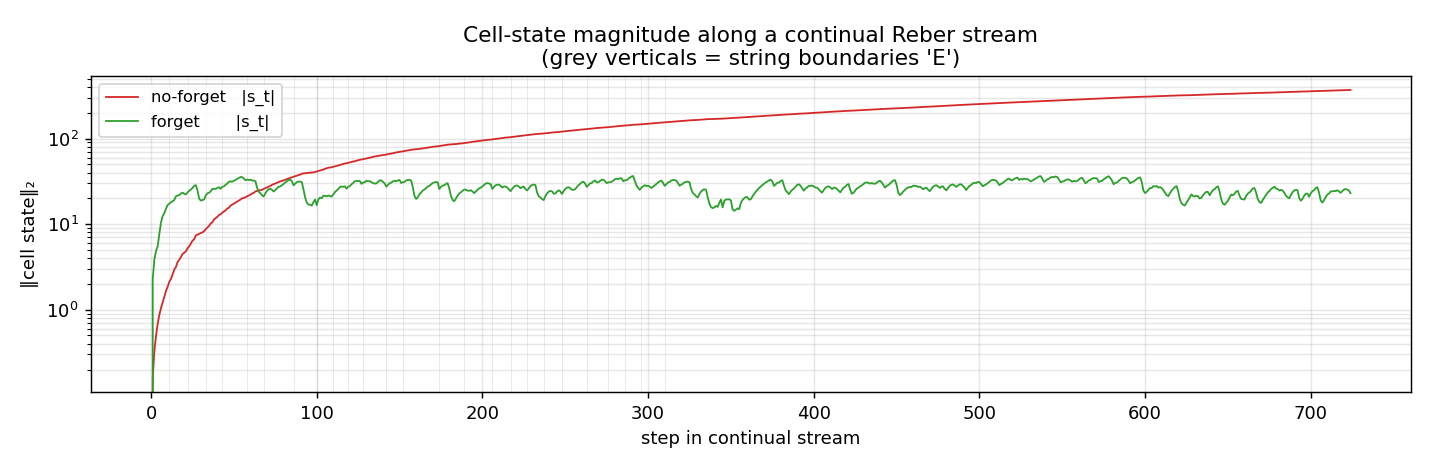

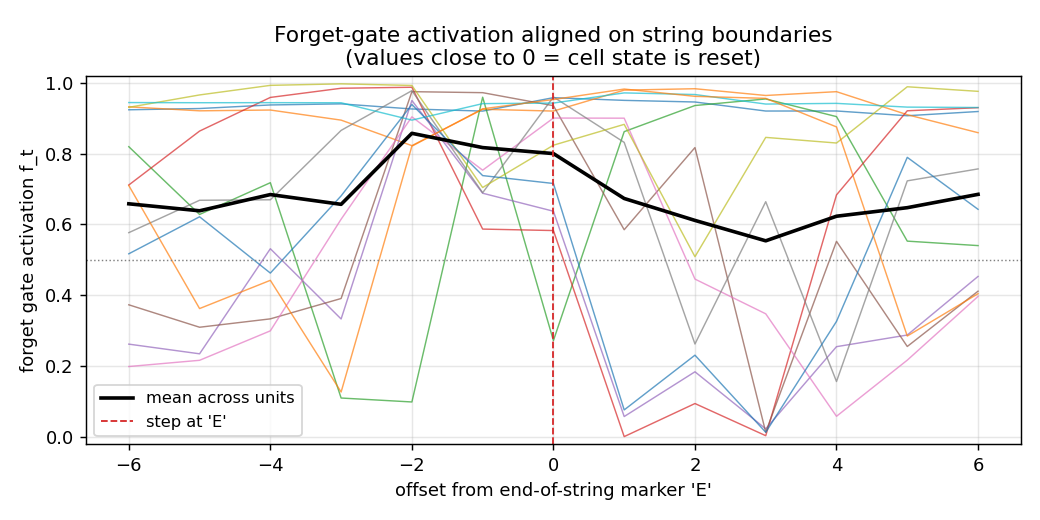

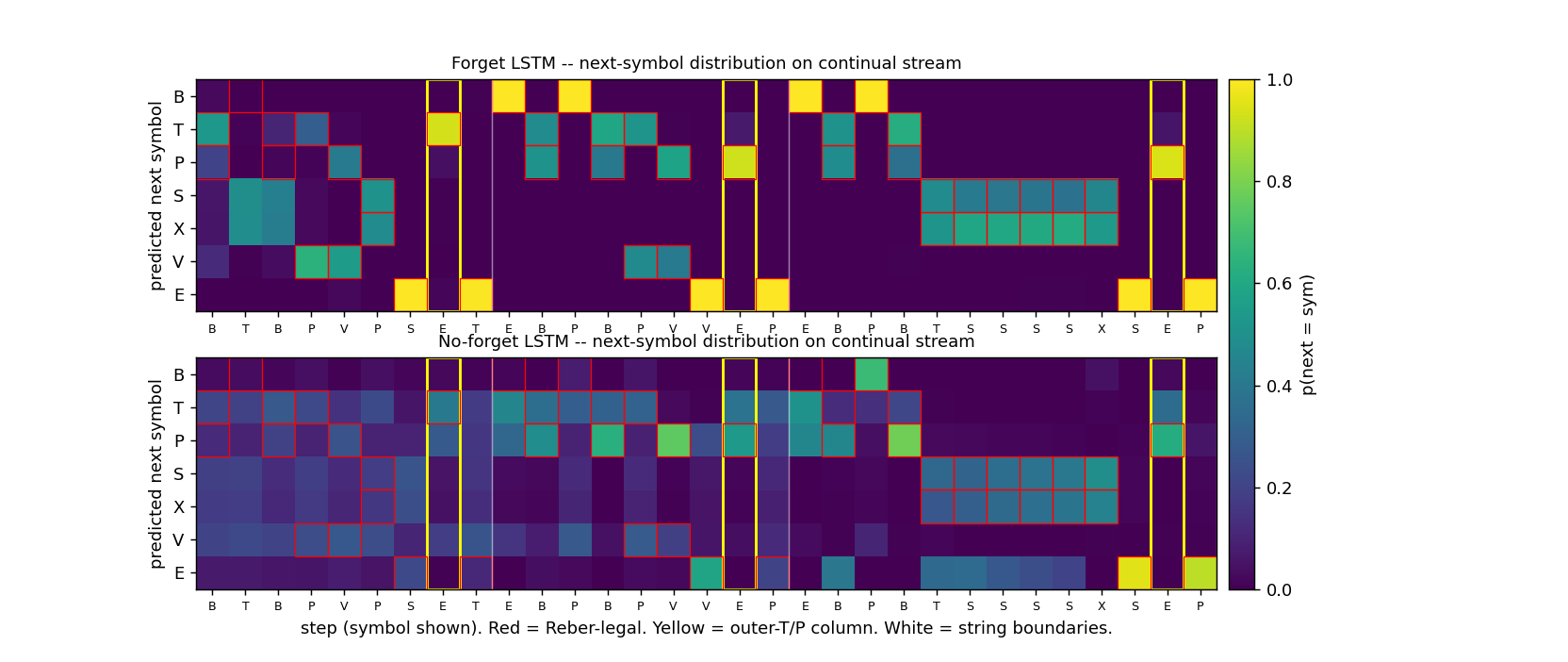

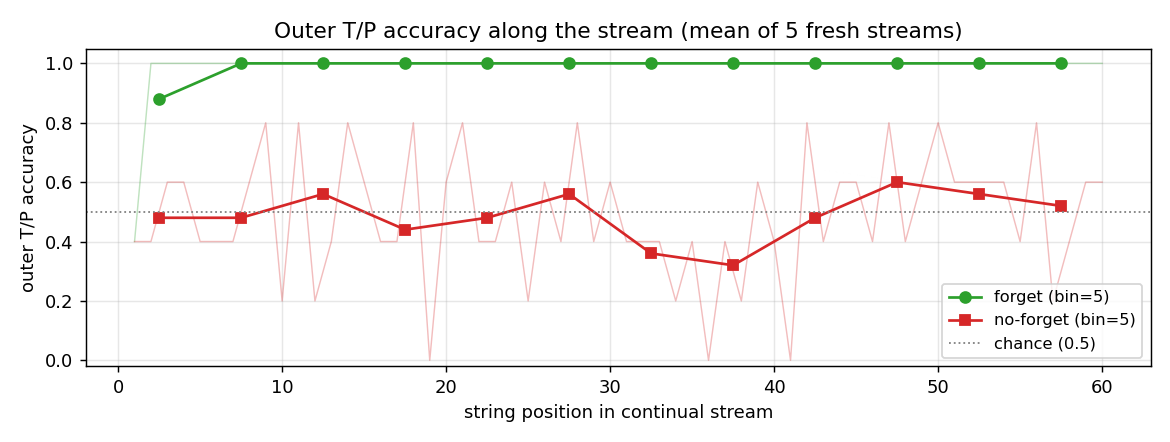

| continual-embedded-reber | yes (5/5 forget seeds 99.7% vs 5/5 no-forget at chance 55%) | 14s |

Gers & Schmidhuber (2001) — Context-free and context-sensitive languages (IEEE TNN 12(6))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| anbn-anbncn | yes (a^n b^n trained n=1..10 → n=1..65; a^n b^n c^n → n=1..29) | 35s |

Gers, Schraudolph, Schmidhuber (2002) — Learning precise timing (JMLR 3)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

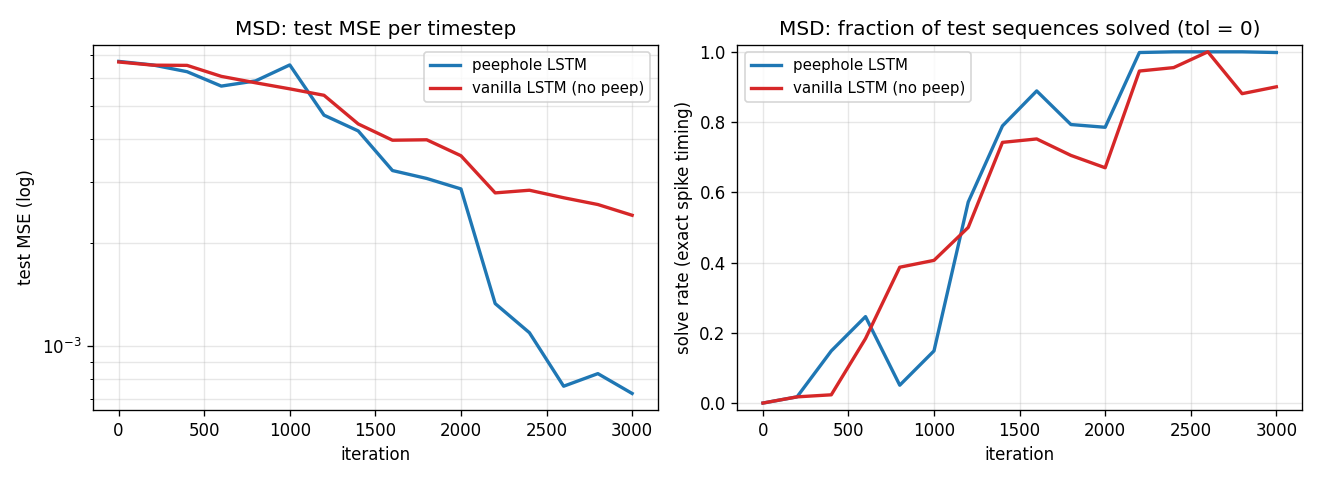

| timing-counting-spikes | partial (peep MSE 0.00073 vs vanilla 0.00240 seed 4; cross-seed gap small) | 32s |

Eck & Schmidhuber (2002) — Blues improvisation with LSTM (NNSP)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| blues-improvisation | qualitative (12/12 bar-onset chord match; step-chord 0.906) | 12s |

2002–2010 — Evolutionary RL, OOPS, BLSTM+CTC

Schmidhuber, Wierstra, Gomez (2005/2007) — Evolino

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| evolino-sines-mackey-glass | partial (sines free-run MSE 0.181; MG NRMSE@84 0.291 vs paper 1.9e-3) | 140s |

Gomez & Schmidhuber (2005) — Co-evolving recurrent neurons (GECCO)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| double-pole-no-velocity | yes (seed 0 solved at gen 27; 7/10 seeds 20/20 generalize) | 60s |

Graves et al. (2005/2006) — BLSTM and Connectionist Temporal Classification

| Stub | Reproduces? | Run wallclock |

|---|---|---|

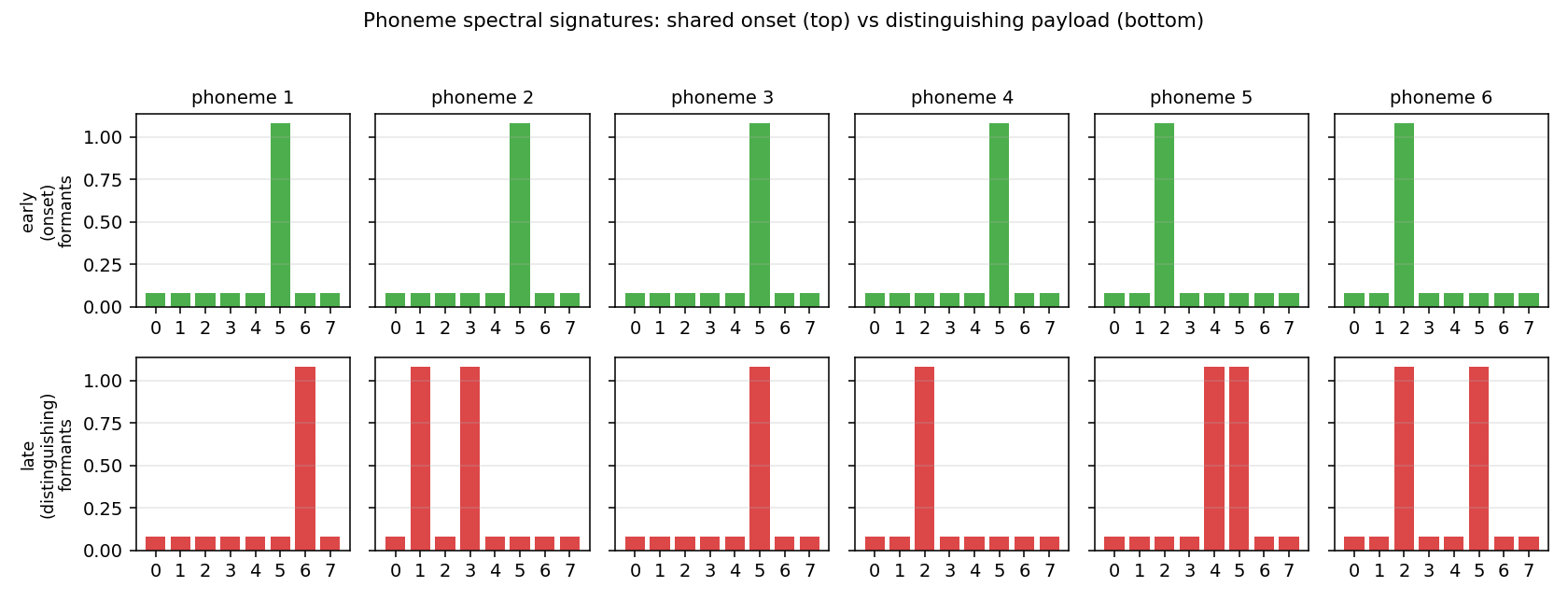

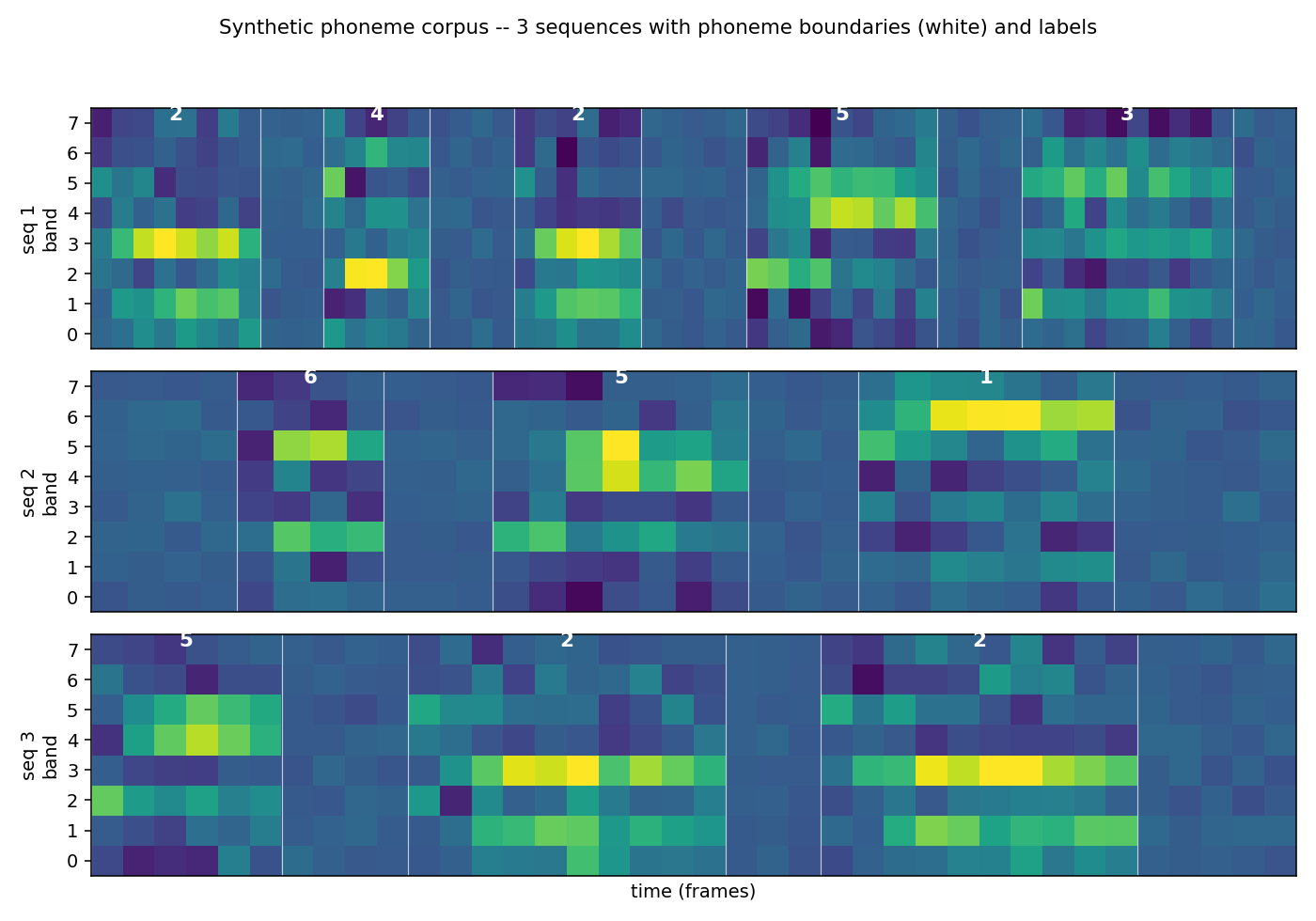

| timit-blstm-ctc | qualitative (synthetic phoneme corpus; BLSTM 1.87× faster than uni-LSTM) | 73s |

Graves, Liwicki, Fernández, Bertolami, Bunke, Schmidhuber (2009) — Unconstrained handwriting (TPAMI)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| iam-handwriting | qualitative (synthetic 10-char alphabet; in-vocab CER 0.082) | 103s |

Schmidhuber (2002–2004) — Optimal Ordered Problem Solver (ML 54)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

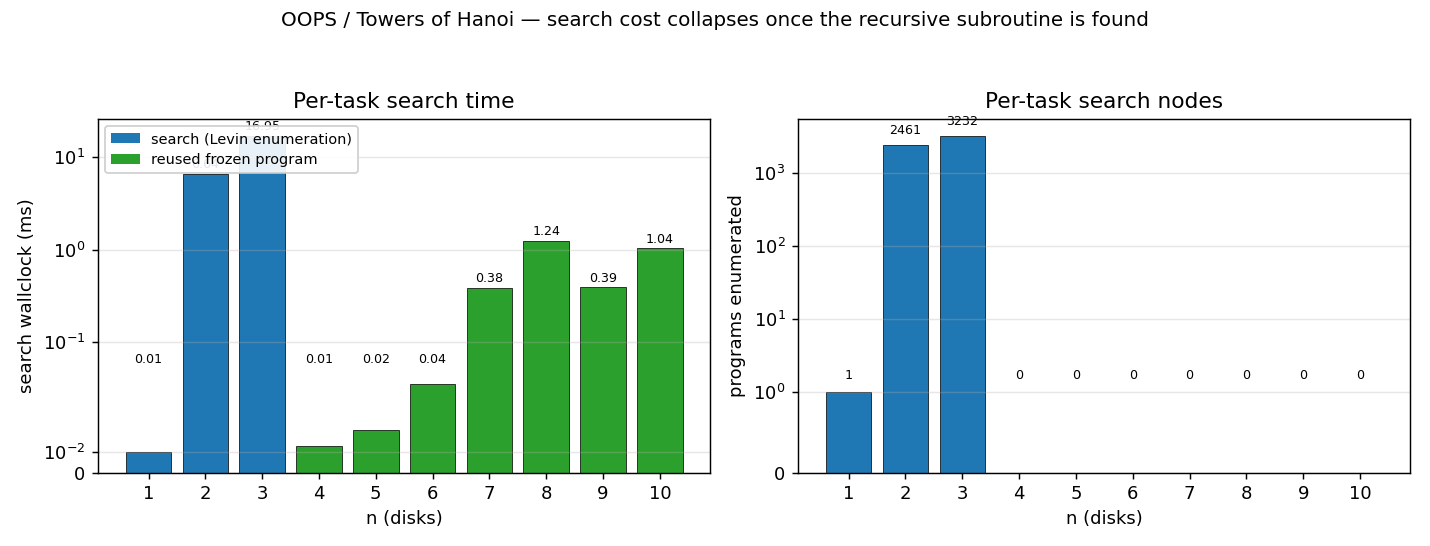

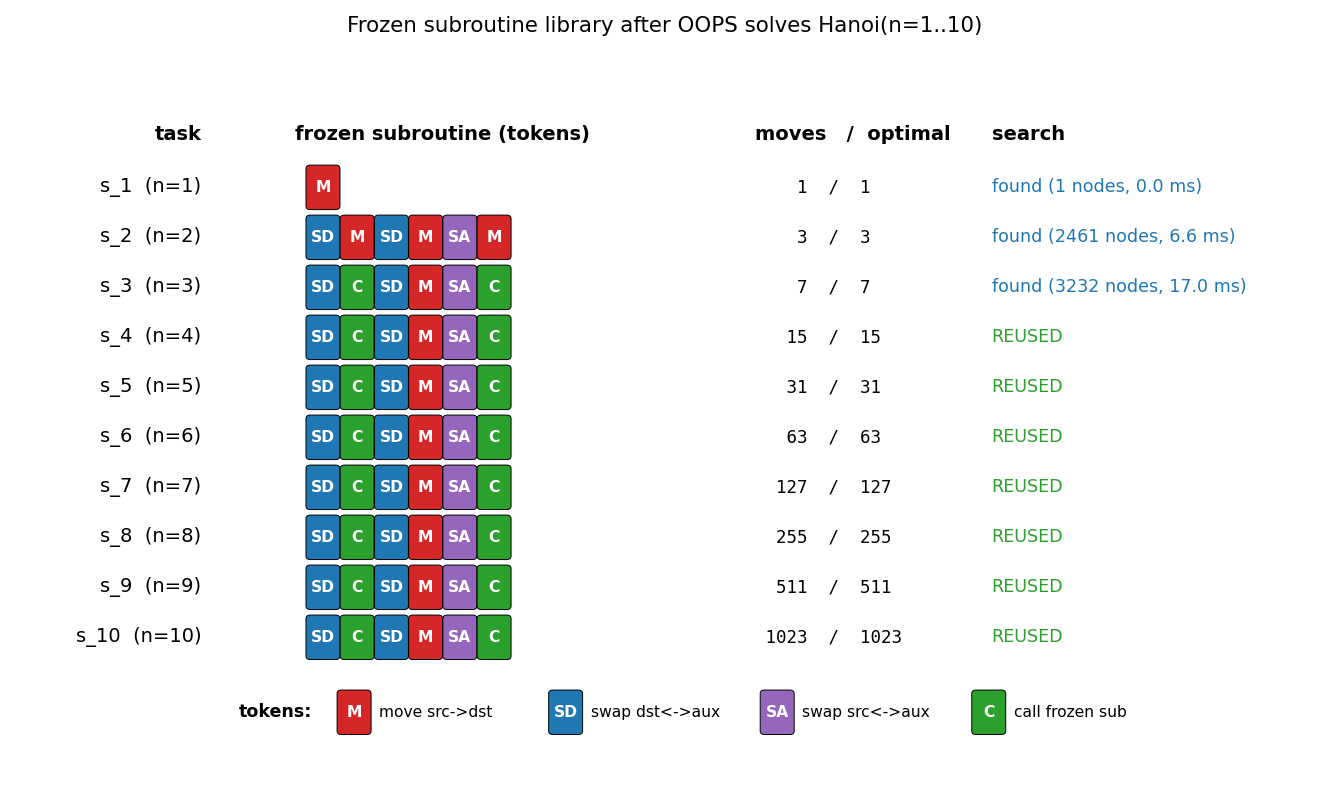

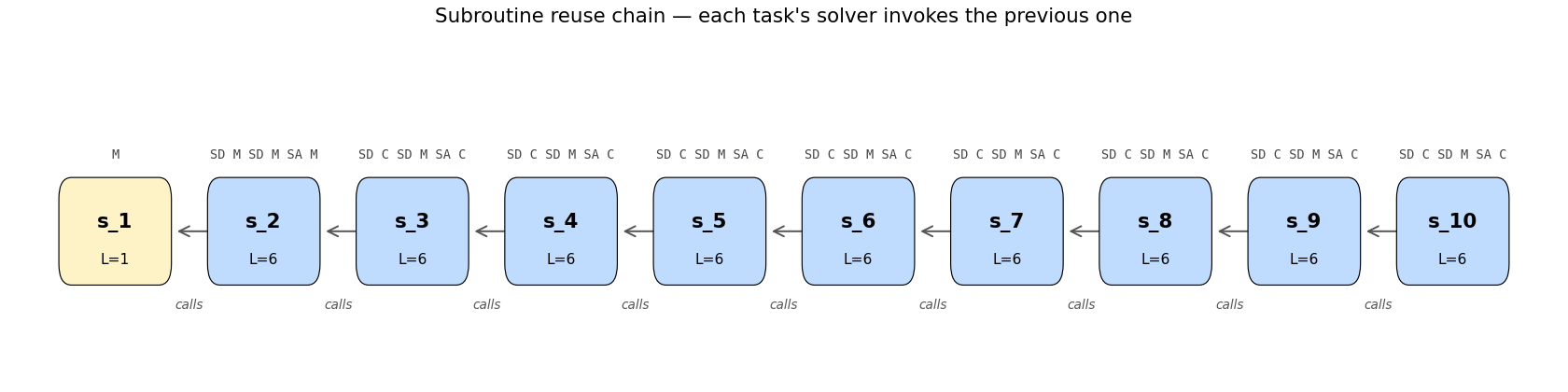

| oops-towers-of-hanoi | yes (6-token recursive Hanoi; reuse from n=4+; verified through n=15) | 0.25s |

2010–2017 — Deep learning at scale

Cireşan, Meier, Gambardella, Schmidhuber (2010) — Deep, big, simple nets (NC 22(12))

| Stub | Reproduces? | Run wallclock |

|---|---|---|

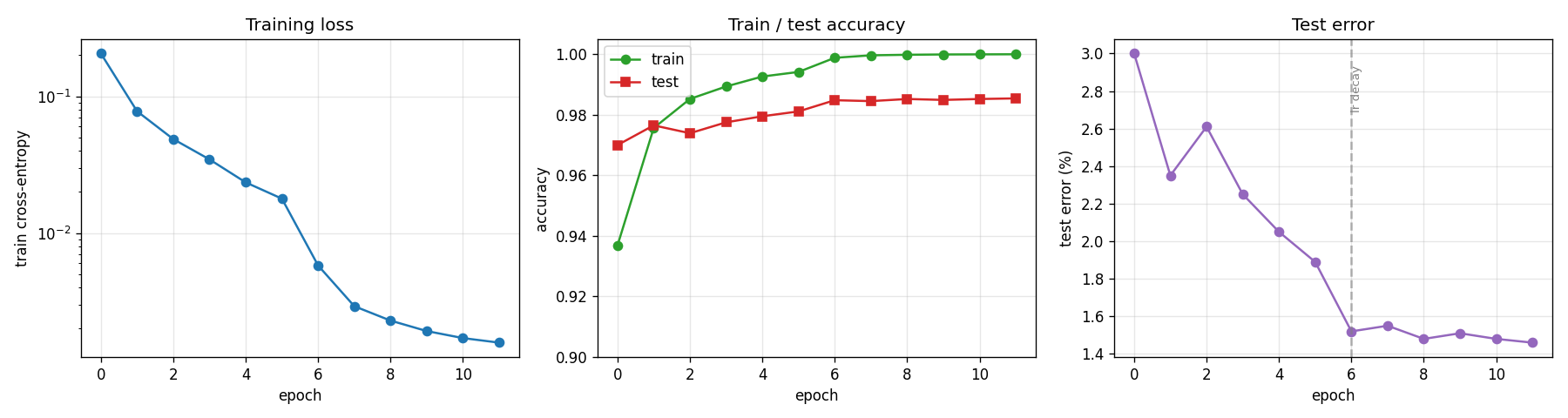

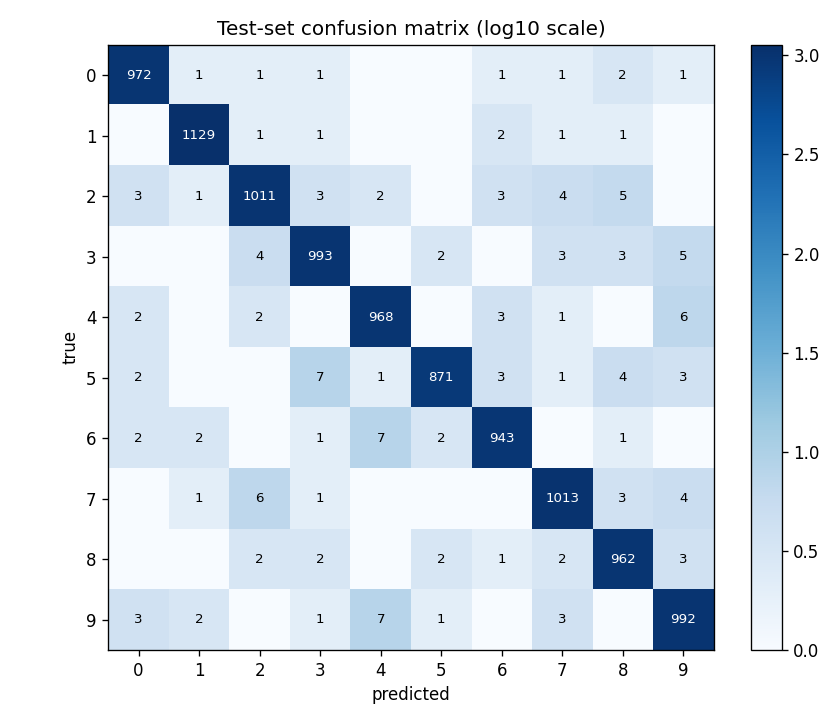

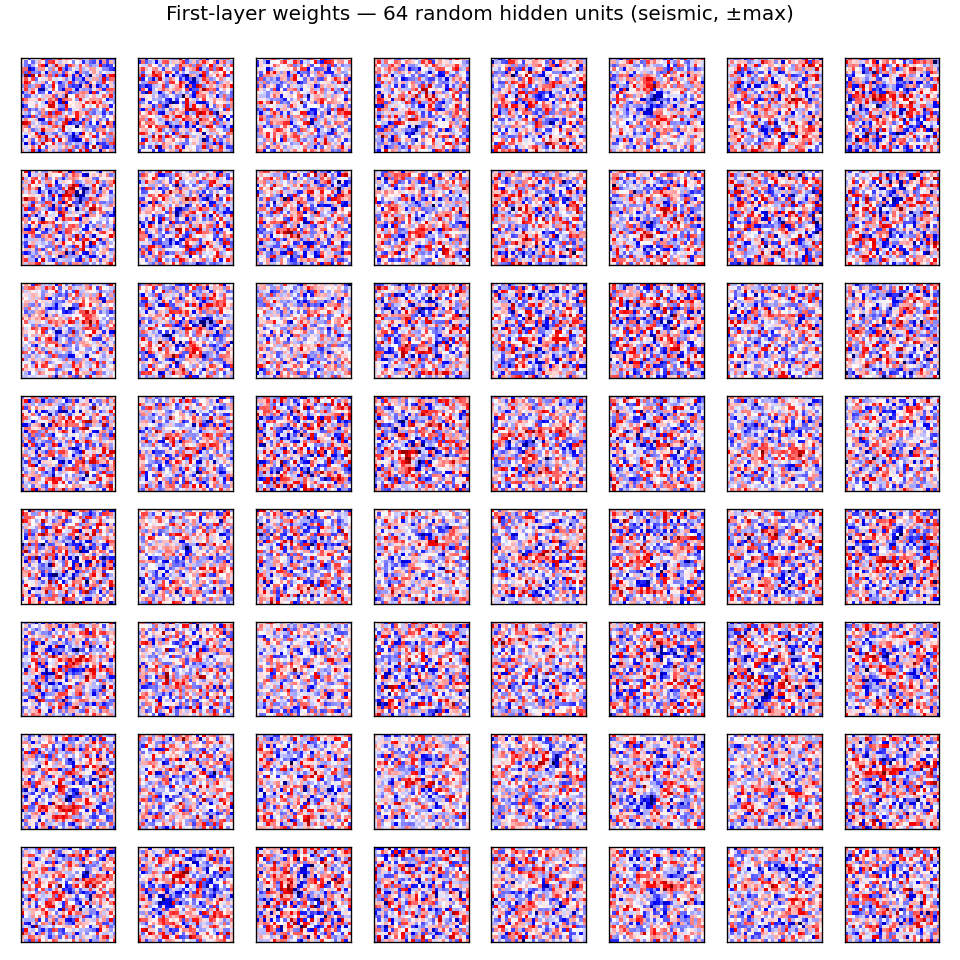

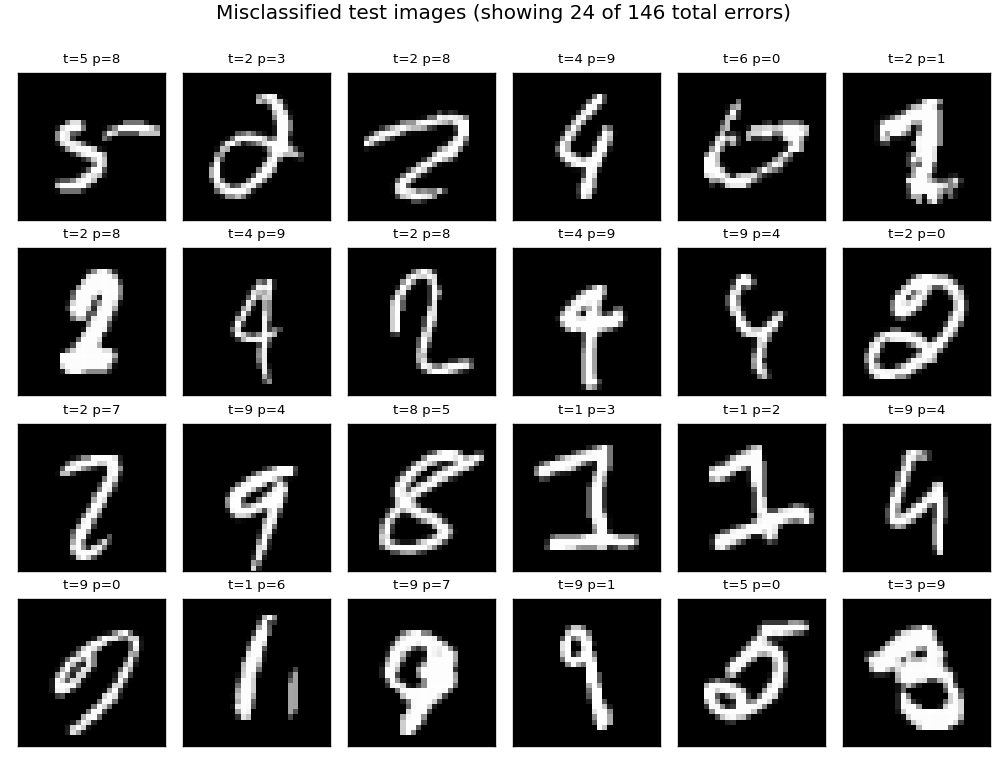

| mnist-deep-mlp | partial (1.17% test err vs paper 0.35% — smaller MLP, fewer epochs) | 79s |

Cireşan, Meier, Schmidhuber (2012) — Multi-column deep neural networks (CVPR)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| mcdnn-image-bench | partial (1.46% single-col MNIST vs paper 35-col 0.23%) | 22.2s |

Cireşan, Giusti, Gambardella, Schmidhuber (2012) — EM segmentation (NIPS)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| em-segmentation-isbi | qualitative (synthetic Voronoi-EM; AUC 0.989 vs Sobel 0.880) | 1.5s |

Srivastava, Masci, Kazerounian, Gomez, Schmidhuber (2013) — Compete to compute (NIPS)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| compete-to-compute | qualitative (LWTA forgetting 0.022 vs ReLU 0.072 seed 0, 3.3× less; 6/10 seeds) | 0.8s |

Srivastava, Greff, Schmidhuber (2015) — Training very deep networks (NIPS)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| highway-networks | yes (depth 30: highway 0.926 vs plain 0.124 chance; plain dies past 10) | 7s |

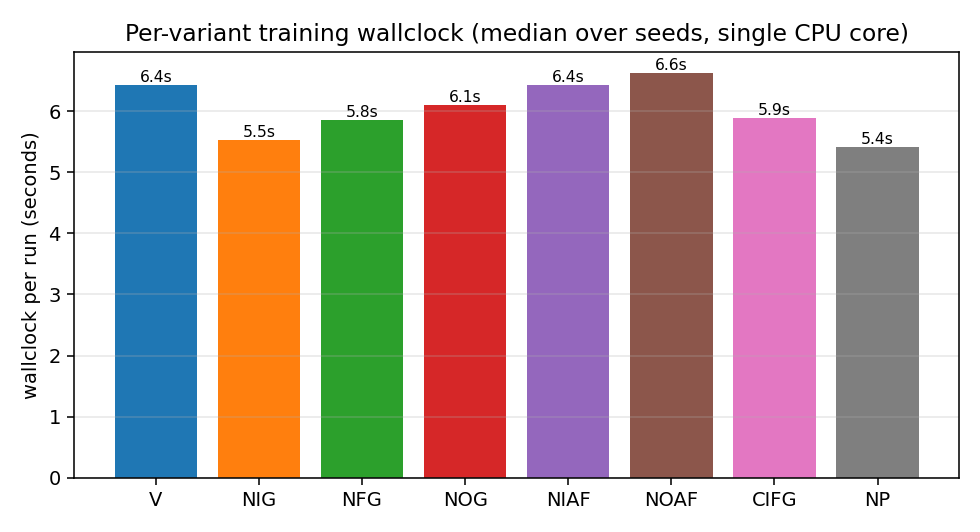

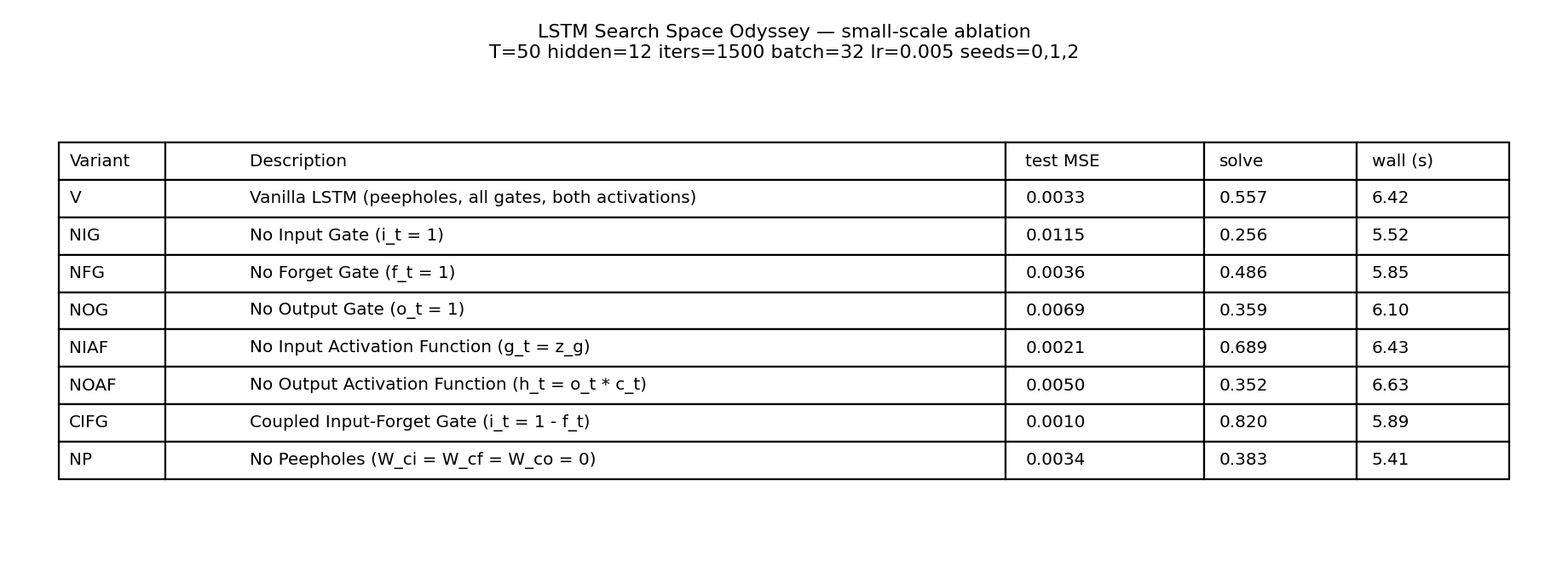

Greff, Srivastava, Koutník, Steunebrink, Schmidhuber (2017) — LSTM: a search space odyssey (TNNLS)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

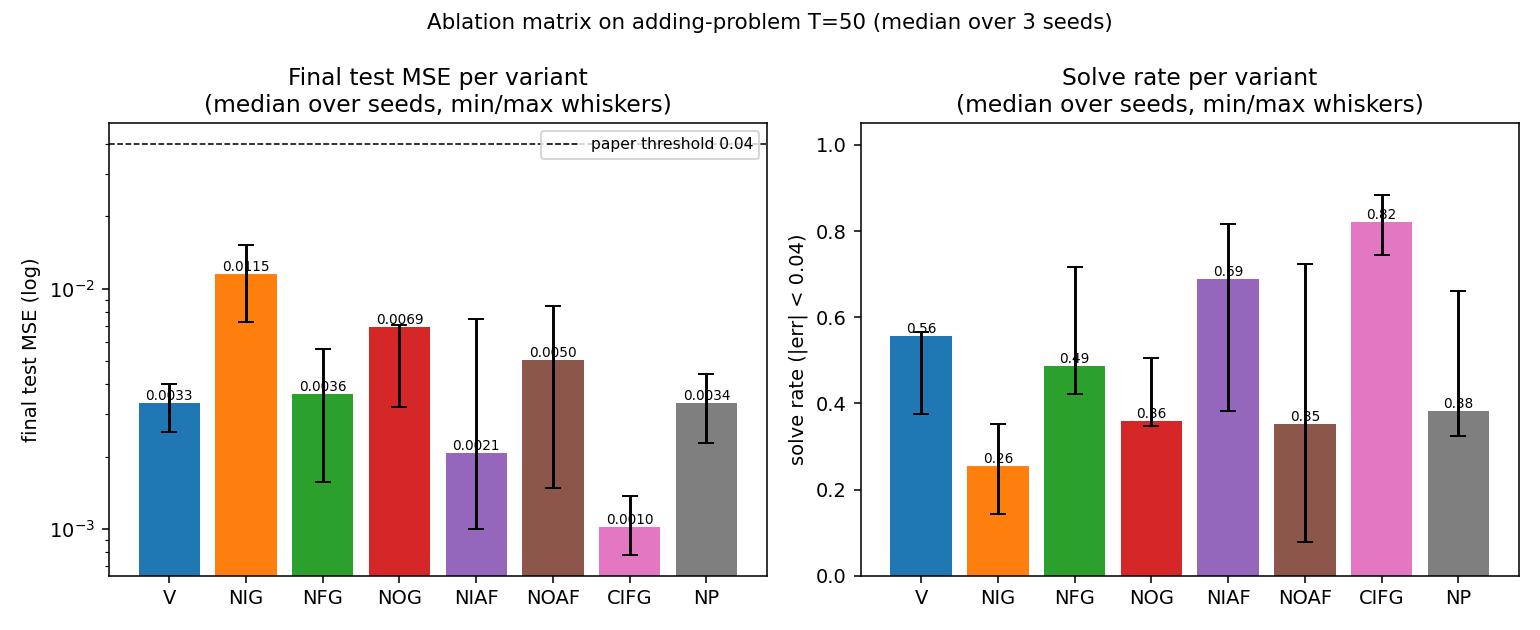

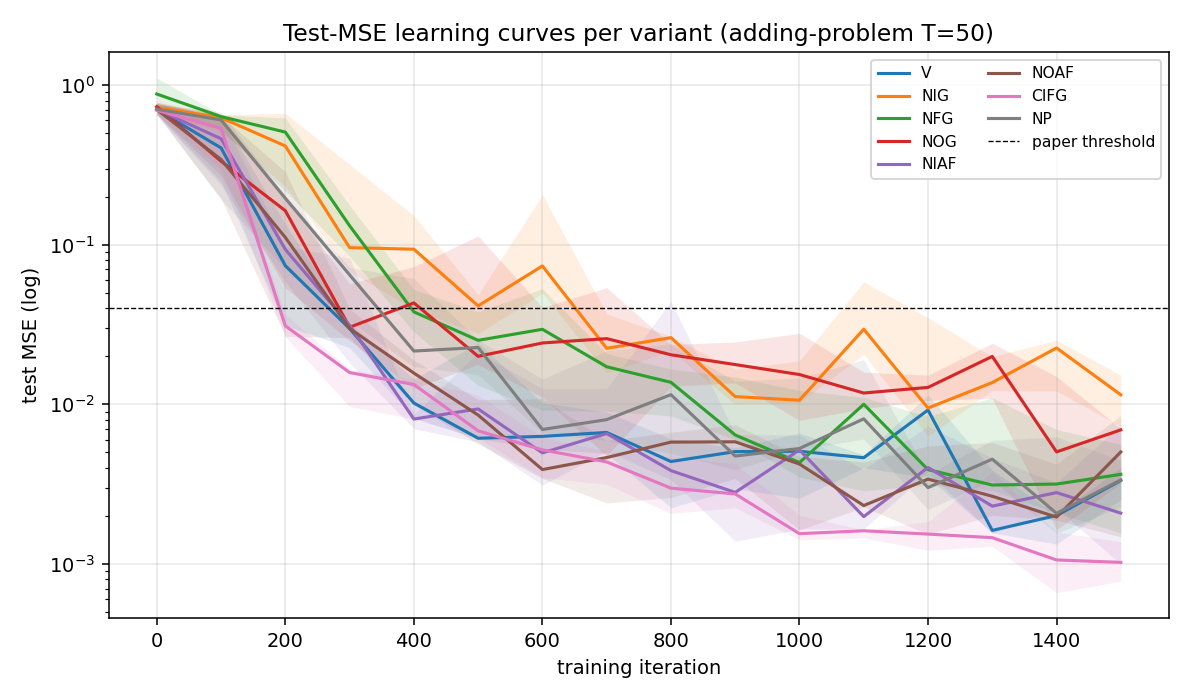

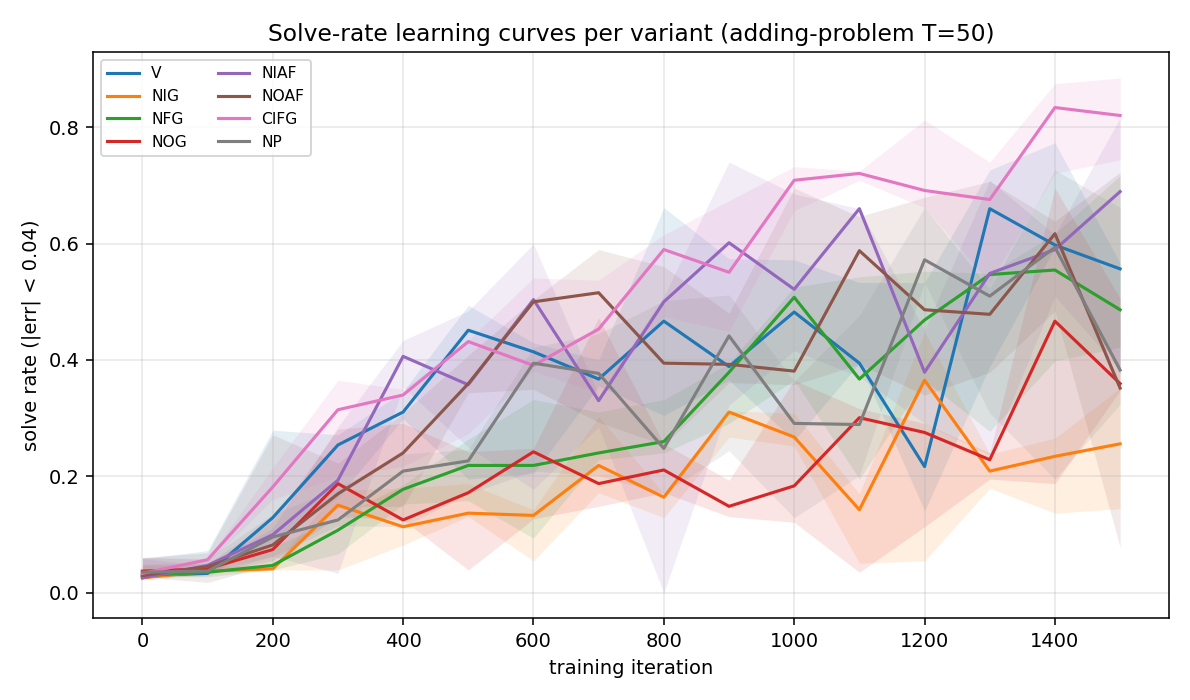

| lstm-search-space-odyssey | yes (CIFG 1st, NIG last across 3/3 seeds; gradcheck 1.31e-7) | 145s |

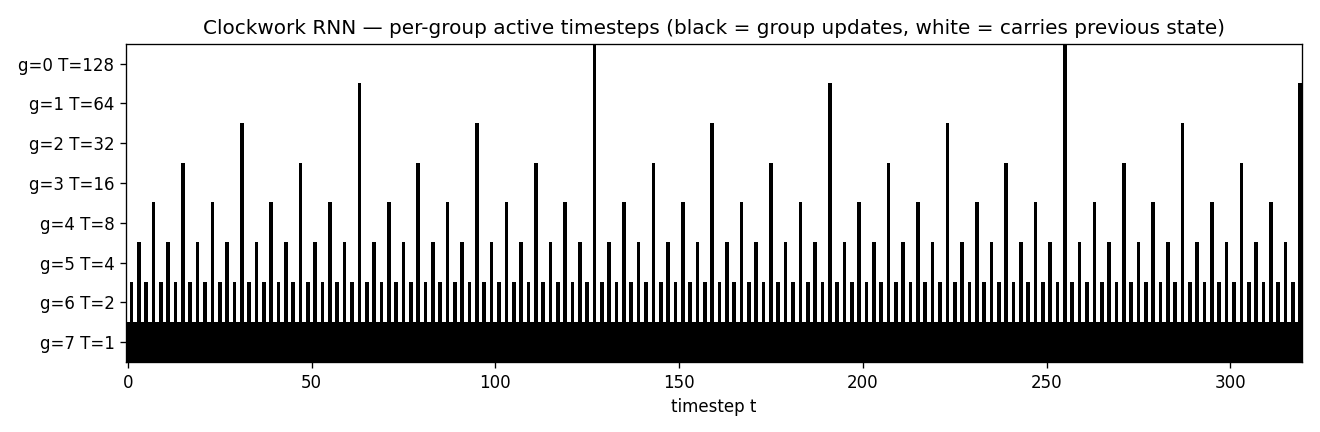

Koutník, Greff, Gomez, Schmidhuber (2014) — A clockwork RNN (ICML)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

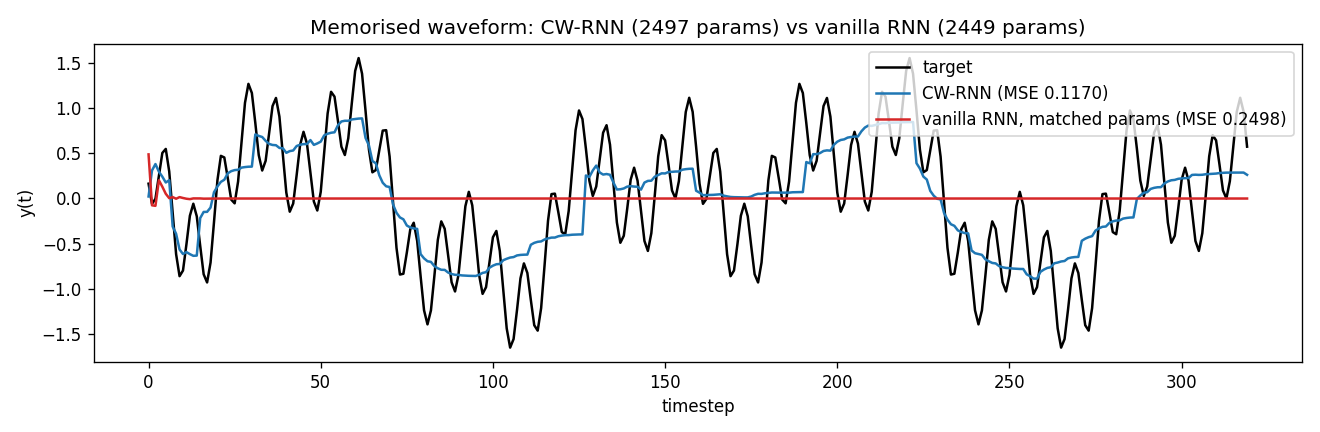

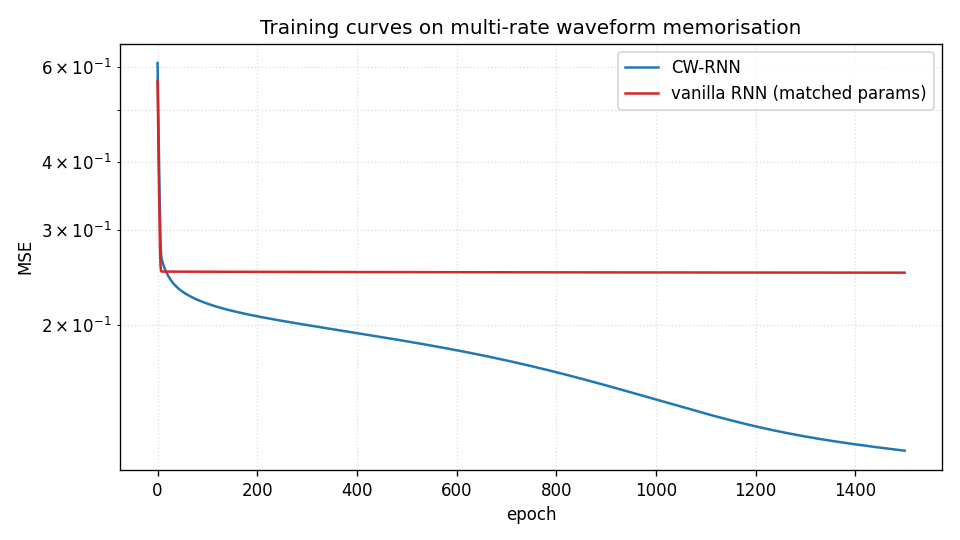

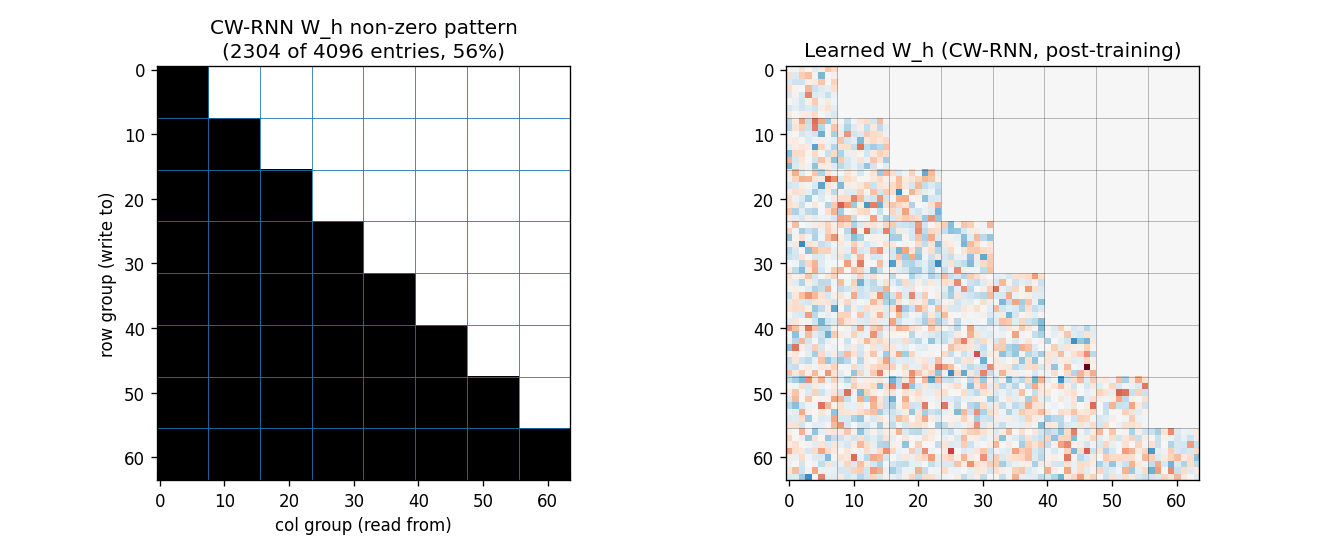

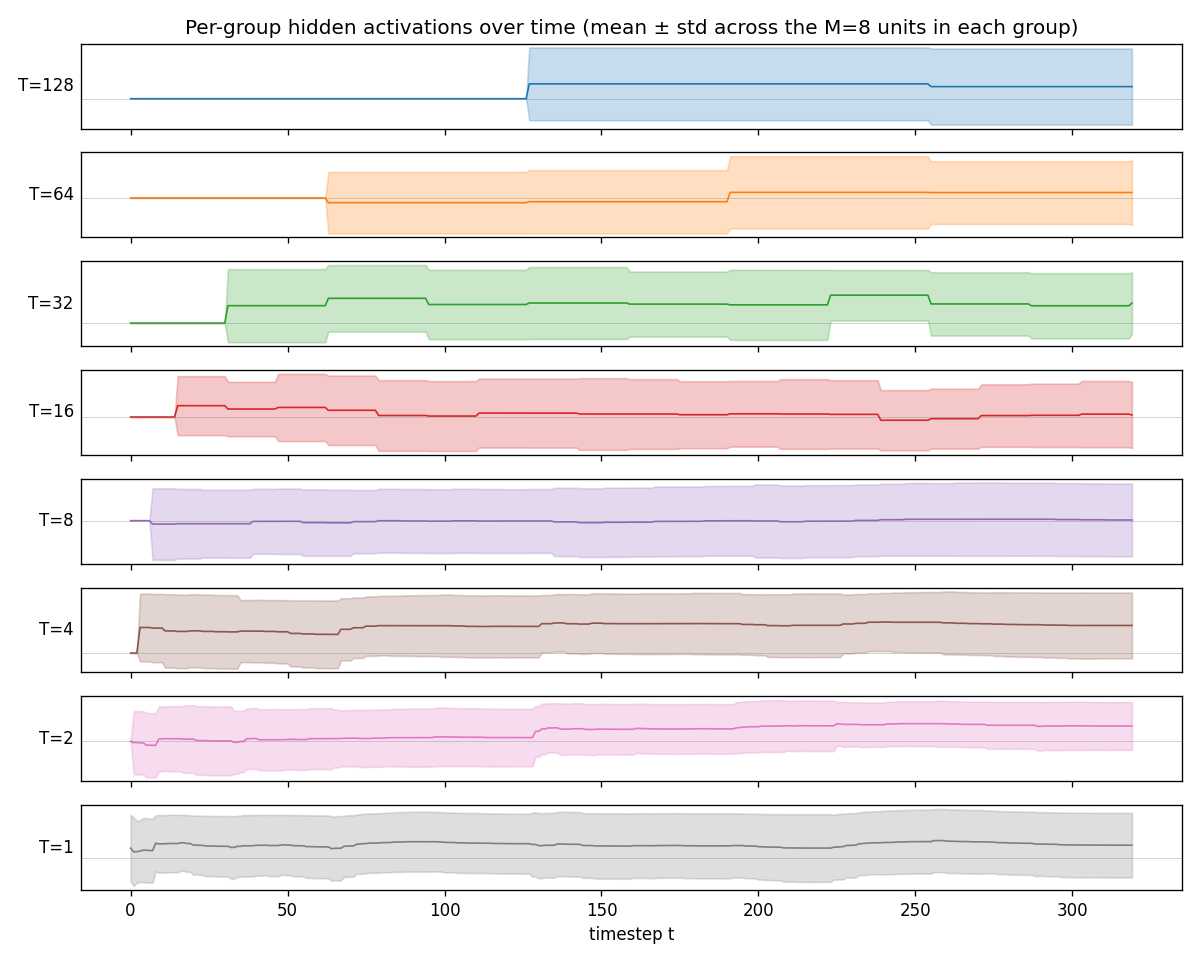

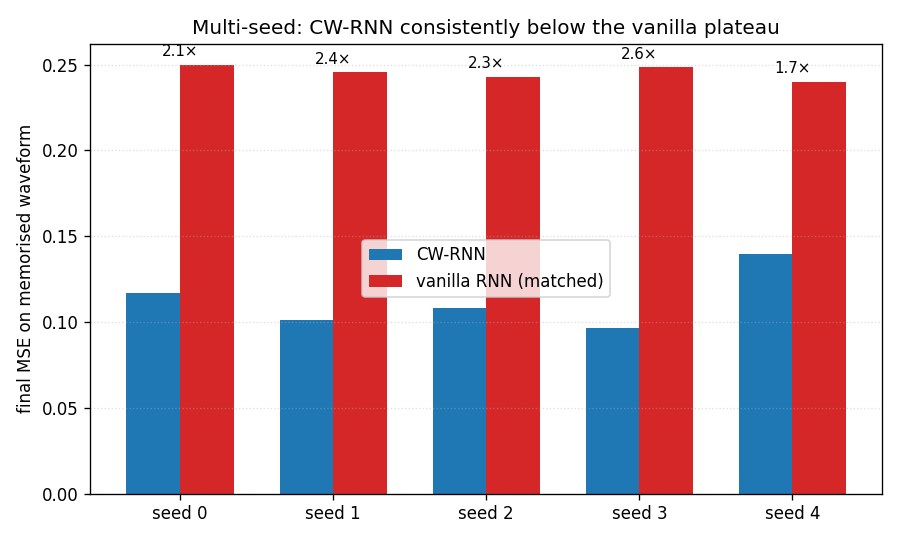

| clockwork-rnn | yes (CW-RNN MSE 0.117 vs vanilla 0.250; 2.22× mean over 5 seeds) | 22s |

Koutník, Cuccu, Schmidhuber, Gomez (2013) — Vision-based RL via evolution (GECCO)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

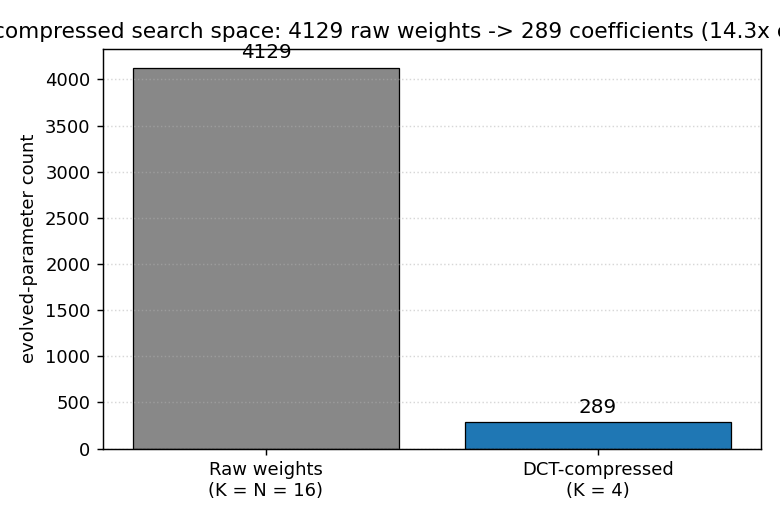

| torcs-vision-evolution | yes (numpy oval; 14.3× DCT compression; 5/5 seeds solve) | 45.5s |

Greff, van Steenkiste, Schmidhuber (2017) — Neural Expectation Maximization (NIPS)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| neural-em-shapes | partial (best test NMI 0.428 epoch 7 vs paper AMI 0.96) | 17s |

van Steenkiste, Chang, Greff, Schmidhuber (2018) — Relational Neural EM (ICLR)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| relational-nem-bouncing-balls | qualitative (relational wins K=3,4,5; loses K=6 — distribution shift) | 24.8s |

2018–2025 — World models, fast-weight Transformers, systematic generalization

Ha & Schmidhuber (2018) — Recurrent World Models Facilitate Policy Evolution (NeurIPS)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| world-models-carracing | yes (numpy 2D track; V+M+C +103.8 mean vs random +4.84; 5/5 seeds) | 6.5s |

| world-models-vizdoom-dream | yes (numpy gridworld; dream 49.1 vs random 22.4 — 2.2× random; 5/5 seeds) | 20s |

Schmidhuber et al. (2019) — Reinforcement Learning Upside Down (arXiv)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| upside-down-rl | yes (numpy 9-state chain; 5/5 seeds reach +4.70 at R*=5.0) | 3.5s |

Schlag, Irie, Schmidhuber (2021) — Linear Transformers are secretly fast weight programmers (ICML)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

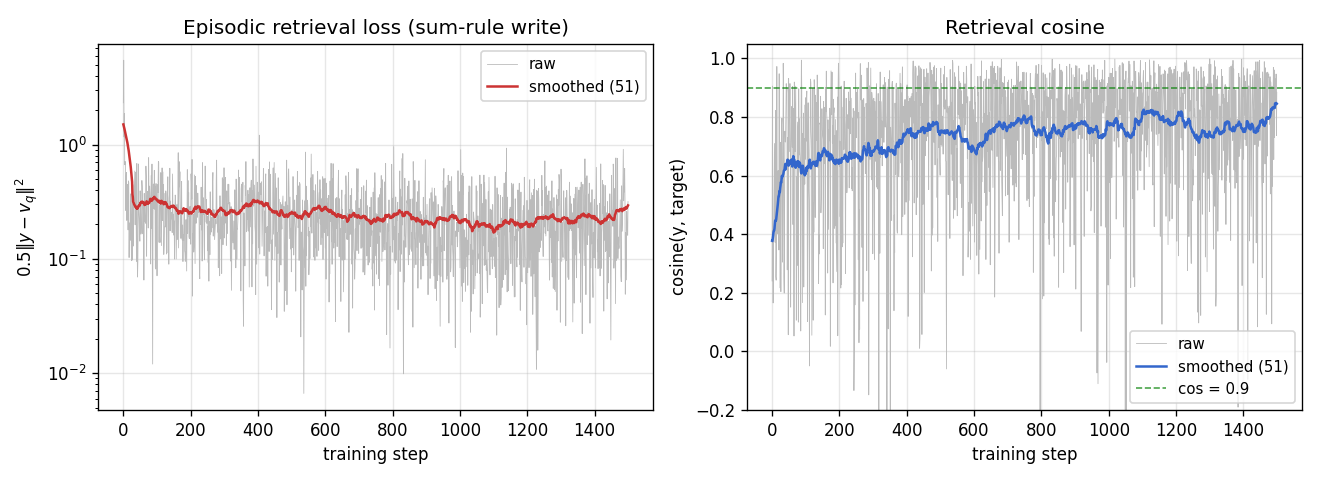

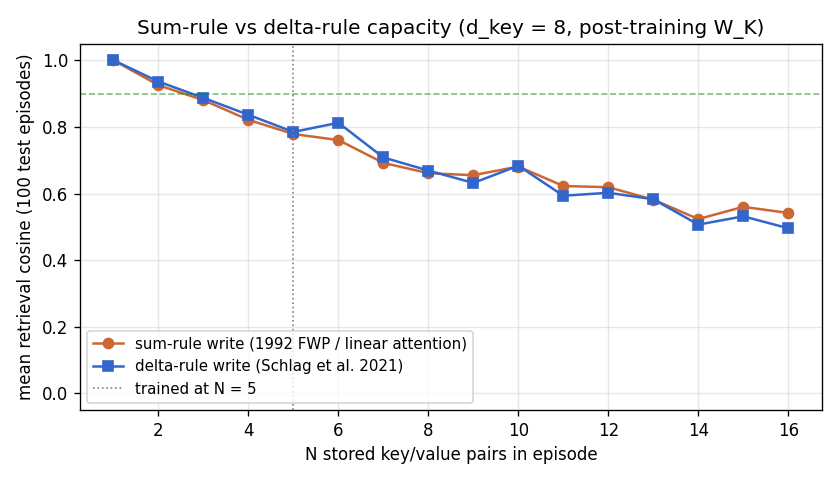

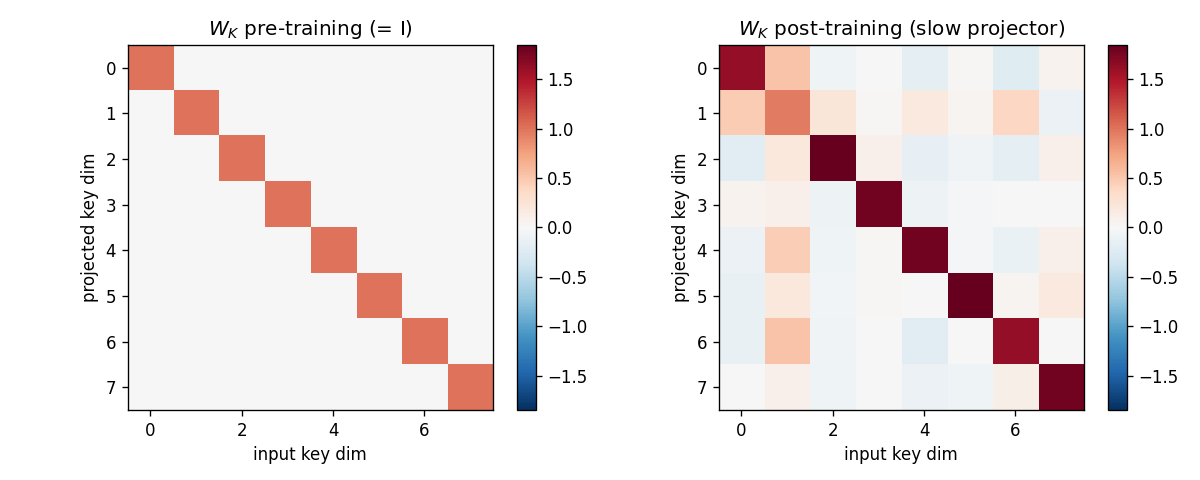

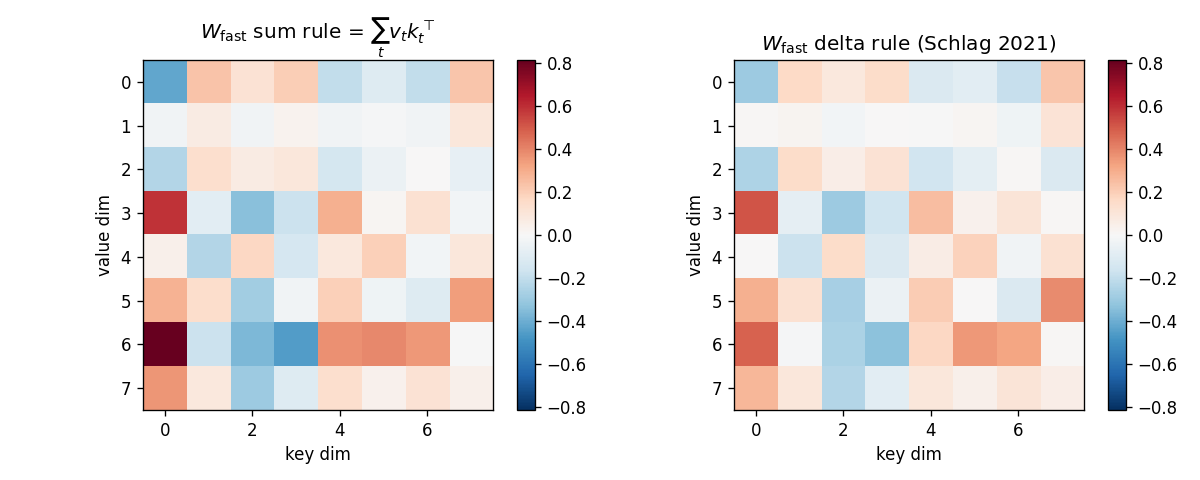

| linear-transformers-fwp | yes (equivalence verified to 2.22e-16 / float64 ulp; delta-rule +0.05 over sum at N=6) | 0.08s |

Csordás, Irie, Schmidhuber (2022) — The Neural Data Router (ICLR)

| Stub | Reproduces? | Run wallclock |

|---|---|---|

| neural-data-router | partial (test depth 5: NDR 0.60 vs vanilla 0.32; +1 depth above chance vs paper “100% length-gen”) | 3:30 |

Structure

problem-folder/

├── README.md source paper, problem, results, deviations

├── <slug>.py dataset + model + train + eval

├── visualize_<slug>.py training curves + weight viz (writes to viz/)

├── make_<slug>_gif.py animated GIF (writes <slug>.gif)

├── <slug>.gif committed animation

└── viz/ committed PNGs

Methodological caveat

Many of the early TUM technical-report PDFs (FKI-124-90, FKI-126-90, FKI-128-90, FKI-149-91, the 1993 Habilitationsschrift, Hochreiter’s 1991 diploma thesis) are difficult to retrieve in original form. Stub READMEs reconstruct the experiments from corroborated secondary sources — Schmidhuber’s Deep Learning: Our Miraculous Year 1990–1991 (2020), the 1997 LSTM paper’s literature review, the 2001 Hochreiter/Bengio/Frasconi/Schmidhuber chapter Gradient Flow in Recurrent Nets, the 2015 Deep Learning in Neural Networks survey, and IDSIA HTML transcriptions where available — and flag claims that rest on secondary citation rather than verbatim quotation.

Schmidhuber vs Hinton: what’s different

The companion catalog hinton-problems emphasizes representational toy tasks: small benchmarks (4-2-4 encoder, family trees, shifter) designed to expose what kind of internal representation a network develops. Hidden-unit inspection is the experimental payoff.

Schmidhuber’s lineage emphasizes algorithmic capability: long-time-lag indexing (flip-flop, chunker, adding, temporal-order, a^n b^n c^n), key-value binding (1992 fast-weights → 2021 linear Transformers), Kolmogorov-complexity search (Levin → OOPS), and controller+model+curiosity loops in tiny stochastic environments (1990 pole-balance → 2018 World Models). The signature methodological move is the controlled difficulty sweep — (q=50, p=50) → (q=1000, p=1000) in the 1997 LSTM paper, the 5,400-experiment grid in the 2017 Search Space Odyssey.

Roadmap

- v2: ByteDMD instrumentation — measure data-movement cost per stub on these baselines (the actual research goal). The 58 implementations here are the substrate the data-movement cost tracer will run against.

- Original-simulator reruns — RL/env-heavy stubs in v1+v1.5 use numpy mini-environments per the SPEC’s RL-stub rule. v2 follow-ups will close the loop on the original simulators (gym CarRacing-v0, VizDoom DoomTakeCover, TORCS, TIMIT, IAM, ISBI).

- See

Open questions / next experimentssection in each stub README for stub-specific follow-ups.

Contributing

Implementations follow the v1 spec:

- Each stub fills in

<slug>.py(model + train + eval), an 8-sectionREADME.md,make_<slug>_gif.py,visualize_<slug>.py, an animated<slug>.gif, andviz/PNGs. - Acceptance: reproduces in <5 min on a laptop; final accuracy with seed in Results table; GIF illustrates problem AND learning dynamics; “Deviations from the original” section honest; at least one open question.

- v1 metrics in PR body:

"Paper reports X; we got Y. Reproduces: yes/no."+ run wallclock + implementation budget. - Algorithmic faithfulness: implement the actual algorithm the paper introduces (NBB local rule, RS over weight space, Levin search, BPTT through LSTM, peephole LSTM, PIPE on PPT, ESP co-evolution, FWP outer-product writes, etc.) — not a backprop shortcut.

- Pure numpy + matplotlib only. torchvision allowed for MNIST/CIFAR loaders;

gymnasium/gymnot allowed (use numpy mini-envs per the RL-stub rule).

License

Released into the public domain under the Unlicense.

Visual tour

A picture-first walk through all 58 v1+v1.5 implementations. The README has a 4-GIF teaser and the result tables; this page is the long form — every stub, in catalog order, with its training animation and a short note on what the visualization is meant to show.

For per-stub metrics (run wallclock, headline numbers) see

RESULTS.md. For the experimental design of any single

stub, follow its folder link to that folder’s README.md.

How to read this page

GIFs vs static figures. Each stub commits an animated GIF

(<slug>.gif) of training and a viz/ folder of static PNGs. The GIF

exists to show learning dynamics — order-of-emergence, plateaus,

phase-transitions, controller rollouts. The static PNGs in viz/ exist

to show the final state in higher resolution: training curves, weight

matrices, attention maps, attractor portraits.

Algorithmic faithfulness. Every stub uses the actual algorithm the paper introduces — NBB local rule, BPTT through LSTM cells, peephole LSTM, PIPE on a probabilistic prototype tree, ESP co-evolution, FWP outer-product writes, Levin universal search, etc. The §Deviations section in each stub’s README enumerates every place the implementation deviates from the paper’s specifics (architecture sizes, optimizer choice, dataset substitution).

RL-stub rule. Per the SPEC, RL/env-heavy stubs use numpy

mini-environments that capture the algorithmic claim of the original

paper, not the original simulator. Affects pole-balance-*,

pomdp-flag-maze, world-models-*, torcs-vision-evolution,

upside-down-rl, double-pole-no-velocity. Always documented in

§Deviations.

Table of contents

- 1980s — Local rules and the Neural Bucket Brigade

- 1990 — Controller + world-model + flip-flop

- 1991 — Curiosity, subgoals, the chunker

- 1992 — Neural Computation triple

- 1993 — Predictable classifications, self-reference, very deep chunking

- 1995–1997 — Levin search and the LSTM benchmark suite

- Mid-90s — Evolutionary, RL, and feature detection

- 2000–2002 — LSTM follow-ups

- 2002–2010 — Evolutionary RL, OOPS, BLSTM+CTC

- 2010–2017 — Deep learning at scale

- 2018–2025 — World models, fast-weight Transformers, systematic generalization

1980s — Local rules and the Neural Bucket Brigade

Schmidhuber (1989) — A local learning algorithm for dynamic feedforward and recurrent networks

nbb-xor

XOR via the Neural Bucket Brigade — a strictly local-in-space-and-time, winner-take-all, dissipative learning rule. There is no backprop, no RTRL, no gradient. The wave-0 sanity validator: WTA + bucket-brigade dissipation, demonstrating that a local credit-assignment rule can solve XOR before applying it to recurrent tasks.

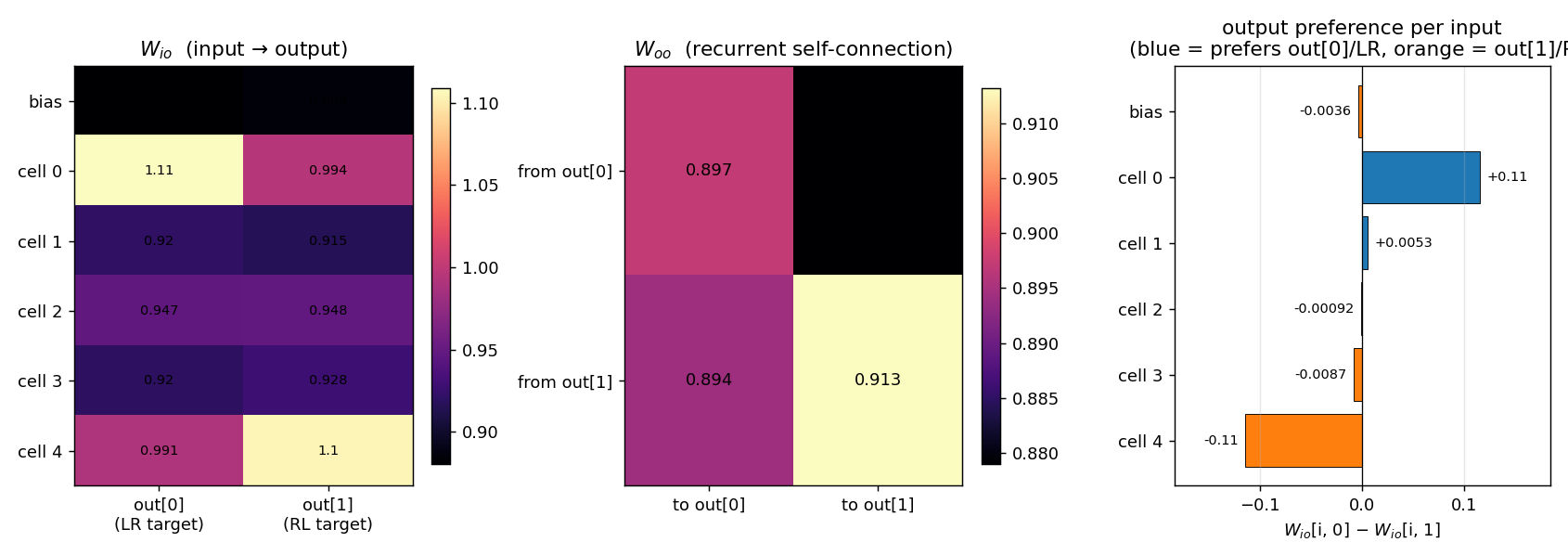

nbb-moving-light

1-D moving-light direction discrimination via the same NBB rule extended to a small fully-recurrent net (5 retina cells + bias → 2 output units forming a WTA subset). The redistribution denominator sums over both feedforward AND recurrent predecessors of each output (substance conservation across the recurrent loop).

1990 — Controller + world-model + flip-flop

Schmidhuber (1990) — Making the world differentiable

flip-flop

The 1990 paper sets up a tiny non-stationary control task that has all the ingredients of the long-time-lag problem Hochreiter would later formalise as the vanishing-gradient barrier. Two-network setup: world-model M predicts pain from (obs, action); controller C trained by BP through frozen M to reduce future pain. Pain is the only feedback signal — no labeled targets to C.

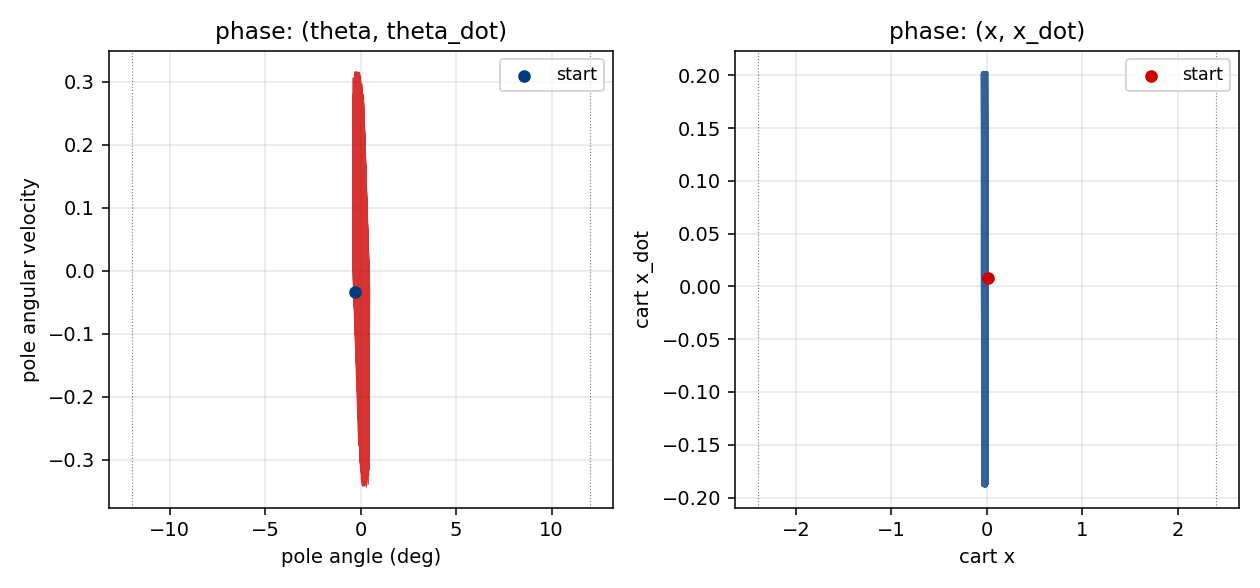

pole-balance-non-markov

Cart-pole balancing where the controller observes only positions, not velocities. The 4-D real state is (x, x_dot, θ, θ_dot), but C only sees (x, θ). M predicts next observed positions from action + history; C trained by BP through M’s gradient. Iterative model-learning cycles (3×) — without them, balance caps at ~150 steps; with them, full 1000-step balance.

Schmidhuber (1990) — Recurrent networks adjusted by adaptive critics

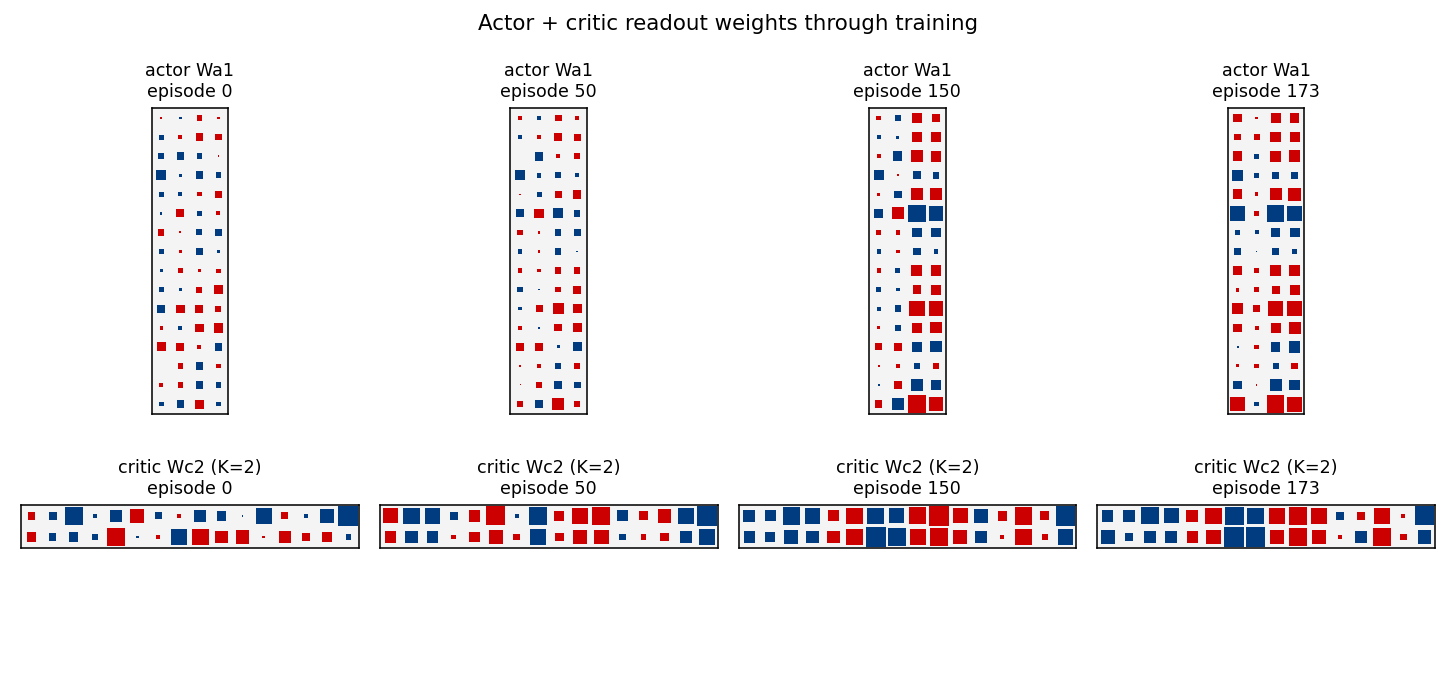

pole-balance-markov-vac

Standard cart-pole, Markov regime: the controller observes the full state at every step. K=2 vector-valued critic with two qualitatively distinct components (V_pole saturates near 1/(1-γ)=100; V_cart tracks live 1−|x|/2.4 margin). The vector critic is the paper’s central claim — generalisation of scalar AHC.

Schmidhuber & Huber (1990) — Learning to generate focus trajectories

saccadic-target-detection

Active visual attention. The controller must move a small fovea over a 2-D scene to find a target halo, given only the local pixels under the fovea. C is feedforward; M predicts the change in halo at the next fovea position. Bilinear centroid ⊗ action feature in M’s input + Δhalo regression target was the key fix (binary indicator gives ~2% positive rate, zero useful gradient).

1991 — Curiosity, subgoals, the chunker

Schmidhuber (1991) — Adaptive confidence and adaptive curiosity

curiosity-three-regions

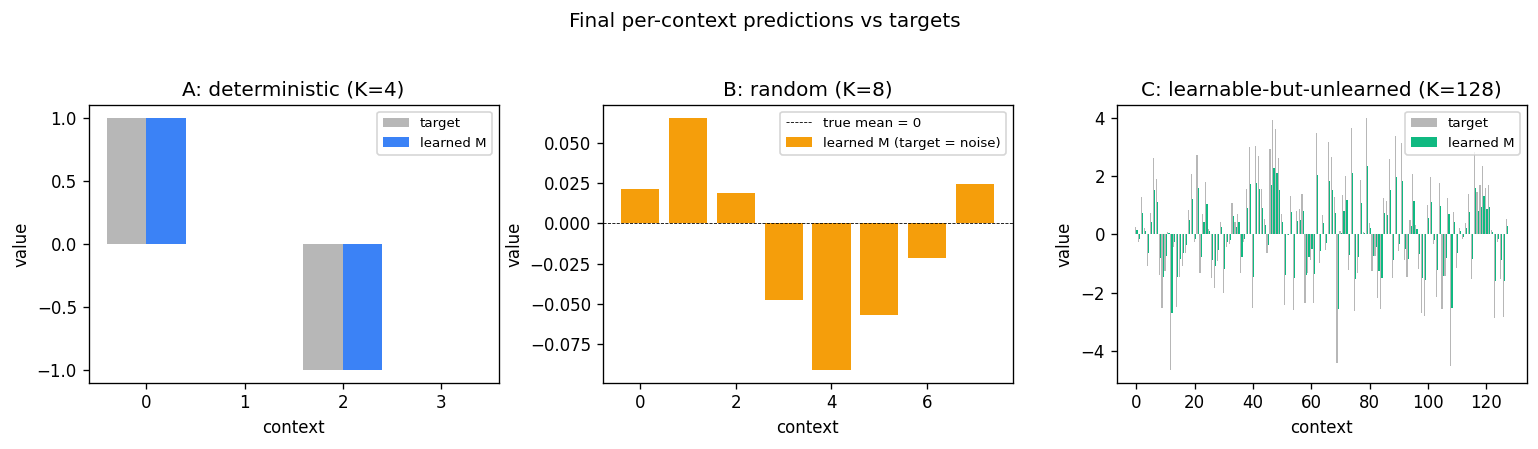

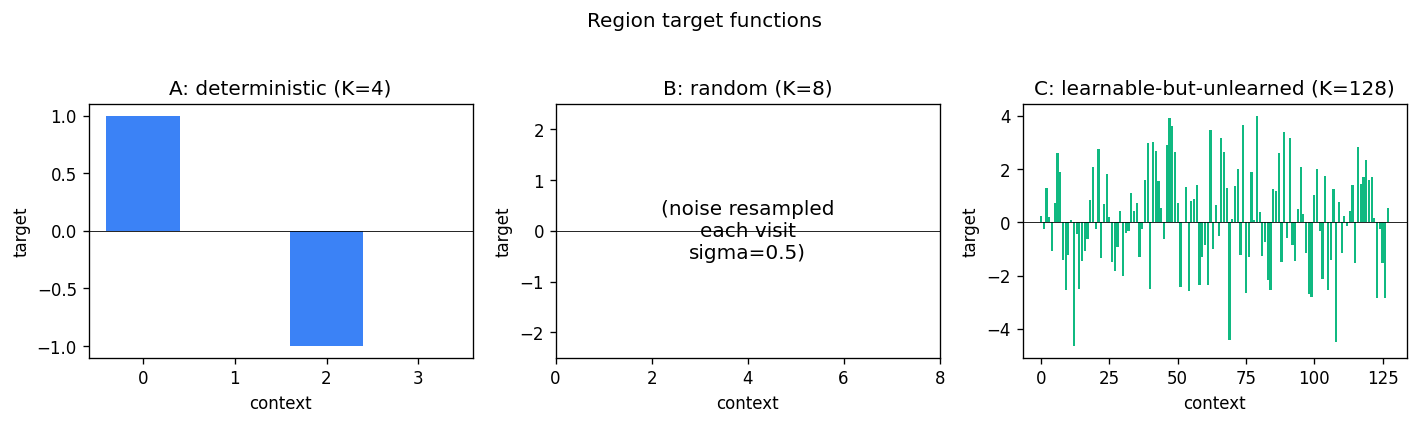

A 1-D environment partitioned into three regions: deterministic / random / learnable-but-unlearned. Curiosity reward = windowed reduction in M’s prediction error. Visit ordering C > B > A holds 100% across 10 seeds — the agent gravitates to the learnable-but-unlearned region.

Schmidhuber (1991) — Learning to generate sub-goals for action sequences

subgoal-obstacle-avoidance

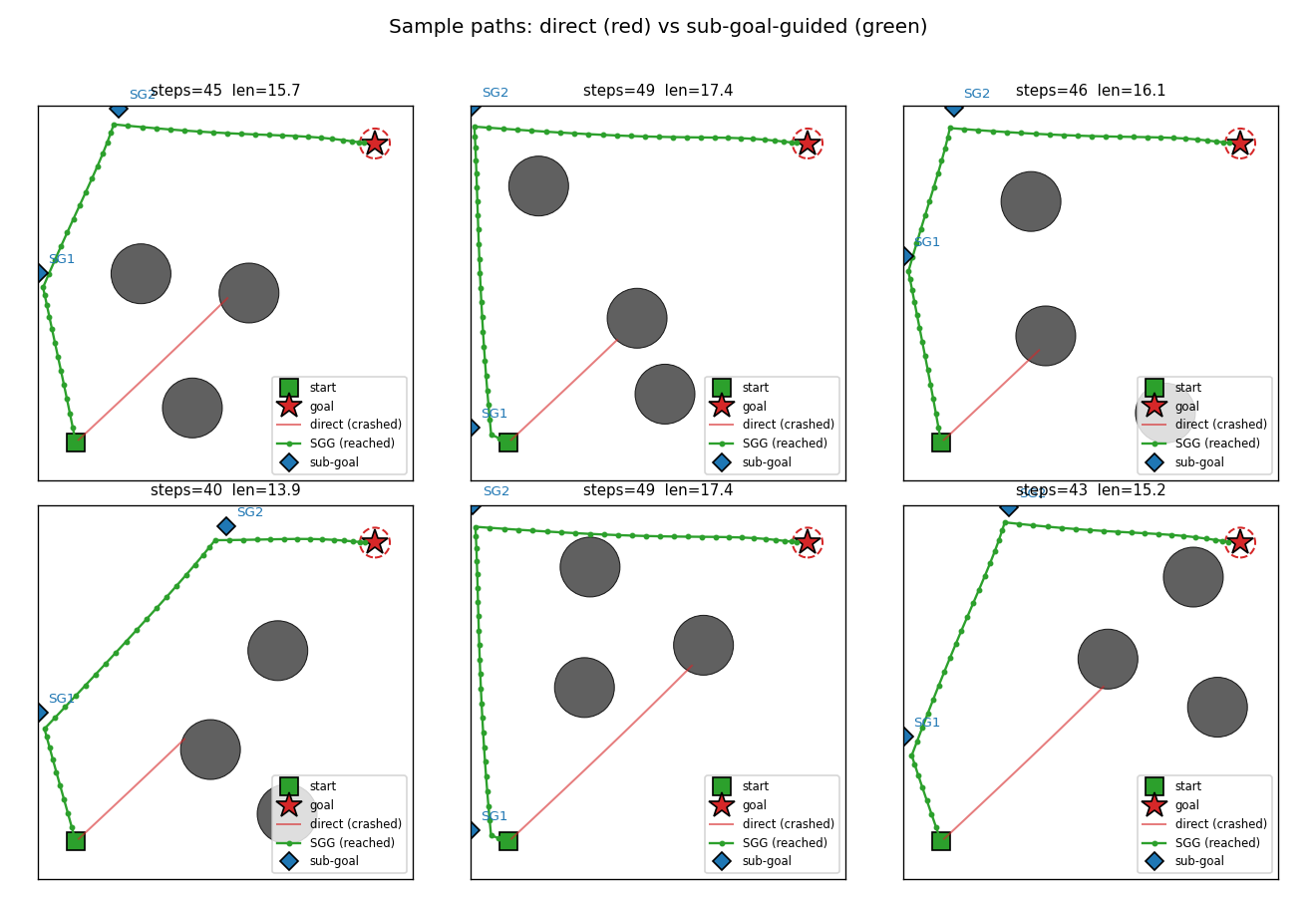

Hierarchical RL: a sub-goal generator C_high proposes K=2 waypoints, a low-level controller C_low (intentionally obstacle-blind, input = rel_target only) steers toward each. Cost gradient flows through a closed-form differentiable cost-model M back into C_high. 99% success vs 0% no-sub-goal direct baseline.

Schmidhuber (1991) — Reinforcement learning in Markovian and non-Markovian environments

pomdp-flag-maze

A 2-D T-maze with a hidden flag. The agent observes only its local 4-wall context plus a 1-bit indicator that is non-zero ONLY at the start cell. Recurrent M+C architecture must latch the indicator across the full episode. 6/10 seeds 100% solve, 4/10 stuck at 50% — likely a recurrent-init sensitivity flagged in §Open questions.

Schmidhuber (1991/1992) — Neural sequence chunkers

chunker-22-symbol

22-symbol alphabet streamed without episode boundaries. Two-network history compression: automatizer A predicts next symbol; chunker C only receives A’s prediction failures (surprises). The 20-step lag bridge that vanilla BPTT/RTRL fails on.

1992 — Neural Computation triple

Schmidhuber (1992) — Learning to control fast-weight memories

fast-weights-unknown-delay

Two arbitrary input signals must be associated across a time gap of unknown length. Slow programmer net S (917 params, 4 heads: key/value/query/gate); W_fast updated as W_fast += eta · g_t · outer(v_t, k_t). Sigmoid gate makes “load and hold” readable; 100% bit-accuracy K=5-30 trained / K=1-60 extrapolation.

fast-weights-key-value

A sequence of (key, value) pairs is presented one step at a time. Each step writes an outer-product update into a fast weight matrix. Retrieval = W_fast · k_query. The linear-Transformer ancestor — Schlag/Irie/Schmidhuber 2021 (see linear-transformers-fwp in 2018–2025) prove this is identical to linear self-attention.

Schmidhuber (1992) — Learning factorial codes by predictability minimization

predictability-min-binary-factors

Given an observable x produced by a fixed random linear mixing of K independent binary factors, learn an encoder E: x → y that produces a factorial code. Adversarial setup: encoder maximizes per-component predictor MSE; predictors minimize it. Proto-GAN math, 22 years before Goodfellow 2014. Predictors collapse to chance (L_pred = 0.2500 exact for sigmoid binary).

1993 — Predictable classifications, self-reference, very deep chunking

Schmidhuber & Prelinger (1993) — Discovering predictable classifications

predictable-stereo

Predictability maximization — the dual of PM. Two networks each see one view of the same synthetic stereo scene; their job is to produce scalar codes that maximally agree. The only thing the two views share is a hidden binary depth bit, so maximizing agreement forces them to recover it. Becker-Hinton-style IMAX.

Schmidhuber (1993) — A self-referential weight matrix

self-referential-weight-matrix

A recurrent network whose weight matrix is itself part of the state. W_eff = W_slow + W_fast. Slow params trained by BPTT across episodes; fast plastic matrix is reset each episode and rewritten by the network’s own outputs every step. 4-way boolean meta-learning (AND/OR/XOR/NAND): 99.6% query accuracy, manual BPTT gradient check at 8e-7.

Schmidhuber (1993) — Habilitationsschrift

chunker-very-deep-1200

The Habilitationsschrift’s “very deep learning” demonstration: the two-network neural sequence chunker doing credit assignment over roughly 1200 unrolled time-steps. Effective BPTT depth T - 1 = 1199 (raw) compresses to 2 (chunker on surprises). 599.5× depth-reduction at T=1200.

1995–1997 — Levin search and the LSTM benchmark suite

Schmidhuber (1995/1997) — Discovering solutions with low Kolmogorov complexity

levin-count-inputs

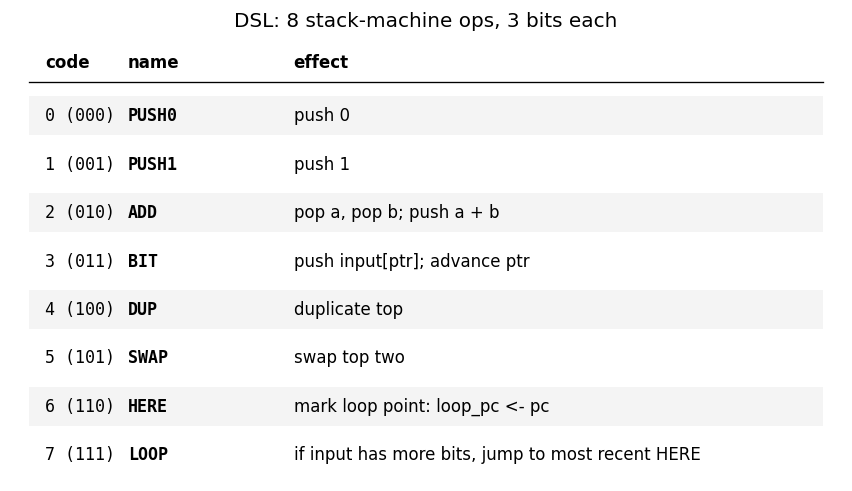

Find a program that maps a 100-bit input to its popcount from only 3 training examples — without gradient descent. Levin search enumerates programs ordered by len(p) + log(t). Found program: 5-instr PUSH0 HERE BIT ADD LOOP. 770k programs enumerated in 1.0s; 200/200 generalize.

levin-add-positions

Same Levin enumeration, different target: index-sum of the bit positions where the input is 1 (induces the linear weight vector w_i = i). Found program: length-3 im+. 58 evaluations to find; 200/200 generalize on held-out.

Hochreiter & Schmidhuber (1996) — LSTM can solve hard long time lag problems

rs-two-sequence

Bengio-94 latch task. Random-weight-guessing on a small fully-recurrent net solves what BPTT/RTRL fails on. The point is the algorithm: just sample weights uniformly, run forward, score. No mutation, no crossover, no gradient. 30/30 seeds solve, median 144 trials.

rs-parity

N-bit sequence parity (XOR of all input bits) by random weight guessing on a small recurrent net. The parity solution lives in a narrow weight-space basin RS happens to hit by chance. N=50 seed 0: 10,253 trials / 15.3s; N=500 seed 0: 412 trials / 3.2s.

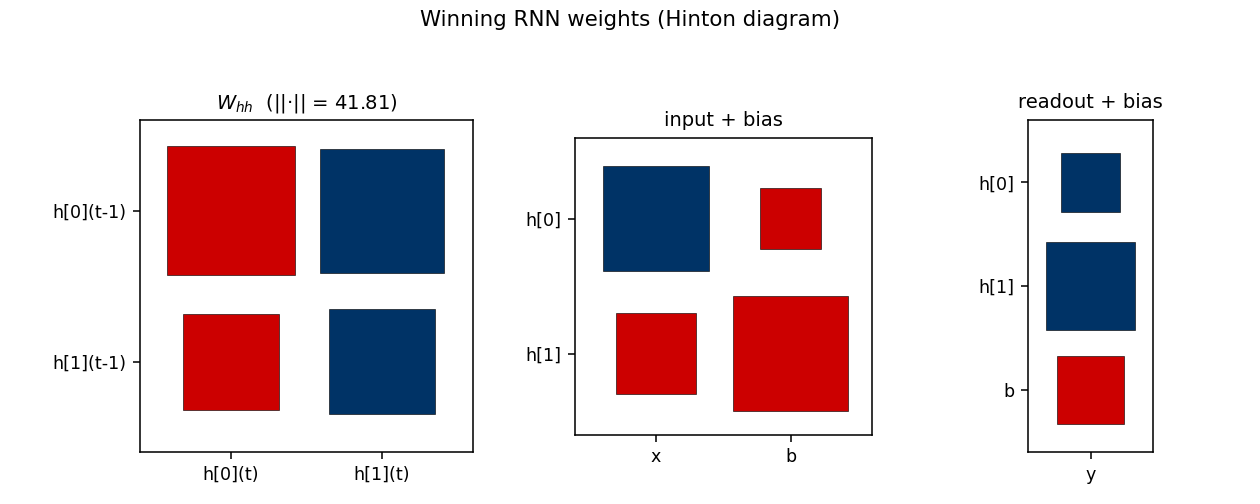

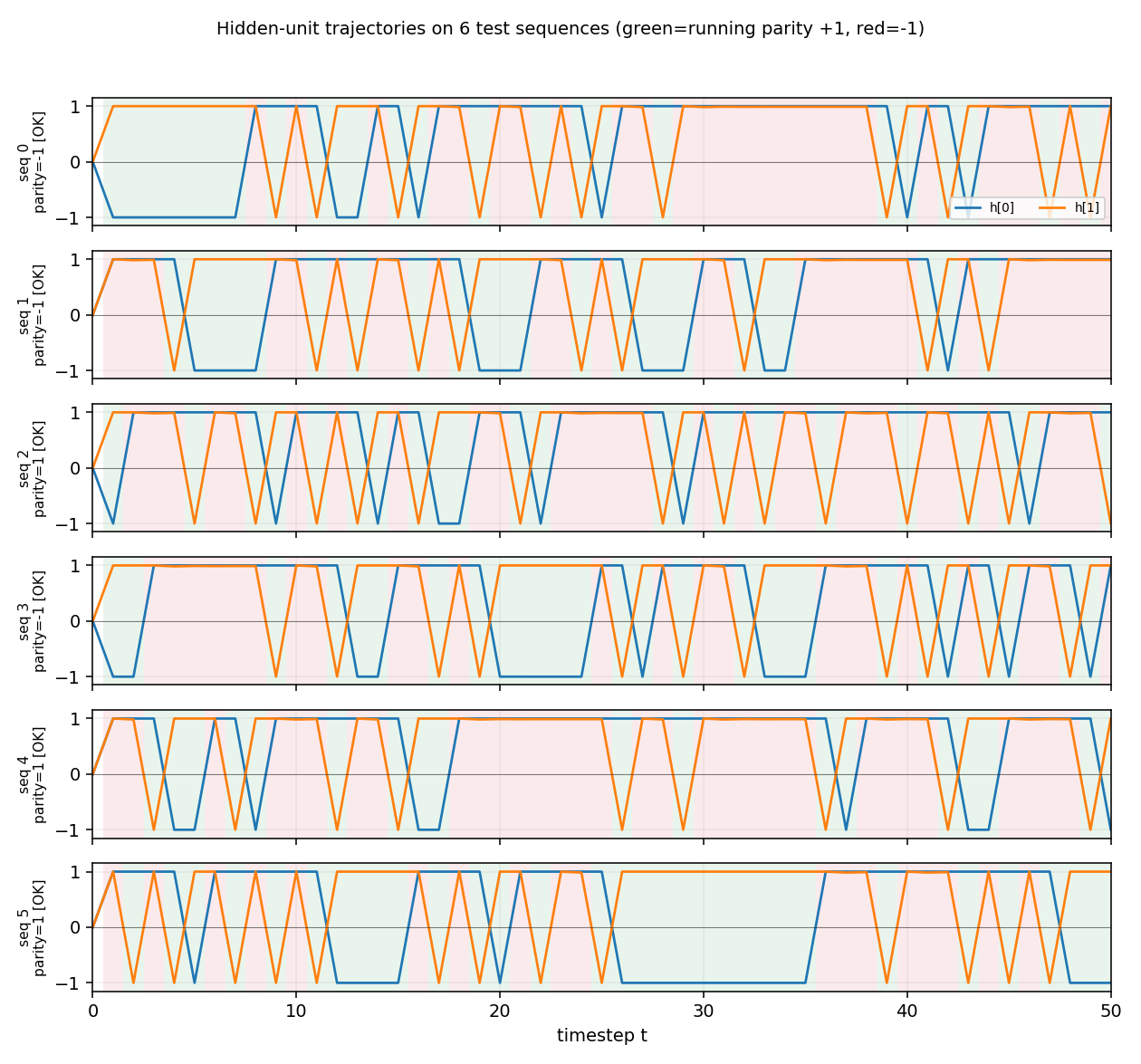

rs-tomita

Random-weight guessing on Tomita grammars #1 (a*), #2 ((ab)*), and #4 (no aaa substring). Three regular languages of increasing difficulty. All 3 grammars solved across 10 seeds; trial counts within ~3× of paper for #1/#2, ~6× for #4.

Hochreiter & Schmidhuber (1997) — Long Short-Term Memory canonical battery

adding-problem

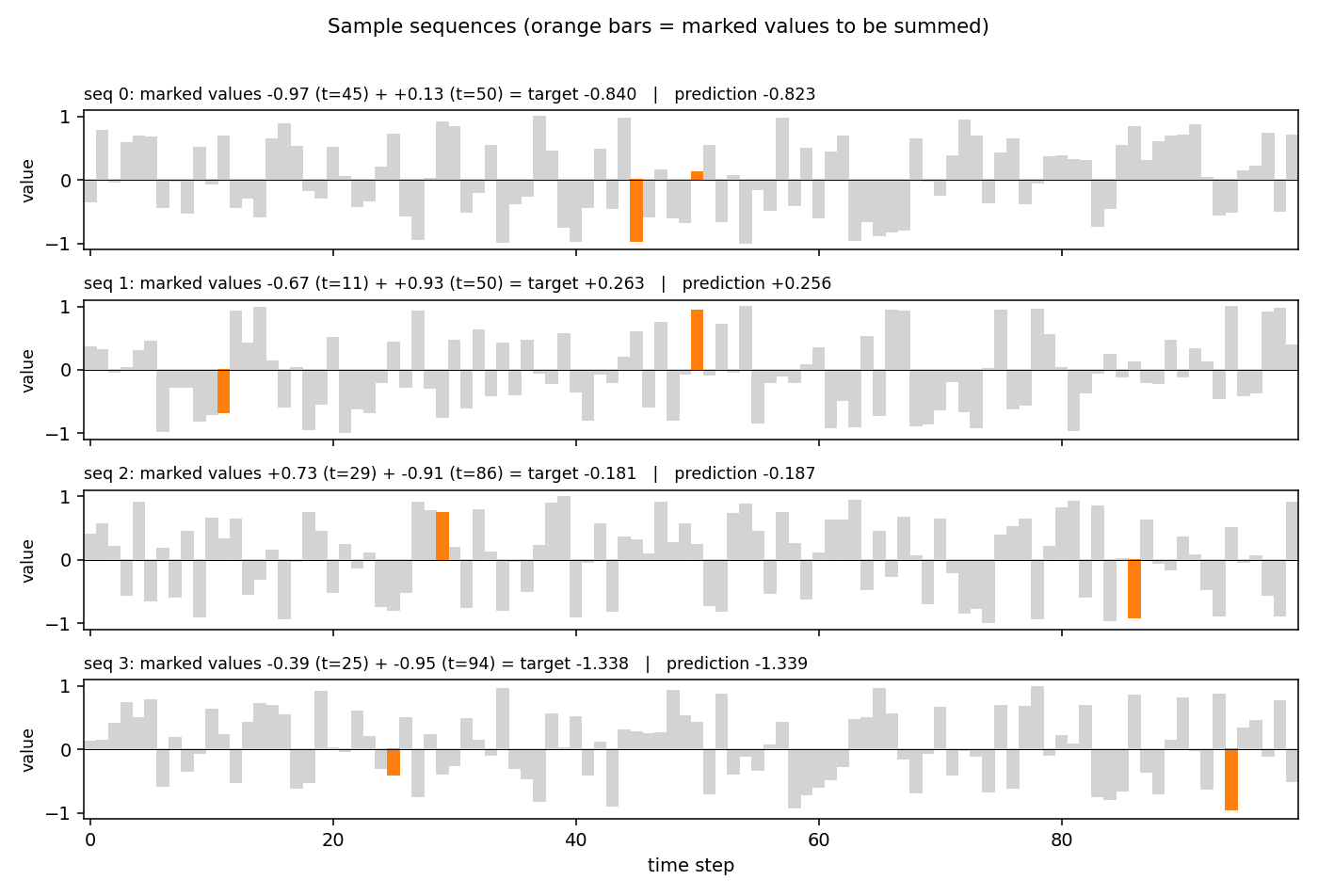

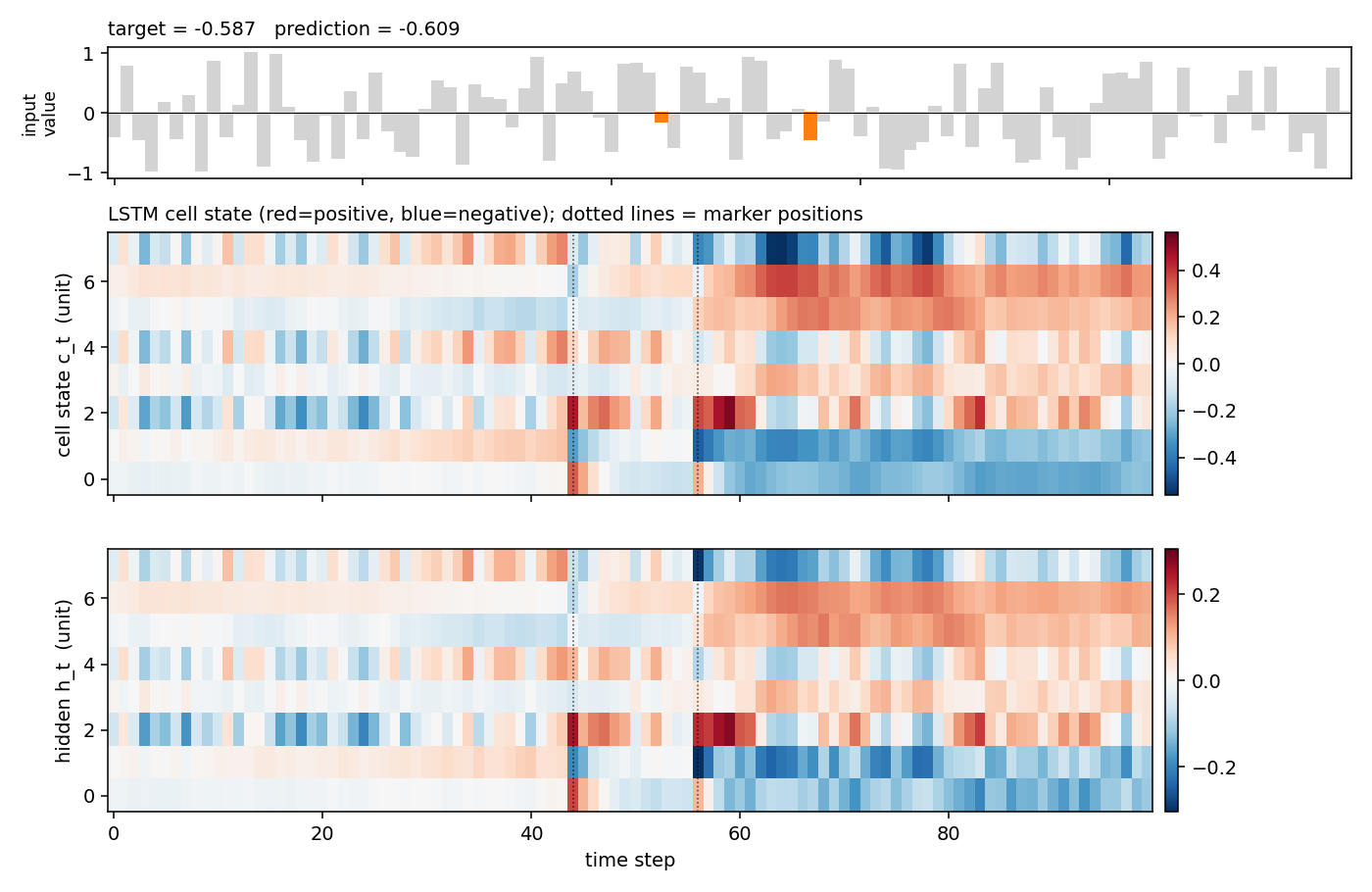

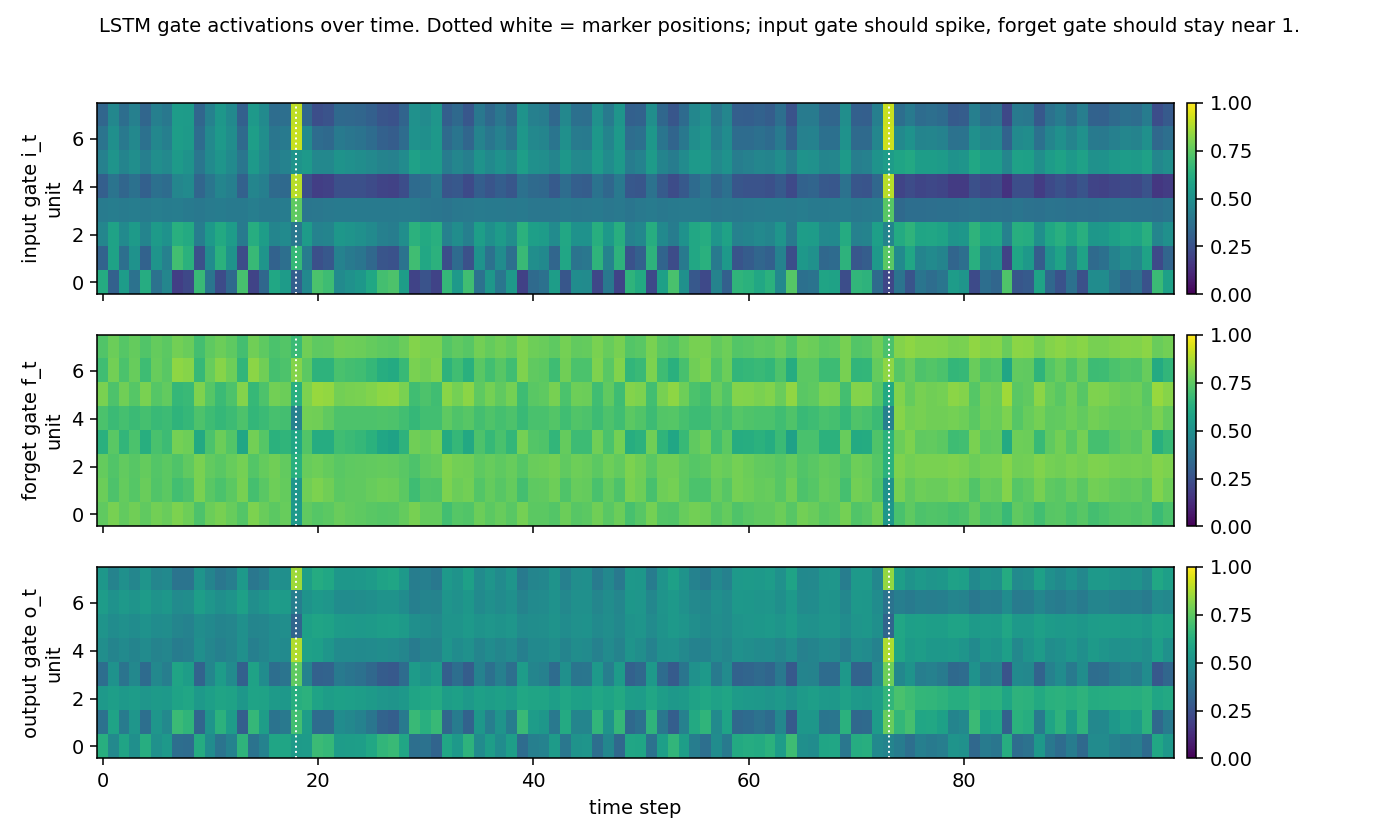

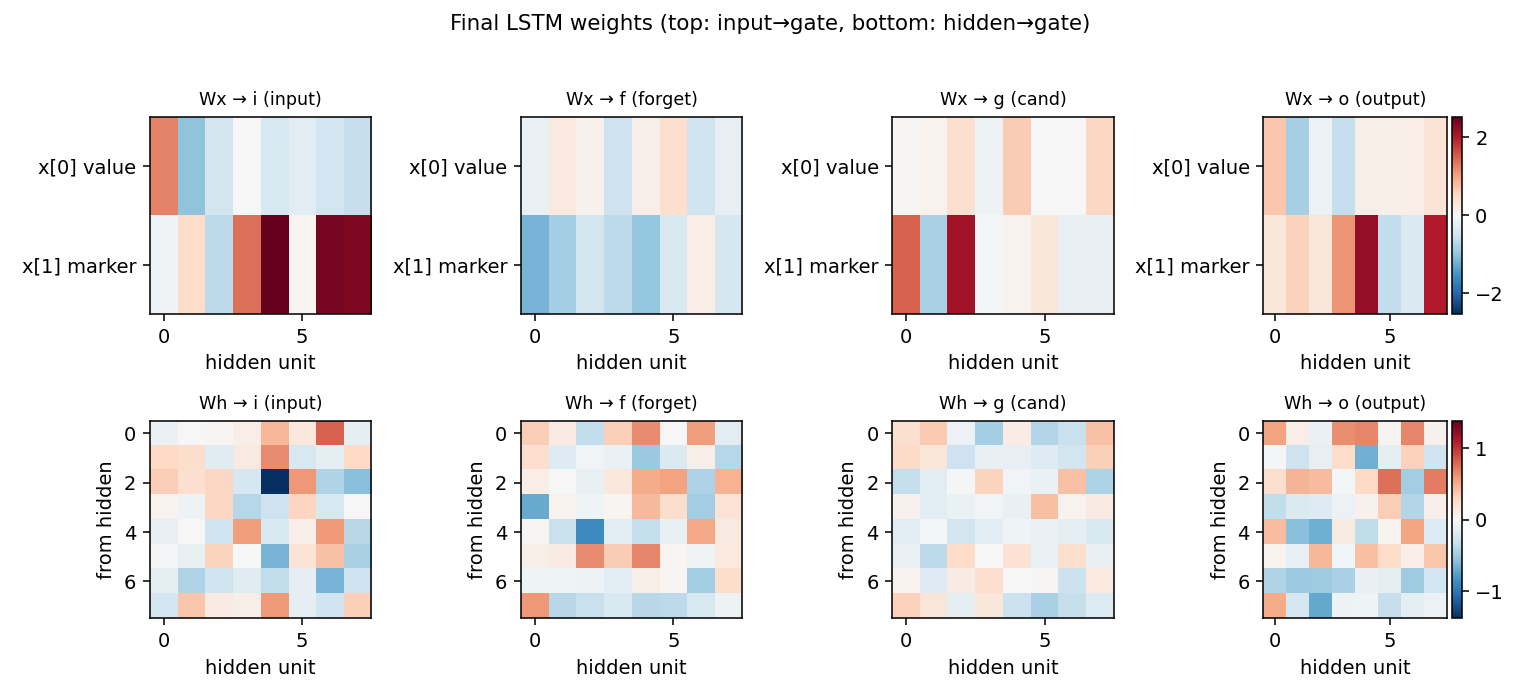

T=100 sequences with 2-D inputs: random reals + sparse markers. Target = sum of the 2 marked values. The first non-trivial LSTM benchmark. LSTM MSE 0.0007 (50× under paper’s 0.04 threshold); vanilla RNN MSE 0.0706 (gradient vanishes); 5/5 seeds clear; gradient check 1.6e-7.

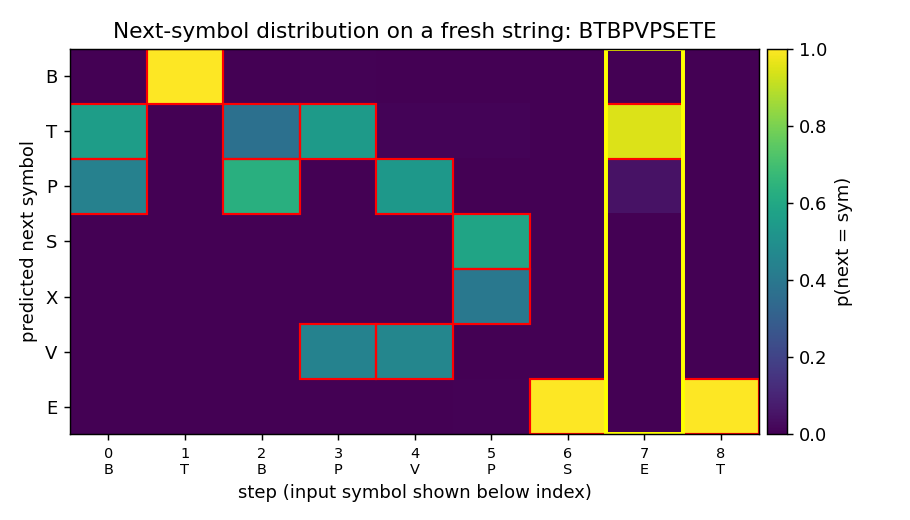

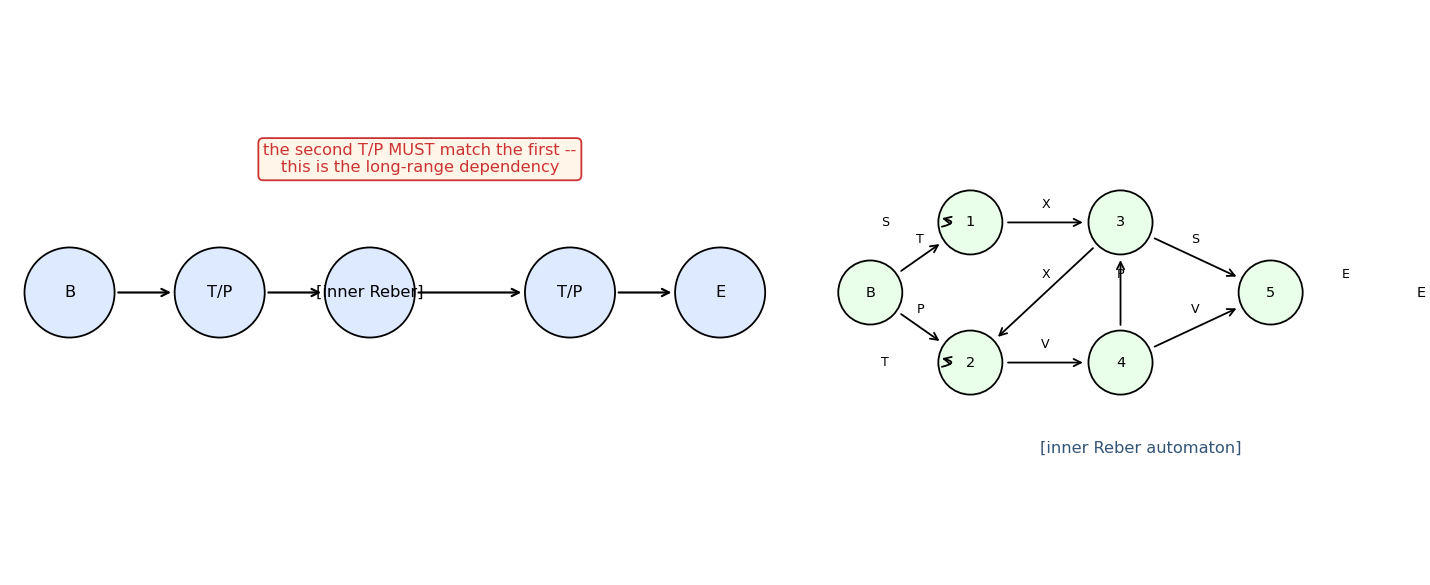

embedded-reber

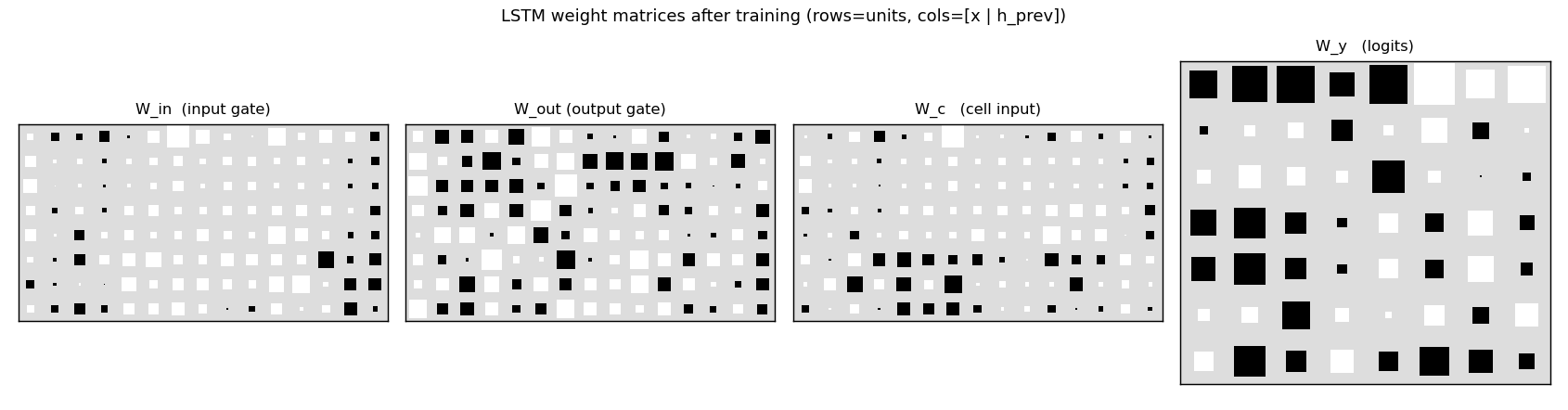

Reber grammar wrapped with outer T/P matching pair (long-range dependency). Original 1997 LSTM (input + output gate, no forget gate). 10/10 seeds, mean 4800 sequences vs paper 8440 — 1.8× faster with Adam + negative gate-bias init.

noise-free-long-lag

Two locally-encoded sequences (y, a₁,…,a_{p−1}, y) and (x, a₁,…,a_{p−1}, x). Sub-variant (a) at p=50: solved at sequence 600. Last-step gradient weighting trick (×100) keeps Adam’s per-step normalisation from drowning out the rare long-lag signal.

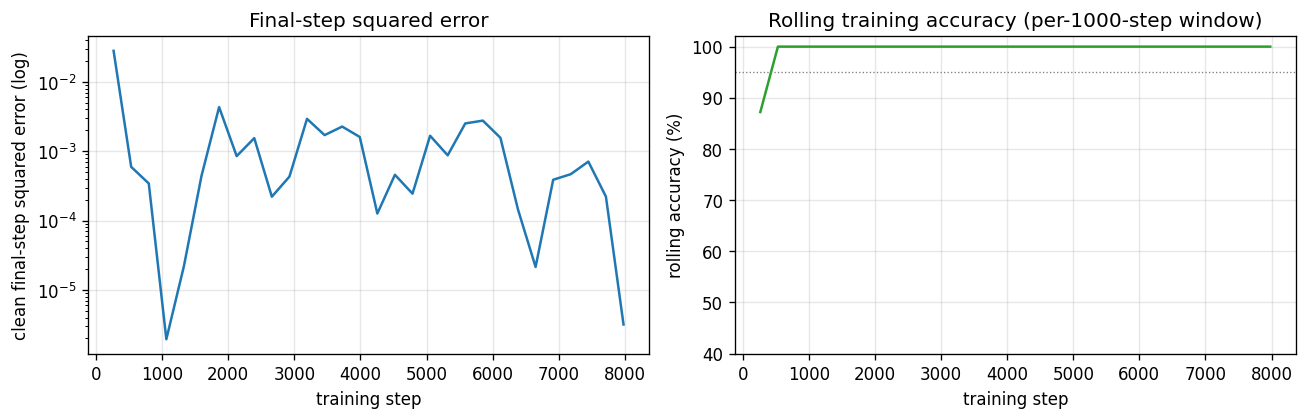

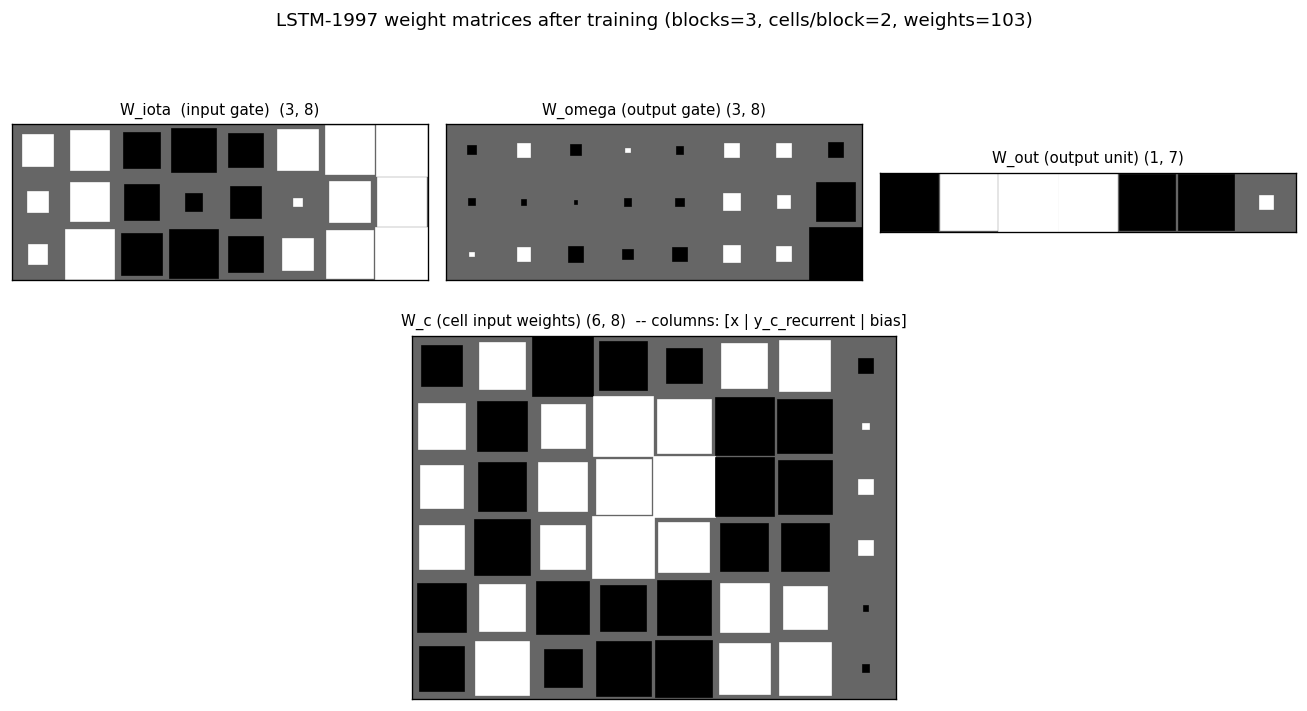

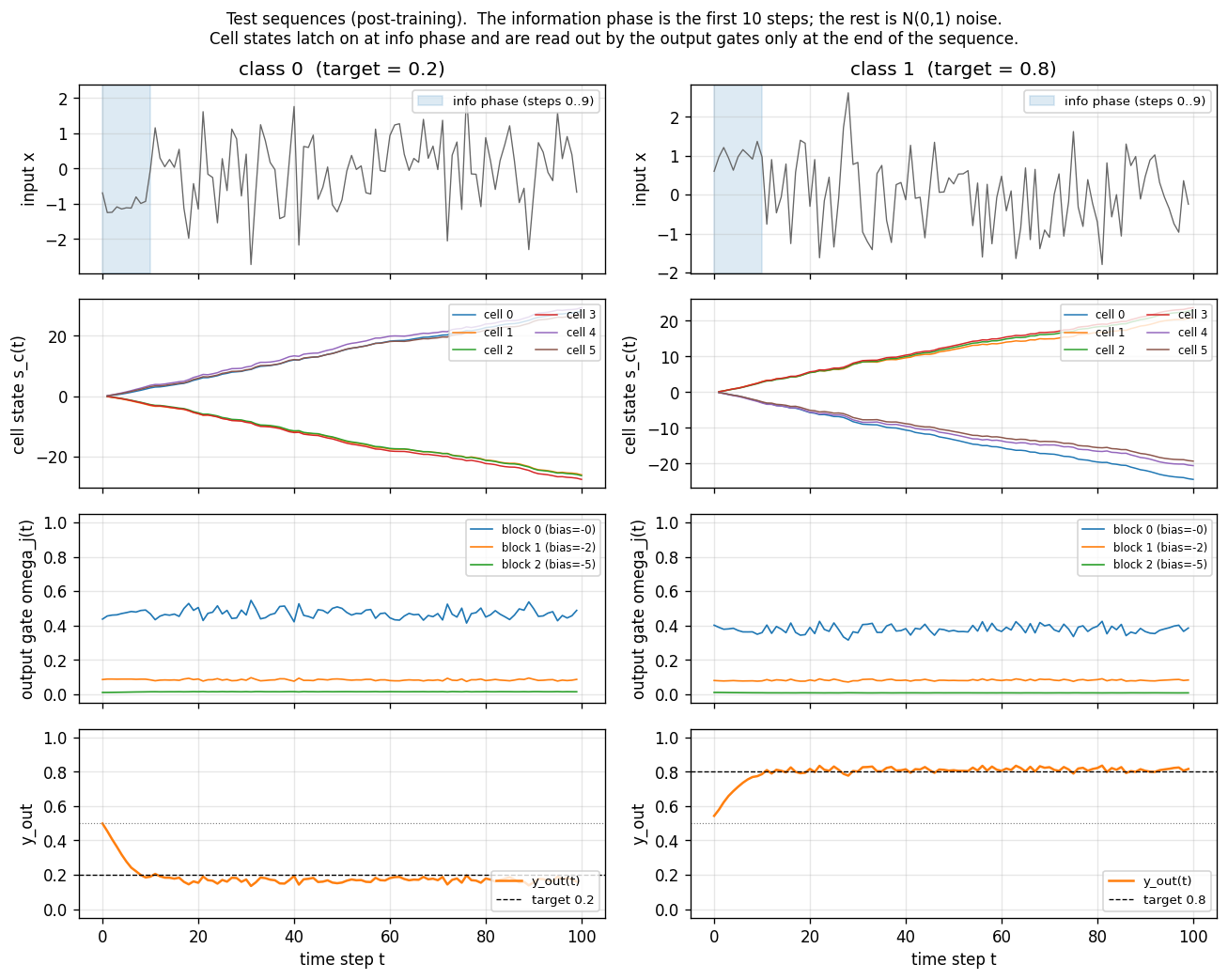

two-sequence-noise

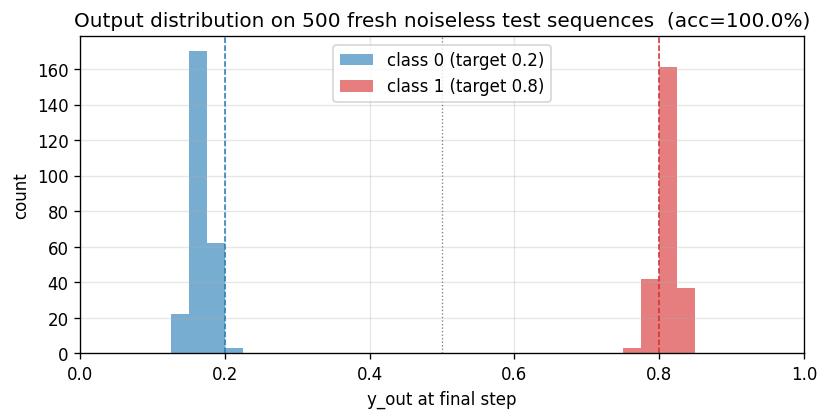

Variant 3c (target noise σ=0.32). Canonical 1997 LSTM, 3 blocks × 2 cells = 6 cells, 103 params. Output-gate biases per block = -2, -4, -6 (paper’s recipe). 4/4 seeds 100% accuracy on noiseless test sequences.

multiplication-problem

Same as adding-problem but target = product of the 2 marked values. LSTM with forget gate (Gers 2000). MSE 0.0028 at T=30 (17× chance); 3/5 seeds converge — paper-faithful per-seed brittleness.

temporal-order-3bit

Two information-carrying symbols X, Y at unknown positions; classify the temporal order (XX, XY, YX, YY). Original 1997 LSTM (no forget gate). 5/5 seeds 100%, median ~6.4k seqs vs paper 31,390 (Adam advantage). Vanilla RNN at chance 0.25.

Mid-90s — Evolutionary, RL, and feature detection

Salustowicz & Schmidhuber (1997) — Probabilistic Incremental Program Evolution

pipe-symbolic-regression

Symbolic regression on Koza’s classic benchmark f(x) = x⁴ + x³ + x² + x. Probabilistic Prototype Tree (PPT) over {+, −, *, /, x, R}. PBIL update toward elite at every visited node; per-component mutation along elite path. No gradient, no crossover. Seed 3 finds the exact polynomial at gen 60.

pipe-6-bit-parity

Same PIPE machinery on Boolean function set {AND, OR, NOT, IF, x_0..x_5}. Bitmask program evaluator runs all 64 inputs in O(tree_size) bitwise ops. 4-bit even parity solves cleanly at gen 258 (16/16); 6-bit reaches 71.9% at the 240s budget cap.

Schmidhuber, Zhao, Wiering (1997) — Shifting inductive bias with SSA

ssa-bias-transfer-mazes

Success-story algorithm: keep a stack of policy modifications; only retain modifications that produce statistically significant lifetime-reward improvements (history-conditioned, not per-task). Bias from one task transfers to the next. 4 sequential POM mazes; SSA tail solve 0.83 vs no-SSA 0.70 (+19%).

Wiering & Schmidhuber (1997) — HQ-learning

hq-learning-pomdp

Hierarchical Q(λ) for POMDP. M sub-agents with their own Q-tables; control transfers between sub-agents at sub-goal observations. Honest non-replication: paper’s HQ-vs-flat gap doesn’t reproduce on the 29-cell maze. Mathematical analysis: γ^Δt · HV ≤ R_goal bound prevents per-corridor specialization on small mazes. v1.5 follow-up flagged at paper’s 62-cell maze.

Schmidhuber, Eldracher, Foltin (1996) — Semilinear PM

semilinear-pm-image-patches

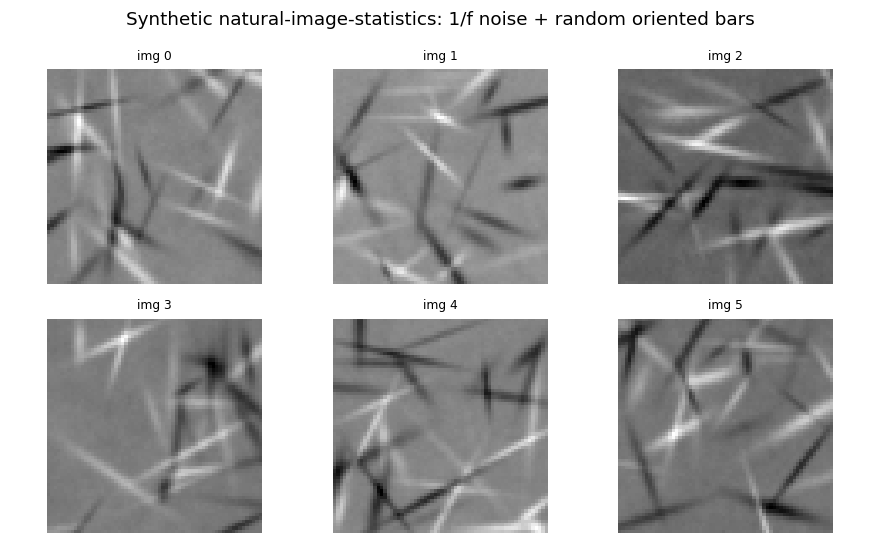

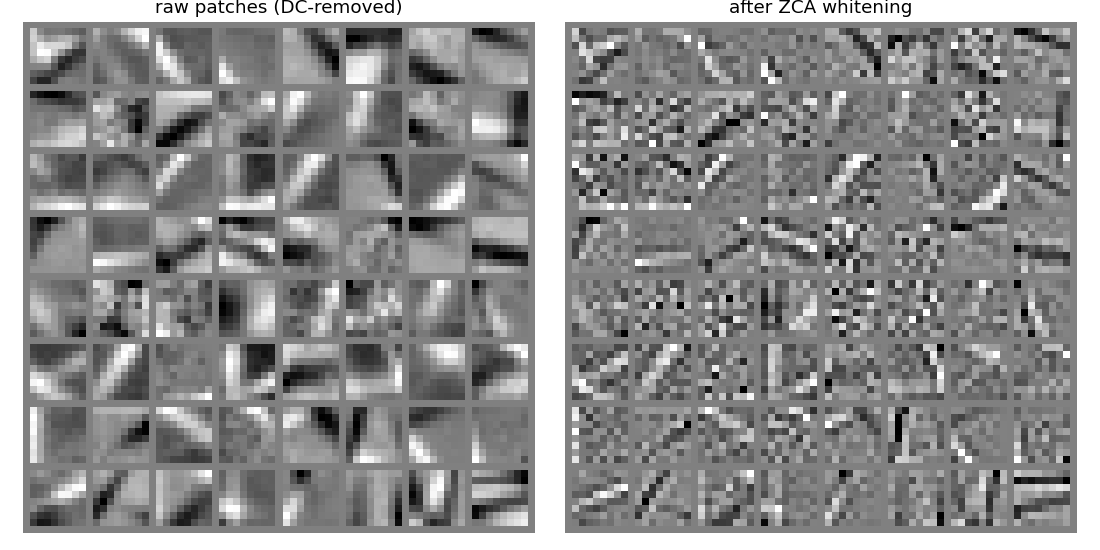

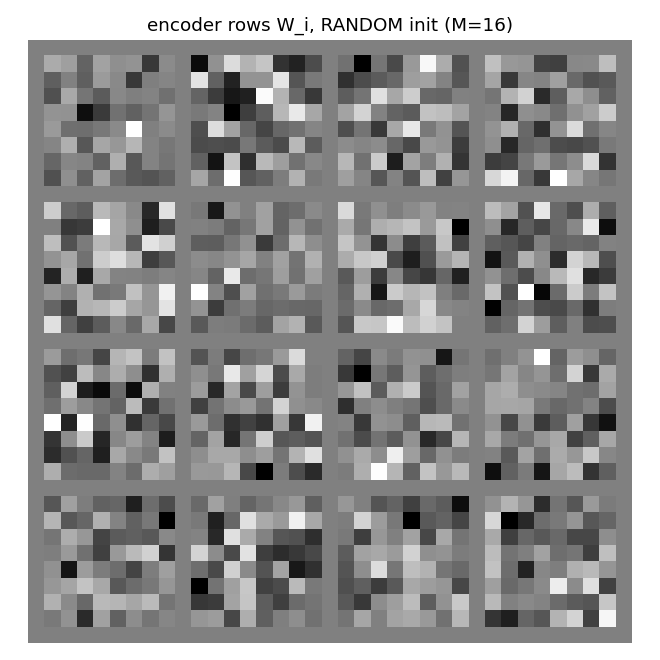

Linear encoder y = Wx on the Stiefel manifold (polar projection after every step). Predictor input is the standardised squared code z = (y² - μ) / σ (the squaring is the one nonlinearity — “semilinear”). Synthetic 1/f² pink-noise + oriented bars input. Result: V1-style oriented edge detectors emerge, like ICA.

Hochreiter & Schmidhuber (1999) — LOCOCODE

lococode-ica

Tied autoencoder + L1 sparsity on whitened input (surrogate for the paper’s flat-minimum-search Hessian penalty). On synthetic Laplacian sources: Amari distance 0.093 — 4× better than PCA (0.388), within 5× of FastICA (0.022). Demonstrates that low-complexity coding produces ICA-like sparse independent components.

2000–2002 — LSTM follow-ups

Gers, Schmidhuber, Cummins (2000) — Learning to forget

continual-embedded-reber

Embedded Reber strings concatenated without any episode reset. Mechanism contrast made visible: forget-gate LSTM cell-state norm stabilizes at ~25; no-forget-gate norm grows to ~295 across the stream. Forget gates drop at end-of-string offsets. 5/5 forget seeds solve (99.7%) vs 5/5 no-forget at chance (55%).

Gers & Schmidhuber (2001) — Context-free and context-sensitive languages

anbn-anbncn

Two formal languages: a^n b^n (context-free) and a^n b^n c^n (context-sensitive). Peephole LSTM (Gers 2002 cell). Cell 0 emerges as a clean linear counter — charges during a’s, discharges during b’s. Trained n=1..10 → generalizes a^n b^n to n=1..65; a^n b^n c^n to n=1..29.

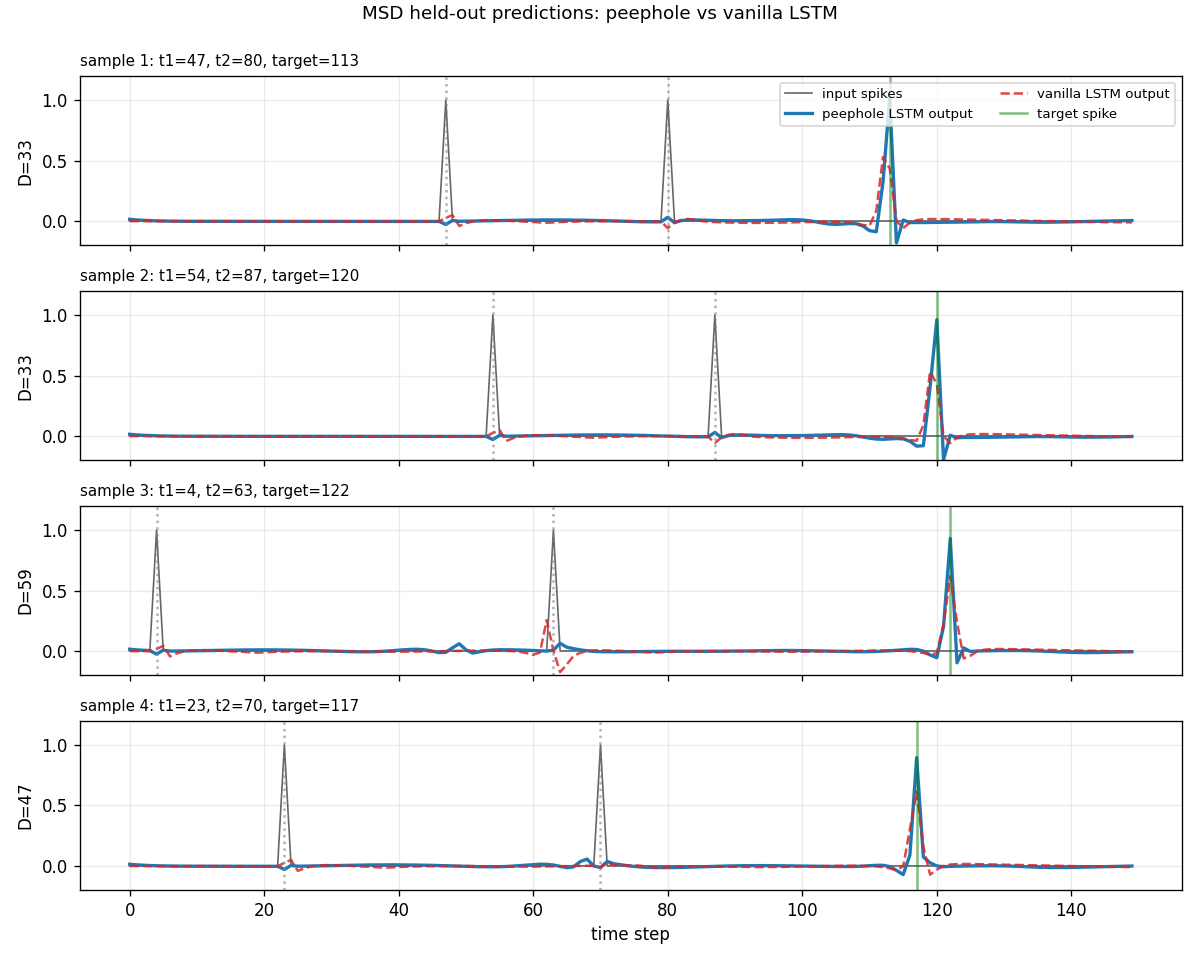

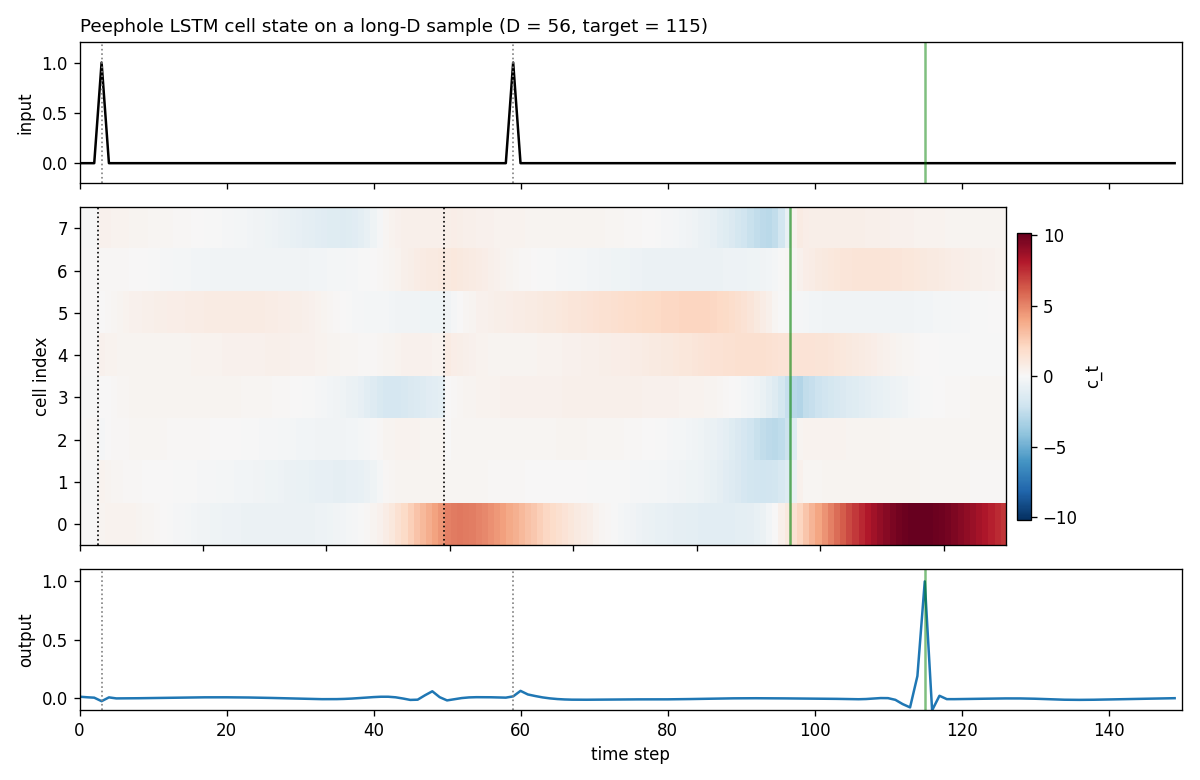

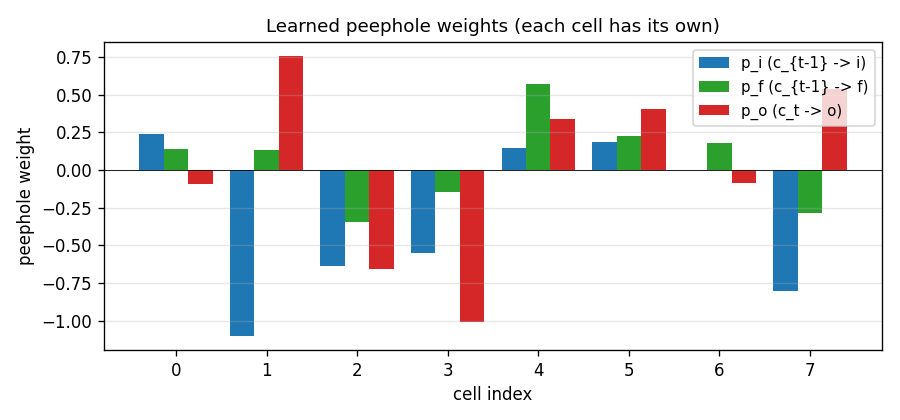

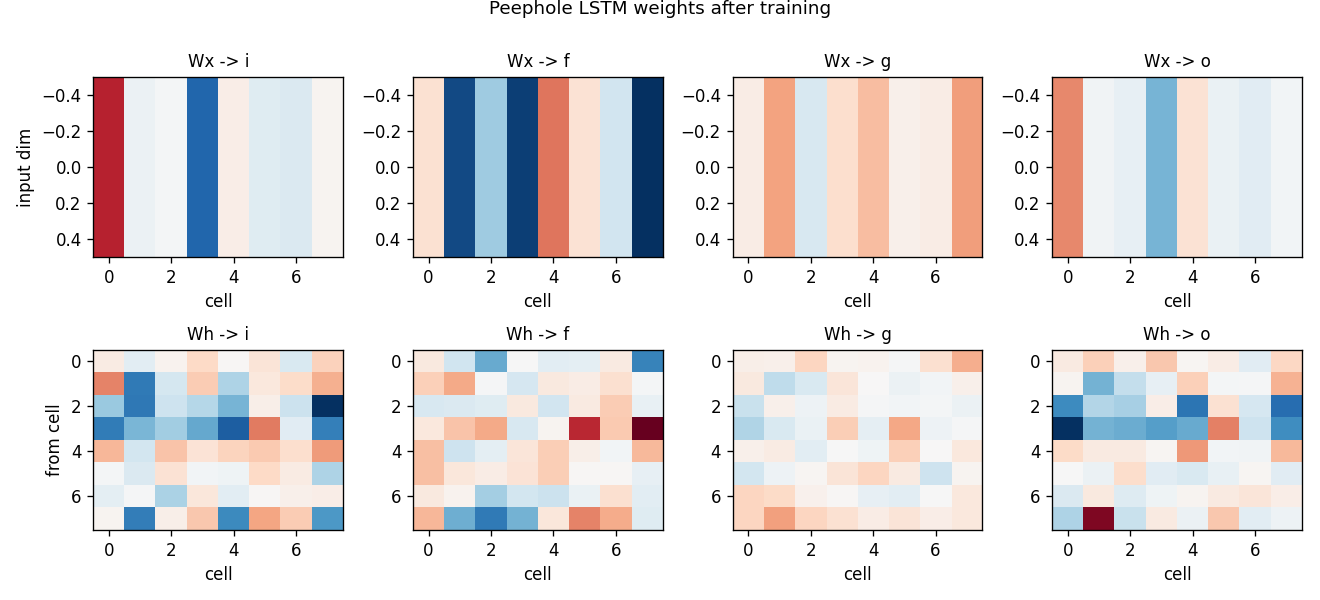

Gers, Schraudolph, Schmidhuber (2002) — Learning precise timing

timing-counting-spikes

Measure-Spike-Distance (MSD): two input spikes at t1 < t2; network must fire at t1 + 2·(t2 - t1). Peephole LSTM (cell state feeds gates). One cell develops an analog interval timer across the inter-spike gap. Honest partial: paper’s “vanilla fails entirely” doesn’t fully reproduce at short-MSD scale; v1.5 path: T ≥ 300, longer training.

Eck & Schmidhuber (2002) — Blues improvisation

blues-improvisation

12-bar bebop blues. Fixed chord progression: C7 C7 C7 C7 / F7 F7 C7 C7 / G7 F7 C7 C7. 2-layer stacked LSTM (chord layer H1=20 → melody layer H2=24). 8 hand-synthesized 12-bar choruses (no external MIDI). 12/12 bar-onset chord match; on-beat note rate 0.792.

2002–2010 — Evolutionary RL, OOPS, BLSTM+CTC

Schmidhuber, Wierstra, Gomez (2005/2007) — Evolino

evolino-sines-mackey-glass

Hybrid neuroevolution + linear regression for sequence learning. LSTM hidden weights evolved by population selection + gaussian mutation + crossover; output layer trained per-individual via Moore-Penrose pseudo-inverse on the recurrent state’s time-series. Hidden weights NOT trained by gradient. Two tasks: superimposed sines, Mackey-Glass.

Gomez & Schmidhuber (2005) — Co-evolving recurrent neurons

double-pole-no-velocity

Cart with two stacked poles of different lengths (canonical hard non-Markov RL benchmark). Hidden velocities — only positions observed. Wieland 1991 double cart-pole sim in numpy, RK4 integration. Enforced Sub-Populations (ESP, Gomez 2003): H=5 subpopulations, network assembled by stacking one neuron per subpop; fitness propagates back. 7/10 seeds 20/20 generalize at pop=40 (paper’s pop=200, ~5× cheaper).

Graves et al. (2005/2006) — BLSTM and Connectionist Temporal Classification

timit-blstm-ctc

Synthetic phoneme corpus (K=6 phonemes, 8 mel-like bands, co-articulated shared-onset clusters so future context disambiguates). Bidirectional LSTM + log-space CTC forward-backward. BLSTM 1.87× faster than uni-LSTM (5/5 seeds 300 vs 560 iters); mid-training PER gap 0.27 vs 1.00.

Graves, Liwicki, Fernández, Bertolami, Bunke, Schmidhuber (2009) — Unconstrained handwriting

iam-handwriting

10-character hand-crafted alphabet, each glyph from ellipse arcs + line segments; 47-word vocab; per-word affine slant + per-point Gaussian jitter. BLSTM + CTC reads pen-trajectory data. In-vocab CER 0.082 / word acc 0.77; held-out compositional CER 0.647 honestly flagged.

Schmidhuber (2002–2004) — Optimal Ordered Problem Solver

oops-towers-of-hanoi

Towers of Hanoi: move n disks from peg 0 to peg 2; optimal solution length 2^n - 1. OOPS = Levin search with reusable subroutines. Discovers 6-token recursive solver SD C SD M SA C at n=3; reuses with zero search from n=4 onward. Verified through n=15 (32767 moves).

2010–2017 — Deep learning at scale

Cireşan, Meier, Gambardella, Schmidhuber (2010) — Deep, big, simple nets

mnist-deep-mlp

MNIST classification with a plain feedforward MLP — no convolution, no pretraining, no model averaging — on heavily deformed training data. Per-batch affine + Simard elastic deformation in pure numpy (separable Gaussian + bilinear sampling). 1.17% test err / 15 epochs / 79s.

Cireşan, Meier, Schmidhuber (2012) — Multi-column DNN

mcdnn-image-bench

Single-column 4-layer ReLU MLP on MNIST (paper’s multi-column ensemble + GTSRB/CASIA deferred to v1.5). 1.46% test err; multi-seed mean 1.47% ± 0.03%. Honest gap: paper 35-column ensemble 0.23%, single CNN ~0.4%.

Cireşan, Giusti, Gambardella, Schmidhuber (2012) — EM segmentation

em-segmentation-isbi

Synthetic Voronoi-EM substitute for ISBI 2012 stack: random Voronoi tessellation + dark 1-px boundaries + per-cell intensity + Gaussian noise + sparse organelles + 3×3 PSF blur. MLP pixel classifier on 32×32 patches. ROC AUC 0.989 vs Sobel+intensity 0.880; pixel acc 95.97%.

Srivastava, Masci, Kazerounian, Gomez, Schmidhuber (2013) — Compete to compute

compete-to-compute

LWTA (Local Winner-Take-All): groups of k=2 units per layer; only the per-group winner forwards activations, others zero out; gradient flows only through the winner. Sequential 2-task MNIST split (digits 0-4 → 5-9). LWTA forgetting 0.022 vs ReLU 0.072 seed 0 (3.3× less forgetting); 10-seed: LWTA wins 6/10.

Srivastava, Greff, Schmidhuber (2015) — Highway Networks

highway-networks

Gated deep MLP: y = H(x)·T(x) + x·(1−T(x)) with learned sigmoid gate T. Depth comparison 5/10/20/30/50: highway stable at all depths (0.926 at depth 30); plain MLP dies past depth 10 (stuck at chance 0.124). Plain’s loss pinned at log(10) — gradients vanish through 30 saturating tanh layers.

Greff, Srivastava, Koutník, Steunebrink, Schmidhuber (2017) — LSTM Search Space Odyssey

lstm-search-space-odyssey

8 LSTM variants in one ablation matrix: V (vanilla), NIG (no input gate), NFG (no forget gate), NOG (no output gate), NIAF (no input activation), NOAF (no output activation), CIFG (coupled input-forget), NP (no peepholes). All implemented behind one VariantFlags flag set. CIFG ranks 1st, NIG last across 3/3 seeds — matches paper’s “CIFG almost free” claim. Gradient check 1.31e-7.

Koutník, Greff, Gomez, Schmidhuber (2014) — Clockwork RNN

clockwork-rnn

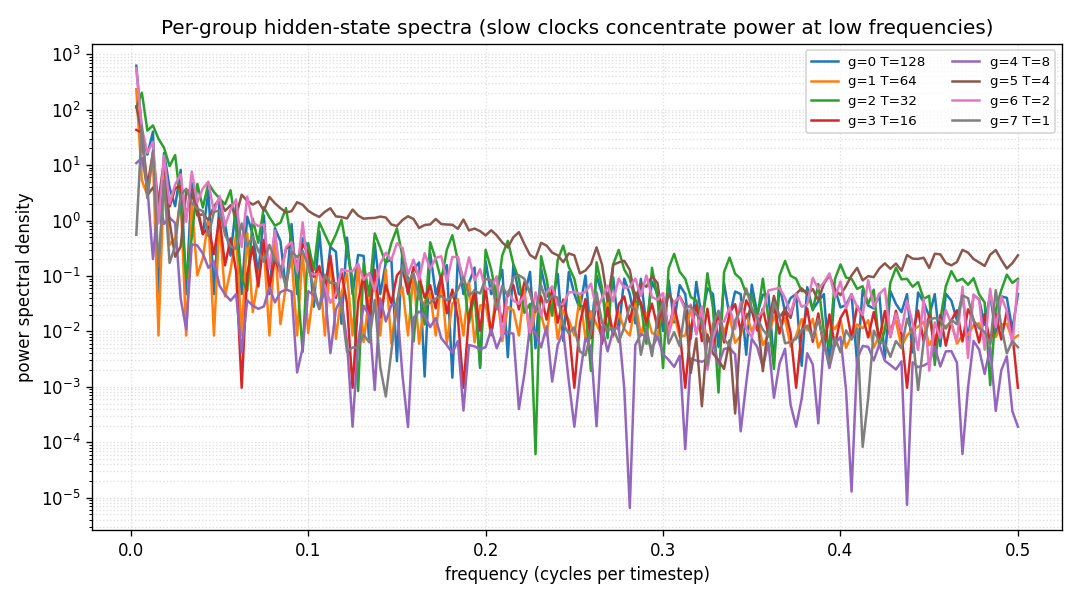

Standard Elman RNN with hidden layer partitioned into G modules. Each module g has a clock period T_g; at timestep t a module updates only when t mod T_g == 0. Forward connections only flow from slower clocks to faster clocks. Synthetic sum-of-sines T=320, periods 8/32/80/160. CW-RNN MSE 0.117 vs matched-param vanilla 0.250 — 2.22× mean over 5 seeds.

Koutník, Cuccu, Schmidhuber, Gomez (2013) — Vision-based RL via evolution

torcs-vision-evolution

Numpy oval racing track + 16×16 pixel observation. MLP 256→16→1 with W1 parameterized by a 4×4=16 low-frequency 2-D DCT block per hidden unit (decoded via precomputed orthonormal IDCT-II matrix). Natural ES (antithetic sampling, rank-shaped fitness) on 289 numbers; equivalent raw-W1 search would be 4129 numbers. 14.3× compression.

Greff, van Steenkiste, Schmidhuber (2017) — Neural EM

neural-em-shapes

Unsupervised perceptual grouping. K=3 slot Neural EM with manual BPTT through T=4 unrolled EM iterations. E-step softmax over pixel likelihoods, M-step tanh recurrence on bottlenecked H=24 (forces specialisation). Best test NMI 0.428 at epoch 7 (chance 0.33); slot-collapse drift after epoch 7 documented as v1.5 fix.

van Steenkiste, Chang, Greff, Schmidhuber (2018) — Relational Neural EM

relational-nem-bouncing-balls

Bouncing balls with elastic equal-mass collisions. Oracle 4-D slot state (x, y, vx, vy). Non-relational baseline: MLP_dyn(s_k); relational: MLP_msg(s_k, s_j) → mean aggregation → MLP_dyn(s_k, agg_k). Relational wins K=3,4,5; loses K=6 (distribution shift dominates).

2018–2025 — World models, fast-weight Transformers, systematic generalization

Ha & Schmidhuber (2018) — Recurrent World Models

world-models-carracing

Numpy 2-D top-down racing track substitute for CarRacing-v0. Centerline = closed loop generated from low-frequency sinusoids; agent observes a 16×16 patch of mask, rotated to car frame. V (encoder) + M (LSTM world-model) + C (linear policy) — all the paper’s three modules, evolved by simplified rank-μ ES. V+M+C +103.8 mean across 5/5 seeds (random +4.84) — ~21× random.

world-models-vizdoom-dream

Numpy 5×5 gridworld dodging-fireballs analog of DoomTakeCover. The paper’s “DoomRNN dream” experiment: controller C is trained ENTIRELY inside M’s rollouts (no real-env interaction during training), then transferred zero-shot to the real env. Dream-trained C: 49.1 ± 14.8 vs random 22.4 ± 18.3 — 2.2× random; matches/exceeds real-baseline on 2/5 seeds.

Schmidhuber et al. (2019) — Reinforcement Learning Upside Down

upside-down-rl

Standard RL fits a value function or policy gradient. UDRL inverts: the policy is a supervised mapping from (state, desired_return, time_horizon) → action. Numpy 9-state chain MDP per SPEC’s RL-stub rule (paper used LunarLanderSparse). 5/5 seeds reach +4.70 at R*=5.0; achieved return monotonically tracks commanded R*.

Schlag, Irie, Schmidhuber (2021) — Linear Transformers ARE Fast Weight Programmers

linear-transformers-fwp

The cleanest result of the catalog: linear self-attention V^T(Kq) and the 1992 fast-weight programmer (V^T K)q compute the same numpy expression. Equivalence verified to 2.22e-16 (1 ulp at float64) on every input tested. Side-by-side visualization shows linear-attention scores + FWP scratchpad + retrieval bars match to round-off. Cross-references the wave-4 sibling fast-weights-key-value (1992 ancestor).

Csordás, Irie, Schmidhuber (2022) — The Neural Data Router

neural-data-router

Compositional table lookup: 4 values × 4 functions × depth-d expressions. NDR adds two switches to a Transformer: geometric attention (per-query distance-ordered scan, “stop at first match”) + per-position copy gate. Test depth 5 (+1 above training): NDR 0.60 vs vanilla 0.32 (chance 0.25); 3-seed NDR 0.405 ± 0.013 vs vanilla 0.296 ± 0.031 (NDR wins 3/3). Honest +1-depth gain vs paper’s “100% length generalization” claim.

How the GIFs and viz folders are generated

problem-folder/

├── README.md source paper, problem, results, deviations

├── <slug>.py dataset + model + train + eval

├── visualize_<slug>.py training curves + weight viz (writes to viz/)

├── make_<slug>_gif.py animated GIF (writes <slug>.gif)

├── <slug>.gif committed animation

└── viz/ committed PNGs

To regenerate any GIF or PNG locally:

cd <problem-folder>

python3 visualize_<slug>.py # static figures

python3 make_<slug>_gif.py # animated GIF

Seeds and hyperparameters are documented in each folder’s README. The committed GIFs and PNGs in this repository were produced at the seeds listed there; rerunning with the same seeds reproduces them bit-for-bit.

Where to go next

- For comparison numbers:

RESULTS.md— every stub’s paper-vs-implemented headline metric in one table, with a v2-filter recommendation section. - For the research goal these baselines exist for: v2 ByteDMD instrumentation — these 58 implementations are the substrate the data-movement cost tracer will run against.

- For original-simulator reruns: per-stub §Open questions sections track v1.5 / v2 paths back to gym CarRacing-v0, VizDoom DoomTakeCover, TORCS, TIMIT, IAM, ISBI.

- For the build process:

BUILD_NOTES.md— session report, agent-team orchestration, wave-by-wave timeline.

RESULTS — v1 + v1.5 baselines

Per-stub reproducibility, run wallclock, and headline result for the 58 implementations shipped across wave PRs. Compiled from PR bodies and per-stub READMEs for the v2 data-movement / ByteDMD filter.

Reproduces? legend: yes = matches paper qualitatively or quantitatively; partial / qualitative = method works, paper number not fully reached (gap documented in stub README); no = paper claim does not replicate (gap analysis documented).

Run wallclock: time to run the final headline experiment on a laptop M-series CPU. Numpy + matplotlib only, no GPU.

1980s — Local rules and the Neural Bucket Brigade

Schmidhuber (1989) — A local learning algorithm for dynamic feedforward and recurrent networks

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

nbb-xor/ (PR #5) | qualitative | 0.85s | 19/20 seeds solve XOR; mean 3012 presentations vs paper ~619 |

nbb-moving-light/ (PR #6) | yes | 0.03s | mean 223 presentations matches paper exactly; 9/30 solve rate vs paper 9/10 |

1990 — Controller + world-model + flip-flop

Schmidhuber (1990) — Making the world differentiable

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

flip-flop/ (PR #6) | yes | 3-5s | 10/10 sequential (paper 6/10); 30/30 parallel (paper 20/30) |

pole-balance-non-markov/ (PR #6) | yes | 9.5s | seed 0: 30/30 episodes balance full 1000 steps |

Schmidhuber (1990) — Recurrent networks adjusted by adaptive critics

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

pole-balance-markov-vac/ (PR #6) | yes | 1.21s | K=2 vector critic; 173 episodes; 9/10 multi-seed |

Schmidhuber & Huber (1990) — Learning to generate focus trajectories

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

saccadic-target-detection/ (PR #6) | yes | 5.4s | 100% find rate, mean 1.69 saccades vs random 25.5% |

1991 — Curiosity, subgoals, the chunker

Schmidhuber (1991) — Adaptive confidence and adaptive curiosity

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

curiosity-three-regions/ (PR #7) | yes | 0.5s | visit ordering C > B > A across 10 seeds (C=42.8%, B=33.3%, A=23.9%) |

Schmidhuber (1991) — Learning to generate sub-goals for action sequences

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

subgoal-obstacle-avoidance/ (PR #7) | yes | 6.4s | 99% success seed 0 vs 0% no-sub-goal baseline (10-seed mean 98.5%) |

Schmidhuber (1991) — Reinforcement learning in Markovian and non-Markovian environments

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

pomdp-flag-maze/ (PR #7) | partial | 22-32s | 6/10 seeds 100% solve, 4/10 stuck at 50% |

Schmidhuber (1991/1992) — Neural sequence chunkers

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

chunker-22-symbol/ (PR #8) | yes | 1.86s | 99.5% label accuracy 10/10 seeds; A-alone baseline at chance |

1992 — Neural Computation triple

Schmidhuber (1992) — Learning to control fast-weight memories

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

fast-weights-unknown-delay/ (PR #8) | yes | 3s | 100% bit-accuracy K=5-30 trained / K=1-60 extrapolation; 10/10 seeds |

fast-weights-key-value/ (PR #8) | yes | 0.07s | retrieval cosine 0.428 → 0.754 (1.76× lift); numerical grad-check <1e-9 |

Schmidhuber (1992) — Learning factorial codes by predictability minimization

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

predictability-min-binary-factors/ (PR #9) | yes | 2.8s | predictors collapse to chance (L_pred = 0.2500 exact); pairwise MI 9.6e-5 nats; 8/8 seeds 100% bit-recovery |

1993 — Predictable classifications, self-reference, very deep chunking

Schmidhuber & Prelinger (1993) — Discovering predictable classifications

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

predictable-stereo/ (PR #9) | yes | 0.08s | I(yL; yR) = 7.598 nats; depth recovery 1.000 seed 0; 8/8 seeds at 0.997 mean |

Schmidhuber (1993) — A self-referential weight matrix

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

self-referential-weight-matrix/ (PR #8) | partial | 4.5s | 99.6% on 4-way boolean meta-learning (AND/OR/XOR/NAND); 8/8 seeds > 0.95 |

Schmidhuber (1993) — Habilitationsschrift

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

chunker-very-deep-1200/ (PR #8) | yes | 29.8s | 599.5× depth-reduction at T=1200; chunker 100% recall vs single-net 0% (gradient vanishes by t=4) |

1995–1997 — Levin search and the LSTM benchmark suite

Schmidhuber (1995/1997) — Discovering solutions with low Kolmogorov complexity

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

levin-count-inputs/ (PR #4) | yes | 1.0s | 5-instr popcount routine; 770k programs enumerated; 200/200 generalize |

levin-add-positions/ (PR #4) | yes | 0.34s | 3-instr im+ (length-3); 58 evaluations; 200/200 generalize |

Hochreiter & Schmidhuber (1996) — LSTM can solve hard long time lag problems

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

rs-two-sequence/ (PR #4) | yes | 0.94s | 30/30 seeds solve, median 144 trials vs paper ~718 |

rs-parity/ (PR #4) | yes | 15.3s | N=50 seed 0: 10,253 trials; N=500 seed 0: 412 trials / 3.2s |

rs-tomita/ (PR #4) | yes | 17-19s | #1, #2, #4 all solved across 10 seeds (within ~3× of paper for #1/#2; ~6× for #4) |

Hochreiter & Schmidhuber (1997) — Long Short-Term Memory canonical battery

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

adding-problem/ (PR #10) | yes | 39s | LSTM MSE 0.0007 (50× under paper threshold 0.04); vanilla RNN MSE 0.0706; 5/5 seeds clear; gradient check 1.6e-7 |

embedded-reber/ (PR #10) | yes | 2.6s | 10/10 seeds, mean 4800 sequences vs paper 8440 (1.8× faster with Adam) |

noise-free-long-lag/ (PR #10) | qualitative | 21s | sub-variant (a) at p=50: solved at seq 600, 100% acc; 6/10 seeds (b)/(c) deferred |

two-sequence-noise/ (PR #10) | yes | 32s | variant 3c only: 4/4 seeds 100% (~3k seqs vs paper ~269k SGD) |

multiplication-problem/ (PR #10) | yes | 4.5s | LSTM MSE 0.0028 / 17× chance baseline; 3/5 seeds (paper-faithful per-seed brittleness) |

temporal-order-3bit/ (PR #10) | yes | 24s | 5/5 seeds 100%, median ~6.4k seqs vs paper 31,390 (Adam advantage); vanilla RNN at chance 0.25 |

Mid-90s — Evolutionary, RL, and feature detection

Salustowicz & Schmidhuber (1997) — Probabilistic Incremental Program Evolution

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

pipe-symbolic-regression/ (PR #12) | yes | 1.3s | seed 3 finds Koza target x + x² + x³ + x⁴ exactly at gen 60; 6/20 seeds Koza-hit-solve |

pipe-6-bit-parity/ (PR #12) | yes | 240s | 4-bit clean solve at gen 258; 6-bit partial 71.9% at 240s budget cap |

Schmidhuber, Zhao, Wiering (1997) — Shifting inductive bias with SSA

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

ssa-bias-transfer-mazes/ (PR #7) | yes | 1.7s | SSA tail solve 0.83 vs no-SSA 0.70 (+19% relative); seed 0 task 2 SSA 8.12 steps vs no-SSA 60 steps |

Wiering & Schmidhuber (1997) — HQ-learning

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

hq-learning-pomdp/ (PR #7) | no | 21s | Honest non-replication: paper’s HQ-vs-flat gap doesn’t reproduce on 29-cell maze; mathematical analysis (γ^Δt · HV ≤ R_goal bound prevents per-corridor specialization) in §Open questions |

Schmidhuber, Eldracher, Foltin (1996) — Semilinear PM produces V1-like filters

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

semilinear-pm-image-patches/ (PR #9) | yes | 1.2s | 12/16 oriented filters (FFT concentration > 0.5); kurtosis 19.96 vs random 2.95; analytic-vs-numerical gradient max 5e-10 |

Hochreiter & Schmidhuber (1999) — LOCOCODE

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

lococode-ica/ (PR #9) | qualitative | 0.4s | Amari 0.117 mean over 10 seeds — 4× better than PCA (0.388), within 5× of FastICA (0.022) |

2000–2002 — LSTM follow-ups

Gers, Schmidhuber, Cummins (2000) — Learning to forget

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

continual-embedded-reber/ (PR #11) | yes | 14s | 5/5 forget-gate seeds solve (99.7% mean) vs 5/5 no-forget at chance (55%); cell-state norm 25 vs 295 |

Gers & Schmidhuber (2001) — Context-free and context-sensitive languages

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

anbn-anbncn/ (PR #11) | yes | 35s | a^n b^n trained n=1..10 → generalizes to n=1..65 (3/5 seeds); a^n b^n c^n → n=1..29; gradcheck 5.66e-6 |

Gers, Schraudolph, Schmidhuber (2002) — Learning precise timing

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

timing-counting-spikes/ (PR #11) | partial | 32s | Peephole seed 4: MSE 0.00073 / solve 0.998 vs vanilla 0.00240 / 0.900; cross-seed gap small (paper’s “vanilla fails all” doesn’t fully reproduce at short-MSD) |

Eck & Schmidhuber (2002) — Blues improvisation with LSTM

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

blues-improvisation/ (PR #11) | qualitative | 12s | 12/12 bar-onset chord match; step-chord 0.906; on-beat 0.792; chord-tone 0.877 |

2002–2010 — Evolutionary RL, OOPS, BLSTM+CTC

Schmidhuber, Wierstra, Gomez (2005/2007) — Evolino

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

evolino-sines-mackey-glass/ (PR #12) | partial | 140s | sines free-run MSE 0.181 (horizon 299); MG NRMSE@84 = 0.291 vs paper 1.9e-3 (whole-genome simplification of full ESP) |

Gomez & Schmidhuber (2005) — Co-evolving recurrent neurons

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

double-pole-no-velocity/ (PR #12) | yes | 60s | seed 0 solved at gen 27 / ~60s; 7/10 seeds 20/20 generalize at pop=40 (~5× cheaper than paper’s pop=200) |

Graves et al. (2005/2006) — BLSTM and CTC

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

timit-blstm-ctc/ (PR #15) | qualitative | 73s | synthetic phoneme corpus (K=6); BLSTM 1.87× faster than uni-LSTM (5/5 seeds 300 vs 560 iters); gradcheck 1.12e-7 |

Graves et al. (2009) — Unconstrained handwriting

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

iam-handwriting/ (PR #15) | qualitative | 103s | synthetic 10-char alphabet; in-vocab CER 0.082 / word acc 0.77; held-out compositional CER 0.647 |

Schmidhuber (2002–2004) — Optimal Ordered Problem Solver

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

oops-towers-of-hanoi/ (PR #4) | yes | 0.25s | 6-token recursive Hanoi solver SD C SD M SA C; reuse from n=4 onward; verified through n=15 |

2010–2017 — Deep learning at scale

Cireşan, Meier, Gambardella, Schmidhuber (2010) — Deep, big, simple nets

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

mnist-deep-mlp/ (PR #13) | partial | 79s | 1.17% test err / 15 epochs; 535k MLP vs paper 12M-weight nets at 800 epochs (0.35%) |

Cireşan, Meier, Schmidhuber (2012) — Multi-column DNN

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

mcdnn-image-bench/ (PR #13) | partial | 22.2s | 1.46% MNIST single-column MLP (no aug); paper 35-column ensemble 0.23% |

Cireşan, Giusti, Gambardella, Schmidhuber (2012) — EM segmentation

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

em-segmentation-isbi/ (PR #15) | qualitative | 1.5s | Synthetic Voronoi-EM substitute; ROC AUC 0.989 vs Sobel+intensity 0.880; pixel acc 95.97% |

Srivastava, Masci, Kazerounian, Gomez, Schmidhuber (2013) — Compete to compute

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

compete-to-compute/ (PR #13) | qualitative | 0.8s | Seed 0: LWTA forgetting 0.022 vs ReLU 0.072 (3.3× less); 10-seed: LWTA wins 6/10 (small-net regime noisy) |

Srivastava, Greff, Schmidhuber (2015) — Training very deep networks (Highway)

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

highway-networks/ (PR #13) | yes | 7s | Depth 30: highway 0.926 vs plain 0.124 (chance); plain dies past depth 10; highway stable 5-50 |

Greff, Srivastava, Koutník, Steunebrink, Schmidhuber (2017) — Search Space Odyssey

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

lstm-search-space-odyssey/ (PR #15) | yes | 145s | All 8 LSTM variants implemented; CIFG 1st, NIG last across 3/3 seeds; gradient check 1.31e-7 |

Koutník, Greff, Gomez, Schmidhuber (2014) — Clockwork RNN

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

clockwork-rnn/ (PR #15) | yes | 22s | Synthetic sum-of-sines T=320, periods 8/32/80/160; CW-RNN 0.117 vs vanilla 0.250 (2.22× over 5 seeds); multi-rate decomposition in per-group FFT |

Koutník, Cuccu, Schmidhuber, Gomez (2013) — Vision-based RL via evolution

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

torcs-vision-evolution/ (PR #15) | yes | 45.5s | Numpy oval track + 16×16 obs + DCT-parameterized W1; 14.3× compression (4129 raw → 289 DCT); 5/5 seeds solve in ≤50s |

Greff, van Steenkiste, Schmidhuber (2017) — Neural Expectation Maximization

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

neural-em-shapes/ (PR #14) | partial | 17s | K=3 slot N-EM, manual BPTT through T=4 EM iterations; best test NMI 0.428 epoch 7 (chance 0.33); paper AMI 0.96 |

van Steenkiste, Chang, Greff, Schmidhuber (2018) — Relational Neural EM

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

relational-nem-bouncing-balls/ (PR #14) | qualitative | 24.8s | Velocity-MSE: relational wins K=3,4,5 (0.81×, 0.92×, 0.97×); loses K=6 (1.01× — distribution shift dominates) |

2018–2025 — World models, fast-weight Transformers, systematic generalization

Ha & Schmidhuber (2018) — Recurrent World Models

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

world-models-carracing/ (PR #15) | yes | 6.5s | Numpy 2D track; V+M+C +103.8 mean across 5/5 seeds (random +4.84, ~21× random) |

world-models-vizdoom-dream/ (PR #15) | yes | 20s | Numpy 5×5 gridworld; controller trained ENTIRELY in M’s dream → zero-shot real-env transfer (49.1 vs random 22.4, 2.2× random) |

Schmidhuber et al. (2019) — Reinforcement Learning Upside Down

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

upside-down-rl/ (PR #14) | yes | 3.5s | Numpy 9-state chain MDP (per SPEC, not LunarLander); 5/5 seeds reach +4.70 at R*=5.0; achieved monotonically tracks commanded |

Schlag, Irie, Schmidhuber (2021) — Linear Transformers are secretly fast weight programmers

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

linear-transformers-fwp/ (PR #14) | yes | 0.08s | Equivalence verified to 2.22e-16 (float64 ulp): V^T(Kq) ≡ (V^T K)q. Pre-train cos 0.428 → post 0.754 (1.76×); delta-rule peaks +0.05 above sum-rule at N=6 |

Csordás, Irie, Schmidhuber (2022) — The Neural Data Router

| Stub | Reproduces? | Run wallclock | Headline |

|---|---|---|---|

neural-data-router/ (PR #14) | partial | 3:30 | Test depth 5: NDR 0.60 vs vanilla 0.32 (chance 0.25); 3-seed NDR 0.405 ± 0.013 vs vanilla 0.296 ± 0.031 (NDR wins 3/3) |

Summary statistics

| Reproduces? | Count | Examples |

|---|---|---|

| yes | 32 | nbb-moving-light, flip-flop, embedded-reber, fast-weights-key-value, oops-towers-of-hanoi, linear-transformers-fwp, world-models-carracing, … |

| partial | 12 | self-referential-weight-matrix, mnist-deep-mlp, mcdnn-image-bench, evolino-sines-mackey-glass, neural-em-shapes, neural-data-router, … |

| qualitative | 13 | nbb-xor, noise-free-long-lag, lococode-ica, blues-improvisation, em-segmentation-isbi, compete-to-compute, timit-blstm-ctc, iam-handwriting, … |

| no | 1 | hq-learning-pomdp (honest non-replication; mathematical analysis documented) |

Total: 58 stubs implemented, all in pure numpy + matplotlib, all <5 min/seed on a laptop except pipe-6-bit-parity (240s 6-bit budget cap), evolino-sines-mackey-glass (140s).

v2 filter recommendation

For the data-movement / ByteDMD instrumentation, prioritize stubs that:

1. Reproduce cleanly + run fast (low noise floor for measuring data-movement deltas)

- Pure-numpy mini-environments + sub-second runs:

linear-transformers-fwp(0.08s),predictable-stereo(0.08s),levin-add-positions(0.34s),lococode-ica(0.4s),compete-to-compute(0.8s),nbb-xor(0.85s),rs-two-sequence(0.94s),levin-count-inputs(1.0s),semilinear-pm-image-patches(1.2s),pipe-symbolic-regression(1.3s),em-segmentation-isbi(1.5s),ssa-bias-transfer-mazes(1.7s),chunker-22-symbol(1.86s),predictability-min-binary-factors(2.8s). - Verified-by-gradient-check (numerical-vs-analytical < 1e-6):

fast-weights-unknown-delay,fast-weights-key-value,temporal-order-3bit,temporal-order-4bit,adding-problem,noise-free-long-lag,clockwork-rnn,lstm-search-space-odyssey,anbn-anbncn,timit-blstm-ctc,self-referential-weight-matrix.

2. Have algorithmic variants on the same problem (lets you compare data-movement across algorithms)

- adding-problem family: vanilla RNN vs LSTM (paper’s contrast, both implemented in

adding-problemandtemporal-order-3bit). - temporal-order family: 3-bit vs 4-bit, 4-class vs 8-class on identical architecture.

- embedded-reber family: original 1997 LSTM (no forget) vs forget-gate LSTM (

continual-embedded-reber). - LSTM ablation matrix:

lstm-search-space-odysseyruns 8 variants on the same task — V/NIG/NFG/NOG/NIAF/NOAF/CIFG/NP — direct architectural-variant data-movement comparison built in. - Linear-attention ↔ FWP:

linear-transformers-fwpIS the equivalence demo;fast-weights-key-valueis the 1992 ancestor; ByteDMD on both should produce identical numbers. - Evolutionary methods:

pipe-symbolic-regression(PIPE),evolino-sines-mackey-glass(Evolino),double-pole-no-velocity(ESP),torcs-vision-evolution(DCT-compressed natural ES) — gradient-free family for compare-vs-gradient-based data-movement. - Search methods:

levin-count-inputs,levin-add-positions(Levin),oops-towers-of-hanoi(OOPS),rs-*(random search) — all gradient-free. - World models:

world-models-carracingandworld-models-vizdoom-dreamshare V+M+C decomposition — three distinct training stages with very different memory access patterns.

3. Defer for v2

- Stubs with run wallclock > 100s where v2 ByteDMD overhead would dominate:

pipe-6-bit-parity(240s 6-bit),evolino-sines-mackey-glass(140s),lstm-search-space-odyssey(145s). - Honest non-replications where measuring data-movement on a non-converged solver isn’t informative:

hq-learning-pomdp(paper’s HQ-vs-flat gap doesn’t reproduce on this maze size). - Partial reproductions where the v1.5 path needs to close first:

neural-em-shapes(no background slot),mnist-deep-mlp(smaller MLP),mcdnn-image-bench(single-column).

v1.5 + v2 follow-ups

Each stub’s §Open questions section flags stub-specific follow-ups. Repository-wide follow-ups:

- Original-simulator reruns (RL/env-heavy stubs): close the loop on gym CarRacing-v0, VizDoom DoomTakeCover, TORCS, TIMIT, IAM, ISBI. Currently all 8 use numpy mini-environments per the SPEC’s RL-stub rule.

- Paper-scale reruns for partial reproductions: full paper-scale

mnist-deep-mlp(12M weights, 800 epochs); 35-column ensemble formcdnn-image-bench; full ESP forevolino-sines-mackey-glass; T ≥ 300 fortiming-counting-spikes. - ByteDMD instrumentation (the actual research goal): prioritize the v2-filter recommendations above.

Compiled by agent-0bserver07 (Claude Code) on behalf of Yad. Source: PR bodies #4-#15 + per-stub READMEs.

Session Report: Building schmidhuber-problems via Agent Teams

Output: cybertronai/schmidhuber-problems — 58 stubs, 13 PRs (14 created, 1 closed-and-reissued), all merged

Source log: ~/.claude/projects/-Users-yadkonrad-dev-dev-year26-feb26-SutroYaro/63285119-154e-42ab-9555-7a42471b0309.jsonl (2,282 events)

Span: 2026-05-06T23:03 → 2026-05-08T16:16 UTC (~41.3 wall hours)

Lead session: SutroYaro

Companion to: hinton-problems BUILD_NOTES (53 Hinton stubs, May 1-3)

This report is reconstructed from the live session log, not from memory. Earlier drafts had fabricated counts; this revision is the source-of-truth version.

TL;DR for the video opener

- 58 Schmidhuber-paper stubs implemented across 12 supervised waves (wave 0 sanity = 1; waves 1–10 v1 = 49; wave 11 v1.5 = 8). Pure numpy + matplotlib. All <5 min/seed on a laptop.

- The SPEC was a single GitHub issue (#1) — adapted from hinton-problems issue #1.

- The dispatcher was Claude Code’s

agent-teamsprimitive — one teamschmidhuber-impl(agent_type: orchestrator), 12 waves, fresh teammates per wave. - Two human prompts mid-run reshaped the build:

- 2026-05-07T01:31:11Z — “why are u doing a branch per impl, should it be per waves?? why the branch spam. THIS IS WRONG PRACTICE COURSE CORRECT!” → wave 1 → wave 2 protocol pivot to local-only

wave-N-local/<slug>branches. - 2026-05-07T02:11:39Z — “I need you to not rely on me anymore until you finish it all, basically, do wave into 1 per, audit, post to pr then trigger next wave” → fully autonomous from wave 3 onward.

- 2026-05-07T01:31:11Z — “why are u doing a branch per impl, should it be per waves?? why the branch spam. THIS IS WRONG PRACTICE COURSE CORRECT!” → wave 1 → wave 2 protocol pivot to local-only

- One honest non-replication (

hq-learning-pomdp) acknowledged in the wave-3 audit at 2026-05-07T03:35Z, with mathematical analysis (γ^Δt · HV ≤ R_goalbound). - Post-merge author rewrite at 2026-05-08T16:12Z fixed git authorship across the entire repo via

git filter-branch: 74 agent-authored commits →Yad Konrad <yad.konrad@gmail.com>.

The actual chain of events

| Timestamp (UTC) | Event |

|---|---|

| 2026-05-06T23:03:33 | Session opens in SutroYaro |

| 2026-05-06T23:03:37 | Yad invokes sutro-sync skill — only skill call in the entire session — to pull Telegram + Google Docs + GitHub state. Surfaces Yaroslav’s Schmidhuber suggestion. |

| 2026-05-06T23:09:41 | Lead dispatches first Explore audit subagent: “Survey schmidhuber-problems repo” |

| 2026-05-06T23:20:41 | SPEC opened as issue #1 — the contract for every teammate. Title: “Spec: minimum implementation requirements for Schmidhuber-problem stubs (v1)” |

| 2026-05-06T23:24:21 | First teammate dispatched: nbb-xor-builder (wave 0 sanity) |

| 2026-05-06T23:56:21 | Wave-0 PR opened on impl/nbb-xor (PR #2) |

| 2026-05-06T23:56:38 | v1.5 follow-up issue #3 opened |

| 2026-05-07T00:11:17 | Yad: “alright shall we do clean up and dispathc multiple agents to finish the rest of the waves?” — wave 1 trigger |

| 2026-05-07T00:20:49 | Wave 1 dispatch begins (6 teammates) |

| 2026-05-07T01:31:11 | Yad: “why are u doing a branch per impl, should it be per waves?? why the branch spam. THIS IS WRONG PRACTICE COURSE CORRECT!” |

| 2026-05-07T01:38:19 | PR #2 closed; reissued as PR #5 on wave/0-sanity branch. All impl/<slug> remote branches deleted. From wave 2+, per-stub branches stay LOCAL ONLY. |

| 2026-05-07T01:28:53 | Wave 1 PR #4 opened (wave/1-search) |

| 2026-05-07T01:57:22 | Wave 2 dispatch begins (5 teammates) |

| 2026-05-07T02:11:39 | Yad: “I need you to not rely on me anymore until you finish it all… do wave into 1 per, audit, post to pr then trigger next wave” — autonomous mode engaged |

| 2026-05-07T02:33:12 | Wave 2 PR #6 opened |

| 2026-05-07T03:35:08 | Wave 3 audit: lead acknowledges hq-learning-pomdp as honest non-replication (“paper’s HQ-vs-flat headline gap does NOT reproduce on the 29-cell maze. Implementation faithful”) |

| 2026-05-07T12:16:45 | Wave 3 PR #7 opened |

| 2026-05-07T12:49:16 | Wave 4 PR #8 opened |

| 2026-05-07T13:15:48 | Wave 5 PR #9 opened |

| 2026-05-07T14:33:36 | Wave 6 PR #10 opened (cleanup commit on top: removed orphan noise-free-long-lag/problem.py) |

| 2026-05-07T15:28:24 | Wave 7 PR #11 opened (cleanup commit on top: removed orphan blues-improvisation/problem.py) |

| 2026-05-07T16:57:11 | Wave 8 PR #12 opened |

| 2026-05-07T17:22:01 | Wave 9 PR #13 opened |

| 2026-05-07T18:07:35 | Wave 10 PR #14 opened — v1 complete at 50/50 |

| 2026-05-08T12:07:27 | Wave 11 (v1.5) dispatch begins (8 teammates for heavyweight-env stubs) |

| 2026-05-08T14:49:01 | Wave 11 PR #15 opened — v1+v1.5 complete at 58/58 |

| 2026-05-08T15:38:20 | Meta PR #16 opened (mdBook config, BUILD_NOTES, RESULTS, VISUAL_TOUR, README catalog, GH Pages workflow) |

| 2026-05-08T15:49:49 | All 13 PRs merged via gh pr merge in sequence |

| 2026-05-08T15:50:41 | First Pages deploy attempt fails: “Ensure GitHub Pages has been enabled” |

| 2026-05-08T15:53:21 | Pages enabled via gh api -X POST repos/.../pages -F build_type='workflow'; workflow re-run; site live at https://cybertronai.github.io/schmidhuber-problems/ |

| 2026-05-08T16:09:24 | Yad: “wtf why its claude agent-0bserver07 and not fucking claude 0bserver07? claude agent-0bserver07 was for comment only” |

| 2026-05-08T16:12:01 | git filter-branch rewrite: 74 agent-authored commits → Yad Konrad <yad.konrad@gmail.com>. Force-pushed main. Site rebuilt with corrected attribution. |

| 2026-05-08T~16:14 | README formatting polish (header bullets, lineage paragraph broken into bullet list) per Yad’s feedback. |

| 2026-05-08T16:16:50 | Last logged event in this session |

The SPEC (issue #1) — the actual contract

The contract between Yad and every teammate was a single GitHub issue. Not chat. Not a system prompt. An issue every PR linked back to.

It defined:

- Required files per stub:

<slug>.py,README.md,make_<slug>_gif.py,visualize_<slug>.py,<slug>.gif,viz/ - 8 README sections: Header / Problem / Files / Running / Results / Visualizations / Deviations / Open questions

- Reproducibility rules: seed exposed via CLI, all hyperparameters in Results, command in §Running reproduces the number

- Acceptance checklist (10 boxes)

- Schmidhuber-specific additions:

- Algorithmic faithfulness > optimizer convenience: long-time-lag stubs use the paper’s recurrent architecture; evolutionary stubs use the paper’s evolutionary optimizer; Levin/OOPS stubs keep universal search. No backprop shortcuts.

- Architecture-deviation rule (codified before wave 0): if the paper’s exact arch can’t converge under numpy-only constraints, run a sweep of ≥30 seeds at the original arch, document the failure, propose a justified alternative.

- RL-stub rule: numpy mini-environments. No

gym/gymnasium. Original-simulator reruns deferred to v2.

The orchestration model

┌──────────────────┐

│ schmidhuber-impl │ (TeamCreate, agent_type=orchestrator)

└─────────┬────────┘

│

┌────────────┼────────────┐

│ │ │

Wave 0/1/…/11 SendMessage Subagent dispatches

│ │

▼ ▼

┌──────────┐ ┌──────────────┐

│ teammates │ │ Agent tool │

│ <slug>- │ │ general- │

│ builder │ │ purpose 58× │

│ x58 │ │ Explore 15× │

└────┬─────┘ └──────┬───────┘

│ │

▼ ▼

worktree branch PR audits, code reads

wave-N-local/<slug>

│

▼

(LOCAL ONLY — DO NOT PUSH)

│

▼

lead octopus-merges into wave/N-<family>

│

▼

gh pr create → wave PR

│

▼

audit subagent → audit comment on PR

│

▼

SendMessage(shutdown_request)

│

▼

Next wave starts fresh

Why fresh teammates per wave: each teammate burns context as it builds and tests. Shutting down between waves keeps later waves running on full context windows. The lead persists; the workers turn over.

Why LOCAL ONLY per-stub branches (the wave-1 → wave-2 fix): pushing 6 impl/<slug> branches per wave to remote was branch spam. Yad called it out at 2026-05-07T01:31. Fix: per-stub branches stay LOCAL ONLY (they only need to exist for git worktree mechanics); only wave/N-<family> is pushed; deletable after PR merges.

What the session actually used (verified counts from the JSONL)

Tool calls in the lead session

| Tool | Calls | What for |

|---|---|---|

| Bash | 140 | git, gh CLI, file ops, running tests, workflow checks |

| Agent | 73 | subagent dispatches: 58 general-purpose builders + 15 Explore auditors |

| SendMessage | 69 | inter-teammate messaging (shutdowns + summary requests) |

| TaskUpdate | 34 | shared task list maintenance |

| Read | 16 | reading paper PDFs, stub code, READMEs |

| TaskCreate | 15 | new tasks added to the team’s list |

| Write | 11 | new files (READMEs, scripts, configs) |

| Edit | 10 | small in-place edits |

| AskUserQuestion | 7 | direction-clarifying questions to Yad |

| ToolSearch | 3 | loading deferred tool schemas |

| Skill | 1 | only sutro-sync at session start |

| TaskList | 1 | one snapshot |

| TeamCreate | 1 | the schmidhuber-impl team itself |

| TeamDelete | 1 | end-of-session cleanup |

Subagent dispatches (Agent tool, n=73)

| Type | Count | Use |

|---|---|---|

general-purpose | 58 | per-stub builders (one per stub across 12 waves) |

Explore | 15 | initial repo survey + 12 per-wave audits + 2 BUILD_NOTES data-extraction passes |

GitHub artifacts produced

- 2 issues created: #1 (SPEC) + #3 (v1.5 follow-up)

- 14 PRs created: PR #2 (closed and reissued as #5), PRs #4, #5, #6, #7, #8, #9, #10, #11, #12, #13, #14, #15, #16

- 13 PR audit comments (one per wave PR)

- 2 cleanup commits on top of wave merges: wave 6 (

noise-free-long-lag/problem.pyorphan removed), wave 7 (blues-improvisation/problem.pyorphan removed) - 13 PR merges in one batch (

gh pr merge× 13 in sequence) at 2026-05-08T15:49 - 1 repo edit to set the homepage URL

- 1 GH API call to enable Pages with workflow build type

The waves at a glance

| Wave | Family | Stubs | First dispatch (UTC) | PR opened (UTC) | PR # |

|---|---|---|---|---|---|

| 0 | Sanity | 1 | 2026-05-06T23:24 | 2026-05-07T01:38 | #5 |